10 - Testing the Offering I: Stated Preference

Lesson 10 Overview

Summary

One of the most common pitfalls of new concept introductions is a hidden overreliance on basic stated preference measures. For reasons unbeknownst to me, stated preference and "willingness to pay" surveys have become what appears to be a default choice in new concept efforts.

Part of the overreliance may be due to the fact that stated preference surveys are incredibly straightforward to deploy for a basic user. You design a little experiment, perhaps you create a little intercept survey in a public place, read a brief and show a prototype, and then ask 'if you would buy,' and likely, 'how much would you expect to pay.' Then the basic user compiles results in a few bar charts, and shows management that '70% of people surveyed (n=102) said that they would buy the concept.'

There is a foundational issue, and it only degrades from there: most people, if willing to participate in a survey, will skew their answers significantly to the high (positive) side. In cases where the moderator is physically attractive, the skew can be even more severe.

The reality is that even with the best of intentions and soundest research design, raw verbatim responses of intent tend to be a poor indicator of actual purchase behavior.
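To put a number on how little a headline like "70% of people surveyed (n=102)" pins down, here is a minimal sketch that computes a 95% Wilson score interval for that proportion. The count of 71 "yes" answers is an assumption (70% of 102 is not a whole number), and the function name is purely illustrative.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Assumed counts: roughly 70% of 102 respondents said they would buy.
yes_count, sample_size = 71, 102
low, high = wilson_interval(yes_count, sample_size)
print(f"stated intent: {yes_count / sample_size:.0%}, 95% interval: {low:.0%} to {high:.0%}")
```

Note that the interval only describes sampling error. It says nothing about the upward skew described above, so even the lower bound is a statement about what people said, not about what they will buy.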

This Lesson is designed to point out the shortcomings of stated preference methodologies, show how to design research to understand consumer preference, and present ways to capture purchase preference and intent in your concept development.

Learning Outcomes

By the end of this lesson, you should be able to:

- articulate the strengths and limitations of the stated preference methodologies;

- discern where stated preference methodologies are most valuable;

- evaluate concept development scenarios for best fit with the various stated preference methodologies;

- describe the types of insight each methodology can reveal.

Lesson Roadmap

| To Read | Documents and assets as noted/linked in the Lesson (optional) |
|---|---|
| To Do | Final Case Prospectus |

Questions?

If you have any questions, please send them to my faculty email, axj153@psu.edu [1]. I will check daily to respond. If your question is one that is relevant to the entire class, I may respond to the entire class rather than individually.

Introduction

Cross Examining the Witness

Stated preference methodologies are not necessarily a cleanly and distinctly defined set of research tools; they are instead unified by the fact that they rely on gleaning insight, quite literally, from a test audience's "statements." Most commonly in our application, this test audience will be one or more groups of target customers whom we think could create the foundation of the purchase and use of our final offering.

As we will see in the following lesson, the questions underlying stated preference models can sometimes take on the feel of a courtroom examination on paper, and one could consider the goals to be somewhat similar at times. Stated preference models will sometimes ask the participant several very similar questions in hopes of finding any inconsistencies. They may ask what could be considered confusing or overly hypothetical questions in hopes of revealing your inclinations.

These mechanisms are by design, and lie at the heart of stated preference models, as they tend to be highly dependent on very specific research design and statistical analysis. We will be asking the witness batteries of related questions, and then statistically analyzing the results across a statistically significant number of witnesses.

Flawed Recall

One of the weaknesses of stated preference methods is, indeed, that they rely on statements as opposed to actions. These methods rely on not just the participant's recall of their own actions (which is famously biased and subject to post-rationalization), but on their hypothetical decisions on hypothetical products presented in isolation and purchased with hypothetical money participants hypothetically have.

You can perhaps see but a glimmer of a methodological weakness here.

If participants' self-reporting were indeed highly accurate, consider the extension of the concept: products and businesses utilizing these methodologies in their offering research would indeed never fail, as there would be no financial risk or uncertainty. They would but simply send out batteries of surveys, and have consumers tell them exactly how much revenue they can expect.

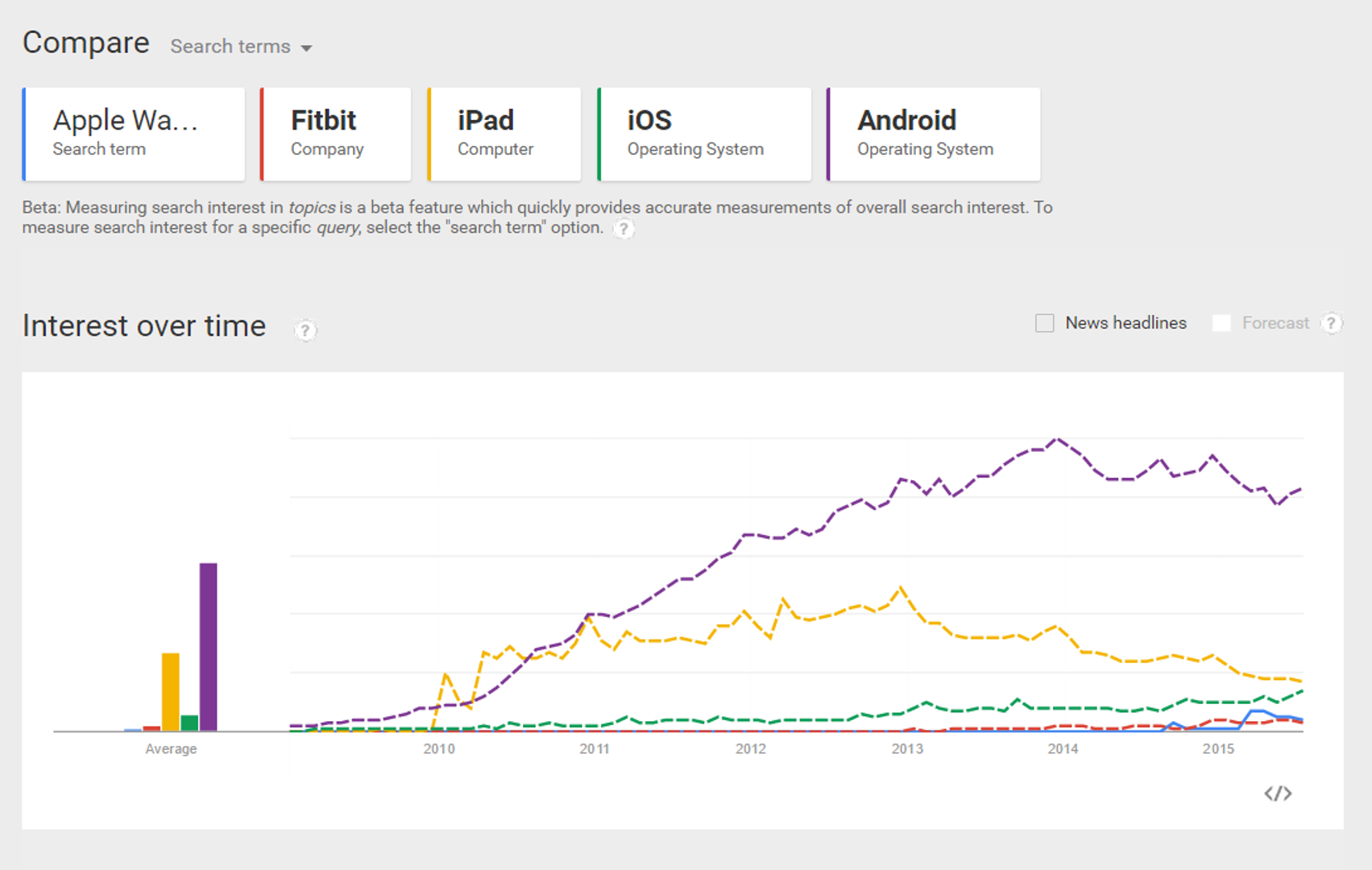

If that were the case, the below would never happen to a company like Apple, the world's 5th largest corporation, upon the release of the Apple Watch:

The fact that one of the most touted product releases from Apple in the past decade (2014 revenue: $183 billion) is struggling to create the same level of interest as a far more limited product from a company roughly 0.4% the size of Apple (Fitbit estimated 2014 revenue: $754 million), or that sales have dropped 90% since release week [5], is likely something that Apple did not forecast.

And make no mistake, Apple is arguably one of the most successful companies on the planet, especially in regard to innovation, supply chain, forecasting, and new product rollout.

Biased Witnesses

Sustainability-driven innovation specifically faces a unique obstacle in stated preference research: the social pull of sustainability creates positive social approval bias in participants. In essence, this means that if someone sees sustainability as socially desirable, they will likely over-report their willingness to engage in sustainability-related behaviors and purchases relative to what they would actually partake in "in the real world." Considering the tremendous social influence sustainability can have on people, this is a very real research concern for us in developing sustainability-driven offerings.

To provide some context for how much influence this may have, consider the influence of social approval bias in something as simple as reporting how many fruits and vegetables you consumed the day before.

From "Effects of social approval bias on self-reported fruit and vegetable consumption: a randomized controlled trial" by Miller et al. [6] [emphasis is mine]:

Methods

A randomized blinded trial compared reported fruit and vegetable intake among subjects exposed to a potentially biasing prompt to that from control subjects. Subjects included 163 women residing in Colorado between 35 and 65 years of age who were randomly selected and recruited by telephone to complete what they were told would be a future telephone survey about health. Randomly half of the subjects then received a letter prior to the interview describing this as a study of fruit and vegetable intake. The letter included a brief statement of the benefits of fruits and vegetables, a 5-A-Day sticker, and a 5-A-Day refrigerator magnet. The remainder received the same letter, but describing the study purpose only as a more general nutrition survey, with neither the fruit and vegetable message nor the 5-A-Day materials. Subjects were then interviewed on the telephone within 10 days following the letters using an eight-item FFQ and a limited 24-hour recall to estimate fruit and vegetable intake. All interviewers were blinded to the treatment condition.

Results

By the FFQ method, subjects who viewed the potentially biasing prompts reported consuming more fruits and vegetables than did control subjects (5.2 vs. 3.7 servings per day, p < 0.001). By the 24-hour recall method, 61% of the intervention group but only 32% of the control reported eating fruits and vegetables on 3 or more occasions the prior day (p = 0.002). These associations were independent of age, race/ethnicity, education level, self-perceived health status, and time since last medical check-up.

Using Research Intelligently

That said, there is not a research methodology in existence which does not have significant flaws or limitations. The key for us is to understand the limitations of each methodology and be intelligent in the use of additional research methods to create a well-rounded understanding of the offering.

There are a few significant functional advantages to stated preference methodologies:

- They are time and cost efficient.

- You do not need to have a final product, making it useful for testing product renderings or even concept statements.

- You can change or modify proposed offerings and retest.

- It can provide a useful set of boundaries to guide your decision making in later phases.

- When statistically significant, findings can provide an initial "gate" for the offering development process.

Expert Judgments

Mining the Minds of the Domain

Expert judgments, a stated preference methodology, rely not on a volume of opinions of those in the target market, but instead elicit the opinions and judgments of experts who may have decades of experience in domains related to the offering. These experts could range from economists to subject matter experts to innovation experts to pricing strategists, but a key to the expert judgment methodology is to interact with these experts in a very structured and methodical way.

Expert judgments can be valuable not only in that they may be an excellent "jump start" to your team's body of knowledge about the emerging offering, but the experts may offer a depth of experience in a subject matter your team may never have (or need to have). In expert judgment, these subject matter experts may help you to understand the space, the opportunity, potential competition, market acceptance of similar offerings in the past, pricing, and other practical concerns from many different perspectives.

That said, as we will see in a moment, we must always be conscious of the limitations of expertise.

Structuring Expert Judgments

As we just addressed, effective and more formal expert judgment analysis does not start with a phone call and end with some scrawled notes. Expert judgments may be presented in ways to increase the probability of direct and highly focused judgments.

The following excerpt captures some practical and philosophical considerations in eliciting expert judgments, from "Structured Decision Making" by Gregory, Failing, et al. [9]:

In an ideal world, uncertainties are reduced quickly and efficiently with research, monitoring, or modeling, and information is provided in time to aid decision making. However, it is not always possible to conduct new research to address key uncertainties, and it is seldom possible to eliminate them even with new research. In such cases, decision analysis suggests the elicitation of subjective technical judgments. There is well-established literature on the methods that are required for eliciting defensible and transparent judgments in the face of significant uncertainty and on the opportunities and limitations for using such judgments as aids to improved management (Morgan and Henrion, 1990; Keeney and von Winterfeldt, 1991).

The steps associated with best practice in structured expert judgment include:

- Identify multiple experts based on an explicit selection process and criteria, and include experts from different domains and disciplines of knowledge (e.g., science versus local knowledge).

- Clearly define the question for which a judgment will be elicited, making sure that the question separates (as much as possible) technical judgments from value judgments.

- Decompose complex judgments into simpler ones. This will improve both the quality of the judgment and, to the extent it helps to separate a specific technical judgment from the management outcomes of that judgment, its objectivity.

- Document the expert's conceptual model. Not only will this help the quality of the judgment and its communication to others, but it will create a clear and traceable account that will facilitate future peer review.

- Use structured elicitation methods to guard against common cognitive biases that have been shown to consistently reduce the quality of judgments (Morgan and Henrion, 1990).

- Express judgments quantitatively where possible. The use and interpretation of qualitative descriptions of magnitude, probability or frequency vary tremendously among individuals. This seems likely to be amplified in a cross-cultural setting.

- Characterize uncertainty in the judgment explicitly, using quantitative expressions of uncertainty wherever possible to avoid ambiguity.

- Document conditionalizing assumptions. Differences in judgments are often explained by differences in the underlying assumptions or conditions for which a judgment is valid.

- Explore competing judgments collaboratively, through workshops involving local and scientific experts, with an emphasis on collaborative learning.

Methods for eliciting probabilistic judgments include:

Fixed value methods. Estimate the probability of being higher or lower than a selected value–what is the probability that abundance (or price or nitrate loading) will be greater than 1000?

Fixed probability methods. Estimate the value associated with a specific probability. "Tell me the abundance (or price or nitrate loading, etc.) that you think has only a 5% chance of being exceeded."

Interval methods. Estimate probabilities associated with intervals. Usually it's useful to focus on medians and quartiles. Elicit the upper and lower extremes (usually using a fixed probability of 5%). Choose a value of abundance so that there is an equal probability that the true value lies above or below the value. This is the median. Then divide the lower range into two bins so that there is an equal probability that the true value falls in either bin. Then do the same for the upper range.
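The three elicitation methods above are closely related: once an expert has supplied a handful of quantiles through the interval method, the fixed value and fixed probability questions can be answered from the same curve. The following is a minimal sketch of that idea using straight-line interpolation between the elicited points. The price quantiles, the function names, and the choice of linear interpolation are all assumptions for illustration, not something prescribed by the excerpt.

```python
from bisect import bisect_right

# Hypothetical expert answers for a unit price: (cumulative probability, value).
# 5% and 95% are the elicited extremes, 50% the median, 25% and 75% the quartiles.
ELICITED = [(0.05, 600.0), (0.25, 800.0), (0.50, 950.0), (0.75, 1100.0), (0.95, 1500.0)]


def _interpolate(x, xs, ys):
    """Linear interpolation of y at x, clamped to the first and last elicited points."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    i = bisect_right(xs, x)  # xs[i - 1] <= x < xs[i]
    frac = (x - xs[i - 1]) / (xs[i] - xs[i - 1])
    return ys[i - 1] + frac * (ys[i] - ys[i - 1])


def prob_exceeds(value, quantiles=ELICITED):
    """Fixed value question: what is the probability the true value is above `value`?"""
    probs = [p for p, _ in quantiles]
    values = [v for _, v in quantiles]
    return 1 - _interpolate(value, values, probs)


def value_at_exceedance(prob, quantiles=ELICITED):
    """Fixed probability question: which value has only a `prob` chance of being exceeded?"""
    probs = [p for p, _ in quantiles]
    values = [v for _, v in quantiles]
    return _interpolate(1 - prob, probs, values)


print(f"P(price > 1000) is about {prob_exceeds(1000):.2f}")
print(f"Value with a 5% chance of being exceeded: {value_at_exceedance(0.05):.0f}")
```

Because the sketch clamps outside the elicited range, it makes no claim about the tails beyond the 5% and 95% points; an expert's view of those extremes would need to be elicited separately.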

Knowing the Limitations of Experts

As the intrepid innovator trying to launch a new, sustainability-driven offering, it can be quite easy to be minimized by any one (or all) of the experts you engage. For this reason, it is always useful to not feel intimidated, but instead channel your lack of expertise into understanding the expert's position on your offering. Not only will the knowledge be useful in understanding more about the judgment and rationale the expert has applied in evaluating your offering, it will help you understand if the expert's experience falls more toward being a practitioner or a philosopher/lecturer.

Professor Philip Tetlock of the Wharton School has done some incredibly interesting long-term research into the predictive power of experts, and whether they are indeed able to predict future outcomes any better than a non-expert (or the classic example of 'the dart-throwing chimpanzee').

The following is an introduction into arguably his most publicized research regarding the classification and study of "fox" and "hedgehog" experts. Please watch the following 3:57 video.

Video: Everybody's an Expert: Fox vs. Hedgehog Personalities (3:57)

But who are these foxes and hedgehogs? Well there's an essay by Isaiah Berlin that came out about 45 years ago in which he draws on a fragment of Greek poetry from 2,500 years ago by the Greek warrior poet Archilochus, which is roughly translated as: the Fox knows many things but the Hedgehog knows one big thing.

And he defines the ideal type hedgehog as an expert or a professional or a thinker who relates everything to a single central vision, in terms of which, all that they say has significance. So you could be a Marxist hedgehog; you could be a libertarian hedgehog; you could be a boomster hedgehog; or you could be a doomster Malthusian hedgehog. You could be a realist hedgehog; you could be an idealist hedgehog.

The important thing is that you approach history, you approach current events in a deductive frame of mind. You have certain first principles and you try as hard as you can to absorb as many different facts into the framework of those first principles. That might sound like rigidity, but if you think about it for a minute from a philosophy of science point of view it also is parsimony. That is what scientists are supposed to do. They’re supposed to explain as much as possible with as little as possible. So we'll come back to that value tension in a moment.

The ideal type foxes Berlin defined this way: they pursue many ends often unrelated and even contradictory. They entertain ideas that are centrifugal rather than centripetal, without seeking to fit them into, or exclude them from, any one all-embracing inner vision.

Those are the Foxes. So foxes and hedgehogs: foxes are skeptical of big theories. You're not going to find very many foxes who are true believers. And interestingly the foxes who did the best on my forecasting exercises were the least enthusiastic about participating. And they're the most diffident about their ability to forecast because they really do see history in substantial measure as quite unpredictable, whereas the Hedgehogs were more enthusiastic about it. They tend to be more enthusiastic about extending their favorite theories into new domains and they tend to be more confident in their ability to predict.

Now this is some data which I'll just go over very quickly. If you're on the perfect diagonal, the straight line here represents perfect calibration, whereas the curvy lines represent actual groups of human beings making thousands of predictions. And these are aggregations of that. And the key thing to note here is that one of the lines strays further from the perfect diagonal than all the other lines. So the line that strays farthest is the line in which hedgehogs are making long-term predictions within the domains of their expertise, whereas the line that is closest to the perfect diagonal is foxes making short-term predictions within the domain of their expertise.

Now there's an argument that started to unfold about whether the foxes were doing better than the Hedgehogs - not because they're more perceptually accurate, but rather because the foxes are (excuse the pun) - foxes were chickens. The foxes were unwilling to say anything much more than maybe. So the foxes were clinging around the subjective probability point of .5. So one way to test that - if that were true then the Foxes should not be as discriminating as the Hedgehog. The Hedgehogs should maybe lose on calibration, but they should win on discrimination.
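The distinction between calibration and discrimination in the talk above can be made concrete with a small calculation. The sketch below uses a common decomposition of forecast accuracy (often attributed to Murphy) in which forecasts are grouped by the probability stated; it may not match the exact scoring used in the research, and the forecasters and outcomes are invented for illustration.

```python
from collections import defaultdict


def calibration_and_discrimination(forecasts, outcomes):
    """Return (calibration, discrimination) for probability forecasts of yes/no events.

    calibration: weighted mean squared gap between each stated probability and
        how often events given that probability actually happened (lower is better).
    discrimination: weighted mean squared gap between each group's observed
        frequency and the overall base rate (higher is better).
    """
    if len(forecasts) != len(outcomes) or not forecasts:
        raise ValueError("forecasts and outcomes must be non-empty and the same length")
    groups = defaultdict(list)
    for stated, happened in zip(forecasts, outcomes):
        groups[stated].append(happened)
    n = len(outcomes)
    base_rate = sum(outcomes) / n
    calibration = sum(len(g) * (p - sum(g) / len(g)) ** 2 for p, g in groups.items()) / n
    discrimination = sum(len(g) * (sum(g) / len(g) - base_rate) ** 2 for g in groups.values()) / n
    return calibration, discrimination


# Hypothetical record: ten events, five of which occurred (1) and five did not (0).
outcomes = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
forecasters = {
    "always maybe": [0.5] * 10,
    "measured": [0.8, 0.8, 0.8, 0.8, 0.2, 0.2, 0.2, 0.2, 0.2, 0.8],
    "certain": [1.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 1.0],
}
for name, forecasts in forecasters.items():
    cal, disc = calibration_and_discrimination(forecasts, outcomes)
    print(f"{name:>12}: calibration error {cal:.2f}, discrimination {disc:.2f}")
```

In this invented data, the forecaster who always says "maybe" is perfectly calibrated but has no discrimination at all, which is the "foxes were chickens" objection in miniature. The "measured" and "certain" forecasters pick the same events, so they discriminate equally well, but the one who claims certainty pays for it in calibration.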

In continuing our brief discussion of Tetlock's research into expertise, the following passage is from "Overcoming Our Aversion to Acknowledging Our Ignorance" by Gardner and Tetlock. [11]

In the most comprehensive analysis of expert prediction ever conducted, Philip Tetlock assembled a group of some 280 anonymous volunteers—economists, political scientists, intelligence analysts, journalists—whose work involved forecasting to some degree or other. These experts were then asked about a wide array of subjects. Will inflation rise, fall, or stay the same? Will the presidential election be won by a Republican or Democrat? Will there be open war on the Korean peninsula? Time frames varied. So did the relative turbulence of the moment when the questions were asked, as the experiment went on for years. In all, the experts made some 28,000 predictions. Time passed, the veracity of the predictions was determined, the data analyzed, and the average expert's forecasts were revealed to be only slightly more accurate than random guessing—or, to put more harshly, only a bit better than the proverbial dart-throwing chimpanzee. And the average expert performed slightly worse than a still more mindless competition: simple extrapolation algorithms that automatically predicted more of the same.

Cynics resonate to these results and sometimes cite them to justify a stance of populist know-nothingism. But we would be wrong to stop there, because Tetlock also discovered that the experts could be divided roughly into two overlapping yet statistically distinguishable groups. One group would actually have been beaten rather soundly even by the chimp, not to mention the more formidable extrapolation algorithm. The other would have beaten the chimp and sometimes even the extrapolation algorithm, although not by a wide margin.

One could say that this latter cluster of experts had real predictive insight, however modest. What distinguished the two groups was not political ideology, qualifications, access to classified information, or any of the other factors one might think would make a difference. What mattered was the style of thinking.

One group of experts tended to use one analytical tool in many different domains; they preferred keeping their analysis simple and elegant by minimizing "distractions." These experts zeroed in on only essential information, and they were unusually confident—they were far more likely to say something is "certain" or "impossible." In explaining their forecasts, they often built up a lot of intellectual momentum in favor of their preferred conclusions. For instance, they were more likely to say "moreover" than "however."

The other lot used a wide assortment of analytical tools, sought out information from diverse sources, were comfortable with complexity and uncertainty, and were much less sure of themselves—they tended to talk in terms of possibilities and probabilities and were often happy to say "maybe." In explaining their forecasts, they frequently shifted intellectual gears, sprinkling their speech with transition markers such as "although," "but," and "however."

Using terms drawn from a scrap of ancient Greek poetry, the philosopher Isaiah Berlin once noted how, in the world of knowledge, "the fox knows many things but the hedgehog knows one big thing." Drawing on this ancient insight, Tetlock dubbed the two camps hedgehogs and foxes.

The experts with modest but real predictive insight were the foxes. The experts whose self-concepts of what they could deliver were out of alignment with reality were the hedgehogs.

It's important to acknowledge that this experiment involved individuals making subjective judgments in isolation, which is hardly the ideal forecasting method. People can easily do better, as the Tetlock experiment demonstrated, by applying formal statistical models to the prediction tasks. These models out-performed all comers: chimpanzees, extrapolation algorithms, hedgehogs, and foxes.

But as we have surely learned by now—please repeat the words "Long Term Capital Management"—even the most sophisticated algorithms have an unfortunate tendency to work well until they don't, which goes some way to explaining economists' nearly perfect failure to predict recessions, political scientists' talent for being blindsided by revolutions, and fund managers' prodigious ability to lose spectacular quantities of cash with startling speed. It also helps explain why so many forecasters end the working day with a stiff shot of humility.

Is this really the best we can do? The honest answer is that nobody really knows how much room there is for systematic improvement. And, given the magnitude of the stakes, the depth of our ignorance is surprising. Every year, corporations and governments spend staggering amounts of money on forecasting and one might think they would be keenly interested in determining the worth of their purchases and ensuring they are the very best available. But most aren't. They spend little or nothing analyzing the accuracy of forecasts and not much more on research to develop and compare forecasting methods. Some even persist in using forecasts that are manifestly unreliable, an attitude encountered by the future Nobel laureate Kenneth Arrow when he was a young statistician during the Second World War. When Arrow discovered that month-long weather forecasts used by the army were worthless, he warned his superiors against using them. He was rebuffed. "The Commanding General is well aware the forecasts are no good," he was told. "However, he needs them for planning purposes."

This widespread lack of curiosity—lack of interest in thinking about how we think about possible futures—is a phenomenon worthy of investigation in its own right. Fortunately, however, there are pockets of organizational open-mindedness. Consider a major new research project funded by the Intelligence Advanced Research Projects Activity, a branch of the intelligence community.

In an unprecedented "forecasting tournament," five teams will compete to see who can most accurately predict future political and economic developments. One of the five is Tetlock's "Good Judgment" Team, which will measure individual differences in thinking styles among 2,400 volunteers (e.g., fox versus hedgehog) and then assign volunteers to experimental conditions designed to encourage alternative problem-solving approaches to forecasting problems. The volunteers will then make individual forecasts which statisticians will aggregate in various ways in pursuit of optimal combinations of perspectives. It's hoped that combining superior styles of thinking with the famous "wisdom of crowds" will significantly boost forecast accuracy beyond the untutored control groups of forecasters who are left to fend for themselves.

Other teams will use different methods, including prediction markets and Bayesian networks, but all the results will be directly comparable, and so, with a little luck, we will learn more about which methods work better and under what conditions. This sort of research holds out the promise of improving our ability to peer into the future.
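As a rough illustration of why aggregating individual forecasts can help, the following sketch averages the probabilities given by a small panel and scores everyone with the Brier score (mean squared error between stated probability and outcome, where lower is better). The panel, the outcomes, and the use of a simple unweighted mean are assumptions made for illustration; the excerpt does not say how the tournament teams actually combine forecasts.

```python
from statistics import mean


def brier_score(forecasts, outcomes):
    """Mean squared error between probability forecasts and 0/1 outcomes (lower is better)."""
    return mean((f - o) ** 2 for f, o in zip(forecasts, outcomes))


# Hypothetical panel: three forecasters, five yes/no questions.
outcomes = [1, 0, 1, 1, 0]
panel = {
    "forecaster_a": [0.9, 0.4, 0.6, 0.7, 0.1],
    "forecaster_b": [0.6, 0.1, 0.9, 0.4, 0.5],
    "forecaster_c": [0.7, 0.3, 0.5, 0.9, 0.2],
}

# Simple "wisdom of crowds" aggregate: the unweighted mean forecast per question.
crowd = [mean(answers) for answers in zip(*panel.values())]

individual_scores = {name: brier_score(f, outcomes) for name, f in panel.items()}
crowd_score = brier_score(crowd, outcomes)
average_individual_score = mean(individual_scores.values())

for name, score in individual_scores.items():
    print(f"{name}: {score:.3f}")
print(f"crowd average forecast: {crowd_score:.3f}")
print(f"average individual:     {average_individual_score:.3f}")
```

With squared-error scoring, the averaged forecast can never score worse than the average of the individual scores, although it can still lose to the single best individual. That guarantee says nothing about shared biases: if everyone on the panel leans the same way, averaging will not remove the lean.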

Brochet's Research into Flawed Expert Judgment

One of my favorite examples to illustrate the role of marketing and the imagined consumption experience also happens to dually serve the role of mitigating the weight of expert opinion: Frédéric Brochet's body of research into the wine consumption experience, beginning with his dissertation [12]. In a complementary vein to Tetlock's research into foxes and hedgehogs, the following video summarizing two of Brochet's studies (indexed to play from 3:10 to 7:06) helps to illustrate that expertise may not always lie with the "experts."

Video: The Trouble with Experts (0:00)

Transcript of The Trouble With Experts

[MUSIC PLAYING]

PRESENTER: Trust me, because I'm an expert.

ANN-MARIE MACDONALD: Been getting good market advice lately?

PRESENTER: Bear Stearns is fine.

ANN-MARIE MACDONALD: Help with those nagging symptoms.

PRESENTER: The one way to cure eczema, gout, arthritis.

PRESENTER: And consultants at work. Not losing faith in the professional experts, are we? Take heart-- qualified help is coming right up.

PRESENTER: [INAUDIBLE]

[INTERPOSING VOICES]

PRESENTER: I'm not going to be bullied by your ranting.

PRESENTER: Only the experts who are guaranteed to be wrong get on television.

ANN-MARIE MACDONALD: I'm Ann-Marie MacDonald, and this is the trouble with the experts.

[MUSIC PLAYING]

PRESENTER: Well Mark, it depends who those people are. Obviously, I represent--

ANN-MARIE MACDONALD: Experts. We can't live without them.

PRESENTER: They have ulterior motives. They're using it. There's something [INAUDIBLE].

ANN-MARIE MACDONALD: They tell us how to fix our cars, decorate our homes, raise our kids, and cook our meals.

PRESENTER: Quarter them. Or you can use small, red onions.

ANN-MARIE MACDONALD: They tell us what wines to drink, what art to buy, and what opinions to hold, as well as how to eat right, exercise right, and live forever.

PRESENTER: If you want to go on a diet, there's someone who may tell you exactly how you should diet. And if you want to clip your toenails, there's someone who's going to be an expert in that.

ANN-MARIE MACDONALD: Every day, armies of new experts, analysts, pundits, consultants, and other authorities are churned out to fill our needs in the media and elsewhere.

PRESENTER: I'm an expert in prison survival. Trust me, I'm an expert.

[INAUDIBLE]

PRESENTER: [INAUDIBLE]

PRESENTER: Thumbs down to the stock, Amanda. It's going to go to zero.

ANN-MARIE MACDONALD: And we often cede our own opinions to them because, well, they're experts, so they know better, don't they? In the 2008 stock meltdown, we discovered that our most important experts, our financial gurus, didn't know much at all. And no expert predicted the recent Middle East revolution, though everyone had plenty to say afterwards.

[INTERPOSING BROADCAST VOICES]

So what about all the other experts out there? Should we listen to them? Does having expertise mean you make better decisions and better predictions than regular people? Or are they just part of a new cult, an ever-growing expert industry that's become our new religion?

DAN GARDNER: The average expert is about as accurate as a chimpanzee throwing a dart.

DR. BEN GOLDACRE: It's like a sort of vast army of fools. And they're afforded absolute authority in mainstream media.

TJ WALKER: A huge, huge percentage of experts are absolutely full of nothing but BS. And that's your sound bite for today.

ANN-MARIE MACDONALD: It's time to examine the experts and see how reliable they are. And we'll start with the experts who intimidate almost everyone-- wine experts, whose godlike ratings tell us mere mortals what we're supposed to drink to join the fine wine crowd. These experts are among the snootiest of authorities who take one sip of wine and pronounce it to be pecan-flavored with a hint of melted licorice and stone dust. But do wine experts really know what they're talking about? A good place to find out is here in France's Loire Valley.

Frederic Brochet started tasting wine on his father's vineyard when he was just 11. Today, he produces a million bottles a year of Chateau [INAUDIBLE] Ampelidae. But Brochet is also a professor of oenology, the science of wine, at Bordeaux University. He says most wine experts can't tell a great wine from an ordinary one. And he's proved it in many experiments.

In one study, Brochet asked a group of wine experts to taste two bottles of Bordeaux, one labeled as a fancy grand cru, the other as ordinary table wine. In fact, the same wine was in both bottles. But 54 of the 56 experts preferred the wine in the better bottle, fooled by what they expected to taste.

In his spare time, Brochet is also an expert who consults for Fauchon, Paris's most prestigious wine store. He holds informal blind tastings here, too, for passing shoppers and some professionals. And he's been known to switch a bottle or two. Brochet is swapping this $30 Chateau Chamirey for this $500 Nuits St-Georges and vice versa. Will anyone know the difference?

Unlike his real experiments, people here can discuss the wines and help each other in their detective work. But even so, most are fooled. This wine consultant rated the $30 wine higher than a $500 one.

[BUZZER]

This non-expert chooses the better wine in the cheaper bottle. But the store's young wine consultant corrects her, only he's been fooled.

[BUZZER]

In one famous study, Brochet found that experts can't even tell white wine from red wine. He served 54 other wine experts a red and white wine to compare. In fact, they were both secretly the same wine, only one was dyed red with a drop of food coloring. Not a single expert spotted the trick. Brochet says it's all about grape expectations.

Brochet says no wine costs more than $20 a bottle to produce. And the price of very great wine is largely driven by mythology and marketing.

But wine tasters aren't the only ones dictating our tastes. And in some fields, the stakes are breathtaking. In the multi-million dollar world of art, it's hard to know which paintings have great value and are worth buying and which are just imitations. But a good art expert can help you decide, or so you'd think, only here, too, top experts can be fooled by what they expect to see.

This exhibit at London's National Gallery is embarrassing, so disgraceful, these paintings are usually hidden away in the basement. But now, they're out for all to see. It's a collection of fakes and forgeries that fooled the museum's experts and curators for decades.

The museum is bravely displaying its mistakes for all to see. But it's just a small part of the picture when it comes to art experts being fooled by forgers all over the world. How does it happen? Meet British artist John Myatt, who forged more than 200 works by the great masters, from Manet to Matisse, then passed them off as originals with the help of a conman partner.

JOHN MYATT: Nicolas de Stael, if anybody's heard of him.

ANN-MARIE MACDONALD: For almost a decade, Myatt fooled England's top art critics, galleries, museums, and auction houses in what Scotland Yard called the biggest art fraud of the 20th century. Myatt started forging in the late 1980s by visiting museums to study the style of a well-known British painter, Ben Nicholson.

JOHN MYATT: And I looked at the painting, stood there for about an hour or so until I sort of, more or less, knew it backwards, went home, and painted something along the same lines. And it seemed to work quite nicely.

ANN-MARIE MACDONALD: Myatt says his paintings were amateurish at first, yet he and his partner, John Drewe, took two fake paintings by well-known French artist, Roger Bissiere, to the world famous Tate Gallery. They pretended to be art historians and fooled the museum's experts into thinking the paintings were genuine.

JOHN MYATT: And then two people in white coats bring the paintings up. So we're looking down a table, looking at the paintings. And they said, oh, they're just so lovely. Oh, you know. And they were painted in just ordinary house paint on modern canvases. Their expert looked at it. And he said, yeah, looks good to me. I thought it was unbelievable. I just thought it was just too stupid to be true.

ANN-MARIE MACDONALD: By the mid '90s, Myatt had painted almost 200 fake Chagalls, Picassos, Moreaus, and Giacomettis and seen them sell at Europe's top auction houses. Gradually, his forgeries got better. But he still can't believe that so many experts were fooled.

JOHN MYATT: They've been told what they're going to see. And so when they see it, they see it. I feel very sorry for experts, frankly. Even the very best expert is fallible. They will make mistakes. The best fakers have never been caught. The very best fakes are the ones that you think are genuine right now, in the art galleries through the world. So you don't know whether they're fakes or not.

ANN-MARIE MACDONALD: Over at the National Gallery, curator Marjorie Wieseman says even top experts make mistakes because, like wine tasters, part of them wants and expects to believe they found something special.

MARJORIE WIESEMAN: All art historians, all curators, are looking for the next great discovery. So they want to find an important lost masterpiece. And sometimes, they lose sight of doing the right homework. I think what gets in the way most often is greed. And not just financial greed, but also scholarly greed-- you want to be credited with a great discovery.

ANN-MARIE MACDONALD: In 2009, a major Hamburg museum opened an exhibition of Chinese terracotta warriors, only to have them exposed as worthless fakes. Meanwhile, an art expert who ran a German state museum was duped into declaring that a painting with bold splotches of color was the signature of Ernst Wilhelm Nay, a Guggenheim prize-winning artist. In fact, the artist was a chimpanzee.

MARJORIE WIESEMAN: I think that it's museums, auctioneers, private collectors, art historians, scholars. I think everyone is vulnerable to that.

ANN-MARIE MACDONALD: Art and wine may be elusive qualities to judge. But business is made up of cold, hard facts. That's why there are countless business coaches and management consultants whose advice fills bookstores. But what exactly makes them such experts?

When former management consultant Matthew Stewart started out, he didn't have a shred of knowledge about business, just a philosophy degree. But his employer gave him a three week management course that turned him into an overnight expert.

MATTHEW STEWART: I was an accidental consultant. I pretty much fell backward into consulting. The first surprise for me was that my absolute lack of training didn't matter. I didn't know how the stock market worked.

ANN-MARIE MACDONALD: Stewart soon became a jet set consulting star, traveling all over the world, advising businesses and governments. He explains how anyone can do it if they look the part in his book, The Management Myth: Why The Experts Keep Getting It Wrong.

MATTHEW STEWART: So there are a number of simple tips if you want to be an expert. First thing is it's important to be tall. That's always a good way to establish authority. Second thing is to wear bling-bling, shiny things. Wear things that show that you have wealth on your person. Drive the right cars. Stay in the right hotels.

Well, nothing sells like success. That's very important to establish your expertise. You demonstrate your knowledge and your expertise with the result, the fact that you've turned these forces in your favor.

PRESENTER: Maximize. Minimize.

ANN-MARIE MACDONALD: To pass as a successful expert, says Stewart, you have to sound like one, too.

MATTHEW STEWART: You do need to master a certain amount of jargon, which meant I dropped a lot of bottom lines. And I tried maximizing things, instead of making them better. You don't want to say, our strategy is based on doing what we do well. It's, you say it's based on our core competences. So that's how you become an expert.

ANN-MARIE MACDONALD: In Paris, historian [? Etienne ?] [INAUDIBLE] believes experts are our new priests and jargon is their secret language.

PRESENTER: Well, jargon is going to be the magical terms, you know, like in most religions. Latin, for instance, for the Catholic church, can be like jargon, in the sense if you don't understand it but the priest does, it means that he's closer to certain powers that you don't deserve to be close to.

ANN-MARIE MACDONALD: Matthew Stewart feels that management gurus are an illusion, that the whole field is a dressed up facade that pretends business is a science run on formulas.

MATTHEW STEWART: There's a notion out there very important for our economy that there are these people who have special access to a kind of expertise. It's an expertise in how to organize businesses, how to run the world. And then that creates this opening for people to step forward and say, I am the expert. I know how to run human organizations in the same way that you, sir, know how to build a cell phone or design a building. And yet, it's unfounded.

ANN-MARIE MACDONALD: But Stewart says if he was a management expert fraud, so were all the rest.

MATTHEW STEWART: You're in a field where, truth is, there are no genuine experts. The field is cracked. The basic idea that there is a kind of science or technology that you can apply in this field, that's just false.

ANN-MARIE MACDONALD: Yet corporations and governments love to hire experts anyway to cover their ass-sets.

DAVID FREEDMAN: Basically, it's a cover-your-butt kind of deal. If things later on go down the tubes, you want to be able to say you listened to some pretty smart, respectable people. And they were wrong, too. If it goes right, of course, then you're a genius. And you don't really actually have to mention all those experts who steered you in the right direction.

ANN-MARIE MACDONALD: There's another area where almost everyone is hungry for expert advice.

PRESENTER: Vitamin D, it is the miracle drug.

PRESENTER: Pomegranate juice, a study says--

PRESENTER: A new study says coffee is good for your heart.

ANN-MARIE MACDONALD: In our health-obsessed society, we look for wonder foods to help us live forever, from miracle omega-3 eggs and salmon to life-saving cereals packaged more like medicine than food.

PRESENTER: Pineapple contains a protein-digesting enzyme called--

ANN-MARIE MACDONALD: We seek our magic answers from an army of new nutrition experts, diet specialists, and others who give us precise advice on what foods to eat and avoid to stay well or ward off cancer.

PRESENTER: Leafy green vegetables, all good stuff.

PRESENTER: Avocado. Avocado.

ANN-MARIE MACDONALD: Dr. Ben Goldacre is a well-known British science critic. He says many nutrition experts have even less training and qualifications than management gurus.

DR. BEN GOLDACRE: They've called themselves nutritionists. What's interesting is that this group of academics who work on researching the relationship between food and health, who also used to call themselves nutritionists, who are now starting to realize they're going to have to change the name for what they do, because it's been so devalued and caricatured by the arrival of this new, bizarre profession.

ANN-MARIE MACDONALD: Goldacre says professional associations often have such informal standards that anyone can get some kind of official-looking certificate. In fact, he mailed away for one from this nutrition association for his deceased cat, Henrietta, and got it.

DR. BEN GOLDACRE: Which shows, you don't have to be a nutritionist. But you also don't have to be a human being, nor do you even have to be alive to be a member of the American Association of Nutritional Consultants.

DR. JOE SCHWARCZ: Ready? Three, two, one. Ah, what do you know? Instead of an explosion, we have a lamp.

ANN-MARIE MACDONALD: Dr. Joe Schwarcz is McGill University's official science watchdog.

DR. JOE SCHWARCZ: The false expert is a huge problem in the science world. Many of these experts, professed experts, really are quacks.

PRESENTER: The results we obtained with thousands of patients with all types of cancer definitely proves Essiac to be a cure for cancer. Did you hear that? The c-word-- cure for cancer. Studies done in a laboratory--

DR. JOE SCHWARCZ: They are promoting cancer treatments that do not work. They're promoting dietary supplements that do not work.

PRESENTER: That says, food-grade hydrogen peroxide is the one way to cure cancer, to eczema, to gout, to arthritis.

DR. JOE SCHWARCZ: And of course, all you have to do is follow the money to see why they are doing this.

ANN-MARIE MACDONALD: Schwarcz says another problem is that even respectable experts get hired to do studies with industries that may compromise their research.

DR. JOE SCHWARCZ: Once you're getting paid, it's very hard to be totally objective. There's always an angle. You know that the people who are paying you want to play it up, even though it's never really stated. So any time that there's money involved, I think the expertise becomes somewhat questionable.

ANN-MARIE MACDONALD: Ben Goldacre says that endless experts promising easy magic bullets in the media and on the internet just distract us from the long term behavior we need to stay healthy.

DR. BEN GOLDACRE: I could write, you know, Dr. Goldacre's Healthy Lifestyle book, like a day-by-day advice diary. And it would say exactly the same thing on all of the 365 pages. You should eat more fresh fruit and vege every day for 70 years.

And I'm really sorry about that. You know, I'm really sorry it's 70 years. I'm really sorry that your five day detox diet won't work. But that's the reality. And I think if you tell people that their five day detox diet will work, they think, well, I can have some chips and sit around watching telly all evening instead of going to the gym.

ANN-MARIE MACDONALD: Coming up. Yes, there are even experts on experts.

DAN GARDNER: When it comes to expert predictions, you don't lose guru status simply because your forecast flops.

ANN-MARIE MACDONALD: Just how widespread is expert mis-expertise? Christopher Cerf is a founder of The National Lampoon. Victor Navasky is former editor of The Nation. The two men direct the Institute of Expertology, a wandering academy that goes wherever experts on experts are needed.

VICTOR NAVASKY: Voila, the Institute of Expertology.

ANN-MARIE MACDONALD: Together, they researched a book tracking the great expert predictions of all time, for instance--

IRVING FISHER: The stock market has reached a permanently high plateau.

ANN-MARIE MACDONALD: Irving Fisher, world famous economist, just before the 1929 stock crash.

[SINGING]

WARNER BROTHERS: Who the hell wants to hear actors talk?

ANN-MARIE MACDONALD: Warner Brothers, 1927.

[CROWDS CHEERING]

In 1962, a Decca recording executive turned down a music group and said.

DECCA RECORDING EXECUTIVE: We don't like their sound. Groups with guitars are on their way out.

ANN-MARIE MACDONALD: After looking at the Beatles.

CHRISTOPHER CERF: You would think by pure chance that the experts, even if they had no expertise, would be right at least 50% of the time. But we have not found a single expert who is right about anything.

ANN-MARIE MACDONALD: OK, maybe he's exaggerating slightly. But in their book of predictions, they show military mavens are no better than other experts.

NAPOLEON: Wellington is a bad general. We will settle this by lunch.

ANN-MARIE MACDONALD: Napoleon before losing at Waterloo.

[TRUMPETING]

GENERAL JOHN SEDGWICK: They couldn't hit an elephant at this distance.

ANN-MARIE MACDONALD: Last words, General John Sedgwick, US Civil war. Christopher Cerf says that media experts, often known as pundits, have been mushrooming largely because the media needs them for endless 24/7 cable channels.

CHRISTOPHER CERF: More and more, they use the format of having an expert who is on one side and one who is presumed to be on the other who will have a violent argument right on screen about something.

PRESENTER: Oh yes. Oh, yes.

PRESENTER: No. I said it was a good investment.

VICTOR NAVASKY: Are there more than two sides to every issue? Suppose one person says two plus two is seven and the other person says two plus two is five. Is the truth someplace in between? Is it six?

ANN-MARIE MACDONALD: Science writer David Freedman spent two years writing a book about expert predictions and their accuracy in many fields. His conclusion? They're wrong an astonishing amount of the time.

DAVID FREEDMAN: Experts are usually wrong. It's that simple. Surprisingly, you can actually put a number on how wrong experts are. And it turns out to be, on average, roughly 2/3 of studies in the top medical journals end up being wrong.

ANN-MARIE MACDONALD: And he is talking about respected academic experts. But today, we're told to get an expert for almost everything. We need an installation expert to set up our TV system and a color specialist to paint our walls.

PRESENTER: Right now, grays are popular.

ANN-MARIE MACDONALD: And a relationship expert to sort out our marriage.

MATT TITUS: You should not be deceitful. And when you're married, you're married.

ANN-MARIE MACDONALD: Not to mention the experts we trust with our money and our government finances in a supposed science that's actually bogus to look at the results.

DAVID FREEDMAN: Economists have studied the wrongness rate in economics journals and have concluded it's very close to 100%. Virtually all of the studies published in economics journals are wrong.

ANN-MARIE MACDONALD: When the economic bubble burst in 2008, Canadian William White was the chief economist for BIS, the central bank for government banks everywhere. Now in Paris, White says even the world's top financial experts couldn't see past their own pet theories.

WILLIAM WHITE: I think the experts over the course of the last few years have done a terrible job. They thought economics was a science. Fact of the matter is that economics is not a science. Economics is highly dependent upon human behavior. It would be a lot better if experts were to start off by recognizing how little we know about the functioning of the economy.

ANN-MARIE MACDONALD: But being wrong doesn't affect your expert status, says this author.

DAN GARDNER: When it comes to expert predictions, the rule is heads I win, tails you forget we had a bet. You don't lose guru status simply because your forecast flops.

ANN-MARIE MACDONALD: Gardner points out The Economist Magazine once ran an experiment comparing 10 year forecasts about the economy and inflation predicted by a varied group of experts.

DAN GARDNER: And among these individuals were corporate CEOs, economists, some very esteemed people, and also some London garbagemen. And 10 years passed. And at the top of the table were the London garbagemen.

ANN-MARIE MACDONALD: Meanwhile, the reign of error continues, from trivial decisions to the biggest purchase of our lives.

MIKE HOLMES: Aye, Awesome! Gimme, gimme a top, so I've got--

ANN-MARIE MACDONALD: Meet Mike Holmes, the Canadian TV star who's on a global crusade to expose experts who do shoddy work in home renovation. His latest TV series has a new target, home inspectors.

PRESENTER: And then there's a Holmes inspection.

ANN-MARIE MACDONALD: Yup, they make lots of mistakes, too, really expensive ones. Today, Holmes's crew is filming this Toronto house. It was purchased for $280,000 after a home inspector expert gave it the a-OK. When problems started, the owner turned to Holmes, who discovered it needed almost $200,000 more in repairs. Holmes has seen lots worse. He says the real problem is that home inspectors are seen as highly-qualified experts when many have little or no real expertise.

MIKE HOLMES: To become a home inspector, it's you can snap your finger. There's a one hour course that you can do online. There's a two week course. And really, let's think about this. What's their background? Where did they come from? Were they builders?

The ones that were builders, I want to see as home inspectors. But if you just worked at McDonald's, and you were tired of working at McDonald's and, again, didn't know what to do and you just did a two week course, and all of a sudden, you're a home inspector? And now you, the homeowner, looks at them as an expert?

ANN-MARIE MACDONALD: Despite all the evidence, the expert industry keeps growing, turning out more insta-experts all the time. Coming up, a visit to expert school.

TJ WALKER: Well, it is a fantasy to be a pundit. It is a fantasy to be a guru. So there are a lot of people trying to get in.

PRESENTER: I would like to become a well-known expert. I'd like to be on CNN one day.

ANN-MARIE MACDONALD: The expert industry keeps growing, partly because manufacturing experts has become a whole new business. There are people who claim they can turn anyone into an expert in just a few days-- yes, even you. Welcome to expert school.

TJ WALKER: When that light went on and the camera went on.

ANN-MARIE MACDONALD: TJ Walker teaches people to look and sound like a TV expert in days.

TJ WALKER: Imagine if I came out here today, I am going to really coach you how to be great on your image and really impress people that you're the world's greatest expert. What are you noticing?

PRESENTER: Well, at least it's not your fly.

[LAUGHTER]

TJ WALKER: When it comes to being an expert in the media, if there's one thing that's off, that's the only thing anyone will remember.

PRESENTER: Just really like to take out of this class.

PRESENTER: You know, it's been around for about 25 years.

ANN-MARIE MACDONALD: His students pay anywhere from $2,000 to $7,000. They range from successful doctors and business people to PR reps who want to become known in their fields as TV pundits, like Sarah Harding, a former Miss Fitness trying to get known as a TV exercise expert.

SARAH HARDING: Absolutely, I would love to be an expert. I want people to see me as an expert in my field of specialty.

PRESENTER: I would like to definitely become more of a well-known expert in the short sales arena. I'd like to be on CNN one day.

PRESENTER: I'd like to become a sports psychology expert that goes on to news shows and sports shows to discuss sports psychology and how it impacts athletes.

ANN-MARIE MACDONALD: TJ says there's an enormous hunger for experts because of the massive growth of cable TV.

TJ WALKER: There's a huge need for pundits these days for one reason. It's cheap. The cheapest thing to do is to have an opinionated host and bring in a couple of opinionated people, one on one side of a debate, one on another. And it's virtually free. And, well, it is a fantasy to be a pundit. It is a fantasy to be a guru to many people. It's a very attractive career option. It can be intoxicating, so there are a lot of people trying to get in.

ANN-MARIE MACDONALD: TJ says you can become an expert by writing a book or having a degree in something. But the easiest way is just to get some media training and then some exposure. Because once you're on TV once, you're seen as an expert, and print and radio will want you, too.

TJ WALKER: All right, what's the easiest way to get quoted? I guarantee you'll get quoted this way. The easiest way to ever get quoted is this. [PUNCHING SOUND] That's right-- attack.

[YELLING AND ARGUING]

ANN-MARIE MACDONALD: TJ's golden rule is never sound uncertain. The more absolutely sure of yourself you sound, the more likely you are to make a career as a TV expert. So always say always and always say never but never say maybe.

TJ WALKER: Any time you can state something with finality-- absolutely, always, must, he has to do this-- any time you can do that, they're going to quote you again, and again, and again.

ANN-MARIE MACDONALD: So another classroom of premature pundits marches out to sell certainty, part of the growing army of experts who are just experts at giving opinions. A Berkeley professor is the world's leading expert on experts. He's been scrutinizing experts closely for 20 years. Professor Philip Tetlock followed 300 top government and media experts over two decades as they made 82,000 predictions. These included political predictions about world events, questions like--

PHILIP TETLOCK: Will the Soviet Union collapse? Will Germany be reunited? Will Quebec separate?

ANN-MARIE MACDONALD: The results? The experts barely did better at their forecasts than monkeys throwing darts. That's like random guessing. And the more well-known and certain the experts were, the more often they got it wrong.

PHILIP TETLOCK: The very worst experts, the experts whose predictions were furthest from reality, had very strong opinions. The formula for getting it really wrong is to have very strong opinions, to be unwilling to revise them in response to new evidence, and to be willing to make predictions that go out far in time.

PRESENTER: We're going to see depression-level unemployment rates, 22%, 25%.

PRESENTER: $200 a barrel oil.

PRESENTER: Bear Stearns is fine! Do not take your money out. This is ri-- if there's one takeaway--

PHILIP TETLOCK: And when you have the combination of all those things, you go off a cliff.

DAVID FREEDMAN: We get the experts who give us the wrongest answers and give it to us in the most certain terms. They're the ones that get quoted in the mass media. We hear the most from them. They're the wrongest.

CHRISTOPHER CERF: So if you're actually thoughtful and admit that there are many sides to an issue, you don't get hired. And you don't get heard. And you're not an expert. So more and more, because of that process over many years, only the experts who are guaranteed to be wrong get on television.

DAVID FREEDMAN: If we were a lot smarter about it, if we wanted better answers, we'd look for the very unconfident expert, the expert who is stammering, and wondering, and constantly contradicting him or herself. I think boring experts, probably as a rule, are more right.

ANN-MARIE MACDONALD: In almost every field we examined, we heard similar praise for the unsung uncertain expert.

MARJORIE WIESEMAN: When you have an expert that is absolutely certain, for me, that's when alarm bells go off. And I automatically say, mm, no, there's something wrong here. I want to find out more.

WILLIAM WHITE: I think real experts should appear more uncertain, particularly in the area of economics. Of course, it's hard to work your way up the career ladder like that.

ANN-MARIE MACDONALD: But Matthew Stewart says certainty is exactly what business consultants are selling.

MATTHEW STEWART: Now, maybe that's why I don't particularly like the expert business. I mean, I'm always questioning myself. And I'm ridden with self-doubt.

ANN-MARIE MACDONALD: The laws of physics are predictable. But human behavior is too complex for anyone to accurately predict. Yet in uncertain times, we crave certainty in our lives. Is there any area where you can be a successful expert and admit to being uncertain? We searched the skies for it.

PRESENTER: For the greater metropolitan area, today's forecast is 30% chance of showers with a 50% chance of thunderstorms.

ANN-MARIE MACDONALD: Here at Environment Canada, weather forecasts are always given with percentage probabilities behind every prediction. In fact, for longer term forecasts, there's a warning on their site that says their predictions are no better than chance. Senior climatologist, David Phillips.

DAVID PHILLIPS: I mean, it's like saying flip a coin. Throw a dart. Turn the roulette wheel. We have to say something. We can't just sort of put a blank map and say, the weather this month has been canceled. So the best way we can communicate is to give them something but then to also suggest a user beware. Don't trust it. Almost anything is possible.

ANN-MARIE MACDONALD: So why don't our experts give us similar percentages for their predictions? And why do the most confident and cocky experts usually get heard most? When we return, the secret behind the expert industry's success.

PRESENTER: Your relationship that doesn't have a divorce in it. And you'd be more happy than what you are right at the present time.

MARK: And I don't know where else to turn. I'm in my mid 50s. I'm white, relatively well-to-do. I mean, I don't know who the hell I'm going to get stuck in prison with, you know. I mean, are these people rapists? Are they murderers? The effect on my family has just been horrible.

LARRY LEVINE: Well, you know what? I'm an expert at this. You've been to my website. You know that I did 10 years. I did all the custody levels. I'm going to be blunt with you, Mark. You're screwed. But we're going to do some damage control.

ANN-MARIE MACDONALD: Meet Larry Levine, the latest addition to the expert industry. Larry is an ex-convict. But now, he advises terrified white collar criminals about what to expect in jail. He's a prison expert who gives a crash course called Fed Time 101.

LARRY LEVINE: Don't go to the showers in the middle of the night, as an example. Don't go places alone. Not necessarily the other inmates you have to worry about, you got to worry about the staff.

When I tell you about what's going to happen on the inside of a prison on the other side of the fence, you need to trust me. Because I'm an expert.

ANN-MARIE MACDONALD: Who can say whether Larry's assured expertise really works? But many clients are desperate to believe him, because they're scared. And there's nowhere else to turn. And that may be why we all turn to experts.

We live in a hectic world with endless, bewildering choices. And we are too overwhelmed to work things out for ourselves, so we seek advice on how to live our own lives and find it in many new preachers, from personal trainers to sexperts and parenting experts.

PRESENTER: Go and use the potty. It will just create more anxiety. Remember, this all--

ANN-MARIE MACDONALD: Our growing dependence on experts just creates more of them.

DR. BEN GOLDACRE: I think we're too easy on ourselves if we caricaturize this process as being about exploiters and victims. I think it's much more interesting than that. I think it's that we want to have these experts. And there are people who want to be experts. And we're kind of, we're all playing this game together.

We want someone to come in and say, don't worry any more. Do this, and it will get better. And if somebody is going to say feel better now, then feeling better now is almost as good as fixing the problem.

WILLIAM WHITE: It's like the little child that always wants to believe that somebody is in charge. And I think one of the most important moments in my life, actually, was when I began my professional career at the Bank of Canada. And what became perfectly clear was that there wasn't actually anybody in charge. But I think we all want to believe that somebody knows and somebody really understands, because the alternative, to confront all of this what is essentially chaos, is very difficult for people to live with, I think.

MATTHEW STEWART: We look for certainty. And the experts give us that. They tell us that it's all OK, that someone knows how it all works. They have a lot more in common with witch doctors or shamans than they would like to admit and that we tend to admit to ourselves.

PRESENTER: Then the relationship will be a relationship that doesn't have a divorce in it. And you'll be more happy than what you are right at the present time.

ANN-MARIE MACDONALD: For millennia, we have used clairvoyants, palm readers, and other fortune tellers to guide our actions and tell us what's going to happen in the future. But in today's modern world, we're too sophisticated to believe in fortune tellers. So we look for replacements. It's been said that those who make a living from their crystal ball are condemned to eat shattered glass. But that doesn't seem to be true for those behind the glass of our TVs who continue to talk, because we're desperate for someone to point the way, any way at all.

MATTHEW STEWART: There's a tribe, when they're out on the hunt and they don't know which way to go, their shamans take out a bone from a carcass. They examine the cracks. They throw it on the ground. And if the cracks point a certain way, they go off in that direction. Turns out that that is an effective way for them to avoid preconceived notions. If they sit down and discuss where should we go, they tend to go in the same direction all the time. Whereas, if they rely on the cracks in the bone and they imagine that it involves some expertise, that sends them off in directions that they might not have gone. And that ultimately proved more fruitful.

DAVID FREEDMAN: In the end, we have to make decisions. We have to do things. So sure, experts of course play a role in our society. If nothing else, they sometimes give us the confidence to act. Typically, doing something is better than doing nothing.

ANN-MARIE MACDONALD: Back in England, forger John Myatt was finally caught by police and sentenced to a year in jail. But when he got out, a surprise was waiting. The Scotland Yard police inspector who had arrested Myatt hired him to do a family portrait and so did the prosecutor and some court officials who appreciated his unusual talents.

Today, Myatt makes a good living selling his forgeries honestly for Legitimate Fakes, LTD. He's painting this fake Manet for Ronnie Wood of the Rolling Stones, whose face will appear on one of the figures. But Myatt is still fooling the experts. Police think 120 of his 200 fakes are still in circulation with private owners, art dealers, and perhaps museums who value them. And Myatt would just as soon leave it that way.

JOHN MYATT: If they do own it and enjoy owning it and everybody thinks it's authentic, you know, including the experts, then why on earth would anybody want to come along and say, oh, you know, I painted that?

ANN-MARIE MACDONALD: So perhaps there is some benefit in getting the wrong advice from experts if the result leaves you happy. Then again, there's definitely an expert out there somewhere with a totally different view. As Yogi Berra once said, it's very hard to make predictions, especially about the future. Experts and the rest of us can catch up on our entire season at cbc.ca/doczone. I'm Ann-Marie MacDonald. Thanks for watching.

Customer Survey

Surveying Customers to Derive Willingness to Pay (WTP)

Customer surveys to understand acceptance of a product concept and map pricing can seem to be the most straightforward way to quickly understand the potential market, and you may feel that there is no better way to understand the market than to directly ask those in the market what they want and how much they would be willing to pay for it.

While we may apply surveys in many different ways to understand the spaces and the offering, using surveys to directly ask the market what it is willing to pay for an offering is a severely flawed application of the tool.

From Breidert et al. "A Review of Methods for Measuring Willingness-To-Pay" [13]:

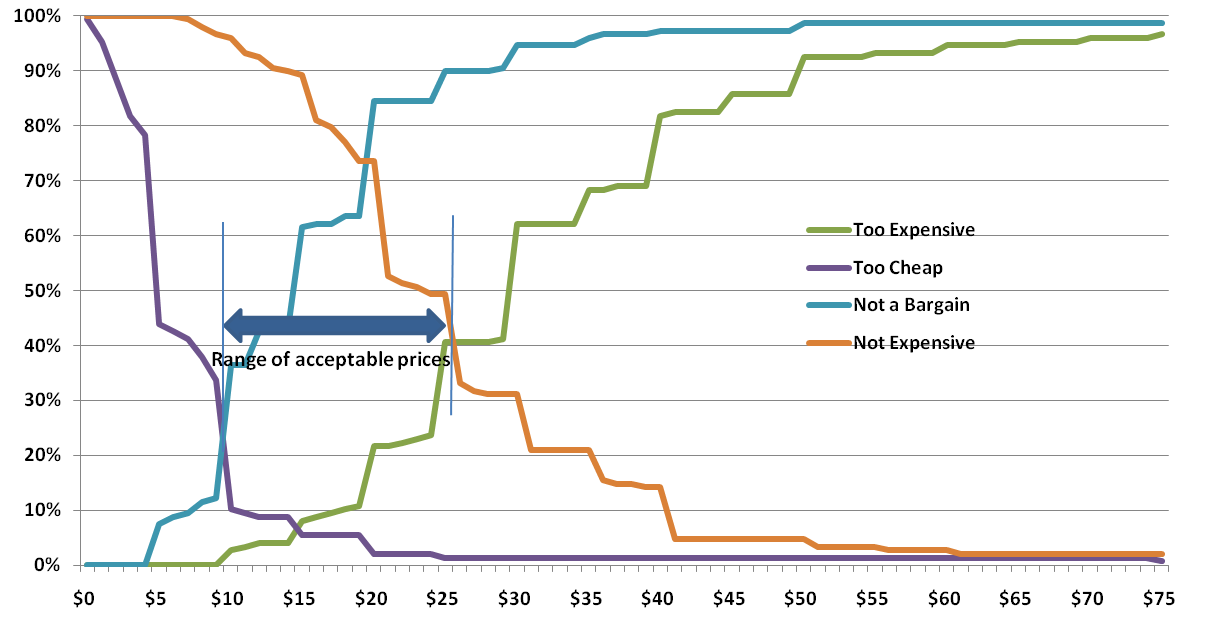

Customer Surveys

If one attempts to forecast consumer behavior in response to different prices, the evident way is to directly ask the customers. One of the first applications of direct surveys was a psychologically motivated method for estimating WTP developed by Stoetzel (1954). Stoetzel's idea was that there is a maximum and minimum price for each product which can be elicited by directly asking the customers. Studies based on this idea consist of the following two questions formulated by Marbeau (1987):

- Above which price would you definitely not buy the product, because you can't afford it or because you didn't think it was worth the money?

- Below which price would you say you would not buy the product because you would start to suspect the quality?

Directly asking respondents to indicate acceptable prices is referred to as a direct approach to measure WTP. Other researchers continued to build upon this idea and research in this area became quite popular (e.g., Abrams, 1964; Gabor et al., 1970; and Stout, 1969). Van Westendorp (1976) introduced a price sensitivity meter which included two additional questions on a reasonable cheap price and a reasonable expensive price of the product under consideration. Applications of this approach can be still found in commercial applications (for example, the pan-European market research company GfK utilizes the procedure to attain critical price ranges for new or relaunched products).

Recently, several other procedures based on direct customer surveying have been established. An example is the commercial tool BASES Price Advisor by ACNielsen. The tool's procedure presents the subjects with several typical product profiles. The products can be in an early conceptual phase or already marketable. The subjects are then asked to name prices at which they consider a product to have a very good value, an average value, and a somewhat poor value. From the responses, purchase probabilities for different prices are derived. According to Balderjahn (2003, p. 392) the price for “a somewhat poor” value could be interpreted as reflecting a respondent's WTP.

Quite obviously, directly surveying customers has some flaws:

- By directly asking the customers for a price, there is an unnatural focus on price which can displace the importance of a product's other attributes.

- Customers do not necessarily have an incentive to reveal their true WTP. They might overstate prices because of prestige effects or understate prices because of consumer collaboration effects. Nessim and Dodge (1995, p. 72) suppose that “buyers in direct responding may also attempt to quote artificially lower prices, since many of them perceive their role as conscientious buyers as that of helping to keep prices down.” Nagle and Holden (2002, p. 344) observe the opposite behavior. To not appear stingy to the researcher, respondents could also overstate their WTP.

- Even if customers reveal their true valuations of a good, this valuation does not necessarily translate into real purchasing behavior (Nessim and Dodge, 1995, p. 72).

- Directly asking for WTPs especially for complex and unfamiliar goods is a cognitively challenging task for respondents (Brown et al., 1996). While it remains unclear whether this leads to over- or understating of true valuations a bias is likely to occur. Note, that this effect also occurs in the Vickrey auction and the BDM mechanism which were introduced in the previous section about experiments.

- The perceived valuation of a product is not necessarily stable. Buyers often misjudge the price of a product, especially if it is not a high frequency purchase or an indispensable good (Marbeau, 1987). This can lead to an abrupt WTP change once the customer learns the market price of the product.

An empirical comparison between a field experiment, a laboratory experiment, and a personal interview was carried out by Stout (1969). In this experiment the prices for different products were varied and the changes in demand were measured. The results showed significant quantity changes on systematic price changes in the field experiment. As expected, the demand for the products decreased as the prices were raised and vice versa. For the other two methods, no significant changes in demand for the products could be measured caused by raised and lowered prices. The personal interview even contained reversals. For some respondents the demand increased when the prices were raised.

Overall, directly asking customers' WTP for different products seems not to be a reliable method. Balderjahn (2003, p. 402) explicitly alludes to the distortional effects of direct surveys and pleads against its use. Nagle and Holden (2002, p. 345) even state that “the results of such studies are at best useless and are potentially highly misleading”.

As we will see in the following topics, there are far more valuable tools for understanding the attractiveness and potential pricing of our offering. Please keep in mind the limitations of asking consumers direct "would you buy this?" and "how much?" questions, as they may be far more misleading than they are valuable for our efforts.

With that, we will move on to the next methodology.

Conjoint Analysis


Introduction to Conjoint Analysis

Conjoint analysis tends to be among the most popular stated preference methodologies for developing new offerings, as it helps us understand exactly where the market finds utility in the offering. For example, we could apply conjoint analysis as yet another layer of understanding for our cognitive map, showing us strategic paths and combinations which are perceived to bring the most positive benefit to consumers. While having a consultant perform a full-blown conjoint analysis of the entire cognitive map would likely be prohibitively expensive and require significant sample sizes, conjoint analysis would be very well applied as we choose one or two strategic paths and begin to iterate offerings. In this topic, we will also see what powerful, yet simple software can do to automate both deployment and results of conjoint analysis.

Like many methodologies, conjoint analysis is by no means perfect, but where others attempt to offer a definitive "yes/no/how much" reading for our offering, conjoint analysis tends to be most helpful in showing us "hot spot" attributes and benefits in the offering, and how individual aspects of the offering can increase or decrease perceived utility and value. Please watch the following 4:09 video.

Video: What is conjoint analysis? (4:09)

One of the increasingly popular techniques being used in new product development is an analytical technique called conjoint analysis. The early academic work was done by Professor Paul Green at the Wharton School back in the nineteen-sixties and it's really come into very wide use now. It’s very useful for a number of reasons that I'll get to in a few minutes.

What is conjoint analysis? Well, I think the opening assumption is that if you ask customers, “Do you want this feature? Do you want this feature?” they want everything. But that's not the way it works in the real world. We have to make trade-offs between various features because we usually can't afford to have absolutely everything.

So what Green suggests is a technique where we give people combinations. We give people pairs or groups of products that are a combination of various features and ask them which one they prefer. And the example I always like to give is the following:

Let’s say you're gonna be flying to Paris from here in Boston and I'm gonna give you two options. Okay, option 1 is United Airlines. It's a Boeing 767. The seat width is this. The seat pitch, i.e. the distance in front of you, is this. The food quality is pretty good. The on-time performance is eighty percent and the price is 1450 dollars. So that’s option 1.

Option two is Air France. It’s an Airbus A340. The seat is a little bit wider but the seat pitch is a little bit less. The food quality - of course, it’s Air France so the food is terrific. The on-time performance is a little less good at seventy percent. The price is a little higher at sixteen hundred and fifty dollars.

Which of those two do you prefer?

Now if I do that, what I'm doing is I am implicitly asking you to trade off a bunch of potential features or attributes in a product: airline; aircraft; seat width; seat pitch; food quality; on-time performance; and price. And if I create an experimental design and give you enough combinations of products like that I can derive out of that how much utility you derive or how much importance you place on each of those various attributes. And that’s referred to as conjoint analysis.

The term comes - it's a contraction of the words considered jointly. It's a somewhat complex thing to do although there are great tools that have made it quite a bit easier today.

The real benefits of conjoint analysis are two-fold. First of all, the variables can be categorical rather than continuous. So we could have Air France and United where there's no obvious higher lower interval - anything like that. They're simply categorical. The other thing is it's the only technique in all of market research that has been shown to be valid in evaluating price. That is, it answers the question of how much a customer would be willing to pay for a given feature or level of some attribute in a product. The old method of asking a customer, “how much would you be willing to pay for this feature?” is just shown to be completely invalid. The customer either doesn't realistically know or they’ll game the system. I mean no one in their right mind would, if a car dealer asked them, “how much would you pay for this car?” No one in their right mind would tell them the truth, and that's a problem in that old style of trying to get pricing.

Conjoint analysis presents price as a trade-off in a whole series of attributes. It works much, much better.
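To make the idea of "deriving utility" from trade-offs a little more concrete, here is a minimal sketch in Python. Please note the assumptions: it uses a ratings-based design fitted with ordinary least squares, which is a simpler relative of the choice-based comparison described in the video, and every attribute level, rating, and coefficient below is made up purely for illustration. The ratings are synthesized without noise from known preferences, so we can see that the regression recovers them.

```python
import itertools
import numpy as np

# Hypothetical attributes and levels, loosely following the flight example.
airlines = ["United", "Air France"]
food_levels = ["good", "terrific"]
prices = [1450.0, 1650.0]

# Full-factorial design: every combination of levels is one product profile.
profiles = list(itertools.product(airlines, food_levels, prices))

def encode(profile):
    """Dummy-code a profile: intercept, Air France, terrific food, price in $100s."""
    airline, food, price = profile
    return [1.0, float(airline == "Air France"), float(food == "terrific"), price / 100.0]

X = np.array([encode(p) for p in profiles])

# Made-up "true" preferences, used only to synthesize ratings for this demo.
true_beta = np.array([20.0, 0.5, 1.5, -1.0])
ratings = X @ true_beta  # noise-free, so the recovery is easy to check

# Ordinary least squares estimates the part-worth utilities from the ratings.
beta, *_ = np.linalg.lstsq(X, ratings, rcond=None)
intercept, u_air_france, u_terrific_food, u_price_per_100 = beta

# Implied willingness to pay for a feature:
# its utility divided by the utility lost per dollar of price.
wtp_terrific_food = u_terrific_food / (-u_price_per_100 / 100.0)

# Attribute importance: each attribute's utility range as a share of all ranges.
ranges = {
    "airline": abs(u_air_france),
    "food": abs(u_terrific_food),
    "price": abs(u_price_per_100) * (max(prices) - min(prices)) / 100.0,
}
total_range = sum(ranges.values())
importance = {name: r / total_range for name, r in ranges.items()}

print(f"Implied WTP for terrific food: ${wtp_terrific_food:.0f}")
for name, share in importance.items():
    print(f"Importance of {name}: {share:.1%}")
```

In a real study the ratings or choices would come from respondents, the design would usually be a fraction of the full set of combinations, and commercial tools typically estimate utilities with more sophisticated models than this simple regression.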

What is also useful about conjoint analysis is there are quite a few variations and tactics which can be applied to increase validity, ensure appropriate attention from participants, and improve the accuracy of overall findings. In some cases, these variations involve using actual product or credits as the incentive for accuracy, as the participant would actually be receiving the product they choose, or have the right to purchase the product at a certain price.

For one variant of conjoint analysis designed to increase experimental validity, please review "An Incentive-Aligned Mechanism for Conjoint Analysis" by Min Ding. [15]

Options to Structure and Deploy Conjoint Analysis Easily

There are also quite a few reputable software packages that allow us to structure, deploy, and read the results of our conjoint analysis. For a very basic example, Sawtooth Software has made available a simplified trial of their Choice-based Conjoint survey tool [16] so that potential users can try the package.

The video below gives you some feel for the power and usefulness of conjoint analysis software, in this case the Number Analytics offering. Please watch the following 1:45 video.

Video: Number Conjoint Introduction (1:45)

With conjoint, one is trying to understand the preferences of consumers. And so the software allows you to recover the preferences of consumers at the individual respondent level by using fairly sophisticated hierarchical Bayesian methods when trying to understand the preferences of individual consumers.

Now we’ll look at the analytics of conjoint analysis. You can add the features and the questions and settings.

Now our questionnaire is generated from the conjoint design we just created.

Now we’ve got the results. The first shows importance. Then the utility for each attribute will be shown with different colors.

Check our summary of statistics for these attributes and importance.

Individual estimates.

Demographics.

So, the males dislike Dunkin’ and the Indians like Dunkin’.

Market simulator. So we can see that I changed price.
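The "market simulator" step at the end of the video can be approximated with a few lines of Python. This is only a sketch under stated assumptions: it applies the commonly used logit (share of preference) rule, and the brand names, utilities, and price coefficient are hypothetical values, not outputs of the software shown.

```python
import numpy as np

def share_of_preference(utilities):
    """Logit rule: share_i = exp(u_i) / sum_j exp(u_j)."""
    u = np.asarray(utilities, dtype=float)
    exp_u = np.exp(u - u.max())  # subtracting the max keeps exp() numerically stable
    return exp_u / exp_u.sum()

# Hypothetical part-worths, as if estimated in an earlier conjoint study.
BRAND_UTILITY = {"Brand A": 0.6, "Brand B": 0.0}
PRICE_UTILITY_PER_DOLLAR = -0.5

def simulate(prices):
    """Return each brand's predicted share for a dict of brand -> price."""
    names = list(prices)
    utilities = [BRAND_UTILITY[n] + PRICE_UTILITY_PER_DOLLAR * prices[n] for n in names]
    return dict(zip(names, share_of_preference(utilities)))

base = simulate({"Brand A": 3.0, "Brand B": 3.0})
raised = simulate({"Brand A": 4.0, "Brand B": 3.0})  # Brand A raises its price by $1

print(f"Brand A share at $3: {base['Brand A']:.1%}")
print(f"Brand A share at $4: {raised['Brand A']:.1%}")
```

Changing one price and re-running the simulation shows how predicted shares shift between the competing offerings, which is the kind of "what if" exploration the simulator is meant to support.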

Van Westendorp Meter


Introduction

The Van Westendorp meter (more properly, the "Van Westendorp Price Sensitivity Meter") is something of an extension of the direct consumer survey we eschewed a few brief topics ago. But, like many methodologies related to pricing and analyzing perceived benefit in offerings, the Van Westendorp offers a few advantages that may make it more appealing in use.
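As a rough illustration of how the four price questions are typically turned into a chart, here is a minimal Python sketch. The respondent answers are invented, and the two crossing points it reports (often labeled the "optimal price point" and the "indifference price point") follow one common textbook reading of the method, so please treat the names and the simple grid-based crossing rule as assumptions rather than a definitive implementation.

```python
import numpy as np

# Hypothetical answers in dollars, one row per respondent:
# too cheap, cheap (bargain), expensive, too expensive
answers = np.array([
    [1, 3, 5, 7],
    [2, 5, 8, 11],
    [3, 6, 9, 12],
    [3, 7, 10, 13],
    [4, 8, 11, 14],
    [4, 8, 12, 16],
    [5, 9, 13, 17],
    [9, 11, 15, 20],
], dtype=float)
too_cheap, cheap, expensive, too_expensive = answers.T

# Candidate prices at which the cumulative curves are evaluated.
grid = np.arange(0.0, 25.0, 0.5)

def share_at_or_above(values):
    """Falling curve: share of respondents whose stated price is >= each grid price."""
    return np.array([(values >= p).mean() for p in grid])

def share_at_or_below(values):
    """Rising curve: share of respondents whose stated price is <= each grid price."""
    return np.array([(values <= p).mean() for p in grid])

def first_crossing(falling, rising):
    """First grid price at which the rising curve meets or exceeds the falling one."""
    return grid[np.argmax(rising >= falling)]

# "Too cheap" vs. "too expensive": often called the optimal price point.
opp = first_crossing(share_at_or_above(too_cheap), share_at_or_below(too_expensive))

# "Cheap" vs. "expensive": often called the indifference price point.
ipp = first_crossing(share_at_or_above(cheap), share_at_or_below(expensive))

print(f"Optimal price point (approx.): ${opp:.2f}")
print(f"Indifference price point (approx.): ${ipp:.2f}")
```

With real survey data we would plot all four curves and read the crossings off the chart; a finer price grid or interpolation would give smoother estimates than this toy example.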

A brief walkthrough of the Van Westendorp in practice from Mike Pritchard [18]:

First, a refresher. Van Westendorp's Price Sensitivity Meter is one of a number of direct techniques to research pricing. Direct techniques assume that people have some understanding of what a product or service is worth, and therefore that it makes sense to ask explicitly about price. By contrast, indirect techniques, typically using conjoint or discrete choice analysis, combine the price with other attributes, ask questions about the total package, and then extract feelings about price from the results.

I prefer direct pricing techniques in most situations for several reasons:

- I believe people can usually give realistic answers about price.

- Indirect techniques are generally more expensive because of setup and analysis.