11 - Testing the Offering II: Revealed Preference

Lesson 11 Overview

Summary

At this point in our journey of creating a concept and progressing it toward launch, we are in what I would consider the 'late phase' of concept testing. This is the point where we want to see how the entire 'package' may be received by the marketplace, but before full launch. I describe the goal in this phase as "dollars and minutes." We want to see, at small scale, how the audience responds to the concept. Ultimately, we are beyond the niceties of stated preference measures and Likert scales and now into real performance.

In this phase of testing, we can further tune the concept and hone messaging, examine areas of underperformance and overperformance, and see if the entire concept package appears to generally deliver to expectation.

The beauty of this approach is that all of this is still happening prelaunch, so in the grand scale of the program, spend and resourcing are still fairly compact. We want to make the best possible case to either fully scale and resource the concept, or otherwise to sever it and move on to the next.

The intent of this Lesson is to show you how to "microtest" to understand real concept performance in the market, but in small scale.

Learning Outcomes

By the end of this lesson, you should be able to:

- articulate the strengths and limitations of the revealed preference methodologies;

- discern where microtesting may be most valuable;

- understand the tools and philosophies of microtesting;

- create a framework for microtesting an offering.

Lesson Roadmap

| To Read | Documents and assets as noted/linked in the Lesson (optional) |
|---|---|
| To Do | Case Assignment: Microtesting the Offering |

Questions?

If you have any questions, please send them to my faculty email, axj153@psu.edu [1]. I will check daily to respond. If your question is one that is relevant to the entire class, I may respond to the entire class rather than individually.

The Importance of Live Testing

Seeking Truth, Not Simulation

I'm going to be a touch blunt here, so if you're averse, simply read in a gentle, whispering internal voice.

There is a certain behavior from organizations and people in the sustainability space that treats an offering "being interesting" or "creating goodwill" or "building brand equity" or "increasing our sustainability cred" as, in and of itself, the end goal. Perhaps this is OK in your organization. Perhaps it is part of a longer term strategy. Fair enough. But this is not typically the case, because if there were a strategy in place, one would be met with more than blank stares when asking, "So how is your program progressing toward its goals so far?"

Here's the thing about any program or offering that just kind of happily floats around in this milky-white ether of suspended animation: If it can't show progress toward whatever goals it has set (if it has set any), it will be cut. Be it a management change or a lean year, it will be cut. Chances are, if "sustainability" as defined by these types of organizations is cut, it isn't coming back.

If you see organizations displaying signs of "sustainability as PR"–large organizations with billions of dollars of revenue and $20,000 annual "sustainability" budgets–chances are they are very much functioning in this space. The program is simply floating along: not "succeeding," but not "failing"... and not yet cut.

Because we see sustainability as an opportunity to "do well while doing good," we see sustainability as presenting us with meaningful opportunities everywhere, on fronts as diverse as employee retention and new product development. Opportunities are measured.

No matter the strategy, be it a social campaign, new product development, brand building, or any other strategy related to our sustainability offering, it lands on Dollars and Minutes. Here's why: Both of those units require a live human being (i.e., customer) to give us something of value to them: their time and/or their money.

This may all seem very capitalistic/oppressive/harsh, but, in actuality, I think you may find it quite liberating. Why? Because if we are truly about sustainability, we must be about sustainable sustainability. What feeds "sustainable sustainability?" Dollars and Minutes. What shows us that our program, initiative, or offering is indeed making an impact? Dollars and Minutes.

Here are a few examples, in application:

- You are a non-profit tasked with creating an offering to reach new types of donors.

  - Minutes: The time spent on your site and social sites by potential donors will directly influence the success of the program (i.e., they're aware and finding interest); potential for the offering to attract new volunteers willing to give their time to your cause.

  - Dollars: % of new offering prospects which result in donation; total donations in the pipeline.

- You are implementing a new, voluntary, waste minimization program across sites of your organization.

  - Minutes: The total time spent by sites engaging in the program; attendance of kickoff meetings.

  - Dollars: Reduced disposal costs; reduced waste handling and labor costs; reduced shipping costs from minimized material and dunnage.

- You are releasing a new sustainability-oriented line of products.

  - Minutes: The time spent by prospective customers on the offering landing page; time spent by customers requesting information and traveling further down the conversion funnel.

  - Dollars: Sales of sustainability-centric product line to existing customers; sales of sustainability-centric product line to new customers; downstream sales to new customers acquired by sustainability-centric offering.

- You have been engaged by a PR firm promising one million print and online impressions in the next month for your sustainability program and new CSR... guess what you want to measure?

Revealed preference methods (testing in the real world) are the path that gets us to Dollars and Minutes as quickly as possible, so we can find truths about our offering.

Because we believe in not only sustainability but also sustainable sustainability, we need to get to those Dollars and Minutes. Regardless of whether we are talking about a speaker series on organic farming or a new sustainability-centric product, these two measures show how our offering is providing value to the organization ... and the world.

Without these two measures, we simply create offerings without customers and campaigns without audiences... and if you believe sustainability is about inaction, you're probably in the wrong field.

Beta Testing

A Page From the Tech Industry

We are going to talk later about a derivative of Beta testing which is a bit different from the version applied by the tech industry (more specifically, the software industry). Nonetheless, I would like to offer a primer on "true" Beta testing.

"Pure" Beta, as applied in tech, tends to be weighted more heavily toward finding bugs and flaws in the product than toward garnering live, revealed preference data on how the market receives the offering. Because usability and performance testing tends to be the emphasis in the tech industry, their Betas tend to have little to no emphasis on understanding purchase behaviors, instead recruiting participants through either "closed" or "open" Betas.

Closed or "by invitation" Beta tests are as they sound: They are closed to the average Joe, and instead rely on either an outbound invitation from the organization, or on your applying for consideration for a Beta slot. You will sometimes see this structure implemented when the offering being tested is of a sensitive or confidential nature, when a company wants to hand-pick a group of Beta testers based on past purchases or behaviors, or when they simply want to limit the number of participants.

Open Beta tests allow virtually anyone to participate, perhaps with minimal barriers to ensure the software/product will be used in the proper environment. This testing may allow free and open download of a Beta software version and simply follow up with all users as to their experiences, or in the case of limited physical products, may allow the first 500 or 1000 participants into the Beta before closing it.

As we will see, depending on where we are in our offering development process, we can apply the Beta logic in a wide variety of ways to meet our learning needs at that specific time. For example, if we were early in the offering development and had a prototype we needed early feedback for, we would likely lean toward a closed Beta with customers or organizations with which we are familiar to get some of the prototypes into the field and see how they perform. This would provide us fast feedback without having to recruit new and unproven participants, etc.

Example of a Closed Hardware Beta

As an aside, the example below comes from one of the best-executed closed Betas I have seen in quite some time. It was for the Steam Machine, a new gaming console from an established content provider.

The specific byproduct I would like to point out here is how Betas, especially closed Betas, can be a fantastic engagement tool for customers and prospects alike.

In the case of the Steam Machine, there were hundreds of thousands, if not millions, of people outside the 300 Beta participants who were vicariously participating through hourly updates and postings as these mysterious crates began arriving at homes. While I am personally not a gamer, I followed this story in 2013, as it was a fascinating example of what a beautifully deployed Beta can do in a high-engagement group.

If it is any evidence, the video below of unboxing the Steam Machine Beta has almost 500k views. (Feel free to scrub through the following 7:23 video to see how the Beta was presented.)

Video: Steam Machine Unboxing (7:23)

Well, it finally came in today, the Steam Machine. Hi my name is Ellis otherwise known as Oolen on the internet, and I was one of the few lucky people selected by Valve to receive a Steam Machine. It's only in its beta state, but I'm pretty sure it's indicative of what the final version is going to be. So let's crack this open and see what we got. Here's the unit itself. Open this up. Now I'm pretty sure everyone knows what this thing is, but for those who don't it's Valve's attempt at bridging the gap between console and PC players. You play your games on your big screen TV in the living room instead of on your 20 inch monitor. Wow, here it is. It's got audio jacks, it's got USB 2.0 ports, looks like some vga seta. Basically this is a mid, mid to high-level PC, so it is expandable but like i said it's mostly console experience in the living room. It runs natively on a Linux-based operating system called Steam-OS. Now, here's the unit side by side with an Xbox. They're similar in size, but the steam machine is quite a bit heavier. Side profile right there so comparable, but a little bit bigger than the old Xbox.

Here we have the controller and definitely tell this is a beta controller just because the, the plastic feel and I, I believe the concept images had some sort of a touch screen, but here you have four buttons. It's got two concave trackpads as opposed to analog sticks and you know what it's, it's pretty comfortable. It's got a second micro USB port right there and then it has these two buttons we can click right there. I'll make a separate video about the control later. Here you see it next to a DualShock 3 controller. It's quite a bit larger. The weight, it's it's about the same it's a little bigger, so the weight is distributed a little more so the PS3 controller feels heavier. The weight is concentrated more in these wings right here. It's quite a bit lighter in the center, but there's that right there for comparison.

Here we have.. what's this? Thank you for shaping the future of Steam. Your feedback will refine Steam-OS, and this is just an outline of the system. Wi-Fi where that is, if you don't know how to use a HDMI cable this will help you out. Here's some important information just how to place it and you actually place it a horizontal not vertically like I placed it that's why it's good to read the instructions. So let's place the right way which is that. Ok, power cables, HDMI cables, no, this is USB cable, I'm sorry. USB power. I don't know what that is, but I'm sure I will find out soon. And then Steam Operating System recovery, there you go. Seems to be it as far as that goes. Well let's plug this thing in and see how it runs.

So here's what the device looks like turned on. This button right here is actually a button you push it's not a just like a touch and on the side right here it says gforce gtx. So I'm interested to know if you change out the graphics if it will indeed update or this is even customizable at all. So that is cool. Now here's our login screen to log into my account. oh geez, I'm going to have to edit this out so you don't see my password, but this is how you input text actually it's a good time to tell you. Now the controller it takes some getting used to, but I can tell that with some practice you can get some pretty good precision on there. Now here's the main screen it's a pretty streamline. It looks similar to you know, your Xbox dashboard, PS3, you know typical console. Now we can go to our library, view all games. i can see i have almost two hundred fifty games, but not every game you could install from the start. Like I was interested to see how something like Company of Heroes would play on the controller, but we can't have that yet, but I am installing a few games. Going kind of slow, I don't want to install Dota though. Actually I do because that's a good test of the controller Super Meat Boy, Serious Sam, Faster than Light, FTL, Painkiller, and Hotline Miami. A lot of these games such as these two were gifted to me by Valve to test out the machine, but that's it for this video. If you want anymore just tell me in the comments down below. If you want to know how any of the games play. I plan on doing at least one video showcasing what the controller can do on different genres such as shooters strategy games like DOTA and games that require precise platforming like Super Meat Boy.

Well, that wraps it up for this video if you liked it subscribe and favorite. If you didn't like it, subscribe anyway because I have other videos that you might like.

Six Steps of Beta

If we are considering Pure Beta as applied by myriad software companies, the following offers a simplified view of the six steps of Beta.

From the Centercode Beta Testing Process [3]:

Step 1: Project Planning

Before beginning a beta test, the objectives of the project must be defined. It's common for the number of unique goals in a beta test to range from just a few to upwards of 20. Defining these goals in advance ensures that the appropriate number and composition of participants are selected, an adequate amount of time is available, and everyone involved understands what needs to be accomplished.

Step 2: Participant Recruitment

Beta testing begins with the selection of test candidates. The ideal candidates are those who match the product's target market and whose opinions won't be swayed by a prior relationship with the company. Most private beta tests include anywhere from 10 to 250 participants. However, this number is highly dependent on the complexity of the product, the audience involved, the time available for testing, and the individual goals you'd like to achieve.

Step 3: Product Distribution

Next, products are distributed to beta participants. The focus of a beta test is to understand the customer experience as though they purchased the product themselves. With this in mind, beta is most effective when a complete package including all appropriate materials (software, hardware, manuals, etc.) are sent to participants.

Step 4: Collecting Feedback

Once your participants begin to use the beta product, feedback needs to be gathered quickly. This feedback comes in many valuable forms including bug reports, general comments, quotes, suggestions, surveys, and testimonials. With good beta management and communication tools, you can get a lot of feedback from test participants.

Step 5: Evaluating Feedback

A beta test provides a wealth of data about your product and how your customers view it. However, that information is useless unless it's effectively evaluated and organized to be manageable. All feedback should be systematically reviewed based on its impact on the product and relevant teams.

While bugs are often the core focus of a beta, other valuable data can also be derived from the test. Marketing and public relations material, customer support data, strategic sales information, and other information can all be collected from an effective beta test.

Step 6: Beta Conclusion

When a beta test comes to a conclusion, it's important to provide closure to both the project and the beta participants. This means providing feedback to the participants about their issue submissions, updating them on the status of the product, and taking the time to thank and reward them for their effort.

Weakness of Pure Beta

As you can perhaps imagine, having a closed pure Beta with 250 participants as described would provide some extremely useful feedback from users on the software, usability, instructions, and the entire use experience.

What it would NOT give us is any revealed preference data on our offering. If we were to use Beta testing to try to understand market preference, we would have quite a few issues with this methodology:

- Betas of this type are essentially always free, or, in some cases, participants may be compensated with product or vouchers.

  - Issue #1: No Dollars for us to understand real preferences and behaviors.

- Betas of this type usually have specific guidance and instructions on how to use the product, and what feedback is desired at each step.

  - Issue #2: Minutes of participant attention/use are therefore skewed because we are setting behavior, and not letting users find their own way.

- In the case of closed Beta, the organization will be responsible for the composition of those testing the product.

  - Issue #3: Bias may be introduced into participant selection in a variety of ways, from heavy early adopter loading to those who would likely have a favorable opinion of the organization and its products. Furthermore, it would be difficult for us to choose users in the target market if we have not yet proven what that target market is.

For those reasons, in our next topic, we will explore a philosophy which has foundations in Beta, but is better suited to our needs in testing the proposition and offering in the market quickly and inexpensively. We may call this philosophy "microtesting."

Philosophies of Microtesting

The "Product Launch" Mindset

There tends to be a belief in testing, developing, and releasing new offerings that is based in the practices and norms of decades past instead of what is possible today, and I would like to delve into this a bit.

When you hear about "product launch," it tends to be framed as a time of finality, that when launch happens, that is IT. The button is pushed, the impact will happen shortly thereafter.

Think of the very linguistics of the term "product launch"... there aren't too many occasions when you get a "do over" on things which are "launched." Oddly, we, as a society and a profession, have elected to have the dominant metaphor for selling "innocuous new product #8956" to be the same term we use for missiles and rockets.

Furthermore, piggybacking on the launch metaphor is the idea that product launch is the "big date," used to rally teams and give visibility to programs, as if the organization is launching a man into orbit. Calendars are marked, countdowns are created, lavish lunches from Chipotle are had for all supporting our product astronauts in their epic journey to market.

If you're simply adding that "innocuous new product #8956" alongside your other 8955 products, the launch mindset may work for you. But if you're releasing a sustainability-driven innovation into a new space, and a category your organization has not sold into before, the launch mindset can be exceptionally counterproductive.

As long as so much emphasis is placed on this single, terminal, end-all launch date, it means that much of the testing will have to be based on closed tests, surveys, and other hypotheticals. It makes sense why stated preference methodologies, despite significant flaws and inaccuracies, could become so popular, as 'There is no way we could possibly sell product before launch!'

Consider also that the launch mindset likely served people well in times when the dominant media were newspaper, radio, TV, catalog, and the like. When you were buying airtime and page ads weeks and months in advance, there was a need for definite dates around which to schedule media.

Today though, for all of the emphasis any organization may place on their product launch, what are the chances it means anything to customers? In all the consumeristic love of things, how many product launch dates really make it into our consciousness every year, especially after removing Apple from consideration? Three? Five?

And what are the chances your epic product launch date will have so much pre-release power to find itself launched into the stratosphere of public consciousness on Day One? Perhaps zero?

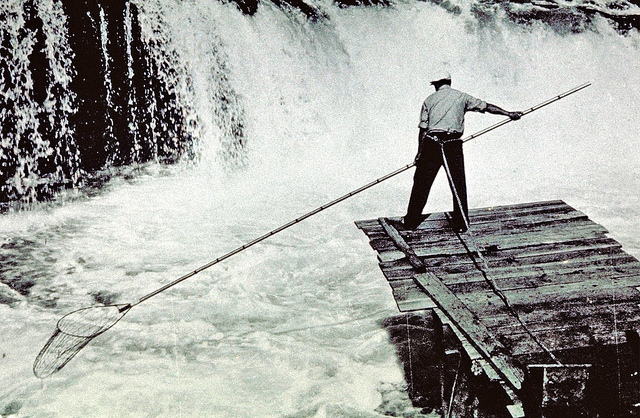

Microtesting: An Approach Inspired by Biologists

Imagine a stream of consciousness connecting us all for hours a day. Our thoughts, our feelings, our needs, what we want for lunch, how we will get there, classes from our favorite University, any and every thought happening in this massive whitewater. This is the internet.

So, if we seek to learn what is happening within that torrent of information, we have the ability to do what a marine biologist would do, and that is dipping a small sampling net into different locations, at different times, and with different mesh sizes, and recording what fish happen to appear in the net.

We are not damming the river to capture and inventory every fish. We are not artificially partitioning the river to create a "simulated environment." We are not trying to blindly calculate how many fish are in this specific stretch of this river by applying some obsolete calculation or methodology.

We are simply, silently, and invisibly dipping a net into the water and seeing what actually happens. This is the philosophy of what I call microtesting.

If we are engaging in microtesting, we must set aside the single-shot Product Launch Mindset, as we will be testing propositions and conducting tests online using a variety of tactics. If you go by the strictest definition, these tactics will indeed constitute "releasing" (or more appropriately, "pre-releasing") the offering to a limited number of customers. Microtesting is designed to facilitate small, tightly designed, limited-term tests in the live market from which we can refine the offering. Importantly, it is entirely within your control to limit exactly how many people see the stimulus, exactly what stimulus they see, and when you choose to pause the campaign, and even to screen competitors from seeing the stimuli. Want to test around a geographic area in which you may be building a limited test market? You can do that, as well.

Importantly, if your organization still wants a big Launch for the offering, it certainly can, but you will ensure the Launch is based on live learnings, proven messages, and fact.

In the next Lesson, we will cover some of the tactics of microtesting and how they may be applied at this phase in the innovation process to provide us with live data on virtually anything we seek to learn about the offering.

Tactics of Microtesting

Brief Introduction to Pay-Per-Click (PPC) Advertising

For the purposes of all of our discussions and to avoid having to address the nuances of multiple ad platforms, "PPC" will refer to Google AdWords, as it controls about 70% of the PPC advertising market.

One of the most useful byproducts of our use of PPC for microtesting is that there is a stunning amount of information and tutorials available for all levels of experience, and essentially anything you need to accomplish. You will quickly see as you microtest that there are many, many PPC consultants and experts out there who do nothing but test and refine campaigns for ecommerce conversion and sales. While our application is a bit different, know that if you use this technique for testing, external resources are ample and easily engaged.

So, please know that there is a pretty significant difference between riding a bike and riding in the Tour de France in regard to the art and science of PPC... but for our purposes, I hope to demonstrate that someone with little experience will be able to set up initial microtesting quite quickly. Please watch the following 3:53 video.

Video: What is AdWords? (3:53)

So what is Adwords? Put simply, AdWords is Google's online advertising platform that can help you drive interested people to your website. AdWords allows you to take advantage of the millions of searches conducted on Google each day. You create ads for your business and choose when you want them to appear on Google above or next to relevant search results. The concept is simple you enter words that are relevant to your products or services, and then AdWords shows your ad on Google when someone searches for that or related words.

So how does AdWords work? Say you search for window repair. Google combs through billions of web pages, blogs, and other listings to find the ones most relevant to window repair. These are your search results. Looks familiar, right? But wait, there are thousands of search results here. Many of them are other businesses also providing window repair, but not all businesses may be listed among the top results. AdWords gives your business visibility even if your website is not in the top results. AdWords can help get your business to appear on Google in front of many potential customers. They search, they find your business, they click, they could become your customers.

Let's take a look at another example of how AdWords can help you grow your business. Say you want to attract customers in your local area. AdWords lets you pick when and where you want your ads to show. That is you can target your ads so that whenever people in your state, region, city, or neighborhood search for businesses like yours your ads show up next to their search results.

With AdWords you can also display your ad on thousands of sites across the web. Your ads will show up when potential customers are visiting sites related to the products and services you offer. For example, let's say you sell fitness apparel. Your ads might appear on sites that discuss fitness workouts, healthy living, and related topics anyone browsing the web for new workout gear and learning about the latest Fitness trend may be interested in buying from your site.

Lastly, every day millions of people access the internet from their mobile devices. They research products and services, search for local businesses, and click on your ad from their mobile phones to call you directly for more information. Your potential clients are on the move and with AdWords your business can be wherever your customers are. As you can see AdWords can help you attract new customers and grow your business online. In addition to helping you create ads that target the people most likely to buy your products and services at the time they're most ready, it also helps you manage and control your advertising spend. With Adwords you select the maximum amount that you are willing to spend and you only pay when someone clicks on your ad and visits your site.

So what is AdWords? Well, it can be a key part to marketing and growing your business online. It allows potential customers to find you on Google and many other websites. You only pay when potential customers click on your ad and then actually visit your website. It lets potential clients know you're open for business online. It's the smart way to attract customers on the go. It's a handy way to attract potential from near or far. Take your online marketing to the next level and set up your AdWords account today.

A Note on AdWords Keyword Tool

There are myriad short, step-by-step videos [11] on how to get started in setting up a Google AdWords account when you are ready. It takes about three minutes to get started.

While we will be seeing quite a bit of AdWords and we will be mocking up keywords and test designs for this week's assignment, we will not be setting up live AdWords accounts in class.

Here's why:

The AdWords Keyword Tool and other research tools used to be freely available online, until Google required you to create an account to access them. That is usually no problem, but Google no longer allows you to create that account without entering a valid credit card. Although you can set the account to not make any charges, I am not comfortable asking you to do so for class.

Happily, for our discussion, we can emulate about 90% of the core function of the Keyword Tool (and more) with SEMRush [12], an excellent package of research tools with fantastic analytics and trend data to help in decision making. Most importantly, it also offers a freely accessible trial. So while it will not provide the direct tap into Google ad pricing and search volumes AdWords would, it is more than ample for our purposes.

I just wanted to be clear there about the disconnect of talking about AdWords, but using SEMRush for research. We will each set up trial accounts for this week's assignment, but the Pro trial is only active for 24 hours, so you will want to delay setting that up until you are ready to begin your assignment.

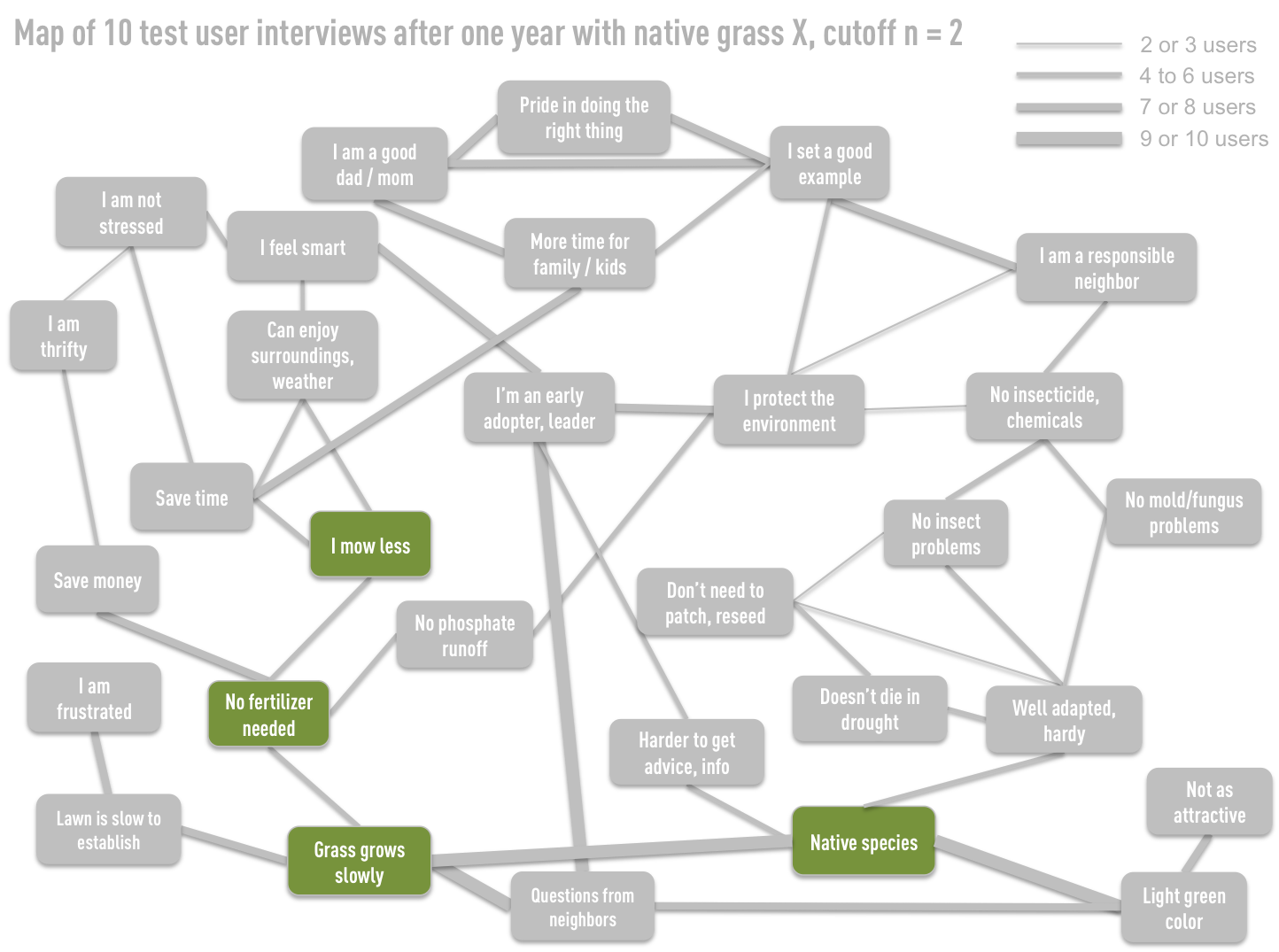

Framing the Experiment

For the sake of this example, let's imagine that we have decided to explore one of the more straightforward strategic paths we proposed in Chapter 9, "The Lean Operation." Here is how we defined that path:

In this case, our goal is to understand if we can dip our net into the stream of people interested in and currently searching related topics/keywords to see what our conversion model could look like. In essence, in this microtest, our first step is to see if the market is interested in our most simplified proposition, and part of the beauty of microtesting is that we may have many tests of different executions on the same path and different paths running simultaneously.

The PPC Ad: Our initial "Escalator Pitch" to Test the Proposition

If you have ever heard of crafting a 30-second "elevator pitch" to effectively pitch a new offering to a prospect, you could think of what we are creating as closer to an "escalator pitch." Having 30 seconds to lay out our proposition on the web is a luxury we do not have, and we are realistically closer to the time we would have to talk to someone passing us on the down escalator while we were riding the up escalator.

At the highest level, we have to condense the most important "hook" of the strategic path into an ad totaling 130 characters. Of that, 35 characters are the URL you are linking to, so for usable message space, we are looking at a scant 95 characters to depict our proposition.

This may sound intimidating, but here are a few elements playing significantly into your favor:

- When your ad displays, people are already searching for information on a related topic, so they are already primed.

- You do not have to get it even close to "right" the first time, which is part of our testing.

- Because it is not an old media "Launch," you can change the ad in 30 seconds, 24x7x365.

- There are no high cost/risk factors.

- 95 characters is more than you think.
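
The character budget described above can be checked mechanically while drafting ad copy. The following is a minimal Python sketch that uses only the figures from this lesson (130 characters total, 35 of them for the URL). The function name and the draft copy are hypothetical, and the limits enforced by the ad platform itself may differ.

```python
# Character budget from the lesson: 130 total, 35 reserved for the URL.
TOTAL_CHARS = 130
URL_CHARS = 35
MESSAGE_CHARS = TOTAL_CHARS - URL_CHARS  # 95 usable characters


def check_ad_copy(message: str) -> tuple[bool, int]:
    """Return (fits, characters_remaining) for a draft ad message."""
    remaining = MESSAGE_CHARS - len(message)
    return remaining >= 0, remaining


# Hypothetical draft copy for the "Tired of Mowing?" proposition.
draft = "Tired of Mowing? Native Seed X grows slowly, so you mow less."
fits, remaining = check_ad_copy(draft)
print(f"fits={fits}, remaining={remaining}")
```

A negative remainder tells you how many characters to trim before the draft fits in the message space.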

For the sake of testing "The Lean Operation" strategic path, let us suppose we want to test the initial viability of three test propositions.

Test Proposition 1: "Tired of Mowing?" In this test, we will actively pursue people shopping for more conventional lawn supplies and attempt to "intercept" them and gauge interest around the "Grass grows slowly" and "I mow less" concepts in the path.

Test Proposition 2: "Savings/spend calculator" In this test, we will again intercept those searching for more conventional lawn supplies. This time, we will call out how much the average home spends on lawn products annually, and what they can save by converting to Native Seed X. We will personalize the message by creating a calculator that will allow the homeowner to enter some basic inputs and get a realistic savings number. This proposition is centered around "No fertilizer needed" and "I mow less."

Test Proposition 3: "Better seed" As a bit of a control, instead of intercepting those with "conventional lawn" interests, in this proposition, we will attempt to sell the prospects of Native Seed X to those already actively searching for native seed. We could consider this as a bit of a counter-strategy to the other two, as the size of the market actively searching for native lawn seed is likely minuscule as compared to conventional lawn products (we will be able to quantify this in a moment). This proposition is centered around "Native seed."

From here, we would go about writing the actual PPC ads for each of the three test propositions in AdWords. Now, of course, we are not going to be the only advertiser in the space, which is also exactly what we desire for the test: to gauge how our proposition performs not in a lab setting, but in the real world, alongside competition.

Defining Keywords

The ads themselves are static, and so we must select those keywords which are related to the content of our ads to determine when they will appear. Almost in the sense of the Cognitive Map itself, we want our ads to essentially parallel when someone is searching for information related to the selected path (i.e., staying on our strategy). This, in essence, is what provides the revealed preference testing. We are not performing a mall intercept survey, or asking random groups of people online... we are placing our proposition in front of those who are actively engaging in the topic and who may be actively looking to purchase products with *real* money.

We would select our keywords based on both our learnings through research and tools to help us make informed keyword decisions in regard to quality and traffic, which we will examine in the next topic.

For "Tired of Mowing," our keywords could be centered around high traffic terms we would want to intercept like "lawn fertilizer," and perhaps we would test lower traffic terms like "mow less" or "low growth lawn."

The keywords for "Savings/spend calculator" could be similar, but could perhaps also extend to "lawn savings" or "fertilizer coupon" to try to appeal to those who already show a desire to spend less on lawn products.

"Better seed" keywords could be more closely related to the seed itself, as this test is for those already searching for native seeds. "Native lawn seed," "North American grasses," and the like would be our keywords here.

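To keep each test proposition tied to its own keyword list, it can help to write the grouping down as data. The sketch below, in Python, uses the example keywords from this section. The matching function is a simplified, hypothetical stand-in for illustration only and is not how AdWords itself matches searches to keywords.

```python
# Keyword lists taken from the lesson's examples, grouped by test proposition.
# The calculator proposition could also share the "Tired of Mowing?" terms;
# only its additional keywords are listed here.
KEYWORDS_BY_PROPOSITION = {
    "Tired of Mowing?": ["lawn fertilizer", "mow less", "low growth lawn"],
    "Savings/spend calculator": ["lawn savings", "fertilizer coupon"],
    "Better seed": ["native lawn seed", "north american grasses"],
}


def matching_propositions(search_query: str) -> list[str]:
    """Return the propositions whose keywords appear in the search query.

    Simplified substring matching, for illustration only.
    """
    query = search_query.lower()
    return [
        proposition
        for proposition, keywords in KEYWORDS_BY_PROPOSITION.items()
        if any(keyword in query for keyword in keywords)
    ]


print(matching_propositions("best lawn fertilizer for spring"))
print(matching_propositions("native lawn seed for shade"))
```

Grouping keywords this way also makes it easy to see when two propositions would compete for the same searches.
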
Creating the Landing Page

A landing page, by definition, is the page someone "lands" on after taking an action. Overwhelmingly, that action is clicking on an ad.

The goal of the landing page is to "continue the thought" of the ad, and to quickly express the proposition and urge the visitor to take the desired action. If the PPC ad itself was the "escalator proposition," the landing page is the "elevator proposition," as we may be designing for 30 seconds of attention as opposed to 6 seconds.

Landing page design and high-level optimization are, in and of themselves, a science. There are literally thousands of people who do nothing but shift elements of a webpage around, test colors, and revise messages to gauge how they may change response and purchase behavior. In our case, because we are simply looking for "signs of life" in our propositions and to begin to understand which may rise to the top, we do not need stunning levels of landing page refinement like an Amazon would.

What we do need is a landing page which we believe expresses the proposition, and has a measurable call to action clearly on the page. Whether that call to action is a pre-order, a catalog request, a sample request, or an order of the product itself, we want the prospect to take some "next step." Ideally, the next step is indeed purchasing the product in question, but given that we may be in pre-release, an "email me when this product is available" may be a logical replacement.

The proposition itself may be expressed in video, image, text or a mix of all, or, in the case of a concept like the "Savings/spend calculator," a very simple and straightforward calculator. Again, all we are looking to do is to provide that 30 seconds of proposition and interest to engage the visitor and make them take the next step.
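
For the "Savings/spend calculator" proposition, the calculator itself can be very small. Here is a minimal Python sketch of the arithmetic, assuming savings come only from dropping fertilizer and mowing less often. Every input name and every number in the example call is hypothetical, not research data.

```python
def annual_savings(lawn_sq_ft: float,
                   fertilizer_cost_per_1000_sq_ft: float,
                   fertilizer_applications_per_year: int,
                   mows_per_year: int,
                   cost_per_mow: float,
                   mowing_reduction: float) -> float:
    """Estimate yearly savings from dropping fertilizer and mowing less.

    mowing_reduction is the assumed fraction of mowings avoided (0.0 to 1.0).
    """
    if not 0.0 <= mowing_reduction <= 1.0:
        raise ValueError("mowing_reduction must be between 0 and 1")
    # "No fertilizer needed": the whole fertilizer spend is counted as saved.
    fertilizer_saved = ((lawn_sq_ft / 1000)
                        * fertilizer_cost_per_1000_sq_ft
                        * fertilizer_applications_per_year)
    # "I mow less": only the avoided share of mowing cost is counted.
    mowing_saved = mows_per_year * cost_per_mow * mowing_reduction
    return round(fertilizer_saved + mowing_saved, 2)


# Hypothetical homeowner inputs, for illustration only.
print(annual_savings(5000, 10.0, 4, 30, 5.0, 0.5))  # prints 275.0
```

On a landing page, the same calculation would sit behind a short form, with the result shown next to the call to action.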

A Brief Example of How the Pieces Work Together

Please watch the following 6:02 video.

Video: The 5 Pillars of AdWords Success (6:02)

Every day your customers and millions of people search the web for products and services like yours. They're presented with thousands of options and make quick decisions about whether to click or pass. Marketing your business online with AdWords can help you have more potential customers discover your business and turn them into real customers. There are five key ingredients that will help you make your ads a success: structure your AdWords account, choose the right keywords, write attention-grabbing ads, select the right landing pages, and track who became your customers. A smartly organized account is the first ingredient that can help make your ad successful, so what does that mean? Simply put, it is a good idea to make sure that your keywords or keyword lists are separated into categories or themes and then create ads that tie directly to the themes of those keywords. This helps to make sure that your ads will speak well to potential customers. For example, let's say you offer flower bouquets and potted plants for special occasions. You can create one keyword list that refers to the flower bouquets you offer with an ad that talks about these bouquets and another list of keywords that talk about potted plants and an ad that talks specifically about those. The more tightly you group your list of keywords, the easier it will be to create ads that speak to what your potential customers are searching for.

The next key ingredient is to choose keywords that are right for your business. Keywords are simply words or phrases that are relevant or related to your products or services. They're the words you think people will search for in order to find your business, and the words AdWords will use to determine whether your ads will show to someone based on what he or she searched for. There are two key tips for choosing the keywords that are right for your business. Choose keywords that are two to three words long. Remember, keywords can be made up of one word or can be a phrase that is a combination of words. The best keywords strike a balance between being too general and too specific. For example, if you sell flower bouquets, the keyword "bouquet" may be too generic and the keyword "organic pink flower bouquet for Mother's Day" may be too specific. "Red roses bouquet" may be just right. Use the keyword tool to find relevant keywords. The keyword tool is a great tool; it allows you to enter words or phrases that you consider relevant to your business, and it will provide you with a list of related words and phrases that may also be relevant for your business. The results are based on words and phrases that people actually searched for on Google, so it can be a great tool to find keywords that speak to potential customers.

Next up, attention-grabbing ads. How do you create ads that speak to potential customers? A good ad should speak to what your potential customer is looking for. Give him or her a small taste of what you've got in store. In other words, why should he or she come and visit your website? And include a call to action, that is, what do you want them to do next? Let's go back to our florist example. If someone were to search for something related to flower bouquets, such as "red roses flower bouquets," "tulip flower bouquet," or "flower bouquets," your ad may read: "Beautiful flower bouquets. Roses, tulips, lilies, and more. Buy now and get twenty percent off." Or if someone were to search for phrases related to potted plants, such as "mini bonsai tree," "white orchid," or "beautiful potted plant," your ad may read: "Beautiful potted plant. Bonsais, orchids, baskets, and more. Order now for next day delivery." Both ads speak to what your potential customers are looking for, entice them by mentioning a wide selection, and invite them to purchase.

After creating enticing ads you have to ask yourself, where do I want my potential customers to go to or which page in my website do I want my potential customer to land on after he or she clicks on my ads? Do you want your customer to land on your homepage, or is there a page that may be better suited? The page that someone gets to after clicking on your ad is also referred to as the landing page. Your landing page can be any page on your website. For example, it could be your homepage or a product specific page, however a good landing page is one that addresses whatever the potential customer was looking for. That is rather than making potential customers search your site to find what they want, you can send them right to the page that's dedicated to the specific product or service that was highlighted in your ad. In our example, for the flower bouquets theme the ad should bring potential customers to a site that features a selection of flower bouquets and the landing page for potted plants should feature a variety of beautiful potted plants that the person can choose from.

The final key ingredient for AdWords is to track how your ads are doing. Log into your AdWords account to see how many people saw your ad and clicked on it to visit your website, and with more advanced tracking tools such as Google Analytics, you can see how much time people spent on your site after clicking on your ad and if they purchased something or made an inquiry. These insights will tell you which keywords, ads, or landing pages are working best for you and where there's room for improvement. Keep these five ingredients in mind when you create your ads and manage your AdWords account. For more information, check out the resources that discuss specifically each of the five sections.

Microtesting Tools

Useful Tools for Microtesting

In testing the early proposition, chances are that we do not have access to massive IT or design resources, and importantly, we are by no means in a position to need them. At this point, we are trying to find those propositions for the offering that show signs of life so that we may build and refine on them, and importantly, talk to those early adopters to understand what brought them to the offering in the first place.

While I would love to devote an entire course to microtesting (perhaps someday!), what I would like to do is introduce a few tools which can help those of us in resource-constrained, "start up mentality" positions who need to test propositions. Importantly, while mastery of these tools may come with attention and experience, they may be used effectively by those with limited expertise, and you will also find ample tutorials and resources for many of these platforms.

Google AdWords: The Core of any PPC Campaign

As mentioned previously, anything having to do with your PPC ad is created within AdWords. From housing and modifying the ads to setting daily budgets and keywords, it's in AdWords. There are many, many beginner tutorial videos on AdWords on YouTube, as well as very specific topic-oriented optimization videos. If you have the will to learn, there is almost certainly a tutorial or resource on AdWords to help.

To provide some idea, Google's own AdWords channel on YouTube [18] hosts some 460 videos as of the time of writing.

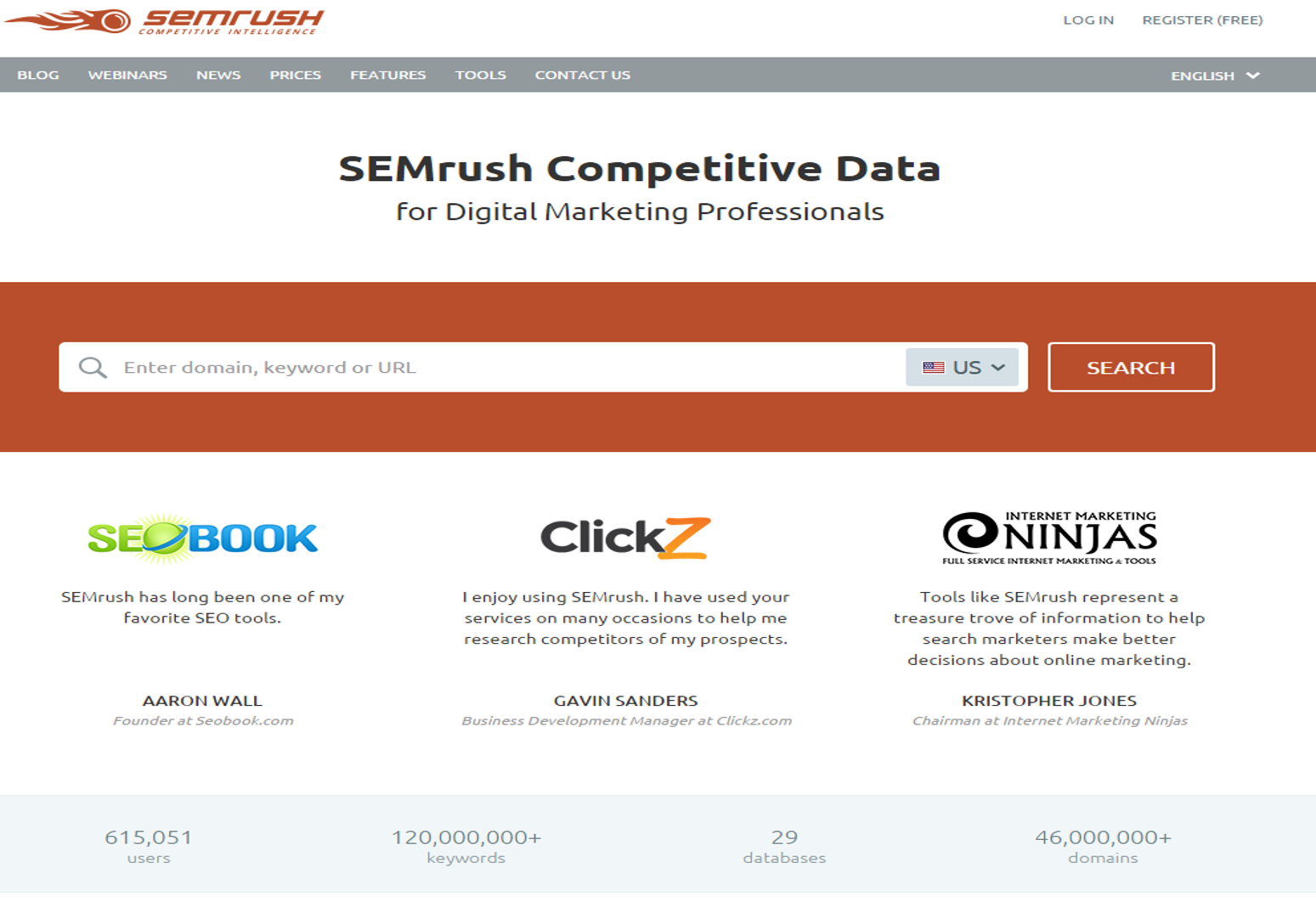

SEMRush: A Deep Research Tool

SEMRush is effective in compiling data on PPC ads, keywords, and competitors into one dashboard, and is, therefore, a great tool for informing us on potential keywords of interest.

What can be extremely useful in Black and Gray Space innovations is that you have the ability to look up a competitor's website to see what keywords and ads they are currently running, and approximately how much is being spent on those keywords... as well as many other valuable research metrics.

For example, for the intercept strategy I would like to consider for "Tired of Mowing?," I would research the companies and websites I want to intercept, like Scotts/Miracle-Gro, Lowe's, Home Depot, and others. This would give me some idea of the lawn care and lawn fertilizer keywords they currently use, as well as the PPC ads using those keywords. We can also approach it from another angle by entering the keyword itself, and SEMRush will show us the companies that are currently using that keyword in their PPC ads.

Here is a 3:47 demo video of what SEMRush can do. We will be using this tool in this week's assignment.

Video: Getting Started with SEMrush - Blog Setup Tips (3:47)

[MUSIC PLAYING]

SPEAKER: Thanks for using SEMrush. Let's get started by making a query in the main search bar. We can use the search bar to look up any domain, URL, or keyword in our database, and you'll be brought to all the information in our system about your query. Let's start with a common root domain query, like ebay.com. This will show you why SEMrush is the web's leading competitor analysis tool. When you query a domain, you're redirected to the domain overview report. Trust me, it's far less complicated than it looks. This is essentially a portal page showing you little snapshots of the larger reports that are available when researching a website.

So where should we go next? How about we check out the keywords bringing in organic traffic to eBay? To do that, we just have to click on the blue number where it says organic search, and we'll open up an organic positions report. We could also go to this report by selecting Positions under the Organic Research tab in the left-hand navigation menu. Here you will see our analysis of the domain's organic search positions set up like a spreadsheet with a combination of metrics like search volume, cost per click, and keyword difficulty, among a few. We can find out where the site ranks in Google results pages and the specific landing pages that each keyword directs traffic to.

Whether it's organic keywords or paid keywords, you can manipulate the columns by sorting and applying multiple filters. Eventually, you can export the report to CSV, XLS, or even PDF form. If you only want to export a specific set of rows instead of the entire list, you can do that as well. Just use the checkboxes in the far left column to pick out the keywords you want before hitting the Export button.

Now let's try a little bit of keyword research. We'll enter the keyword energy drink into our main search bar to bring up a keyword overview page. The keyword overview is a lot like the domain overview, except that it contains information all about your queried keyword and acts like a little keyword profile. Again, we can click on the snapshots to open up more specific reports. Above the fold, we can see reports for phrase match and related keywords that will help you find the best keywords to target in your campaigns. Below, we can see the top domains currently ranking for the keyword in both organic results and paid results in Google AdWords.

Our Domain vs. Domain tool is another great way to do some competitive research. You can compare keyword rankings of up to five domains side by side, making it easy to see who's out-performing who. You can also build your own custom reports and charts in the My Reports and Charts sections. These tools are super helpful if you want to impress a client or boss with a detailed report or proposal using our data.

Don't forget to check out our new SEO Keyword Magic tool and our backlinks data. We're constantly building up and improving our software, so don't be surprised if you see new features and reports pop up from time to time as a user. It's our goal to become an all-in-one suite for all digital marketers.

We also offer a powerful set of reporting and technical tools under our Projects section. With one project, you can run a daily position tracker, conduct a site audit, monitor social media accounts, monitor your brand mentions, and audit your backlink profile all from one place. Use these project features to monitor your day-to-day marketing and SEO or PPC progress. Historical data is available at the Guru product level, and if you need raw data, you can visit our API page or send us an inquiry for a custom report. Our video tutorials are found at the top right of every report and on our YouTube page. If you ever need help using our software, customer support is always available by phone or email at mail@semrush.com [20].

[MUSIC PLAYING]

Unbounce: Quickly Create Landing Pages

Unbounce is a specific program to allow for the fast design and testing of landing pages, offering 60 or so relatively turnkey templates [21] which you can easily modify and post without having to set up web domains, hosting, or a raft of other IT-related issues. It also has the ability to track the success of your various landing page designs to determine which has been the most successful to date, which is useful as you go through the process of creating multiple landing pages.

Additionally, it has the ability to change the text on your landing page to match the content of the PPC ad on which someone clicked. So, for example, it can change the headline of the landing page on the fly to match the PPC ad headline for Person A, and show a different headline based on a different ad for Person B. This can allow you to test quite a few more messages without having to create a landing page for each and every one.
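
The idea of swapping the headline to match the ad can be sketched in a few lines of Python. This is not Unbounce's implementation or API. It only illustrates the concept, assuming each ad links to the landing page with a hypothetical `ad` query parameter. The headlines and URLs are likewise made up for illustration.

```python
from urllib.parse import urlparse, parse_qs

# Hypothetical mapping from an ad identifier to the landing page headline.
HEADLINES = {
    "tired-of-mowing": "Tired of Mowing? Grow a Lawn That Grows Slowly",
    "savings-calculator": "See What Your Lawn Really Costs Each Year",
    "better-seed": "Native Seed X: A Better Native Lawn Seed",
}
DEFAULT_HEADLINE = "Meet Native Seed X"


def headline_for(landing_url: str) -> str:
    """Pick a headline based on the hypothetical `ad` query parameter."""
    params = parse_qs(urlparse(landing_url).query)
    ad_id = params.get("ad", [""])[0]
    # Unknown or missing ad identifiers fall back to the default headline.
    return HEADLINES.get(ad_id, DEFAULT_HEADLINE)


print(headline_for("https://example.com/lp?ad=better-seed"))
print(headline_for("https://example.com/lp"))
```

One landing page can then serve several ad messages, which is what keeps the number of pages you have to build small.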

The following 2:21 video gives you some feel for the interface:

Video: How to Build Landing Pages with Unbounce (2:21)

Hi there, it's Chris Hamilton with Sales Tip a Day, www.salestipaday [23]. And today, I'm going to show you a great application called Unbounce.com. It allows you to build landing pages that allow you to harvest email addresses, or sell things, and so on and so forth. Best thing about it is it's a free program. You can use up to 200 visits per month for free, and then it jumps to a paid-for account. Literally, when you come here, you just kind of sign up. When you come in here, best thing you do is when you start, come to dashboard. In the dashboard, you click create new page, you can start blank, or choose any of numerous templates that they have, that they've tested that work. When you actually get one of these, you can actually also change colors, a whole bunch of different things. I'll show you one that I'm working on called 5 quotes.

Here's where you start going into the page. This is the dashboard just based on the different pages here, but long story short, what you end up with is kind of almost like a blank canvas. There's a template here; you start putting your own verbiage in. You start putting in your own information. I've got a share widget off to the side here. This form here ties into major email accounts like AWeber, Mailchimp, Infusionsoft, and so forth. You can add movies in here, you can add HTML text, a whole bunch of different things, but this is kind of just how you start: you get building blocks that you can start working on. Basically, when you're done with your page, here's kind of the way it looks on, you know, you can see the the the way it actually looks, and I just clicked out of that by accident, but let's go back. And, there you go. Pretty professional looking and pretty straightforward. I also got to admit, their support is also excellent. I asked a question and got an answer within, I think it was like an hour or something like that, and I'm on their free version.

So, anyways, if you have a business where you're trying to give away e-books, harvest names, and so on and so forth, it's a great little program you can use to do that sort of stuff. Unbounce.com. Hopefully, you find that information useful. Have yourself a great day and go to www.salestipaday [23] for daily sales and marketing ideas.

Squarespace: Quickly Create Microsites Devoted to the Offering

Squarespace is not as purely focused on landing pages as Unbounce, but think of Squarespace's strength as giving you the ability to create a larger "landing site," or "microsite" as they are known. Microsites may only be a few pages deep, but they provide deeper information than a landing page does while avoiding having to send someone to your full website and overwhelming/distracting them. Furthermore, your offering is likely still undergoing testing and therefore isn't yet ready to be included on the full site (which also likely requires IT and other constrained resources).

In our process, microsites can become useful for us for those offerings which have already received a couple rounds of testing and refinement via landing pages, so we have some feel for our strongest propositions and what is "working."

Squarespace also provides templates, but more importantly, a visual drag/drop type interface that just works. To give you some idea, I created this microsite for PIG Difference [24] in a few days, and it uses a modified Squarespace backend.

Please watch the following 13:50 video. You don't need to watch the whole thing (unless you want to), but if you scrub through the timeline, you can see a little bit about how it works:

Video: Squarespace - Why I'm Switching... (13:50)

Hey guys, Jeffrey from Faded and Blurred and On Taking Pictures. I've started a brand new project for 2013. I'm building myself a brand-new website, new blog, new portfolio, for not only my artwork but also my photography, and I'm building the whole thing with Squarespace. Now if you've listened to On Taking Pictures, you've heard Bill and I talk about how great Squarespace is, how it's flexible and customizable, and and it's easy to use, and the templates are fantastic. So I decided to take our own advice and rather than coding my new site by hand, which is something I've done for years or using one of the existing blogging platforms out there that I've also done for years, I'm going to build the whole thing in Squarespace and see if it, as they say, really is everything I need to create an exceptional website. Now I've spent some time in the back end and the admin just seeing how things work, and how things are customizable, to what degree they are customizable, and I've come away so far very impressed. So I wanted to share a couple of the features that have made this an easy decision, and I think they're features that photographers, illustrators, visual artists are really going to appreciate if you decide to build your own website.

Alright, and the first thing: the templates, which are really, really nice. They're very clean, they're all responsive, so your content, your site's going to look great on a computer, a tablet, a phone, without the use of any plugins. It's all scalable all the way down. They're, they're flexible, they're customizable. For example, if we look at Aviator. Now I can preview this, with Squarespace’s default content, but I can also scroll down and see how actual Squarespace customers have customized or personalized the template to fit their own needs. So it gives you an idea of how flexible each of the templates are, but it also gives you ideas on what to do for your own website. You can look at these things and go, hmm, yeah, I didn't think of doing that, or or I don't quite like this, but if I change a little bit in the other direction, that that might work for me. So it's kind of a cool way to see what you can do extending the look and feel of the default templates. Let's see what's another one that I liked, well this is another one that I like. And again, you can, you can start with the demo content, ok and click through and see what the template looks like and how it works and look at the typography, and and that kind of thing. Or, you can scroll down and see how customers have made this their own. Ok, so again, really nice feature gives you a great idea of what you can do when you start building your own site. And all of this, by and large, is editable visually. So you're not going to spend any time writing code, you're not going to learn, you know, hex numbers for colors, it's it's all visual. If you, if you decide that you want to sign up, they'll give you a free two week trial, no credit card, all you do is click start with this template on whatever template you happen to be on. Fill out the form first name, last name, email, and a password, and that's it.
There's no credit card, there's no PayPal, you just click finish and create site and that will begin your two week trial. If you, if you decide that you like Squarespace, they will be happy to convert that into a full account. There are two plans, eight dollars a month, sixteen dollars a month, they both come with a free domain name. There's free customer support 24 hours even for the trial, so as you're working through the trial, if you've got questions, even though you haven't paid them a dime yet, you can still call up and get a real answer which is really cool.

So let's jump over into the back end of my site, and and we'll talk about some of the features that I really like. As you can see, this is actually my site. I haven't done anything other than research so far. I've just looked around to make sure that it's going to be able to do what I wanted to do. Haven't even given it a name, which I can do right now and go ahead and save that. So if we preview this, I think this is the Wexley template. So, here's a blog entry comes with a demo content loaded in, comes with one blog post and a couple of image galleries that you can play around with so when you make changes to the site you can see how those changes are going to be propagated throughout the different pages. Now to edit this in the past, I would have jumped into Coda or Espresso or something and and been editing lines of code and lines of of text in a style sheet editor. With Squarespace, it's all done visually. Just click on the little paintbrush there and that brings you into the style editing mode and here are the things that you can edit. Here are all the colors that the site uses, typography, layout options for spacing, sizing, padding, that kind of thing. If you see something or if you don't see something that you want to edit, you can also use your own custom CSS and they will warn you, hey, if you don't know what you're doing, you know you could break something, but if you're comfortable editing CSS, you can you can extend the the customization even further than what they already offer. So for example, if we wanted to change the color of the site title here, all I do is click on the color chip and I get this color picker, and I can drag around. Again, visually, I'm not having to memorize these hex values or look them up everything is just done in real time and I can see my changes immediately. Right, and I just click away, and there I've set the new color. The same thing goes for fonts.
If, if I wanted to change this font of the site title, click the drop-down, you can see I'm using Varela Round. Click the drop down again, and now I've got access to all of the Google fonts and I'm not having to use any sort of font family CSS. I'm not having to go import any of the fonts. It's all visual right here in the style editor, so if I want to change the font - I don't know, something with a serif, maybe Coustard, I just click and the change is made in real time, and that's it done. If I want to change the actual layout of the page I can do that with these sliders here or you can see as I'm moving my mouse around I'm getting these solid boxes and these little dotted lines. I can actually click and drag these dotted lines to change the spacing to change the layout on the site. If I want to add some padding in between my thumbnail images, I can do that. If I want to change the size of the thumbnail images, I can just click and drag, and and this is a fantastic feature. I've spent way too much time editing CSS, and this is actually a pleasure to use. You can do these things visually without having to go into an editor and if you if you like the changes, just click Save. If you've made a mistake or you don't like the way it looks, you can click reset and that gets you back to the default settings for the template. You can also click on different items to get their properties or parameters that you can change so just click on about and that shows me: here's the color, here's the active color, here's the hover color, so if I want to change the, the colors of the navigation, I can do that. If I want to change the typography of the navigation right now, it's using Arial, and again, I can go into my Google fonts and - I don't, let's say, let's say, we want to do Coda, ok, and the change is made in real time and that's it. So, very, very simple.
This was one of the features that that I was really excited about, coming from a background of, you know, coding and spending a lot of time in editors. So, save that, and now I'm back into preview mode, and you can see the changes have been made. We've got our little light box here for our gallery, which you can change that as well. You can change the size of the of the images, the layout of the images. If you want to change the way the blog is laid out, you can do that, and same way. Click the pencil, now we're in edit mode, and the way Squarespace handles content is in this idea of blocks. So, for example, this is our sidebar for the blog, and if I wanted to add, I don't know, a search bar, I can click the Add button, and now I've got all of these choices for different types of blocks. If I wanted to add an about text block, I can just click text and type in some text under structure, that's where you'll find the search bar, for example. Click Save, and there I'm done. If I click out of edit mode in preview mode now I've got my search bar. So it really is very intuitive and very easy to use.

One of the other things that I like is the the integration with social media. If you've got sharing services that you use you're not having to go out and look for a plug-in that works with your particular version or whatever platform you happen to be on, everything is built-in. Right now, all of these services are enabled. If you, for example, only wanted to use Facebook, Twitter, and Google, you just turn the other services off, and now just those three buttons will appear in the sharing options in the blog posts. You can also connect your social media accounts to Squarespace, so if you want to write a blog post and then publish data to additional services, you can connect your accounts: Facebook, Twitter, Tumblr, Flickr, and publish to those accounts. You can even do a Facebook page, so if you've got a business page or fanpage you can publish to that as well. So lots of options for customizing your content and lots of options for getting your content out in front of as many eyes as possible. If you're an Amazon associate, under the general tab here, you can enter in your Amazon associate tag and actually look up and insert Amazon product data right in the blog editor. So, for example, let's create a new blog post. We just did a post on this guy here Jon Contino who is a fantastic typographer, illustrator, designer, and I want to include this video. So I'm just going to copy the URL and add a new blog post, give it a title, and we'll put some temporary text. All right, and again click the + add a video block, very simple, and I'll just paste in that URL. Ok and it's going to grab the title, is going to grab the description, if you'd like to add a custom thumbnail, you can simply drag one from your desktop onto this little box here and it will add a custom thumbnail. Click Save, and there's our video.
So again, we're not having to look for a plug-in to display the video correctly, and if we wanted to add an Amazon item, let's say one of my favorite designers David Carson. Ok, add one of his books, The End of Print. You've got options for showing title author price and a Buy button. If you if you don't show a Buy button, just clicking on the cover will take your site visitors to Amazon. so I'm going to get rid of all of this stuff but I will add a Buy button and go ahead and click save. Now, it comes in very large, but all you have to do is click and drag to change the size, right. If that's still too large, just keep dragging it down. If that's still too large, drag it down even further, and then we'll click Save, and let's preview this. So here is our video, here is our product from Amazon, and again your Amazon associate ID number will be plugged in there so you get credit for the sale and here's our modified sharing with only the three that we have selected.

So really, really great features so far. I'm excited to get this thing built and up, and that's kind of what I'm really liking about Squarespace so far. If you'd like to set up an account, set up a trial, head over to Squarespace.com and they will be more than happy to set you up with a two-week free trial. Until then, thanks for watching and I will see you next time.

Microtesting Analytics

Understanding Results of Your Microtesting

After you have deployed the microtest, you will want to understand not only how each proposition's PPC ad performs, but also how long the landing page is able to hold visitors, how many signups or purchases (referred to as "conversions") you gain from each, and any other interesting data which may pop up.

The most efficient way to do this (and again, the method which will provide you with seemingly endless tutorials/resources/help) is to link your Google AdWords with Google Analytics. This is a one-minute task, is handled semi-automatically within something like Unbounce or Squarespace, and allows you to understand the entire picture of how your propositions are performing relative to each other and overall.
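As a rough illustration of comparing propositions, the per-proposition figures described above reduce to a few simple ratios. The sketch below is not tied to AdWords or Google Analytics; the class name, field names, and all of the numbers are hypothetical and would be replaced with figures exported from your own reports.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PropositionResult:
    """Hypothetical microtest totals for one proposition (ad plus landing page)."""
    name: str
    impressions: int   # times the PPC ad was shown
    clicks: int        # times the ad was clicked
    conversions: int   # signups or purchases on the landing page
    spend: float       # total ad spend, in dollars

    @property
    def click_through_rate(self) -> float:
        # Share of impressions that produced a click.
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def conversion_rate(self) -> float:
        # Share of clicks that ended in a signup or purchase.
        return self.conversions / self.clicks if self.clicks else 0.0

    @property
    def cost_per_conversion(self) -> Optional[float]:
        # Undefined (None) when nothing has converted yet.
        return self.spend / self.conversions if self.conversions else None


# Made-up numbers, for illustration only.
results = [
    PropositionResult("Proposition A", impressions=4000, clicks=120, conversions=6, spend=90.0),
    PropositionResult("Proposition B", impressions=4000, clicks=80, conversions=12, spend=60.0),
]

for r in results:
    cost = "n/a" if r.cost_per_conversion is None else f"${r.cost_per_conversion:.2f}"
    print(f"{r.name}: CTR {r.click_through_rate:.1%}, "
          f"conversion rate {r.conversion_rate:.1%}, cost per conversion {cost}")
```

In this made-up case, Proposition A's ad draws more clicks, but Proposition B turns a larger share of its clicks into conversions at a lower cost, which is the kind of relative picture the linked reports are meant to surface.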

Here is a brief video on the most common metrics for AdWords. Please watch the following 3:09 video.

Video: Understanding AdWords reports and statistics (3:09)

Ted owns Ted's Travel, a small travel agency. He advertises with AdWords for two main reasons: to get his brand message about his tour packages out there and to increase web visits to key pages on his site. When Ted first started using AdWords, he saw lots of statistics and reports, but didn't know where to begin. That all changed when Ted decided to spend 15 to 30 minutes a week reviewing his account. Now that he knows his way around AdWords, those statistics and reports help him make informed decisions about his ads, know specifically where he needs to make changes to his account, and see what's worked and what hasn't over time. Let's watch just what Ted does during one of his weekly check-ins.

Ted's AdWords account is structured with one campaign and two ad groups. He starts on the campaign screen, scanning the big picture statistics he finds there. Then he checks his ad groups tab. To see how he's doing with promoting his brand, he checks his impressions. To see how visible his ads are and whether people are clicking through to his website, he looks at average position and click-through rate. Next, he clicks into each ad group to see how individual ads are performing. Since Ted checks these stats routinely, he's customized this screen to make it easier to see exactly what he's interested in. Ted is pleased with how his ads are performing, so he doesn't make any big adjustments, but he's always on the lookout for new ideas.

So, next he looks at three reports that give him new perspectives on his account. First, Ted scans the search terms report to see specific searches that led to someone clicking on one of his ads. He sees that searches for Grand Canyon rafting trips generated clicks. Since he sells this package, he knows that he should create a new ad for rafting trips. Next, Ted monitors the auction insights report to see how he compares with other advertisers in the same auctions. When he sees that other advertisers' ads show up higher on the search results page than his ads, he decides to make a few of his keywords more competitive by increasing their bids.

Finally, the top movers report is Ted's personal favorite because it shows him which of his ad groups or campaigns are on the move, both up and down. When he sees that his cruise packages ad group is attracting more attention, he decides to build out his keyword list there. Ted finishes his weekly check-in feeling good about his campaign's performance and even better understanding exactly why his campaign is performing so well. For more information about understanding reports and statistics, visit the AdWords Help Center.

The video below is specifically about the linking of AdWords and Google Analytics, and its value in allowing us to understand more about the path and actions visitors take after clicking an ad. Please watch the following 4:31 video.

Video: Benefits of Linking your Google Analytics and Adwords Accounts (4:31)

If you have both Adwords and Google Analytics accounts, but haven't yet linked them, you're missing out on valuable insight into your advertising, website, and business as a whole. Adwords and Google Analytics each provide important information, but independently, they don't provide the full picture. Adwords helps your customers find you and provides detailed reporting on ad spend and performance. In your Adwords account, you can see which keywords and ads users click or view, and which directly generate conversions. But Adwords alone only gives you part of the picture. It doesn't show you what customers do on your site after they click or view your ads, but before they convert. Google Analytics fills in this missing information. It helps you see the different paths that visitors take through your site, how visitors are engaging, or not engaging, with your content along the way, and what site factors influence conversion rates, and ultimately, your bottom line. However, without linking accounts, you can't tie this rich information about user behavior back to the specific Adwords keywords or ads that generated the visits. By linking your Analytics and Adwords accounts, you can see the full picture of customer behavior, from the ad click or impression, all the way through your site to conversion.

When you link accounts, you can see additional data that help you optimize your Adwords campaigns and make more informed business decisions. For example, in the Adwords reports inside of Google Analytics, you can view on-site engagement metrics such as Bounce Rate, Pages per Visit, and Average Visit Duration, for each of your Adwords campaigns, ad groups, keywords, and ad texts. These types of metrics help you understand if your Adwords account is driving the right kind of traffic to your site. And they also help you identify areas of your site that you might need to improve. In these reports, you can also see your Adwords cost data and performance metrics, like Average Cost Per Click, Clicks, and Clickthrough Rate. Together, the Adwords and Analytics data in these reports help you better understand what you're spending in your Adwords account and what your return on investment is.

In addition to seeing Adwords information inside your Analytics account, you can easily import your Analytics goals and Ecommerce transactions into Adwords Conversion Tracking, allowing you to make more informed refinements to your campaigns without ever leaving your Adwords account. If you are using Adwords Conversion Optimizer to manage your bids, it will automatically start using Analytics goals and Ecommerce transactions once you've imported them into Adwords. This additional performance data better enables Conversion Optimizer to show your ads when you are more likely to get conversions. You can also import Analytics metrics into your Adwords account. You can see Bounce Rate, Average Visit Duration, Pages per Visit, and Percent New Visits on your Adwords Campaigns and Ad groups tabs.

Linking accounts also gives you richer data in the Analytics Multi-Channel Funnels reports. You can see which specific Adwords keywords, ad groups, and campaigns are initiating or assisting conversions, in addition to driving them directly. If you're using Google Display Network remarketing, linking your Adwords and Analytics accounts allows you to extend your remarketing capabilities and build unique lists based on Analytics dimensions and metrics. You can reach people who have already visited your website and deliver ad content specifically tailored to the interests they expressed during those previous visits.

So, link your Adwords and Google Analytics accounts today to see the full picture. And discover how to optimize your Adwords campaigns and improve the performance of your business. Log in to your Google Analytics or Adwords account to link your accounts today. For more information, visit Google.com/adwords or google.com/analytics.

A Note on Your Early Adopters

Aside from what you will learn from the quantitative side of analytics, do not underestimate the value of pairing those learnings with the qualitative insights you can gain from talking to early adopters. Whether it is something formalized, such as an online survey sent to those visitors who took an action, or simply a phone call a week later to understand their thoughts and expectations, this small step can be invaluable in understanding the story behind the analytics.

It is important for us to remember there are people represented by all of those analytics and metrics, and if they have purchased or signed up for more information, their identity is known. What you may find is that you can fall into a certain "stock ticker" mentality as you sift through all of the analytics, where you believe that all answers can be found in the numbers. Sometimes you may find that an ad did extremely well in bringing people into the landing page, but the landing page did not "convert" well... or that the landing page did an excellent job of keeping visitors, but few purchased or took action. These are the cases when you would want to contact the minority who did take action to see if there were obstacles they saw, but were able to overcome.

For example, in the case where visitors are spending an average of 10 minutes on the landing page but not taking action, you can take the small handful of those who did order and talk to them. They may say things like, "I had a really hard time finding the order box, but when I did, I was OK," or "the site was really, really slow," or "the video crashed twice, but worked the third time, and that's why I bought." Any one of those insights will help you clear the analytic fog to understand what may have been the obstacles causing the majority of others to leave.
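One way to avoid the "stock ticker" trap is to let the numbers point you toward the right conversation, not replace it. The sketch below is a minimal illustration of that triage, covering the mismatch cases described above. The function name, the labels, and every threshold are assumptions chosen for the example, not benchmarks; tune them to your own test.

```python
# Hypothetical triage labels for a proposition's microtest results.
CONVERTING = "converting: no obvious obstacle"
WEAK_AD = "ad underperforming: revisit the ad before judging the page"
ENGAGED_NOT_ACTING = "engaged but not acting: interview the few who did convert"
PAGE_LOSES_VISITORS = "ad draws clicks but page loses them: review the landing page"


def diagnose(click_through_rate: float,
             avg_minutes_on_page: float,
             conversion_rate: float,
             ctr_floor: float = 0.02,         # assumed threshold
             minutes_floor: float = 2.0,      # assumed threshold
             conversion_floor: float = 0.05   # assumed threshold
             ) -> str:
    """Suggest where to look next, given three headline metrics."""
    if conversion_rate >= conversion_floor:
        return CONVERTING
    if click_through_rate < ctr_floor:
        return WEAK_AD
    if avg_minutes_on_page >= minutes_floor:
        # Visitors stay a long time yet rarely act: the converters may
        # know which obstacle they had to push past.
        return ENGAGED_NOT_ACTING
    return PAGE_LOSES_VISITORS


# The case from the text: visitors average 10 minutes on the page
# but few take action (CTR and conversion figures are made up).
print(diagnose(click_through_rate=0.03, avg_minutes_on_page=10.0, conversion_rate=0.01))
```

The output is only a prompt for who to call; the explanation itself still has to come from the early adopters.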

Closing Remarks

Believe me that in artistic matters the words hold true: Honesty is the best policy. Better to put a bit more effort into serious study than being stylish to win over the public.

Occasionally, in times of worry, I've longed to be stylish, but on second thoughts I say no–just let me be myself–and express severe, rough, yet true things with rough workmanship. I won't run after the art lovers or dealers, let those who are interested come to me.

In due time we shall reap if we faint not.

- Vincent van Gogh to Theo van Gogh, March 11, 1882 [29]

Understanding the Rough, True Things in Our Offering

Up until this point in the process and our time together, we have gone through painstaking amounts of rigor and research to frame opportunities in the sustainability space, performed initial fieldwork to understand the mind space, mapped the thoughts and feelings of customers, created strategic paths, and perhaps done some hypothetical testing.

But it is only now, in microtesting, that the rough, true things about the offering and its potential in the market begin to become known. There is only one truth, and that is how the offering performs in the live environment.

It has been a long road until this point, but this is the path of creating an original offering based on insight and understanding, not duplication or fabrication. Make no mistake, if successful, others are likely to copy the offering, but in virtually all cases, they will not have the insights underlying their work. It is the insight which allows you to extend the offering and understand it at a deeper level than simply Xeroxing someone else's work. The insight is what allows meaningful, resonant creation.

The offering will continue to be honed and iterated, along with the messaging and other cues. There is no "resolution"... there is no "We're there!" moment when you get to open that bottle of champagne in the back of your filing cabinet.

On Roughness

It is also in this phase where we purposefully avoid marketing gloss, PR, and other forms of publicity. We want to understand how the complete proposition performs by itself, unaided and unclouded by extra marketing. The basic proposition should prove value in and of itself, before we begin to go "pedal down" on marketing and engage agencies.

There is a very specific reason for this: At this point, we are more concerned with understanding "what's in the box" as opposed to "what's on it." Our goal is to understand the core proposition, not what added benefit or buzz our ad agency can create.

This isn't to say that we somehow suppress or undersell the proposition in microtesting, just that we shouldn't cloud it with celebrity endorsements or introductory discounts or flashy gifts.

On Persistence

We must always remember that no matter how promising or disappointing the initial results are, we are incredibly limited in our understanding of the offering in the market.