12 - Honing and Evaluating the Concept

Lesson 12 Overview

Summary

In our penultimate step in progressing our concept to launch, the goal is to take what we have learned in microtesting the offering and audit it. Another significant goal is to ensure that we don't have any weak spots in the process.

Whether taking a product to market or another launch, it is extremely important to audit each desired step the target/customer is taking in relation to the concept. And I mean literally each step.

This is crucially important because your concept is now close to being taken to market (if not already in market), and is subject to any one of hundreds of potential points of friction, each having nothing to do with the concept itself. The cruel reality for your fledgling concept is that if you are inattentive at this phase, you may write off your concept for poor performance when the real cause could be anything from the launch website being down to ads not being run to a broken lead acquisition form.

Therefore, we not only audit and test each step of the desired customer experience to ensure they appear to work, we watch the "conversion funnel" to understand how and where people interested in the concept are 'falling off.' If we find that 90% of those coming to the launch microsite are filling out an information form, but only 2% of those leads are being populated into Salesforce for followup, that is a major problem.

Our goal in this Lesson is to understand -- in a very finely-grained way -- the nuances of behavior of those experiencing the concept. Without that understanding, your perfectly promising concept may find itself on the organizational scrap heap due to a crucial failure of a seemingly minor component. You need to understand the full performance of the concept 'machine,' and any component indicating performance outside of normal parameters.

Learning Outcomes

By the end of this lesson, you should be able to:

- articulate the importance of Conversion Funnels in microtesting;

- understand the role of statistical significance in testing;

- create a meaningful iteration plan for the offering.

Lesson Roadmap

| To Read | Documents and assets as noted/linked in the Lesson (optional) |
|---|---|
| To Do | Final Case |

Questions?

If you have any questions, please send them to my Faculty email, axj153@psu.edu [1]. I will check daily to respond. If your question is one that is relevant to the entire class, I may respond to the entire class rather than individually.

The Conversion Funnel

A Snapshot of a Microtest's Viability

At this point in our microtesting process, we may have a relatively well-formed group of initial keywords leading to a few PPC ads testing our various propositions, which then lead to one or more landing pages. These landing pages, in turn, hold the core of the proposition, clearly and impactfully stated, paired with an appropriate Call to Action (CTA).

Depending on what we are testing, the stage of our offering (prerelease, prototype, waiting list, etc.), and our intent, the CTA could be anything from a simple email form to send more information, to an address to send a physical sample, or to sell the early offering itself. Our goal here is to capture information and potentially sales, as simply knowing the number of visitors passing freely into and out of our site provides us with nothing meaningful by which to make decisions or improve the offering.

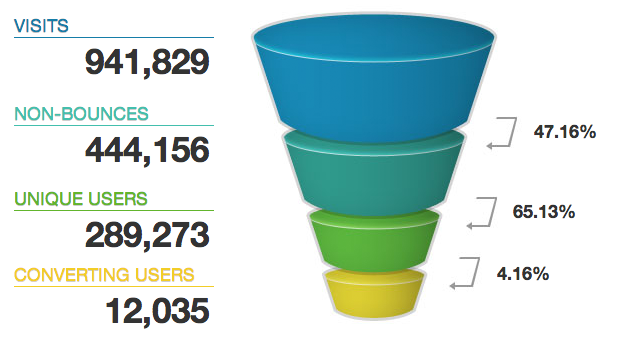

The conversion funnel is a visualization or way of stating the steps in which our audience is engaging with the offering. More importantly, the conversion funnel makes weaknesses in the offering or presentation more readily evident so we can go about fixing them. In a conversion funnel as shown below, our goal in testing the offer is to understand where there may be constrictions in the conversion funnel causing us to lose an undue number of visitors/prospects.
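To make the idea of a "constriction" concrete, here is a minimal sketch of how step-to-step conversion rates might be computed. The step names and counts are hypothetical, chosen only to mirror the form-to-Salesforce example from the Summary (90% of visitors submit the form, but only 2% of those leads reach the CRM); your own funnel will have different steps and data sources.

```python
# Hypothetical funnel counts, for illustration only.
# Each entry is (step name, number of people who reached that step).
funnel = [
    ("landing_page_visits", 1000),
    ("form_submissions", 900),
    ("leads_in_crm", 18),
]


def step_rates(steps):
    """Return (from_step, to_step, rate) for each adjacent pair of steps."""
    rates = []
    for (prev_name, prev_count), (name, count) in zip(steps, steps[1:]):
        # Guard against dividing by zero when a step has no traffic yet.
        rate = count / prev_count if prev_count else 0.0
        rates.append((prev_name, name, rate))
    return rates


def weakest_step(steps):
    """Return the transition with the lowest step-to-step conversion rate."""
    return min(step_rates(steps), key=lambda r: r[2])


for src, dst, rate in step_rates(funnel):
    print(f"{src} -> {dst}: {rate:.0%}")

src, dst, rate = weakest_step(funnel)
print(f"Biggest constriction: {src} -> {dst}")
```

The lowest rate is only a pointer to where to look first. A low rate can be perfectly normal for some steps, so compare each step against what you would reasonably expect for it rather than against the other steps alone.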

Ultimately, the conversion funnel will become an early model of the offering's viability, and will begin to determine exactly how attractive the space is. It will give us a view of where the needs for improvement are... or if we are ready to "scale up" and increase the number of impressions/ad spend.

Whether talking to CEOs or VCs, a detailed conversion funnel can provide a meaningful picture of initial viability. We can structure our conversion funnel model with whatever criteria we choose to capture the goals of our microtest. The below example from Yahoo Commerce is on the simple side, but gives you an idea of what a basic funnel could look like.

The architecture I find helpful for creating conversion funnels is to consider all of the steps someone takes to reach your offering, the information and actions they take, and what goals you have for information capture and conversion. It is likely each step can be tracked through Google Analytics, AdWords, and form/sales data to provide a valid picture of the funnel.

Furthermore, if you draw your ideal conversion funnel and the steps it would capture on the whiteboard and find that you can only put numbers to 40% of the funnel, that is a sign that you need to capture that data in Analytics or find another way to understand that data in real time.

A Prospect-Centric View of the Funnel

Like all good research, understanding the conversion funnel is many times about perspective.

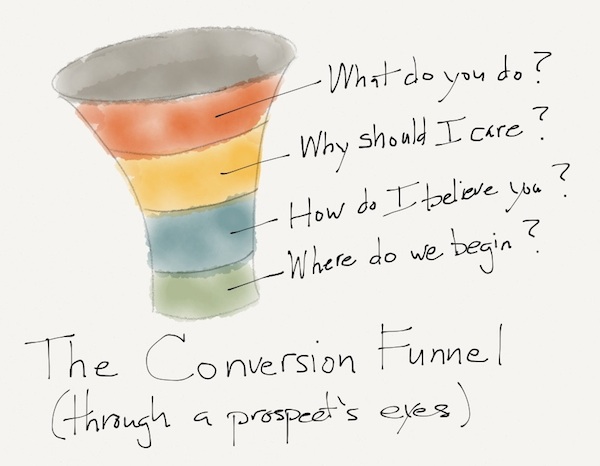

Where we may see statistics on how many prospects move from one area of the conversion funnel to another, we may easily lose sight of the human side of the equation, instead thinking of the funnel as some sort of faceless oracle. For this reason, it is essential to our understanding of the funnel to be able to look at it through a prospect's eyes, as well as their technology.

One example:

You notice that although your PPC ads are doing well, you are losing a significant proportion of customers at the "top of the funnel." In this case, some of your highest-quality keywords are resulting in a huge proportion of sub-minute duration ("bounce") visits to your landing page. Everything looks good on your Mac Pro with dual 30" monitors and fiber connection. What could possibly be wrong?

As much as we need to have an understanding of our prospects, we must understand the technology they are using and how adept they are at using it. Even with the otherwise most engaged prospect, slow page load times and mobile unfriendly design can cause us to lose a significant proportion of worthwhile prospects right off the top.

What you might want to consider as you get further into microtesting (or just as good practice, period) is to set up a second computer in the corner of your office specifically for viewing your sites through the "least common denominator" technology. Google Analytics will show you what browser and platform people are viewing your landing page on, but consider buying a 6+ year old, small monitor PC, loading an obsolete version of Internet Explorer, and slowing your connection speed using one of a few inexpensive programs. This will quite literally allow you to see your microtest assets through a user's eyes, and you may find that it is painfully slow to load, images may be missing, or that nice sample form crashes every time you use it.

Regardless of how you gain a more complete perspective, consider at each step in the funnel what value, proposition, and stimulus you are providing to a prospect. Scott Brinker [3] provides a simple perspective of the conversion funnel's meaning for customers:

Statistical Significance

Using Analytics Appropriately

It has probably been some time since some of you have had a Statistics class, which is fine. I would like to cover a few topics here that are not so much concerned with calculation as much as creating–and following–statistically sound goals.

As you begin microtesting, you may begin anxiously watching results as they come in. You might find yourself leaving AdWords open in a small window on your monitor, or otherwise "checking in" frequently. While it certainly is exciting, that excitement and tension can lead you to choose the wrong test winner, and ultimately, the wrong proposition for your offering.

What I would like us to avoid is the all-too-common situation of choosing the winning variation based on some arbitrary goal you had in your head, be it "First to 200 clicks," "First concept with 10 conversions," or "Whichever looks the best at the end of two weeks." In a more passive form, this is actually quite common, even in the professional PPC world, where a PPC consultant will ask you A) "How long do you want to run the ads?" or B) "How much do you want to spend?"

If you happen to keep a spray bottle of water on your desk for your ficus, feel free to use it on whoever asks you this question.

Your correct answer would be C: "Until we have statistically significant results," followed by, "Call me when we spend $X."

I can share from experience that PPC results can take odd and inexplicable "runs" in volume and preference... a week of one-ad-click days followed by a ten-click day, for example. A keyword running as hot as lava for three days and then returning to norms. While it could be tied to social shares, PR, the day of the week, or other factors, many of which you can see in Analytics, sometimes, it is a truly random occurrence.

To prevent our human emotion/anxiety/excitement from getting in the way, we can simply drop a handful of our click statistics into one of a few sites devoted to finding PPC statistical significance [8], and they will tell us whether we have reached significance and, if so, at what level... or, if we have not, how many more clicks we will require. Some analytics packages have the statistical significance tool built right in.
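If you are curious what such a tool might be doing under the hood, here is a minimal sketch of one common approach: a two-sided, two-proportion z-test comparing the conversion rates of two ad variations. This is an assumption on my part about the general technique, not a description of any particular site or analytics package, and the click and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    conv_a, conv_b: conversions for variations A and B.
    n_a, n_b: clicks (trials) for variations A and B.
    Returns (z, p_value), using the normal approximation.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No conversions (or all conversions) in both arms: nothing to compare.
        return 0.0, 1.0
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical data: variation A is "first to 10 conversions".
z, p_value = two_proportion_z_test(conv_a=10, n_a=200, conv_b=6, n_b=200)
print(f"z = {z:.2f}, p = {p_value:.3f}")
if p_value < 0.05:
    print("Difference is significant at the 5% level.")
else:
    print("Not significant yet; keep the test running.")
```

With these made-up numbers, A looks like the winner at 5% versus 3%, yet the test does not reach significance at the 5% level, which is exactly the trap of declaring a winner on an arbitrary milestone. Keep in mind that the normal approximation is rough at very small counts, and that re-running a test like this every time you "check in" raises the odds of a false positive.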

So, if you've always been dying to apply some of those learnings from Stat into your professional life, using it for the validation of microtesting results is a perfect place to do so.

This may seem like a rather straightforward consideration, which makes sense because it is. But it is also a consideration that is very commonly overlooked.

Iteration

A Parable of Turkey

In the personal realm of "offering development," I am somewhat renowned for my Thanksgiving cooking, and my planning and discipline in doing so. I'd like to share a story that might frame iteration a bit.

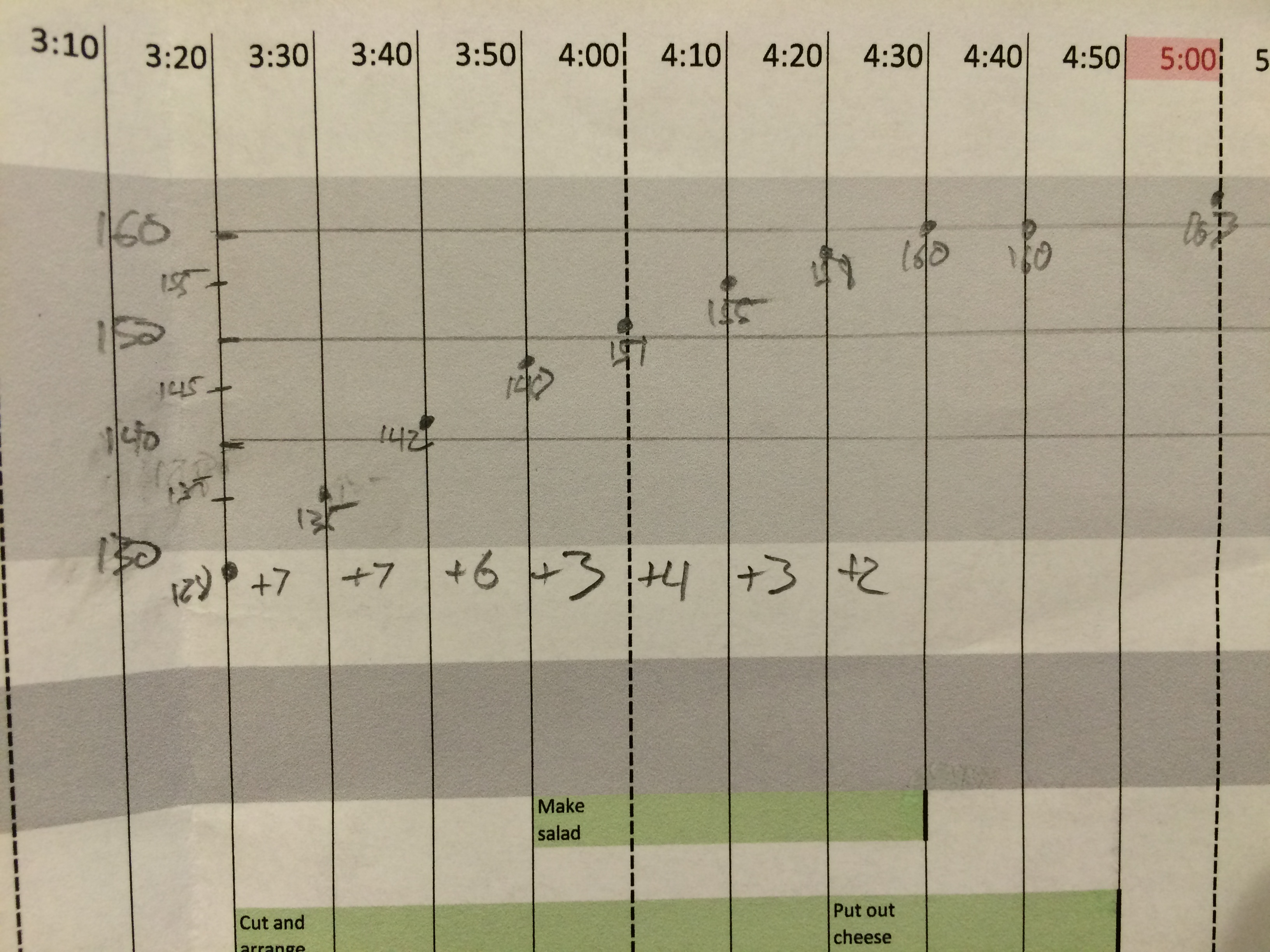

I believe that some of this began in my formative years, watching my grandfather, who was a retired High Colonel of the Air Force, a rose gardener, and a cook. I feel I extend the artform through my "maps." Where he would use a post-it note, I would use Excel, plotting each food item of the day on the Y-axis and time in 10 minute increments on the X-axis. Notes on tasks would go in each cell. This would be my ongoing salute of anal-retentivity to The Colonel.

Fast forward to 2014, my most ambitious Thanksgiving to date, hosting and feeding eleven with everything from cannoli shipped from Boston to exotic cheese pairings. The initial food preparation would begin early the morning before for the breads, casseroles, prosciuttos and sides, as well as the honey brining of the star of the show: a 24lb organic, free range, heritage breed turkey.

In the USDA turkey sizing scale, this would fall somewhere between "Extra large" and "Hedonistic." This specimen would be prepared in the smoky convection of my Weber Summit grill, starting at 500 degrees and tapering to 325, as were all its gobbling forebears. Delicious, moist, honey brined turkey, followed by a mild tryptophan coma for all.

Thanksgiving this year would be terribly cold, to the point that I would have to place a warming jacket on the propane bottle to keep it flowing. As the grill hit 500, the turkey was placed with care, the digital thermometer probe placed in the turkey, and the display facing the nearest window. The lid was closed.

As I would watch the trusty grill temperature, it was climbing as expected after adding the turkey, but never passed 350. I thought that the sheer mass of the cold turkey paired with the frigid conditions and the cold propane had tapped the BTU output, as all burners were maxed. Nonetheless, I had planned for such occurrences, and had 30 minutes of "coast" I could add to account for the turkey progressing too slowly or quickly.

After the first hour, I went outside to check progress, and noticed that I could feel the grill's heat as I approached. Something was not right.

Due to the massive turkey, the temperature probe inside the grill cover had ever so gently rested on the cotton twine binding the "feet," conducting heat away from the probe and leading to the temperature showing as about 250 degrees too low.

My turkey had spent its first hour at a blistering 600 degrees, not 350.

Despite the foundry-like conditions, the turkey still looked quite good, but was progressing far too quickly. I immediately cut the temperature to 250, but we must remember that any turkey, let alone this heathen, responds slowly to temperature.

Knowing that it could be too late before I could adapt, I got out a ruler and a pencil and drew what you see in the image above: an impromptu time-temperature graph.

My goal would be to adapt to ease the turkey to a 163 degree internal temperature at exactly 5:00, at which time I would remove it from the grill, bring it in, and allow it to naturally stabilize to 165. It would do exactly this, as you can see from the original graph.

Applying the Parable to Offering Iteration

I offer this story as an example for what you will likely encounter in your microtesting, and the actions we can take to test and iterate. You will see that the offering is not progressing as planned, that the relationship of your inputs does not seem to be bearing fruit in the measurable outputs.

In these cases, consider doing something I think of as "isolate and iterate," which is simply an application of the Scientific Method as applied to iteration.

Don't attempt to solve everything at once, but simply attempt to isolate one factor in the offering, iterate it, and test that variable. Proceed slowly and with purpose. Give the results time to develop. Test methodically, as the worst thing you can do is begin to drastically change multiple variables in the offering: you will simply mute any "signal strength" you had before from the problem, making it that much harder to improve things.

As we have covered quite a few times in our time together, this is just another example where innovators, typically seen by others as the "mad scientists" of the organization, are extremely measured in their strategies and responses.

Mike Cassidy on Product Iteration

Mike Cassidy, a VP in innovation and product management at Google and a serial tech entrepreneur himself, has some excellent ideas on the importance of iteration over "masterful knowledge."

The following video (set to play from 1:04 to 4:38) summarizes his seemingly simple approach to iteration, as well as a cooking question he asks when interviewing. Please watch the selected approximately 3 minute-long section of the following 11:42 video.

Video: Mike Cassidy of Google on Product Iteration (11:42)

NINA CURLEY (WAMDA): We're here with Mike Cassidy, who is the director of product management at Google and the founder and CEO of four startups prior to that. Mike, I just wanted to ask you about, um, you typically advise that speed is the most crucial element in a startup's success, and I want to know, is having deep experience in a market really necessary for implementing speed in the development of your company? You say that, you know, hiring known talent quickly is important, and knowing what kinds of innovation will capture market share is really important, but how can an entrepreneur implement speedy, iterative development in the absence of years of experience?

MIKE CASSIDY (DIRECTOR OF PRODUCT MANAGEMENT, GOOGLE USA): So, interestingly enough, all the four companies I did were in quite different areas. One was in computer telephony, linking telephones and databases together, one was an Internet search engine, the third one was an instant messenger for online PC video gamers, and the fourth one was a recommendation site based on recommendations from your friends. So, all four were quite different areas, and I believe it's possible to go into different areas and learn about that area quickly and come up with ideas once you get in the area.

NINA CURLEY: So, you didn't have special prior knowledge prior to getting into these regions; you did just a quick study?

MIKE CASSIDY: Right, I didn't know anything about any of those four areas before I joined them. I'm always joking it's frustrating for me because in the beginning, when I start my companies, nobody will return my phone calls. Nobody knows me at all. And eventually, after a year or so, the company is doing well, and then people start calling me back, but it's always a fresh start in every industry I go into.

NINA CURLEY: But so, what special techniques... did you employ any special techniques in quickly learning the landscape? Did you develop, over time, techniques for assessing what you needed to learn when?

MIKE CASSIDY: Yes, so I believe in launching your products about three or three and a half months after you start the company, and then just iterating quickly with sort of improvements to the product over time. And I believe by launching quickly and then by iterating, you can adjust to the market and find what people really want. For example, my third company, Xfire, we launched the product three months after we sort of came up with that idea, and then every two weeks for the next year, we're coming out with a new version of Xfire. And the first version was pretty simple, didn't do a lot of the things the final version did, but we kept listening to the customers and kept iterating, and it's really hard for competitors to stay at the pace you're going, so eventually you keep up. One of the analogies I like to use is any chess player can be a Grandmaster chess player if I can move twice every time the Grandmaster can only move once.

NINA CURLEY: Ah, so you didn't have, you didn't come up with the innovation right at the beginning, you really just, it was the pace of the iteration that developed these innovations, and how can you sustain that pace? Does it have to do with scaling your business? Does it have to do with the quality of the people you hire? What is the crucial element for having, for sustaining this level of iteration?

MIKE CASSIDY: That's a great question. I often am asked to give advice to people who have a company that's struggling a little bit, and they'll say, Mike, I've got this problem. The team is not working as hard, it's kind of a little bit slower getting stuff out. How can I get them to work faster? And I always say, you're asking the wrong question at the wrong time. What you got to get is people who are similar minded at the very beginning. When I do my interviews with people, I ask them questions in the interview that I try to get across this sort of intensity of pace. Like I ask them, how do you cook dinner? And some of them will say, oh well, I don't know. I put something in the oven and I wait; an hour later, it's ready. Other people will say, oh, I time everything. I want to eat at exactly six o'clock, so I have a schedule: three minutes before 6 I put the broccoli in, and at ten minutes before 6 I have the water start to boil so it's ready when I put the broccoli in, and at twenty two minutes before I start the salad, and that's the kind of people I want in my start-up company.

NINA CURLEY: So you're really selecting for precision, basically. You want to psychologically pre-select your team; this is the most important thing to psychologically pre-select your team for um..

MIKE CASSIDY: Absolutely, you're selecting for a competitive spirit. You want people who want to win. You're selecting for ownership. Everyone who joins my companies takes a pay cut, but they get equity in the company, and so far it's paid off for everyone. We made 22 millionaires at my second company. We made seven millionaires at my third company. So yes, you select for people who take ownership for the product. I don't like people who say, well, that's Charlie's responsibility. I want everyone to say, I see something wrong, I'll fix it.

NINA CURLEY: Okay, and how much of that comes just intact in the people that you select and how much do you incentivize ownership?

MIKE CASSIDY: So, I'm kind of a cynic about some of these things. I think the best predictor of future behavior is your past behavior, so I don't believe I can go get people and get them to be, have, feel more ownership and more passion. You got to find people who have that in them to start with, and I find them everywhere. One of the most important guys at my second company, I had never worked with before, but I played ultimate frisbee with him, this game where you throw the frisbee back and forth and you run up and down the field. He's an amazing ultimate frisbee player; he would dive, lay his body out full across the field, and I said, I bet he's good to work with, and he was awesome. He was fantastic.

NINA CURLEY: Really? Amazing and what kind of numbers are we talking? Can you throw out any numbers in terms that you know when you do get this iteration going at this pace and you have your team, I mean what levels like?

MIKE CASSIDY: How successful our company is?

NINA CURLEY: Yeah, Yeah, how does that translate into monetary value?

MIKE CASSIDY: So I've, I've been very lucky. The first company we only raised, I put in five hundred dollars and each of my two friends put in five hundred dollars. We had fifteen hundred dollars. We didn't raise any venture capital. We sold that one for 13 million dollars. The second company we raised 1.4 million dollars in venture capital in the first round, and 500 days later we sold it for 500 million dollars. So, we joked around we were making a million dollars a day. The third company we sold about two years after we launched it for 110 million dollars to MTV. So, yes, with this sort of speed, I think you can generate significant amounts of, you know, market value, and also speaking on the usership, the second company, the search engine, we were serving 50 million people within a little over a year who were using our search engine. And the third company, Xfire, I think we have over 15 million people using the product now. We had a couple million people by the end of the second year using it.

NINA CURLEY: Amazing. So, what you're saying is, it's really not the seed funding that's the issue, it's really the team that you hire that's going to be the critical factor in your success?

MIKE CASSIDY: The people are everything, totally everything. And everyone says that, but when you really live it, you know. Yes, the amount of money you have to start with I don't think is actually that critical.

NINA CURLEY: Okay, and to switch tacks for a moment, I just want to pick your mind about the future of the web. I don't know if you read the recent article in Wired called "The Web Is Dead. Long Live the Internet" about how we're shifting, as consumers we're shifting from browsing on the open web to paying for highly curated experiences in apps, things like the iPad, the iPhone. How is Google responding to that or, more generally, what sort of product innovation would you advise given this trend? Do you believe this trend?

MIKE CASSIDY: Yeah, so one of the reasons I'm totally excited to be at Google is we're huge proponents of the open web. For example, Android is our phone operating system. We're turning on... over 200,000 times every day someone's activating an Android phone. In the search world, we are doing at any given time between fifty and a hundred experiments for improving the quality of search, so we're always sort of making it faster, finding new content. We have over a billion people a week searching on our sites, so we're using that information from the searches they do to come up with new things, but... we believe in the open web. We believe that over time the open systems are the ones that will eventually win. You can look throughout history, you know, at the various things. Certainly there are advantages in the short term to sort of closed systems having less inoperable parts where things can go wrong, but over, eventually the open systems always win.

NINA CURLEY: Okay, that makes sense. I just have one more question for you. Given that approach that Google is always solidly going to be in that camp, what are innovations in, in the Middle East in the Arab world that a startup company could develop that Google would be interested in?

MIKE CASSIDY: Interesting question. I'm really excited about the Arab world. As you know, I was here a few months ago in Jordan and I met a lot of exciting entrepreneurs. I'm meeting a lot of exciting entrepreneurs today. I think some of the things that are most exciting to me are location-based technology. I think there's a lot of things you can do with location-based technology in the Arab world where, sort of through the phone systems or other technologies, you can locate people and services and products. Whether it's, you know, machine to machine connections or a location inside your phones. You know, lots of GPS devices inside phones, and there's all sorts of things you can expose to people, different services they might want or different ways of finding your friends. I mean, right here at this conference, there's this technology where we can find certain people at the conference by using location. So Google, as you know, Google Maps is a very popular feature and we're always interested in location-based things and things with maps, and I think there's lots of opportunity there.

NINA CURLEY: So, in terms of building Arabic content into that, I mean what would make it unique in the region?

MIKE CASSIDY: I'm sorry? Could you say that again?

NINA CURLEY: What would make it unique in the region and building Arabic content into location-based services? Or how important is Arabic content?

MIKE CASSIDY: You know, today at Google more than half of all of our revenue comes from outside the US. So the Arab region, with three hundred million, you know, consumers, is a tremendous opportunity for us. Whether it's Arabic content or even innovative ideas, and as you know, companies like Maktoob or Jeeran are coming up with really cool technologies that are very interesting to Western companies and Western internet companies. So, I think there's tons of opportunity.

NINA CURLEY: Okay, cool, thank you.

MIKE CASSIDY: Thank you so much for having me.

I would argue that the quality Mr. Cassidy probes for in his cooking interview question is indeed precision, more so than actual iterative approach. I have a feeling he would enjoy the turkey parable.

As an aside, if you would like a perfect example of exactly what NOT to do in an in-depth interview (or even a web video interview), start the video at about :50 and note the long, leading, multifaceted questions asked by the interviewer. This is a common symptom of "overinterviewing" when seen in research.

Final Remarks

"One learns to know a country that's basically quite different from what it appears at first sight.

On the contrary, one will say to oneself, I want to finish my paintings better, I want to do them with care; in the face of the difficulties of the weather, of changing effects, a heap of ideas like this finds itself reduced to being impracticable, and I end up resigning myself by saying, it's experience and each day's little bit of work alone that in the long run matures and enables one to do things that are more complete or more right.

So slow, long work is the only road, and all ambition to be set on doing well, false.

For one must spoil as many canvases as one succeeds with when one mounts the breach each morning."

- Vincent van Gogh to Theo van Gogh, November 26, 1889. [11]

'A Heap of Ideas Reduced to Being Impracticable'

This is quite literally how you may find yourself feeling after early microtesting of the concept. What you structured as an elegant test and iteration plan will, all at once, seem completely jumbled. New insights from live users will arrive that will make you question your original strategy. What seemed so simple and so in line with insights may seem to underperform.

You will again question yourself, your strategy, and the offering.

Breathe.

At no point should we expect any of this will be easy, for if it were, every competitor in the market would already be doing it. Take solace in that fact.

In many ways, early market testing will feel as if you are beginning again. This is both refreshing and dangerous, as you will see so many new opportunities and spaces to pursue, but you can be inadvertently drawn away from center. You can lose sight of the original foundational research and insights that led you to pursue the offering in the first place. For this reason, try to make it a habit to take a glance over original research and customer verbatims on a weekly or bi-weekly basis. You are not looking for new insights; you are simply remembering to center yourself in the strategy.

Remember that at this phase, as in the turkey parable, our goal is to measure, understand, and adjust accordingly. Isolate a factor or attribute, test it, and learn. Understand that there are no failures in research, only insights. A positive outcome is an insight just as a negative outcome is. It is our job to continue to purposefully drive at gaining and compiling those insights to improve the offering.

Spoiling Canvases

Another inevitability of the innovation process is that you will indeed 'spoil as many canvases as you succeed with.' Again, there is no question about this. None whatsoever. But you are in no way alone in this struggle.

You will find that having dinner or drinks with those truly holding an innovation mindset -- be they entrepreneurs or intrapreneurs, creatives or logicals -- will many times result in discussions of "war stories" and spectacular failures. This is no accident, as many of those stories will have a moral, a result that guided that person in future endeavors and innovation pursuits. As the drinks continue to flow, those holding the innovation mindset will typically be the first to engage in vivid self-deprecation, usually also stated using vivid language.

As a researcher, I adore these kinds of conversations not only for their content, but for literally being able to create a cognitive map for these people. As the conversations evolve, you begin to see how resilient those holding the innovation mindset are, and many times, how much they embrace that roguish approach.

Closing

Throughout everything we have covered in our time together, I have tried to keep reinforcing mindset and mentality as much as the content and classes. "Innovation" is already a difficult enough space to function in, and when we layer sustainability into the mix, we are truly talking new spaces altogether. If it is any evidence, as you have seen, much of the content for this course is original simply because there are no texts on creating meaningful sustainability-driven innovation. There are texts on "sustainability," there are texts on "innovation," but I believe the knowledge, synthesis, analyses, and processes we have explored this semester are unique to sustainability-driven innovation.

For these reasons, these pursuits will be that much more difficult... and that much more rewarding. Meaningful innovation in the sustainability space–innovation that appeals to the 3Ps AND the customer's needs–is a difficult endeavor, indeed.

In time, these sustainability-driven innovations will not rely on mandates or regulations or social pressure, but will appeal to actual customer needs in a meaningful way. They are not products or services that somehow rely on receiving a "pass" from customers, or appeal to some tiny slice of early adopters in the market who are willing to sacrifice product performance for some perception.

These sustainability-driven innovations will just make sense.

And this is why they will succeed.

And this is how they will make the world a better place.

I hope you have enjoyed our time together as much as I have, and I greatly look forward to reading your final case.

With kind regards-

Andy