Part 4 - Embedded Ethics

Research Choices Have Real-World Implications

Procedural ethics requires a framework of responsible research behavior, and extrinsic ethics requires explicit consideration of broader impacts. Intrinsic ethics, by contrast, requires a deeper analysis of how the research itself is constructed and of where choices made in the course of research embed value judgments that can affect real-world outcomes. For example, the handling of uncertainty and margins of error may look like purely mathematical questions about the probability that a certain event will occur, yet these uncertainties can determine real-world decisions about actions, regulations, and the like. (Note: Choices made about intrinsic issues can have extrinsic impacts, as the two are intricately related.)

Embedded Values

The basic idea of intrinsic ethics is that choices which appear to be purely mathematical, or purely matters of technical practice, can embed values and shape the application or future direction of energy and environment knowledge. Values can also be embedded in choosing not to attend to certain limits or parameters, that is, in what is not represented in a given analysis.

Reflexivity in Research

Intrinsic ethics is addressed through reflexive analysis: reflecting on research choices in light of value questions like those highlighted here, and correcting course where needed. This reflexivity should occur both while conducting research and during the peer review process.

Some issues to consider regarding the intrinsic ethics of coupled energy and environment systems:

- How are standards of proof, errors, and uncertainties handled in a given analysis?

- What constitutes empirical adequacy, and how consistent are results across how many runs?

- What is the scope? Are some dimensions of the analysis oversimplified?

- What classification typologies are being used (ontologies)?

- Which methods were selected, and how?

- What went into the choice of research questions?

4.1 Framing of Research

Embedded Ethical Choices

Values and ethics become embedded within the production of research, often at the very decision about research topic and question. Such decisions are rarely made under ideal conditions, where resources and time are of no concern. Research depends on deadlines, budgets, peer review feedback, departmental resources, and so on. How research is framed, the choice of explanatory frameworks and global assumptions about variables, and the explanations of causal relationships in a given model all present choices that can embed values, for example about what counts as a representative sample, a common question in biomedical or genetic research.

Choice of Research Questions

Research results are inevitably shaped by the scope and range of research questions. Context-dependent values can influence problem choice: whether through individual interests, funding agency interests, or broader societal interests, contextual values become interwoven into research practice. Further, the choice of research question can also determine whether certain risks are taken into account, or can even be considered, within the framework of a given nanotechnology research program.

If we knew what it was we were doing, it would not be called research, would it? –Albert Einstein

Frameworks and Global Assumptions

A researcher's interests are reflected in accepting certain framework conditions, such as the representational limits of an analysis, or in treating certain variables or parameter values within a model as “more” representative of reality than alternative variables, models, or limits.

Causal Explanations and Narratives

Causal explanations produce a conception of what is happening within a given nanotechnology model or analysis. However, many simplifications and reductions are made simply to make a model usable, and in doing so there is no guarantee that a significant causal relationship has not been overlooked or left out.

4.2 Empirical Adequacy and Simplicity

How Much Observation, How Simple, and What Explanation?

Conducting and publishing research is a process of interpreting observations and describing the results. Research questions and hypothesis formation point us in a specific direction and guide the interpretation of results. But how do we determine whether what we are seeing adequately supports our claims? How many observations do we need before we can assume our interpretation is correct? Does our research apparatus allow us to answer our research question at the necessary level of detail or resolution? And how should we count an observation that does not fit our expected results?
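One standard quantitative answer to "how many observations do we need?" is a sample-size calculation. The sketch below assumes independent, normally distributed measurements with a known standard deviation (`sigma`) and asks how many are needed for a confidence interval on the mean to have a half-width no larger than `margin`, using the textbook formula n = ceil((z * sigma / margin)^2). The function name and the example numbers are hypothetical, chosen only for illustration.

```python
import math
from statistics import NormalDist


def required_sample_size(sigma: float, margin: float, confidence: float = 0.95) -> int:
    """Observations needed so a confidence interval for the mean has half-width <= margin.

    Assumes independent, normally distributed observations with known standard deviation.
    """
    if sigma <= 0 or margin <= 0 or not 0 < confidence < 1:
        raise ValueError("sigma and margin must be positive; confidence must be in (0, 1)")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided critical value
    return math.ceil((z * sigma / margin) ** 2)


# Hypothetical measurement with a spread of 5 units, estimated to within 1 unit
for conf in (0.90, 0.95, 0.99):
    print(conf, required_sample_size(sigma=5.0, margin=1.0, confidence=conf))
```

In this setup, moving from 90% to 99% confidence raises the required number of observations by roughly two and a half times. Which confidence level is "enough" is not settled by the mathematics; it is a judgment about how much certainty the question demands.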

Systems are Complex

Complex phenomena require complex models and descriptions. Building too little complexity into a research hypothesis can oversimplify a situation, leaving out crucial thresholds or other limits in the system(s) under consideration. Research often requires a compromise, frequently for reasons of cost, between adequate observations and extremely comprehensive ones (such as sampling across a large site). All of these choices can lead to false confidence in a model's adequacy and its projections, which can in turn have real-world impacts.

The method of science depends on our attempts to describe the world with simple theories: theories that are complex may become untestable, even if they happen to be true. Science may be described as the art of systematic over-simplification—the art of discerning what we may with advantage omit. – Karl Raimund Popper

Empirical Adequacy and Consistency

Were adequate tests conducted to ensure that the observed phenomena are consistent? Is the study reproducible? Is the instrumentation working within viable parameters and/or limits of observation? Because nanotechnology is an emerging field with increasingly fine tolerances, many observations and conceptions of adequacy can change over time.

Simplicity/Scope

What is the scope of the study under consideration? Is the study sufficiently comprehensive to be relevant across various conditions? Is detail being lost through over-simplification of a model or representation?

4.3 Standards of Proof and Handling of Uncertainty

Proof and Certainty

What constitutes certainty about a given observation? How many times must it be observed to be considered “valid proof” of a particular event? What level of statistical significance is required to conclude that a given event is occurring? Answering these questions can seem somewhat arbitrary, but what counts as proof in one context may leave a “shadow of doubt” in another. Moreover, being wrong costs more in some cases than in others (as we see in the politics of climate science).

Standards of Proof and Handling of Uncertainties

Standards of proof often incorporate social values. As Anderson writes, “Social scientists reject the null hypothesis (that observed results in a statistical study reflect mere chance variation in the sample) only for P-values < 5%, an arbitrary level of statistical significance. Bayesians and others argue that the level of statistical significance should vary, depending on the relative costs of type I error (believing something false) and type II error (failing to believe something true). In medicine, clinical trials are routinely stopped and results accepted as genuine notwithstanding much higher P-values, if the results are dramatic enough and the estimated costs to patients of not acting on them are considered high enough” (Anderson 2009).

Type I and Type II errors:

Type I error (false positive):

The test produces a positive result when the negative result is the case, such as a medical patient testing positive for a disease they do not have. In statistical terms, a true null hypothesis is rejected: new information is taken to change previous estimates when it should not.

Type II error (false negative):

The test produces a negative result when the positive result is the case, such as a medical patient whose ailment goes undetected by the test(s). In statistical terms, a false null hypothesis is not rejected: new information fails to change previous estimates of the probability of occurrence when it should.

The two types of error carry different costs in different contexts, so choosing how to balance them is a choice about values.

Type I and Type II errors can have significant impacts in energy applications and call for careful foresight and consideration from both researchers and peer reviewers.
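The trade-off Anderson describes can be made concrete. The sketch below assumes a one-sided z-test with a known standard deviation, and uses a hypothetical effect size, sample size, prior probability, and pair of error costs, all invented for illustration rather than taken from the source. For each candidate significance level (alpha, the Type I error rate), it computes the Type II error rate and then picks the alpha that minimizes the expected cost of both kinds of error.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()


def type_ii_rate(alpha: float, effect: float, sigma: float, n: int) -> float:
    """Probability of missing a real effect (Type II error) in a one-sided z-test.

    H0: mean = 0; H1: mean = effect > 0; sigma known; n independent observations.
    """
    se = sigma / math.sqrt(n)
    critical = STD_NORMAL.inv_cdf(1 - alpha) * se  # reject H0 when the sample mean exceeds this
    return STD_NORMAL.cdf((critical - effect) / se)


def expected_cost(alpha: float, beta: float, cost_false_positive: float,
                  cost_false_negative: float, prior_effect: float = 0.5) -> float:
    """Expected cost of a decision rule, weighting each error rate by its assumed cost."""
    return ((1 - prior_effect) * alpha * cost_false_positive
            + prior_effect * beta * cost_false_negative)


alphas = [0.001, 0.01, 0.05, 0.10, 0.20]
# Hypothetical study: true effect of 0.5 units, spread of 1 unit, 20 observations
betas = {a: type_ii_rate(a, effect=0.5, sigma=1.0, n=20) for a in alphas}

for label, c_fp, c_fn in [("false alarms costly", 10.0, 1.0),
                          ("missed effects costly", 1.0, 10.0)]:
    best = min(alphas, key=lambda a: expected_cost(a, betas[a], c_fp, c_fn))
    print(f"{label}: best alpha = {best}")
```

With these assumed numbers, the cost-minimizing threshold is 0.01 when false alarms are ten times as costly as missed effects, and 0.20 when the costs are reversed. The data and the test are identical; only the judgment about which error is worse changes the standard of proof.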

4.4 Methods Choices and Classification Strategies

Choosing Research

Often when we travel, we decide where we want to go before we know how we are going to get there. Much the same can be said of how we approach research. We know the kind of knowledge we would like to gather, or the effect we would like to tease out of a certain set of materials, before we know how we will get there. Methods selection can itself shift over the course of an investigation (though hopefully not during an experiment!). As we travel through the research process, we gather data about observations. These data are shaped by our selection of methods and also conform to our classification schemes.

As researchers, how we collect data and how we choose to categorize data are two further processes through which values become embedded in research. This suggests that we should pay close attention to how we justify our methods selection, understand the limits of what our methods allow us to argue, and be able to justify our categorization and organizational choices.

Rumour has it that the gardens of natural history museums are used for surreptitious burial of those intermediate forms between species which might disturb the orderly classifications of the taxonomist.

– David Lambert Lack

Methods Selection

The choice of methods for data collection and/or analysis reflects the researcher's context and significantly affects the intellectual merit and framework of nanotechnology research. “The methods selected for investigating phenomena depend on the questions one asks and the kinds of knowledge one seeks, both of which may reflect the social interests of the investigator” (Anderson 2009). Also, certain methods may be less applicable than others in a given situation. Methods selection should be comprehensively assessed, clearly stated, and justified in the research proposal, including an analysis of possible method biases.

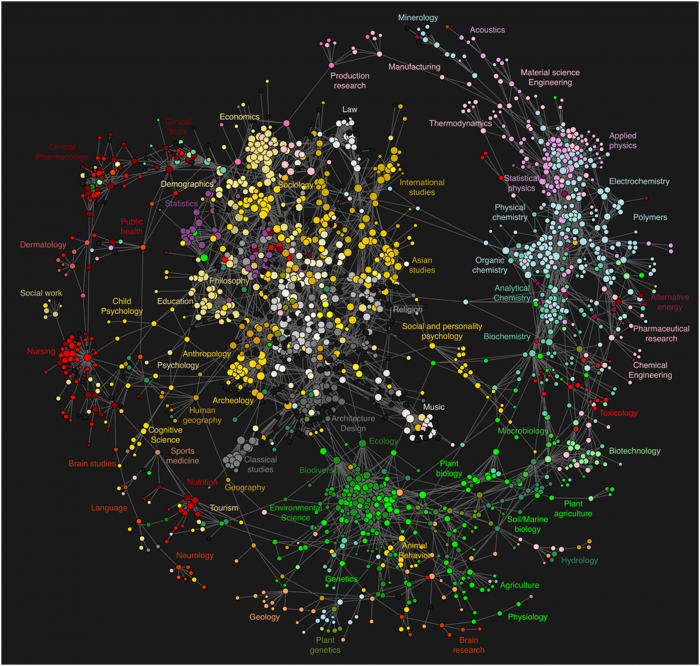

Classifications and Ontologies

How an observation or phenomenon is classified, particularly while the classification strategy is still being developed, including the adequacy of definitions and the granularity of categories, can have significant impacts on later developments, lead to oversights, and even produce misleading conclusions.
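A small, hypothetical example of how a definitional boundary shapes results: the same set of particle diameters (invented values, not measurements) yields very different shares of "nanoscale" particles depending on where the category boundary is drawn. The 100 nm cutoff reflects a commonly cited upper bound for the nanoscale; the 50 nm and 200 nm cutoffs are arbitrary alternatives included for comparison, and `share_below` is a hypothetical helper.

```python
# Hypothetical particle diameters in nanometres (illustrative values, not measurements)
diameters_nm = [12, 35, 48, 55, 70, 95, 105, 140, 220, 480]


def share_below(values: list[float], cutoff: float) -> float:
    """Fraction of observations that a cutoff-based definition classifies as 'nanoscale'."""
    return sum(1 for v in values if v < cutoff) / len(values)


for cutoff in (50, 100, 200):
    print(f"cutoff {cutoff} nm -> {share_below(diameters_nm, cutoff):.0%} classified as nanoscale")
```

With these values, 30%, 60%, or 80% of the sample counts as nanoscale depending only on the definition chosen, before any question about the particles' behavior has been asked.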