Modules

This is the course outline.

Unit 1: Energy and Environment

Unit 1 Introduction: How and Why We Use Energy

Welcome to Unit 1. In this first unit, we will present content in text and video showing the immense value we get from energy, where we get most of our energy, why the energy system must change eventually, and why a faster change would help us.

Each module of the course includes links to topic-related video clips taken directly from Earth: The Operators' Manual, a three-hour miniseries funded by the US National Science Foundation and viewed by millions of people nationwide on PBS. The conceptual foundations of this course were built on the principles and materials created for ETOM.

In addition, the course includes integrated video-enhanced graphics—clicking on many of the images and tables will open a short narrated video from Dr. Alley, explaining the key points. We hope that these will greatly enhance your level of understanding of key concepts presented.

- Why Energy Matters (Module 1)

- What is Energy? (Module 2)

- Oil, Coal & Natural Gas | Drilling, Fracking & Reserves (Module 3)

- The Physics of Global Warming (Module 4)

- The History of Global Warming (Module 5)

To get started, please watch the video below. This particular video will give you a glimpse into what the world's energy usage currently is and what it might be in the future.

Earth: The Operators' Manual

Video: Humans & Energy (4:32)

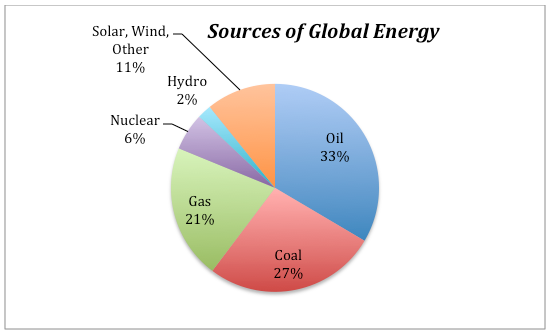

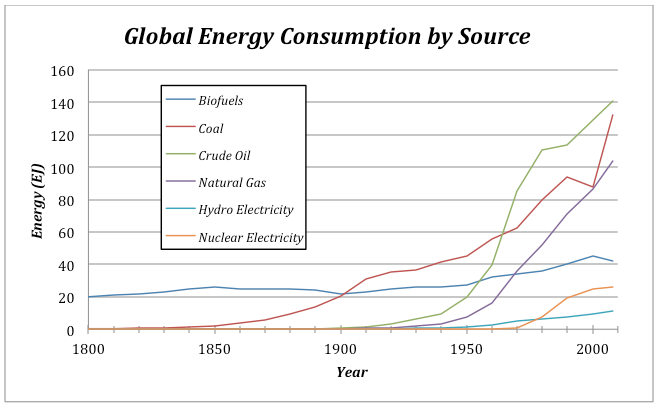

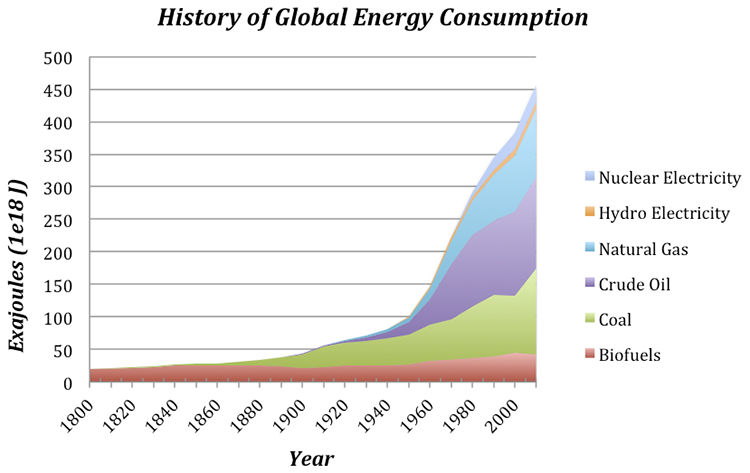

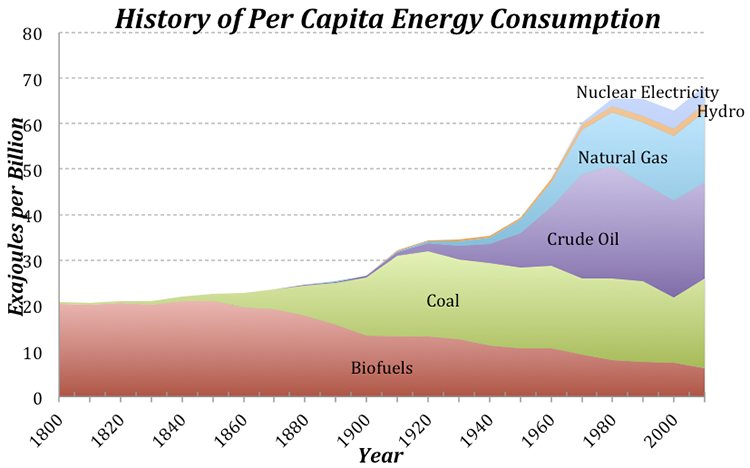

(Richard Alley) Humans need energy. We always have, and always will. But how we use energy is now critical for our survival. It all began with fire...Today, it's mostly fossil fuels. Now we're closing in on seven billion of us and the planet's population is headed toward 10 billion. Our cities and our civilization depend on vast amounts of energy. Fossil fuels-- coal, oil and natural gas--provide almost 80% of the energy used worldwide. Nuclear is a little less than 5%. Hydro-power a little under 6.

And the other renewables--solar, wind and geothermal about 1% but growing fast. Wood and dung make up the rest. Using energy is helping many of us live better than ever before. Yet well over a billion and a half are lagging behind, without access to electricity or clean fuels.

In recent years, Brazil has brought electricity to ten million, but in rural Ceará, some still live off the grid. No electricity, no running water, and no refrigerators to keep food safe. Life's essentials come from their own hard labor. Education is compulsory, but studying's a challenge when evening arrives. The only light is from kerosene lamps. They're smoky, dim and dangerous. Someday, this mother prays, the electric grid will reach her home. (translator) The first thing I'll do when the electricity arrives in my house will be to say a rosary and give praise to God. (Richard Alley) More than half of China's 1.3 billion citizens live in the countryside. Many rural residents still use wood or coal for cooking and heating, although most of China is already on the grid. China has used energy to fuel the development that has brought more than half a billion out of poverty.

In village homes, there are flat screen TVs and air conditioners. By 2030, it's projected that 350 million Chinese, more than the population of the entire United States, will move from the countryside to cities... a trend that's echoed worldwide. Development in Asia, Africa, and South America will mean three billion people will start using more and more energy as they escape from poverty. Suppose we make the familiar if old-fashioned 100 watt light bulb our unit for comparing energy use. If you're off the grid, your share of your nation's energy will be just a few hundred watts, a few light bulbs. South Americans average about 13 bulbs. For fast-developing China, it's more like 22 bulbs. Europe and Russia, 5,000 watts, 50 bulbs. And North Americans, over ten thousand watts, more than 100 bulbs.

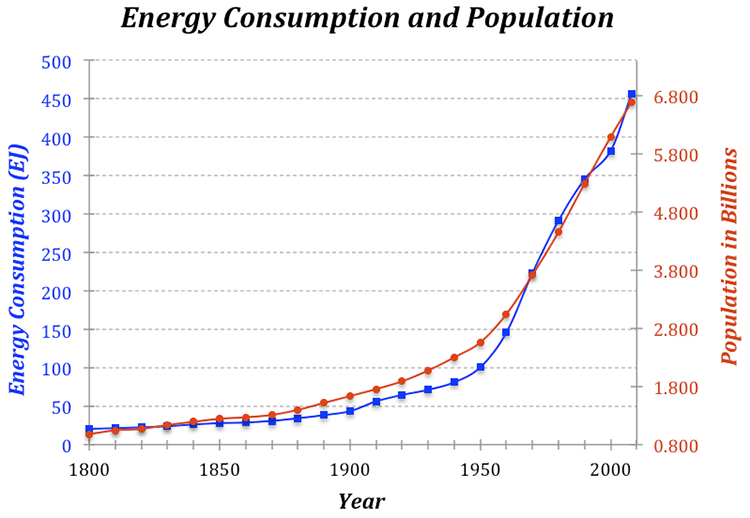

Now let's replace those light bulbs with the actual numbers. Population, shown across the bottom and energy use, displayed vertically, off the grid to the left, North America to the right. If everyone, everywhere, started using energy at the rate North Americans do, the world's energy consumption would more than quadruple, and using fossil fuels, that's clearly unsustainable. No doubt about it, coal, gas, and oil have brought huge benefits. But we're burning through 'em approximately a million times faster than nature saved them for us, and they will run out. What's even worse, the carbon dioxide from our energy system threatens to change the planet in ways that'll make our lives much harder. So why are fossil fuels such a powerful, but ultimately problematic, source of energy?
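The light-bulb comparison in the transcript above is simple division: a region's average power use per person, divided by 100 watts. The short sketch below reproduces it using only the rounded figures quoted in the video. The function and variable names are illustrative, and North America is entered as 100 bulbs even though the video says "more than 100."

```python
# Express per-person power use in 100-watt light bulbs, as in the video.
BULB_WATTS = 100  # the "old-fashioned 100 watt light bulb" used as the unit


def watts_to_bulbs(watts_per_person):
    """Convert average power per person (watts) to a number of 100 W bulbs."""
    return watts_per_person / BULB_WATTS


def bulbs_to_watts(bulbs):
    """Convert a number of 100 W bulbs back to watts per person."""
    return bulbs * BULB_WATTS


# Rounded values quoted in the transcript (bulbs per person).
# North America is given only as "more than 100", so 100 is a lower bound.
bulbs_per_person = {
    "South America": 13,
    "China": 22,
    "Europe and Russia": 50,
    "North America": 100,
}

for region, bulbs in bulbs_per_person.items():
    print(f"{region}: about {bulbs} bulbs = {bulbs_to_watts(bulbs):,} W per person")
```

Reading the table this way makes the gaps easy to see: by these rounded numbers, a North American uses at least twice the power of a European and more than four times that of someone in China.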

Unit Goals

Upon completion of Unit 1, students will be able to:

- Recognize the natural and human-driven systems and processes that produce energy and affect the environment

- Explain scientific concepts in language non-scientists can understand

- Find reliable sources of information on the internet

- Use numerical tools and publicly available scientific data to demonstrate important concepts about the Earth, its climate, and resources

Unit Objectives

In order to reach these goals, the instructors have established the following objectives for student learning. In working through the modules within Unit 1, students will:

- Recognize that even really smart people have failed when climate changed

- Explain how machines and trade have helped other people avoid catastrophe

- Describe how we have burned through energy sources in the past

- Show that people can make money and save the world at the same time

- Recall that using energy doesn’t make it go away, it is just converted into a less useful form

- Recognize the many units of energy and power

- Show that the amount of energy used by people around the world is much larger than the 100 watts inside most people converted from food

- Recall that around 85% of the energy we use is derived from fossil fuels

- Analyze energy use and production in a country other than the United States

- Recall that oil, coal and natural gas are produced naturally by well-understood processes

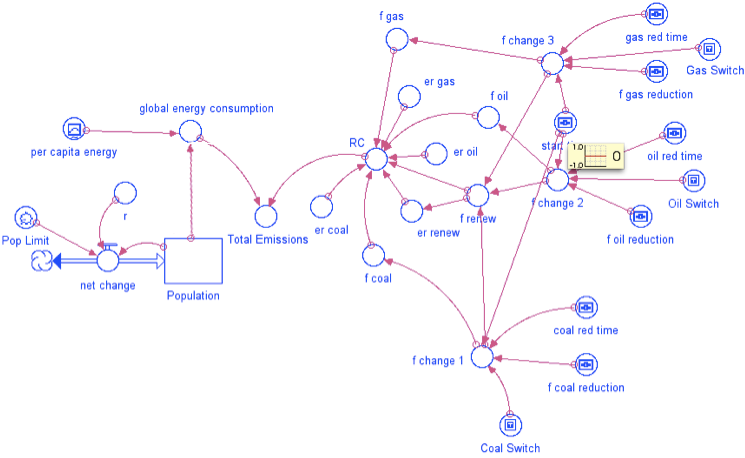

- Evaluate the effects of technology, economics, and population growth on fossil fuel production using computer models

- Demonstrate that our current consumption of fossil fuels is not sustainable by exploring future scenarios with computer models

- Recall that carbon dioxide has a well-understood and physically unavoidable warming influence on Earth’s climate

- Recognize that positive feedbacks amplify changes, and negative feedbacks reduce them

- Recall that multiple independent records from different places using different methods all show that both CO2 and temperature are rising

- Explain that patterns of global warming in the past century can only be reproduced by considering both natural and human influences on climate

- Use a model to show that global climate always finds a steady state, but certain factors may influence how long it takes to get there

- Demonstrate that greenhouse gases are the most significant factor controlling surface temperature

- Summarize how the Earth’s history confirms the warming influence of carbon dioxide

- Recognize that past climate changes have greatly affected plants and animals, usually in unpleasant ways

- Recall that future rise in CO2, and therefore surface temperature is likely to be much worse than what we have experienced in the past 100 years

- Explain how small amounts of climate change are worse for poor people, and larger amounts are bad for everyone

- Assess what you have learned in Unit 1

Assessments

| Module | Assessment | Type |
|---|---|---|
| 1. Why Energy Matters | Get Rich and Save the World | Discussion: Find an Article |
| 2. What is Energy? | Energy Use Around the World | Discussion: Search and Compare |
| 3. Oil and Coal and Natural Gas | Peak Oil Model | Summative - Stella Model |
| 4. Global Warming: Physics | Global Climate Model | Summative - Stella Model |
| 5. Global Warming: History | Learning Outcomes Survey | Self-Assessment |

Module 1: Why Energy Matters

Overview

We will get to the facts and figures soon enough, but in Module 1 we will start with stories of our ancestors that show both the immense value of energy use and its real difficulties.

When drought strikes, people who can drill wells, pump water and trade for food are much better off than people without diesel pumps and trucks. Drought ended the civilization of the Ancestral Puebloan people of what is now the southwestern United States but was much less damaging to the people of Oklahoma more recently. However, before diesel, gasoline, and other fossil fuels, we often burned whales and trees much faster than they grew back, causing real problems.

Within this module, the focus is to get you thinking about the value of energy, and how difficult getting that energy can be—both historically and currently.

Note that we do not expect you to become experts on ancestral Puebloans or Oklahomans—they serve as examples. We could have told similar stories from China, or Europe, or Guatemala, or many other places with many other people. This is really about all of us.

Goals and Objectives

Goals

- Recognize the natural and human-driven systems and processes that produce energy and affect the environment

- Explain scientific concepts in language non-scientists can understand

- Find reliable sources of information on the internet

Learning Objectives

This module is mostly about helping you see how much good we get from energy. By the end of this module, you should be able to:

- Recognize that even really smart people have failed when climate changed

- Explain how machines and trade have helped other people avoid catastrophe

- Describe how we have burned through energy sources in the past

- Show that people can make money and save the world at the same time

Roadmap

| | Item | Due |
|---|---|---|
| To Read | Materials on the course website (Module 1) | |
| To Read | Get Rich and Save the World [1] | |
| To Do | Module 1 Discussion Post | Due Wednesday |
| To Do | Module 1 Discussion Comments | Due Sunday |
| To Do | Quiz 1 | Due Sunday |

Questions?

If you prefer to use email:

If you have any questions, please email your faculty member through your campus CMS (Canvas/Moodle/myShip). We will check daily to respond. If your question is one that is relevant to the entire class, we may respond to the entire class rather than individually.

If you have any questions, please post them to Help Discussion. We will check that discussion forum daily to respond. While you are there, feel free to post your own responses if you, too, are able to help out a classmate.

Unfriending Fossil Fuels

Fossil fuels have become our best friends: oil, coal, and natural gas power about 85% of the global economy. These energy sources are absolutely essential today to keep us healthy and happy. Seven billion people inhabit the planet, a planet that still has whales in the oceans and trees on the land, because we have mostly switched from burning trees and whales for energy to burning fossil trees and fossil algae.

But, we are burning those fossils about a million times faster than nature saved them for us. We cannot continue these practices very far into the future because the resources will no longer be available. If we burn most of our available resources before we make major progress on sustainable alternatives, we risk dangerous shortages of energy in a world that is much harder to live in because of damaging climate change. Given this, we are faced with the difficult task of "un-friending" our best friends—fossil fuels.

According to the “Help” page on a major social networking site, "un-friending" someone is as simple as going to the right website and clicking “Un-friend." Even that simple act has generated a truly amazing number of online discussions that explore the implications, reasons, impacts, options, and ethics of "un-friending." Switching from fossil fuels is far more serious as it involves changing how we spend almost $1 trillion per year just in the U.S., for example.

To begin, let’s take a quick tour of just how valuable fossil fuels are to us. Later, we will look at the dangers of continued reliance on fossil fuels. Looking at the good and the bad of fossil fuels will help us make sense of the issues at hand.

Required Reading

Get Rich and Save the World [1] is an article by Dr. Richard Alley from the Earth: The Operators' Manual website. This will give you more background before moving on to the next section in this module.

At the end of this module, you will be asked to join in an online discussion of the module content with other course participants. You may access the Week 1 Discussion Forum at any time, but we suggest that you work through all of the content first so you are ready to fully engage in the topic-related discussion(s).

Dealing with Drought

Short Version: Drought or other natural disasters can cause even really smart people to fail badly if they don't get enough help. However, with plenty of fossil-fueled tools and trade, the dangers of natural disasters have been reduced greatly. Here, we consider two cases of people responding to severe droughts — one before the age of fossil-fuel energy, the other during the age of fossil-fuel energy.

Friendlier but Longer Version: We could tell many stories about the benefits of fossil fuels. Here is one. The details of this story are not especially important, but the basic idea is very important: our ability to use fossil fuels to power our tools makes us much better off.

A few years ago, a great group of Penn State students, faculty, and film professionals toured many of the national parks of the US southwest. We hiked to the bottom of the Grand Canyon, rafted the Colorado below the Glen Canyon dam, slept on the slick rock at Canyonlands, and otherwise had a truly wonderful trip.

Many of us were especially fascinated by Mesa Verde. Ancestral Puebloan (often called Anasazi) people lived at that site for roughly 700 years—much longer than the history of the Americas since Columbus—first on top of the mesa, but then moving to build intricate dwellings in caves down the mesa sides, commuting up ladders and steps carved in the rock to work the fields on top. But, after most of a millennium, the people left.

Archaeological sites are almost always open to interpretation and argument. We know what was left behind, and we can learn much of what was going on around the area, but the record is necessarily incomplete and viewed through the lens of who we are.

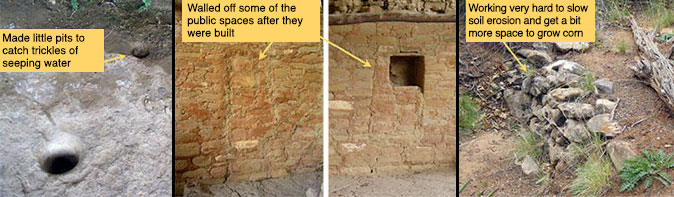

Still, much of the Mesa Verde story is rather clear. The national park rangers showed us the little holes that the people painstakingly carved in the rock in the dwelling caves to capture a trickle of water. We marveled at the carefully constructed check dams, stones set to stop the erosion of the mesa top and catch a little soil and water to grow a little more corn. Food-storage structures were built in places that were very difficult to reach. And, toward the end, windows between different parts of the cliff dwellings were blocked with rocks, dividing people.

Video: Mesa Verde Story (8:29)

PRESENTER: This is a wonderful, but a little bit disturbing, story of some really smart people living in southwestern Colorado, ancestral Puebloans, at a place called Mesa Verde. They lived there for hundreds of years and left shortly before Columbus got to the New World. A lot of their farming was done on top of a mesa, and they lived in caves down the side of the mesa in these wonderful structures made mostly out of stone. Here is a modern ladder with a person for scale.

And what I want you to notice here, in addition to the great buildings, is up here on top. This fairly clearly was a granary-- this is where they kept their food late in their existence there. It's probable, not certain, but it's probable that this is a little bit like a modern person putting the cookies way up on the upper shelf, so they won't eat them before they're ready to. Except this wasn't the cookies, this was the food. And that might be something you'd do if food was really getting scarce.

Now that structure we just looked at, if you go behind it, you find it's actually built in a cave. And this is sandstone, and there are little bits of shale, and water sort of percolates down through here. And then it hits the shale, and it seeps out. So it's a little bit damp back there, in a generally dry climate.

And if you look around back here on this rock, do you see? You see things like this-- little holes they dug in the rock so that when water came seeping out, it would fall into these holes, drip in, and you could take a cup and get a little bit of water. And that's what you do if you're really thirsty because that doesn't look like the best water for us. But if that's all the water you have, that's what you do.

Now they built these wonderful structures, and some of them had windows between, say, your house and mine, or your room and mine. And late in the occupation, it appears that they walled the windows off. And usually, when you're walling the window off, you're trying to keep something out. And it is possible that they were not getting along with their neighbors quite as well as they might once have if they're walling off the windows within the structure.

Now if you go up on top where they grew the corn, this actually was built by the ancestral Puebloans, hundreds of years ago. And it stops erosion. So there's a little tiny stream comes through here, but this little dam of rocks catches a little bit of soil, where you could grow a little bit of corn. And it traps a little bit of water, and a little bit of soil. And so you're looking at people that really were shepherding what they had, conserving. And apparently, they needed this.

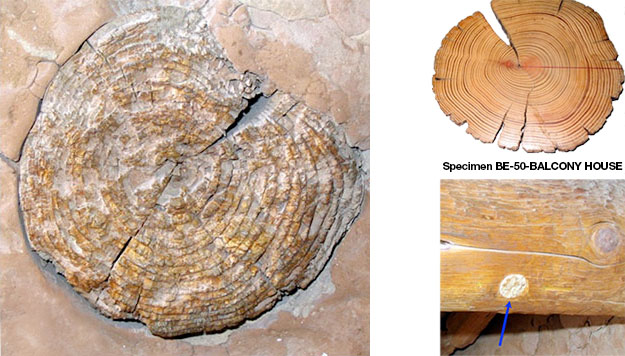

What you see then, is people who were living on the edge. Hard to get enough food, hard to get enough water, life was getting hard. If you look around the structure, it's mostly made of stone, because there weren't many trees. But there's a little bit of wood in the houses there at Mesa Verde.

If you know anything about wood, you know that it has layers. And the layers, when the tree is happy, it grows a thick ring. And when the tree is unhappy, it grows a thinner ring. And you can see different thicknesses of rings in this.

And you can take cores, and you can count the layers, and count how thick the layers are. And in a place like Mesa Verde, where it's very dry, a happy tree is one that has rain. And an unhappy tree doesn't.

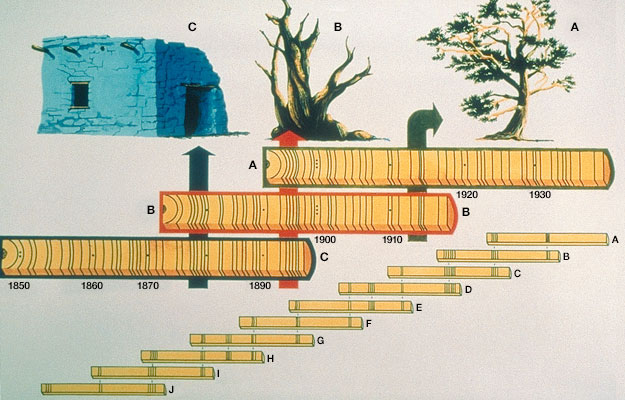

It's also possible, if you have a living tree, you can take a core from it, and you can see the pattern of thick and thin rings in that core. There might be dead trees nearby, and you can see the pattern of thick and thin rings in that, and you can match them up between trees. And you can go back to the wood in the archaeological sites, and you can find the same pattern. And so you can make a tree ring record, which is longer than the life of any given tree, with the thickness of the ring telling you how much it rained.

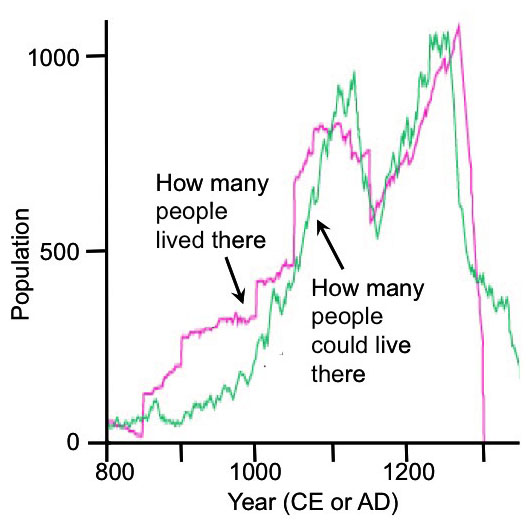

So now what can you do? Archaeologists and tree ring people got together. And they made the plot that you're going to see here. The year 800 is on your left, the year 1200, 1300 there, which is sort of the crash.

And so first of all, you have in green here, a history of how many people could live in the area. And this is actually done just down the road from Mesa Verde, at a place called Long House Valley that had less trade, so it was easier to work on. And what they did is they looked at the trees, and they said how thick a layer is, is how much it rained.

Rain also tells you how much corn could be grown, and corn is how many people could live there. So the green curve is from trees and from knowledge of the people-- how many people could live in this place. Independently, archaeologists went in and they looked at the classical things-- how many people were living there? You know, how many burials, and how many houses, and that sort of thing. And so they looked at how many people were living there, and that's this curve.

And what you'll notice is within the scientific uncertainties of all this, these are the same record. How many people lived there, and how many people could live there, are essentially the same. When it got wet, population went up. When it got dry, we don't know whether they died, or whether they left, or whether they quit having babies. But the population went down.

And the rains came back, and so did the people. And then the rains left. And at some point, the people said, we are out of here. We're going to become environmental refugees, and we're going to go someplace it rains more. And they left.

And so these are very smart people, doing amazing things. But they were controlled by the climate, ultimately. And it got them in the end.

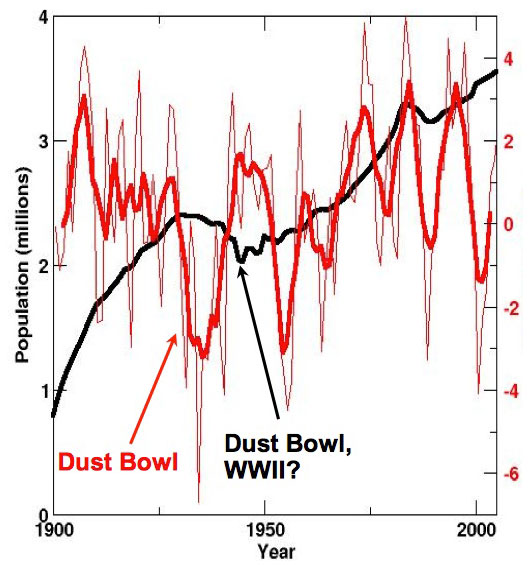

Now, this is more recent. This is Oklahoma from the year 1900 to the year 2000. And the red curve here now is a history of drought.

And down is really dry, and this thing right down here is the Dust Bowl. And that made great literature, but it made lousy living, and you've probably seen the pictures of the Okies headed for California to get away from the dust. But look at the population history of Oklahoma, and you'll see that while the Dust Bowl kicked them-- and then World War II, some probably going off to war-- it's not nearly the disaster it was for the ancestral Puebloans.

Now, these are also smart people, but these are people that when the drought hit, some of them got in gasoline-powered cars and they drove away. But some of them had food delivered in gasoline-powered cars, or in diesel-powered trains. They had diesel-powered well drilling, that they could drill a well, and they could run motors, or run windmills to pump water out. And so they had more tools, and they had more stuff-- and those were fossil-fueled.

And more recently, when other droughts have occurred, it really hasn't affected the population much at all. Little tiny changes, but really not too much. And so what you can see is our having fossil fuels, and having machines, and having trade has greatly insulated us from what otherwise would be disasters for even very smart people.

Some of the evidence we saw at Mesa Verde of people dealing with hard times caused by a drought.

The evidence is very clear that the people were conserving water and soil, working to maintain and improve their ability to grow food. The hard-to-reach food storage might be a truly serious version of someone hiding something on the top shelf so they don’t eat it before they should, and the window-blocking is at least suggestive of increasing social stresses.

To learn more of this story, scientists went to Long House Valley in Arizona, a simpler place nearby that was occupied by the same people. Recall that the age of a tree can be learned by counting its yearly rings. These rings are easy to see in places with pronounced seasons: trees grow rapidly during the spring and early summer, putting on a lot of new wood that appears lighter in color, and then during the fall and winter growth slows way down and very little wood is added; this late-season wood is denser and darker. So, one thick light band and one thin dark band make up one year. Trees that grow in the tropics, where there may be little difference between summer and winter, often lack such rings; however, in tropical settings with defined wet and dry seasons, trees do develop annual rings. The important thing is that there must be seasonality for trees to develop annual rings. In the dry climate of a place like Long House Valley, trees grow better when the growing season is wetter, so a tree will grow thicker annual rings in wet years; the ring thickness is directly correlated with the amount of rainfall. In colder climates, the ring width can be correlated with temperatures during the growing season: warmer temperatures lead to thicker rings.

Thus, tree rings preserve a record of the climate history: rainfall in drier regions and temperature in colder regions. And, living trees overlap in age with trees that were used in construction, or trees that died but haven’t rotted yet. Using the pattern of thick and thin years to match the modern and older wood (a technique called cross-dating), the history of rainfall can be extended beyond the life of a single tree. Cross-dating has enabled us to produce continuous tree-ring records that go back about 12,000 years, even though the oldest living tree is just a bit over 5,000 years old.
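The matching step in cross-dating can be sketched in a few lines of code. The example below slides an older sample along a reference series from a living tree and keeps the alignment where the thick-and-thin patterns agree best, scored here with a plain correlation coefficient. This is a simplified illustration, not the procedure used by the researchers: the ring widths are made-up numbers, the function names are hypothetical, and real tree-ring work first standardizes the widths and checks matches across many samples.

```python
from statistics import mean


def pearson(a, b):
    """Pearson correlation of two equal-length lists (0.0 if either is flat)."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    if va == 0 or vb == 0:
        return 0.0
    return cov / (va * vb) ** 0.5


def best_offset(reference, sample, min_overlap=5):
    """Slide `sample` along `reference` and return (offset, correlation).

    `offset` is the position in `reference` where the first ring of
    `sample` falls; a negative offset means the sample starts earlier.
    """
    best = (None, -2.0)  # any real correlation is >= -1
    for offset in range(-(len(sample) - min_overlap),
                        len(reference) - min_overlap + 1):
        start = max(0, offset)
        end = min(len(reference), offset + len(sample))
        if end - start < min_overlap:
            continue
        r = pearson(reference[start:end], sample[start - offset:end - offset])
        if r > best[1]:
            best = (offset, r)
    return best


# Hypothetical ring widths in millimetres, oldest ring first.
living_tree = [1.2, 0.8, 1.5, 0.6, 0.9, 1.4, 0.7, 1.1, 1.3, 0.5, 1.0, 1.6]
# An old beam whose last six rings overlap the first six of the living tree.
old_beam = [0.9, 1.7, 0.4, 1.2, 1.0, 1.2, 0.8, 1.5, 0.6, 0.9, 1.4]

offset, r = best_offset(living_tree, old_beam)
print(f"best offset: {offset} rings, correlation: {r:.2f}")

# A negative offset means the beam adds older rings to the front of the record.
extended = old_beam[:-offset] + living_tree if offset < 0 else living_tree
print(f"extended record length: {len(extended)} rings")
```

With these invented numbers, the best match places the beam five rings before the start of the living tree, so the combined record is 17 rings long instead of 12. Repeating the same step with ever older wood is how a chronology can reach back far beyond the age of any single tree.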

Rain and Population

Rain can grow corn as well as trees, and corn can grow people. Thus, knowing something about trees, corn, and people, a team of scientists can start with tree rings and learn how many Ancestral Puebloan people could have lived in an area. Meanwhile, archaeologists are able to use their techniques of digging and dating to learn how many people actually lived in an area. Teams of archaeologists and tree-ring climatologists did this research at the “end of the road” in the small, remote Long House Valley, which was not a trading center.

What they learned is striking, as shown in the figure.

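Next, take a look at a similar history, from Oklahoma over the last century. The Dust Bowl of the 1930s was a major drought, made worse by various economic decisions about land use. Wonderful literature documents the terrible economy and environment, as people suffered and died. Even so, Oklahoma's population fell far less than the Ancestral Puebloan population had during its droughts, because people could drive away, have food delivered by fossil-fueled cars and trains, and drill wells and pump water with fossil-fueled equipment.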

Yet, even today, where the economic resources are not available, as in the African Sahel, droughts have huge consequences and drive widespread starvation or migrations of environmental refugees.

Every person I ever met who studied the ancestral Puebloan people of Mesa Verde and surroundings has come away deeply impressed with the resourcefulness and cleverness of the people. The difference between Puebloan and Oklahoman success during drought is not because one group was smart and the other wasn’t. But the technologies and trade are vastly different (for many reasons!), and the people who could call on more tools and more help were more successful. Some of those tools were wind-powered, but most ran on fossil fuels, and success has increased as the use of fossil fuels increased.

Want to know more?

Take a look at the Enrichment called Burning for Learning.

Running Out of Trees

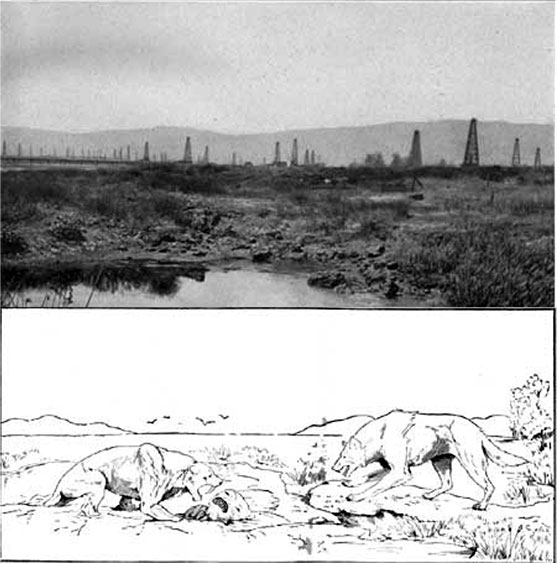

The early European settlers in central Pennsylvania (and many other places) wanted iron, turning rusty soils into pig iron in dozens of different furnaces (including Pennsylvania’s Centre Furnace, just down the hill from Penn State’s University Park Campus, where this is being written), and then turning the hunks of iron into useful things in forges (including Pennsylvania’s Valley Forge).

Video: Days of Iron (2:10)

This is Centre Furnace. The road runs up the hill to Penn State University Park campus and the town of State College. But State College didn't even exist when the university was founded in 1855. The university was built up the hill from the Iron Furnace, and they've been making iron here since 1791.

This is a glass slag. This is what was left when they melted the ore to get the iron out and drained that away and then this chilled and it froze to make the glass. Melting the ore took energy. And the energy came from charcoal, and the charcoal came from trees. To fire a furnace for a year took more than a half a square mile of trees. But the furnace was served by an independent community and it had people in it who built houses and heated them in the winter and cooked, and that took wood too.

Running a furnace and what was around it, took a square mile of trees a year. And there were lots of furnaces and lots of forges, like Valley Forge, that turned the iron into useful things. Furnace closed in 1858. Production moved west to use better ores and to use coal as a fuel because the trees were gone. It was about the same time as peak whale oil, and just before the first modern oil well up the road here in 1859. Today, we have whales and we have trees because we burn fossil algae and fossil trees, oil and coal, and natural gas.

Pennsylvania by itself had dozens of iron furnaces. The early iron furnaces and forges were fueled by charcoal, which was made from trees. As many as 100 workers would spend fall and winter making the charcoal for just one furnace, which used trees from more than half a square mile (more than a square kilometer) per year. Those people were burning a lot of trees in their fireplaces in winter as well, and the forge that converted the pig iron to useful things required as much charcoal as a furnace. Thus, forests and iron-making didn’t coexist for very long: the Commonwealth of Pennsylvania was rapidly converted from “Penn’s Woods” to the “Pennsylvania desert”, with almost no trees or wildlife remaining. You can see this deforestation across the US in these maps [3]. And it wasn’t just Pennsylvania, or just Europeans. The growth of the iron industry in China led to deforestation, too, and many other people around the world have cut trees much faster than they grew back.
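The areas quoted above are easy to check with a little arithmetic. The sketch below converts half a square mile to square kilometers and then adds up the forest a single furnace community might have used between the dates given in the video (1791 to 1858) at a square mile per year. The cumulative figure rests on loud assumptions that are not in the source: that the furnace ran every year at that rate and that no cut-over land regrew and was cut again. Treat it as a rough upper-bound illustration; the function names are hypothetical.

```python
# One mile is exactly 1.609344 km, so one square mile is about 2.59 km^2.
SQ_KM_PER_SQ_MILE = 1.609344 ** 2


def sq_miles_to_sq_km(sq_miles):
    """Convert an area in square miles to square kilometers."""
    return sq_miles * SQ_KM_PER_SQ_MILE


def cumulative_sq_miles(sq_miles_per_year, first_year, last_year):
    """Total area cut at a constant yearly rate (assumes no regrowth)."""
    return sq_miles_per_year * (last_year - first_year)


# "More than half a square mile" per furnace per year, in metric units.
print(f"0.5 sq mi = {sq_miles_to_sq_km(0.5):.2f} sq km")

# Furnace plus its community: about one square mile per year (from the video).
total = cumulative_sq_miles(1.0, 1791, 1858)
print(f"1791 to 1858 at 1 sq mi per year: {total:.0f} sq mi")
```

Half a square mile works out to about 1.29 square kilometers, which agrees with the "more than a square kilometer" in the text, and 67 years at a square mile per year gives 67 square miles for one furnace community under these assumptions.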

Running Out of Whales

The flickering light of a fireplace or wood stove isn’t great for reading in a dark Pennsylvania winter, so people have burned many other things for light. In Pennsylvania and elsewhere in the US, wealthy early European settlers preferred burning whale oil, which didn’t stink like tallow candles (made from animal fat), and didn’t blow up like the alcohol-turpentine mixture known as camphine. At its peak, the Yankee whaling fleet had 10,000 sailors on ships, scouring the far reaches of the ocean for whales to supply oil. Populations of the main species pursued by the Yankee whalers dropped precipitously, and the Yankee production of whale oil followed, with prices rising greatly, from a low that would be about $7/gallon today, to a peak of almost $25/gallon. The total amount of whale oil collected by the Yankee whalers in the 1800s is roughly the same as the total amount of oil (petroleum) imported by the United States in a week—if we hit a shortage of our modern energy sources, we cannot easily go back to our former sources!

Video: Whales Celebrate Oil in Pennsylvania (0:48)

PRESENTER: This is an editorial cartoon that was published in the magazine, the publication, Vanity Fair in the year 1861, just before the US Civil War. And it is the grand ball given by the whales in honor of the discovery of the oil wells in Pennsylvania. And you'll notice the whales in their evening dress being served by frogs. And it's just before the Civil War, so you have the oil wells of our native land, may they never secede. And you have oils well that ends well. And we whale no more for our blubber. We have whales because we burn fossil algae. We don't burn whales to see at night anymore.

As the US got out of the whaling business, others—particularly Norwegians—got into it, using new technologies including faster boats and harpoon cannons to hunt species that had eluded the Yankee whalers. But even the vast resource of fast Antarctic whales proved small compared to the hunger of humans, and soon those whales were depleted as well.

Video: Peak Whale Oil (2:16)

PRESENTER: This is the history of whale oil production from the Yankee fleet from New England in the United States from the year 1800 on your left to the year 1880 on your right. And you'll see that they got better and better at whaling. And then, they went over peak whale oil and down the other side.

A lot of this was things like there's a civil war over here, when some whaling ships were sunk to block Southern harbors. The whaling fleet is crushed in the ice off of Alaska over here, and insurance prices go through the roof. But they were up off of Alaska because they couldn't find whales anywhere else. And that's what was going on.

Now here, there are 10,000 men on ships out of New England looking for whales in the world oceans. And there's lots more people working in New England to process the whale oil and what have you. Because you kill the whale and you boil the whale to make the oil, but then all the pieces of the whale were used for various things.

Now as they get better at whaling, the price went down. And the low point here is about $7 a gallon for whale oil that was used in lamps. As soon as peak whale oil was hit, the price of whale oil went up to $23 a gallon. And this is the equivalent of modern money.

And so what you find is it's not when you run out of the resource that the price goes up. It's as soon as the resource starts to get scarce. Now indeed, the free market worked in some sense. People went up the road from where I'm speaking to you and they drilled the first modern oil well, the Drake Well, in 1859. But you'll notice even that didn't really bring the price back down.

All of this oil-- 100 years of whaling-- 10,000 men at the peak-- collected as much whale oil as about one week of modern US oil imports. So there's really no chance that we can actually go back to the way we used to do things.

Video: Polka Oil (0:47)

PRESENTER: This is actually the cover of a piece of sheet music that was published in 1864 in New York. This is "The American Petroleum Polka," or charge, or gallop, or waltz, or march. And it has a picture of a beautiful Pennsylvania scene, the oil well spouting its oil. Now oil was black back then. Oil is still black. But you couldn't have black oil falling on the lady's pink dress, so they made the oil white. And then bragging, "This oil well threw pure oil a 100 feet high." People understood the value that you get from oil, from petroleum. And they celebrated that.

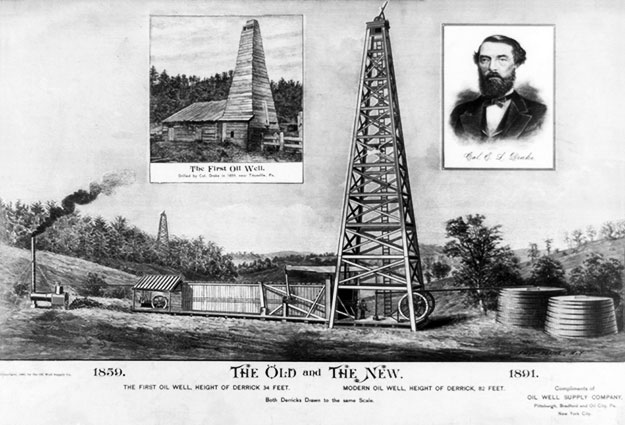

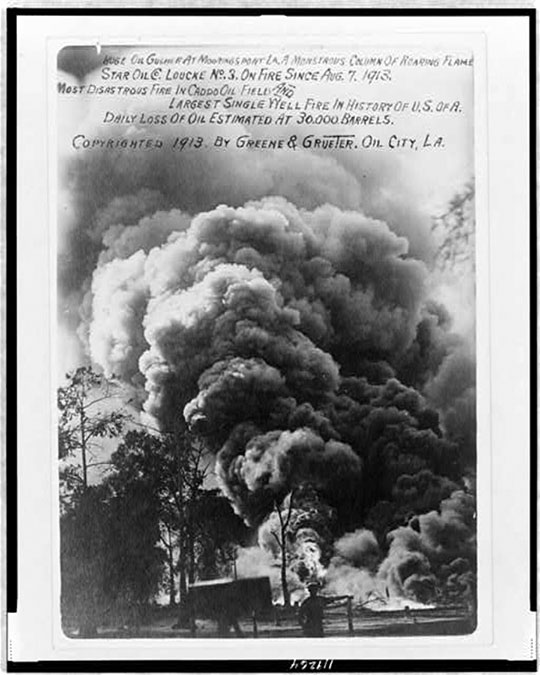

The first modern oil well was drilled in Pennsylvania along Oil Creek, up the road from where Dr. Alley lives, in 1859, shortly after peak whale oil in the US and the sharp rise in whale-oil prices. The impact was understood even then, with the magazine Vanity Fair in 1861 publishing an editorial cartoon showing the “Grand Ball of the Whales in Honor of the Oil Wells of Pennsylvania”, featuring the sign “Oils well that ends well”. The cover of the 1864 sheet music American Petroleum Polka features a Pennsylvania scene including a lady in a pink dress and an oil well that “…threw pure oil 100 feet high” (30 m).

Earth: The Operators' Manual


Video: America's Energy Past: A 3-minute clip on "peak whales".

Narrator: But to have a sustainable energy future, we have to do things differently than in the past. Richard Alley explains-- We've been burning whatever was at hand for a long, long time. But as we see repeatedly with energy, you can burn too much of a good thing. And there are patterns in the human use of energy and if we're stupid enough to repeat them, burn all the fossil fuel remaining on the planet and put the CO2 into the air, we will cook our future.

Take what we did to trees in North America, for example. When the first settlers arrived on America's east coast, the forests were so thick, you could barely see the sky. That soon changed. And the forests almost completely disappeared as more and more trees were cut down to meet the heating, cooking and building needs of a growing population. Making iron needed lots of furnaces and the furnaces ran on charcoal made from trees.

You can trace that history in tell-tale place names from my home state of Pennsylvania. So farewell virgin forests, hello Pennsylvania Furnace, Lucy Furnace, Harmony Forge, and Valley Forge of Revolutionary War fame. Large areas of forest were soon depleted, and charcoal making and iron production moved on, to repeat the process elsewhere. Peak Wood, meaning the time of maximum production, came as early as the first decades of the 19th century or even before that for some parts of the east coast. The pattern of using up an energy resource until it was nearly gone was repeated at sea.

As America's population grew, so did their need for a better way to light the night. So whaling crews went to sea, on the hunt for the very best source of illumination... whale oil. At first, large numbers of whales were found nearby. They could just be towed to shore. But by the 1870s, we'd burned so many whales to light our evenings, that all the easy whales were gone. Whale-oil prices roughly doubled. Now ships had to travel close to the poles in search of bowhead whales. Their oil wasn't as good. And conditions were really dangerous. In 1871, up in the Arctic, 33 ships were trapped in the ice and crushed. Just as happened with America's forests, we'd exploited the most easily accessible resources and hadn't stopped until we'd almost used them up. Lucky for us, in 1859 a cheaper and more abundant source of energy had been discovered with Edwin Drake's successful oil well, drilled in Titusville, Pennsylvania. And for 150 years, America ran and grew on oil and coal.

Discussion Assignment

Reminder!

After completing your Discussion Assignment, don't forget to take the Module 1 Quiz. If you didn't answer the Learning Checkpoint questions, take a few minutes to complete them now. They will help you study for the quiz, and you may even see a few of those questions on the quiz!

Discussion Question

Objective:

Show that people can make money and save the world at the same time. Find an article online about someone who has made money by doing something that conserves energy or generates energy in a new way that is less damaging to the Earth than traditional fossil fuel extraction and burning. Share it with the other students in this course and discuss the various ways entrepreneurs have approached this issue.

Goals:

- Find reliable sources of information on the internet

- Communicate scientific ideas in language non-scientists can understand

Read:

Get Rich and Save the World [1] from Earth: The Operators' Manual

Description:

Many of us pessimistically accept the idea that in order to make money and progress, we have no choice but to inflict some amount of damage on the Earth and its environment. But there are those out there who have flipped this axiom on its head by finding ways to make money by doing things that help the Earth. For this activity, search online for an article to share with the class. The article should describe one way in which someone or some company has found a way to make money by saving energy or by developing new alternative means of producing energy.

Start by searching the terms "energy entrepreneurs" or "environmental entrepreneurs". Click around until you find something interesting.

Once you find an article you would like to share, write 2-3 sentences summarizing the content. Then, write an additional 1-2 sentences explaining your thoughts on making money and helping the world. Explain in your own words why you think it is or is not possible or necessary to implement these ideas on a global scale.

Instructions:

Your discussion post should include a link to the article you have chosen, a summary 100-150 words in length, and a personal commentary 75-100 words in length. Your original post must be submitted by midnight on Wednesday. In addition, you are required to comment on at least one of your peers' posts by midnight on Sunday. You can comment on as many posts as you like, but please try to make your first comment to a post that does not have any other comments yet. Once you have an idea of what you want your post to be, go to the course discussion for your campus and create a new post.

Scoring Information and Rubric:

The discussion post is worth a total of 20 points. The comment is worth an additional 5 points.

| Description | Possible Points |
|---|---|
| link to appropriate article posted | 5 |
| summary provides a clear description of the article content (100-150 words) | 10 |
| well-reasoned comment on your own article included in your post (75-100 words) | 5 |
| well-reasoned comment on someone else's article and post (75-100 words) | 5 |

Summary and Final Tasks


Summary

Our history is thus quite clear. Life is hard if we have to do everything for ourselves. We rely on arranging for help, getting energy from outside us. As we have learned to hunt, gather and control energy, we have gained the ability to survive droughts, cold, and other problems that might have defeated us before. But, even for resources such as whales and trees that can grow back, we often over-harvest until they become scarce (or disappear entirely, as we have done to many species such as the wooly mammoths of ice-age North America). When we switched to heavy use of fossil fuels, we reduced our reliance on some of the earlier sources—we have whales and trees today because we rely on burning oil, coal and natural gas.

Reminder - Complete all of the Module 1 tasks!

You have reached the end of Module 1! Double-check the Module Roadmap table to make sure you have completed all of the activities listed there before you begin Module 2.

Enrichments

Use the links to go to the enrichments for Module 1. These materials are not required and will not be covered in the assessments, but they are interesting and will add to your understanding.

Burning for Learning

Do you ever empty the lawn-mower bag to get your dinner? Or chew up a handful of wheat or leaves from the maple tree? How about raw meat?

Cows can succeed by eating grass, but they have four stomachs and spend a lot of time “chewing their cud” to help break down the grass to be digested. Caterpillars can eat wheat or maple leaves, but a whole lot of a caterpillar is a digestive tract. And many predators eat raw meat.

But, we don’t do any of these things. We have mastered the art of using fire to cook our food. This kills parasites, but it also starts the process of digestion. We don’t have the type of digestive system that would allow us to get enough energy out of leaves and grass or “raw” wheat and raw meat, to keep us active enough to grow, harvest or catch those foods in the wild. If you are dieting to lose weight, eating raw vegetables is a great idea; if you are trying to survive the winter as a fur trapper in some remote part of the Yukon, you might look for something that supplies a bit more energy.

Fire may be the big difference between humans and other primates. If we didn’t cook, we wouldn’t get enough energy from our food to supply our big brains. Instead, we’d need a bigger or longer digestive system to process leaves and seeds and roots and raw meat, but the extra digestive system would use up a lot of the energy it extracted from such things to keep itself alive, with not enough energy left over to support all the extra gray matter between our ears. We really may have needed to burn to learn!

We’ll probably never know for sure whether fire was really required for us to survive as humans, but there is no question that it makes life easier in many ways. Staying warm in an Arctic winter is much, much easier with a fire than without one. Fire helps in scaring away predators, killing bad things in food, and more. For example, the native people of the eastern US grew corn, beans, and squash in clearings in the forest. Chopping down trees with stone axes is not easy; “girdling” by cutting the bark will kill the trees, and fire can then be used to clear the land and keep it clear. (Slash-and-burn agriculture is not a new invention!)

Burning wood is just one of the ways that we humans use to get someone or something else to do some of our work for us. Rather than being limited by the energy we can get from our metabolism (the food we “burn” inside of us), we get lots of extra energy by burning other things outside of us. We burn coal, natural gas, and petroleum to generate most of our electricity and power our machines. We all use this energy and our share of it is something like 100 times as great as the energy we consume in the form of food! So our external energy use is far greater than our internal energy use from food.

Shortly after the last ice age ended, hunter-gatherers in many parts of the world began settling down and developing agriculture. This switch to growing food may not have been possible during the highly variable climate of the ice age. This switch helped fuel a major growth in population that continues today. But, by many measures, the switch also caused the new farmers to become less healthy, eating a less varied diet and suffering from more diseases. Disease organisms and parasites enjoyed it when their human hosts settled down close together, making it easy to cause more sickness! You will find LOTS of ideas about why our ancestors settled down and started growing crops. One big possibility is that the world was nearly full of hunter-gatherers: the good places for finding something to eat were already taken, people died in marginal areas during bad years, and people didn’t want their children to die, so they developed a new “technology” to feed themselves.

Very few people today have spent enough time with a shovel or hoe to know how difficult agriculture can be, even with modern tools. Plowing and cultivating are hard work. So, perhaps as early as 8000 years ago, people were figuring out how to get oxen to pull plows. This was NOT an easy undertaking, requiring selective breeding to domesticate wild creatures, then feeding those creatures and protecting them from predators and keeping them from running away, and inventing yokes and plows and convincing the oxen to wear the yokes and pull the plows. Yet all of this effort and more was easier for early agriculturalists than actually doing the digging themselves. Once again, people were getting ahead by getting something else to do their work for them.

Module 2: What is Energy?

Module 2: Overview

Why take a course on energy? With over $1 trillion spent per year on energy in the US alone, the knowledge you gain from this course may help you in your career and your everyday life. And because we currently rely on a completely unsustainable energy system that must change, your knowledge may help the long-term health of civilization. Plus, believe it or not, the subject really is interesting!

In this module, we’ll go over some of the basics: how we talk about energy, what it is, how much of it we use, and such. Back in the late 1990s, NASA lost a $125 million Mars orbiter because some members of the mission team were figuring out its location using metric units (e.g., meters, centimeters, liters), also called the International System of Units (SI) [10], while others were using English units (e.g., feet, inches, ounces). The different groups didn’t recognize this and convert properly, a very expensive mistake! The situation with energy is actually more confusing than that. So, bear with us, and we’ll try to start off in the right direction.

Goals and Objectives

Module 2 Goals and Objectives

Goals:

- Recognize the natural and human-driven systems and processes that produce energy and affect the environment

- Explain scientific concepts in language non-scientists can understand

- Find reliable sources of information on the internet

By the end of this module, you should be able to:

- Recall that using energy doesn’t make it go away, it is just converted into a less useful form

- Recognize the many units of energy and power

- Show that the amount of energy used by people around the world is much larger than the 100 watts inside most people converted from food

- Recall that around 85% of the energy we use is derived from fossil fuels

- Analyze energy use and production in a country other than the United States

Roadmap

| To Read | Materials on the course website (Module 2) | |
|---|---|---|
| To Do | Module 2 Discussion Post | Due Wednesday |
| To Do | Module 2 Discussion Comments | Due Sunday |
| To Do | Quiz 2 | Due Sunday |

Questions?

If you prefer to use email:

If you have any questions, please email your faculty member through Canvas. We will check daily to respond. If your question is one that is relevant to the entire class, we may respond to the entire class rather than individually.

If you prefer to use the discussion forums:

If you have any questions, please post them to the Help Discussion Forum. We will check that discussion forum daily to respond. While you are there, feel free to post your own responses if you, too, are able to help out a classmate.

Three Examples

Energy is Forever, but Useful Energy Is Not

Physicists have found that in our normal lives, energy is neither created nor destroyed — it is conserved. But as energy is used, it is changed from a concentrated, useful form to a spread-out, less-useful form, eventually becoming useless to us. To learn what Einstein has to say, read the Enrichment on the next page. But first, let's look at three examples.

Want to know more?

If you are worried about Einstein and atomic bombs and want to learn more about it, read the Enrichment called Einstein's Special Relativity Theory E=mc²!

Example 1: Potato Chips

Throw a bag of potato chips on the floor, and stomp on it. Keep stomping until all of the chips are reduced to dust. Then, on a really windy day, go to the top of a hill and throw the dust as high as you can.

There are still calories in that potato-chip dust. If you could somehow re-bag your chip dust, you could eat it and then go about your business, fueled by the energy stored in the potato chips. In the real world, bacteria are going to get that energy, because it would take you much more energy to gather up the potato-chip dust than you could ever get by eating it, even if you wanted to.

Energy itself is a little like your potato chips. Energy doesn’t disappear when you use it to do something you want, but the energy is changed to a less useful form until eventually, it is completely useless to you. If you eat the potato chips, your body will digest them and turn them into fuel that keeps your body going, which in part means generating heat to keep your body temperature at an average of 98.6°F. Some of that heat is then emitted from your body, traveling out in the form of infrared radiation, which is a form of energy. So the energy stored in the chips has been put to use and has changed from chemical energy in the chips to thermal energy that your body emits. And that thermal energy gets dispersed and is not really useful anymore, although it is conserved.

Video: Potato Chip (2:57)

DR. RICHARD ALLEY: These are potato chips-- crisps, in England. The chemicals in here are a concentrated source of energy that my body could store for later, or it could burn now to power me to do things that I think I need to do, like mow the lawn. And this is gasoline. The chemicals in here are a concentrated store of energy that I can use to power my lawnmower, to help me mow the lawn.

So if I were to take my chips, dump them on the driveway, and stomp on them with my big boots, the chemicals, the energy, would still be in there, but it just wouldn't be as useful to me. Especially if I took my lawnmower—

And I spread them all over everything.

So the stuff is there, the energy is there, but I've made it no longer useful. In exactly the same way, there's now less gasoline in the mower than there used to be. I have burned it. The stuff has gone into water vapor and CO2 in the air. And the energy, a little bit of it, made noise to annoy the neighbors. But eventually, that just heated up the surroundings. And a lot of it went right into heat, so if you touched the wrong piece on this mower, you would burn yourself now.

So what we see in the real world, normal times, stuff and energy are not lost or made, but they're changed from one type to another. And with energy, we tend to change it from useful types to things that are not as useful, and eventually to heat that spreads out and does no good for us. A lot of the history of humanity has been finding concentrated sources of energy and trying to get useful things out of it as we change it into useless heat that spreads around the world. That may give you an idea that we'll come back to later.

If we were using sun or wind or hydropower to run an electric mower, I'd be making a lot less noise, I'd be making a lot less heat. I'd be using the energy I bought for what I wanted, rather than wasting it.

Example 2: Gas in the Car

The chemical energy in a full gas tank in your car is enough for you to drive 400 miles or so. As you burn the gas, the muffler gets hot, and you warm the air and the tires and the road a little—you are turning the gasoline’s energy into heat. You could put a little thermoelectric device in your tailpipe and generate enough electricity to run your music player, or you could blow some of the hot air through the heater to keep you warm on a cold winter day—the heat can still be useful—but you’re using lots more energy to move the car than you’ll ever get back. After you stop the car and the muffler cools off, the heat energy has been spread out into the air and is being radiated away to space — if you had a thermal camera, you could take a picture of it. A satellite can even see the heat going to space, and make a map of how warm or cold the Earth is, so there is still some use in that energy...but not much. And eventually, the energy will spread uniformly across the universe and be completely useless.

Example 3: Bungee Jumping

While Dr. Alley was in New Zealand filming footage for Earth: The Operators' Manual, he took the opportunity to test another use of energy (his energy) by bungee jumping. He gained potential energy (the ability to fall down fast) by climbing up to the top of the jump. That is turned into kinetic energy (motion, the ability to collide with things) by jumping off. After the thrilling few seconds of the jump, all that energy ends up heating the surroundings a bit and is no longer useful.

The key piece of knowledge to take away from these three examples of how energy is changed from a useful to a non-useful form is: if you want to keep doing things, you need new sources of concentrated energy. That’s what this course is about!

Powering the Big Units


Short Version: Energy is the ability to do something, and is measured in joules or calories or kilowatt-hours or in other ways. Power is how fast you do it, and is measured in watts or horsepower or in other ways. Your 2000-calories-per-day diet is the same as a single 100-watt light bulb burning all day. Let's take a closer look!

Friendlier but Longer Version: Suppose that you are an employee at a Pennsylvania power company. Your customers buy a lot of kilowatt-hours of electricity to run their microwave ovens and music players, but your power plant needs to be turned off for maintenance. Your boss tells you to buy some power from a hydroelectric company in Quebec but they don't have any kilowatt-hours for sale -- all they offer are megajoules. What do you do?

Unit Conversion


Mistakes in unit conversions really can cost an immense amount of money. We are NOT going to turn this course into a worksheet on unit conversions, and we won’t require you to memorize unit conversions, but we will explain some of the key points next—enough to let you keep your hypothetical job with the power company...and maybe a real job someday.

Words such as energy, work, and power are tossed around in casual conversation but have very careful definitions in engineering and science, and for the people who buy and sell energy. You can think of energy as the ability to do something. Wind up an old-style alarm clock, and the spring has stored some mechanical energy, which is available to move the clock hands and make the ticking sound. Water above a dam has gravitational potential energy and can flow down under gravity, driving a generator to make electricity, or floating your boat to the sea. The chemical bonds in the gasoline in your car have chemical energy, and if you make the gas hot enough with a spark in your engine in the presence of oxygen, the bonds will change and make the car go.

When you are “using” the energy, it is doing work. Pushing you across the country, or moving the clock hands, or moving your boat down the river, require overcoming friction and wind resistance and such. So does using a plow to break the soil and turn it over so you can grow food and lots of other things. How fast you use energy, or how fast you do work, is power. You do some amount of work in climbing the stairs to the next floor, but doing it in 10 seconds requires more power for a shorter time than doing it in 10 hours.
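To put rough numbers on the stair-climbing comparison, here is a minimal sketch. The 70 kg body mass and 3 m floor height are illustrative assumptions that are not from the text, and the function names are made up for this example. It uses the standard relation that the work done against gravity is mass × g × height, and that average power is energy divided by time.

```python
# Rough sketch: the same work done quickly or slowly needs very different power.
G = 9.81  # gravitational acceleration near Earth's surface, m/s^2


def climb_energy_joules(mass_kg, height_m):
    """Work done against gravity when lifting a mass through a height."""
    return mass_kg * G * height_m


def average_power_watts(energy_j, seconds):
    """Average power is energy divided by the time taken."""
    return energy_j / seconds


# Assumed values for illustration only: a 70 kg person climbing one 3 m floor.
energy = climb_energy_joules(70.0, 3.0)          # about 2,060 joules either way
fast = average_power_watts(energy, 10)           # climbing in 10 seconds
slow = average_power_watts(energy, 10 * 3600)    # climbing in 10 hours

print(f"Energy for the climb: {energy:.0f} J")
print(f"Power over 10 seconds: {fast:.0f} W")
print(f"Power over 10 hours: {slow:.3f} W")
```

The energy is identical in both cases; only the rate changes, by a factor of 3,600 (the number of 10-second intervals in 10 hours).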

In terms of units, and how you’ll answer your boss about the Quebec contract, energy can be measured in calories. A Big Mac has just over 500 calories, so 4 sandwiches provide just over the 2000 calories that a typical person would eat in a day. Most of the world measures energy in joules rather than calories, and those 4 Big Macs are just over 8 million joules, which is the same as 8 megajoules.

If you were on a starvation diet, you might make those 4 Big Macs last a month—a low-power diet! But if you eat 2000 calories per day and “burn” them inside you to make you go—normal power for a person—that is the same as 8 million joules per day, or roughly 100 joules per second, which is called 100 watts. Amazing as it may seem, all your skills and brilliance and good looks and charm use energy at the same rate as one old incandescent light bulb! Your energy use—your personal power—is a bit higher than 100 watts when you’re up and doing things, and lower when you’re sleeping, but averages out to 100 watts. We don’t usually define a “people power” unit, but 750 watts, or 7.5 people, is a usual definition of 1 horsepower.
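The arithmetic behind "2000 calories per day is about 100 watts" can be checked with a short sketch. The conversion factors below (4,184 joules per food calorie and roughly 746 watts per horsepower) are standard values rather than figures from the text, and the function name is hypothetical.

```python
# Sketch: convert a daily food intake into an average power in watts.
JOULES_PER_FOOD_CALORIE = 4184   # 1 food calorie (kilocalorie) in joules
SECONDS_PER_DAY = 24 * 60 * 60   # 86,400 seconds
WATTS_PER_HORSEPOWER = 746       # mechanical horsepower, rounded


def diet_power_watts(food_calories_per_day):
    """Average power of a person 'burning' this many food calories per day."""
    joules_per_day = food_calories_per_day * JOULES_PER_FOOD_CALORIE
    return joules_per_day / SECONDS_PER_DAY


daily_joules = 2000 * JOULES_PER_FOOD_CALORIE        # a bit over 8 million joules
person_watts = diet_power_watts(2000)                # roughly 97 W
people_per_horsepower = WATTS_PER_HORSEPOWER / person_watts

print(f"2000 food calories = {daily_joules / 1e6:.2f} MJ")
print(f"Average personal power: {person_watts:.0f} W")
print(f"People per horsepower: {people_per_horsepower:.1f}")
```

With unrounded factors the result is slightly under 100 watts and slightly over 7.5 people per horsepower, which is why the text rounds to 100 watts and 750 watts.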

So, energy can be measured in calories or joules and is the ability to do something, while power in calories per day or joules per second is how rapidly you do it, and a watt is a shorthand way of saying joules per second. But, suppose you have 10 old-style light bulbs turned on all the time, so you’re using 1000 watts or 1 kilowatt. Each hour, the computer at the power company says you have spent another hour using 1 kilowatt, so they add the price of 1 kilowatt-hour of electricity to your bill. At the end of 24 hours, you are billed for 24 kilowatt-hours. Kilowatt-hours, like calories and joules, provide a way to measure energy. The Quebec company uses joules, you use kilowatt hours, and you’ll keep your job because you know (maybe with some help from the internet) that 1 kilowatt-hour is 3.6 million joules, so those Québécois are not going to beat you in a deal!
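The Quebec deal comes down to one conversion factor, which the following sketch works through. The function names are made up for illustration; the only facts used are that a kilowatt is 1,000 joules per second and an hour is 3,600 seconds.

```python
# Sketch: convert between kilowatt-hours and megajoules.
JOULES_PER_KWH = 1000 * 3600  # 1,000 J/s for 3,600 s = 3.6 million joules


def kwh_to_megajoules(kwh):
    """Energy in kilowatt-hours expressed in megajoules."""
    return kwh * JOULES_PER_KWH / 1e6


def megajoules_to_kwh(megajoules):
    """Energy in megajoules expressed in kilowatt-hours."""
    return megajoules * 1e6 / JOULES_PER_KWH


# Ten 100-watt bulbs left on for 24 hours, as in the example above.
bulbs_kwh_per_day = 10 * 100 / 1000 * 24

print(f"Daily use: {bulbs_kwh_per_day:.0f} kWh")
print(f"Same energy: {kwh_to_megajoules(bulbs_kwh_per_day):.1f} MJ")
```

So a day of running the ten bulbs is 24 kilowatt-hours, or 86.4 megajoules, and an offer priced per megajoule can be compared directly with one priced per kilowatt-hour.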

Note:

In case you feel a sudden urge to actually do calculations with these, you might recall that the calorie you eat is sometimes also written as a capital-C Calorie, and is the energy to raise 1 kilogram of water by 1 degree C, distinguished from a calorie that is written with a lower-case c and is the energy to raise 1 gram of water by 1 degree C. So when we read about food calories, we are really talking about kilocalories. This is another reason why most of the world uses joules.

You should also know that there are many more ways to measure these things, which you do not need to learn now, but which you should know exist. People often use British Thermal Units, or BTU's, for energy, and BTU's per hour for power, but occasionally they get sloppy and say “BTU” when they mean “BTU per hour”. Or, they get lazy and say that one quadrillion BTU's is a “quad” and just quit talking about BTU's. People who sell natural gas have figured out how many BTU's, or joules, or calories, can be obtained by burning a particular amount of typical natural gas, and how much space that gas occupies at standard temperature and pressure, so they may measure energy in cubic feet of gas, or cubic meters of gas, even though they know that this depends on temperature and pressure and the particular gas. Barrels of oil can be used the same way. And, it goes on from here—refrigeration workers in the US talk about power in terms of “tons of cooling” linked to the power needed to freeze a ton of water in a day (one ton of cooling is approximately 3510 watts).
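For readers curious how these less familiar units relate to joules and watts, here is a small sketch. The factors are standard reference values rather than figures from the text: about 1,055.06 joules per BTU, and 12,000 BTU per hour as the conventional US definition of one ton of cooling. The function name is hypothetical.

```python
# Sketch: relate BTUs, quads, and tons of cooling to joules and watts.
JOULES_PER_BTU = 1055.06        # approximate; assumed standard value
BTU_PER_HOUR_PER_TON = 12_000   # conventional US "ton of cooling"; assumed
SECONDS_PER_HOUR = 3600


def btu_per_hour_to_watts(btu_per_hour):
    """Power in BTU per hour expressed in watts (joules per second)."""
    return btu_per_hour * JOULES_PER_BTU / SECONDS_PER_HOUR


ton_of_cooling_watts = btu_per_hour_to_watts(BTU_PER_HOUR_PER_TON)
quad_joules = 1e15 * JOULES_PER_BTU  # one quadrillion BTU

print(f"One ton of cooling: about {ton_of_cooling_watts:.0f} W")
print(f"One quad: about {quad_joules:.3e} J")
```

The result lands close to the approximate figure quoted above for a ton of cooling, and it shows why mixing up "BTU" and "BTU per hour" matters: one is an energy, the other a power.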

Now, we hope it is obvious that unless you are planning to work in cooling, or you have rather strange friends you would like to impress, it is probably not a good idea to clog your brain with the conversion factor between watts and tons of cooling! But you should know that a few fundamental ideas such as energy and power have been made to look very complicated by having a lot of names and units. And you also should know that many jobs you might be hired for will require you to figure out: 1) how things traditionally have been measured; and 2) how to convert to what other people are doing in their jobs. And if you can’t do that reliably, there is a high chance that you will be fired!

Energy and the US Economy


Short Version: Energy is 10% of the US economy—over $1 trillion per year, or $4000 per year for each person, with roughly $1000 of that leaving the country, to supply the average US resident with more than 100 times more energy than they use internally. About 85% of the energy used is from fossil fuels, which are being burned much faster than nature makes more.

Friendlier but Longer Version: During the course, we’ll take a look at the big sources of energy, the big issues in energy use, the “why you might care” and “what it means to you” questions. For now, a few more-or-less connected numbers and graphs may be useful. This course is not about having you memorize numbers, but you should be aware of magnitudes—which things are really big and matter a lot, versus those that are small and can be safely ignored (unless you’re the wonk on this topic and need to know everything!).

As you just saw, the food you burn inside powers you at the same rate, on average, as a bright old-style light bulb (100 watts) that is turned on. But, the food may have been cooked, after it was shipped to you in a refrigerated truck after it was harvested by a corn-picker or combine from a field that first was plowed by a tractor. The plowing and harvesting and trucking and refrigerating and cooking all required energy. You probably are reading this on an electric-powered computer, in a room that is heated in winter and cooled in summer using energy. If there is glass on the computer screen, it started out as sand, which was melted using energy. Aluminum or iron or other metals were smelted from ores, using energy.

Video: Energy Use (1:09)

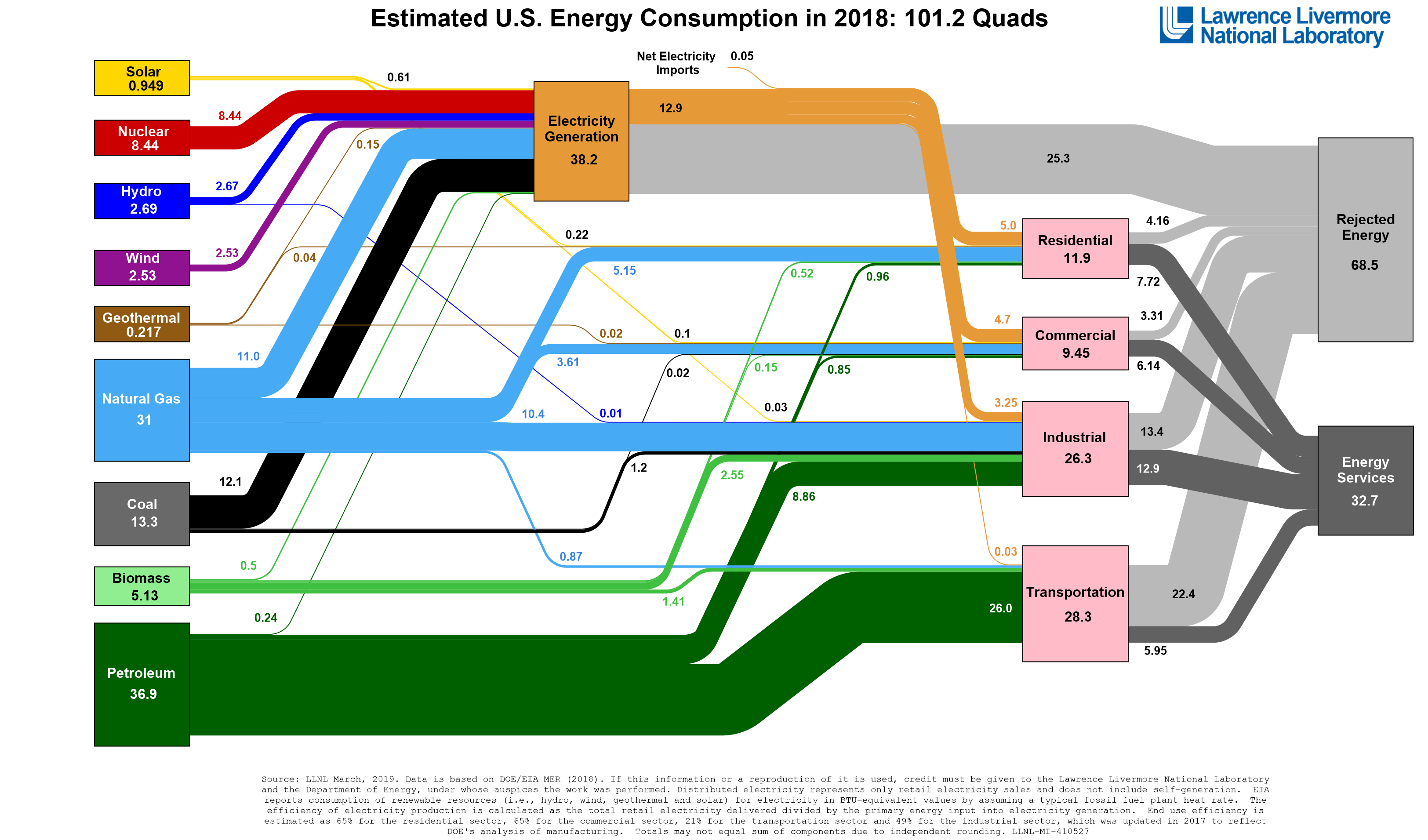

PRESENTER: This is US energy use in the year 2010 from the Energy Information Agency of the United States government.

And what you'll notice is that renewables over here make up about 8% of the mix, similar to nuclear-- used for electric power. And then you have all of these different fossil fuels. Coal-- 21%, dropping a little bit. Natural gas-- 26%, and rising a little bit. And the biggest thing has been a reliance on petroleum, what's usually called oil. If you add these together, it's 84%.

For the world, for most other countries, this dominance by fossil fuels also happens in those other countries and for the world as a whole. We really are fossil fueled.

You get the idea. And, if you add up all that energy, there is a lot of it. The total energy use in the US economy, divided by the number of people, comes to a bit over 10,000 watts per person. All together, everything that is going on around you to take care of you involves more than 100 times the energy use inside of you. You don’t really have more than 100 incandescent bulbs burning all the time to take care of you, but all the plowing and harvesting and trucking and refrigerating and cooking and smelting and melting and heating and cooling and … that do take care of you are using energy at the same rate as more than 100 old light bulbs, or 100 of you.
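The "100 times" comparison is simple division, sketched below with the rounded figures from the text (10,000 watts of external use and 100 watts of internal use per person). The conversion to kilowatt-hours per year is an added illustration that assumes a 365-day year.

```python
# Sketch: compare external energy use per person with internal (food) power.
PERSON_WATTS = 100             # internal power from food, rounded as in the text
US_WATTS_PER_PERSON = 10_000   # "a bit over 10,000 watts", rounded down
HOURS_PER_YEAR = 24 * 365      # assumes a 365-day year

external_to_internal = US_WATTS_PER_PERSON / PERSON_WATTS
kwh_per_person_per_year = US_WATTS_PER_PERSON / 1000 * HOURS_PER_YEAR

print(f"External use is about {external_to_internal:.0f} times internal use")
print(f"That is about {kwh_per_person_per_year:,.0f} kWh per person per year")
```

Because the text says "a bit over" 10,000 watts, both results are lower bounds under these rounded inputs.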

You might imagine that you have 100 energy “serfs” doing your bidding… but if you actually had 100 serfs to do your bidding, they would spend most of their effort taking care of themselves and staying alive rather than doing for you. Plus, there is no way that those serfs could actually pick up your car and run down the highway at 65 miles per hour (100 km per hour)!

This much energy doesn’t come cheaply, though. Energy costs are roughly one-tenth of the entire US economy. That comes to about $1 trillion per year recently, or about $4000 per person per year, with roughly $1000 of that spent outside the US to pay for energy imports. (These numbers bounce around some from year to year; you can get updates at the US Energy Information Administration [11].) So, each year, the average US resident is sending ~$1000 to people outside the US, primarily to pay for gasoline. Those people overseas may use those dollars to buy US-made products, or to visit the US, or to buy US companies, or to buy camels or classic paintings, or to buy bullets, or in other ways; once the money is sent over the border, it is theirs….

US Energy Use


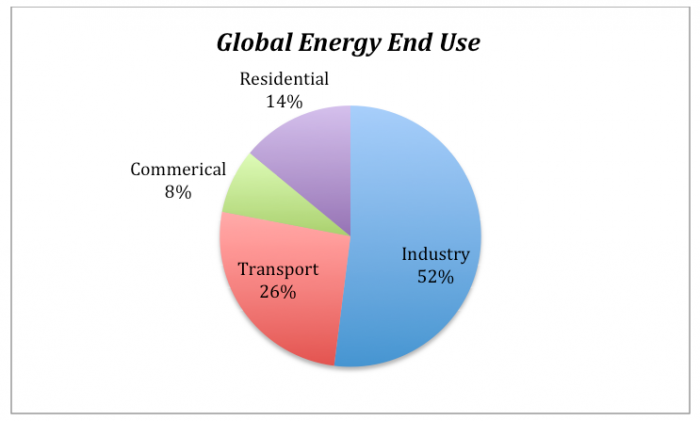

Energy use in the US is dominated by fossil fuels: oil (or more formally, petroleum), gas (or more formally, natural gas), and coal (which is generally just called coal). Recently, fossil fuels have been totaling about 85% of energy sales in the US (and more-or-less 85% worldwide), with the rest of US use split more-or-less equally between nuclear and renewables. (In 2010, the US Energy Information Administration gave US energy supply as Oil 37%; Gas 26%; Coal 21%; Nuclear 8%; Renewables 8%. This was used to move us around (transportation 28%), to build things (industrial use 20%), to heat and cool houses (residential 11%) and to power our plugged-in gizmos (electricity 40%).)
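As a quick check on these percentages, the following Python sketch adds up the 2010 figures quoted in the paragraph above. The dictionary and variable names are illustrative choices; the numbers are the rounded values given in the text.

```python
# 2010 US energy supply by source, in percent (as quoted in the text).
us_supply_2010 = {
    "oil": 37,
    "gas": 26,
    "coal": 21,
    "nuclear": 8,
    "renewables": 8,
}

# 2010 US energy use by sector, in percent (as quoted in the text).
us_use_2010 = {
    "transportation": 28,
    "industrial": 20,
    "residential": 11,
    "electricity": 40,
}

FOSSIL_FUELS = {"oil", "gas", "coal"}

fossil_share = sum(pct for source, pct in us_supply_2010.items()
                   if source in FOSSIL_FUELS)
supply_total = sum(us_supply_2010.values())
use_total = sum(us_use_2010.values())

print(f"Fossil fuel share: {fossil_share}%")
print(f"Supply total: {supply_total}%")
print(f"Use total: {use_total}% (rounded inputs need not sum to exactly 100)")
```

The fossil fuel share comes to 84%, which is the "about 85%" cited above. The sector percentages add to 99% rather than 100% because each quoted value is rounded.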

Video: U.S. Energy Supply (0:52)

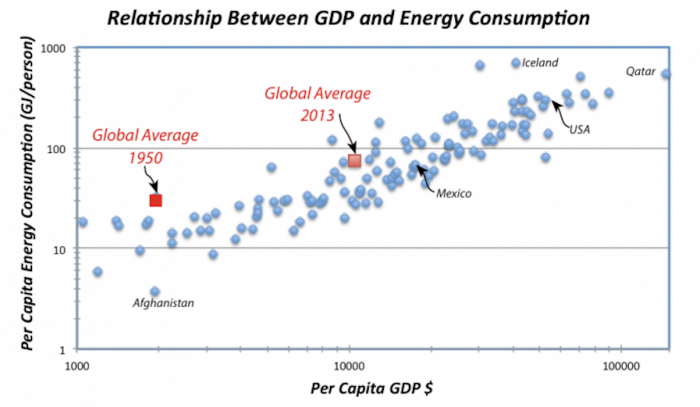

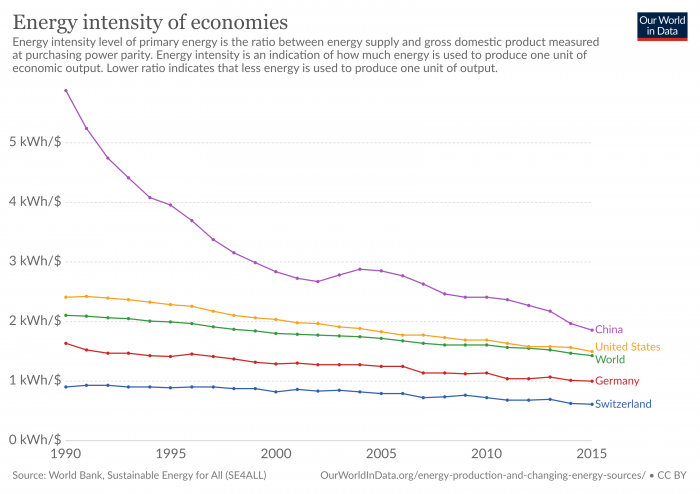

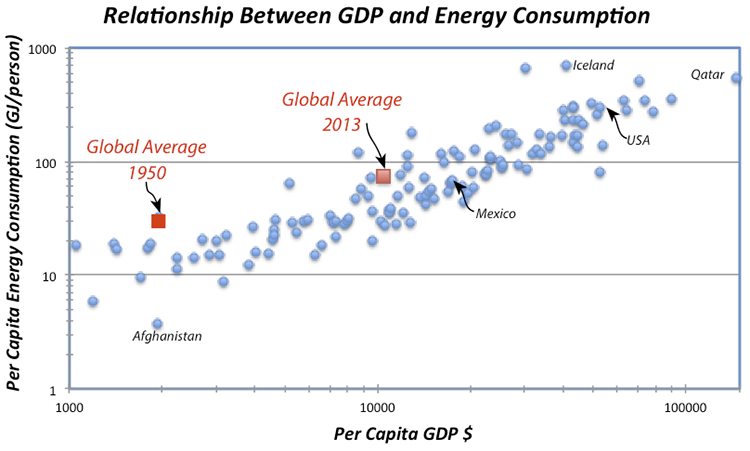

PRESENTER: These are a couple of plots showing some information about human use of energy and economy. Use of energy per person per year is on the bottom-- zero, not using any over to using a huge amount. And this amount is about 100 times more energy used outside the person than inside.

And what you'll notice is this is economic activity-- how big the economy is, how many dollars per person per year. And poor people don't use much energy, and rich people use a lot of energy.

Now, you might first think that that means that rich people are just wasteful. But what you see above is how much energy is needed to generate $1 of economic activity, and there just isn't much relationship there. So it's not that rich people are wasting more energy. They get as much activity out of a barrel of oil as poor people do. They just generate way more economy. And so you use more energy when you're rich, basically.

Source: The figure is modified by Richard Alley from Figure 1.3, US Energy Information Administration, Annual Energy Review 2010 [12] (http://www.eia.gov/totalenergy/data/annual/index.cfm#summary).

We’ll revisit these issues later. US usage per person is a little smaller than in some countries, but (much) larger than in many others. Per person, the world averages roughly 1/4 of US use. Most of the world's economy is dominantly fossil-fueled, with people often getting about 85% of their energy from fossil fuels, as in the US, and energy is often about 10% of the economy.

Activate Your Learning

In the previous section, we learned that the average person in the US uses energy at a rate of ~10,000 watts while getting only about 100 watts from the food they eat. If average world energy use is about 1/4 of that in the US, and assuming all people get about the same amount of energy from the food they eat, do people worldwide get as much energy from eating food as they use in their daily lives?

Click for answer.

For now, though, it should be evident that if we spend 10% of our money on energy, it impacts everything—jobs and security and environment and more. As we saw in last week's Discussion, there are great options for making money and saving money by doing things better in the energy business. But, over the last few decades, we actually have doubled the amount of economic activity squeezed out of each barrel of oil or ton of coal—bright people have been working on this, and making or saving much more money might take a lot of effort or some new inventions.

Perhaps most importantly, the current system is grossly unsustainable. As we will see in upcoming content, the store of fossil fuels in the Earth is limited, and we are removing them much more rapidly than nature makes new ones. With essentially everything we do relying on energy use and 85% of the energy system relying on unsustainable fossil fuels, a lot of things will need to change.


Earth: The Operators' Manual

Video: China: In with the New - A 4-minute clip on China's movement toward alternative energy use.

Narrator: If the US military is the largest user of energy in America, China is now the largest consumer on the planet. At 1.3 billion, China has a population about 4 times larger than the U.S. So average per person use and CO2 emissions remain about one quarter those of Americans. But, like the U.S. military, China is moving ahead, full speed, on multiple, different sustainable energy options. And it pretty much has to-- Cities are congested. The air is polluted. Continued rapid growth using old technologies seems unsustainable.

Photographer: I count to three...

Narrator: This meeting in Beijing brought together mayors from all over China, executives from state-owned enterprises, and international representatives. The organizer was a U.S.-Chinese NGO, headed by Peggy Liu.

Peggy Liu: Over 20 years, we're going to have 350 million people moving into cities in China, and we're going to be building 50,000 new skyscrapers, the equivalent of ten Manhattans, 170 new mass transit systems-- I mean it's just an incredible, incredible scale.

Narrator: This massive, rapid growth comes with a high environmental cost.

Martin Schoenbauer: They're recognizing that they're spending as much as six percent of their gross domestic product on environmental issues.

Narrator: In 2009 China committed 35 billion dollars, almost twice as much as the U.S., to energy research and incentives for wind, solar, and other clean energy technologies. It's attracted an American company to set up the world's most advanced solar power research plant. China now makes more solar panels than any other nation. But it's also promoting low-tech, low-cost solutions. Solar water heaters are seen on modest village homes. Some cities have them on almost every roof.

Peggy Liu: China is throwing spaghetti on the wall right now, in terms of over 27 different cities doing L.E.D. street lighting, or over 20, 30 different cities doing electric vehicles.

Narrator: But visit any city and you can see that the coal used to generate more than 70% of China's electricity has serious consequences with visible pollution and adverse health effects. China uses more coal than any other nation on Earth. But it's also trying to find ways to burn coal more cleanly.

Peggy Liu: In three years, 2006 to 2009, while China was building one new coal-fired power plant a week, it also shut down inefficient coal plants. So, you know, it's out with the old, and in with the new. And they're really trying hard to invent new models.

Narrator: This pilot plant, designed for Carbon Capture and Sequestration, was rushed to completion in time for Shanghai's 2010 World Expo. It absorbs and sells carbon dioxide, and will soon scale up to capture three million tons a year that could be pumped back into the ground, keeping it out of the air.

Schoenbauer: Here in China they are bringing many plants on line in a much shorter time span than it takes us in the U.S.

Peggy Liu: China is right now the factory of the world. What we'd like to do is turn it into the clean tech laboratory of the world.

Narrator: If nations choose to pay the price, burning coal with carbon capture can offer the world a temporary bridge until renewables come to scale.

Peggy Liu: China is going to come up with clean energy solutions that are cost effective and can be deployed at large scale. In other words, solutions that everybody around the world wants.

Discussion Assignment

Reminder!

After completing your Discussion Assignment, don't forget to log into Canvas and take the Module 2 Quiz. If you didn't answer the Learning Checkpoint questions, take a few minutes to complete them now. They will help you study for the quiz, and you may even see a few of those questions on the quiz!

Discussion Question

Objective:

Compare energy consumption in the U.S. to that in other countries. Find the total per capita energy use for a country of your choosing. Is it more or less than that in the US? Is it growing at the same rate? Why might this be?

Goals:

- Find reliable sources of information on the internet

- Communicate scientific ideas in language non-scientists can understand

Description:

Throughout this module, most of the facts and figures about energy have been for the United States. Of course, the entire world uses energy in varying capacities. Take a moment to take a look at what is happening outside the US. If you live in another country, or if your family is from another country, what is the energy situation there and how is it different from the US? Perhaps you have visited another country or heard something interesting about energy production or consumption elsewhere in the world.

As a starting point, go to the U.S. Energy Information Administration website [13] and look at per capita energy consumption in the US vs. your country of choice between 1980 and 2015. Use the DATA pull-down menu to select "Primary Energy Consumption" then click on the Time Series icon below the map. Next, click on the Select Data icon and in the window that pops up, select Energy Intensity in item 2 and Population in item 4 and then click on View Data at the bottom of this window. Then click on the Select Countries icon and another window will pop up -- here, click on All Countries and you will see a list of all the countries, then click View at the bottom of this window. Scroll down below the graph and you will see a list of all the countries -- if you click on the graph icon to the right of a country, the data will appear on the graph; click on another country and its data will also appear.

If the above link does not work, try an alternative source, IEA Energy Atlas [14]. Make sure that you select TPES/Population (which is tons of oil equivalent per person), then scroll down to the very bottom of the page, where you can make a graph that compares your country with the United States.

How does the per capita use in 2015 compare between the US and your country? How does the change in use from 1980 to 2015 compare? Given what you know about the country, what factors do you think might contribute to differences in energy use?
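If it helps to organize the numbers you read off the graph, the two comparisons asked for here (current per capita use relative to the US, and the change since 1980) can be sketched in a few lines of Python. The helper names are hypothetical, and the values below are invented placeholders rather than real statistics; substitute the per capita figures from your data source, keeping the same units for both countries.

```python
def percent_change(start_value, end_value):
    """Percent change from start_value to end_value."""
    return (end_value - start_value) / start_value * 100.0


def ratio_to_us(country_value, us_value):
    """Country's per capita use expressed as a fraction of the US value."""
    return country_value / us_value


# PLACEHOLDER per capita values, made up for illustration only.
# Replace with the numbers you find (same units for both countries).
us_per_capita = {1980: 300.0, 2015: 270.0}
other_per_capita = {1980: 40.0, 2015: 60.0}

us_change = percent_change(us_per_capita[1980], us_per_capita[2015])
other_change = percent_change(other_per_capita[1980], other_per_capita[2015])
share_of_us = ratio_to_us(other_per_capita[2015], us_per_capita[2015])

print(f"US change 1980-2015: {us_change:.1f}%")
print(f"Other country change 1980-2015: {other_change:.1f}%")
print(f"Other country 2015 use as a fraction of US use: {share_of_us:.2f}")
```

Reporting both the ratio and the percent change, with units, covers the numerical parts of the post.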

Next, find one fact about energy consumption or production in the country you have chosen that you think is especially interesting, and tell us why you think your country has this particular feature. For example, oil use may be increasing as industry grows in a developing nation. Or wind energy may be growing rapidly because you have a long and windy coastline. Maybe you live near a volcano and get all your power from geothermal energy.

Instructions

Your discussion post should be 150-200 words and should include the name of the country you have chosen to research as well as numerical data comparing energy consumption in the US to that in your country of choice. Make sure the questions posed above are answered completely. Your original post must be submitted by Wednesday. In addition, you are required to comment on one of your peers' posts by Sunday. You can comment on as many posts as you like, but please make your first comment on a post that does not have any comments yet. Once you have an idea of what you want your post to be, go to the course discussion for your campus and create a new post.

Scoring Information and Rubric

The discussion post is worth a total of 20 points. The comment is worth an additional 5 points.

| Description | Possible Points |
|---|---|
| states name of country and includes numerical data (with units!) for energy consumption in US and chosen country | 5 |
| compares current or recent usage (2010 is close enough) and change in usage (1980-2010) for US and country of choice | 5 |
| identifies at least one reason why energy use in chosen country might differ from that in US | 5 |
| includes one interesting fact about energy use or production that is particular to country of choice | 5 |
| well-reasoned comment on someone else's post | 5 |

Summary and Final Tasks

Module Summary

We love the good things we get from using energy, and we use a lot of it. When we “use” energy, it doesn’t disappear, but it is changed to a form that is less useful, and eventually, it becomes totally useless to us. So, we put a lot of effort into finding sources of concentrated energy that we can use. How rapidly we use energy is called power. You could use most of the energy in your food to sprint down a racetrack, generate high power, and then rest up afterward with low power, or you could use the same amount of energy in the same amount of time by walking steadily with intermediate power output. We measure energy in joules or calories or kilowatt-hours, and power in watts or calories per day or in other ways. Food burning inside us averages about 100 watts, but in the US the energy use outside is more than 100 times larger, and almost everyone almost everywhere uses far more energy outside than inside; the global average is roughly 25 times more energy use outside than inside. And, for most of the world, the energy used is primarily fossil fuels: 85% in the US, and similar for most places. Typically, this is about 10% of the economy. So, we spend a lot of money to get good things from energy.
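To tie the units in this summary together, here is a small Python sketch that converts 100 watts sustained for one day into joules, food calories (kilocalories), and kilowatt-hours, and then reproduces the "roughly 25 times" world figure from the rounded numbers used in this module. The conversion factors are standard (1 kcal = 4184 J, 1 kWh = 3.6 million J); the function and variable names are illustrative only.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds
JOULES_PER_KCAL = 4184.0        # one food Calorie (kilocalorie)
JOULES_PER_KWH = 3.6e6          # one kilowatt-hour

BODY_POWER_W = 100.0              # from the text
US_POWER_PER_PERSON_W = 10_000.0  # from the text, rounded
WORLD_FRACTION_OF_US = 0.25       # "roughly 1/4 of US use"


def energy_per_day_joules(power_w):
    """Energy (joules) used in one day at a steady power (watts)."""
    return power_w * SECONDS_PER_DAY


body_joules = energy_per_day_joules(BODY_POWER_W)
body_kcal = body_joules / JOULES_PER_KCAL
body_kwh = body_joules / JOULES_PER_KWH

world_power_w = US_POWER_PER_PERSON_W * WORLD_FRACTION_OF_US
world_multiple = world_power_w / BODY_POWER_W

print(f"100 W for one day = {body_joules:.0f} J")
print(f"                  = {body_kcal:.0f} kcal")
print(f"                  = {body_kwh:.1f} kWh")
print(f"World average outside/inside ratio: {world_multiple:.0f}")
```

The result of about 2,065 kcal per day is close to a typical daily food intake, which is a useful sanity check on the 100-watt figure.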

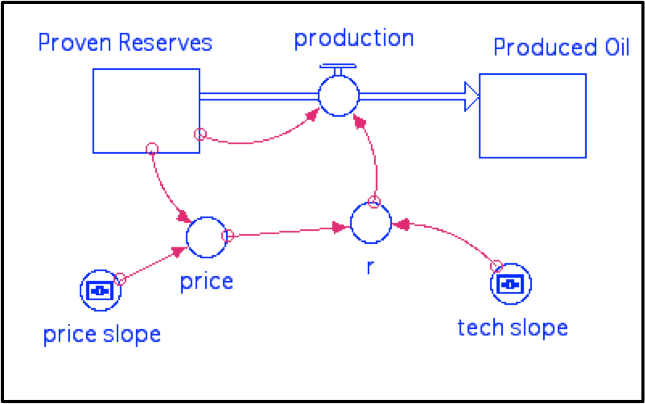

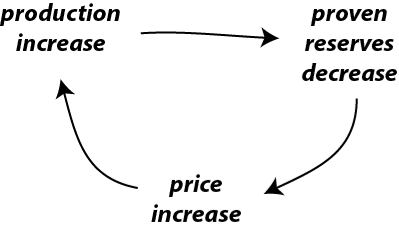

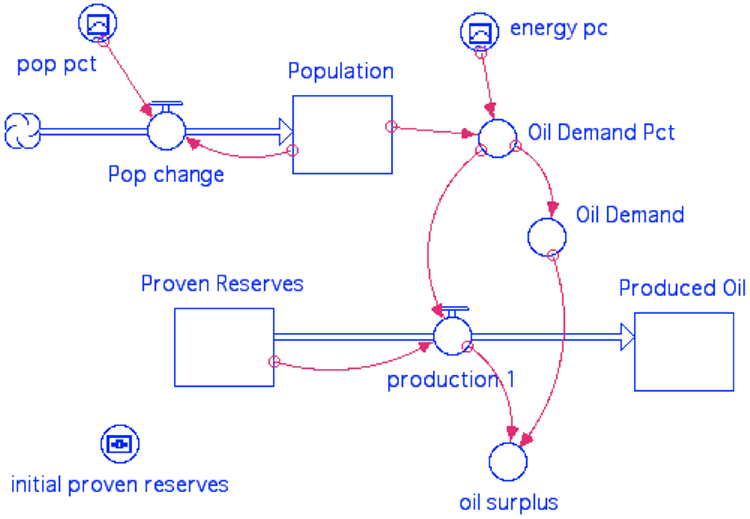

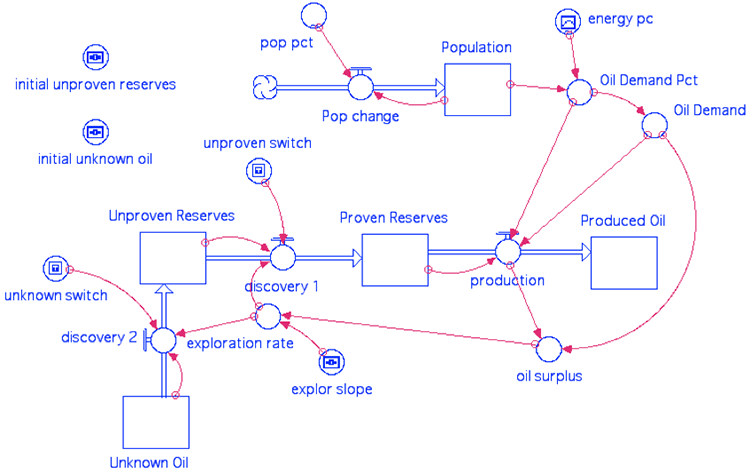

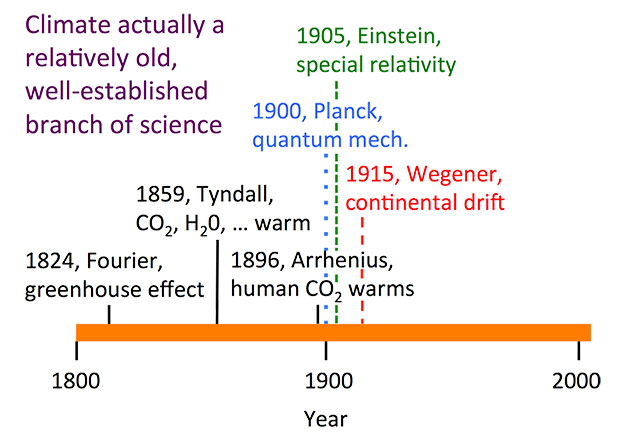

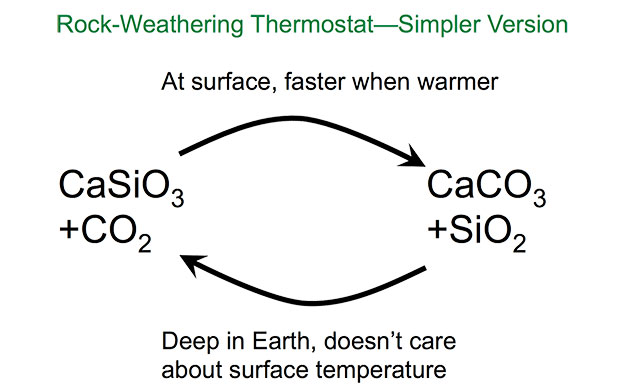

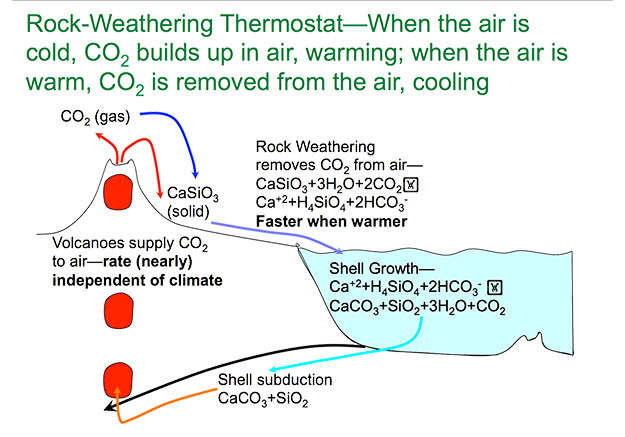

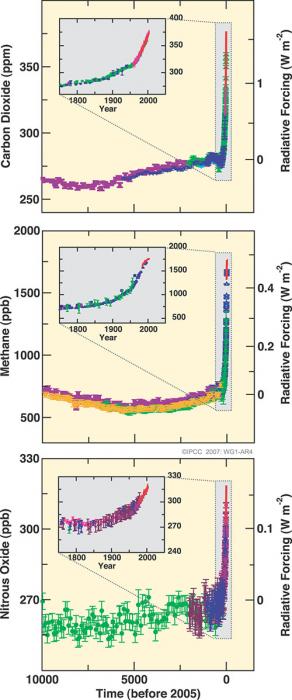

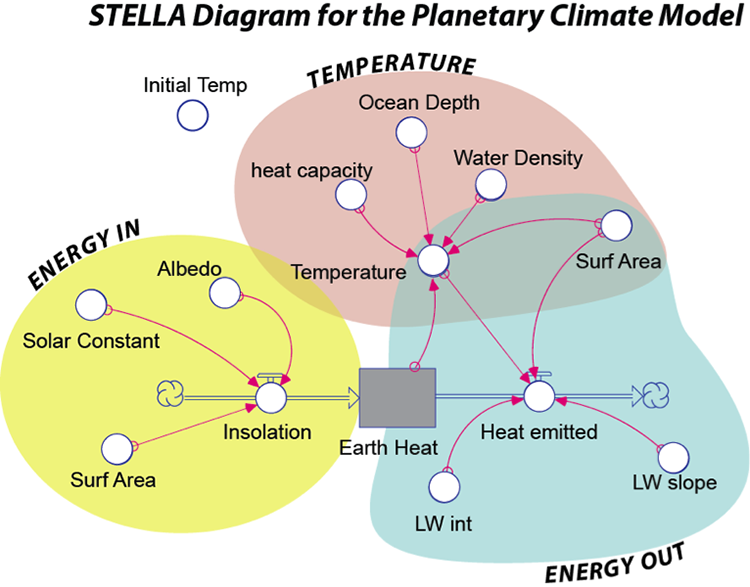

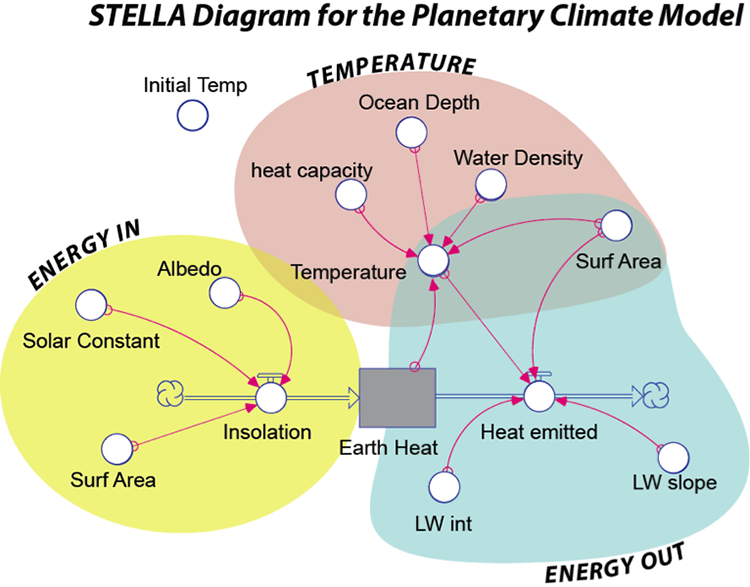

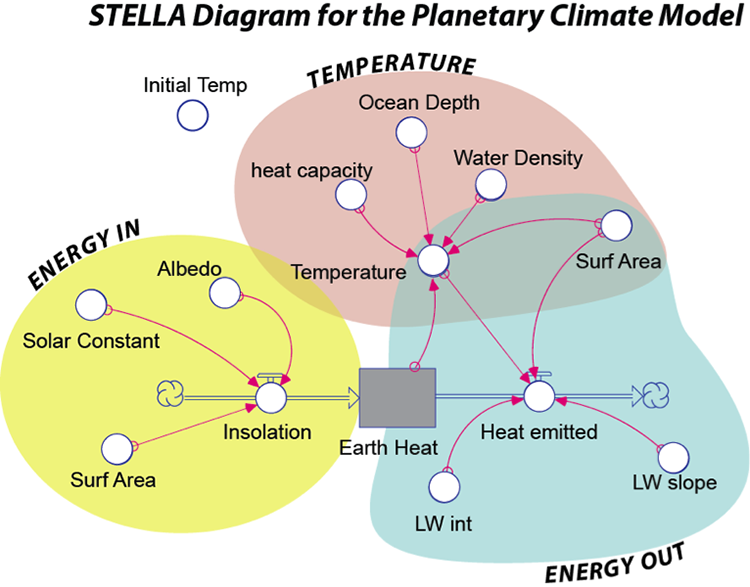

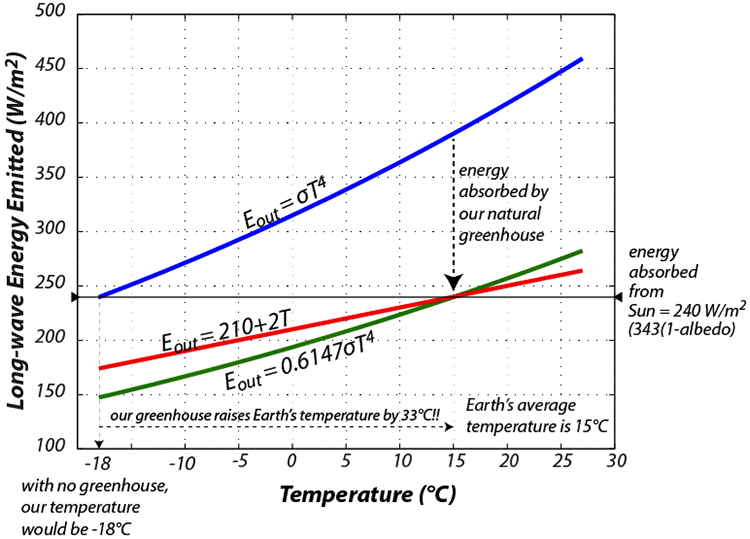

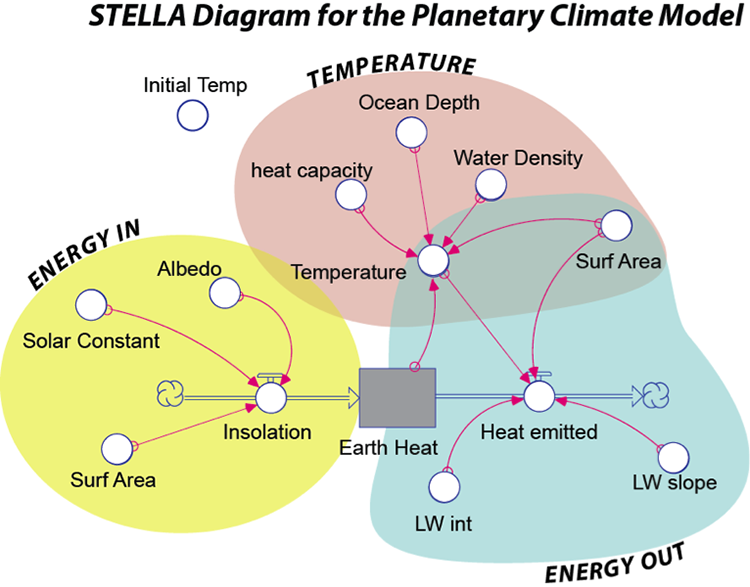

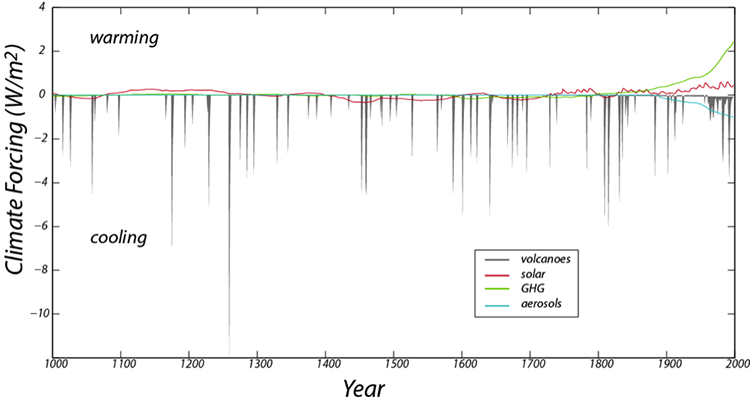

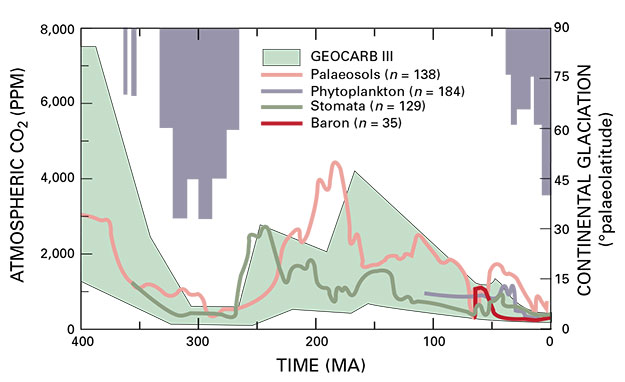

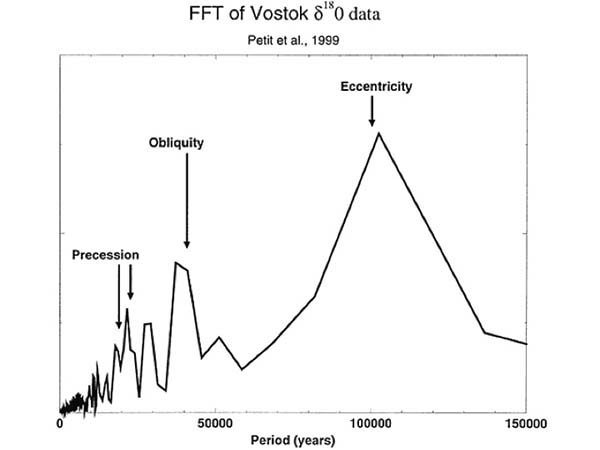

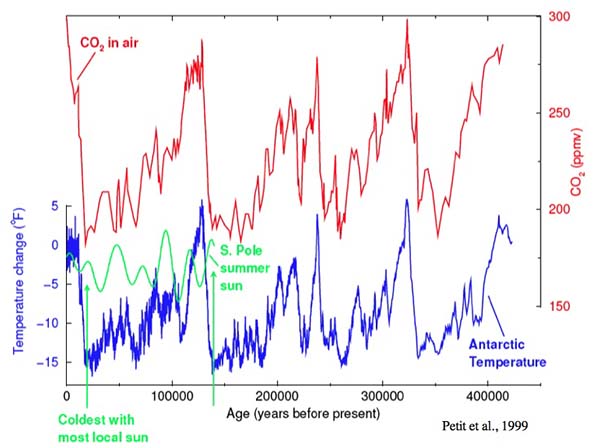

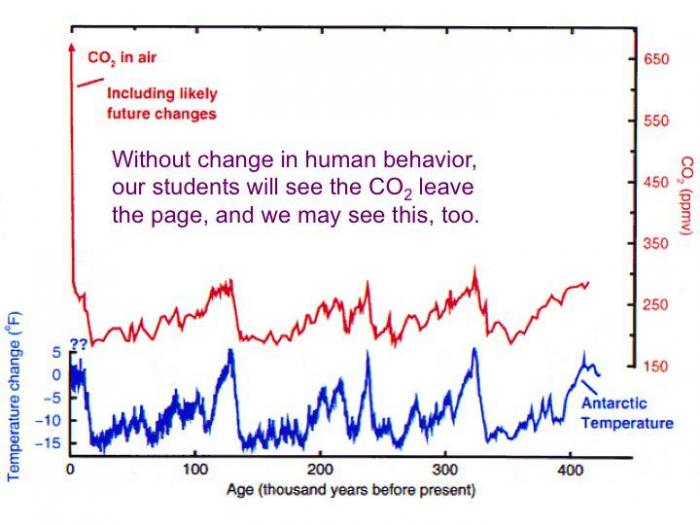

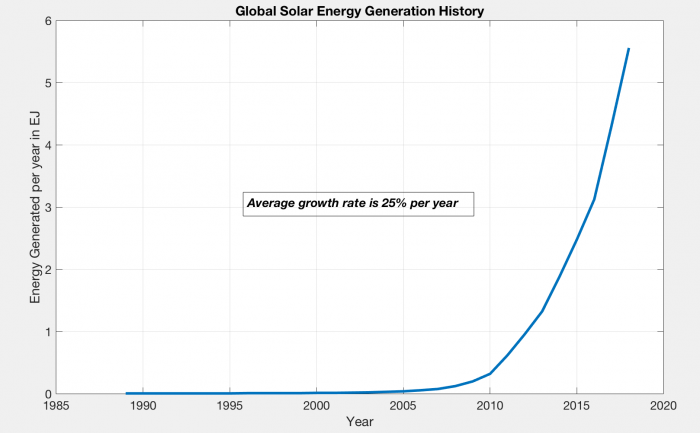

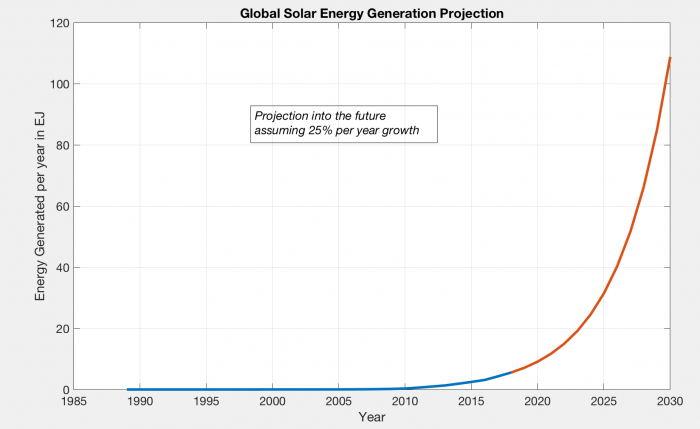

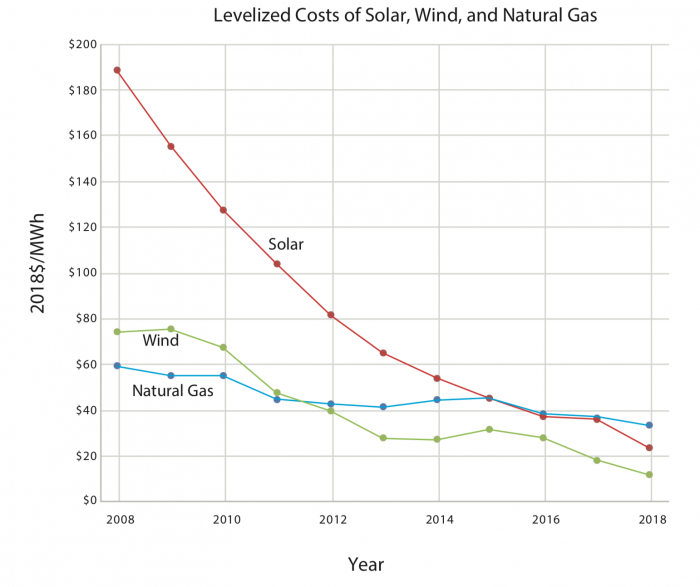

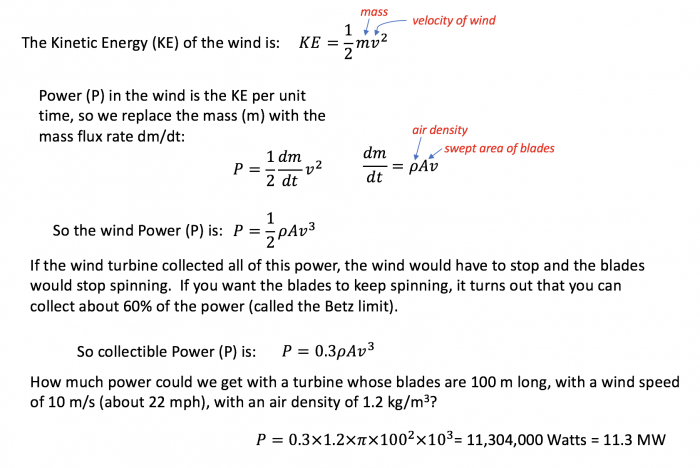

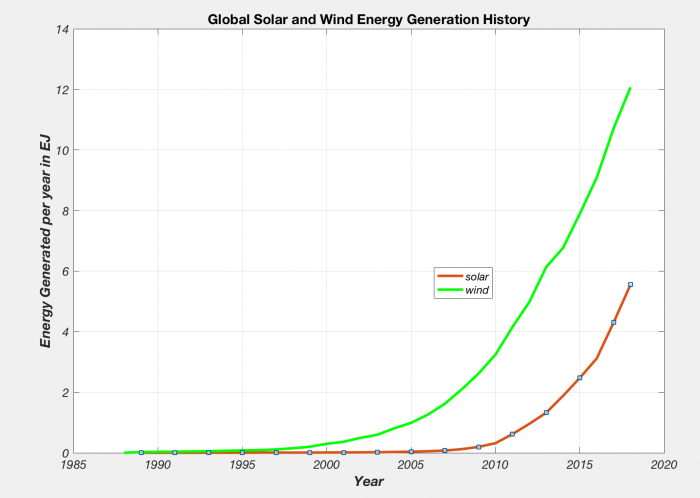

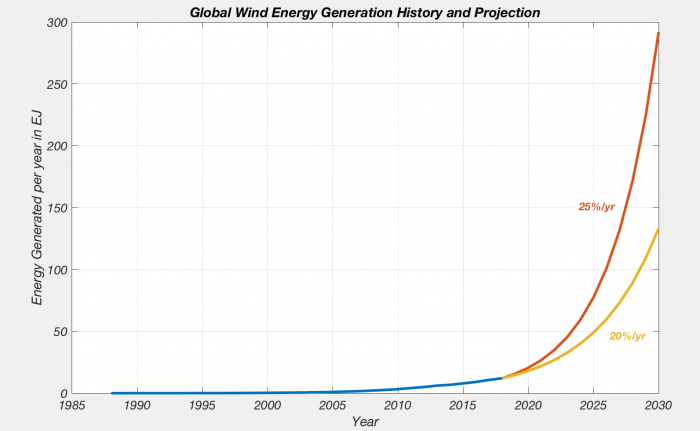

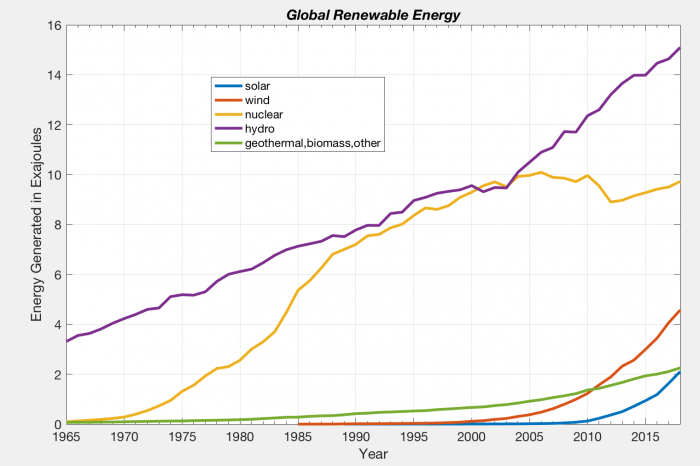

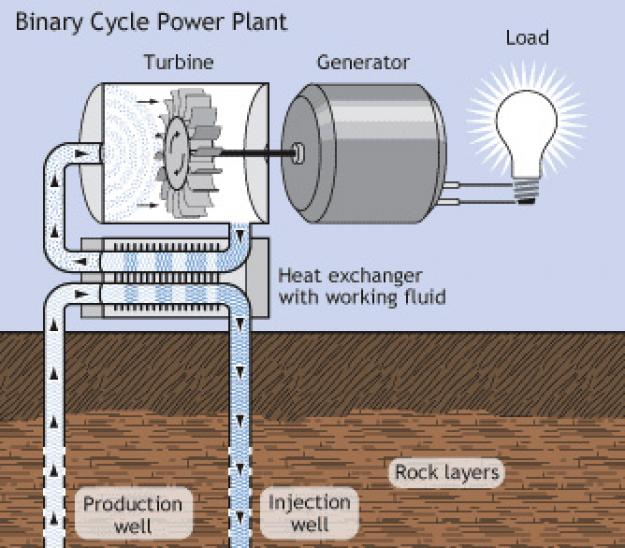

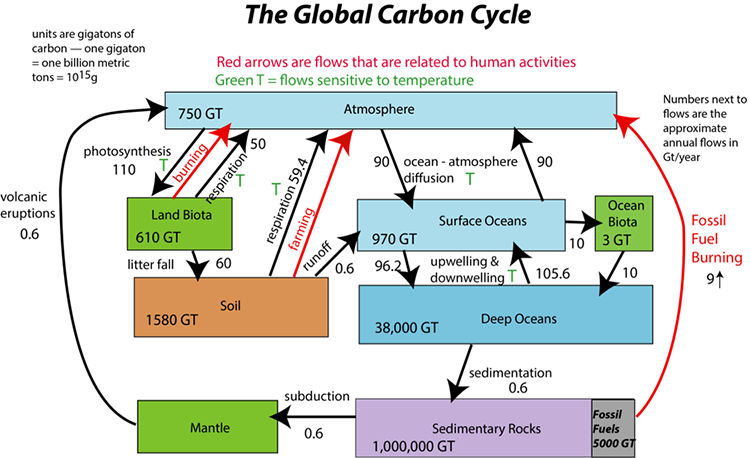

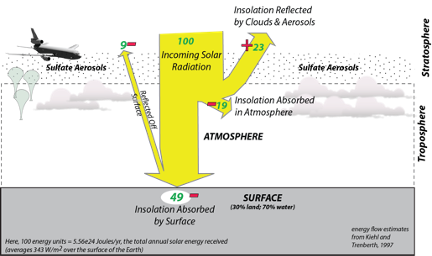

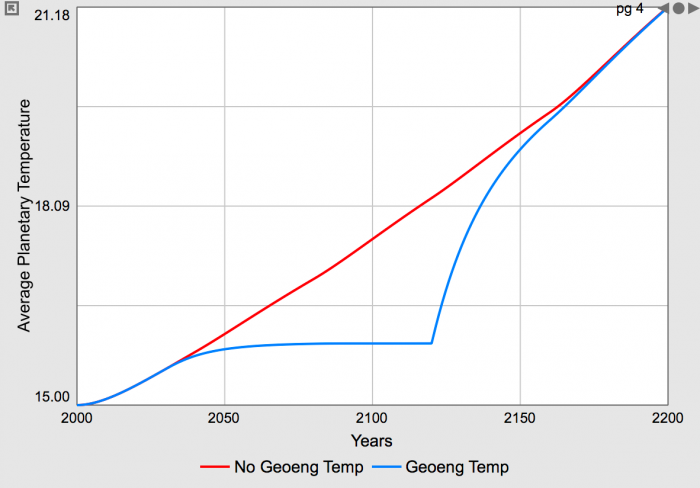

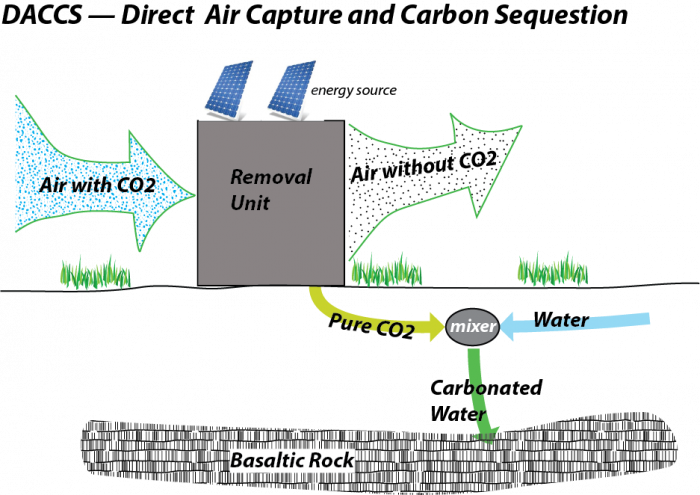

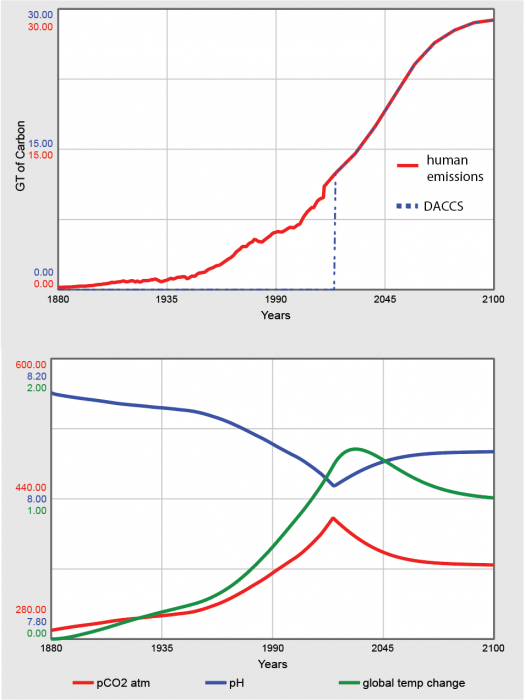

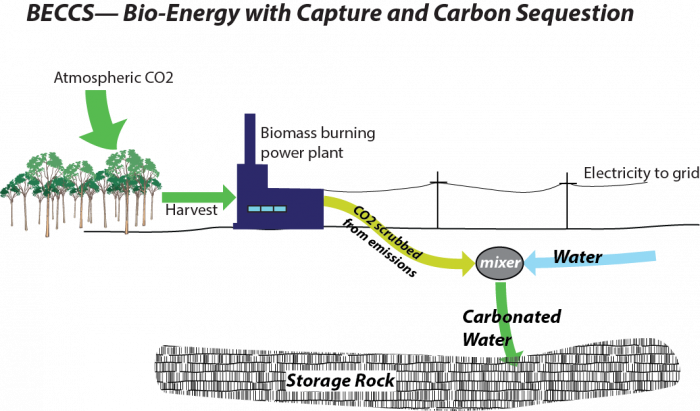

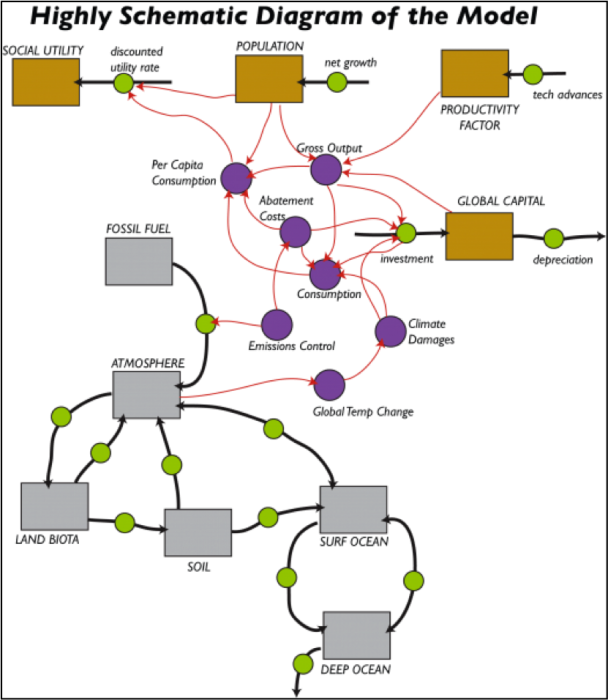

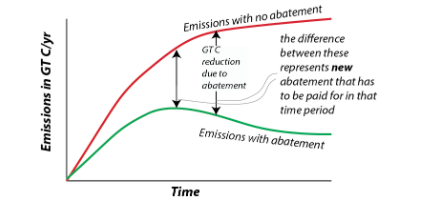

Reminder - Complete all of the Module 2 tasks!