Chapter 7: Remote Sensing: Imaging Our World

Overview

Chapters 5 and 6 focused on acquisition and application of geographic data that are collected in the world using GPS-enabled devices and other means (e.g., traditional field surveys). This chapter moves the focus to acquisition and application of geographic data collected remotely, using a range of remote sensing technologies. Remote sensing is the measurement of an object without direct contact; the Office of Naval Research coined the term remote sensing in the early 1960s.

This chapter considers the characteristics and uses of raster data produced with airborne and satellite remote sensing systems. Remote sensing is a key source of data for land use and land cover mapping, agricultural and environmental resource management, mineral exploration, weather forecasting, and global change research.

Remotely sensed images are now prevalent in many aspects of our daily lives. You are exposed to imagery through media sources, such as CNN or Fox News, and you can view imagery across the world with Google Maps or Bing Maps. You will encounter examples of imagery from these and other sources in this chapter. In addition to introducing these types of data products, you will also learn about some of the techniques that are used to analyze such images.

Objectives

The overall goal of Chapter 7 is to acquaint you with the properties of data produced by satellite-based sensors. Specifically, in the chapter, you will learn to:

- compare and contrast the characteristics of image data produced by photography and digital remote sensing systems;

- use the Web to find Landsat data for a particular place and time;

- explain why and how remotely sensed image data are processed, and what types of corrections are necessary for obtaining geographically accurate information;

- understand how remotely sensed imagery is turned into a range of map products through application of photogrammetric techniques.

Table of Contents

- Introducing Remote Sensing

- Electromagnetic Radiation

- Resolution

- Multi-spectral Image Processing

- Survey of Multispectral Imagery Types and Their Applications

- Other Types of Imagery

- Case Study: Using Landsat for Land Cover Classification for NLCD

- Orthoimagery

- Glossary

7.1 Introducing Remote Sensing

Remote sensing is defined in Chapter 1 as data collected from a distance without visiting or interacting directly with the phenomena of interest. The distance between the object and observer can be large, for example imaging from the Hubble telescope, or rather small, as is the case in the use of microscopes for examining bacterial growth. In geography, the term remote sensing takes on a specific connotation dealing with space-borne and aerial imaging systems used to remotely sense electromagnetic radiation reflected and emitted from Earth’s surface. Space-borne remote sensing suggests the use of sensors attached to satellite systems continually orbiting around the Earth. In contrast, aerial imaging systems are typically sensors attached to aircraft and flown on demand, meaning that their data capture is not continuous.

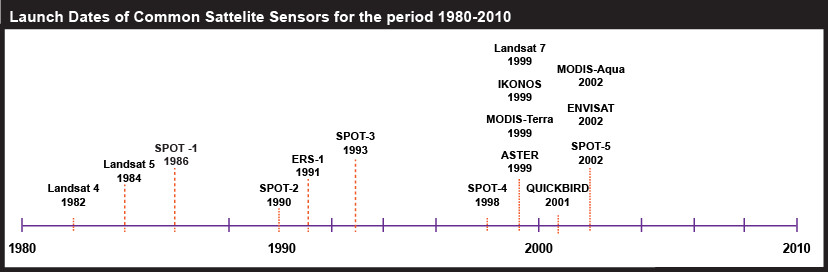

Aerial photographs were first captured using balloons and pigeons, but the invention of the airplane in 1903 introduced a new method of aerial image acquisition. The modern remote sensing age began with the launch of the first satellite, Sputnik, in 1957. Since then, numerous satellites have been launched carrying sensors, instruments that capture electromagnetic energy emitted and reflected by objects on the Earth's surface. While early remote sensing was based on photographs, most of today's remote sensing uses such sensors. Figure 7.1 below shows the launch dates of some of the more common remote sensing satellites and sensors. Later in this chapter, we will describe their specific uses in more detail.

| Launch year | Satellite or sensor |
|---|---|
| 1982 | Landsat-4 |
| 1984 | Landsat-5 |
| 1986 | SPOT-1 |
| 1990 | SPOT-2 |
| 1991 | ERS-1 |
| 1993 | SPOT-3 |
| 1998 | SPOT-4 |
| 1999 | Landsat-7 |
| 1999 | IKONOS |
| 1999 | MODIS-Terra |
| 1999 | ASTER |
| 2001 | QuickBird |
| 2002 | MODIS-Aqua |
| 2002 | ENVISAT |
| 2002 | SPOT-5 |

Remote sensing systems work in much the same way as a desktop scanner you may connect to your personal computer. A desktop scanner creates a digital image of a document by recording, pixel by pixel, the intensity of light reflected from the document. Color scanners may have three light sources and three sets of sensors, one each for the blue, green, and red wavelengths of visible light. Remotely sensed data, like the images produced by your desktop scanner, consist of reflectance values arrayed in rows and columns that make up raster grids. An example of a satellite used to scan the surface of the Earth to produce such raster images is provided in Figure 7.2.
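In code, such a multiband image is naturally held as a three-dimensional array: rows, columns, and one layer per wavelength band. The sketch below uses NumPy with invented values, and the band order (blue, green, red) is an assumption for illustration; real sensors use their own band numbering.

```python
import numpy as np

# A tiny hypothetical 3-band image: 2 rows x 3 columns x 3 bands (blue, green, red).
# Each value is a recorded intensity on a 0-255 scale, like a desktop scanner's output.
image = np.array([
    [[ 30,  90,  40], [ 32,  95,  38], [200, 190, 185]],
    [[ 28,  85,  45], [ 60,  70, 120], [210, 205, 195]],
], dtype=np.uint8)

rows, cols, bands = image.shape
green_band = image[:, :, 1]      # one raster grid per wavelength band
pixel = image[1, 2]              # all band values for row 1, column 2

print(rows, cols, bands)         # 2 3 3
print(green_band)
print(pixel)                     # [210 205 195]
```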

Remote sensing is used to solve a host of problems across a wide variety of disciplines. For example, Landsat imagery is used to monitor plant health and foliar change. In contrast, imagery such as that produced by IKONOS is used for geospatial intelligence applications and for monitoring urban infrastructure. Other sensors, such as AVHRR (Advanced Very High Resolution Radiometer), are used to monitor the effects of global warming on vegetation patterns at a global scale. The MODIS (Moderate Resolution Imaging Spectroradiometer) sensors aboard the Terra and Aqua satellites are designed to monitor atmospheric and oceanic composition in addition to the typical terrestrial applications. View animations of NASA's MODIS satellite images over the 2007 wildfires in Southern California [1].

Next, it is important to understand the basic terminology used to describe electromagnetic energy. Analysis of the reflectance of this energy can be used to characterize the Earth’s surface. You will see that digital remote sensing is like scanning a paper document with a desktop scanner, but more complicated, due to factors that include movement of both the Earth and the sensors and the atmosphere intervening between them. In the following section, we will learn how objects on the Earth's surface reflect and emit electromagnetic energy in ways that allow for the analysis of objects and phenomena on the Earth's surface.

7.2 Electromagnetic Radiation

Most remote sensing instruments measure the same thing: electromagnetic radiation. Electromagnetic radiation is a form of energy emitted by all matter above absolute zero temperature (0 Kelvin or -273° Celsius). X-rays, ultraviolet rays, visible light, infrared light, heat, microwaves, and radio and television waves are all examples of electromagnetic energy.

Figure 7.3 shows the relative amounts of electromagnetic energy emitted by the Sun and the Earth across the range of wavelengths called the electromagnetic spectrum. Values along the horizontal axis of the graph range from very long wavelengths (TV and radio waves) to very short wavelengths (cosmic rays). Hotter objects, such as the Sun, radiate energy at shorter wavelengths, as the emittance curves for the Sun and Earth in Figure 7.3 illustrate. The Sun's emission peaks in the visible wavelengths, those that the human eye can see, while the longer wavelengths that the Earth emits are not visible to the naked eye. By sensing wavelengths outside the visible spectrum, remote sensing makes it possible for us to visualize patterns that we would not be able to see with only the visible region of the spectrum.
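The statement that hotter objects radiate at shorter wavelengths is quantified by Wien's displacement law, lambda_max = b / T, where b is about 2,898 micrometre-kelvins. The sketch below assumes round surface temperatures of about 5,800 K for the Sun and 300 K for the Earth; these are illustrative values, not figures from this chapter.

```python
# Wien's displacement law: peak emission wavelength is inversely
# proportional to absolute temperature.
WIEN_CONSTANT_UM_K = 2897.8  # micrometre-kelvins

def peak_wavelength_um(temperature_k: float) -> float:
    """Return the wavelength (micrometres) of peak blackbody emission."""
    if temperature_k <= 0:
        raise ValueError("temperature must be above absolute zero")
    return WIEN_CONSTANT_UM_K / temperature_k

# Assumed, rounded surface temperatures for illustration.
sun_peak = peak_wavelength_um(5800)   # about 0.50 um: visible light
earth_peak = peak_wavelength_um(300)  # about 9.7 um: thermal infrared

print(f"Sun peak:   {sun_peak:.2f} um")
print(f"Earth peak: {earth_peak:.1f} um")
```

The roughly twentyfold difference in temperature produces a roughly twentyfold difference in peak wavelength, which is why the Earth's own emission falls in the thermal infrared.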

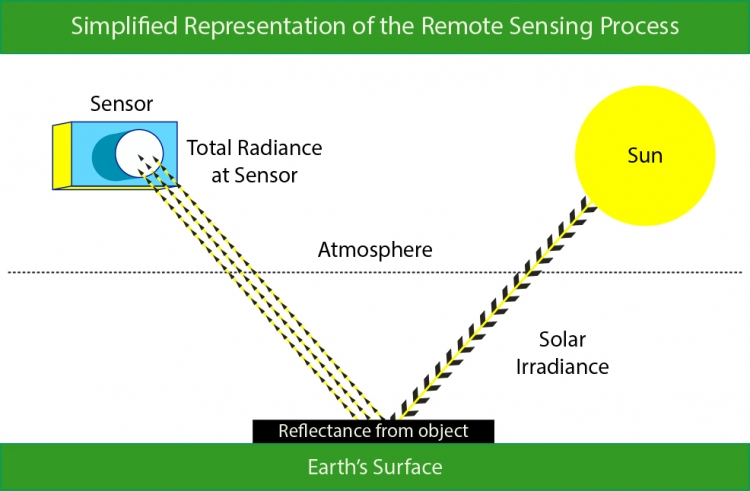

The remote sensing process is illustrated in Figure 7.4. During optical remote sensing, a satellite receives electromagnetic energy that has been (1) emitted from the Sun and (2) reflected from the Earth's surface. This information is then (3) transmitted to a receiving station in the form of data that are processed into an image. The Earth's atmosphere complicates this process of measuring electromagnetic energy. The Earth's land surface reflects about three percent of all incoming solar radiation back to space. The rest is either reflected by the atmosphere or absorbed and re-radiated as infrared energy. As energy passes through the atmosphere, it is scattered and absorbed by particles and gases. The absorption of electromagnetic energy is tied to specific regions of the electromagnetic spectrum. Regions of the spectrum that are not strongly affected by absorption are called atmospheric windows. These atmospheric windows, seen above in Figure 7.3, govern which regions of the electromagnetic spectrum are useful for remote sensing. The proportion of energy at a given wavelength that passes through the atmosphere is termed transmissivity. In the following section, we will discuss how the energy we are able to sense can be used to differentiate between objects.

7.2.1 Visual Interpretation Elements

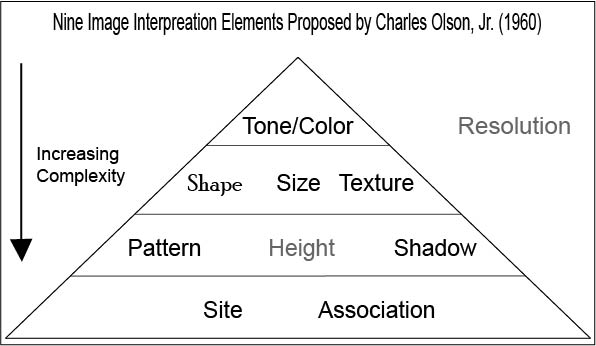

You have seen how a sensor captures information about the reflectance of electromagnetic energy. But what can we do with that information once it has been collected? The possibilities are numerous. One simple thing that we can do with a satellite image is to interpret it visually. This method of analysis has its roots in the early air photo era and is still useful today for interpreting imagery. The visual interpretation of satellite images is based on the use of image interpretation elements, a set of visual cues that a person can use to infer relationships between objects and processes in the image.

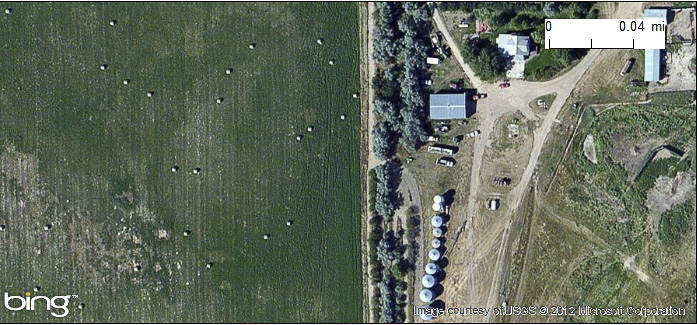

7.2.1.1 Size

The size of an object in an image can be visually discerned by comparing the object to other objects in the scene whose size you know. For example, we know the relative size of a two-lane highway, but we may not be familiar with a building next to it. We can use the relative size of the highway and the building to judge the building's size and then (having a size estimate) use other visual characteristics to determine what type of building it may be. An example of the use of size to discern between two objects is provided in Figure 7.6.

7.2.1.2 Shape

Relatively few objects have a distinctive shape on their own, so shape usually must be considered within the context of the image scene. In some cases, however, shape alone gives an object away. A classic example of shape being used to identify a building is the Pentagon, the five-sided building in Figure 7.7 below.

7.2.1.3 Tone/Color

In grayscale images, tone refers to the change in brightness across the image; in color images, it refers to the change in color. Later in this chapter, we will look at how we can exploit these differences to automatically derive information about the image scene. In Figure 7.8 below, you can see how changes in tone help distinguish water features from forests.

7.2.1.4 Pattern

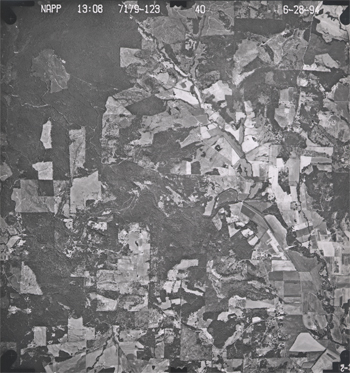

Pattern is the spatial arrangement of objects in an image. If you have ever seen the square plots of land as you flew over the Midwest, or even in an aerial image, you have probably used the repetitive pattern of those fields to recognize them as agricultural fields. Similarly, the pattern of buildings in a city allows you to recognize street grids, as in Figure 7.9 below.

7.2.1.5 Shadow

The presence or absence of shadows can provide information about the presence or absence of objects in the image scene. In addition, shadows can be used to determine the height of objects in the image. Shadows also can be a hindrance to image interpretation by hiding image details, as in Figure 7.10 below.
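As a minimal sketch of how shadows reveal height: on flat, level ground, with the Sun at elevation angle theta above the horizon and a vertical (nadir) view, an object of height h casts a shadow of length L = h / tan(theta), so h = L x tan(theta). The flat-terrain and nadir-view assumptions are simplifications, and in practice the sun elevation would come from the image metadata.

```python
import math

def height_from_shadow(shadow_length_m: float, sun_elevation_deg: float) -> float:
    """Estimate object height from shadow length on flat, level ground.

    Assumes a vertical (nadir) view and a sun elevation angle measured
    from the horizon, as typically recorded in image metadata.
    """
    if not 0 < sun_elevation_deg < 90:
        raise ValueError("sun elevation must be between 0 and 90 degrees")
    return shadow_length_m * math.tan(math.radians(sun_elevation_deg))

# A 20 m shadow with the Sun 45 degrees above the horizon implies a ~20 m object.
print(round(height_from_shadow(20.0, 45.0), 2))  # 20.0
```

With a lower sun, the same object casts a longer shadow, which is one reason interpreters note the acquisition time before measuring shadows.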

7.2.1.6 Texture

The term texture refers to the perceived roughness or smoothness of a surface. The visual perception of texture is determined by the change in tone. For example, a forest typically looks very rough and contains a wide range of tonal values. In comparison, a lake on a windless day looks very smooth because it lacks texture. Whip up the winds, though, and the same body of water soon looks much rougher, as we can see in Figure 7.11.
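Because texture is perceived as variation in tone, one simple way to quantify it is the standard deviation of pixel values in a small moving window. This is only one of many texture measures, chosen here for illustration. The sketch below uses synthetic arrays standing in for a calm lake and a forest, and the 3 x 3 window size is an arbitrary choice.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def local_std(image: np.ndarray, window: int = 3) -> np.ndarray:
    """Standard deviation of tone in each window x window neighbourhood.

    The output is smaller than the input by (window - 1) rows and columns,
    because only windows that fit entirely inside the image are used.
    """
    windows = sliding_window_view(image.astype(float), (window, window))
    return windows.std(axis=(-2, -1))

rng = np.random.default_rng(0)
calm_lake = np.full((10, 10), 40.0)               # uniform tone: smooth
forest = rng.integers(20, 120, size=(10, 10))     # varied tone: rough

print(local_std(calm_lake).mean())                               # 0.0
print(local_std(forest).mean() > local_std(calm_lake).mean())    # True
```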

7.2.1.7 Association

Association refers to the relationships that we expect between objects in a scene. For example, in an image of a barnyard you might expect a barn, a silo, and even fences. Farms are also typically located in rural areas; you would not expect a dairy farm in downtown Los Angeles. Figure 7.12 shows an instance where association can be used to identify a city park.

7.2.1.8 Site

Site refers to topographic or geographic location. The context around the feature under investigation can help with its identification. For example, a large sunken hole in Florida can readily be identified as a sinkhole formed by limestone dissolution. Similar shapes in the deserts of Arizona, however, are more likely to be meteorite impact craters.

7.2.2 Spectral Response Patterns

You have now seen the possibility of visually interpreting an image. Next, you will learn more about how to use the reflectance values that sensors gather to further analyze images. The various objects that make up the surface absorb and reflect different amounts of energy at different wavelengths. The magnitude of energy that an object reflects or emits across a range of wavelengths is called its spectral response pattern.

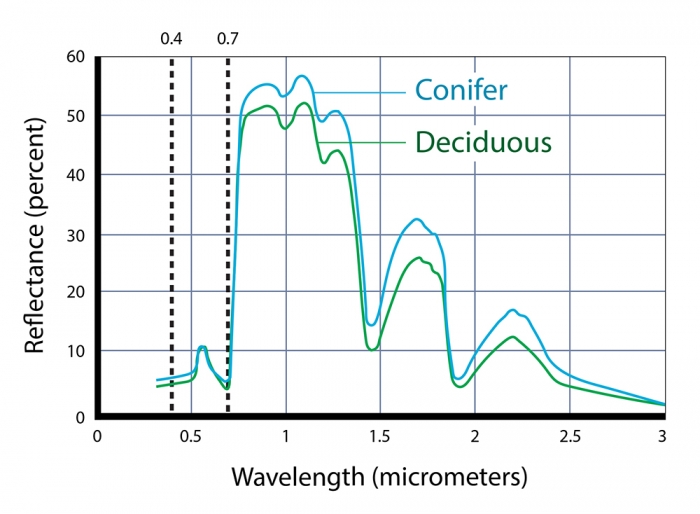

The graph above illustrates the spectral response patterns of coniferous and deciduous trees. The chlorophyll in green vegetation absorbs visible energy (particularly in the blue and red wavelengths) for use during photosynthesis. About half of the incoming near-infrared radiation is reflected (a characteristic of healthy, hydrated vegetation). We can identify several key points in the spectral response curve that can be used to evaluate the vegetation.

Notice that the reflectance patterns within the visible band are nearly identical. At longer near- and mid-infrared wavelengths, however, the two types are much easier to differentiate. As you'll see later, land use and land cover mapping was previously accomplished by visual inspection of photographic imagery. Multispectral data and digital image processing make it possible to partially automate land cover mapping. This, in turn, makes it cost-effective to identify some land use and land cover categories automatically, which makes it possible to map larger land areas more frequently.

Spectral response patterns are sometimes called spectral signatures. This term is misleading, however, because the reflectance of an entity varies with its condition, the time of year, and even the time of day. Instead of thin lines, the spectral responses of water, soil, grass, and trees might better be depicted as wide swaths to account for these variations.

7.2.2.1 Spectral Indices

One advantage of multispectral data is the ability to derive new data by calculating differences, ratios, or other quantities from reflectance values in two or more wavelength bands. For instance, detecting stressed vegetation amongst healthy vegetation may be difficult in any one band, particularly if differences in terrain elevation or slope cause some parts of a scene to be illuminated differently than others. However, the ratio of near-infrared to visible red reflectance compensates for variations in scene illumination, because a change in illumination affects both bands in roughly the same proportion. Since this ratio is considerably lower for stressed vegetation regardless of illumination conditions, detection is easier and more reliable.
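The sketch below, using made-up reflectance values, shows why a band ratio compensates for illumination: if shading reduces the energy reaching a pixel by the same factor in both bands, that factor cancels in the ratio. Real illumination effects are not always perfectly multiplicative (atmospheric scattering can add an offset, for example), so treat this as an idealization.

```python
import numpy as np

# Hypothetical reflectance values (0-1) for two vegetation pixels: [healthy, stressed]
red = np.array([0.05, 0.10])
nir = np.array([0.50, 0.30])

def simple_ratio(nir_band: np.ndarray, red_band: np.ndarray) -> np.ndarray:
    """Ratio of near-infrared to red reflectance, computed pixel by pixel."""
    return nir_band / red_band

# Simulate a shaded slope that receives only 60% of full illumination.
# Both bands are scaled by the same factor, so the factor cancels.
shade = 0.6
print(simple_ratio(nir, red))  # the healthy pixel has the higher ratio
print(np.allclose(simple_ratio(nir, red), simple_ratio(nir * shade, red * shade)))  # True
```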

7.2.2.2 Normalized Difference Vegetation Index

Besides simple ratios, remote sensing scientists have developed other mathematical formulae for deriving useful new data from multispectral imagery. One of the most widely used examples is the Normalized Difference Vegetation Index (NDVI). NDVI can be calculated for any sensor that has both a red and a near-infrared band; NDVI scores are calculated pixel by pixel using the following formula:

NDVI = (NIR - R) / (NIR + R)

R stands for the visible red band, while NIR represents the near-infrared band. The chlorophyll in green plants strongly absorbs radiation within the visible red band during photosynthesis. In contrast, leaf structures cause plants to strongly reflect radiation in the near-infrared band. NDVI scores range from -1.0 to 1.0. A pixel associated with low reflectance values in the visible band and high reflectance in the near-infrared band would produce an NDVI score near 1.0, indicating the presence of healthy vegetation. Conversely, the NDVI scores of pixels associated with high reflectance in the visible band and low reflectance in the near-infrared band approach -1.0, indicating clouds, snow, or water. NDVI scores near 0 indicate rock and non-vegetated soil.
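The NDVI formula translates directly into array arithmetic. The sketch below uses NumPy and invented reflectance values for three pixels; in practice the two arrays would be the red and near-infrared bands of a real scene, and which band numbers those are differs from sensor to sensor.

```python
import numpy as np

def ndvi(nir: np.ndarray, red: np.ndarray) -> np.ndarray:
    """NDVI = (NIR - R) / (NIR + R), computed pixel by pixel.

    Pixels where NIR + R == 0 are returned as NaN to avoid division by zero.
    """
    nir = nir.astype(float)
    red = red.astype(float)
    total = nir + red
    with np.errstate(divide="ignore", invalid="ignore"):
        result = (nir - red) / total
    return np.where(total == 0, np.nan, result)

# Hypothetical reflectances for three pixels: vegetation, bare soil, water
nir_band = np.array([0.50, 0.25, 0.02])
red_band = np.array([0.08, 0.20, 0.06])
print(np.round(ndvi(nir_band, red_band), 2))  # roughly [0.72, 0.11, -0.5]
```

The high, near-zero, and negative scores line up with the vegetation, soil, and water interpretations described above.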

The NDVI provides useful information relevant to questions and decisions at geographical scales ranging from local to global. At the local scale, the Mondavi Vineyards in Napa Valley, California, can attest to the utility of NDVI data in monitoring plant health. In 1993, the vineyards suffered an infestation of phylloxera, a species of plant louse that attacks roots and is impervious to pesticides. The pest could only be overcome by removing infested vines and replacing them with more resistant rootstock. The vineyard commissioned a consulting firm to acquire high-resolution (2-3 meter) visible and near-infrared imagery during consecutive growing seasons using an airborne sensor. Once the data from the two seasons were georegistered, comparison of NDVI scores revealed areas in which vine canopy density had declined. NDVI change detection proved to be such a fruitful approach that the vineyards adopted it for routine use as part of their overall precision farming strategy (Colucci, 1998).
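A minimal sketch of the two-season comparison described above, assuming the images are already georegistered so that the same array position refers to the same ground location on both dates. The reflectance grids and the -0.1 decline threshold are invented for illustration; the account above does not say how the consulting firm chose its threshold.

```python
import numpy as np

def ndvi(nir, red):
    """Pixel-by-pixel NDVI; assumes NIR + R is never zero."""
    return (nir - red) / (nir + red)

# Hypothetical 2 x 2 reflectance grids for two georegistered seasons.
nir_1 = np.array([[0.50, 0.48], [0.52, 0.49]])
red_1 = np.array([[0.08, 0.09], [0.07, 0.08]])
nir_2 = np.array([[0.49, 0.30], [0.51, 0.48]])
red_2 = np.array([[0.08, 0.15], [0.07, 0.09]])

change = ndvi(nir_2, red_2) - ndvi(nir_1, red_1)

# Hypothetical threshold: flag drops in NDVI larger than 0.1.
DECLINE_THRESHOLD = -0.1
declined = change < DECLINE_THRESHOLD
print(declined)  # only the upper-right pixel is flagged
```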

7.3 Resolution

So far, you've read that remote sensing systems measure electromagnetic radiation, and that they record measurements in the form of raster image data. The resolution of remotely sensed image data varies in several ways. As you recall, resolution is the least detectable difference in a measurement. In this context, four of the most important kinds of measurement for which resolution is a consideration are spectral, radiometric, spatial, and temporal resolution.

7.3.1 Spectral Resolution

First, there is spectral resolution, the ability of a sensor to detect small differences in wavelength. For example, panchromatic film is sensitive to a broad range of wavelengths but not to small wavelength differences. An object that reflects a lot of energy in the green portion of the visible band would be indistinguishable in a panchromatic photo from an object that reflected the same amount of energy in the red band, for instance. A sensing system with higher spectral resolution would make it easier to tell the two objects apart.
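To make the panchromatic example concrete, the sketch below models two objects that reflect equal amounts of energy, one in the green band and one in the red. The three-band spectra and the equal-weight panchromatic response are simplifying assumptions; real panchromatic sensors have non-uniform sensitivity across their range.

```python
import numpy as np

# Hypothetical reflected energy in three narrow bands: [blue, green, red]
green_object = np.array([0.05, 0.40, 0.05])
red_object = np.array([0.05, 0.05, 0.40])

def panchromatic(spectrum: np.ndarray) -> float:
    """Idealized broad-band sensor: sums energy across the visible bands."""
    return float(spectrum.sum())

# One broad band: both objects record the same value.
print(np.isclose(panchromatic(green_object), panchromatic(red_object)))  # True
# Three narrower bands: the objects are easy to tell apart.
print(np.allclose(green_object, red_object))                             # False
```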

7.3.2 Spatial Resolution

Spatial resolution refers to the coarseness or fineness of a raster grid. The grid cells in high-resolution data, such as those produced by digital aerial imaging or by the IKONOS sensor (described in detail below), correspond to ground areas as small as one square meter. Remotely sensed data whose grid cells range from 15 to 80 meters on a side, such as those produced by the Landsat ETM+ and MSS sensors (also described below), are considered medium resolution. The cells in low-resolution data, such as those produced by NOAA's AVHRR (Advanced Very High Resolution Radiometer) sensor (see below), are measured in kilometers.

The higher the spatial resolution of a digital image, the more detail it contains. Detail is valuable for some applications, but it is also costly. Although data compression techniques reduce storage requirements greatly, the storage and processing costs associated with high resolution satellite data often make medium and low resolution data preferable for analyses of extensive areas.
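The cost of detail grows with the square of the resolution: halving the cell size quadruples the number of cells. The sketch below estimates cell counts and uncompressed storage for a hypothetical 100 km x 100 km area stored as a single 8-bit band; compression and additional bands would change the absolute numbers but not the scaling.

```python
def cells_and_megabytes(area_km2: float, cell_size_m: float,
                        bands: int = 1, bytes_per_value: int = 1):
    """Number of raster cells needed to cover an area, and uncompressed size in MB."""
    cells = area_km2 * 1_000_000 / cell_size_m ** 2
    size_mb = cells * bands * bytes_per_value / 1_000_000
    return cells, size_mb

# A hypothetical 100 km x 100 km study area, one 8-bit band.
for cell_size in (1, 30, 1000):
    cells, mb = cells_and_megabytes(10_000, cell_size)
    print(f"{cell_size:>5} m cells: {cells:,.0f} cells, {mb:,.2f} MB")
```

At 1 m the area needs 10 billion cells, about 900 times as many as at 30 m, which illustrates why medium and low resolution data are often preferred for extensive areas.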

7.3.3 Radiometric Resolution

A third aspect of resolution is radiometric resolution, the measure of a sensor's ability to discriminate small differences in the magnitude of radiation within the ground area that corresponds to a single raster cell. The greater the bit depth (number of data bits per pixel) of the image that a sensor records, the higher its radiometric resolution is said to be. The AVHRR sensor, for example, stores 10 bits per pixel, meaning that it can differentiate between 2^10 = 1,024 intensity levels (values 0 to 1,023). In contrast, the Landsat sensors record 8 bits per pixel, or just 2^8 = 256 intensity levels. Thus, although its spatial resolution is very coarse (~4 km), AVHRR takes its name from its high radiometric resolution.
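The number of intensity levels is 2 raised to the number of bits. The sketch below quantizes two nearly equal reflectances (invented values) using a simple linear mapping from the 0-1 range, an assumption for illustration: at 8 bits the two values fall into the same level, while at 10 bits they do not.

```python
def quantize(reflectance: float, bits: int) -> int:
    """Map a reflectance in [0, 1] to an integer level for a given bit depth."""
    levels = 2 ** bits                  # e.g. 256 levels for 8 bits
    return min(int(reflectance * levels), levels - 1)

for bits in (8, 10):
    a, b = quantize(0.5000, bits), quantize(0.5020, bits)
    print(f"{bits}-bit: {2 ** bits} levels, values {a} and {b}, distinguishable: {a != b}")
```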

7.3.4 Temporal Resolution

Temporal resolution describes the amount of time it takes for a sensor to revisit a given location at the same viewing angle. Temporal resolution depends on the sensor's ability to adjust its viewing direction, the overlap between adjacent swaths, and the latitude at which the image is taken. Temporal resolution is extremely important to consider when performing change analysis or tracking temporal events. Aerial photography gives users the most flexibility in temporal resolution because flights are not limited to a continual orbital path.

Practice Quiz

Registered Penn State students should now return and take the self-assessment quiz on the Nature of Image Data.

You may take practice quizzes as many times as you wish. They are not scored and do not affect your grade in any way.

7.4 Multi-spectral Image Processing

One of the main advantages of digital data is that they can be readily processed using digital computers. Over the next few pages, we focus on digital image processing techniques used to correct, enhance, and classify digital, remotely sensed image data.

7.4.1 Image Correction

As suggested earlier, scanning the Earth's surface from space is like scanning a paper document with a desktop scanner, only a lot more complicated. Raw remotely sensed image data are full of geometric and radiometric flaws caused by the curved shape of the Earth, the imperfectly transparent atmosphere, daily and seasonal variations in the amount of solar radiation received at the surface, and imperfections in scanning instruments, among other things. Understandably, most users of remotely sensed image data are not satisfied with the raw data transmitted from satellites to ground stations. Most prefer preprocessed data from which these flaws have been removed.

Relief displacement is one source of geometric distortion in digital image data, although it is less of a factor in satellite remote sensing than it is in aerial imaging, because satellites fly at much higher altitudes than airplanes. Another source of geometric distortions is the Earth itself, whose curvature and eastward spinning motion are more evident from space than at lower altitudes.
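For a vertical image, the standard photogrammetric relation for relief displacement is d = r x h / H, where r is the radial distance from the image centre to the top of the object, h is the object's height, and H is the flying height above the datum. The ratio h/H is what shrinks at satellite altitudes. The sketch below holds the image position fixed and compares an assumed 3,000 m aircraft altitude with the 640 km orbit cited later for IKONOS; the tower height and radial distance are hypothetical.

```python
def relief_displacement(radial_distance: float, object_height_m: float,
                        flying_height_m: float) -> float:
    """Relief displacement d = r * h / H for a vertical image.

    radial_distance and the result share the same units (e.g. mm on the
    image); object and flying heights are in metres above the datum.
    """
    return radial_distance * object_height_m / flying_height_m

# A hypothetical 100 m tower whose top appears 50 mm from the image centre.
aircraft = relief_displacement(50, 100, 3_000)     # ~1.67 mm
satellite = relief_displacement(50, 100, 640_000)  # ~0.008 mm
print(f"aircraft: {aircraft:.2f} mm, satellite: {satellite:.4f} mm")
```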

The Earth rotates on its axis from west to east. At the same time, remote sensing satellites like IKONOS, Landsat, and the NOAA satellites that carry the AVHRR sensor, orbit the Earth from pole to pole. If you were to plot on a cylindrical projection the flight path that a polar orbiting satellite traces over a 24-hour period, you would see a series of S-shaped waves. As a remote sensing satellite follows its orbital path over the spinning globe, each scan row begins at a position slightly west of the row that preceded it. In the raw scanned data, however, the first pixel in each row appears to be aligned with the other initial pixels. To properly georeference the pixels in a remotely sensed image, pixels must be shifted slightly to the west in each successive row. This is why processed scenes are shaped like skewed parallelograms when plotted in geographic or plane projections.
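The size of the westward shift can be estimated from how far the ground moves eastward while a scene is being scanned: Earth's surface speed is about 465 m/s at the equator and falls off with the cosine of latitude. The scanner timing below is hypothetical, and the model ignores the inclination of the orbit and Earth's oblateness, so it is only an order-of-magnitude sketch.

```python
import math

EARTH_RADIUS_M = 6_378_137   # equatorial radius (WGS 84)
SIDEREAL_DAY_S = 86_164      # one full rotation, in seconds

def westward_offset_m(row: int, seconds_per_row: float, latitude_deg: float) -> float:
    """Approximate distance the ground has moved east under the sensor since row 0.

    Row `row` must be shifted this far west relative to row 0.
    Simplified: ignores orbit inclination and Earth's oblateness.
    """
    circumference = 2 * math.pi * EARTH_RADIUS_M * math.cos(math.radians(latitude_deg))
    surface_speed = circumference / SIDEREAL_DAY_S
    return row * seconds_per_row * surface_speed

# Hypothetical scanner timing: 0.005 s per row, 6,000 rows (~30 s of scanning).
for lat in (0, 45):
    print(lat, round(westward_offset_m(6_000, 0.005, lat)))
```

Under these assumptions, the last row ends up roughly 14 km west of the first at the equator and about 10 km at 45 degrees latitude, which is the skew that turns processed scenes into parallelograms.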

The reflectance at a given wavelength of an object measured by a remote sensing instrument varies in response to several factors, including the illumination of the object, its reflectivity, and the transmissivity of the atmosphere. Furthermore, the response of a given sensor may degrade over time. With these factors in mind, it should not be surprising that an object scanned at different times of the day or year will exhibit different radiometric characteristics. Such differences can be advantageous at times, but they can also pose problems for image analysts who want to create a mosaic by joining neighboring images, or to detect meaningful changes in land use and land cover over time. To cope with such problems, analysts have developed numerous radiometric correction techniques, including Earth-sun distance corrections, sun elevation corrections, and corrections for atmospheric haze.

To compensate for the different amounts of illumination of scenes captured at different times of day, or at different latitudes or seasons, image analysts may divide values measured in one band by values in another band, or they may apply mathematical functions that normalize reflectance values. Such functions are determined by the distance between the Earth and the Sun and the altitude of the Sun above the horizon at a given location, time of day, and time of year. To make the corrections, analysts depend on metadata that include the location, date, and time at which a particular scene was captured.
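A common form of this normalization divides by the sine of the Sun's elevation and multiplies by the square of the Earth-Sun distance in astronomical units, because incoming solar energy varies with both. This is a sketch, not a specific agency's procedure, and the pixel values, elevations, and distances below are invented; in practice the elevation and date come from the scene metadata.

```python
import math

def normalize_for_sun(value: float, sun_elevation_deg: float,
                      earth_sun_distance_au: float) -> float:
    """Normalize a measured value to an overhead Sun at 1 AU.

    Incoming solar energy scales with sin(sun elevation) and with
    1 / distance**2, so both effects are divided out here.
    """
    if not 0 < sun_elevation_deg <= 90:
        raise ValueError("sun elevation must be in (0, 90] degrees")
    return value * earth_sun_distance_au ** 2 / math.sin(math.radians(sun_elevation_deg))

# The same hypothetical field imaged in a northern summer (high sun, Earth
# farther from the Sun) and winter (low sun, Earth closer to the Sun).
summer = normalize_for_sun(80.0, 60.0, 1.016)
winter = normalize_for_sun(41.6, 25.0, 0.984)
print(round(summer, 1), round(winter, 1))
```

Although the raw values differ by almost a factor of two, both normalize to about 95, so the field would no longer look like it had changed between the two dates.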

In addition to radiometric correction, images need to be geometrically corrected. Geometric correction and orthorectification are two methods for converting imagery into geographically accurate information. Geometric correction is applied to satellite imagery to remove terrain-related distortion and the effects of Earth's movement, based on a limited set of information. In contrast, orthorectification uses precise sensor information, orbital parameters, ground control points, and elevation data to precisely align the image to a surface model or datum. At the end of this chapter, you will read more about orthorectification as it relates to aerial imagery.
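Ground control points (GCPs) are locations whose image coordinates and true map coordinates are both known. As a minimal sketch of how they can drive a simple geometric correction, the code below fits a first-order (affine) polynomial to four hypothetical GCPs by least squares. This is one common simple model chosen for illustration; full orthorectification additionally uses sensor geometry and elevation data, which this sketch omits.

```python
import numpy as np

def fit_affine(pixel_xy: np.ndarray, ground_xy: np.ndarray) -> np.ndarray:
    """Least-squares affine transform from image (col, row) to map (x, y).

    Returns a 3 x 2 matrix A such that [col, row, 1] @ A = [x, y].
    Needs at least three non-collinear ground control points.
    """
    design = np.column_stack([pixel_xy, np.ones(len(pixel_xy))])
    coeffs, *_ = np.linalg.lstsq(design, ground_xy, rcond=None)
    return coeffs

def apply_affine(coeffs: np.ndarray, pixel_xy: np.ndarray) -> np.ndarray:
    """Transform image (col, row) positions to map coordinates."""
    return np.column_stack([pixel_xy, np.ones(len(pixel_xy))]) @ coeffs

# Hypothetical GCPs: image (col, row) and their surveyed map coordinates (m).
pixels = np.array([[0, 0], [100, 0], [0, 100], [100, 100]], dtype=float)
ground = np.array([[500_000, 4_200_000], [503_000, 4_199_900],
                   [500_100, 4_197_000], [503_100, 4_196_900]], dtype=float)

A = fit_affine(pixels, ground)
print(apply_affine(A, np.array([[50.0, 50.0]])))  # map position of the image centre
```

The small cross terms in the fitted transform (the 100 m shifts per 100 pixels) capture a slight rotation of the image relative to the map grid; with real GCPs, the least-squares residuals also indicate how well the model fits.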

7.4.2 Image Enhancement

Correction techniques are routinely used to resolve geometric, radiometric, and other problems found in raw remotely sensed data. Another family of image processing techniques is used to make image data easier to interpret. These so-called image enhancement techniques include contrast stretching, edge enhancement, and deriving new data by calculating differences, ratios, or other quantities from reflectance values in two or more bands, among many others. This section briefly considers two common enhancement techniques: contrast stretching and derived data. Later, you'll learn how vegetation indices derived from two bands of AVHRR imagery are used to monitor vegetation growth at a global scale.

Consider the pair of images shown side by side below. Although both were produced from the same Landsat MSS data, you will notice that the image on the left is considerably dimmer than the one on the right. The difference is a result of contrast stretching. As you recall, Landsat data have a precision of 8 bits; that is, reflectance values are encoded as 256 intensity levels. As is often the case, reflectance values in the near-infrared band of the scene partially shown below span an intensity range of only 30 to 80 in the raw image data. This limited range results in an image that lacks contrast and, consequently, appears dim. The image on the right shows the effect of stretching the range of reflectance values in the near-infrared band from 30-80 to 0-255, and then similarly stretching the visible green and visible red bands. As you can see, the contrast-stretched image is brighter and clearer.
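The stretch described above maps each raw value linearly from the observed range (30 to 80) onto the full 8-bit range (0 to 255). A minimal NumPy sketch, assuming a simple min-max linear stretch; image processing packages also offer percentile-based and nonlinear stretches.

```python
import numpy as np

def linear_stretch(band: np.ndarray, in_min: float, in_max: float,
                   out_min: int = 0, out_max: int = 255) -> np.ndarray:
    """Linearly map [in_min, in_max] to [out_min, out_max], clipping outliers."""
    scaled = (band.astype(float) - in_min) / (in_max - in_min)
    stretched = out_min + scaled * (out_max - out_min)
    return np.clip(np.round(stretched), out_min, out_max).astype(np.uint8)

# Raw near-infrared values confined to 30-80, as in the example above.
raw = np.array([30, 42, 55, 68, 80], dtype=np.uint8)
print(linear_stretch(raw, 30, 80))  # 30 maps to 0 and 80 maps to 255
```

Applying the same function to the green and red bands, each with its own observed minimum and maximum, completes the stretch described for the color composite.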

7.4.3 Image Classification

Along with military surveillance and weather forecasting, a common use of remotely sensed image data is to monitor land cover and to inform land use planning. The term land cover refers to the kinds of vegetation that blanket the earth's surface, or the kinds of materials that form the surface where vegetation is absent. Land use, by contrast, refers to the functional roles that the land plays in human economic activities (Campbell, 1983).

Both land use and land cover are specified in terms of generalized categories. For instance, an early classification system adopted by a World Land Use Commission in 1949 consisted of nine primary categories, including settlements and associated non-agricultural lands, horticulture, tree and other perennial crops, cropland, improved permanent pasture, unimproved grazing land, woodlands, swamps and marshes, and unproductive land. Prior to the era of digital image processing, specially trained personnel drew land use maps by visually interpreting the shape, size, pattern, tone, texture, and shadows cast by features shown in aerial photographs. As you might imagine, this was an expensive, time-consuming process. It's not surprising then that the Commission appointed in 1949 failed in its attempt to produce a detailed global land use map.

Part of the appeal of digital image processing is the potential to automate land use and land cover mapping. To realize this potential, image analysts have developed a family of image classification techniques that automatically sort pixels with similar multispectral reflectance values into clusters that, ideally, correspond to functional land use and land cover categories. Two general types of image classification techniques have been developed: supervised and unsupervised techniques.

7.4.3.1 Supervised Classification

Human image analysts play crucial roles in both supervised and unsupervised image classification procedures. In supervised classification, the analyst's role is to specify in advance the multispectral reflectance or, in the case of the thermal infrared band, emittance values typical of each land use or land cover class.

For instance, to perform a supervised classification of the Landsat Thematic Mapper (TM) data shown above into two land cover categories, Vegetation and Other, you would first delineate several training fields that are representative of each land cover class. The illustration below shows two training fields for each class; however, to achieve the most reliable classification possible, you would define as many as 100 or more training fields per class.

The training fields you defined consist of clusters of pixels with similar reflectance or emittance values. If you did a good job in supervising the training stage of the classification, each cluster would represent the range of spectral characteristics exhibited by its corresponding land cover class. Once the clusters are defined, you would apply a classification algorithm to sort the remaining pixels in the scene into the class with the most similar spectral characteristics. One of the most commonly used algorithms computes the statistical probability that each pixel belongs to each class. Pixels are then assigned to the class associated with the highest probability. Algorithms of this kind are known as maximum likelihood classification. The result is an image like the one shown below, in which every pixel has been assigned to one of two land cover classes, vegetation and “other.”
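Maximum likelihood classification is commonly implemented by assuming that each class's training pixels follow a multivariate normal distribution. The sketch below makes that assumption, uses equal prior probabilities for the classes, and trains on synthetic two-band pixels (red and near-infrared); the class statistics are invented for illustration.

```python
import numpy as np

def train(samples_by_class: dict) -> dict:
    """Mean vector and covariance matrix of each class's training pixels."""
    return {name: (px.mean(axis=0), np.cov(px, rowvar=False))
            for name, px in samples_by_class.items()}

def log_likelihood(pixels: np.ndarray, mean: np.ndarray, cov: np.ndarray) -> np.ndarray:
    """Gaussian log-likelihood of each pixel (terms shared by all classes dropped)."""
    diff = pixels - mean
    inv = np.linalg.inv(cov)
    mahalanobis = np.einsum("ij,jk,ik->i", diff, inv, diff)
    _, logdet = np.linalg.slogdet(cov)
    return -0.5 * (logdet + mahalanobis)

def classify(pixels: np.ndarray, model: dict) -> np.ndarray:
    """Assign each pixel to the class with the highest likelihood."""
    names = list(model)
    scores = np.column_stack([log_likelihood(pixels, *model[n]) for n in names])
    return np.array(names)[scores.argmax(axis=1)]

# Hypothetical training pixels: columns are [red, near-infrared] reflectance.
rng = np.random.default_rng(42)
training = {
    "vegetation": rng.normal([0.06, 0.45], 0.02, size=(50, 2)),
    "other": rng.normal([0.20, 0.25], 0.03, size=(50, 2)),
}
model = train(training)
print(classify(np.array([[0.05, 0.50], [0.22, 0.24]]), model))
```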

7.4.3.2 Unsupervised Classification

Image analysts play a different role in unsupervised classification. They do not define training fields for each land cover class in advance. Instead, they rely on one of a family of statistical clustering algorithms to sort pixels into distinct spectral classes. Analysts may or may not even specify the number of classes in advance. Their responsibility is to determine the correspondences between the spectral classes that the algorithm defines and the functional land use and land cover categories established by agencies like the U.S. Geological Survey. An example in Section 7.7 below outlines how unsupervised classification contributes to the creation of a high-resolution national land cover data set.
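Unsupervised classification relies on clustering algorithms; k-means is one widely taught member of that family, though the text does not say which algorithm a particular agency uses. The minimal NumPy sketch below, run on synthetic two-band pixels, shows the part the algorithm does automatically: grouping pixels into spectral classes. Deciding which land cover category each cluster represents remains the analyst's job.

```python
import numpy as np

def kmeans(pixels: np.ndarray, k: int, iterations: int = 20, seed: int = 0):
    """Basic k-means: returns (labels, centres) for an (n_pixels, n_bands) array."""
    rng = np.random.default_rng(seed)
    centres = pixels[rng.choice(len(pixels), size=k, replace=False)]
    for _ in range(iterations):
        # Assign every pixel to its nearest centre (Euclidean distance in band space).
        distances = np.linalg.norm(pixels[:, None, :] - centres[None, :, :], axis=2)
        labels = distances.argmin(axis=1)
        # Move each centre to the mean of its pixels (keep it if the cluster is empty).
        centres = np.array([pixels[labels == c].mean(axis=0) if np.any(labels == c)
                            else centres[c] for c in range(k)])
    return labels, centres

# Synthetic two-band pixels drawn from two spectrally distinct groups.
rng = np.random.default_rng(1)
pixels = np.vstack([rng.normal([0.06, 0.45], 0.02, size=(40, 2)),
                    rng.normal([0.20, 0.25], 0.02, size=(40, 2))])
labels, centres = kmeans(pixels, k=2)
print(np.round(centres, 2))  # spectral classes the analyst must then label
```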

Practice Quiz

Registered Penn State students should now return and take the self-assessment quiz about Image Processing.

You may take practice quizzes as many times as you wish. They are not scored and do not affect your grade in any way.

7.5 Survey of Multispectral Imagery Types and Their Applications

There are many sources of satellite imagery, and the imagery you need depends on the problem you wish to solve. The sheer number of sensors in orbit makes an in-depth survey of all available sensors and their data impossible. Instead, the following sections explore examples of remotely sensed image data produced by measuring electromagnetic energy in the visible, near-infrared, and thermal infrared bands.

7.5.1 IKONOS

When the Russian space agency first began selling its space surveillance imagery in 1994, a new company called Space Imaging, Inc. was chartered in the United States. Recognizing that high-resolution images were then available commercially from competing foreign sources, the U.S. government authorized private firms under its jurisdiction to produce and market remotely sensed data at spatial resolutions as high as one meter. By 1999, after a failed first attempt, Space Imaging successfully launched its IKONOS I satellite into an orbital path that circles the Earth 640 km above the surface, from pole to pole, crossing the equator at the same time every day. Such an orbit is called a sun-synchronous polar orbit, in contrast with the geosynchronous orbit of communications and some weather satellites that remain over the same point on the Earth's surface at all times.

IKONOS' panchromatic sensor records reflectances in the visible band at a spatial resolution of one meter, and a bit depth of eleven bits per pixel. The expanded bit depth enables the sensor to record reflectances more precisely, and allows technicians to filter out atmospheric haze more effectively than is possible with 8-bit imagery. Archived, unrectified, panchromatic IKONOS imagery within the U.S. is available for as little as $7 per square kilometer, but new orthorectified imagery costs $28 per square kilometer and up.
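The arithmetic behind this paragraph is simple enough to check directly. The sketch below shows how many brightness levels 8-bit and 11-bit pixels can record, and what an order would cost at the two per-square-kilometer prices quoted above; the 100 km² order size is a hypothetical example, and the prices are the historical figures from the text rather than current ones.

```python
def gray_levels(bits):
    """Number of distinct brightness values a pixel of the given bit depth can hold."""
    return 2 ** bits

def imagery_cost(area_km2, price_per_km2):
    """Total price for an order at a flat per-square-kilometer rate."""
    return area_km2 * price_per_km2

print(gray_levels(8), gray_levels(11))              # 256 2048
print(imagery_cost(100, 7), imagery_cost(100, 28))  # 700 2800
```

Eleven bits give eight times as many levels as eight bits, which is why subtle differences, such as a thin layer of haze, can be separated more cleanly from the signal.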

The previous paragraph highlighted the one-meter panchromatic (pan) data produced by the IKONOS satellite sensor. Pan data is not all that IKONOS produces, however. It is a multispectral sensor that records reflectances within four other (narrower) bands, including the blue, green, and red wavelengths of the visible spectrum, and the near-infrared band. The range(s) of wavelengths that a sensor is able to detect is called its spectral sensitivity.

| Spectral Sensitivity | Spatial Resolution |
|---|---|
| 0.45 - 0.90 µm (panchromatic) | 1 m |
| 0.45 - 0.52 µm (visible blue) | 4 m |
| 0.51 - 0.60 µm (visible green) | 4 m |
| 0.63 - 0.70 µm (visible red) | 4 m |
| 0.76 - 0.85 µm (near IR) | 4 m |

Credit: Pennsylvania State University.

A competing firm called ORBIMAGE acquired Space Imaging in early 2006, after ORBIMAGE secured a half-billion-dollar contract with the National Geospatial-Intelligence Agency. The merged companies are now called GeoEye. [5]

7.5.2 Landsat TM and ETM

As NASA prepared to launch Landsat 4 in 1982, a new sensing system called Thematic Mapper (TM) was added. TM was a new and improved version of the Landsat Multispectral Scanner (MSS) that featured higher spatial resolution (30 meters in most channels) and expanded spectral sensitivity (seven bands, including visible blue, visible green, visible red, near-infrared, two mid-infrared, and thermal infrared wavelengths). An Enhanced Thematic Mapper Plus (ETM+) sensor, which includes an eighth (panchromatic) band with a spatial resolution of 15 meters, was onboard Landsat 7 when it successfully launched in 1999.

The spectral sensitivities of the TM and ETM+ sensors are attuned both to the spectral response characteristics of the phenomena that the sensors are designed to monitor and to the windows through which electromagnetic energy is able to penetrate the atmosphere. The following table outlines some of the phenomena revealed by each of the wavelength bands, phenomena that are much less evident in panchromatic image data alone.

| Band | Phenomena revealed |
|---|---|
| 0.45 - 0.52 µm (visible blue) | Shorelines and water depths (these wavelengths penetrate water) |
| 0.52 - 0.60 µm (visible green) | Plant types and vigor (peak vegetation reflects these wavelengths strongly) |
| 0.63 - 0.69 µm (visible red) | Photosynthetic activity (plants absorb these wavelengths during photosynthesis) |
| 0.76 - 0.90 µm (near IR) | Plant vigor (healthy plant tissue reflects these wavelengths strongly) |
| 1.55 - 1.75 µm (mid IR) | Plant water stress, soil moisture, rock types, cloud cover vs. snow |
| 10.40 - 12.50 µm (thermal IR) | Relative amounts of heat, soil moisture |
| 2.08 - 2.35 µm (mid IR) | Plant water stress, mineral and rock types |

Phenomena revealed by different bands of Landsat TM/ETM data.

Table Credit: USGS

Until 1984, Landsat data were distributed by the U.S. federal government (originally by the USGS's EROS Data Center, later by NOAA). Data produced by Landsat missions 1 through 4 are still available for sale from EROS. With the Land Remote Sensing Commercialization Act of 1984, however, the U.S. Congress privatized the Landsat program, transferring responsibility for construction and launch of Landsat 5, and for distribution of the data it produced, to a firm called EOSAT.

Dissatisfied with the prohibitive costs of unsubsidized data (as much as $4,400 for a single 185 km by 170 km scene), users prompted Congress to pass the Land Remote Sensing Policy Act of 1992. The new legislation returned responsibility for the Landsat program to the U.S. government. Data produced by Landsat 7 are distributed by USGS at a cost to users of $600 per scene (about 2 cents per square kilometer). Scenes that include data gaps caused by a "scan line corrector" failure are sold for $250, or for $275 if the gaps are filled with earlier data.
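The "about 2 cents per square kilometer" figure follows from the scene dimensions. A quick check, assuming (as the text implies) that a Landsat 7 scene covers the same 185 km by 170 km area as the scene priced at $4,400:

```python
SCENE_KM2 = 185 * 170  # 31,450 square kilometers per scene

def cost_per_km2(scene_price, scene_area_km2=SCENE_KM2):
    """Price per square kilometer, in dollars, for a whole-scene purchase."""
    return scene_price / scene_area_km2

for label, price in [("EOSAT era (maximum)", 4400), ("Landsat 7 via USGS", 600)]:
    print(f"{label}: ${price} per scene = {100 * cost_per_km2(price):.1f} cents per km^2")
```

The result, roughly 14 cents versus 2 cents per square kilometer, is still far below the $7 to $28 per square kilometer quoted for IKONOS, a reflection of the very different spatial resolutions involved.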

7.5.3 AVHRR

AVHRR (Advanced Very High Resolution Radiometer) sensors have been onboard sixteen satellites maintained by the National Oceanic and Atmospheric Administration (NOAA) since 1979 (TIROS-N, NOAA-6 through NOAA-15). The data the sensors produce are widely used for large-area studies of vegetation, soil moisture, snow cover, fire susceptibility, and floods, among other things.

AVHRR sensors measure electromagnetic energy within five spectral bands, including visible red, near infrared, and three thermal infrared. As we discovered earlier, the visible red and near-infrared bands are particularly useful for large-area vegetation monitoring through the calculation of NDVI (see 2.2.2 for review of the concept NDVI).
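As a reminder of how NDVI combines the two bands, here is a minimal sketch. It assumes the inputs are red and near-infrared reflectances for a single pixel; the sample values are hypothetical, and returning 0 when both bands are zero is a convention chosen here, not part of the index's definition.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index from near-IR and red reflectance."""
    total = nir + red
    if total == 0:
        return 0.0  # avoid division by zero for pixels with no signal (a convention chosen here)
    return (nir - red) / total

print(round(ndvi(0.50, 0.08), 2))  # dense, healthy vegetation -> 0.72
print(round(ndvi(0.30, 0.25), 2))  # sparse vegetation or bare soil -> 0.09
```

Because healthy plants absorb red light and strongly reflect near-infrared, vegetated pixels push the index toward +1, while bare surfaces stay near 0.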

| Spectral Sensitivity | Spatial Resolution |
|---|---|
| 0.58 - 0.68 µm (visible red) | 1-4 km* |
| 0.725 - 1.10 µm (near IR) | 1-4 km* |
| 3.55 - 3.93 µm (thermal IR) | 1-4 km* |
| 10.3 - 11.3 µm (thermal IR) | 1-4 km* |
| 11.5 - 12.5 µm (thermal IR) | 1-4 km* |

Wavelengths are expressed in micrometers (millionths of a meter). Spatial resolution is expressed in kilometers (thousands of meters). *Spatial resolution of AVHRR data varies from 1 km to 16 km. Processed data consist of uniform 1 km or 4 km grids.

Credit: NASA

The NOAA satellites that carry AVHRR sensors trace sun-synchronous polar orbits at altitudes of about 833 km. Traveling at ground velocities of over 6.5 kilometers per second, the satellites orbit the Earth 14 times daily (every 102 minutes), crossing over the same locations along the equator at the same times every day. As it orbits, the AVHRR sensor sweeps a scan head along a 110°-wide arc beneath the satellite, taking many measurements every second. (The back and forth sweeping motion of the scan head is said to resemble a whisk broom.) The arc corresponds to a ground swath of about 2400 km. Because the scan head traverses so wide an arc, its instantaneous field of view (IFOV: the ground area covered by a single pixel) varies greatly. Directly beneath the satellite, the IFOV is about 1 km square. Near the edge of the swath, however, the IFOV expands to over 16 square kilometers. To achieve uniform resolution, the distorted IFOVs near the edges of the swath must be resampled to a 1 km grid (resampling is discussed later in this chapter). The AVHRR sensor is capable of producing daily global coverage in the visible band, and twice daily coverage in the thermal IR band.
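The orbital figures in this paragraph are consistent with one another, as a short calculation shows. The sketch uses the 102-minute period, 833 km altitude, and 110° scan arc from the text; the Earth's circumference is a rounded value, the ground speed ignores the Earth's rotation, and the swath estimate treats the Earth as flat beneath the satellite (curvature makes the true swath somewhat wider).

```python
import math

EARTH_CIRCUMFERENCE_KM = 40_075  # approximate equatorial circumference
PERIOD_MIN = 102                 # orbital period quoted in the text
ALTITUDE_KM = 833
HALF_SCAN_DEG = 110 / 2          # the scan arc is 110 degrees wide

orbits_per_day = 24 * 60 / PERIOD_MIN
ground_speed = EARTH_CIRCUMFERENCE_KM / (PERIOD_MIN * 60)  # km per second
# Flat-Earth approximation of the ground swath under a 110-degree scan arc
swath_km = 2 * ALTITUDE_KM * math.tan(math.radians(HALF_SCAN_DEG))

print(f"{orbits_per_day:.1f} orbits/day, {ground_speed:.2f} km/s, swath ~{swath_km:.0f} km")
# 14.1 orbits/day, 6.55 km/s, swath ~2379 km
```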

7.6 Other Types of Imagery

The remote sensing systems you've studied so far are sensitive to the visible, near-infrared, and thermal infrared bands of the electromagnetic spectrum, wavelengths at which the magnitudes of solar and terrestrial radiation are greatest. IKONOS, AVHRR, and the Landsat MSS, TM, and ETM+ instruments are all passive sensors that only measure radiation reflected or emitted by other objects.

There are two main shortcomings to passive sensing of the visible and infrared bands. First, clouds interfere with both incoming and outgoing radiation at these wavelengths. Second, reflected visible and near-infrared radiation can only be measured during daylight hours. This is why the AVHRR sensor only produces visible and near-infrared imagery of the entire Earth once a day, although it is capable of two daily scans.

7.6.1 Microwave Data

Microwaves, or longwave radiation, have wavelengths between about one millimeter and one meter. Microwaves can penetrate clouds, but the sun and Earth emit so little longwave radiation that it can't be measured easily from space. Active remote sensing systems solve this problem. Active sensors like those aboard the European Space Agency's ERS satellites, the Japanese JERS satellites, and the Canadian Radarsat, among others, transmit pulses of longwave radiation, then measure the intensity and travel time of those pulses after they are reflected back to space from the Earth's surface. Microwave sensing is unaffected by cloud cover, and can operate day or night. Both image data and elevation data can be produced by microwave sensing, as you will discover in the sections on imaging radar and radar altimetry that follow.

7.6.2 Imaging Radar

One example of active remote sensing that everyone has heard of is radar, which stands for Radio Detection And Ranging. Radar was developed as an air defense system during World War II and is now the primary remote sensing system air traffic controllers use to track the 40,000 daily aircraft takeoffs and landings in the United States. Radar antennas alternately transmit and receive pulses of microwave energy. Since both the magnitude of energy transmitted and its velocity (the speed of light) are known, radar systems are able to record either the intensity or the round-trip distance traveled by pulses reflected back to the sensor. Systems that record pulse intensity are called imaging radars.
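Recording round-trip distance works because the pulse travels at the speed of light: the one-way distance is half of the speed of light multiplied by the round-trip time. A minimal sketch, assuming propagation at the vacuum speed of light (it ignores small atmospheric delays) and a hypothetical 800 km distance between sensor and ground:

```python
C = 299_792_458.0  # speed of light in a vacuum, meters per second

def slant_range_m(round_trip_s):
    """Distance to a target from the round-trip travel time of a radar pulse."""
    # The pulse travels out and back, so the one-way distance is half the total path
    return C * round_trip_s / 2

def round_trip_s(distance_m):
    """Time for a pulse to reach a target at distance_m and return."""
    return 2 * distance_m / C

t = round_trip_s(800_000)  # hypothetical 800 km from sensor to ground
print(f"{t * 1000:.3f} ms round trip -> {slant_range_m(t) / 1000:.1f} km")
# 5.337 ms round trip -> 800.0 km
```

The tiny travel times involved (a few milliseconds over hundreds of kilometers) explain why radar timing electronics must be extremely precise.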

In addition to its indispensable role in navigation, radar is also an important source of raster image data about the Earth's surface. Radar images look the way they do because of the different ways that objects reflect microwave energy. In general, rough-textured objects reflect more energy back to the sensor than smooth objects. Smooth objects, such as water bodies, are highly reflective, but unless they are perpendicular to the direction of the incoming pulse, the reflected energy all bounces off at an angle and never returns to the sensor. Rough surfaces, such as vegetated agricultural fields, tend to scatter the pulse in many directions, increasing the chance that some backscatter will return to the sensor.

The imaging radar aboard the European Remote Sensing satellite (ERS-1) produced the data used to create the image shown above. The smooth surface of the flooded Mississippi River deflected the radar signal away from the sensor, while the surrounding rougher-textured land cover reflected larger portions of the radar pulse. The lighter an object appears in the image, the more energy it reflected. Imaging radar can be used to monitor flood extents regardless of weather conditions. Passive instruments like Landsat MSS and TM that are sensitive only to shorter wavelengths are useless as long as cloud-covered skies prevail.

Practice Quiz

Registered Penn State students should now return and take the self-assessment quiz about Visible and Infrared Imagery.

You may take practice quizzes as many times as you wish. They are not scored and do not affect your grade in any way.

7.7 Case Study: Using Landsat for Land Cover Classification for NLCD

The USGS developed one of the first land use/land cover classification systems designed specifically for use with remotely sensed imagery. The Anderson Land Use/Land Cover Classification system, named for the former Chief Geographer of the USGS who led the team that developed the system, consists of nine land cover categories (urban or built-up; agricultural; range; forest; water; wetland; barren; tundra; and perennial snow and ice), and 37 subcategories (for example, varieties of agricultural land include cropland and pasture; orchards, groves, vineyards, nurseries, and ornamental horticulture; confined feeding operations; and other agricultural land). Image analysts at the U.S. Geological Survey created the USGS Land Use and Land Cover (LULC) data by manually outlining and coding areas on air photos that appeared to have homogeneous land cover that corresponded to one of the Anderson classes.

The LULC data were compiled for use at 1:250,000 and 1:100,000 scales. Analysts drew outlines of land cover polygons onto vertical aerial photographs. Later, the outlines were transferred to transparent film georegistered with small-scale topographic base maps. The small map scales kept the task from taking too long and costing too much, but also forced analysts to generalize the land cover polygons quite a lot. The smallest man-made features encoded in the LULC data are four hectares (ten acres) in size, and at least 200 meters (660 feet) wide at their narrowest point. The smallest non-man-made features are sixteen hectares (40 acres) in size, with a minimum width of 400 meters (1320 feet). Smaller features were aggregated into larger ones. After the land cover polygons were drawn onto paper and georegistered with topographic base maps, they were digitized as vector features, and attributed with land cover codes. A rasterized version of the LULC data was produced later.

The successor to LULC is the USGS's National Land Cover Data (NLCD). Unlike LULC, which originated as a vector data set in which the smallest features are about ten acres in size, NLCD is a raster data set with a spatial resolution of 30 meters (i.e., pixels represent about 900 square meters on the ground) derived from Landsat TM imagery. The steps involved in producing the NLCD include preprocessing, classification, and accuracy assessment, each of which is described briefly below.
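To see how much finer NLCD is than LULC, express the LULC minimum feature sizes in 30-meter pixels. The sketch below uses only figures from the text (4 and 16 hectares, 200 and 400 meters); one hectare is 10,000 square meters.

```python
PIXEL_M = 30
PIXEL_AREA_M2 = PIXEL_M ** 2  # 900 square meters
HECTARE_M2 = 10_000

def pixels_for_area(hectares):
    """How many 30 m pixels fit in an area given in hectares."""
    return hectares * HECTARE_M2 / PIXEL_AREA_M2

def pixels_for_width(meters):
    """How many 30 m pixels span a given width."""
    return meters / PIXEL_M

# LULC minimum man-made feature: 4 ha, 200 m wide
print(f"{pixels_for_area(4):.1f} pixels, {pixels_for_width(200):.1f} pixels wide")
# LULC minimum non-man-made feature: 16 ha, 400 m wide
print(f"{pixels_for_area(16):.1f} pixels, {pixels_for_width(400):.1f} pixels wide")
```

A feature that LULC would have had to drop or merge could be as small as a single NLCD pixel, although in practice misclassification of individual pixels is a concern (see the accuracy assessment below).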

7.7.1 Preprocessing

The first version of NLCD--NLCD 92--was produced for subsets of ten federal regions that make up the conterminous United States. The primary source data were bands 3, 4, 5, and 7 (visible red, near-infrared, and two mid-infrared bands) of cloud-free Landsat TM scenes acquired during the spring and fall (when trees are mostly bare of leaves) of 1992. Selected scenes were geometrically and radiometrically corrected, then combined into sub-regional mosaics composed of no more than 18 scenes. Mosaics were then projected to the same Albers Conic Equal Area projection (with standard parallels at 29.5° and 45.5° North Latitude, and central meridian at 96° West Longitude) based upon the NAD83 horizontal datum.
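The projection parameters above are enough to compute projected coordinates. The following is a minimal sketch of the ellipsoidal Albers equal-area formulas (as given in standard map projection references such as Snyder's), using the GRS80 ellipsoid that underlies NAD83. The text does not give a latitude of origin or false easting/northing, so this sketch assumes a latitude of origin of 23° N and no false offsets, values commonly used for conterminous U.S. Albers grids. In production work you would use a projection library rather than hand-coded formulas.

```python
import math

# GRS80 ellipsoid, the reference surface for NAD83
A = 6378137.0
F = 1 / 298.257222101
E2 = 2 * F - F * F
E = math.sqrt(E2)

def _m(phi):
    return math.cos(phi) / math.sqrt(1 - E2 * math.sin(phi) ** 2)

def _q(phi):
    s = math.sin(phi)
    return (1 - E2) * (s / (1 - E2 * s * s)
                       - (1 / (2 * E)) * math.log((1 - E * s) / (1 + E * s)))

def albers_conus(lon_deg, lat_deg, lat1=29.5, lat2=45.5, lon0=-96.0, lat0=23.0):
    """Project a NAD83 longitude/latitude to Albers x, y in meters.

    lat1, lat2 and lon0 come from the text; lat0 = 23.0 is an assumption.
    """
    phi1, phi2, phi0, phi = map(math.radians, (lat1, lat2, lat0, lat_deg))
    m1, m2 = _m(phi1), _m(phi2)
    q1, q2, q0, q = _q(phi1), _q(phi2), _q(phi0), _q(phi)
    n = (m1 * m1 - m2 * m2) / (q2 - q1)
    c = m1 * m1 + n * q1
    rho0 = A * math.sqrt(c - n * q0) / n
    rho = A * math.sqrt(c - n * q) / n
    theta = n * math.radians(lon_deg - lon0)
    return rho * math.sin(theta), rho0 - rho * math.cos(theta)

print(albers_conus(-77.0, 39.0))  # a point in the mid-Atlantic region
```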

7.7.2 Image Classification

An unsupervised classification algorithm was applied to the preprocessed mosaics to generate 100 spectrally distinct pixel clusters. Using aerial photographs and other references, image analysts at USGS then assigned each cluster to one of the classes in a modified version of the Anderson classification scheme. Considerable interpretation was required, since not all functional classes have unique spectral response patterns.

| Level I Class | Code | Level II Class |
|---|---|---|
| Water | 11 | Open Water |
| | 12 | Perennial Ice/Snow |
| Developed | 21 | Low Intensity Residential |
| | 22 | High Intensity Residential |
| | 23 | Commercial/Industrial/Transportation |
| Barren | 31 | Bare Rock/Sand/Clay |
| | 32 | Quarries/Strip Mines/Gravel Pits |
| | 33 | Transitional |
| Forested Upland | 41 | Deciduous Forest |
| | 42 | Evergreen Forest |
| | 43 | Mixed Forest |
| Shrubland | 51 | Shrubland |
| Non-Natural Woody | 61 | Orchards/Vineyards/Other |
| Herbaceous Upland Natural/Semi-natural Vegetation | 71 | Grasslands/Herbaceous |
| Herbaceous Planted/Cultivated | 81 | Pasture/Hay |
| | 82 | Row Crops |
| | 83 | Small Grains |
| | 84 | Fallow |
| | 85 | Urban/Recreational Grasses |
| Wetlands | 91 | Woody Wetlands |
| | 92 | Emergent Herbaceous Wetlands |

Table credit: USGS.
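Once analysts have decided which class each spectral cluster represents, applying those decisions to the image is a simple lookup. In this sketch the cluster IDs, the cluster-to-class decisions, and the use of 0 for clusters not yet assigned are all hypothetical; the class codes come from the table above. Note that two clusters can map to the same class, which is how 100 spectral clusters collapse into 21 land cover classes.

```python
# Hypothetical analyst decisions: spectral cluster ID -> NLCD Level II code
CLUSTER_TO_CLASS = {0: 11, 1: 41, 2: 42, 3: 82, 4: 82, 5: 21}

def relabel(cluster_grid, lookup, unassigned=0):
    """Replace cluster IDs with land cover codes; unknown IDs get `unassigned`."""
    return [[lookup.get(cid, unassigned) for cid in row] for row in cluster_grid]

clusters = [[0, 0, 1],
            [3, 4, 2],
            [5, 3, 9]]  # cluster 9 has not been interpreted yet
print(relabel(clusters, CLUSTER_TO_CLASS))
# [[11, 11, 41], [82, 82, 42], [21, 82, 0]]
```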

7.7.3 Accuracy Assessment

The USGS hired private sector vendors to assess the classification accuracy of the NLCD 92 by checking randomly sampled pixels against manually interpreted aerial photographs. Results from the first four completed regions suggested that the likelihood that a given pixel is correctly classified ranges from only 38 to 62 percent. Much of the classification error was found to occur among the Level II classes that make up the various Level I classes, and some classes were much more error-prone than others. USGS encourages users to aggregate the data into 3 x 3 or 5 x 5 pixel blocks (in other words, to decrease spatial resolution from 30 meters to 90 or 150 meters), or to aggregate the 21 Level II classes into the nine Level I classes.
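Both kinds of aggregation USGS recommends can be sketched briefly. In the NLCD 92 codes listed above, the tens digit identifies the Level I class, so integer division by 10 converts a Level II code to its Level I group. Spatial aggregation is shown here as a majority rule over 3 x 3 blocks; USGS does not prescribe that exact rule in the text, so treat it as one reasonable choice. The sample pixels and the reference labels used to compute accuracy are hypothetical.

```python
from collections import Counter

def to_level_one(code):
    """Level I group of an NLCD 92 Level II code (its tens digit)."""
    return code // 10

def block_majority(grid, size=3):
    """Aggregate a 2-D list of class codes into size x size blocks by majority.

    Partial blocks at the right and bottom edges are dropped; ties go to the
    code encountered first in the block (Counter.most_common keeps that order).
    """
    rows, cols = len(grid), len(grid[0])
    out = []
    for r in range(0, rows - rows % size, size):
        row_out = []
        for c in range(0, cols - cols % size, size):
            block = [grid[i][j] for i in range(r, r + size) for j in range(c, c + size)]
            row_out.append(Counter(block).most_common(1)[0][0])
        out.append(row_out)
    return out

def accuracy(mapped, reference):
    """Fraction of sample pixels whose mapped class matches the reference class."""
    return sum(m == r for m, r in zip(mapped, reference)) / len(reference)

mapped = [41, 42, 82, 81, 22, 11]     # hypothetical classified sample pixels
reference = [43, 42, 81, 81, 21, 11]  # hypothetical photo-interpreted truth
print(accuracy(mapped, reference))    # 0.5 at Level II
print(accuracy([to_level_one(c) for c in mapped],
               [to_level_one(c) for c in reference]))  # 1.0 at Level I
```

In this made-up sample every error is a confusion between Level II classes within the same Level I group, so aggregating to Level I removes all of them; that is the pattern the USGS assessment reported.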

National Land Cover Dataset Classification System Legend:

| Color Key | RGB Value | Class Number and Name |
|---|---|---|
| Blue | 0, 0, 255 | 11 Open Water |
| White | 255, 255, 255 | 12 Perennial Ice/Snow |
| Light Orange | 255, 204, 0 | 21 Low Intensity Residential |
| Orange | 255, 153, 0 | 22 High Intensity Residential |
| Red | 255, 0, 0 | 23 Commercial/Industrial/Transportation |
| Eggshell White | 229, 229, 204 | 31 Bare Rock/Sand/Clay |
| Brown | 128, 77, 51 | 32 Quarries/Strip Mines/Gravel Pits |
| Neon Pink | 255, 0, 255 | 33 Transitional |
| Green | 0, 178, 0 | 41 Deciduous Forest |
| Dark Green | 0, 102, 0 | 42 Evergreen Forest |
| Teal | 0, 178, 178 | 43 Mixed Forest |
| Olive Green | 178, 178, 0 | 51 Shrubland |
| Purple | 153, 25, 229 | 61 Orchards/Vineyards |
| Tan | 229, 204, 153 | 71 Grassland/Herbaceous |
| Yellow | 255, 255, 0 | 81 Pasture/Hay |
| Light Pink | 255, 179, 204 | 82 Row Crops |
| Pink | 204, 77, 128 | 83 Small Grains |
| Gray | 178, 178, 178 | 84 Fallow |
| Neon Green | 128, 255, 0 | 85 Urban/Recreational Grasses |
| Seafoam | 128, 255, 204 | 91 Woody Wetlands |
| Neon Teal | 0, 255, 255 | 92 Emergent Herbaceous Wetlands |
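A legend like this is typically applied as a color lookup table when a classified raster is displayed. The sketch below encodes the RGB values from the legend and converts a small grid of class codes to colors; painting unrecognized codes black is a hypothetical no-data convention, not part of the NLCD legend.

```python
# RGB colors from the NLCD 92 legend, keyed by class number
NLCD_COLORS = {
    11: (0, 0, 255), 12: (255, 255, 255),
    21: (255, 204, 0), 22: (255, 153, 0), 23: (255, 0, 0),
    31: (229, 229, 204), 32: (128, 77, 51), 33: (255, 0, 255),
    41: (0, 178, 0), 42: (0, 102, 0), 43: (0, 178, 178),
    51: (178, 178, 0), 61: (153, 25, 229), 71: (229, 204, 153),
    81: (255, 255, 0), 82: (255, 179, 204), 83: (204, 77, 128),
    84: (178, 178, 178), 85: (128, 255, 0),
    91: (128, 255, 204), 92: (0, 255, 255),
}

def colorize(class_grid, nodata=(0, 0, 0)):
    """Turn a 2-D grid of class codes into a grid of RGB tuples for display."""
    return [[NLCD_COLORS.get(code, nodata) for code in row] for row in class_grid]

print(colorize([[11, 41], [99, 92]]))
# [[(0, 0, 255), (0, 178, 0)], [(0, 0, 0), (0, 255, 255)]]
```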

7.8 Orthoimagery

One important use of remote sensing is as input to the production of reference maps that cover the U.S. (and other countries). An important part of the process of utilizing remotely sensed information for mapping is rectification of the imagery; that process produces orthoimages. The U.S. Federal Geographic Data Committee (FGDC, 1997, p. 18) defines orthoimage as "a georeferenced image prepared from an aerial photograph or other remotely sensed data ... [that] has the same metric properties as a map and has a uniform scale." Unlike orthoimages, the scale of ordinary aerial images varies across the image, due to the changing elevation of the terrain surface (among other things). The process of creating an orthoimage from an ordinary aerial image is called orthorectification. Photogrammetrists are the professionals who specialize in creating orthorectified aerial imagery, and in compiling geometrically-accurate vector data from aerial images. So, to appreciate the requirements of orthoimagery and its use in national mapping efforts (which will be discussed in more detail in Chapter 8), we first need to investigate the field of photogrammetry.

7.8.1 Photogrammetry

Photogrammetry is a profession concerned with producing precise measurements of objects from photographs and photoimagery. One of the objects measured most often by photogrammetrists is the surface of the Earth. Since the mid-20th century, aerial images have been the primary source of data used by USGS and similar agencies to create and revise topographic maps. Before then, topographic maps were compiled in the field using magnetic compasses, tapes, plane tables (a drawing board mounted on a tripod, equipped with a leveling telescope like a transit), and even barometers to estimate elevation from changes in air pressure. Although field surveys continue to be important for establishing horizontal and vertical control, photogrammetry has greatly improved the efficiency and quality of topographic mapping.

A vertical aerial photograph is a picture of the Earth's surface taken from above with a camera oriented such that its optical axis is vertical. In other words, when a vertical aerial photograph is exposed to the light reflected from the Earth's surface, the digital imaging surface (historically, it was a sheet of photographic film) is parallel to the ground. In contrast, an image you might create by snapping a picture of the ground below while traveling in an airplane is called an oblique aerial photograph, because the camera's optical axis forms an oblique angle with the ground.

A straight line between the center of a lens and the center of a visible scene is called an optical axis. The nominal scale of a vertical air photo is equivalent to f / H, where f is the focal length of the camera (the distance between the camera lens and the image surface -- usually six inches), and H is the flying height of the aircraft above the ground. It is possible to produce a vertical air photo such that scale is consistent throughout the image. This is only possible, however, if the terrain in the scene is absolutely flat. In rare cases where that condition is met, topographic maps can be compiled directly from vertical aerial photographs. Most often, however, air photos of variable terrain need to be transformed, or rectified, before they can be used as a source for mapping.
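The scale relationship f / H is easy to compute as long as both quantities are in the same units. This sketch uses the six-inch focal length from the text and a flying height of 20,000 feet above a reference surface; the 2,000-foot hilltop is a hypothetical example showing why scale varies when terrain is not flat.

```python
def scale_denominator(focal_length, flying_height):
    """Denominator x of the photo scale 1:x, where scale = f / H (same units)."""
    return flying_height / focal_length

FOCAL_IN = 6.0                  # typical focal length quoted in the text
FLYING_HEIGHT_IN = 20_000 * 12  # 20,000 feet above the ground, in inches

print(f"1:{scale_denominator(FOCAL_IN, FLYING_HEIGHT_IN):,.0f}")        # 1:40,000
# Over a hypothetical 2,000 ft hilltop the camera is only 18,000 ft above the ground
print(f"1:{scale_denominator(FOCAL_IN, (20_000 - 2_000) * 12):,.0f}")  # 1:36,000
```

The hilltop appears at a larger scale than the surrounding lowland on the same photo, which is exactly the variation that rectification must remove.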

Government agencies at all levels need up-to-date aerial imagery. Early efforts to sponsor complete and recurring coverage of the U.S. included the National Aerial Photography Program (NAPP [6]), which replaced an earlier National High Altitude Photography program in 1987. NAPP was a consortium of federal government agencies that aimed to jointly sponsor vertical aerial photography of the entire lower 48 states every seven years or so at an altitude of 20,000 feet, suitable for producing topographic maps at scales as large as 1:5,000. More recently NAPP has been eclipsed by another consortium called the National Agriculture Imagery Program (NAIP [7]).

Aerial photography missions involve capturing sequences of overlapping images along many parallel flight paths. In the portion of the air photo mosaic shown below, note that the photographs overlap one another end to end, and side to side. This overlap is necessary for stereoscopic viewing, which is the key to rectifying photographs of variable terrain. It takes about 10 overlapping aerial photographs taken along two adjacent north-south flight paths to provide stereo coverage for a 7.5-minute quadrangle.
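The estimate of about 10 photographs per 7.5-minute quadrangle can be reproduced with some flight-planning arithmetic. The text does not give the mission parameters, so this sketch assumes typical values: a 9-inch square photo format, a 1:40,000 photo scale, 60 percent overlap between successive photos, 30 percent overlap between flight lines, and roughly 111 km per degree of latitude. Treat it as an illustration of the reasoning, not a flight-planning tool.

```python
import math

# Assumed (typical) mission parameters, not given in the text
PHOTO_SIDE_M = 0.2286    # 9-inch square film format
SCALE = 40_000           # 1:40,000 photo scale
ENDLAP = 0.60            # forward overlap between successive photos
SIDELAP = 0.30           # overlap between adjacent flight lines
M_PER_DEG_LAT = 111_000  # rough length of one degree of latitude

def photos_for_quad(lat_deg, quad_minutes=7.5):
    """Return (photos per north-south line, number of lines, total photos)."""
    ground_side = PHOTO_SIDE_M * SCALE          # ground width of one photo, meters
    air_base = (1 - ENDLAP) * ground_side       # ground advance between exposures
    line_spacing = (1 - SIDELAP) * ground_side  # distance between flight lines
    ns_extent = quad_minutes / 60 * M_PER_DEG_LAT
    ew_extent = ns_extent * math.cos(math.radians(lat_deg))
    # Two photos give a stereo overlap of (ground_side - air_base);
    # each additional photo extends the stereo coverage by one air base.
    per_line = 2 + max(0, math.ceil((ns_extent - (ground_side - air_base)) / air_base))
    lines = 1 + max(0, math.ceil((ew_extent - ground_side) / line_spacing))
    return per_line, lines, per_line * lines

print(photos_for_quad(40.5))  # (5, 2, 10) for a quad in central Pennsylvania
```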

Try This

Use the USGS' EarthExplorer [8] to identify the vertical aerial photograph that shows the "populated place" in which you live. How old is the photo? (EarthExplorer is part of a USGS distribution system.)

Note: The Digital Orthophoto backdrop that EarthExplorer allows you to view is not the same as the NAPP photos the system allows you to identify and order. By the end of this lesson, you should know the difference! If you don't, use the Chapter 6 Discussion Forum to ask.

7.8.2 Perspective and Planimetry

To understand why topographic maps can't be traced directly off of most vertical aerial photographs, you first need to appreciate the difference between perspective and planimetry. In a perspective view, all light rays reflected from the Earth's surface pass through a single point at the center of the camera lens. A planimetric (plan) view, by contrast, looks as though every position on the ground is being viewed from directly above. Scale varies in perspective views. In plan views, scale is everywhere consistent (if we overlook variations in small-scale maps due to map projections). Topographic maps are said to be planimetrically correct. So are orthoimages. Vertical aerial photographs are not.

As discussed above, the scale of an aerial photograph is partly a function of flying height. As terrain elevation increases, flying height in relation to the terrain decreases and photo scale increases. As terrain elevation decreases, flying height increases and photo scale decreases. Thus, variations in elevation cause variations in scale on aerial photographs. Specifically, the higher the elevation of an object, the farther the object will be displaced from its actual position away from the principal point of the photograph (the point on the ground surface that is directly below the camera lens, Figure 7.10). Conversely, objects at positions lower than the mean elevation of the surface will be displaced toward the principal point. This effect, called relief displacement, is illustrated in the diagram below. Note that the effect increases with distance from the principal point; scale distortion is zero at the principal point.

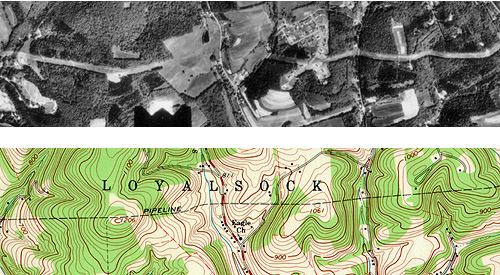

Compare the map and photograph below. Both show the same gas pipeline, which passes through hilly terrain. Note the deformation of the pipeline route in the photo relative to the shape of the route on the topographic map. The deformation in the photo is caused by relief displacement. The photo would not serve well on its own as a source for topographic mapping.

Confused? Think of it this way: where the terrain elevation is high, the ground is closer to the aerial camera, and the photo scale is a little larger than where the terrain elevation is lower. Although the altitude of the camera is constant, the effect of the undulating terrain is to zoom in and out. The effect of continuously-varying scale is to distort the geometry of the aerial photo. This effect is called relief displacement.

Distorted perspective views can be transformed into plan views through a process called rectification. Digital aerial photographs can be rectified using specialized photogrammetric software that shifts image locations (encoded digitally as pixels) toward or away from the principal point of each photo in proportion to two variables: the elevation of the point of the Earth's surface at the location that corresponds to each pixel, and each pixel's distance from the principal point of the photo.
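The two variables named above enter a standard photogrammetric relationship for relief displacement on a vertical photo: d = r h / H, where r is the radial distance on the photo from the principal point to the displaced image point, h is the height of the ground point above a reference datum, and H is the flying height above that same datum. This sketch applies it to single points with hypothetical numbers; real orthorectification software also models lens and camera geometry, which is omitted here.

```python
def relief_displacement(r, h, H):
    """Radial relief displacement d = r * h / H on a vertical photo.

    r: photo distance from the principal point to the displaced image point
    h: height of the ground point above the datum
    H: flying height above the same datum (h and H in the same units)
    """
    return r * h / H

def correct_position(x, y, h, H):
    """Shift an image point (x, y), measured from the principal point, by d.

    Points above the datum (h > 0) move toward the principal point; points
    below it (h < 0) move away, matching the displacement pattern in the text.
    """
    factor = 1 - h / H  # because d / r = h / H
    return x * factor, y * factor

# A hilltop 300 m above the datum, imaged 80 mm from the principal point,
# on a photo taken 6,000 m above the datum (hypothetical values)
print(relief_displacement(80.0, 300.0, 6000.0))  # 4.0 (mm on the photo)
print(correct_position(60.0, 80.0, 300.0, 6000.0))
```

The formula also shows why displacement is zero at the principal point (r = 0) and grows toward the edges of the photo.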

Another way to rectify perspective images is to view pairs of images stereoscopically.

7.8.3 Stereoscopy

If you have normal or corrected vision in both eyes, your view of the world is stereoscopic. Viewing your environment simultaneously from two slightly different perspectives enables you to estimate very accurately which objects in your visual field are nearer, and which are farther away. You know this ability as depth perception.

When you fix your gaze upon an object, the intersection of your two optical axes at the object forms what is called a parallactic angle. The keenness of human depth perception is what makes photogrammetric measurements possible.
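The parallactic angle shrinks as an object gets farther away, and the visual system reads differences in this angle as differences in depth. A small sketch, assuming an object straight ahead and a typical distance of about 65 mm between the eyes:

```python
import math

EYE_BASE_M = 0.065  # assumed typical distance between human pupils

def parallactic_angle_deg(distance_m, base_m=EYE_BASE_M):
    """Angle between the two lines of sight converging on an object straight ahead."""
    return math.degrees(2 * math.atan(base_m / (2 * distance_m)))

for d in (0.5, 1, 10, 100):
    print(f"{d:>5} m: {parallactic_angle_deg(d):.3f} degrees")
```

The angle falls off rapidly with distance, which is why unaided depth perception is keenest for nearby objects. A stereopair restores strong depth cues for distant terrain by using camera positions far apart along the flight path in place of your eyes.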

Your perception of a three-dimensional environment is produced from two separate two-dimensional images. The images produced by your eyes are analogous to two aerial images taken one after another along a flight path. Objects that appear in the area of overlap between two aerial images are seen from two different perspectives. A pair of overlapping vertical aerial images is called a stereopair. When a stereopair is viewed such that each eye sees only one image, it is possible to “see” a three-dimensional image of the area of overlap.

If you have access to a pair of red-cyan (anaglyph) glasses (some of you might have a cardboard pair obtained for viewing 3D movies), you will be able to see the image in this video in 3D: Micro-Images Stereo Zoom-In [9] (the video has a 3D control that allows you to manipulate some viewing options, but you will not see 3D without either a pair of anaglyph glasses or special graphics hardware on your computer). Without such glasses, you will see a somewhat messy looking merger of slightly offset images in these colors.
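A red-cyan anaglyph is built by placing one image of a stereopair in the red channel and the other in the green and blue (cyan) channels. The sketch below assumes two grayscale images of equal size stored as nested lists of 0-255 values, and glasses with the red filter over the left eye; the pixel values are hypothetical.

```python
def make_anaglyph(left, right):
    """Combine two same-sized grayscale images into one RGB anaglyph.

    The left image drives the red channel and the right image drives the
    green and blue channels, for glasses with the red filter on the left eye.
    """
    if len(left) != len(right) or any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("stereo images must have the same dimensions")
    return [[(lv, rv, rv) for lv, rv in zip(row_l, row_r)]
            for row_l, row_r in zip(left, right)]

left = [[10, 200], [50, 50]]
right = [[200, 10], [50, 60]]
print(make_anaglyph(left, right))
# [[(10, 200, 200), (200, 10, 10)], [(50, 50, 50), (50, 60, 60)]]
```

Where both images agree, the result is gray; where features are offset between the two views, red and cyan fringes appear. That is the "messy looking merger" you see without glasses, and the fringes are what each filtered eye separates back into its own view.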

7.8.4 Rectification by Stereoscopy

Aerial images need to be transformed from perspective views into plan views before they can be used to trace the features that appear on topographic maps, or to digitize vector features in digital data sets. One way to accomplish the transformation is through stereoscopic viewing.

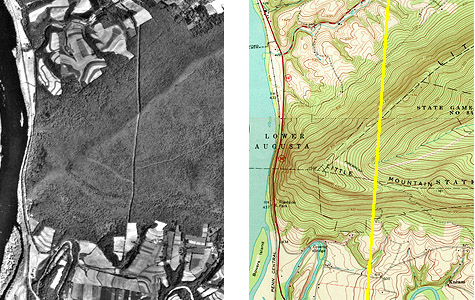

Below are portions of a vertical aerial photograph and a topographic map that show the same area, a synclinal ridge called "Little Mountain" on the Susquehanna River in central Pennsylvania. A linear clearing, cut for a power line, appears on both (highlighted in yellow on the map). The clearing appears crooked on the photograph due to relief displacement. Yet, we know that an aerial image like this one was used to compile the topographic map. The air photo had to be rectified to be used as a source for topographic mapping.

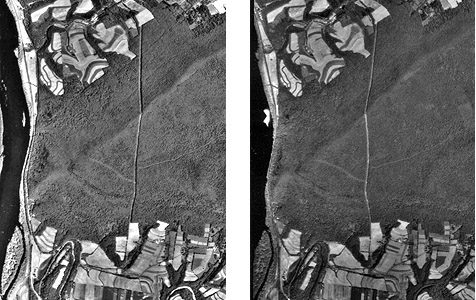

Below are portions of two aerial photographs showing Little Mountain. The two photos were taken from successive flight paths. The two perspectives can be used to create a stereopair.

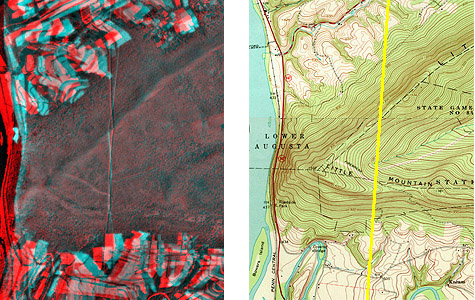

Next, the stereopair is superimposed in an anaglyph image. Using red/cyan glasses, you should be able to see a three-dimensional image of Little Mountain in which the power line appears straight, as it would if you were able to see it in person. Notice that the height of Little Mountain is exaggerated because the distance between the principal points of the two photos is not exactly proportional to the distance between your eyes.

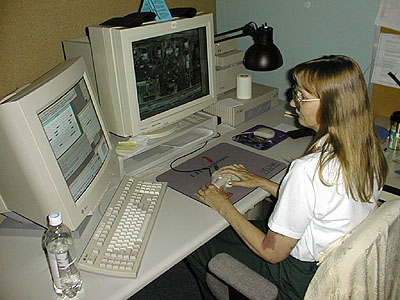

Photogrammetrists use instruments called stereoplotters to trace, or compile, the data shown on topographic maps from stereoscopic images like the ones you've seen here. The operator pictured below is viewing a stereoscopic model similar to the one you see when you view the anaglyph stereo images with red/cyan glasses. A stereopair is superimposed on the right-hand screen of the operator's workstation. The left-hand screen shows dialog boxes and command windows through which she controls the stereoplotter software. Instead of red/cyan glasses, the operator is wearing glasses with polarized lens filters that allow her to visualize a three-dimensional image of the terrain. She handles a 3-D mouse that allows her to place a cursor on the terrain image within inches of its actual horizontal and vertical position.

7.8.5 Orthorectification

An orthoimage (or orthophoto) is a single aerial image in which distortions caused by relief displacement have been removed. The scale of an orthoimage is uniform. Like a planimetrically correct map, orthoimages depict scenes as though every point were viewed simultaneously from directly above. In other words, they represent the surface as if every optical axis were orthogonal to the ground surface. Notice how the power line clearing has been straightened in the orthophoto on the right below.

Since the early 1990s, orthophotos have commonly been used as sources for editing and revising digital vector data.

7.8.6 Digital Orthophoto Quadrangle (DOQ)

Digital Orthophoto Quads (DOQs) are raster images of rectified aerial photographs. They are widely used as sources for editing and revising vector topographic data. For example, the vector roads data maintained by businesses like NAVTEQ and Tele Atlas, as well as local and state government agencies, can be plotted over DOQs then edited to reflect changes shown in the orthoimage.

Most DOQs are produced by electronically scanning, then rectifying, black-and-white vertical aerial photographs. DOQs may also be produced from natural-color or near-infrared false-color photos and from digital imagery. As on USGS topographic maps, scale is uniform across each DOQ as a result of the rectification process.

Most DOQs cover 3.75' of longitude by 3.75' of latitude (the ' symbol represents minutes). A set of four DOQs corresponds to each 7.5' quadrangle. (For this reason, DOQs are sometimes called DOQQs: Digital Orthophoto Quarter Quadrangles.) For its National Map, USGS has edge-matched DOQs into seamless data layers, by year of acquisition.

7.9 Summary

This chapter provides a broad introduction to the process of sensing the Earth remotely from satellites and aircraft. Remotely sensed data have become critical to our ability to understand the Earth system, to monitor weather, environmental events, and environmental change, to plan cities and manage resources, and to support many other applications. Important among these applications is the use of remotely sensed information as an input to mapping the surface of the Earth (its "relief"). Chapter 8: Representing Surfaces gives additional attention to remote sensing and photogrammetry as major tools for representing surfaces.

Practice Quiz

Registered Penn State students should now return to take the self-assessment quiz about Photogrammetry.

You may take practice quizzes as many times as you wish. They are not scored and do not affect your grade in any way.

7.10 Glossary

Remote sensing: Data collected from a distance without visiting or interacting with the phenomena of interest.

Space-borne remote sensing: The use of sensors attached to satellite systems continually orbiting the Earth.

Aerial imaging systems: Sensors attached to aircraft and flown on demand, meaning that their data capture is not continuous.

Sensors: Instruments for capturing electromagnetic energy emitted and reflected by objects on the Earth's surface.

Electromagnetic radiation: A form of energy emitted by all matter above absolute zero temperature (0 Kelvin, or -273.15° Celsius).

Electromagnetic spectrum: The full range of wavelengths of electromagnetic radiation, often illustrated by the relative amounts of electromagnetic energy emitted by the Sun and the Earth across that range.

Visible wavelengths: The portion of the electromagnetic spectrum, roughly 0.4 to 0.7 micrometers, that humans can see; it coincides with the peak of the Sun's emitted energy.

Atmospheric window: A range of wavelengths in which electromagnetic energy is not strongly absorbed by the atmosphere.

Transmissivity: The ability of electromagnetic energy at a given wavelength to pass through the atmosphere; it is highest within atmospheric windows.

Image Interpretation Elements: A set of nine visual cues that are used to interpret imagery. Those elements are: size, shape, color/tone, pattern, shadow, texture, association, height, and site.

Spectral Response Pattern (spectral signature): The magnitude of energy that an object reflects or emits across a range of wavelengths.

Normalized Difference Vegetation Index: Mathematical formula for calculating the “greenness” in a scene using Near-Infrared and Red bands from an image.

Spatial Resolution: Refers to the coarseness or fineness of a raster grid.

Spectral Resolution: The ability of a sensor to detect small differences in wavelength.

Radiometric Resolution: The measure of a sensor's ability to discriminate small differences in the magnitude of radiation within the ground area that corresponds to a single raster cell.

Temporal Resolution: Describes the amount of time it takes for a sensor to revisit a given location at the same viewing angle during its orbit.

Geometric Correction: Corrections applied to satellite imagery to remove terrain-related distortion and distortion caused by the Earth's movement, based on a limited set of information.

Radiometric Correction: Techniques for removing noise from imagery, including Earth-sun distance corrections, sun elevation corrections, and corrections for atmospheric haze.

Mosaic: Joining neighboring images together in a way that preserves their geographic relationships.

Land cover: The kinds of vegetation that blanket the Earth's surface, or the kinds of materials that form the surface where vegetation is absent.

Land use: The functional roles that the land plays in human economic activities (Campbell, 1983).

Maximum likelihood classification: One of the most commonly used classification algorithms; it computes the statistical probability that each pixel belongs to each class, then assigns each pixel to the class with the highest probability.

Sun synchronous polar orbit: A near-polar orbital path, typically several hundred kilometers above the surface, in which the satellite crosses the equator at the same local time every day.

Geosynchronous orbit: An orbital path, common to communications and some weather satellites, whose period matches the Earth's rotation; in the equatorial (geostationary) case, the satellite remains over the same point on the Earth's surface at all times.

Instantaneous Field of View: The angular field of view of a single detector, which determines the ground area covered by a single pixel.

Passive remote sensors: Sensors that measure radiation reflected or emitted by objects on the Earth's surface without supplying their own source of energy (IKONOS, Landsat, AVHRR).

Active remote sensors: Sensors that transmit pulses of long-wavelength (microwave) radiation, then measure the intensity and travel time of those pulses after they are reflected from the Earth's surface back to the sensor (JERS, ERS, Radarsat).

Imaging Radar: An active remote sensor system that records pulse intensity or the round-trip distance traveled by pulses reflected back to the sensor.

Georeference: To define an image's existence in physical space by establishing its location in terms of a map projection and coordinate system.

Orthoimage: A georeferenced image prepared from an aerial photograph or other remotely sensed data ... [that] has the same metric properties as a map and has a uniform scale.

Orthorectification: The process of creating an orthoimage from an ordinary aerial image.

Photogrammetry: Profession concerned with producing precise measurements of objects from photographs and photoimagery.

Optical Axis: A straight line between the center of a lens and the center of a visible scene.

Vertical Aerial Photograph: A picture of the Earth's surface taken from above with a camera oriented so that its optical axis is vertical.

Perspective View: A view in which all light rays reflected from the Earth's surface pass through a single point at the center of the camera lens.

Planimetric View: A view that looks as though every position on the ground is being viewed from directly above.

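Relief Displacement: The radial shift of an object's image position caused by its elevation: objects at positions higher than the mean elevation of the surface are displaced away from the principal point, and objects at positions lower than the mean elevation are displaced toward it.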

Rectification: Process by which distorted perspective views can be transformed into plan views.

Stereoscopic: Describes viewing your environment simultaneously from two slightly different perspectives, which enables you to estimate very accurately which objects in your visual field are nearer and which are farther away.

Parallactic Angle: The angle formed by the intersection of your two optical axes at an object when you fix your gaze upon it.

Stereopair: A pair of overlapping vertical aerial images.

Stereoplotter: An instrument used to trace, or compile, the data shown on topographic maps from stereoscopic images.

Anaglyph Image: An image that combines two differently filtered colored images, one for each eye, to create a three-dimensional viewing perspective.

Digital Orthophoto Quad (DOQ): Raster images of rectified aerial photographs.
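The Normalized Difference Vegetation Index defined above is usually computed as NDVI = (NIR − Red) / (NIR + Red), which yields values from −1 to +1. Healthy green vegetation reflects strongly in the near-infrared and absorbs red light, so it tends toward high positive values. The sketch below applies that formula pixel by pixel to two co-registered bands stored as lists of rows; the function names, and the choice to return `None` where both bands are zero, are assumptions for illustration.

```python
def ndvi(nir, red):
    """NDVI for one pixel: (NIR - Red) / (NIR + Red).
    Returns None when NIR + Red is zero, where the index is undefined."""
    total = nir + red
    if total == 0:
        return None  # e.g., a no-data or completely dark pixel
    return (nir - red) / total


def ndvi_image(nir_band, red_band):
    """Apply ndvi() cell by cell to two co-registered bands (lists of rows)."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Hypothetical 1 x 3 scene: vegetation, bare soil-like, and a no-data pixel.
scene = ndvi_image([[50, 30, 0]], [[10, 25, 0]])
```

In this example the first pixel has an NDVI of about 0.67, the second is close to zero, and the third is undefined.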
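The Instantaneous Field of View and Spatial Resolution entries are linked by simple geometry: at nadir, a detector with an angular IFOV of β radians, viewed from altitude H, covers a ground cell about 2 × H × tan(β / 2) across, which for such tiny angles is essentially H × β. The sketch below computes that footprint. The altitude and IFOV values in the example are illustrative, not the specification of any particular sensor, and the sketch ignores Earth curvature and off-nadir viewing. The function name is hypothetical.

```python
import math


def ground_cell_size(altitude_m, ifov_rad):
    """Nadir ground footprint (m) of a single detector with angular IFOV
    ifov_rad, viewed from altitude_m above a flat surface."""
    return 2 * altitude_m * math.tan(ifov_rad / 2)


# Illustrative values: 700 km altitude, 43-microradian IFOV.
cell = ground_cell_size(700_000, 43e-6)
```

With these values the footprint is about 30 m, and doubling either the altitude or the IFOV roughly doubles the cell size.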
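Maximum likelihood classification is commonly implemented by modeling each class's training pixels as a multivariate normal distribution (a mean vector and a covariance matrix) and assigning each pixel to the class under which it is most probable. The sketch below does this for two bands only, so that the 2 × 2 covariance matrix can be inverted in closed form. It compares log-likelihoods (equivalent to comparing probabilities, but numerically safer), assumes equal prior probabilities for all classes, and uses hypothetical function names and training values.

```python
import math


def train_class(samples):
    """Mean vector and 2x2 covariance (c11, c12, c22) from (band1, band2) training pixels."""
    n = len(samples)
    m1 = sum(s[0] for s in samples) / n
    m2 = sum(s[1] for s in samples) / n
    c11 = sum((s[0] - m1) ** 2 for s in samples) / (n - 1)
    c22 = sum((s[1] - m2) ** 2 for s in samples) / (n - 1)
    c12 = sum((s[0] - m1) * (s[1] - m2) for s in samples) / (n - 1)
    return (m1, m2), (c11, c12, c22)


def log_likelihood(pixel, mean, cov):
    """Log of the bivariate normal density of pixel given a class mean and covariance."""
    c11, c12, c22 = cov
    det = c11 * c22 - c12 * c12
    if det <= 0:
        raise ValueError("covariance matrix must be positive definite")
    d1, d2 = pixel[0] - mean[0], pixel[1] - mean[1]
    # Squared Mahalanobis distance using the closed-form 2x2 inverse.
    mahal = (c22 * d1 * d1 - 2 * c12 * d1 * d2 + c11 * d2 * d2) / det
    return -math.log(2 * math.pi) - 0.5 * math.log(det) - 0.5 * mahal


def classify(pixel, classes):
    """Assign pixel to the class name whose (mean, cov) gives the highest likelihood."""
    return max(classes, key=lambda name: log_likelihood(pixel, *classes[name]))


# Hypothetical two-band training pixels for two classes.
classes = {
    "water": train_class([(10, 5), (12, 6), (11, 4), (9, 5)]),
    "vegetation": train_class([(40, 80), (42, 85), (38, 78), (41, 82)]),
}
label = classify((11, 5), classes)
```

Here the pixel (11, 5) is labeled "water". Real classifiers typically use all available bands, may weight classes by prior probabilities, and often set a threshold so that pixels unlikely under every class are left unclassified.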