Lesson 2: Sensors, Platforms, and Georeferencing

Lesson 2 Introduction

Remote sensing can be done from space (using satellite platforms), from the air (using aircraft), and from the ground (using static and vehicle-based systems). The same type of sensor, such as a multispectral digital frame camera, may be deployed on all three types of platforms for different applications. Each type of platform has unique advantages and disadvantages in terms of spatial coverage, access, and flexibility. A student who completes this course should be able to identify the appropriate sensor/platform combination for a variety of common GIS applications.

Lesson 2 introduces the most common types of sensors used for mapping and image analysis. These include aerial cameras, film and digital, as well as sensors found on commercial satellites. New cameras and sensors are being introduced every year, as the remote sensing industry grows and technology advances. The principles of sensor design introduced in this lesson will apply to new as well as older instruments used for image data capture. This course will focus on optical sensors, those which passively record reflected and radiant energy in the visible and near-visible wavelength bands of the electromagnetic spectrum. Other courses in this curriculum delve into both active sensors (such as lidar and radar) and passive sensors that operate outside the optical portion of the spectrum (thermal and passive microwave).

Digital images are clearly very useful in many applications (a picture is worth a thousand words); however, their usefulness is greatly enhanced when they are accurately georeferenced. The ability to locate objects and make measurements makes almost every remotely sensed image far more useful. Georeferencing of images is accomplished using photogrammetric methods, such as aerotriangulation (A/T) or Structure from Motion (SfM). Geometric distortions due to the sensor optics, the atmosphere, Earth curvature, perspective, and terrain displacement must all be taken into account. Furthermore, a reference system must be established in order to assign real-world coordinates to pixels or features in the image. Georeferencing is relatively simple in concept, but quickly becomes more complex in practice due to the intricacies of both technology and coordinate systems.
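As a minimal sketch of what "assigning real-world coordinates to pixels" can look like once an image has already been rectified, the Python below applies a six-parameter affine transform (the same general form stored in an ESRI world file) to convert between pixel (column, row) positions and ground (X, Y) coordinates. The class name and the example parameters (0.5 m pixels, pixel (0, 0) at X = 500,000 m, Y = 4,500,000 m in a hypothetical projected coordinate system) are illustrative assumptions. An affine transform by itself does not remove tilt or relief displacement; it only places an already-corrected image on the ground.

```python
class AffineGeoref:
    """Hypothetical helper: six-parameter affine mapping between pixel (col, row) and ground (X, Y).

    X = a*col + b*row + c
    Y = d*col + e*row + f
    (c, f) is the ground position of pixel (0, 0). For a north-up image,
    b = d = 0 and e is negative because row numbers increase southward.
    """

    def __init__(self, a, b, c, d, e, f):
        self.a, self.b, self.c, self.d, self.e, self.f = a, b, c, d, e, f

    def pixel_to_ground(self, col, row):
        x = self.a * col + self.b * row + self.c
        y = self.d * col + self.e * row + self.f
        return x, y

    def ground_to_pixel(self, x, y):
        # Invert the 2x2 linear part [[a, b], [d, e]]; it must not be singular.
        det = self.a * self.e - self.b * self.d
        if det == 0:
            raise ValueError("transform is degenerate and cannot be inverted")
        dx, dy = x - self.c, y - self.f
        col = (self.e * dx - self.b * dy) / det
        row = (-self.d * dx + self.a * dy) / det
        return col, row


# Hypothetical north-up image: 0.5 m pixels, pixel (0, 0) at X = 500,000 m, Y = 4,500,000 m.
georef = AffineGeoref(0.5, 0.0, 500_000.0, 0.0, -0.5, 4_500_000.0)
print(georef.pixel_to_ground(100, 200))
print(georef.ground_to_pixel(500_050.0, 4_499_900.0))
```

The nonzero b and d terms are what allow a rotated (not north-up) image to be described with the same six numbers.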

Lesson Objectives

At the end of this lesson, you will be able to:

- describe various types of remote sensing instruments used to create base map imagery and elevation data, including film cameras, digital multispectral and hyperspectral sensors, lidar and radar;

- describe common platforms for deployment of sensors, including fixed-wing and rotary-wing aircraft, satellites, and ground-based vehicles;

- identify appropriate sensor/platform combinations for a variety of geospatial applications;

- describe technologies and methods used to georeference remotely sensed data;

- explain the difference between a datum, coordinate system, and map projection;

- identify primary coordinate systems used for imagery and elevation data in the conterminous United States;

- identify metadata fields that describe georeferencing in a variety of image and elevation data sets acquired from public domain sources;

- import imagery and elevation data into ArcGIS in the correct geographic location, identifying and compensating for missing or incorrect information in the provided metadata.

Questions?

If you have any questions now or at any point during this week, please feel free to post them to the Lesson 2 Questions and Comments Discussion Forum in Canvas.

Remote Sensing Platforms

Using the broadest definition of remote sensing, there are innumerable types of platforms upon which to deploy an instrument. Discussion in this course will be limited to the commercial platforms and sensors most commonly used in mapping and GIS applications. Satellites and aircraft collect the majority of base map data and imagery used in GIS; the sensors typically deployed on these platforms include film and digital cameras, light detection and ranging (lidar) systems, synthetic aperture radar (SAR) systems, and multispectral and hyperspectral scanners. Many of these instruments can also be mounted on land-based platforms, such as vans, trucks, tractors, and tanks. In the future, it is likely that a significant percentage of GIS and mapping data will originate from land-based sources; however, due to time constraints, we will only cover satellite and aircraft platforms in this course.

Since the launch of the first Landsat in 1972, satellite-based remote sensing and mapping has grown into an international commercial industry. Interestingly, even as more satellites are launched, the demand for data acquired from airborne platforms continues to grow. The historic and growth trends for both airborne and spaceborne remote sensing are well documented in the ASPRS Ten-Year Industry Forecast [1]. The well-versed geospatial intelligence professional should be able to discuss the advantages and disadvantages of each type of platform. He/she should also be able to recommend the appropriate data acquisition platform for a particular application and problem set. While the number of satellite platforms is quite low compared to the number of airborne platforms, the optical capabilities of satellite imaging sensors are approaching those of airborne digital cameras. However, there will always be important differences, arising from the characteristics of each platform, in how effectively satellites and aircraft can acquire remote sensing data.


One obvious advantage satellites have over aircraft is global accessibility; numerous governmental restrictions deny access to airspace over sensitive areas or over foreign countries. Satellite orbits are not subject to these restrictions, although there may well be legal agreements to limit distribution of imagery over particular areas.

The design of a sensor destined for a satellite platform begins many years before launch and cannot be easily changed to reflect advances in technology that may evolve during the interim period. While all systems are rigorously tested before launch, there is always the possibility that one or more will fail after the spacecraft reaches orbit. The sensor could be working perfectly, but a component of the spacecraft bus (attitude determination system, power subsystem, temperature control system, or communications system) could fail, rendering a very expensive sensor effectively useless. The financial risk involved in building and operating a satellite sensor and platform is considerable, presenting a significant obstacle to the commercialization of space-based remote sensing.

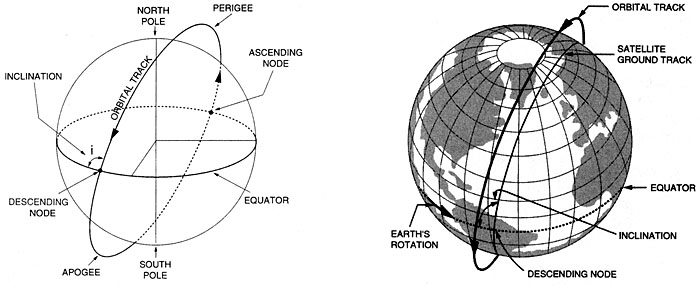

Satellites are placed at various heights and orbits to achieve desired coverage of the Earth's surface [3]. When a satellite's orbital period exactly matches the Earth's rotation period, and its orbit is circular and lies in the equatorial plane, the satellite stays above the same point on the Earth at all times; this is a geostationary [4] orbit. Geostationary orbits are useful for communications and weather-monitoring satellites. Satellite platforms for electro-optical (E/O) imaging systems, by contrast, are usually placed in a sun-synchronous [5], low Earth orbit (LEO) so that images of a given place are always acquired at the same local time (Figure 2.02). The revisit time for a particular location is a function of the individual platform and sensor, but it is generally on the order of several days to several weeks. While orbits are optimized for time of day, the satellite track may not always coincide with cloud-free conditions or with the specific vegetation conditions of interest to the end user of the imagery. Therefore, usable imagery is not guaranteed on every sensor pass over a given site.
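The height of a geostationary orbit follows directly from Kepler's third law for a circular orbit, r = (GM T² / 4π²)^(1/3), where T is the orbital period and r is measured from the Earth's center. The sketch below computes the geostationary altitude and the period of a circular LEO at 705 km, roughly the altitude used by recent Landsat missions. It is a simple two-body model under stated assumptions: it ignores the Earth's oblateness, which is the effect that actually makes sun-synchronous orbits precess, so it cannot be used to design a sun-synchronous orbit. The constants are standard published values; the function names are illustrative.

```python
import math

GM_EARTH = 3.986004418e14   # Earth's gravitational parameter, m^3/s^2
R_EQUATOR = 6_378_137.0     # WGS 84 equatorial radius, m
SIDEREAL_DAY = 86_164.0905  # one rotation of the Earth relative to the stars, s


def radius_for_period(period_s):
    """Circular-orbit radius from Earth's center for a given period (Kepler's third law)."""
    return (GM_EARTH * period_s ** 2 / (4.0 * math.pi ** 2)) ** (1.0 / 3.0)


def period_for_altitude(altitude_m):
    """Period of a circular orbit at the given altitude above the equatorial radius."""
    r = R_EQUATOR + altitude_m
    return 2.0 * math.pi * math.sqrt(r ** 3 / GM_EARTH)


geo_altitude_km = (radius_for_period(SIDEREAL_DAY) - R_EQUATOR) / 1000.0
leo_period_min = period_for_altitude(705_000.0) / 60.0
print(f"geostationary altitude: {geo_altitude_km:,.0f} km")
print(f"period at 705 km: {leo_period_min:.1f} min ({24 * 60 / leo_period_min:.1f} orbits per day)")
```

Under these assumptions the geostationary altitude comes out near 35,786 km, while the 705 km orbit circles the Earth in roughly 99 minutes, which is why a LEO imaging satellite passes over any given site only occasionally.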

Aircraft often have a definite advantage because of their mobilization flexibility. They can be deployed wherever and whenever weather conditions are favorable. Clouds often form and dissipate over a target within a period of several hours on a given day. Aircraft on site can respond at a moment's notice to take advantage of clear conditions, while satellites are locked into a schedule dictated by orbital parameters. Aircraft can also be deployed in small or large numbers, making it possible to collect imagery seamlessly over an entire county or state in a matter of days or weeks simply by having many planes in the air at the same time.

Aircraft platforms range from the very small, slow, and low-flying (Figure 2.03) to twin-engine turboprops and small jets capable of flying at altitudes up to 35,000 feet. Unmanned aerial vehicles (UAVs) are becoming increasingly important, particularly in military and emergency response applications, both international and domestic. Flying height, airspeed, and range are critical factors in choosing an appropriate remote sensing platform; you will learn about them in more detail later in the lesson. Modifying the fuselage and power system to accommodate a remote sensing instrument and data storage system is often far more expensive than the aircraft itself. While the planes themselves are fairly common, choosing the right aircraft to invest in requires a firm understanding of the applications for which that aircraft is likely to be used over its lifetime.

The scale and footprint of an aerial image are determined by the distance of the sensor from the ground; this distance is commonly referred to as the altitude above mean terrain (AMT). The operating ceiling for an aircraft, by contrast, is defined in terms of altitude above mean sea level (MSL). It is important to remember this distinction when planning a project in mountainous terrain. For example, the National Aerial Photography Program [6] (NAPP) and the National Agricultural Imagery Program [7] (NAIP) both call for imagery to be acquired from 20,000 feet AMT. In the western United States, this often requires flying much higher than 20,000 feet MSL. A pressurized platform such as the Cessna Conquest (Figure 2.04) would be suitable for meeting these requirements.
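For a vertical frame photograph, the scale is the ratio of focal length to flying height above the terrain, 1:S with S = H / f, whereas the aircraft's altimeter and ceiling refer to mean sea level, so the altitude to fly is MSL = AMT + mean terrain elevation. The sketch below applies both relations. The 6-inch (152.4 mm) focal length, typical of film mapping cameras, and the 6,000-foot mean terrain elevation are illustrative assumptions, not program specifications.

```python
def photo_scale_denominator(height_amt_ft, focal_length_in):
    """S in the photo scale 1:S for a vertical photo, S = H / f (H and f in the same units)."""
    focal_length_ft = focal_length_in / 12.0
    return height_amt_ft / focal_length_ft


def required_msl_altitude_ft(height_amt_ft, mean_terrain_elevation_ft):
    """Altitude above mean sea level needed to fly a given height above mean terrain."""
    return height_amt_ft + mean_terrain_elevation_ft


scale = photo_scale_denominator(20_000.0, 6.0)       # 20,000 ft AMT, 6-inch lens
msl = required_msl_altitude_ft(20_000.0, 6_000.0)    # hypothetical mean terrain of 6,000 ft
print(f"photo scale 1:{scale:,.0f}; fly at {msl:,.0f} ft MSL")
```

With these inputs the scale is 1:40,000, and the aircraft must hold 26,000 ft MSL, well above the ceiling of many unpressurized survey aircraft.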

With airborne systems, the flying height is determined on a project-by-project basis depending on the requirements for spatial resolution, ground sample distance (GSD), and accuracy. The altitude of a satellite platform is fixed by the orbital considerations described above; the scale and resolution of the imagery are determined by the sensor design. Medium-resolution satellites, such as Landsat, and high-resolution satellites, such as GeoEye, orbit at nearly the same altitude but collect imagery at very different GSDs.
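For a digital sensor looking straight down, GSD depends on the detector pixel pitch p, the focal length f, and the height above the ground H: GSD = p · H / f. An airborne project can solve this for H given a target GSD; a satellite's H is fixed by its orbit, so only p and f (the sensor design) can change its GSD. The sketch below shows the airborne case. The 7.2 µm pixel pitch and 100 mm focal length are hypothetical values chosen for illustration, not the specifications of any particular camera.

```python
def gsd_m(pixel_pitch_um, focal_length_mm, height_m):
    """Nadir ground sample distance, GSD = p * H / f, with everything converted to metres."""
    return (pixel_pitch_um * 1e-6) * height_m / (focal_length_mm * 1e-3)


def flying_height_for_gsd_m(target_gsd_m, pixel_pitch_um, focal_length_mm):
    """Solve GSD = p * H / f for H, the height above the terrain."""
    return target_gsd_m * (focal_length_mm * 1e-3) / (pixel_pitch_um * 1e-6)


# Hypothetical airborne camera: 7.2 um pixels behind a 100 mm lens.
for target in (0.075, 0.15, 0.30):
    h = flying_height_for_gsd_m(target, 7.2, 100.0)
    print(f"GSD {target:.3f} m -> fly about {h:,.0f} m above the terrain")
```

Halving the required GSD halves the flying height, which in turn shrinks the footprint of each image and increases the number of flight lines needed to cover a project.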

1 Sun-Synchronous Orbit. (2007, November 27). In Wikipedia, The free encyclopedia. Retrieved December 4, 2007, from http://en.wikipedia.org/wiki/Sun-synchronous_orbit [5]

Optical Sensors

In this lesson, you will be introduced to three types of optical sensors: airborne film mapping cameras, airborne digital mapping cameras, and satellite imaging systems. Each has particular characteristics, advantages, and disadvantages, but the principles of image acquisition and processing are largely the same, regardless of the sensor type. Lesson 3 will cover photogrammetric processing of data from these sensors to produce orthophotos [8] 1 and terrain models.

The size, or scale, of objects in a remotely sensed image varies with terrain elevation and with the tilt of the sensor with respect to the ground, as shown in Figure 2.05. Accurate measurements cannot be made from an image without rectification, the process of removing tilt and relief displacement. In order to use a rectified image as a map, it must also be georeferenced to a ground coordinate system.
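The effect of terrain and object height can be quantified with the standard relief displacement relation for a truly vertical photo, d = r · h / H, where d is the radial displacement on the photo, r is the radial distance from the nadir point to the displaced image point, h is the object's height above the datum, and H is the flying height above that datum. The minimal sketch below evaluates it and its rearranged form, h = d · H / r. The tower height, image distance, and flying height are hypothetical, and real photos with tilt require a fuller rectification model.

```python
def relief_displacement_mm(r_mm, object_height_m, flying_height_m):
    """Radial relief displacement on a truly vertical photo: d = r * h / H.

    r_mm: radial distance on the photo from the nadir point to the displaced
          image of the object's top, in mm
    object_height_m: height of the object above the datum, in m
    flying_height_m: flying height above that same datum, in m
    Returns d in mm (the same units as r_mm).
    """
    return r_mm * object_height_m / flying_height_m


def object_height_from_displacement_m(d_mm, r_mm, flying_height_m):
    """Rearranged form, h = d * H / r, for measuring heights from a single photo."""
    return d_mm * flying_height_m / r_mm


# Hypothetical example: a 50 m tower imaged 80 mm from the nadir point, H = 1,500 m.
d = relief_displacement_mm(80.0, 50.0, 1500.0)
print(f"relief displacement: {d:.2f} mm")
print(f"recovered height: {object_height_from_displacement_m(d, 80.0, 1500.0):.1f} m")
```

Because d grows with r, the same tower leans farther outward near the edge of the photo than near its center, and it does not lean at all at the nadir point.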

If remotely sensed images are acquired such that there is overlap between them, then objects can be seen from multiple perspectives, creating a stereoscopic view, or stereomodel. A familiar application of this principle is the View-Master [9] 2 toy many of us played with as children. The apparent shift of an object against a background due to a change in the observer's position is called parallax [10] 3. Following the same principle as depth perception in human binocular vision, heights of objects and distances between them can be measured precisely from the degree of parallax in image space if the overlapping photos can be properly oriented with respect to each other; in other words, if the relative orientation is known (Figure 2.06).

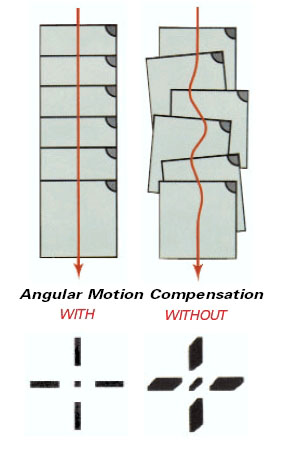
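For a pair of overlapping vertical photos, absolute parallax is p = B · f / (H − h), where B is the air base between exposures, f is the focal length, H is the flying height above the datum, and h is the point's elevation. Subtracting the parallax at an object's base from the parallax at its top leads to the classic parallax height equation, h = H · Δp / (p_b + Δp), with H measured above the object's base. The sketch below computes both parallaxes for a hypothetical building and then recovers its height from the difference; the air base, lens, flying height, and building height are all illustrative assumptions.

```python
def absolute_parallax_mm(air_base_m, focal_length_mm, flying_height_m, elevation_m):
    """Absolute parallax of a point on overlapping vertical photos: p = B * f / (H - h).

    H and h are measured from the same datum; p comes out in the units of f (mm).
    """
    return air_base_m * focal_length_mm / (flying_height_m - elevation_m)


def parallax_height_m(height_above_base_m, base_parallax_mm, parallax_difference_mm):
    """Parallax height equation: h = H * dp / (p_b + dp).

    height_above_base_m: flying height above the object's base
    base_parallax_mm: absolute parallax measured at the object's base
    parallax_difference_mm: parallax at the top minus parallax at the base
    """
    return height_above_base_m * parallax_difference_mm / (base_parallax_mm + parallax_difference_mm)


# Hypothetical pair: 600 m air base, 152.4 mm lens, flying 1,500 m above the base of a 20 m building.
p_base = absolute_parallax_mm(600.0, 152.4, 1500.0, 0.0)
p_top = absolute_parallax_mm(600.0, 152.4, 1500.0, 20.0)
dp = p_top - p_base
print(f"p_base = {p_base:.3f} mm, dp = {dp:.3f} mm")
print(f"height from parallax: {parallax_height_m(1500.0, p_base, dp):.2f} m")
```

A 20 m building produces a parallax difference of less than 1 mm at photo scale, which is why stereo measurement depends on precise relative orientation of the overlapping photos.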

Airborne film cameras have been in use for decades. Black-and-white (panchromatic), natural color, and false color infrared aerial film can be chosen based on the intended use of the imagery: panchromatic provides the sharpest detail for precision mapping, natural color is the most popular for interpretation and general viewing, and false color infrared is used for environmental applications. High-precision manufacturing of camera elements such as lens, body, and focal plane; rigorous camera calibration techniques; and continuous improvements in electronic controls have resulted in a mature technology capable of producing stable, geometrically well-defined, high-accuracy image products. Lens distortion can be measured precisely and modeled; image motion compensation mechanisms remove the blur caused by aircraft motion during exposure. Aerial film is developed using chemical processes and then scanned at resolutions as high as 3,000 dots per inch. In today's photogrammetric production environment, virtually all aerotriangulation, elevation extraction, and feature extraction are performed in an all-digital workflow.
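Scanning resolution determines the pixel size on the film (one inch is 25,400 µm, so pixel size = 25,400 / dpi), and multiplying that film pixel size by the photo scale denominator gives the ground size of each scanned pixel. The sketch below works this through for an illustrative 1:40,000 photo; the 1,200 dpi comparison value is a hypothetical lower-resolution scan, and the result ignores any loss of sharpness in the film or lens.

```python
MICRONS_PER_INCH = 25_400.0


def scan_pixel_size_um(dpi):
    """Size of one scanned pixel on the film, in micrometres."""
    return MICRONS_PER_INCH / dpi


def scanned_gsd_m(dpi, scale_denominator):
    """Ground size of one scanned pixel: film pixel size times the photo scale denominator."""
    return scan_pixel_size_um(dpi) * 1e-6 * scale_denominator


for dpi in (1_200, 3_000):
    print(f"{dpi} dpi -> {scan_pixel_size_um(dpi):.2f} um on film, "
          f"{scanned_gsd_m(dpi, 40_000):.2f} m on the ground at 1:40,000")
```

At 3,000 dpi each pixel covers about 8.5 µm of film, or roughly 0.34 m on the ground at 1:40,000.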

Figure 2.07 shows a Leica RC-30 aerial film camera. A hole is cut in the fuselage of the aircraft, and the camera is set in a gyro-stabilized mount as shown in Figure 2.08. This minimizes the effects of instantaneous aircraft motion and keeps the camera pointing perpendicular to the ground; the result is a sharper image and more controlled coverage from photo to photo (Figure 2.09).

In the United States, laboratory calibration of film aerial cameras is performed by the US Geological Survey (USGS) Optical Science Laboratory in Reston, VA. If you are ever in that area, you can arrange for a visit to this unique and interesting facility; the services provided there have ensured the quality and accuracy of photogrammetrically produced maps in the US for many decades. Most aerial survey projects require the aerial camera and lens to have been calibrated by the USGS within the three years preceding the start of the project.

You can find a great number of USGS camera calibration certificates on the web in the Keystone Aerial Surveys Calibration Report [11] database. Camera systems are calibrated as a unique combination of camera body, lens, and film magazine. If you search the Keystone database for lens number 13366, for example, you should see the following result.

Table 3.01: Example Keystone database search results.

| Camera No. | Lens No. | CFL (mm) | Report Date | Lens Type | Platen | Report |
|---|---|---|---|---|---|---|
| 5325 | 13366 | 153.287 | 5/12/2000 | 6 | 707 | 5325-051200 |
| 5325 | 13366 | 153.301 | 10/1/2003 | 6 | 5325-707 | 5325-100103 |
| 5325 | 13366 | 153.298 | 10/30/2006 | 6 | 707 | 5325-103006 |

Lens 13366 has been calibrated three times, always with camera number 5325. When using the Keystone database, you can click the report link for the most recent calibration to see the complete report. The camera is a Wild RC30 4, one of the most advanced and precise aerial film mapping cameras manufactured. Pay particular attention to the sections on

- calibrated focal length

- lens distortion

- lens resolving power (area-weighted average resolution, or AWAR)

- principal points and fiducial coordinates.
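Assuming the common requirement that a camera's calibration be no more than three years old when a project begins, the report dates for lens 13366 in Table 3.01 can be checked against a proposed project start date. The minimal sketch below does this; the function names and the project dates are hypothetical, and an actual project specification may define the window differently.

```python
from datetime import date

# Report dates for camera 5325 / lens 13366, copied from Table 3.01 (M/D/YYYY in the table).
CALIBRATIONS = [date(2000, 5, 12), date(2003, 10, 1), date(2006, 10, 30)]


def years_before(d, years):
    """The same calendar day `years` earlier (February 29 falls back to February 28)."""
    try:
        return d.replace(year=d.year - years)
    except ValueError:
        return d.replace(year=d.year - years, day=28)


def calibration_is_current(calibration_dates, project_start, max_age_years=3):
    """True if the latest calibration on or before project_start is within max_age_years."""
    prior = [d for d in calibration_dates if d <= project_start]
    if not prior:
        return False
    return max(prior) >= years_before(project_start, max_age_years)


for start in (date(2009, 6, 1), date(2010, 1, 15)):
    print(start, calibration_is_current(CALIBRATIONS, start))
```

With these hypothetical start dates, the 10/30/2006 calibration still qualifies for a June 2009 project but has expired for one starting in January 2010.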

Now look at the calibration report for lens number 13081, camera number 2961, dated 1/5/2005. This is a Wild RC10 camera manufactured in the 1980s, an early predecessor of the RC30. Compare the reports, particularly the values shown for AWAR. Mapping projects executed today often specify a minimum allowable AWAR for the camera lens.
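As the name suggests, AWAR weights lens resolving power by the image area over which it applies, so the outer parts of the format, which cover most of the area, count heavily. The sketch below is a simplified illustration under stated assumptions: it treats the format as concentric circular zones with one resolution value each, whereas the USGS reports derive AWAR from resolving power measured at specific field angles following their own documented procedure. The zone radii, resolutions, and minimum specification are hypothetical.

```python
import math


def awar(zone_outer_radii_mm, resolutions_lp_per_mm):
    """Simplified area-weighted average resolution (lp/mm) over concentric annular zones."""
    if not zone_outer_radii_mm or len(zone_outer_radii_mm) != len(resolutions_lp_per_mm):
        raise ValueError("need one resolution value per zone")
    inner = 0.0
    weighted_sum = 0.0
    total_area = 0.0
    for outer, resolution in zip(zone_outer_radii_mm, resolutions_lp_per_mm):
        if outer <= inner:
            raise ValueError("zone radii must increase outward")
        area = math.pi * (outer ** 2 - inner ** 2)  # area of this ring
        weighted_sum += resolution * area
        total_area += area
        inner = outer
    return weighted_sum / total_area


# Hypothetical lens: resolution falls off toward the edge of the format.
value = awar([20.0, 60.0, 100.0, 150.0], [80.0, 70.0, 60.0, 50.0])
MIN_AWAR = 50.0  # hypothetical project specification
print(f"AWAR = {value:.1f} lp/mm; meets spec: {value >= MIN_AWAR}")
```

Although the center of this hypothetical lens resolves 80 lp/mm, its AWAR is only about 56 lp/mm, because the outermost zone covers more than half of the area.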

Airborne digital mapping cameras have evolved over the past few years from prototype designs to mass-produced, operationally stable systems. In many respects they outperform film cameras, dramatically reducing production time while offering increased spectral and radiometric resolution. Detail in shadows can be seen and mapped more accurately. Panchromatic, red, green, blue, and infrared bands are captured simultaneously, so multiple image products can be made from a single acquisition (Figure 2.10).

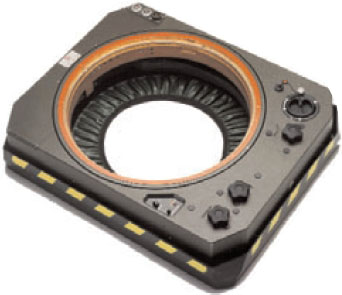

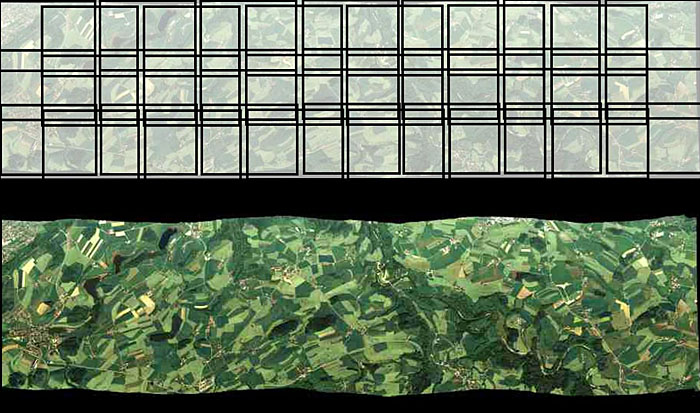
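One way to see why higher radiometric resolution helps in shadows is to count how many distinct digital numbers (DNs) fall in the darkest part of the range: a sensor with b bits records 2^b levels. The sketch below compares an 8-bit baseline with 12 bits and with the "nearly 14 bits" mentioned in the UltraCam video in this lesson. It assumes a linear sensor response and a hypothetical "darkest 5%" shadow range; real sensors add noise that reduces the usable levels.

```python
def gray_levels(bits):
    """Number of distinct digital numbers (DNs) a sensor with this bit depth can record."""
    return 2 ** bits


def levels_in_fraction(bits, fraction):
    """DNs available in the darkest `fraction` of the full range, assuming a linear response."""
    return int(gray_levels(bits) * fraction)


# Illustrative comparison of bit depths; the 5% shadow range is a hypothetical choice.
for bits in (8, 12, 14):
    print(f"{bits}-bit: {gray_levels(bits):>6} levels, "
          f"{levels_in_fraction(bits, 0.05):>4} in the darkest 5%")
```

An 8-bit image has only a dozen DNs to describe everything in that shadow range, while 14-bit data has several hundred, leaving room to stretch the shadows without visible banding.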

Digital camera designs are of two types: area array (frame) sensors and linear push-broom (line-scanning) sensors. An area array camera, such as the Intergraph DMC (Figure 2.12), uses one or more two-dimensional charge-coupled device (CCD) arrays to create an image equivalent to a single frame image from an aerial film camera. The entire scene is imaged at the same moment in time, providing the same rigid geometric relationship among all points in the image. Coverage of an area of interest is provided by a block of overlapping photos, as shown in Figure 2.11. A push-broom sensor, such as the Leica ADS40 (Figure 2.13 and Figure 2.14), comprises multiple linear arrays facing forward, down, and aft, which simultaneously capture along-track stereo coverage, not in frame images but in long continuous strips, or pixel carpets, made up of individual lines 1 pixel deep. Multiple linear CCD arrays capture panchromatic, near-infrared, red, green, and blue bands (Figure 2.15). Pointing the CCD arrays at aft, nadir, and forward viewing angles allows the sensor to capture multiple perspectives of the same point on the ground in a single pass, creating the stereo views required to extract elevation information (Figure 2.16).

Credit: R. Reulke, S. Becker, N. Haala, U. Tempelmann. “Determination and improvement of spatial resolution of the CCD-line-scanner system ADS40.” ISPRS Journal of Photogrammetry and Remote Sensing, Volume 60, Issue 2, 81-90. https://doi.org/10.1016/j.isprsjprs.2005.10.007 [12]
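Both designs obtain stereo from a separation between viewpoints, and a common way to compare them is the base-to-height ratio B/H. For a frame camera, the air base is the along-track ground footprint times (1 − forward overlap); for a push-broom sensor over flat terrain, a line tilted θ from nadir looks H · tan θ ahead of or behind the aircraft, so the forward and aft views are separated by H · (tan θ_forward + tan θ_aft). The sketch below computes both. The 60% overlap is a conventional stereo value, while the sensor size, focal length, flying height, and 25°/15° viewing angles are hypothetical and do not describe the DMC or ADS40.

```python
import math


def frame_footprint_m(sensor_length_mm, focal_length_mm, flying_height_m):
    """Along-track ground footprint of one vertical frame: sensor length * H / f."""
    return sensor_length_mm / focal_length_mm * flying_height_m


def frame_base_to_height(footprint_along_track_m, forward_overlap, flying_height_m):
    """B/H for successive frames, with air base B = footprint * (1 - forward overlap)."""
    if not 0.0 <= forward_overlap < 1.0:
        raise ValueError("forward_overlap must be in [0, 1)")
    air_base_m = footprint_along_track_m * (1.0 - forward_overlap)
    return air_base_m / flying_height_m


def pushbroom_base_to_height(forward_deg, aft_deg):
    """B/H between the forward- and aft-looking lines of a push-broom sensor (flat terrain)."""
    return math.tan(math.radians(forward_deg)) + math.tan(math.radians(aft_deg))


# Hypothetical frame camera: 60 mm along-track sensor, 120 mm lens, H = 2,000 m, 60% overlap.
footprint = frame_footprint_m(60.0, 120.0, 2000.0)
print(f"frame B/H: {frame_base_to_height(footprint, 0.6, 2000.0):.2f}")
# Hypothetical push-broom sensor with lines 25 deg forward and 15 deg aft of nadir.
print(f"push-broom B/H: {pushbroom_base_to_height(25.0, 15.0):.2f}")
```

A larger B/H generally improves the precision of elevations extracted from the stereo views, at the cost of greater differences in perspective between them.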

High-resolution satellite imagery is now available from a number of commercial sources, both foreign and domestic. The federal government regulates the minimum allowable GSD for commercial distribution, based largely on national security concerns; 0.6-meter GSD is currently available, with higher-resolution sensors being planned for the near future (McGlone, 2007). The image sensors are based on a linear push-broom design. Each sensor model is unique and contains proprietary design information; therefore, the sensor models are not distributed to commercial purchasers or users of the data. Through commercial contracts, these satellites provide imagery to the National Geospatial-Intelligence Agency (NGA) in support of geospatial intelligence activities around the globe.

Calibration of digital aerial cameras and satellite sensors is a much more complex process than calibration of a film camera. The digital sensor should be characterized for its radiometric response as well as for internal geometry. ASPRS and a number of federal government agencies have been working with sensor manufacturers to establish guidelines and procedures for sensor calibration and data product characterization. It is an ongoing effort, and standards are just beginning to emerge. The USGS Remote Sensing Technologies Project maintains a website with information on digital camera calibration [13] efforts, as well as collaborative efforts, such as the Interagency Digital Imagery Working Group [14] (IADIWG) and the Joint Agency Commercial Imagery Evaluation [15] (JACIE) group.
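Radiometric characterization often starts from a simplified linear model relating a detector's digital numbers to at-sensor radiance, L = gain · DN + offset. The sketch below fits gain and offset by ordinary least squares from paired measurements, such as readings taken while viewing a calibrated light source. This linear model and the sample values are assumptions for illustration; the actual procedures under development by ASPRS and the agencies named above are more involved (per-detector response, nonlinearity, stability over time).

```python
def fit_gain_offset(dns, radiances):
    """Ordinary least-squares fit of the linear model L = gain * DN + offset."""
    n = len(dns)
    if n < 2 or n != len(radiances):
        raise ValueError("need at least two paired (DN, radiance) measurements")
    mean_dn = sum(dns) / n
    mean_l = sum(radiances) / n
    sxx = sum((d - mean_dn) ** 2 for d in dns)
    if sxx == 0:
        raise ValueError("DN values must not all be identical")
    sxy = sum((d - mean_dn) * (l - mean_l) for d, l in zip(dns, radiances))
    gain = sxy / sxx
    offset = mean_l - gain * mean_dn
    return gain, offset


# Hypothetical measurements at four source brightness levels (radiance in arbitrary units).
dns = [100.0, 500.0, 1000.0, 2000.0]
radiances = [3.5, 11.6, 21.4, 41.5]
gain, offset = fit_gain_offset(dns, radiances)
print(f"gain = {gain:.5f}, offset = {offset:.3f}")
```

Once gain and offset are known for each band, every DN in an image can be converted to radiance, which makes imagery from different flights or sensors comparable.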

As digital aerial photography has matured, it has become integrated into many consumer-level, web-based applications, such as Google Earth and numerous navigation and routing packages. Microsoft has recently deployed a large number of aerial survey planes equipped with the Vexcel UltraCam sensor in an ambitious Global Ortho [16] program. Their goal is to provide very high-resolution color imagery over the entire land surface of the Earth, made publicly available through the Bing Maps platform. The following video (4:47) describes the data acquisition experience and process.

Video: Keystone Aerial Surveys: I Fly UltraCam (4:47)

[MUSIC PLAYING] We have 16 airplanes that we fly all over the United States. We have the largest fleet of aircraft totally dedicated to aircraft imagery collection in the United States, if not the world. Keystone purchased their first UltraCam in 2005. Keystone was in a situation where they had been collecting analog inventory for years, ever since the '60s. We identified that we had to go digital. We had to do it to survive. So our choice then was to pick out the right camera. There was a lot of competition from other companies, and we felt that to be state-of-the-art and to offer our clients what they needed, we chose UltraCam because of price point and what we considered quality and service that they provided.

If we had not upgraded to digital, specifically the UltraCam, we would have been considered a second class operator. We went from having one UltraCam-D to three UltraCam-X systems in only a matter of years. I think the real telling thing is once we have flown a project in digitally with our UltraCam, they don't want film anymore. Keystone's coming to a point where we're about to take our one millionth UltraCam frame. [MUSIC PLAYING] So here we are. The mount is green. We're about to take pictures here in about two seconds. And there's our first shot. I'm getting a quick view. An exact representation of the imagery that we are recording right now. So we'll go up on a four hour flight and at the end of it, I'm pretty certain that that imagery was good. Whereas, with film, you really don't know what's on it. You don't want to do a flight twice. So we're basically able to operate cheaper.

This spring was almost the tipping point. We were actually setting film cameras on the ground in our big season with people asking for digital imagery instead of the film imagery because of the quality. We had another client when we first delivered the digital imagery for them, they were able to read the serial number on a manhole cover. It's not just an image, there's so much more. Time, altitude, who flew it, camera serial numbers, the airplane it was installed in, the exact time of the photo, the sun angle of the photo. Basically, if you can type it, we can record it. The advantage of the UltraCam with its collecting all the bands allows us to do things with it that were not possible with an analog camera just using one film. We're collecting panchromatic, RGB color, and infrared at the same time. Infrared allows you to see wetland studies, impervious surface studies. Also [? distressed ?] foliage studies. You're dealing with nearly 14 bits. That 14 bits gives you the ability to see inside of shadows or fly under lower light conditions. With the X, we can now change cassettes in flight and really go all day long without having to land to download that.

They have three people on staff that, a phone call away, they will either send us the parts or they'll be here to fix our camera. They have a service facility in Boulder where they can actually calibrate the camera. We chose to upgrade because Microsoft seems to have a very strong commitment to keeping their product up-to-date. Version 2.1 has some of our specific requests for reporting tools and better logging tools. The radiometry upgrades have also made it virtually a no-brainer to upgrade. The advantage that Vexcel has given us is the constant upgrades of these cameras. They're coming up with new inventions all the time. They had the D. Then they came up with the X. And now the XP. So when you have an UltraCam, you're basically buying into a product line that you will be able to use into the future. It's allowed Keystone to stay at the forefront of the profession in terms of offering the best equipment. UltraCam gives you beautiful, accurate imagery. It's reliable and has support that's second to none. I'm Ken Potter from Keystone Aerial Surveys, and I fly UltraCam.

1 Orthophoto. (2007, November 21). In Wikipedia, The free encyclopedia. Retrieved December 4, 2007, from http://en.wikipedia.org/wiki/Orthophoto [8]

2 View-Master. (2007, November 9). In Wikipedia, The free encyclopedia. Retrieved December 5, 2007, from http://en.wikipedia.org/wiki/View-master [9]

3 Parallax. (2007, November 29). In Wikipedia, The free encyclopedia. Retrieved December 5, 2007, from http://en.wikipedia.org/wiki/Parallax [10]

4 Wild was purchased by Leica Geosystems, which has since been incorporated into Hexagon. The camera referenced in this report is of the same type shown in Figure 2.07.