Lessons

This is the course outline.

Lesson 1: Introduction to the Unmanned Aerial System

Lesson 1 Introduction

Welcome to Lesson 1! In this lesson, you will become familiar with the history behind the use of the UAS. You will also be familiar with the current status of the UAS development. In addition, you will be exposed to the different classes of UAV/UAS according to their size, weight, and missions.

At the end of this lesson, you will have a working knowledge of how unmanned aerial missions started, the current status of UAS development, and the classes of UAV/UAS.

Lesson Objectives

At the successful completion of this lesson, you should be able to:

- describe the historical evolution of the UAS;

- recognize the defense contribution to the UAS development;

- recognize the civilian contribution to the UAS development;

- understand the current state of the UAS development;

- describe the objectives behind the use of the UAS;

- classify different UAS according to their make and characteristics;

- describe the different classes of the UAS.

Lesson Readings

- Chapter 1 of textbook 1: Barnhart et al., Introduction to the Unmanned Aircraft Systems, 2nd edition

- Chapters 1 & 2 of textbook 2: Fahlstrom et al., Introduction to UAV Systems (Aerospace Series), 4th edition

- Pacchioli, D., "Programming autonomous vehicles to fly like birds [1]"

- Chiles, J., "Drones for Hire [2]"

- Maksel, R., "Robot Reporters [3]"

- Commercial UAV News report: "6 Predictions for 2016: UAV Experts Discuss Important Developments for Commercial Drone Applications [4]"

- Parts I, II, & III of Collier Crouch, C., thesis "Integration of mini-UAVs at the tactical operations level : implications of operations, implementation, and information sharing" [5] (pdf)

- Watts, et al., Remote Sensing [6]

Lesson Activities

- Participate in the Lesson 1 Discussion Forum.

- Take the Lesson 1 Quiz (by the end of Lesson 2).

- Review the final project details [7] document.

UAS History

In this section, you will learn about the history of UAS development and its introduction to civilian and military applications.

The history of flying objects, or the unmanned aerial vehicle in its rudimentary forms, extends way back to ancient civilizations. The Chinese, around 200 AD, used paper balloons (equipped with oil lamps to heat the air) to fly over their enemies after dark, which caused fear among the enemy soldiers who believed that there was divine power involved in the flight.

In the United States, during the Civil War, both Union and Confederate forces launched balloons laden with explosives and attempted to land them in supply or ammunition depots and explode them.

As a matter of fact, the idea of unmanned aerial objects came long before manned flight, for the obvious reason that it removed the risk of loss of life from these experimental objects. In modern times, the idea of unmanned flying objects has come to mean aerial vehicles, or aircraft, without pilots on board. Thanks to advancements in technology, the maneuvering and control of piloted flight can be sufficiently mimicked.

Aerial torpedo, radio-controlled vehicle, remotely piloted vehicle (RPV), remote controlled vehicle, autonomous controlled vehicle, pilotless vehicle, unmanned aerial vehicle (UAV), unmanned aircraft system (UAS), and drone are all names that may be used to describe a flying object or machine without a pilot on board.

The main challenge that faced early aerospace pioneers of piloted and pilotless airplanes alike was the issue of controlling flight once the flying object was up in the air. The Wright Brothers (1903), and at about the same time, Dr. Samuel Pierpont Langley, taught the aviation world a lot about the secrets of controlled flight. Afterwards, the war machine of WWI put intense pressure on inventors and scientists to come up with innovations in all aspects of flight design including power plants, fuselage structures, lifting wing configurations and control surface arrangements. By the time WWI ended, modern day aviation had been born.

In late 1916, the US Navy funded the Sperry Gyroscope Company (later named Sperry Corporation) to develop an unmanned torpedo that could fly a guided distance of 1000 yards and detonate its warhead close enough to an enemy warship. Almost two years later, on March 6, 1918, after a series of failures, Sperry’s efforts succeeded in launching an unmanned torpedo that flew a 1000-yard course in stable guided flight. It dived onto its target at the desired time and place, and was later recovered and landed. With this successful flight, the world’s first unmanned aircraft system, the Curtiss N-9, was born.

In the late 1930s, the U.S. Navy returned to the development of drones. This was highlighted by the Navy Research Lab’s development of the Curtiss N2C-2 drone (see Figure 1). The 2500-lb biplane was instrumental in testing the accuracy and efficiency of the Navy’s anti-aircraft defense system.

World War II accelerated the development of aviation science in general and of unmanned aircraft in particular. Both the Germans and the Allies successfully utilized unmanned combat aircraft. The most extensive program came about during the Vietnam War, as advances in technology made UAVs more effective. Ryan Firebee drones by Teledyne-Ryan Aeronautical of San Diego, California were flown extensively over North Vietnam and conducted various tasks, such as reconnaissance and signals intelligence missions, leaflet drops, and surface-to-air missile radar detection.

More recently, US forces used drones in the wars in Bosnia, Iraq, and Afghanistan, and drones are in continuous use in the war on terrorism around the world.

To Read

- Chapter 1 of textbook 1, Introduction to the Unmanned Aircraft Systems

- Sections 1.1 and 1.2 of chapter 1 of textbook 2, Introduction to UAV Systems (Aerospace Series)

UAS System Overview

The way a pilotless aircraft is controlled determines its categorization. In general, there are three main names for pilotless aircraft:

- Unmanned Aerial Vehicle (UAV): a pilotless aircraft that is either manually controlled with a joystick or a mouse or autonomously flown by following a preprogrammed mission. The acronym UAV is the most widely used term in describing a civilian pilotless aircraft.

- Remotely Piloted Vehicle (RPV): a pilotless aircraft that is steered or controlled from a remotely located position. Manual control means that the operator adjusts the aircraft's heading, altitude, and speed directly to control its position. In many cases, the terms UAV and RPV are used interchangeably to describe any pilotless aircraft.

- Drone: one of the oldest terms used to describe a pilotless aircraft. A drone is defined as a pilotless aircraft controlled by radio signal. Even with the emergence of the UAV and RPV names, the name “drone” is still used, even for civilian pilotless aircraft. For the purpose of this course, the word drone is used to describe a pilotless aircraft equipped with a lethal payload, usually one used by defense organizations.

Whether it is named a UAV, an RPV, or a drone, at a minimum, the pilotless aircraft should include the following elements:

- air vehicle

- mission planning element

- command and control element

- communication link

- launch and recovery element (for some of them)

- payload

More details will be provided in Lesson 2.
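As an informal illustration of the element list above, the following Python sketch models a UAS as a record with one field per element. The class name `UasConfiguration`, its field names, and the example values are hypothetical and chosen only for this illustration; they do not come from the textbooks or from any real software package. The launch and recovery element is optional because only some systems need one.

```python
from dataclasses import dataclass
from typing import List, Optional


# Hypothetical model of the minimum UAS elements listed above.
@dataclass
class UasConfiguration:
    air_vehicle: str
    mission_planning: str
    command_and_control: str
    communication_link: str
    payload: str
    # Optional: only some systems need a dedicated launch and recovery element.
    launch_and_recovery: Optional[str] = None

    def missing_elements(self) -> List[str]:
        """Return the names of required elements that were left empty."""
        required = {
            "air_vehicle": self.air_vehicle,
            "mission_planning": self.mission_planning,
            "command_and_control": self.command_and_control,
            "communication_link": self.communication_link,
            "payload": self.payload,
        }
        return [name for name, value in required.items() if not value.strip()]


# Example values are invented for illustration only.
hand_launched = UasConfiguration(
    air_vehicle="small fixed-wing airframe",
    mission_planning="waypoint planner on a laptop",
    command_and_control="ground control station",
    communication_link="line-of-sight radio",
    payload="daylight camera",
)

# An empty list means every required element has been filled in.
print(hand_launched.missing_elements())
```

Treating the launch and recovery element as optional mirrors the "for some of them" qualifier in the list; the other five elements are treated as required.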

To Read

- Pacchioli, D. Penn State, "Programming autonomous vehicles to fly like birds. [8]" Last modified January 15, 2014. Accessed February 4, 2014.

UAS Status

Although studying the origins of UAS development is crucial to understanding the evolution of UAV/UAS, its status in modern times is what we’re concerned with in this course.

UAV/UAS development has been sporadic over time, and individual efforts have lacked momentum and continuity. The pattern has been for a UAV/UAS to serve a limited purpose and then be discontinued once that purpose is satisfied. The use of UAS during the Vietnam War is a good example of such a sporadic rise in the use of drones: very little was achieved in UAS development after the war ended. This is not the case with the current period of unmanned aircraft development.

In the last two decades, UAV technology has matured considerably. This is mainly due to advancements in GPS, inertial measurement units (IMUs), and electronics. Since the wars in Bosnia, Iraq and Afghanistan, the pilotless aircraft industry has witnessed increased and sizable investment that has continued to the present time.

The use of pilotless aircraft in Operation Desert Shield in 1990, and later in Operation Desert Storm in 1991, can be considered the first wide-scale deployment of UAS/UAV. During Desert Storm, some 500 UAS sorties were conducted to support intelligence gathering and to guide heavy artillery from battleships in the Persian Gulf. The success in deploying UAS in Desert Storm convinced militaries around the world of the usefulness of UAS in spotting enemy locations and directing artillery units.

Strong opposition to the use of UAS for defense purposes came from manned aircraft pilots and their leadership. They found a weakness in the technology that supported their claims: they built their case on the vulnerability of the data link, especially for UAS that rely on line-of-sight operation. However, advances in the space communications field, especially GPS, weakened their claim, as the space-based data link made the UAS no more vulnerable than piloted aircraft.

The United States has committed valuable resources and investments to the development of the modern UAS. NASA was immensely involved in such developments, as is clear in the following video clip.

Four Decades of UAVs - NASA, UAV Simulation - AIAA Policy Symposium, March 2013 (4:48)

This video has clips of the following types of UAVs.

Lifting Body Remotely Piloted Vehicle (Hyper III, 1969)

Remotely Piloted Research Vehicle (Piper PA-30, 1970)

F-15 Remotely Piloted/Spin Research Vehicle (SRV, 1975)

Drones for Aerodynamic and Structural Testing (Firebee/DAST, 1977)

Highly Maneuverable Aircraft Technology (HiMAT, 1979)

Controlled Impact Demonstration (Boeing 720 CID, 1984)

Expendable Air-Launched Orbital Booster (Pegasus, 1990)

Spacecraft Autoland Demonstrator (Space Wedge, 1991)

Environmental Research Aircraft & Sensor Technology (Perseus, 1993)

Environmental Research Aircraft & Sensor Technology (Theseus, 1996)

Environmental Research Aircraft & Sensor Technology (Altus, 1996)

Environmental Research Aircraft & Sensor Technology (Pathfinder, 1997)

Environmental Research Aircraft & Sensor Technology (Centurion, 1998)

Environmental Research Aircraft & Sensor Technology (Helios, 2001)

High Altitude Endurance Unmanned Aerial Vehicle (Tier III, 1996)

Tailless Fighter Agility Research Aircraft (X-36, 1997)

Lifting Body Crew Return Vehicle (X-38, 1998)

Space Maneuvering Vehicle (X-40 SMV, 2000)

Inflatable Wing Technology Demonstrator (I-2000, 2001)

High Altitude Long-Endurance Research Aircraft (Altair, 2002)

Scramjet Engine Experiment (X-43 Hyper-X, 2001)

Unmanned Combat Aerial Vehicle (X-45, 2002)

MQ-9 Predator B UAS (Ikhana, 2007)

Blended Wing Body [BWB] (X-48B, 2007)

RQ-4 Environmental Science Aircraft (Global Hawk, 2007)

UCAV Demonstrator (Phantom Ray, 2011)

Hydrogen Powered HALE (Phantom Eye, 2007)

Hybrid Wing Body [HWB] (X-48C, 2012)

The use of UAS in US Army combat operations grew from 51 operational UAS in 2001 to over 4000 in 2010. Studying the “US Army Unmanned Aircraft Systems Roadmap 2010-2035” can tell a lot about the importance of UAS in the US Army’s current and future activities. The Roadmap states that “Army UAS are the ‘Eye of the Army’ and support information dominance by providing the capability to quickly collect, process, and disseminate information to reduce the sensor-to-shooter timeline. In addition, UAS support tactical echelons in Army and joint operations and provide the warfighter a tactical advantage through near real-time situation awareness, multi-role capabilities on demand (including communications, reconnaissance, armed response, and sustainment applications), and the ability to dynamically retask.”

UAS use for daily civilian activities is no less important than it is for the armed forces. UAS are used around the world for different tasks, and most recently, for package delivery. The first video below (1:12) provides a fairly good idea about the different types and uses for the modern day UAS. UAS commercial use outside the United States is growing rapidly, as is illustrated in the second video clip. Growth in the commercial UAS market within the United States is slower than one would like to see due to tight regulations by the FAA. UAS is not allowed to be used for any commercial purposes in the United States. In a recent report, 6 Predictions for 2016: UAV Experts Discuss Important Developments for Commercial Drone Applications [4], by Jeremiah Karpowicz of the Commercial UAV News, the author discusses the latest developments in the UAS market and technologies as well as predictions on the status of UAS use for commercial applications.

"Unmanned Aerial System (UAS): Equipment and Applications" (1:12)

Video of Unmanned Aerial Systems taking off, in flight, and landing. The UAS are flying over mountains, neighborhoods, airports, roads, forest fires, lakes, oceans, and military operations. The following words appear on the screen indicating how UAS are used: Disaster Response, Weather Monitoring, Maritime Security, Border Security, Firefighting, Drug Interdiction, Search and Rescue, and Wildlife Management.

"Drone On: The Future of UAV over the US Motherboard" (22:22)

[MUSIC PLAYING] BRIAN A. ANDERSON: Hey, it's Brian with Motherboard. I've got one word for you-- drones. Now, you've probably heard a little bit about drones in the news lately. These things fly all throughout the Middle East and the Horn of Africa. When they're not spying on suspected terrorists, they're probably killing them with Hellfire missiles.

But here's the thing. Drones are coming to the States. They're actually already here. They're being used to keep an eye on things, so they're not going to kill you, at least not yet. Motherboard has been fascinated with drones for a while now. It seems there's some misconceptions about the age of unmanned aerial vehicles.

To try and clear the air just a little bit, we're going to head out and talk to some people who are building drones, who are selling drones all over the world. With any luck, we hope to fly some drones, as well. We have absolutely no idea what we're getting into.

New York City captured by a Swiss drone hobbyist. As you're probably thinking, yes, this is illegal as all hell. And I'll be the first to say that doing this sort of thing over the site of the worst terrorist attacks on American soil? Probably not the best idea. The drone view that you've seen probably looks a bit more like this. Or more accurately, this.

The grainy, pixelated, bird's-eye views that Unmanned Aerial Vehicles, or UAVs, offer have become wildly popular on the internet. Maybe you've heard of the grim footage under its nom de YouTube, drone porn. How did we arrive at the robo wars? And where are they taking us?

To get an idea, we left our Brooklyn offices for Washington, DC, to meet up with PW Singer, one of the world's foremost experts on military robotics.

PW SINGER: We are wrestling with what it means to live, work, and even fight through a robotics revolution. The technology that we're using, with things like the Predator or the PackBot-- those are Model T Fords. Those are Wright brothers equivalents. But even with that first generation, we're seeing impact on questions like, how do we catch up our laws in war, but also how do we start to catch up our laws domestically as we start to see that technology move over to the domestic side?

We're seeing an evolution that is following many other technologies. And the story of the airplane is, I think, a good illustration of where we're at and the impact of the war on an industry that becomes a game-changer. The flying machine was once thought as mere science fiction. Then the Wright brothers make it real. Within a couple years, it's utilized in war.

In World War I, at the start, they're not armed. They're just used for observation. Then they jerry-rig arm them. Then they start to specially design them to be armed. And then by the end of World War I, you see all these other roles being visualized for planes that soon move over to the commercial sector. Passenger, postal delivery, medical evacuation-- you name it.

Same exact thing is happening with robotics. First science fiction, then becomes real. The Predator was originally unarmed, just used for observation. Then they jerry-rig arm it. Then they specially design them to be armed. Now we're seeing all sorts of other roles.

BRIAN A. ANDERSON: One of the latest developments in militarized drones is autonomy-- being able to tell your drone where to go and then basically setting the thing on cruise control until it gets there is a game-changer. At the same time, drone technology is doing what most any other killer app does as it proliferates. It's becoming smaller.

We actually noticed this evolution last year when Vice was in Amman, Jordan, home to SOFEX, the world's largest military weapons expo.

SHANE SMITH: You know when you were a kid, you used to have those little model airplanes and somebody's dad would be a real nerd and have a model airplane? Now, it's all model airplane-style drones that can take pictures or drop bombs.

BRIAN A. ANDERSON: We want to check out some of these drones and size up their market, so we decided to go back.

[AIRPLANE ENGINES WHINING]

Drones are becoming hot commodities for armed forces around the world. Some 600 companies from well over 50 countries are dabbling with drone tech for both spying and killing. And nowhere is this more evident than among the trade booths at SOFEX, where we first meet this guy, a rep for a Turkish drone company.

FATIH SENKUL: My name is Fatih Senkul. I am working for Atlantis Unmanned Vehicle Solutions developing unmanned vehicles, like Aeroseeker. Some photographers want to use it for surveillance purposes, military issues, and maybe some go track-and-seek missions. Some of the military, even the Turkish Army-- what's the payload? They asked. We said, 500 grams. So let's put a very little camera and just put 500 grams of bomb and they will do a suicide attack.

That's one of the issues they offered we hadn't thought of. This is something that the military's thinking.

BRIAN A. ANDERSON: If that sounds crazy, well, then there's this.

FATIH SENKUL: I am a fan of Terminator and I love these movies, and I really would like to see some of them in the future, like 2030 maybe. So I am trying to do my best to see them, yes.

BRIAN A. ANDERSON: Unlike Fatih, I'm in no rush to hasten the rise of the machines. The next guy we meet at SOFEX maybe isn't either. Then he says something almost as crazy.

CHRIS BARTER: To me, drone means you've got something that's operational on its own. It's kind of doing its own thing, like a Hal of 2001, A Space Odyssey.

BRIAN A. ANDERSON: Here's hoping his robot, the Scout, has no intentions of becoming self-aware, like Hal, and refusing to open the pod bay doors. Now to be clear, the Scout is built by Aeryon Labs, a Canadian drone firm. Datron and its reps, like Chris, work with Aeryon on the supply side of the chain. Chris Barter is a drone dealer.

The Scout is the flagship UAV in Datron's suite of tactical robotics. Take one look at its size, and it's pretty clear that the Scout is nothing more than a surveillance system. It can fly at speeds up to 30 miles per hour. It's fully operational from negative 22 to 122 degrees Fahrenheit. And it can withstand wind gusts up to 50 miles per hour.

It's a compact, capable machine, and has been sought after by the likes of NOAA, the US Coast Guard, and FEMA.

PRESENTER 1: Is there anything that you can give me?

CHRIS BARTER: Like a hand--

PRESENTER 1: Yeah.

CHRIS BARTER: Like a brochure or something like that?

PRESENTER 1: I think we're going to hopefully be contacting you very, very soon.

CHRIS BARTER: Sounds like a plan.

BRIAN A. ANDERSON: After Chris closes the deal, he invites us back to Datron's headquarters just outside of San Diego. In addition to drones, he promises there's going to be some pretty decent surfing.

[AIRPLANE ENGINE WHINING]

CHRIS BARTER: I'm a pretty Buddhist guy. There's not much that makes me tic out there, outside of bad driving and bad surfing.

BRIAN A. ANDERSON: Would you ever use a Scout to shoot some pretty gnarly, big wave surfing footage?

CHRIS BARTER: Oh absolutely, man. That's actually one of my dreams, is to take it out to Pipeline or [INAUDIBLE].

BRIAN A. ANDERSON: So even though Chris exudes the calculating precision of a drone capitalist, he's a surfer dude at heart, and maybe even a drone hobbyist. And he isn't the only one who views drones as being a whole lot more than killers and spies. This is Justin Wellender. Notice those goggles he's putting on. Those allow for what's known as first-person viewing. So suddenly, what the drone's camera sees transmits back to Justin's goggles, in effect allowing him to fly.

But we'll get back to the hobbyist later.

[MUSIC PLAYING]

Southern California has long been a hub of aerospace R&D. And today, drone firms like Datron are popping up all over the region. You can call it Drone Valley or even the Drone Zone. Datron's campus is in one of these cookie-cutter industrial parks. But soon enough we find the place and we're greeted by Chris and two of his colleagues. We'll get down to the brass tacks. Who buys a Scout?

CHRIS BARTER: I will not go into specific customers by name, but I can address customer bases that we will go after.

PRESENTER 2: The scout is man-packable, and offers fast setup, ease of use, and hot-swappable payload capabilities. The snap-together assembly requires no tools, and total assembly to takeoff time can be measured in seconds.

CHRIS BARTER: We're targeting the guy, be it the law enforcement officer, be it the squad guy who's out in a combat theater, who doesn't want to rely on some guy flying a system in Las Vegas that's being launched out of an airport that's 7,000 miles away.

BRIAN A. ANDERSON: Unlike the Scout, most so-called hunter-killer drones are flown out of Air Force bases throughout the American West. Many people lose sleep over the thought of these hulking drones, but many others accept the new bug-splat warfare.

CHRIS BARTER: I have no qualms when I read the news about a drone strike in Pakistan. What troubles me is that people have a tendency to kind of lump in a lot of these unmanned systems, one with another. So a Scout, which is unarmed and will probably always be unarmed, is meant specifically for surveillance, will never be harming any individual, for the most part, unless any kind of accident.

PAUL WILSON: The unit really and truly flies itself. It just waits for us to tell it when to take off, how high to go, how fast to fly, where we want it to go, and what to look at. All of our status says we're OK. We've got a GPS accuracy of 2.6 meters. So we're ready to take off. It spins up. It says, I've done all my check, so now I'm ready to take off. So I take off.

The vehicle is very good at flying itself, and it just listens to the directions of how high we want it to go, where we want it to go to, and what we want it to look at.

CHRIS BARTER: We've had a lot of interest in special use cases, like in Nome, Alaska, where they actually had an oil tanker trying to ship oil into Nome. Unfortunately, the harbor froze really early in the year. And what they actually did with the Scout was they took it off and they took photographs of the ice surrounding this harbor. And using post-processing software, they were able to actually map out the sea ice thickness so they could navigate this tanker accordingly. So it's a pretty diverse system.

BRIAN A. ANDERSON: It takes some convincing, but eventually Chris and his team let us take this diverse system of theirs for a spin. So I'm gazing up at this airborne robot, only to see it looking back down at me. I begin to feel the sting of my own privacy potentially being compromised, and I can't help but wonder if Chris and Datron feel the same.

CHRIS BARTER: Yes, we do empathize with the security and privacy rights. But we're more so focused on supporting that agency, supporting that firefighter, or supporting that law enforcement officer going into the building who needs to know either what's happening in that building, in a tactical type of situation, or what's happening on the other side. So really, it's in the court of public opinion how that gets flushed out.

BRIAN A. ANDERSON: Datron doesn't want to talk about privacy, but Chris hopes everyday civilians will come to see something like the Scout as a friend, not big brother.

CHRIS BARTER: As we deploy these into real world environments, what I hope happens is that people obtain an understanding of how these systems are actually working for them, as opposed to against them.

BRIAN A. ANDERSON: How do you think we did?

PAUL WILSON: You guys did pretty good. You took off and you landed exactly where you wanted it to, and you didn't crash a thing. You did good.

BRIAN A. ANDERSON: No blood.

PAUL WILSON: No blood. No blood, no dents, no scratches.

BRIAN A. ANDERSON: Now that we've gotten a glimpse of the defense and professional side of this equation, we decide to check out some of the folks at the leading edge of hobby drones. A few miles down the road from Datron is 3D Robotics, a company that represents a drastic culture shift in drone tech. Alan and Sam, two engineers of the company, give us a quick run of the lab.

ALAN SANCHEZ: This is where we design all the frames, the autopilot, all the circuits, and also where we play around. So this is just where everything starts. And then the manufacturing, shipping, and testing's on the other side.

So right now, the word drone I feel has a negative connotation, especially with all the wars that have been going on, and military drones being the most common use of the word. But really, a drone is a machine that can pre-program or that has a level of autonomy that can do a job that the user can't do or doesn't want to do.

So what we're doing is turning regular RC aircraft, or even helicopters, quadcopters, into autonomous vehicles. With our autopilot, you just drop it into your existing vehicle and turn it into a fully autonomous aircraft, something that wasn't available for the masses before. And then what to do with that? That's where the user comes in. We're selling the tool, and it's up to the user to come up with a use for it. And you go buy scissors and do something bad with it, so it's basically the same thing.

CHRIS ANDERSON: I'm Chris Anderson. I'm the co-founder of 3D Robotics and I founded DIY Drones, the community that spawned us initially. This is not my day job. My day job is I'm the editor of Wired.

BRIAN A. ANDERSON: Shortly after taping this interview, Chris Anderson announced his departure from Wired to focus on 3D Robotics full-time.

CHRIS ANDERSON: Well, what you're looking at here is what we think of as something like the Apple from 1977, coming out of the Homebrew Computer Club, amateurs, hobbyists, not the IBMs of the day. They're the technology in your cell phone-- the sensors, and the processors, and the wireless, et cetera. The fact that this has become cheap, and available, and ubiquitous is the enabling technology of the personal drone movement.

And we don't come out of the aerospace industry. We definitely don't come out of military. We come out of the hobbyist world, and what you're seeing here is just a bottoms-up open source community-based attempt to take a technology that was once a military-industrial one and democratize it, make it available to everybody, and introduce the word personal to drone.

BRIAN A. ANDERSON: Minutes later, we're heading to a nearby field that serves as one of the main proving grounds for 3D's aircraft. Alan and Sam bring along two drones-- a small quad copter and a more traditional RC glider. We're curious to see how these guys stack up against the pro model, like the Scout.

CHRIS ANDERSON: These things are light. The planes are foam. They hit you on the head, they'll just bounce off. They won't hurt you. But they don't have weapons. They can't carry anything very heavy. They're designed basically like radio-controlled toy airplanes, but they just have a brain.

ALAN SANCHEZ: Yeah. So these things take a while to get some altitude.

PRESENTER 3: [INAUDIBLE]

Yeah.

BRIAN A. ANDERSON: And just like that, our graceful flight is cut short. The guys are spooked by a small private plane passing through our airspace, which brings us to the Federal Aviation Administration's stance on drones.

ALAN SANCHEZ: Since the FAA doesn't really have rules for what we make, we just piggyback onto RC aircraft. And so we're limited by altitude. We can only fly 400 feet or below. We have to fly within line-of-sight. Just various rules that are there that maybe we can do away with because our drones are more capable than that.

BRIAN A. ANDERSON: While 3D's quad copter and the Scout might look similar, their differences far outweigh any similarities. 3D's shoots seemingly better looking footage than the Scout that we flew, for one thing. Just compare the two. Then again, what the Scout might lack in visuals, it makes up in durability. And of course, the GPS and the slick user interface allow for reconnaissance and search-and-rescue capabilities that put it above and beyond 3D's systems, which are more or less pimped-out aircraft.

We fly 3D's drones the old-fashioned way-- with RC controllers. But thanks to 3D's autopilot, these are autonomous aircraft, meaning that just like the Scout, you can tell your DIY drone where to go, let it get there by itself, and then regain control once it's at point B. I can see how it would be easy to drop out and kick it in the Drone Zone forever, but it's time we get back to New York.

[PLANE ENGINE WHINING]

So we've made it back to Brooklyn. Before this trip, a lot of my thinking about today's drone world came with a certain alarmism. And to a degree, I think it still does, and for good reason. When you're playing with toys like these, it can be hard to forget that drone technologies are evolving in large part to be really, really good at killing a lot of people.

ALAN SANCHEZ: So if you want it to come back to you--

BRIAN A. ANDERSON: Yeah, I will.

ALAN SANCHEZ: --take this switch and pull it all the way down.

BRIAN A. ANDERSON: So I think that rigging up big Predator and Reaper drones to incinerate innocent civilians, and American citizens on foreign soil, is too much of the stuff of war crimes and extrajudicial killings. And I certainly don't sit well with the thought of a spying robot peering into my apartment window. But when it comes to some of the tactical and hobbyist drone deployments that I saw out in Jordan and San Diego, I kind of caught the bug.

It's getting harder and harder to argue against the fact that for certain scenarios, drones make sense. Think about Aeryon giving a couple of Scouts to Libyan rebels last year to help aid their fight against Muammar Gaddafi's forces. We all know how that story ended.

[GUNFIRE]

But beyond the war time theater, think of the myriad possibilities that drones open up for research, filmmaking, even the next generation of taco delivery. Or think about a guy like Justin, who's just really, really stoked on flying. My guess is what the domestic drone scape is going to look like in the next 5 to 10 years is about as good as yours.

But having spoken with people like Chris Barter and Sam and Alan out at 3D, I can say with relative assurity that drones are going to become more a part of our everyday lives than they already are. Should we be concerned about that? Absolutely. But so long as these drones are being put to legitimate uses, that's maybe not the worst thing, is it?

[DRONE ENGINES WHINING]

To Read

- Chiles, J. Air and Space Magazine, "Drones for Hire [2]." Last modified January 2013. Accessed February 4, 2014.

- Maksel, R. Air and Space Magazine, "Robot Reporters [3]." Last modified November 06, 2012. Accessed February 4, 2014.

- Commercial UAV News report "6 Predictions for 2016: UAV Experts Discuss Important Developments for Commercial Drone Applications [4]"

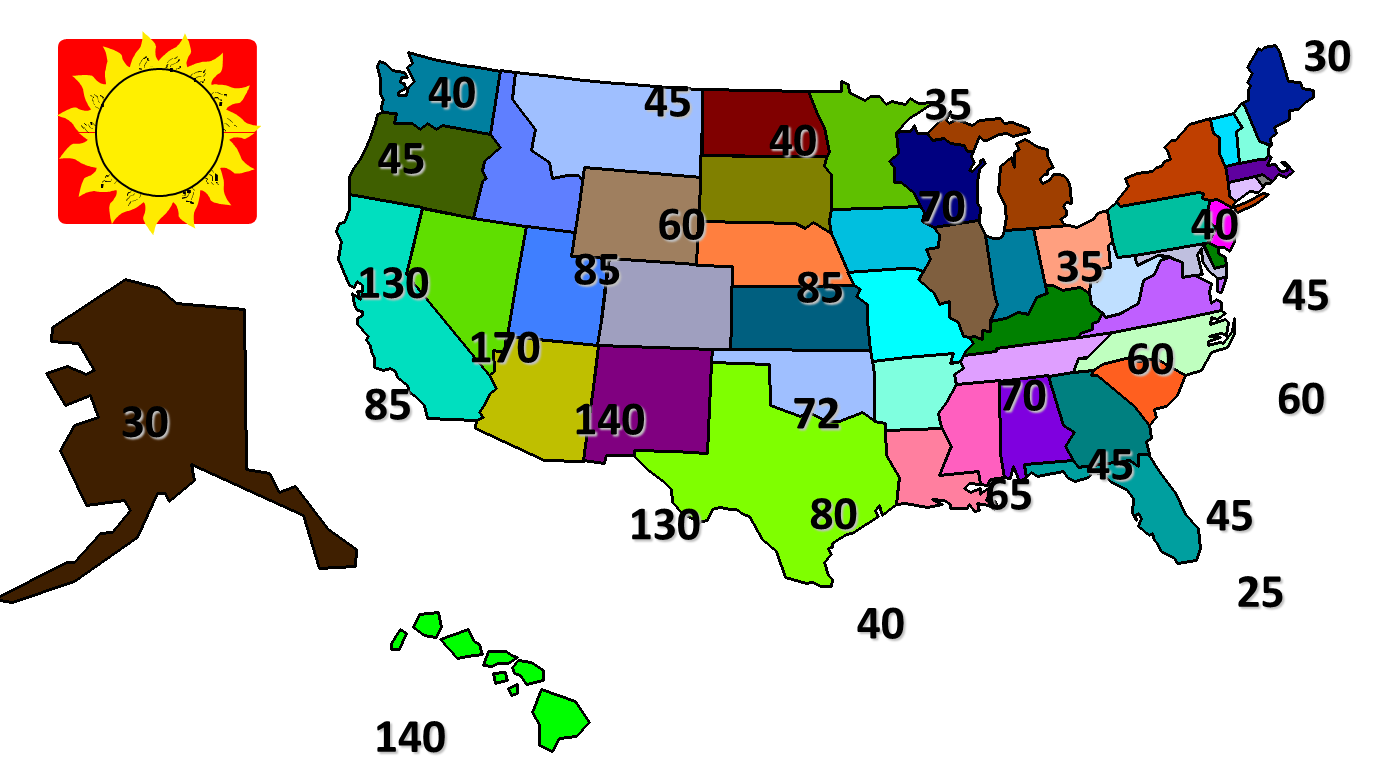

Classification of the Unmanned Aerial Systems

There is no one standard when it comes to the classification of UAS. (In this course, the terms UAS and UAV will be used interchangeably.) Defense agencies have their own standard, and civilians have their ever-evolving loose categories for UAS. People classify them by size, range and endurance, and use a tier system that is employed by the military. The US National Aviation Intelligence Integration Office [9] website provides a good overview of the global UAS classification categories. For classification according to size, one can come up with the following sub-classes:

- Very small UAVs

- Micro or Nano UAVs

- Small UAVs

- Mini UAVs

- Medium UAVs

- Large UAVs

UAVs can also be classified according to the ranges they can travel and their endurance in the air, using the following subclasses developed by the US military:

- Very low cost close-range UAVs

- Close-range UAVs

- Short-range UAVs

- Mid-range UAVs

- Endurance UAVs

According to the U.S. Department of Defense, UAVs are classified into five categories, as shown in Table 1:

| Category | Size | Maximum Gross Takeoff Weight (MGTW) (lbs) | Normal Operating Altitude (ft) | Airspeed (knots) |
|---|---|---|---|---|
| Group 1 | Small | 0-20 | <1,200 AGL* | <100 |
| Group 2 | Medium | 21-55 | <3,500 | <250 |
| Group 3 | Large | <1320 | <18,000 MSL** | <250 |
| Group 4 | Larger | >1320 | <18,000 MSL | Any airspeed |
| Group 5 | Largest | >1320 | >18,000 | Any airspeed |

*AGL = Above Ground Level

**MSL = Mean Sea Level
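To make Table 1 easier to apply, here is a minimal Python sketch that assigns a group from the three numeric columns. The function name `dod_group` is hypothetical. It assumes that the highest group indicated by any single characteristic wins, that the AGL/MSL distinction can be ignored, and that values falling exactly on a table boundary belong to the lower group unless noted. None of these choices is stated in the table, so treat the result as illustrative rather than official.

```python
def dod_group(mgtw_lb, altitude_ft, airspeed_kt):
    """Return the UAS group (1-5) suggested by Table 1.

    Assumption: the highest group implied by any one column is used.
    """
    # Group implied by maximum gross takeoff weight (lbs).
    if mgtw_lb <= 20:
        by_weight = 1
    elif mgtw_lb <= 55:
        by_weight = 2
    elif mgtw_lb <= 1320:
        by_weight = 3
    else:
        by_weight = 4  # Groups 4 and 5 share ">1320"; altitude separates them

    # Group implied by normal operating altitude (ft); AGL vs. MSL is ignored.
    if altitude_ft < 1200:
        by_altitude = 1
    elif altitude_ft < 3500:
        by_altitude = 2
    elif altitude_ft < 18000:
        by_altitude = 3
    else:
        by_altitude = 5  # simplification: exactly 18,000 ft is treated as Group 5

    # Group implied by airspeed (knots).
    if airspeed_kt < 100:
        by_speed = 1
    elif airspeed_kt < 250:
        by_speed = 2
    else:
        by_speed = 4  # only Groups 4 and 5 allow "any airspeed"

    return max(by_weight, by_altitude, by_speed)


if __name__ == "__main__":
    # Illustrative inputs only, not published specifications.
    print(dod_group(mgtw_lb=4.2, altitude_ft=500, airspeed_kt=45))
    print(dod_group(mgtw_lb=1950, altitude_ft=15000, airspeed_kt=110))
```

Taking the maximum means a light vehicle that routinely flies above 18,000 ft would land in Group 5 under this sketch; a different tie-breaking rule would give a different answer.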

Classification According to Size

Very small UAVs

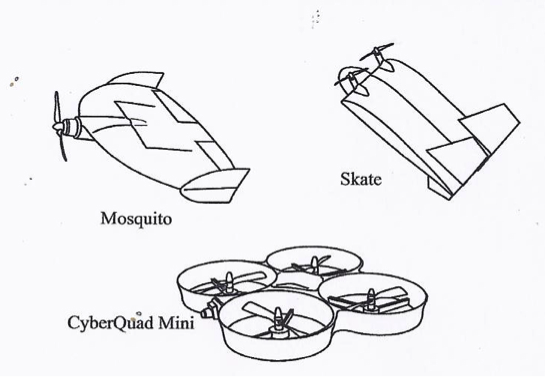

The very small UAV class applies to UAVs with dimensions ranging from the size of a large insect to 30-50 cm long. The insect-like UAVs, with flapping or rotary wings, are a popular micro design. They are extremely small in size, are very lightweight, and can be used for spying and biological warfare. Larger ones utilize conventional aircraft configuration. The choice between flapping or rotary wings is a matter of desired maneuverability. Flapping wing-based designs allow perching and landing on small surfaces. Examples of very small UAVs are the Israeli IAI Malat Mosquito (with a wingspan of 35 cm and endurance of 40 minutes), the US Aurora Flight Sciences Skate (with a wingspan of 60 cm and length of 33 cm), the Australian Cyber Technology CyberQuad Mini (a 42 x 42 cm square), and their latest model, CyberQuad Maxi. See Figure 1.1, below.

Small UAVs

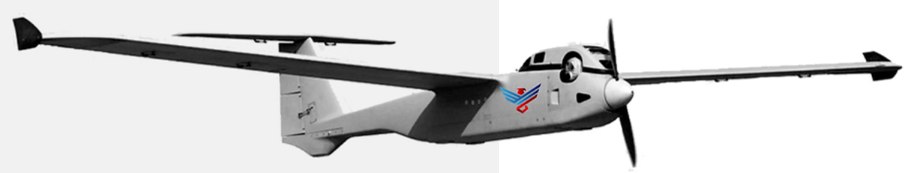

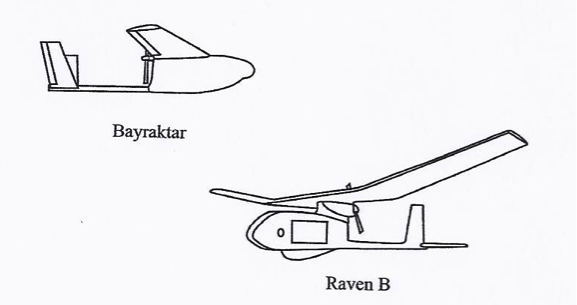

The small UAV class (sometimes also called mini-UAV) applies to UAVs that have at least one dimension greater than 50 cm and no larger than 2 meters. Many of the designs in this category are based on the fixed-wing model, and most are hand-launched by throwing them in the air, as shown in Figure 1.2. Examples of members of this small UAV class are:

- the 1-meter long RQ-11 Raven, by the US company AeroVironment, with a wingspan of 1.4 m;

- the Turkish Bayraktar [13] (Figure 1.5), which weighs about 5 kg and has a data link range of 20 km;

- the US Army RQ-7 Shadow (Figure 1.3);

- the AiRanger™ [14], by American Aerospace (Figure 1.4), a crossover UAV between a small and a medium-sized system.

Some of the UASs of this class are based on a rotary-wing design.

Medium UAVs

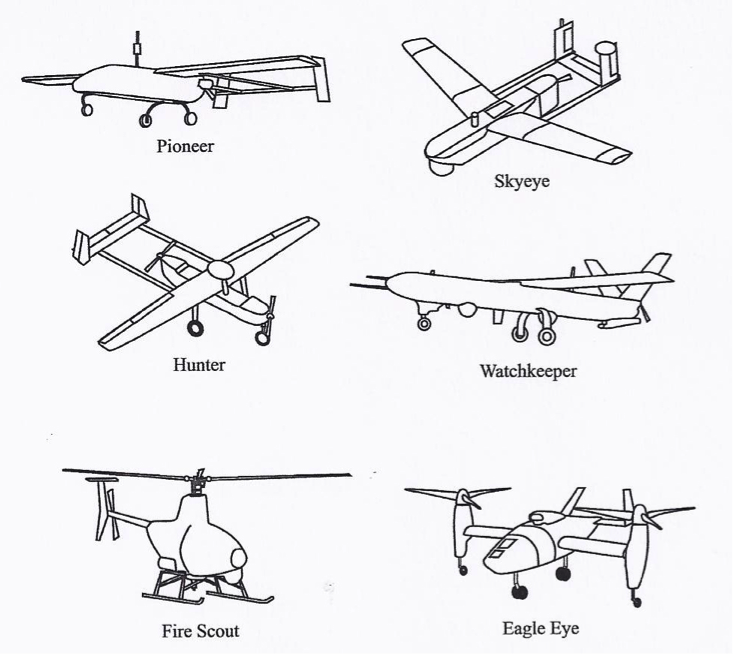

The medium UAV class applies to UAVs that are too heavy to be carried by one person but are still smaller than a light aircraft. They usually have a wingspan of about 5-10 m and can carry payloads of 100 to 200 kg. Examples of medium fixed-wing UAVs are (see Figure 1.6, below) the Israeli-US Hunter and the UK Watchkeeper. Other systems used in the past include the US Boeing Eagle Eye, the RQ-2 Pioneer, the BAE Systems Skyeye R4E, and the RQ-5A Hunter. The Hunter has a wingspan of 10.2 m and is 6.9 m long. It weighs about 885 kg at takeoff. The RS-20 by American Aerospace is another example of a crossover UAV that spans the specifications of a small and a medium-sized UAV. Many other medium UAVs can be found in the reading assignment. There are also a number of rotary-wing medium-sized UAVs.

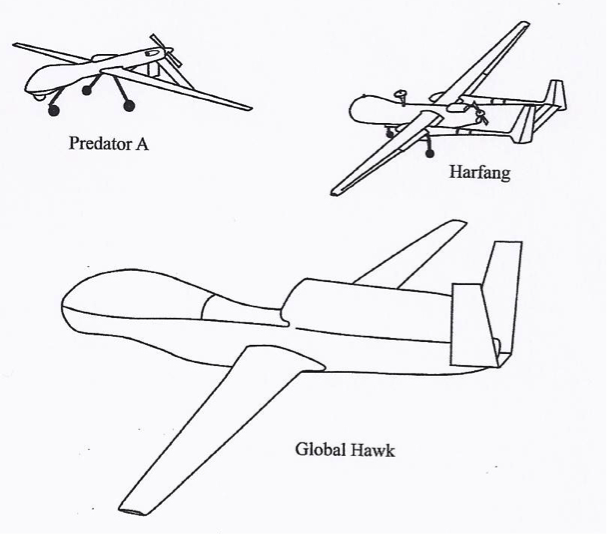

Large UAVs

The large UAV class applies to the large UAVs used mainly for combat operations by the military. Examples of these large UAVs are the US General Atomics Predator A and B and the US Northrop Grumman Global Hawk (Figures 1.7 and 1.8).

Classification According to Range and Endurance

Very low-cost, close range UAVs

This class includes UAVs that have a range of 5 km, endurance time of 20 to 45 minutes, and cost of about $10,000 (2012 estimate). Examples of UAVs in this class are the Raven and Dragon Eye. UAVs in this class are very close to model airplanes.

Close range UAVs

This class includes UAVs that have a range of 50 km and endurance time of 1 to 6 hours. They are usually used for reconnaissance and surveillance tasks.

Short range UAVs

This class includes UAVs that have a range of 150 km or longer and endurance times of 8 to 12 hours. Like the close range UAV, they are mainly utilized for reconnaissance and surveillance purposes.

Mid-range UAVs

The mid-range class includes UAVs that have very high speed and a working radius of 650 km. They are also used for reconnaissance and surveillance purposes, in addition to gathering meteorological data.

Endurance UAVs

The endurance class includes UAVs that have an endurance of 36 hours and a working radius of 300 km. This class of UAVs can operate at altitudes of 30,000 feet. They are also used for reconnaissance and surveillance purposes.
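The range and endurance figures quoted above can be collected into a small lookup table. The Python sketch below is one hedged way to do that: the `RangeClass` type and the `classes_meeting` helper are hypothetical names, the numbers are copied from this section (using the upper end of each endurance span and 150 km for the "150 km or longer" short-range class), and the mid-range class has no endurance figure because none is given here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class RangeClass:
    name: str
    range_km: float                   # range or working radius quoted in this lesson
    max_endurance_h: Optional[float]  # None where the lesson gives no figure


RANGE_CLASSES = [
    RangeClass("Very low-cost close-range", 5, 0.75),  # 20 to 45 minutes
    RangeClass("Close-range", 50, 6),
    RangeClass("Short-range", 150, 12),
    RangeClass("Mid-range", 650, None),
    RangeClass("Endurance", 300, 36),
]


def classes_meeting(range_km: float, endurance_h: Optional[float] = None) -> List[str]:
    """List the classes whose quoted figures cover the requested mission.

    A class with no quoted endurance is skipped whenever an endurance
    requirement is given, because the requirement cannot be confirmed.
    """
    matches = []
    for cls in RANGE_CLASSES:
        if cls.range_km < range_km:
            continue
        if endurance_h is not None:
            if cls.max_endurance_h is None or cls.max_endurance_h < endurance_h:
                continue
        matches.append(cls.name)
    return matches


if __name__ == "__main__":
    print(classes_meeting(40, 2))   # a 40 km, 2 hour mission
    print(classes_meeting(500))     # range requirement only
```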

To Read

- Chapter 2, of textbook 2, Introduction to UAV Systems (Aerospace Series)

- Crouch, C. Integration of Mini-UAVs at the Tactical Operations Level: Implications of Operations, Implementation, and Information Sharing [18]

To Do

- Read the paper “Remote Sensing [6]” by Watts, et al. Highlight agreements and differences in the UAS classifications system between the one adopted in the paper and the one given in this lesson. Post your opinion in the discussion forum for Lesson 1. Respond to at least one posting from your peers. (3 points)

Missions of the UAVs

In this section, we will discuss the different missions of the UAS.

Naming the different missions for UAVs is a difficult task, as there are so many possibilities and there have never been enough systems in use to explore all the possibilities. However, the two main classifications for UAS missions are the following:

- The military mission: Military applications focus on weapons delivery and guided missile support, as well as directing artillery and spotting enemy positions.

- The civilian mission: Civilian applications of UAS are open to the imagination, and only time will tell of the future missions of UAVs for civilian applications. As of today, civilian missions include various applications such as:

- security awareness;

- disaster response, including search and support to rescuers;

- communications and broadcast, including news/sporting event coverage;

- cargo transport;

- spectral and thermal analysis;

- critical infrastructure monitoring and inspection, including power facilities, ports, bridges, and pipelines;

- commercial photography, aerial mapping and charting, and advertising.

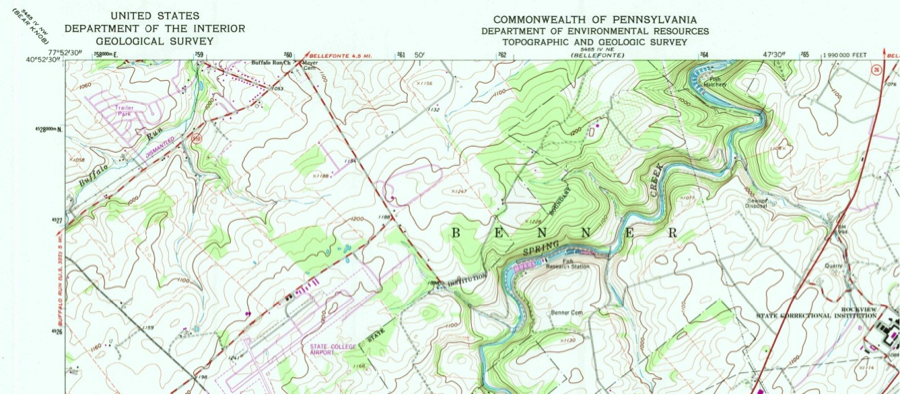

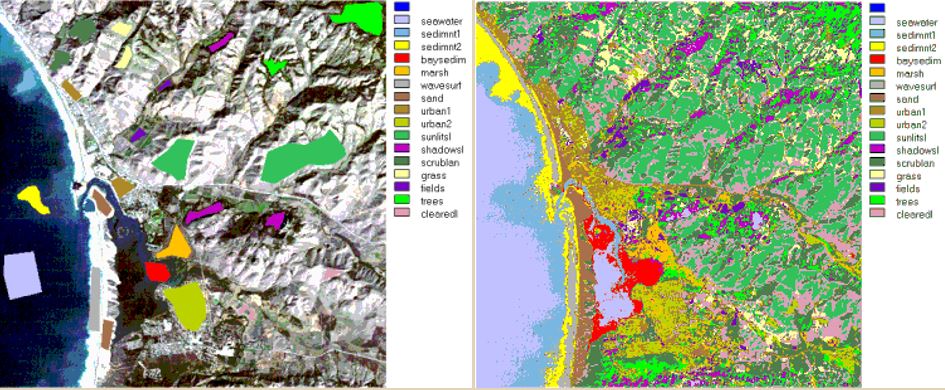

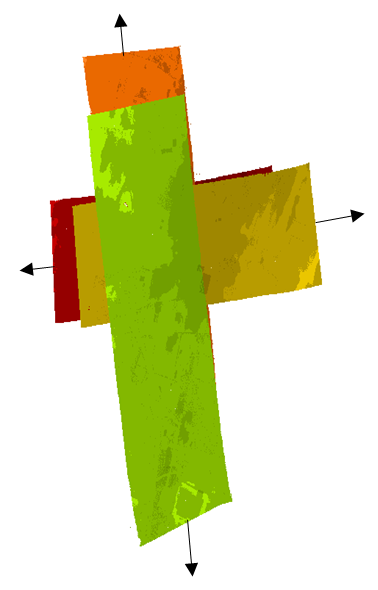

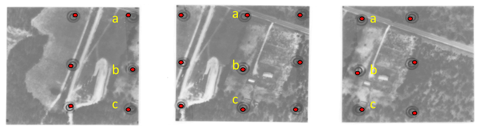

On the geospatial and mapping applications side, the UAS can be used for the following activities:

- aerial photography

- mapping

- LIDAR

- volumetric surveys

- digital mapping

- contour mapping

- topographic mapping

- digital terrain modeling

- aerial surveys

- photogrammetry

- temporal/spatial correlation for terrain reconstruction

- geophysical survey

Military and civilian missions of UAS overlap in many areas. They both use UAS for reconnaissance and surveillance. In addition, they both use UAS as a stationary platform over a point on the ground from which to perform many of the functions of communications or remote sensing satellites at a fraction of the cost.

To Read

- Section 2.5 of chapter 2 of textbook 2 Introduction to UAV Systems, 4th edition

Summary and Final Tasks

Summary

We have now concluded the materials for Lesson 1, which walked us through the early history of UAS development. As is the case with most emerging modern technologies, we find the US defense program behind UAS development and its introduction to the civilian market. In addition, we learned about the different classifications for UAS. We also learned about the current status and the different applications of UAS.

One thing I would like to emphasize here is the fact that there is no single civilian owner of a large UAS (such as the one used by the military, which is the size of a Boeing 737). In other words, there is a large gap between the size and sophistication of UAS used by the military and the ones used by civilians, which are characterized by smaller size and lesser sophistication. I believe that the reason behind this gap is strict regulation surrounding the operation of UAS in the National Airspace (NAS). Such a gap will diminish once civilian UAS have access to the NAS.

As for this lesson’s readings, try to read as much as you can through the materials available on the Internet, as it is a great resource. There is no one good textbook available so far on the subject. That is why I recommend buying, if you can, the two supplementary references listed under the course requirements in addition to the designated textbook.

(Note: Unless it is an online quiz or assignment, all deliverables should be organized and submitted in a Word document. Figures should be scanned and inserted in the document.)

Final Tasks

| 1 | Complete the Lesson 1 Quiz by the end of Lesson 2 |
|---|---|
| 2 | Complete your participation in the discussion forum on the "Agreement and Differences in UAS Classification" detailed in Classification of the Unmanned Aerial Systems [19] by the end of Lesson 2 |
| 3 | Review the final project details in Canvas. |

Lesson 2: Unmanned Aerial System Elements

Lesson 2 Introduction

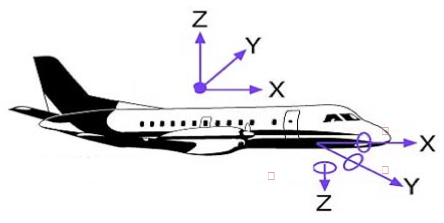

Welcome to Lesson 2! In this lesson, you will become familiar with the elements that combine to create an operational Unmanned Aerial System (UAS). Most UASs consist of an Unmanned Aerial Vehicle (UAV), human elements, payload, control elements (for a larger system it will be a ground control station (GCS) or mission planning and control station (MPCS)), and a data link communication unit (Figure 2.1). Military versions of the UAS will have an additional weapon system platform and supporting soldiers as part of the human element.

In addition, you will understand and develop knowledge about the different acquisition and auxiliary aerial sensors that are usually carried on board the UAS payload. Finally, at the end of this lesson, you will have a working knowledge of the different components forming a UAS and how the different components relate and interact with one another, the data acquisition sensors, and the auxiliary sensors that accompany a UAS mission, such as GPS and IMU.

Lesson Objectives

At the successful completion of this lesson, you should be able to:

- describe and identify the different elements of an Unmanned Aerial System (UAS);

- understand the functionality of each element making up the UAS;

- explain how the different elements of a UAS complement each other;

- understand the basics of aerial vehicle design;

- describe different payloads;

- identify the different miniaturized sensors used for remote sensing;

- understand the fundamentals of digital cameras and LiDAR;

- understand the basic principles of GPS and IMU.

Lesson Readings

- Sections 3.1, 3.2, 3.3, 3.4, 3.5, and 3.6 of chapter 3 of textbook 1: Bankhart et al., Introduction to the Unmanned Aircraft Systems

- Chapter II from Crouch, C. thesis, “Integration of mini-UAVS at the tactical operations level: Implications of operations, implementation, and information sharing. [20]”

- Section 2.6 of the report "Eyes of the Army, U.S. Army Roadmap for Unmanned Aircraft Systems 2010-2035 [21]."

- Chapter 13 of textbook 2: Fahlstrom, et al., Introduction to UAV Systems (Aerospace Series)

- Chapter 9 of textbook 2: Fahlstrom, et al., Introduction to UAV Systems (Aerospace Series)

- Chao, H. et al., "Towards Low-cost Cooperative Multispectral Remote Sensing Using Small Unmanned Aircraft Systems [22]"

- Chapters 10, 11, and 12 of textbook 2: Fahlstrom, et al., Introduction to UAV Systems (Aerospace Series)

- Chapter 17 of textbook 2: Fahlstrom, et al., Introduction to UAV Systems (Aerospace Series)

- Williams, K., Human Factors Implications of Unmanned Aircraft Accidents: Flight-Control Problems [23], FAA report

- Takanmaki, I, et al., "How and why Unmanned Aircraft Vehicles can improve Real-time awareness? [24]"

Lesson Activities

- Complete the Discussion Forum for Lesson 2: SWOT Analysis

- Take the Lesson 1 and 2 quizzes

The Air Vehicle

The air vehicle is the airborne part of the system. The air vehicle here means the aircraft, in conjunction with the payload, that forms an Unmanned Aerial System (UAS). In general, the unmanned aircraft is usually called an Unmanned Aerial Vehicle (UAV) and can be either a fixed-wing or rotary airplane that flies without a human on board.

The UAV is a complicated system including structures, aerodynamic elements such as wings and control surfaces, propulsion systems, control systems, communication elements, and launch and recovery subsystems. Larger UAVs use fuel-powered engines in order to attain flight, while smaller UAVs typically use either gasoline-powered engines or electric motors. When the UAV has sensors and payloads, it is customarily called an Unmanned Aerial System (UAS). In this course, the terms UAS and UAV will be used interchangeably to mean the same thing. Due to inclusion of the word "unmanned," there has been some resistance in recent years to the use of the names Unmanned Aircraft and Unmanned Aerial Vehicle. There is a push to adopt the term Remotely Piloted Aircraft (RPA) or Remotely Piloted Vehicle (RPV) because of the crucial human involvement related to the operation of the system. UAVs come in all different sizes and shapes; however, the following are the major factors to be considered in designing a UAV:

- take-off weight

- wing span

- length

- endurance speed

- endurance time

- weight of fuel

- engine power

- engine capacity

- engine weight

- airframe weight

To Read

- Sections 3.1 and 3.2 of chapter 3 of Introduction to the Unmanned Aircraft Systems

- Chapter II from: Collier C. Crouch, 2005, “Integration of mini-UAVS at the tactical operations level: Implications of operations, implementation, and information sharing, [20]” master’s thesis, Naval Postgraduate School, Monterey, California.

- Section 2.6 of the report "Eyes of the Army, U.S. Army Roadmap for Unmanned Aircraft Systems 2010-2035. [21]" It is a good reading about UAS definitions.

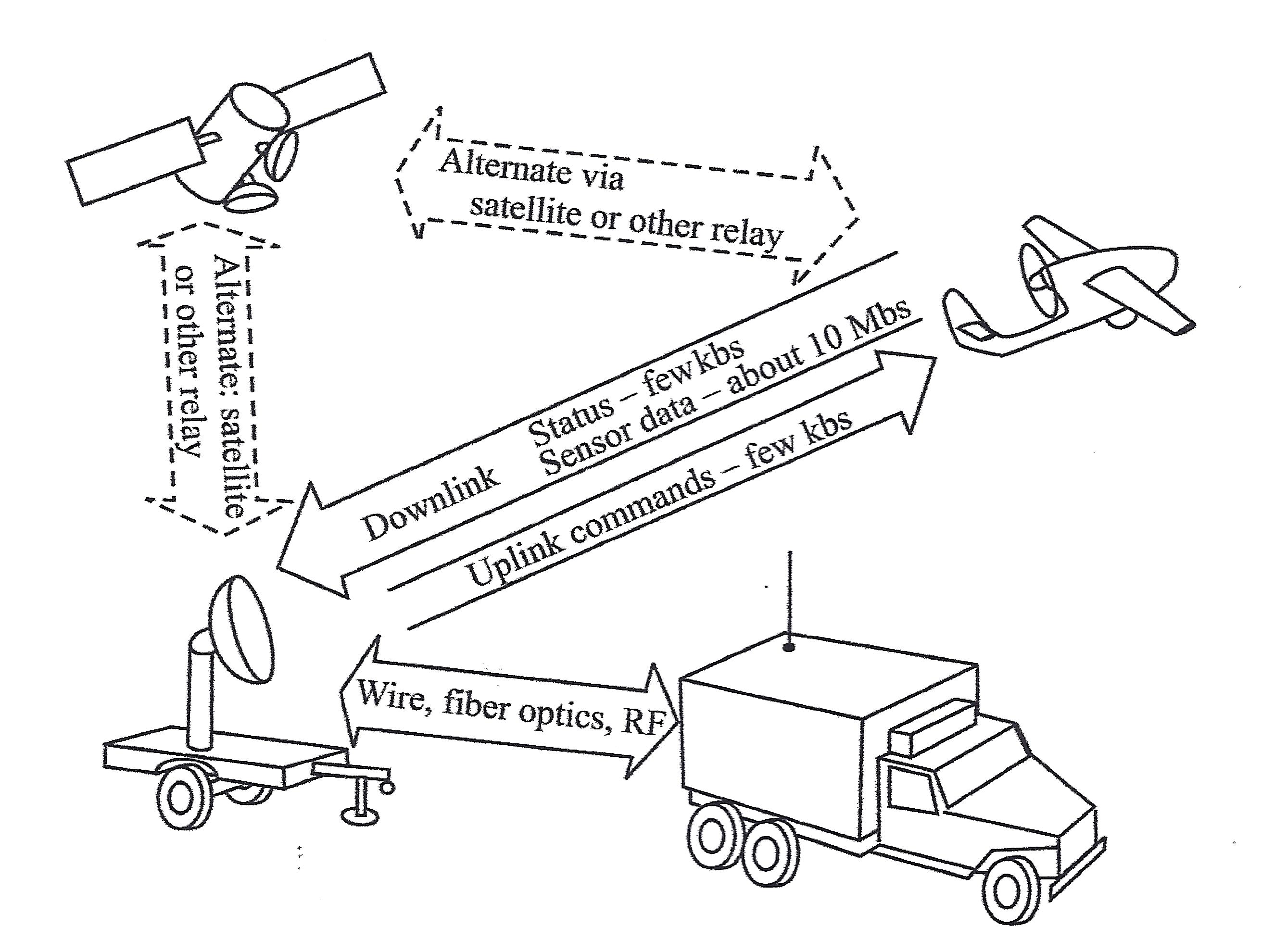

The Communication Data Link

The term data link describes how commands and data are communicated back and forth between the ground control system and the autopilot. The data link is a key subsystem for any UAS, as it provides two-way communication to ensure that missions are executed safely and according to plan. A typical data link, illustrated in Figure 2.2, has two parts:

- The uplink, from the ground control station to the UAS, operates with a bandwidth of a few kHz and carries the data that controls the UAS flight path and communicates with the payload;

- The downlink from the UAS to the ground control station uses a low data rate to acknowledge commands and to send status information about the air vehicle, and uses a high data rate (1-10 Mbps) for sending payload sensor data, such as video, down to the ground control station. The downlink signal can also be used to locate and measure the position of the air vehicle (range and azimuth) in reference to the ground antenna and to improve the overall accuracy of target locations measured by the payload sensors.
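To get a feel for what a 1-10 Mbps downlink means in practice, the short Python sketch below estimates how long one sensor frame takes to reach the ground. The frame size is an assumption chosen for illustration (a 16-megapixel image at 8 bits per pixel, uncompressed), and the calculation ignores protocol overhead, compression, and retransmissions, so real transfer times will differ.

```python
def transfer_time_s(payload_bits, link_rate_bps):
    """Ideal time, in seconds, to send payload_bits over a link of link_rate_bps."""
    if link_rate_bps <= 0:
        raise ValueError("link rate must be positive")
    return payload_bits / link_rate_bps


if __name__ == "__main__":
    # Assumed frame: 16 megapixels x 8 bits per pixel, no compression.
    frame_bits = 16_000_000 * 8

    for rate_mbps in (1, 10):
        seconds = transfer_time_s(frame_bits, rate_mbps * 1_000_000)
        print(f"{rate_mbps:>2} Mbps downlink: {seconds:.1f} s per frame")
```

Under these assumptions a single uncompressed frame occupies the downlink for many seconds even at the upper end of the quoted range, which is one reason the high-rate channel is reserved for payload data.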

This schematic drawing illustrates the workings of a data link system. The system is composed of four elements:

- the Ground Antenna (which looks like a truck with an antenna extended from the back),

- the Ground Control Station (a satellite dish mounted on an unattached, flat-bed truck trailer),

- the UAS (airborne above the Ground System, which is the Ground Antenna and Ground Control Station together) and

- a Satellite, floating in space.

Between the Ground Antenna and the Ground Control Station is a two-way arrow containing the words "Wire, fiber optics, RF"

An arrow points from the Ground Control Station to the UAS. It contains the words "Uplink commands - few kbs"

An arrow points from the UAS to the Ground Control Station. It contains the words "Status - few kbs; Sensor data - about 10Mbs."

A two-directional arrow between the UAS and the Satellite contains the words "Alternate via satellite or other relay."

A two-directional arrow between the Ground Control Station and the Satellite contains the words "Alternate: satellite or other relay."

There are two different modes for operating a UAS. Those are:

- The radio frequency line of sight (LOS) operation, where a direct communication link is established between the UAS and the ground station, and

- The beyond line of sight (BLOS) operation. This mode of operation is used when the UAS is controlled from far distances beyond the LOS capability. Communications satellites are usually used in this mode of communication.

More details on the two operating modes will be covered in Lesson 4.

To Read

- Section 3.4 of chapter 3 of textbook 1 "Introduction to the Unmanned Aircraft Systems"

- Chapter 13 of textbook 2 "Introduction to UAV Systems (Aerospace Series)"

The Command and Control Element

The command and control element is the nerve center for the UAS operation. It controls the following tasks:

- launching the vehicle,

- flying the vehicle,

- recovery of the vehicle,

- receiving and processing data from internal sensors of the flight system,

- receiving and processing data from external sensors of the payload,

- controlling the operations of the payload, and

- providing the interfaces between the UAS and the outside world.

The command and control element utilizes several subsystems to accomplish its missions. They are:

- UAV status and controls,

- payload data display and control,

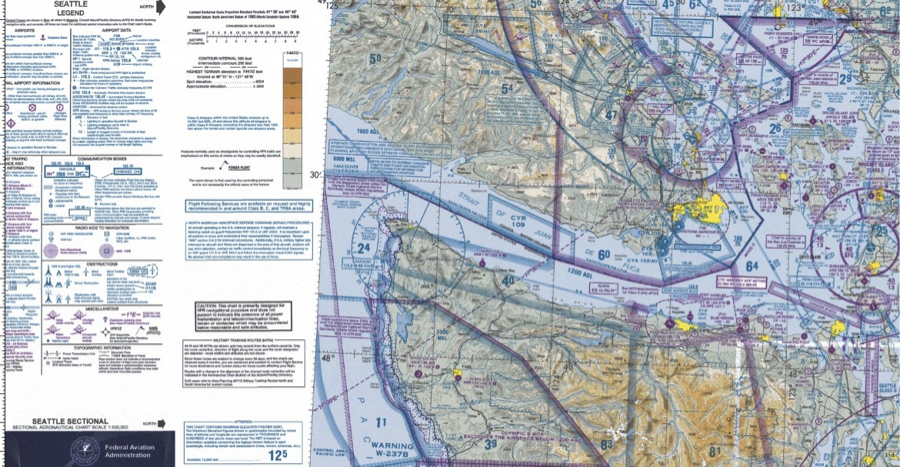

- map displays for mission planning and for monitoring the flight path,

- autopilot to provide the ability for the UAV to execute its mission based on preprogrammed instructions without operator intervention,

- ground terminal for two-way communication with the UAV and the payload,

- computer(s) to:

- provide an interface between the UAV and operator,

- control the data link and the data flow between the UAV and the command and control station,

- perform the navigation functions for the system,

- perform necessary computations for the autopilot and the payload control functions,

- communication links to other organizations for command and control and for dissemination of information gathered by the UAV.

The most important parts of the command and control element are the Autopilot and the ground control station, as described in the following subsections:

Autopilot

The autopilot is the sub-system that enables partially or fully autonomous flight. A UAV can be operated completely by remote control, where an operator steers the air vehicle all the time, or it can be flown autonomously, where a pre-programmed path is fully executed from takeoff to landing by the autopilot sub-system without any pilot intervention. Small, light-weight autopilots are readily available and are made by a few manufacturers. Besides guiding the air vehicle throughout the pre-set flight path, the autopilot also executes a “lost link” routine if the UAV loses contact with the ground control station. The lost link procedure guides the UAV to a known waypoint, where contact with the ground control station can again be established. The following scenario was developed for a typical emergency procedure based on loss of link between the Yamaha RMAX UAS and the ground control station:

Emergency Procedures for Yamaha RMAX UAS

The RMAX utilizes a redundant communication system to ensure constant contact between the aircraft and the remote pilot. The ground control station provides real-time data regarding aircraft location, altitude and flight characteristics. The pilot constantly monitors the flight information provided to the ground control station, and through the assistance of a trained observer, maintains a visual line of sight to the aircraft. In the event of a loss of link between the aircraft and the ground control station, the subsequent procedures are followed:

- Preflight Actions - Prior to any flight, and as part of the mission preparation, the mission operator will insert appropriate lost link settings to allow the RMAX to safely return to the predetermined landing location. The settings are stored on the aircraft so that in the event of a lost link, the RMAX is able to continue operations under autonomous control.

The mission operator will identify a safe altitude and a location for the aircraft to fly to once the RMAX detects a lost link. Once the aircraft reaches the specific GPS location, it will begin an auto descent and shut off the rotors upon landing.

- In the Air - The RMAX continuously monitors the status of communication with the ground station. When the RMAX detects a loss of link with the ground station, it starts a timer. This timer value (typically 5 seconds) is set by the operator in the mission settings page. When this timer expires, the RMAX goes into lost communication mode and will command the vehicle to an operator-indicated lost communication waypoint at a predetermined altitude. The aircraft then commands a 20-second descent until touchdown. Once the aircraft lands, the aircraft automatically turns the rotors off.
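The lost link sequence described above can be sketched as a small state machine. The Python below is an illustration only, not Yamaha's implementation: the class and state names are hypothetical, the 5-second default mirrors the typical timer value quoted above, and two behaviors are assumptions because the procedure does not spell them out (a link regained before the timer expires cancels the timer, and once lost communication mode starts the sequence runs through to landing).

```python
from enum import Enum


class LinkState(Enum):
    LINKED = "linked"
    TIMING = "link lost, timer running"
    LOST_COMM = "flying to lost communication waypoint"
    DESCENDING = "auto descent"
    LANDED = "landed, rotors off"


class LostLinkMonitor:
    """Toy model of the lost link sequence; all names here are hypothetical."""

    def __init__(self, timeout_s=5.0):
        self.timeout_s = timeout_s  # operator-set timer value
        self.state = LinkState.LINKED
        self._lost_since = None

    def update(self, now_s, link_ok, at_waypoint=False, on_ground=False):
        """Advance the state machine by one status sample and return the state."""
        if self.state is LinkState.LINKED:
            if not link_ok:
                # Loss of link detected: start the timer.
                self.state = LinkState.TIMING
                self._lost_since = now_s
        elif self.state is LinkState.TIMING:
            if link_ok:
                # Assumption: regaining the link before expiry cancels the timer.
                self.state = LinkState.LINKED
                self._lost_since = None
            elif now_s - self._lost_since >= self.timeout_s:
                self.state = LinkState.LOST_COMM
        elif self.state is LinkState.LOST_COMM:
            if at_waypoint:
                self.state = LinkState.DESCENDING
        elif self.state is LinkState.DESCENDING:
            if on_ground:
                self.state = LinkState.LANDED
        return self.state


if __name__ == "__main__":
    monitor = LostLinkMonitor(timeout_s=5.0)
    samples = [
        (0, True, False, False),
        (1, False, False, False),
        (6, False, False, False),
        (30, False, True, False),
        (50, False, True, True),
    ]
    for now_s, link_ok, at_waypoint, on_ground in samples:
        state = monitor.update(now_s, link_ok, at_waypoint, on_ground)
        print(f"t={now_s:>2}s -> {state.value}")
```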

| Problem: | Sign of Problem: | Monitored throughout: | Solution: |
|---|---|---|---|
| Low Signal | Vehicle is slow to respond to manual commands or PCC commands. Autopilot terminates steering mode. Audible and warning light alarms. | Yes, signal strength displayed in percentage and packet update rate. | Turn Autopilot on and abandon manual flight. Initiate auto-land. |
| Loss of Communication | Autopilot terminates manual control or fails to respond to PCC commands. Audible communication alarm and warning light. | Yes. | The vehicle returns to the lost communication waypoint, hovers until the flight timer elapses, then commences the auto-land procedure. |
| Loss of GPS | First indication is poor altitude hold performance, also poor position hold during hover. | Yes, indicated by the number of satellites tracked and GPS Quality PDOP. | Assume manual control of aircraft and land. |
| Low Power Avionics | Lower than nominal voltage displayed. | Yes. | Land immediately. |
| Engine Failure | Noise level or RPM changes, engine loses power. | Yes, monitored by rotor RPM through the RPM sensor. | Return and land immediately. If the engine dies, initiate autorotation procedure. |
| Tail Rotor Failure | Loss of tail control. | No. | Switch to manual control and initiate autorotation procedure. |

Ground Control Station

The ground control station (GCS) is the site where the pilot controls the UAV during the flight. The size and sophistication of the GCS depend on the category of the UAS/UAV. Some large UASs require a formal facility with multiple workstations and personnel, while a GCS for a small UAS can be a handheld transmitter. Most UASs used by the geospatial community are small UASs that do not require a dedicated GCS.

To Read

- Section 3.3 of chapter 3 of Introduction to the Unmanned Aircraft Systems

- Chapter 9 of the textbook Introduction to UAV Systems (Aerospace Series)

- The paper "Towards Low-cost Cooperative Multispectral Remote Sensing Using Small Unmanned Aircraft Systems [22]"

Payload

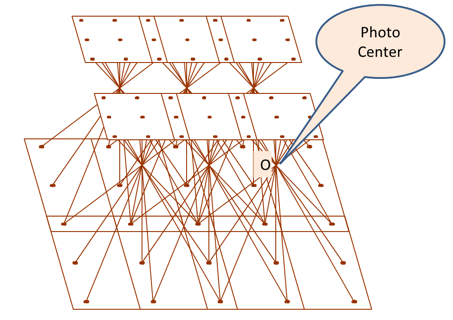

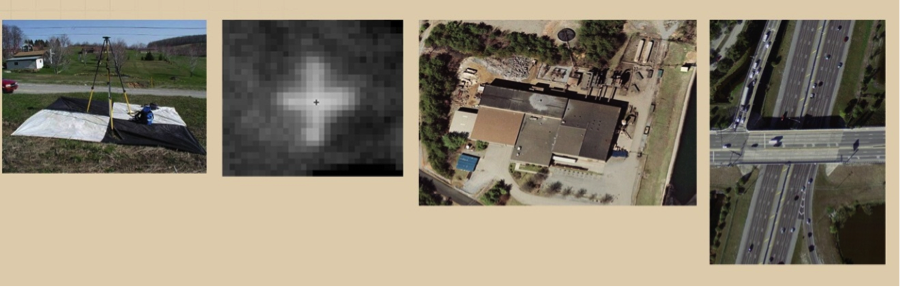

Payload refers to air vehicle (aircraft) cargo. It is also defined as the amount of cargo weight an air vehicle can safely carry. Carrying a payload on board is the sole purpose for most UASs. Payloads come in a variety of sizes, weights, and functions. In our business of geospatial remote sensing, we focus on remote sensing sensors and the necessary navigation systems accompanying them. A UAS dedicated to remote sensing and mapping missions is usually equipped with one or more of the following sensors.

Remote Sensing Sensors for the Unmanned Aerial System

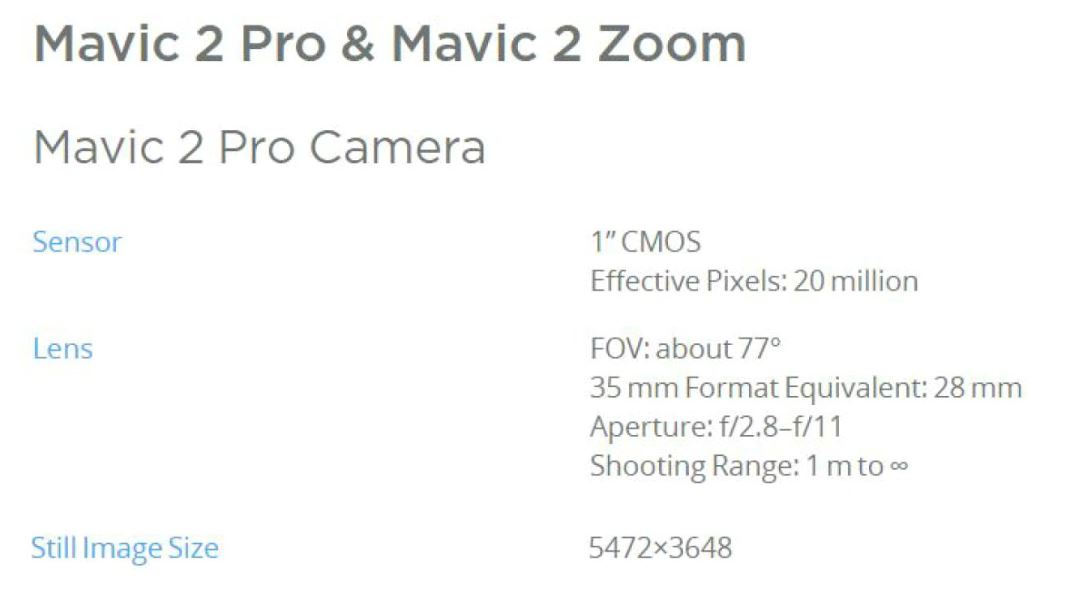

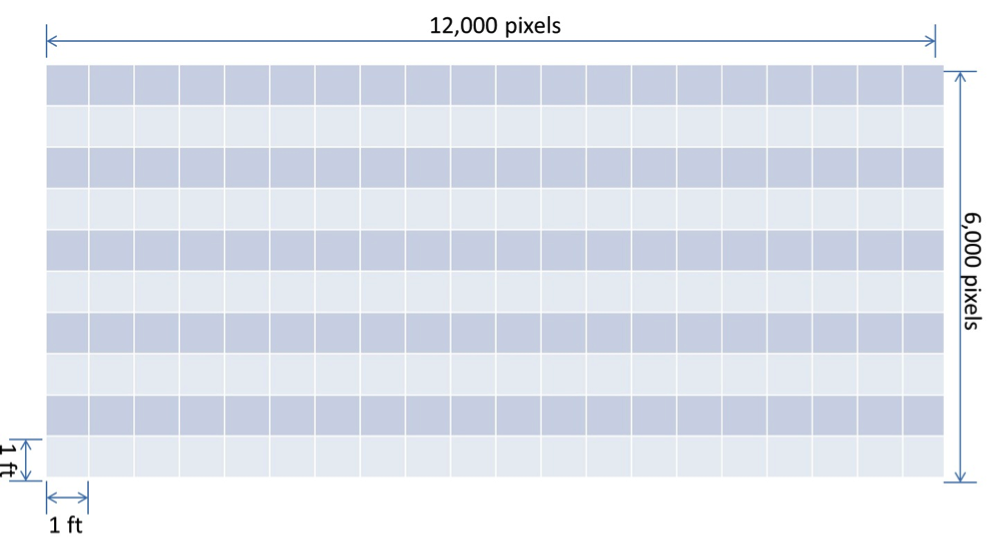

- Electro Optical Sensors: such as cameras (still and video, film and digital). Aerial imaging is considered one of the most acceptable applications for UASs. Recent cameras are all digital cameras (versus film). Digital aerial cameras are categorized as follows:

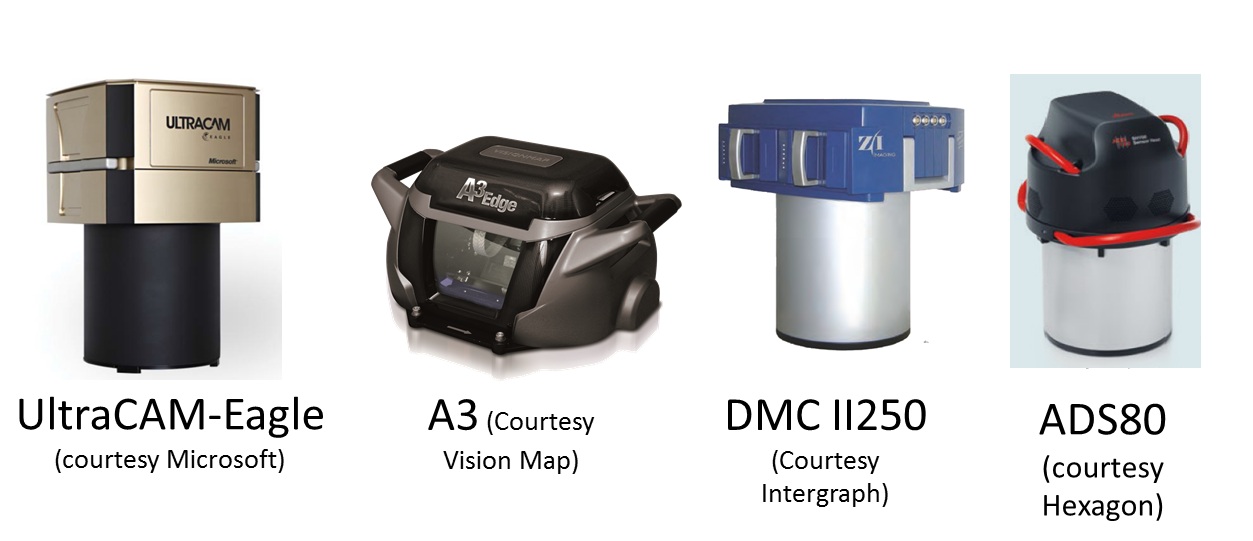

- Large Format Cameras: These are mainly used on board manned aircraft and large UASs. They are very heavy for small and medium-sized UASs. Large format cameras are used to cover large areas, such as entire counties or states. Figure 2.3 illustrates a few of the most common aerial cameras used today.

Figure 2.3 Large Format Aerial Cameras. Source: (as cited by each image)

- Medium Format Cameras (not compact): These cameras are smaller than large format cameras and are widely used on manned aircraft. They suit medium and large UASs but are still too heavy for small UASs.

- Small and Medium Miniaturized Format Cameras: This class of cameras is similar to the cameras we own at home and use for recreational purposes, but compact enough to suit UAS use. Miniaturized cameras are the newest development in the field of digital cameras; they are developed mainly for small UASs. Examples of miniaturized cameras are the Imperx Bobcat 2 [25] camera (Figure 2.4), which has a 16-megapixel array, weighs only 369 grams (13 ounces), and has a length of 53 mm (2 inches), and the iXA [26] camera system from Phase One Industrial (Figure 2.5). More recently, Phase One released a UAS payload, the P3 DJI [27], which mounts an iXA on the DJI M300 using DJI mounting hardware and app. Here is additional information [28] about the Phase One suite of sensors for UAS.


- Infrared Sensors: An infrared sensor operates in the infrared range of the electromagnetic spectrum (EMS). Infrared sensors for remote sensing are designed to operate in two regions of the EMS:

- The Near Infrared Region (NIR): NIR lies just beyond the red end of the visible region of the EMS (see Figure 2.6). When the NIR band is combined with the red and green bands of visible light and displayed in the order NIR, R, G instead of the usual R, G, B, it forms a false-color infrared (CIR) image. False-color infrared imagery is effective in studying vegetation indices and vegetation health and conditions. PrecisionHawk runs successful applications [31] for the precision Ag industry. A few sensors are designed for UAS to provide multispectral imagery, including the NIR band. The PARROT SEQUOIA+ [32] is one of those affordable sensors (Figure 2.7).

- Thermal Infrared Region (TIR): A TIR sensor records the sensed heat and displays it as an image. Two different technologies are used in building such sensors: uncooled and cooled. The uncooled sensor is usually less expensive and less sensitive than the heavier cooled sensor. Thermal infrared sensors are widely used for survey and inventory of buried hot water and steam pipes and to inspect heat loss from these pipes. They are also employed for roof inspection, looking for water leaks and heat loss. An example of a thermal infrared sensor is the FLIR A6700SC [35], shown in Figure 2.8. Recently, FLIR offered a suite of smaller thermal infrared cameras suitable for small UAS, such as the VUE PRO (Figure 2.9). Under a teaming agreement with DJI, new DJI payloads such as the Zenmuse XT simultaneously carry an RGB camera and a FLIR thermal sensor.

- Laser Sensors: These sensors use laser light for range finding. When combined with the necessary auxiliary sensors, a GPS receiver and an Inertial Measurement Unit (IMU) (see details below), laser ranging forms a terrain mapping system called Light Detection and Ranging (LiDAR) [37]. LiDAR systems map the terrain by generating a point cloud, which can be used to precisely model the terrain below the path of the UAS. Recently, miniaturized laser-based systems have made their way into UAS payloads. Examples of compact LiDAR systems suitable for UAS are the Riegl VUX-1 [38] (Figure 2.10) and Velodyne (Figure 2.11). To learn more about a few different UAS-based lidar systems and how people are evaluating the quality of their products, I encourage you to watch this video [39] about UAS-based lidar systems evaluation.

- SAR Sensors: Synthetic Aperture Radars are usually employed by the military for reconnaissance purposes. They require large size UASs, as they are heavy. We should not expect a civilian UAS with a SAR system as part of the payload in the foreseeable future.
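Returning to the near infrared band in the list above, the NIR, R, G display order used for false-color infrared imagery can be shown in a few lines of Python. This is a minimal sketch on tiny nested lists rather than real imagery, and the function names are hypothetical. The second function computes NDVI, (NIR - R) / (NIR + R), which is a widely used vegetation index chosen here as an example; this lesson does not name a specific index.

```python
def cir_composite(nir, red, green):
    """Build display pixels (R, G, B) from the NIR, red, and green bands.

    NIR drives the red channel, red drives green, and green drives blue.
    """
    return [
        [(n, r, g) for n, r, g in zip(nir_row, red_row, green_row)]
        for nir_row, red_row, green_row in zip(nir, red, green)
    ]


def ndvi(nir, red):
    """Normalized Difference Vegetation Index per pixel: (NIR - R) / (NIR + R)."""
    result = []
    for nir_row, red_row in zip(nir, red):
        row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            # Guard against division by zero for pixels with no signal.
            row.append((n - r) / total if total != 0 else 0.0)
        result.append(row)
    return result


if __name__ == "__main__":
    # Made-up 1 x 2 image: a vegetated pixel and a bare pixel.
    nir = [[200, 50]]
    red = [[50, 50]]
    green = [[60, 40]]
    print(cir_composite(nir, red, green))
    print(ndvi(nir, red))
```

Healthy vegetation reflects strongly in the NIR band, so it appears bright red in the composite and scores a higher index value than bare surfaces.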

Auxiliary Sensors for the Unmanned Aerial System

Auxiliary sensors here mean the navigation sensors that are necessary to determine the location and the orientation of the UAS and of the remote sensors mentioned earlier in this section. The Global Positioning System (GPS) is used to determine the position of the UAS and its onboard sensors, and the Inertial Measurement Unit (IMU) is used to determine their attitude, or orientation.

Global Positioning System (GPS)

GPS needs no introduction, as everyone is familiar with it. It is the same GPS that you might use to drive around town. However, GPS data used to determine a remote sensor position usually undergoes post-processing to enhance the accuracy of the position.

UAS are offered with two grades of GPS accuracies. The most common one is the single frequency GPS receivers as it is cheaper, and it does not require post-processing or real-time correction service. Such receivers provide location accuracy of around 1 to 2-meter. For more accurate geospatial products generation, the more accurate dual frequency receiver and precise services are need needed. The latter receivers offer two modes of operations, both of which yield positional accuracy of 1 to 3 cm with little or no ground controls required for the project. UAS vendors are fielding systems with two operational modes, those are:

- The real-time kinematic GNSS (RTK) mode: In this mode of operation, the UAS receives GPS position corrections in real time from a GPS correction service. This mode of operation has particular requirements:

  - RTK requires a GNSS base station equipped with a transmitter that has a reliable link to a fairly dynamic moving platform such as a UAS.

  - The rover (on the UAS) itself requires a dedicated receiver for the corrections.

- The post-processed kinematic (PPK) mode: This mode of operation does not require real-time GPS position corrections, as the acquired GPS data can be post-processed at a later date. RTK operations not only require a stationary base station during the UAS mission, but the location of that base station must also be surveyed before the UAS flies the project, which may complicate deployment of the mission in some circumstances. Although PPK requires a base station as well, the base station’s precise location can be determined later, after leaving the project area.

In principle, both RTK and PPK promise positional accuracies at the 1 to 3 cm level. The main purpose of RTK and PPK is to minimize or eliminate the need for ground control points, thereby reducing cost. For more details on GPS, please visit GPS Defined [42].
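The trade-offs described above can be summarized as a small decision helper. This is only a sketch under stated assumptions: the class, its field names, and the 2 m cut-off for accepting a single-frequency receiver are hypothetical choices made for illustration, and a real project would weigh cost and logistics as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MissionGnssNeeds:
    """Hypothetical summary of what a mission needs from its positioning."""
    required_accuracy_m: float          # accuracy the final product must meet
    needs_real_time_position: bool      # corrected positions needed in flight
    base_surveyed_before_flight: bool   # base station location already known
    reliable_correction_link: bool      # dependable link from base to the UAS


def choose_gnss_mode(needs: MissionGnssNeeds) -> str:
    """Pick a positioning mode using the accuracy figures quoted in the text."""
    # Single-frequency receivers deliver roughly 1 to 2 m, so accept them only
    # when the requirement is no tighter than 2 m (an assumed, cautious cut-off).
    if needs.required_accuracy_m >= 2.0:
        return "single-frequency"
    if needs.needs_real_time_position:
        # RTK needs a pre-surveyed base station and a reliable correction link.
        if needs.base_surveyed_before_flight and needs.reliable_correction_link:
            return "RTK"
        raise ValueError("real-time corrections requested, but RTK prerequisites are missing")
    # Corrections can wait, and the base location can be determined afterwards.
    return "PPK"


if __name__ == "__main__":
    survey = MissionGnssNeeds(0.03, False, False, False)
    print(choose_gnss_mode(survey))
```

The helper raises an error rather than silently falling back to PPK when real-time positions are requested, since PPK by definition cannot deliver corrected positions during the flight.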

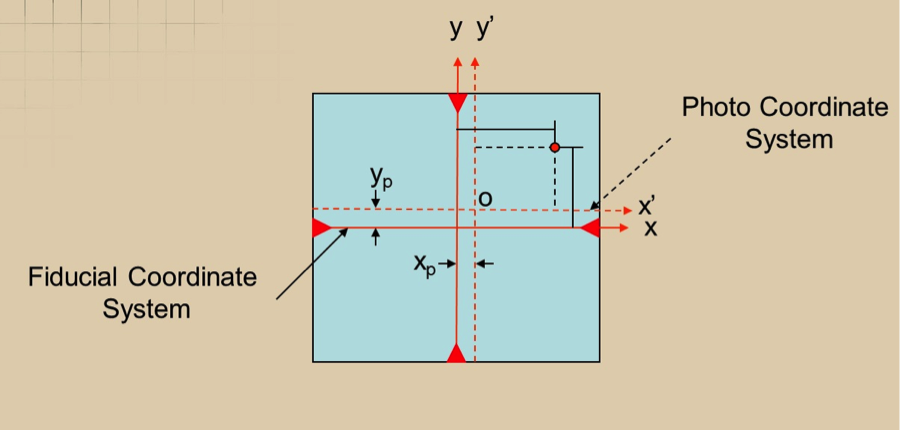

Inertial Measurement Unit (IMU)

An inertial measurement unit, or IMU [43], is an electronic device that measures and reports an aerial vehicle's velocity, orientation, and gravitational forces using accelerometers and gyroscopes. IMUs are typically used to control and maneuver manned aircraft, unmanned aerial vehicles (UAVs), and satellites. Another important use of the IMU is that it helps IMU-enabled GPS devices maintain positioning information when GPS signals are unavailable, such as in tunnels, inside buildings, or when electronic interference is present.
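To make the last point concrete, here is a minimal one-dimensional dead-reckoning sketch: starting from the last known GPS position and velocity, accelerometer samples are integrated to carry the position forward through an outage. The function name, the sample values, and the simple integration scheme are assumptions for illustration; real inertial navigation works in three dimensions and also uses the gyroscopes.

```python
def dead_reckon(position_m, velocity_m_s, accelerations_m_s2, dt_s):
    """Propagate a 1-D position through a GPS outage from accelerometer samples."""
    for acceleration in accelerations_m_s2:
        # Integrate acceleration into velocity, then velocity into position.
        velocity_m_s += acceleration * dt_s
        position_m += velocity_m_s * dt_s
    return position_m, velocity_m_s


if __name__ == "__main__":
    # Hypothetical outage: 60 s of samples at 10 Hz, vehicle actually at rest,
    # but the accelerometer carries a small constant bias of 0.05 m/s^2.
    biased_samples = [0.05] * 600
    drift_m, _ = dead_reckon(0.0, 0.0, biased_samples, 0.1)
    print(f"position error after 60 s: {drift_m:.1f} m")
```

The example shows why inertial positioning is only a short-term bridge: even a small uncorrected accelerometer bias produces a position error that grows roughly with the square of the outage time, which is why the IMU and GPS are used together.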

The IMU is the main component of the inertial navigation systems (INS) used in aircraft, spacecraft, watercraft, and guided missiles, as well as in geospatial mapping activities. The data collected from the IMU's sensors allow us to determine the orientation of the sensor, which is essential for geolocating each pixel of the sensor on the ground. The IMU, like other components necessary for the operation of UASs, has been miniaturized in weight and size to fit on small UASs. An example of these small IMUs, which are mainly designed for UASs, is the SBG 500E [44], illustrated in Figure 2.12.
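A short calculation shows why sensor orientation matters so much for pixel geolocation. Assuming a nadir-looking sensor over flat terrain (a simplification introduced here for illustration), a small error in the measured attitude shifts the ground location of a pixel by approximately the flying height times the tangent of the angular error. The function name and example values below are hypothetical.

```python
import math


def ground_offset_m(height_above_ground_m: float, attitude_error_deg: float) -> float:
    """Approximate ground shift of a nadir pixel caused by an attitude error.

    Assumes flat terrain and a sensor pointing straight down.
    """
    return height_above_ground_m * math.tan(math.radians(attitude_error_deg))


if __name__ == "__main__":
    # Hypothetical flight at 100 m above ground with a 1-degree attitude error.
    print(f"{ground_offset_m(100.0, 1.0):.2f} m")
```

Under these assumptions, a 1-degree attitude error at 100 m above ground displaces the pixel by roughly 1.7 m, which is far larger than the centimeter-level positions that RTK or PPK can provide.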

For more details on the IMU, you can visit the IMU Wikipedia page [43].

To Read

- Section 3.5 of Chapter 3 of Introduction to the Unmanned Aircraft Systems

- Chapters 10, 11, and 12 of Introduction to UAV Systems (Aerospace Series)

Launch and Recovery

The launch and recovery element is the area that requires the most human interaction. Some UASs require elaborate launching procedures, while others can be thrown by hand toward the sky. Some large UASs require long runways and other field support equipment such as fuel trucks, ground power units, and ground tugs. Similarly, the requirements for recovery procedures vary widely. Most small UASs that are used for geospatial projects require simple procedures and can be launched by hand or with the use of a catapult.

Some UASs, such as target drones, are air-launched from fixed-wing aircraft. Usually, large UASs are equipped with wheels for takeoff and landing and do not need special equipment, while smaller UASs need a variety of launch and recovery strategies depending on the complexity of the system.

A truck driven at a speed of 60 mph can be used to launch a small UAS, assuming that the launch site has a smooth surface for the truck to use. In this type of launching method, the UAS is held in a cradle above the truck cab with its nose pointed high toward the launching path (Figure 2.13). Once speed is sufficient for takeoff, the UAS is released and lifts upward toward its takeoff path.
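Whether a given truck speed is "sufficient for takeoff" can be estimated from the standard lift equation, in which the minimum flight speed is reached when lift equals weight. The sketch below assumes still air and sea-level air density, and every aircraft parameter in it (mass, wing area, maximum lift coefficient) is a hypothetical value chosen only to illustrate the calculation.

```python
import math

AIR_DENSITY_KG_M3 = 1.225      # standard sea-level density (assumed conditions)
GRAVITY_M_S2 = 9.80665
MPH_TO_M_S = 0.44704           # exact conversion factor


def mph_to_m_s(speed_mph: float) -> float:
    """Convert miles per hour to meters per second."""
    return speed_mph * MPH_TO_M_S


def min_flight_speed_m_s(mass_kg: float, wing_area_m2: float, max_lift_coeff: float) -> float:
    """Speed at which lift equals weight: v = sqrt(2 m g / (rho S C_Lmax))."""
    weight_n = mass_kg * GRAVITY_M_S2
    return math.sqrt(2.0 * weight_n / (AIR_DENSITY_KG_M3 * wing_area_m2 * max_lift_coeff))


if __name__ == "__main__":
    truck_speed = mph_to_m_s(60.0)
    # Hypothetical small UAS: 20 kg, 1.0 m^2 wing, maximum lift coefficient 1.2.
    needed = min_flight_speed_m_s(20.0, 1.0, 1.2)
    print(f"truck: {truck_speed:.1f} m/s, needed: {needed:.1f} m/s")
    print("sufficient" if truck_speed > needed else "insufficient")
```

For these assumed values, 60 mph (about 26.8 m/s) comfortably exceeds the roughly 16 m/s needed, leaving margin above the minimum flight speed. A headwind would add to the airspeed and a tailwind would reduce it.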

Many small and medium-sized UAS launch systems have a requirement to be mobile, or in other words, to be mounted on a truck or a trailer. Such mobile launchers fall within one of the following types:

- Rail Launchers: The UAS is held fast to a guide rail while it is accelerated to launch speed.

- Pneumatic Launchers: Compressed air or gas is used to provide the necessary force for launching the UAS.

- Hydraulic/Pneumatic Launchers: Compressed gaseous nitrogen is used as the power source for launch.

- Zero-Length Rocket-Assisted Takeoff (RATO) Launching: There is no rail or track used in this mode of launching. The UAS rises directly from a holding mechanism, and it will be in free flight once the rocket is fired.

For more details on these launchers, refer to Chapter 17 of the supplemental textbook Introduction to UAV Systems, 4th edition.
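For the rail-type launchers above, the relationship between rail length and launch speed gives a feel for the loads involved. Assuming constant acceleration along the rail (a simplification), the required acceleration is the square of the launch speed divided by twice the rail length. The launch speed and rail length in the example are hypothetical.

```python
GRAVITY_M_S2 = 9.80665


def rail_acceleration_m_s2(launch_speed_m_s: float, rail_length_m: float) -> float:
    """Constant acceleration needed to reach launch speed over the rail: a = v^2 / (2 L)."""
    if rail_length_m <= 0:
        raise ValueError("rail length must be positive")
    return launch_speed_m_s ** 2 / (2.0 * rail_length_m)


if __name__ == "__main__":
    # Hypothetical launcher: 25 m/s launch speed from a 4 m rail.
    accel = rail_acceleration_m_s2(25.0, 4.0)
    print(f"{accel:.1f} m/s^2, about {accel / GRAVITY_M_S2:.1f} g")
```

Because the acceleration scales inversely with rail length, a short mobile launcher imposes high loads on the airframe and payload, which is one reason launcher choice depends on the system being launched.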

To Read

- Section 3.6 of Chapter 3 of Introduction to the Unmanned Aircraft Systems

- Chapter 17 of Introduction to UAV Systems (Aerospace Series)

The Human Element

As with any other technology that requires human intervention for safe operation, human involvement is considered the most important element for the successful and safe operation of the UAS. Even with autonomous flights using autopilot, the human role during launch and recovery is crucial to the operation of the UAS. As navigation technology develops further, the human role in operating a UAS will diminish dramatically.

The human element is key in almost all operational aspects of any UAS and plays a great role in the success and survival of its operation. Starting with mission planning, humans have to design and arrange a concept of operation in order to guarantee success. Equally important is the human role in the flight control process. Autopilot can do only so much without the guidance and intervention of the operator.

The role of the pilot and the observer should not be underestimated, as without them the flight will not occur. This is true even for the most sophisticated drones, such as the Predator. Even the Predator, with its built-in sophistication and automation, needs a pilot to fly it. The human element is involved in all of the following aspects of operating a UAS:

- Mission planning and control: has to be performed by an operating team.

- Launch and recovery procedure: has to be performed by an operating team.

- Payload management and control: has to be managed by an operating team.

- Data links monitoring: has to be managed by an operating team.

- Ground support equipment coordination and management: has to be performed by an operating team.

Automation in operating a UAS results in less human intervention, but it will never eliminate the role of the human in such an operation. Imagine that an airline invites you to be on board an airplane flown solely by autopilot. There are no pilots on board. Would you accept such an invitation? I am certain your answer would be a big NO. Using the same analogy, could you imagine operating a UAS, which is less sophisticated than a jetliner, without a pilot and without an observer? That is how important the human role is in operating a UAS. That is at least true for the time being. Who knows what the future may bring to this field?

To Read

- Williams, K. Human Factors Implications of Unmanned Aircraft Accidents: Flight-Control Problems [23], FAA report, DOT/FAA/AM-06/8 Office of Aerospace Medicine Washington, DC 20591- April 2006

Summary and final tasks

Summary

Congratulations! You've finished most of the Lesson 2 material. What I hope you learned from this lesson is what you need to know about the different elements that form a UAS. The payload section is very valuable to individuals with a background in geospatial mapping, as it goes through the different sensors utilized by the industry today. Understanding the functionality of each of the UAS elements will help you in the coming lessons, where we are going to talk about Concepts of Operation (CONOP), risk assessment, and Certificate of Authorization (COA). Therefore, please make sure that you understand the different topics of this lesson, and do not hesitate to ask questions.

Final Tasks

| Task | Description |
|---|---|
| 1 | Complete Lessons 1 & 2 Quizzes |
| 2 | Complete the discussion assignment on SWOT analysis in lesson 2 on CANVAS |
| 3 | Install Pix4D software. Pix4D is the data processing software you will use to process UAS imagery. Follow the instructions in Canvas. |

Lesson 3: Concept of Operation (CONOP) and Risk Assessment for UAS

Lesson 3 Introduction

Welcome to Lesson 3! In this lesson, you will become familiar with the concept of operating a UAS and how to design a Concept of Operation (CONOP). The CONOP subject focuses on the pre-flight description of the mission that a UAS operation will go through. You will also learn how to analyze the risks surrounding UAS operations and how to assess and mitigate the impact of such risks.

Lesson Objectives

At the successful completion of this lesson, you should be able to:

- understand the concept of operation design strategy;

- understand risk assessment;

- design a CONOP and risk assessment for a UAS mission.

Lesson Readings

- Gebre-Egziabher, D., et al., "Analysis of Unmanned Aerial Vehicles Concept of Operations in ITS Applications [46]"

- FAA document “Integration of Unmanned Aircraft Systems into the National Airspace System Concept of Operations [47]”

- Chapter 7, "Safety Assessment," of textbook 1: Barnhart, et al., Introduction to Unmanned Aircraft Systems, 2nd edition

- Lamb, G., et al., "Air Combat Command [48]"

- CONOP developed by NOAA’s National Weather Service : "River Forecast Center (RFC) Analysis and Gridded Forecast Editor Improvement [49]"

Lesson Activities

- Complete your CONOP and Risk assessment analysis as described above. Drop your completed MS Word document in the drop box in lesson 5. Watch for the actual assignment due date. (7 points)

- Complete the Lesson 3 Quiz.

- Preliminary Project Idea Milestone: submit preliminary project idea/proposal in the "Preliminary project idea" dropbox.

- Pix4D is the data processing software you will use to process UAS imagery. Install the Pix4D software using the instructions in Canvas.

- Download and practice Mission Planner Software with these instructions. [50]

- Complete your CONOP and Risk assessment analysis as detailed in the section entitled "Development of CONOP and Risk Assessment." Drop your completed MS Word document in the drop box. Watch for the actual assignment due date. (7 points)

CONOP Elements

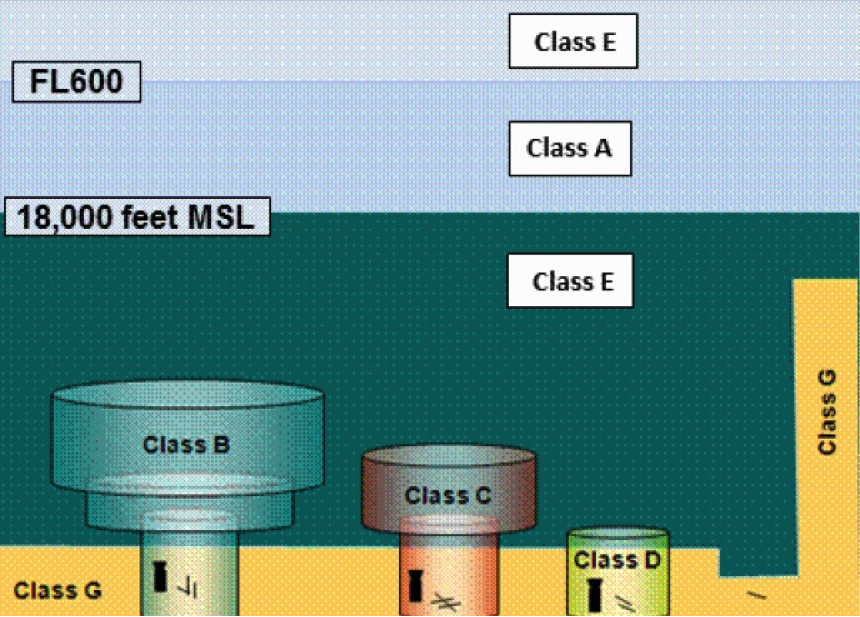

The term CONOP means a complete description of the mission that a UAS operation goes through from launch to recovery. The CONOP includes a procedure for carrying out the mission to achieve the mission objectives. The procedure depends on the system configuration and capabilities. The capabilities of the UAS, which are determined by components and characteristics such as its sensors, guidance, endurance (in time), weather limitations (ceilings, wind speeds, etc.), and navigation and control, play a key role in defining the CONOP. The CONOP may also depend on other factors, such as safety considerations for the UAS as well as for lives and property along the flying path of the mission. The procedure will also include weather conditions such as wind speed and visibility, as the mission may be halted or terminated if favorable weather conditions are not met.
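The weather portion of a CONOP procedure can be thought of as a set of limits checked against current conditions before and during the mission. The sketch below is one possible way to express that check; the class names, fields, units, and limit values are all hypothetical and would come from the specific UAS and its operating rules in practice.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class WeatherLimits:
    """Hypothetical weather limits written into a CONOP."""
    max_wind_speed_m_s: float
    min_visibility_km: float
    min_ceiling_m: float


@dataclass(frozen=True)
class WeatherReport:
    """Observed conditions at the operating site."""
    wind_speed_m_s: float
    visibility_km: float
    ceiling_m: float


def weather_violations(report: WeatherReport, limits: WeatherLimits) -> List[str]:
    """Return the list of limits that the current weather violates."""
    violations = []
    if report.wind_speed_m_s > limits.max_wind_speed_m_s:
        violations.append("wind speed above limit")
    if report.visibility_km < limits.min_visibility_km:
        violations.append("visibility below limit")
    if report.ceiling_m < limits.min_ceiling_m:
        violations.append("ceiling below limit")
    return violations


if __name__ == "__main__":
    limits = WeatherLimits(max_wind_speed_m_s=10.0, min_visibility_km=5.0, min_ceiling_m=300.0)
    now = WeatherReport(wind_speed_m_s=12.0, visibility_km=8.0, ceiling_m=500.0)
    problems = weather_violations(now, limits)
    print("GO" if not problems else f"NO-GO: {', '.join(problems)}")
```

Returning the list of violated limits, rather than a bare yes or no, keeps a record of why a mission was halted or terminated.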

The FAA expects the provided CONOP:

- to give the FAA a clear understanding of the proposed operations;

- to include:

  - a description of the UAS;

  - details of the intended use;

  - the proposed area of operations;

  - the intended classes of airspace;

- to enable or to include the development of the Operational Risk Assessment (ORA).
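The items the FAA expects can be treated as a checklist when drafting a CONOP. The following sketch models the four "to include" items as a simple record and reports which ones are still empty. The class and field names are hypothetical and are not an FAA format.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class ConopDraft:
    """Hypothetical container for the items the FAA expects in a CONOP."""
    uas_description: str = ""
    intended_use: str = ""
    area_of_operations: str = ""
    airspace_classes: List[str] = field(default_factory=list)


def missing_items(draft: ConopDraft) -> List[str]:
    """Return the names of the expected items that are still empty."""
    return [f.name for f in fields(draft) if not getattr(draft, f.name)]


if __name__ == "__main__":
    draft = ConopDraft(
        uas_description="Small fixed-wing UAS with a mapping camera",
        intended_use="Aerial imagery collection for terrain mapping",
    )
    print(missing_items(draft))
```

A check like this only confirms that each item has been addressed. It says nothing about whether the descriptions give the clear understanding of the proposed operations that the FAA expects.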

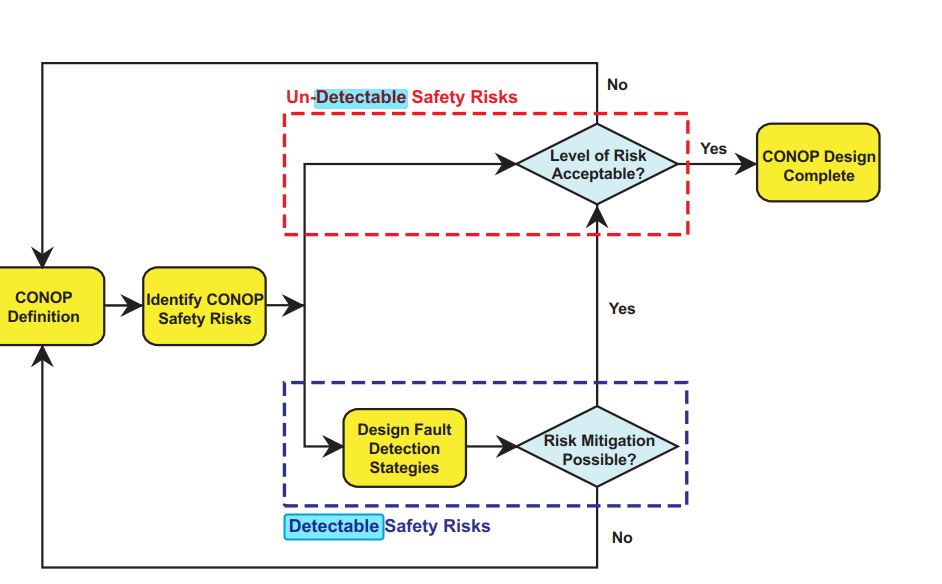

There are many ways to design a CONOP, one of which is described in the final report published by the ITS Research Institute of the University of Minnesota entitled “Analysis of Unmanned Aerial Vehicles Concept of Operations in ITS Applications.” That report describes the following main elements of the design process:

- CONOP Definition

- Identification of CONOP Safety Risks

- Designing Fault Detection Strategies

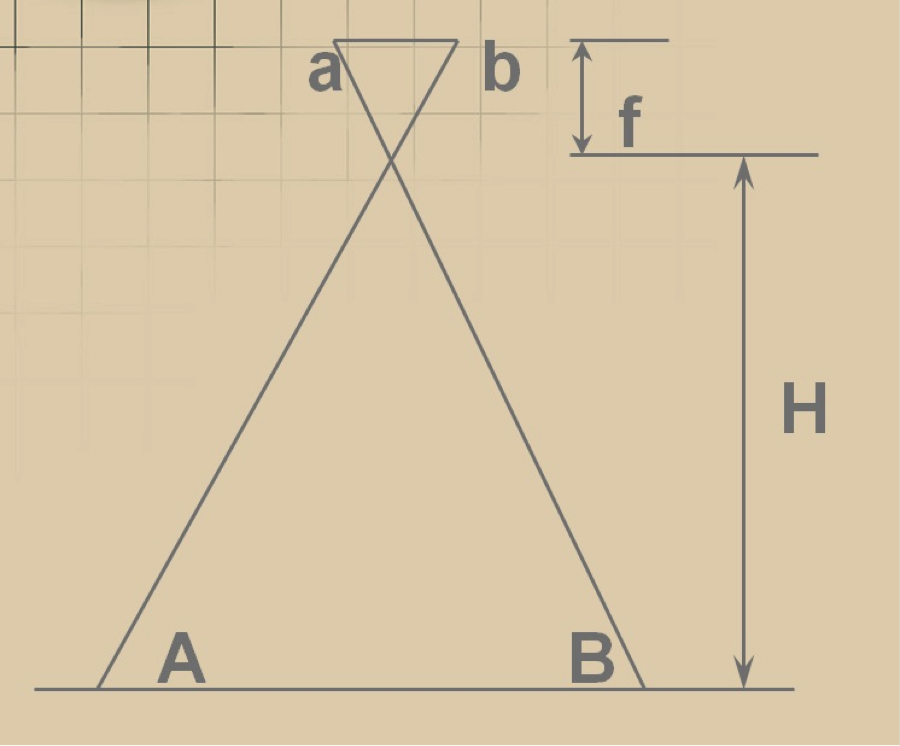

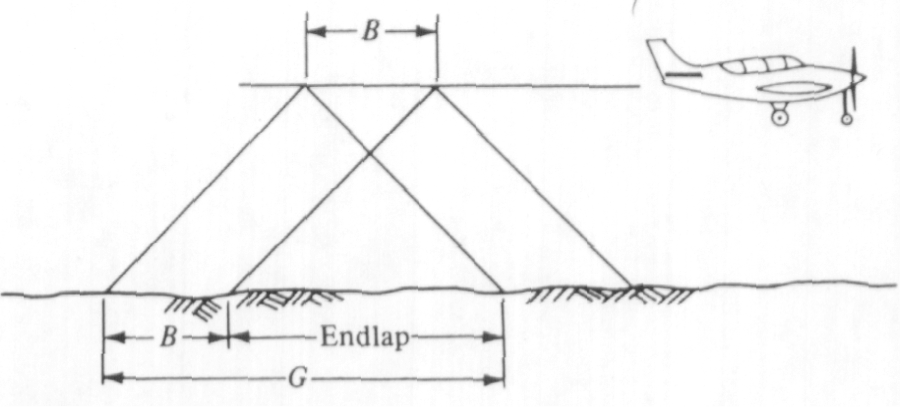

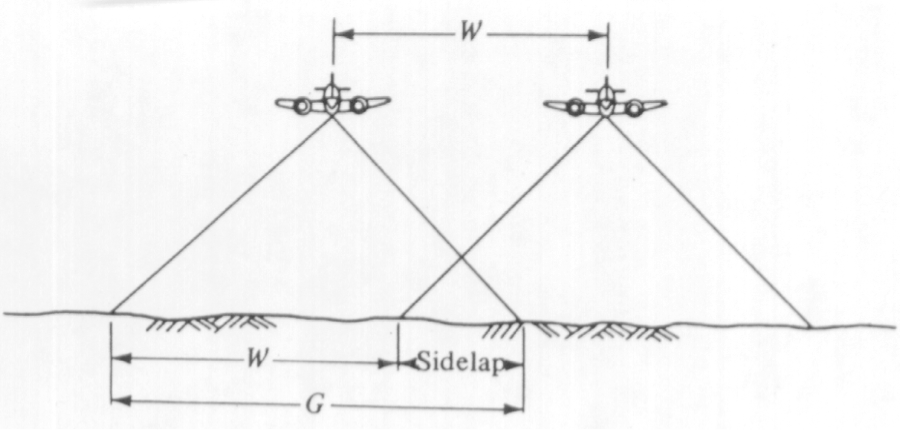

Figure 3.1 illustrates a flow chart of the design process for a small UAS concept of operation (CONOP).

Many details need to be identified to complete CONOP development, including answers to questions such as:

- What are the known elements and the high-level capabilities of the system?

- What are the geographical and physical extents of the mission under execution?

- What is the time-sequence of activities that will be performed?

- What resources are needed to design, build, or retrofit the system?

- Which kinds of risks are associated with the different components of the system?

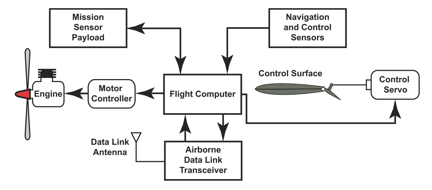

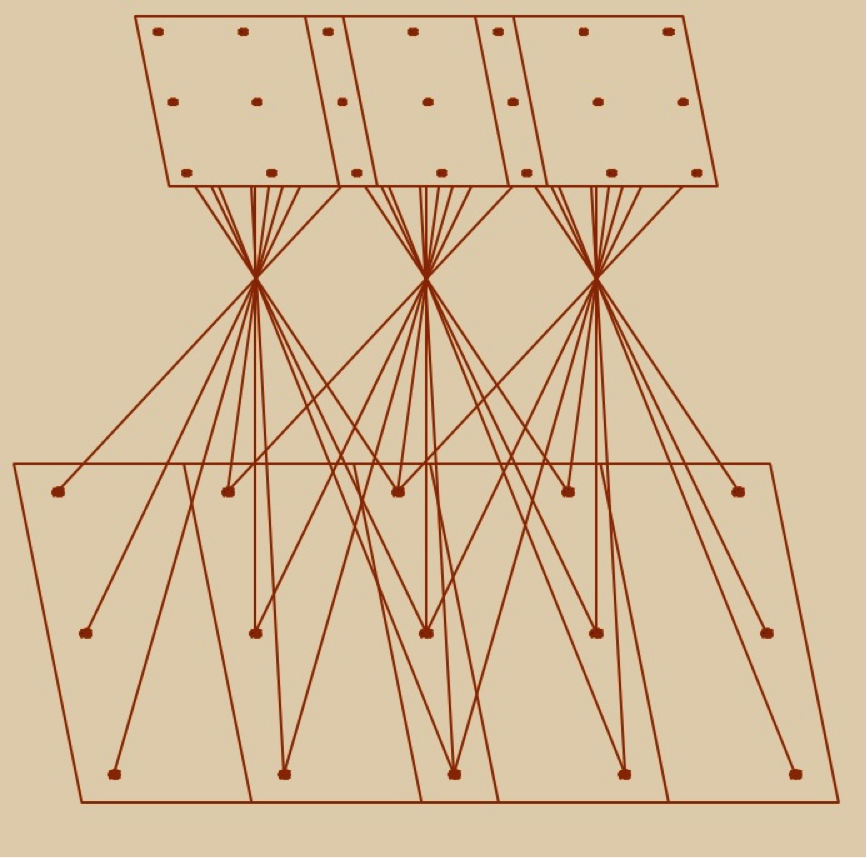

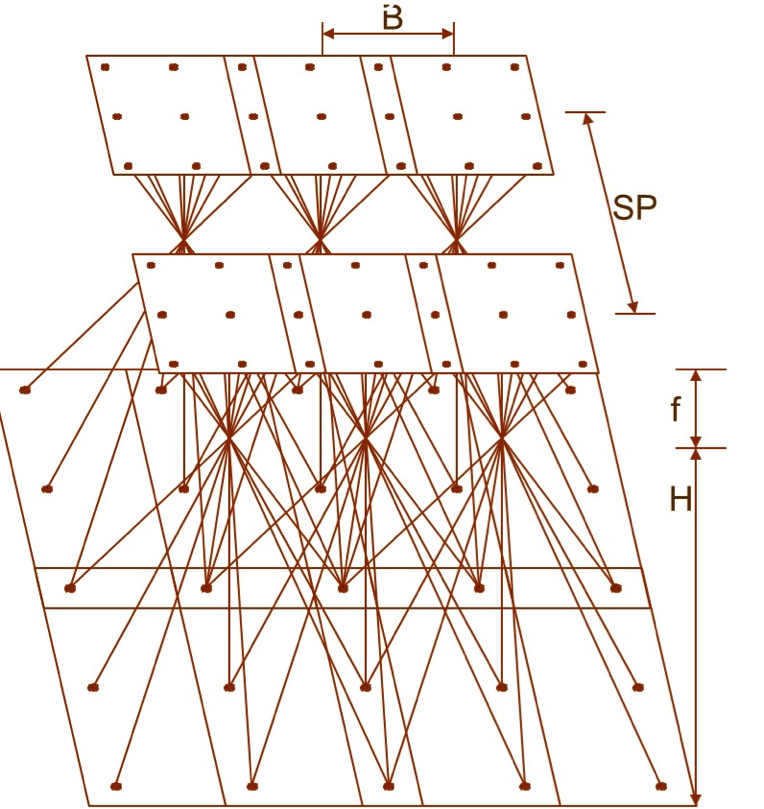

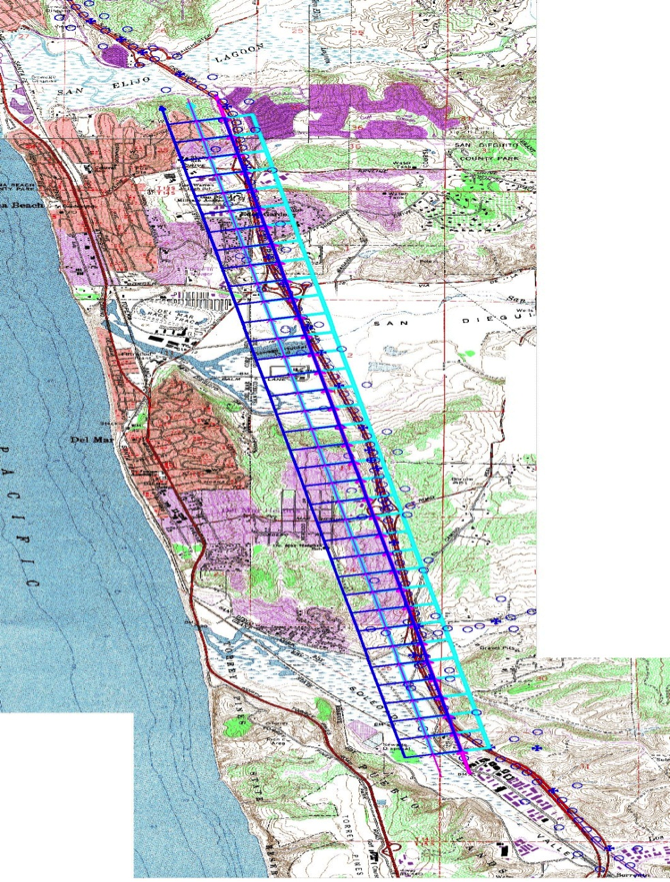

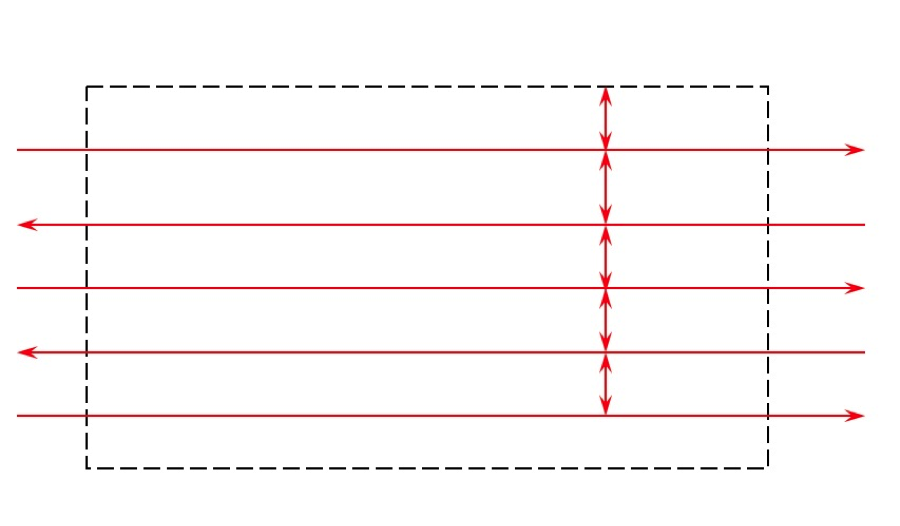

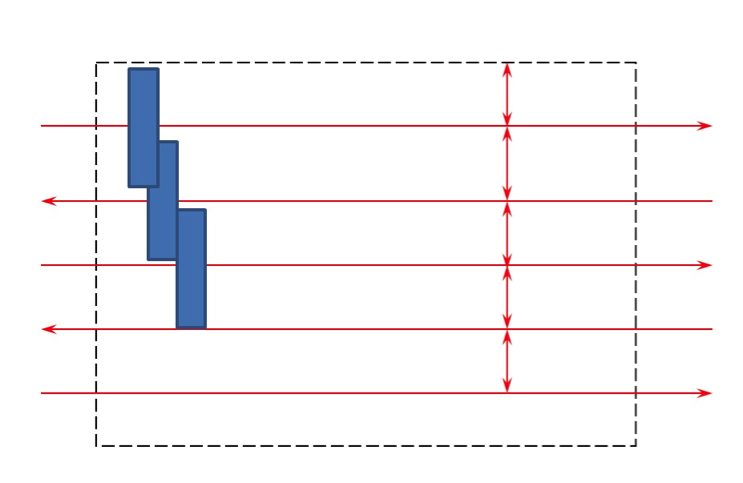

The block diagram in Figure 3.2 illustrates the components that make up most of the UASs available in the market today. Such a diagram is very beneficial to CONOP analysis and development, as it lists all the sub-systems included in a UAS. As you can see below, the main components that concern us in this course are the mission sensor payload, airborne data link, and navigation and control sensors. The mission sensor payload represents the highest priority for geospatial data users. Types and quality of sensors within the mission sensor payload block are directly linked to the end user's needs and expectations. The other two blocks, the airborne data link and navigation and control sensors, mainly concern the FAA and its regulations. Main FAA concerns lie in the quality of the communications and the navigational systems that steer and control the aircraft.

At the center of this schematic drawing is a rectangle containing the words “Flight Computer.” Arrows leave the Flight Computer from the left, the right, and from its top and bottom.