Lessons

Use the links below to link directly to one of the lessons listed.

† indicates lessons that are Open Educational Resources.

Lesson 1: Introduction to Time Series Data

Motivate...

The interactive tool above from NASA called ‘Climate Time Machine’ showcases a selection of variables that have been documented over a period of time. These videos come from a variety of time series. In Meteo 815, you worked with time series data, but in this course, we are going to utilize this data in ways that take advantage of the time component. The two primary purposes for time series data in this course will be 1) to explore a natural phenomenon (such as changes in a variable, like temperature) and 2) for forecasting.

In this lesson, we will begin with the basics. We will focus on breaking down the time series into components, perform initial analyses, and begin to look at common patterns in weather and climate data. There are a lot of time series data out there. I highly recommend checking out the NASA App ‘Eyes on Earth [8]’ which allows you to look and play with some of this data. Or you can check out Science On a Sphere [9] which has a variety of movies (intended for a sphere but still pretty cool) that showcase an enormous amount of weather/climate variables through time. They are both great ways to appreciate the amount of data out there and the length of these datasets.

Lesson Objectives

- List the most important aspects to consider when performing a time series analysis.

- Distinguish between stationary and nonstationary.

- Identify common patterns in weather and climate data.

- Prepare and decompose a time series.

Newsstand

- Srivastava, Tavish. (2015, December 16). A Complete Tutorial on Time Series Modeling in R [10]. Retrieved August 14, 2017.

- NOAA. Natural Climate Patterns [11]. Retrieved August 14, 2017.

- NOAA. Locating Climate and Weather Data and Information [12]. Retrieved August 14, 2017.

- NASA. Eyes on the Earth [8]. Retrieved August 14, 2017.

- NOAA. Dataset Catalog [9]. Retrieved August 14, 2017.

Time Series Overview

Prioritize...

After reading this section, you should be able to define a time series and describe the importance of having long datasets.

Read...

As with Meteo 815, we need to be cautious before we begin an analysis. Before we dive into all the applications of a time series, we will first talk about the time series data itself. In this lesson, you will learn a very simplistic approach meant to illustrate how the components of a time series all add together. We will hit it with more powerful tools in later lessons. For now, let’s begin by defining a time series.

What is a time series?

A time series is a sequence of measurements spanning a period of time. Usually, these measurements are taken at equal intervals. Although we inadvertently used time series data in Meteo 815, we generally discussed the random samples of observations, not the sequence. In fact, the assumption for many of the statistics we computed in Meteo 815 included the need for randomly sampled data. For a time series, the key assumption will instead be that each successive value of the dataset represents consecutive measurements of the variable, usually at equal time intervals.

Why do we care about a time series?

There are two main goals, in this course, for examining a time series. The first is to identify the nature of a weather or climate phenomenon. We might observe something occurring and ask why that event occurred. We could use a time series to investigate that particular event in more detail.

Check out the video below for an example:

[THUNDEROUS SOUNDS]

PRESENTER: Here's the latest from Earth Now.

[BIRDS CAWING]

Extreme weather in the Northern Hemisphere is increasingly blamed on arctic amplification. What is arctic amplification, and how does it affect our weather? The display first shows a satellite image of Arctic sea ice from September 1980.

[WAVES ROLLING]

Ice is colored white. The temperature differences between the cold poles and the warm tropics, combined with the Earth's rotation, cause air to flow eastward fastest over the mid-latitudes, where most of us live. This is called the jet stream and is shown here in red and blue.

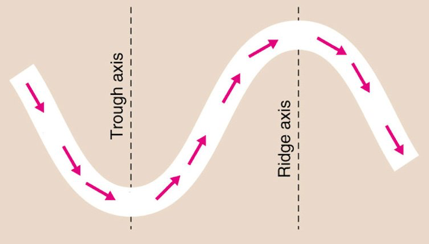

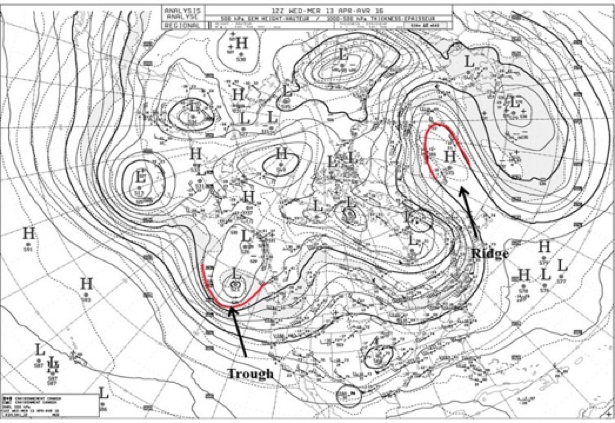

Approaching North America, the jet stream moves northward over the Rocky Mountains, then dips southward, forming a trough towards the east coast, then northward as a ridge over the Atlantic Ocean. It continues in a similar long wave pattern over Europe, Asia, and the Pacific. Storms develop and track along the jet stream, pushing cold air south, shown as the blue portion of the jet stream, and warm air north, shown as the red portions of the jet stream.

Now, the display shows the recent arctic sea ice from September 2012, the lowest ice extent ever recorded by satellites. The current typical jet stream location is now shown, with the previous ice extent and jet stream location typical of 30 years ago shown in pink for comparison.

[BIRDS CAWING]

During the past few decades, general warming in the atmosphere has accelerated arctic ice melt, leading to more seasonal ice cover, which is not as white and therefore absorbs more sunlight, in turn causing more warming. The Arctic has warmed twice as fast as the rest of the Northern Hemisphere. This is arctic amplification.

Scientists have observed that the reduced temperature difference between the North Pole and the tropics is associated with slower west to east jet stream movement and a greater north south dip in its path. This pattern causes storms to stall and intensify rather than move away, as they normally used to do. At mid-latitudes, more extreme weather results from this new pattern, including droughts, floods, cold spells, and heat waves.

[WAVES ROLLING]

And that is how arctic amplification is affecting our weather.

[WAVES ROLLING]

It shows how the Arctic affects extreme weather. You can find a description here of the video [14], but let me briefly describe the premise. The number of extreme weather events has increased over the past several decades. This could be due to a number of reasons, including better monitoring. But one hypothesis is that Arctic Amplification (rapid Arctic warming - twice as fast as the rest of the Northern Hemisphere) may be a cause. So by examining the time series of extreme weather events and Arctic ice melt, a scientist could understand the interaction in more detail.

The second reason we analyze a time series is for forecasting. By identifying patterns in time, we can extrapolate to predict occurrences in the future. We can also use a time series to identify the dominant time scales across which variability occurs. This tells us a lot about which extrapolation forecasts will be useful. Meteo 825 will focus on forecasting so it’s key that you understand this course to utilize the information for the follow on.

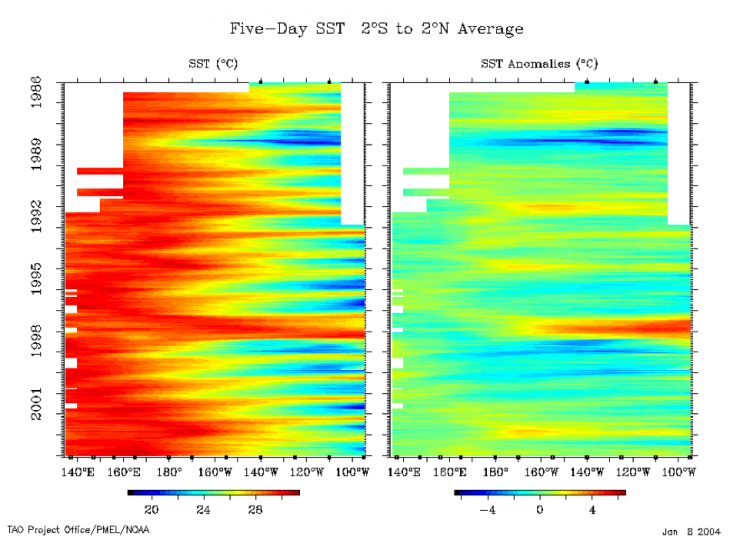

Check out the video below:

[ON SCREEN TEXT]: Ocean temperatures influence the atmosphere above, impacting weather and climate. Temperatures at the ocean's surface are constantly changing. Over time, seasonal patterns emerge. A band of warm weather shifts north and south as the seasons change.

The El Niño/Southern Oscillation (ENSO) cycle is a climate pattern that occurs approximately every five years. ENSO is marked by changing temperatures across the eastern tropical Pacific Ocean. ENSO has two phases: warmer than average and cooler than average When surface temperatures along the eastern tropical Pacific become warmer than average the phase is called El Niño. El Niño drives extreme weather worldwide. El Niño's opposite phase is called La Niña. In 2010, El Niño's warm waters cooled quickly, giving way to a strong La Niña. The 2010-2011 La Niña was behind many of the year's weather disasters. Scientists use sea surface temperature observations to forecast weather and understand the global climate system.

ENSO is an oscillation that will be discussed in more detail later on. The video is showing how we can use the ENSO cycle to predict weather events (like droughts, floods, cold temperatures, etc.) for the season. By analyzing the time series of ENSO and these other weather events, we can use the relationship to forecast future weather events.

In short, performing a time series analysis can be quite valuable both for investigating connections and utilizing the results for forecasting.

What do we need to consider when examining a time series?

Generally speaking, when we perform a time series analysis the main goal is to detect a reproducible pattern. This means that, in most cases, we will assume that the data has a pattern with some random noise and/or error. The random noise is what makes it difficult to pull out patterns.

Throughout the lesson, we will examine some potential issues that may arise with a time series. Whenever you begin a time series analysis, it would be beneficial to consider the points listed below (which will be discussed in more detail later on) as they may pose a problem in the actual analysis. We can correct these potential problems if we detect them before conducting the time series analysis:

- Are there any missing values?

- Are there any abrupt changes or shifts (could be natural or artificial)?

- Is there constant variance over the time period?

- Are there regularly repeating patterns (daily, monthly, etc.)?

- Is there a trend (steady increase or decrease over time)?

Prepare Data for Time Series Analysis

Prioritize...

After reading this section, you should be able to prepare a dataset for a time series analysis, check for inhomogeneity, deal with missing values, and smooth the data if need be.

Read...

As with the statistical methods we discussed in Meteo 815, preparing your data for a time series analysis is a crucial step. We need to understand the ins and outs of the data and account for any potential problems prior to the analysis. If you spend the time investigating and prepping the data up front, you will save time in the long term.

Plot

As with Meteo 815 and most other data analysis courses, you should always begin your analysis by plotting the data. This helps us understand the basics of the data and look for any potential problems. Plotting also allows us to check for missing values or inhomogeneities that may be missed from quantitative approaches. ALWAYS PLOT YOUR DATA!

For a time series analysis, the best plot is a time series plot (as opposed to boxplot, histograms, etc., that we used predominantly in Meteo 815). In R, you can use the function ‘plot’ and set X to time and Y to the data. Or you can use the specific function ‘plot.ts’ that can plot a time series object automatically. You can find more information on ‘plot.ts’ here [15].

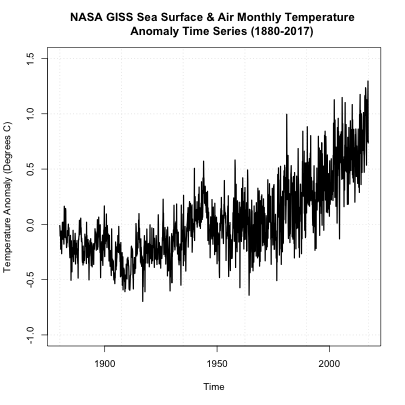

Let’s work through an example. Consider this global SST and air temperature anomaly time series data from NASA GISS [16]. Let’s load the data using the code below:

Your script should look something like this:

# Install and load in RNetCDF

install.packages("RNetCDF")

library("RNetCDF")

# load in monthly SST Anomaly NASA GISS

fid <- open.nc("NASA_GISS_MonthlyAnomTemp.nc")

NASA <- read.nc(fid)

This time series data spans from January 1880 to present. The monthly data is on a 2x2 degree latitude-longitude grid. The base period for the anomaly is 1951-1980, meaning the anomalies are computed by subtracting off the average from the base period. This dataset is a mixture of observations and interpolation. Let’s compute the global average and save a time variable using the code below:

Your script should look something like this:

# create global average NASAglobalSSTAnom <- apply(NASA$air,3,mean,na.rm=TRUE) # time is in days since 1800-1-1 00:00:0.0 (use print.nc(fid) to find this out) NASAtime <- as.Date(NASA$time,origin="1800-01-01")

Now, let’s plot the time series using the ‘plot’ function by running the code below.

You should get a plot like this:

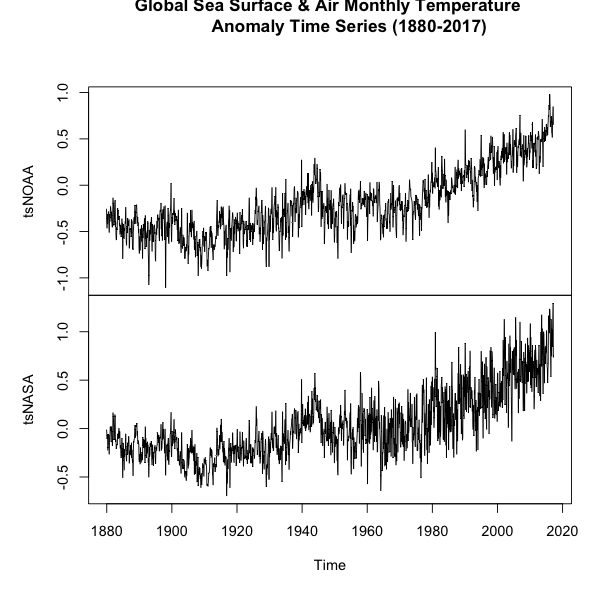

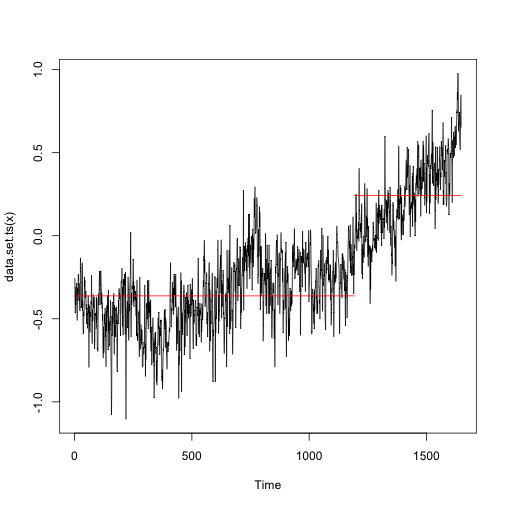

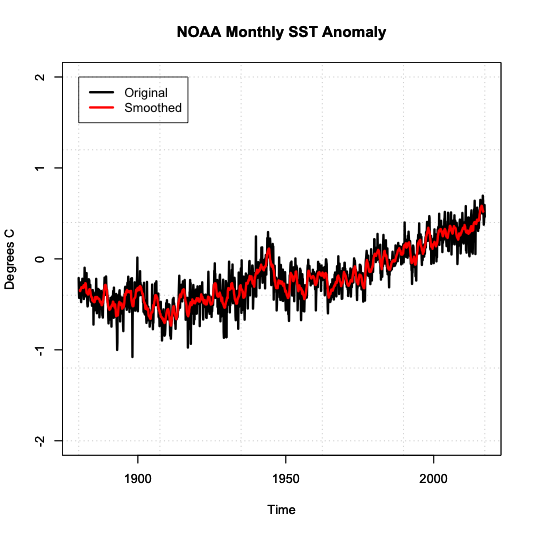

On first inspection, it appears there are no missing data points. There was a steady increase after 1950 and a spike in the 90s. There doesn’t appear to be any super-abnormal points, but the variance seems to change with noisier data points later on (notice the increase in fluctuation from month-month). The figure, to me, suggests we should perform an inhomogeneity test (which we will discuss later on). But before we move forward, let’s also take a look at another dataset of global SST and air temperatures. This dataset comes from NOAA and was originally called ‘MLOST’. You can download it here. [17] Let’s load it in and create our global average using the code below:

Your script should look something like this:

# load in monthly SST Anomaly NOAA MLOST

fid <- open.nc("NOAA_MLOST_MonthlyAnomTemp.nc")

NOAA <- read.nc(fid)

# create global average

NOAAglobalSSTAnom <- apply(NOAA$air,3,mean,na.rm=TRUE)

# time is in days since 1800-1-1 00:00:0.0 (use print.nc(fid) to find this out)

NOAAtime <- as.Date(NOAA$time,origin="1800-01-01")

# create ts object

tsNOAA <- ts(NOAAglobalSSTAnom,frequency=12,start=c(as.numeric(format(NOAAtime[1],"%Y")),as.numeric(format(NOAAtime[1],"%M"))))

tsNASA <- ts(NASAglobalSSTAnom,frequency=12,start=c(as.numeric(format(NASAtime[1],"%Y")),as.numeric(format(NASAtime[1],"%M"))))

# unite the two time series objects

tsObject <- ts.union(tsNOAA,tsNASA)

Now, let's plot the time series using the 'plot.ts' function by running the code below.

You should get a plot like this:

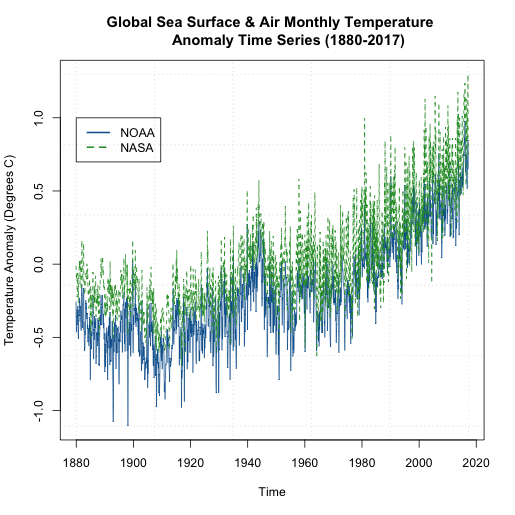

If you examine the figures, you will see that both follow a similar shape, but there are some discrepancies. Let’s overlay the plots to see how different the two datasets are. We will use another time series plot function called ‘ts.plot’. Run the code below.

You should get a figure like this:

We can see that that the global NASA SST and air temperature anomalies are generally ‘above’ the NOAA dataset. But they are plotting the same thing? How can this be? Well, let’s take a closer look at what the NOAA dataset is. For starters, the temperatures come predominantly from observed data (Global Historical Climatology Network) with an analysis of SST merged in. In addition, the NOAA dataset is on a 5x5 degree grid instead of a 2x2 like the NASA GISS version. Furthermore, the NOAA dataset has limited coverage over the polar regions, so when we create the global averages, we are potentially including more points in the NASA version than the NOAA version, with a significant region being discounted in the NOAA version. You can read more about these two versions as well as a few other well documented and used air/SST datasets here [18].

But the bigger question is, if we pick one dataset over the other, are we creating a bias in our time series analysis? It really depends on the analysis, but noting these potential problems is important. I want you to start recognizing potential problems that can affect your outcome. Being skeptical at every step is a good habit to get into.

Missing Values

One of the main goals when performing a time series analysis, is to pull out patterns and model them for forecasting purposes. Missing values, as we saw in Meteo 815, can pose problems for the model fitting process. Before you continue on with a time series analysis, you need to check for missing values and decide on how you will deal with them. In most cases, for this course, you will need to perform an imputation. As a reminder, imputation is the process of filling missing values in. We discussed a variety of techniques in Meteo 815. Below is a list of some of the methods we discussed. If you need more of a refresher, I suggest you review the material from the previous course.

- Central Tendency Substitution - We replace missing values with the mean, median, or mode of the dataset.

- Previous Observation Carried Forward - We replace the missing value with the closest neighbor (both temporally and spatially).

- Regression - We fit one variable to another and use a linear regression to estimate the missing values.

I mentioned that a weakness in the NOAA data set was a lack of information in the polar regions. When we averaged globally, we just left out those values. Instead, let’s perform an imputation and then globally average the data. This is going to be a spatial imputation, so for each month, I will fill in missing values to create a globally covered product. I’m going to perform a simple imputation that takes the mean of the surrounding points.

Let’s start by plotting one month of the data using 'image.plot' to double check that there are missing values. To do this, let’s first extract out the latitudes and longitudes. Remember, when using ‘image.plot’ the values of ‘x’ and ‘y’ (your lat/lon) need to be in increasing order.

Your script should look something like this:

# extract out lat and lon accounting for EAST/WEST NOAAlat <- NOAA$lat NOAAlon <- c(NOAA$lon[37:length(NOAA$lon)]-360,NOAA$lon[1:36]) # extract out one month of temperature NOAAtemp <- NOAA$air[,,1] NOAAtemp <- rbind(NOAAtemp[37:length(NOAA$lon),],NOAAtemp[1:36,])

Now that we have the correct dimensions and order, let’s plot.

Your script should look something like this:

# load in tools to overlay country lines

install.packages("maptools")

library(maptools)

data(wrld_simpl)

# plot global example of NOAA data

library(fields)

image.plot(NOAAlon,NOAAlat,NOAAtemp,xlab="Longitude",ylab="Latitude",legend.lab="Degrees C",

main=c("NOAA Air & SST Anomaly for ", format(NOAAtime[1],"%Y/%m")))

plot(wrld_simpl,add=TRUE)

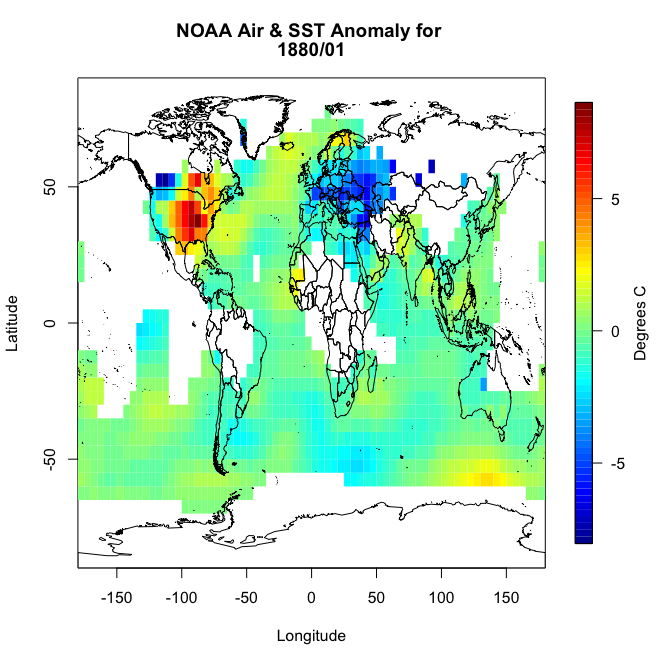

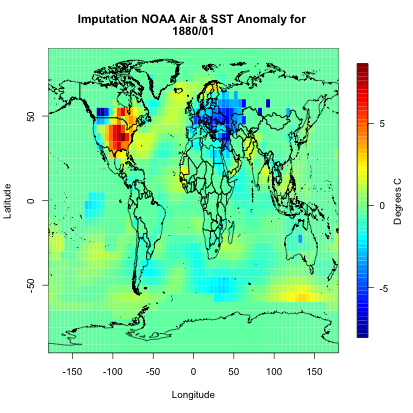

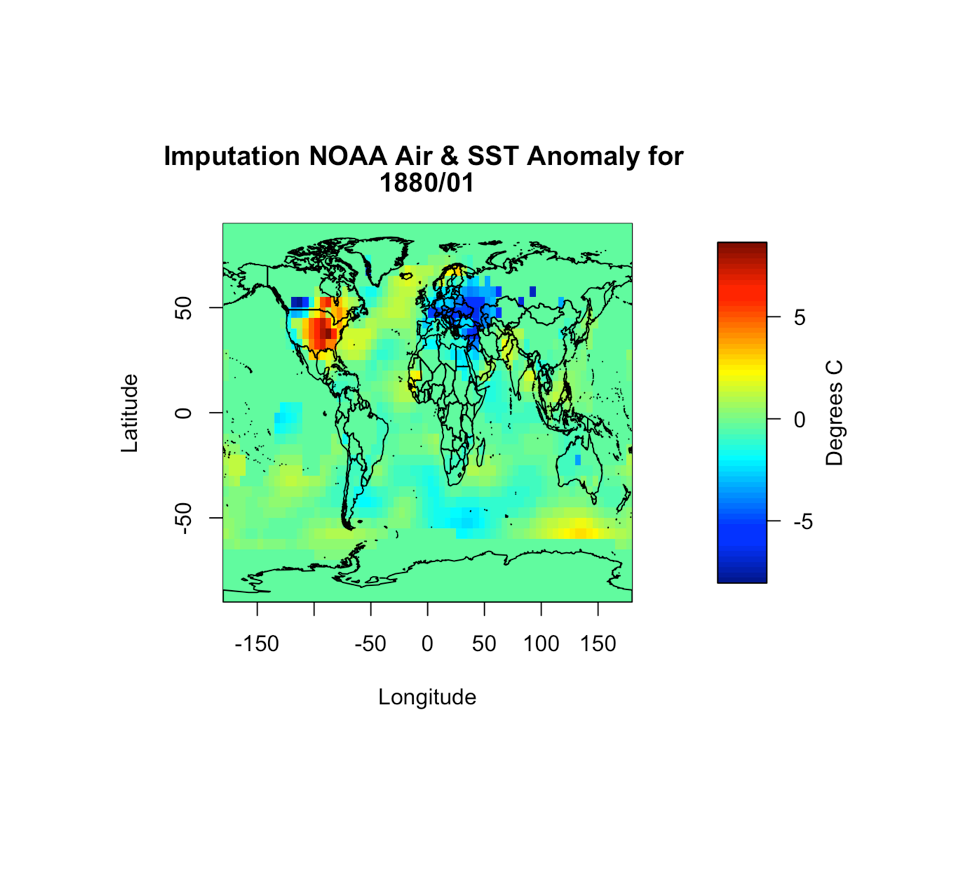

You should get a plot similar to this:

You will notice right away that there are a lot of missing values, not just at the polar regions. So let’s implement an imputation routine. We will apply it first to this particular month so we can replot and make sure it worked. Like I said above, I’m going to fill in a missing point at a particular grid box with the mean of the values surrounding it. Deciding on how far out to go will depend on what data is actually available. We will start with one grid box. Look at the visual below. Let’s say you have a missing point at the orange box. I’m going to look at all the grid boxes that touch the orange box and average them together. The mean of those 8 surrounding boxes will be the new orange value.

In R, you can use the following code:

Your script should look something like this:

# create a new variable to fill in

NOAAtempImpute <- NOAAtemp

# loop through lat and lons to check for missing values and fill them in

for(ilat in 1:length(NOAAlat)){

for(ilon in 1:length(NOAAlon)){

# check if there is a missing value

if(is.na(NOAAtemp[ilon,ilat])){

# Create lat index, accounting for out of boundaries (ilat=1 or length(lat))

if(ilat ==1){

latIndex <- c(ilat,ilat+1,ilat+2) }

else if(ilat == length(NOAAlat)){

latIndex <- c(ilat-2,ilat-1,ilat)

}else{

latIndex <- c(ilat-1,ilat,ilat+1)

}

# Create lon index, accounting for out of boundaries (ilon=1 or length(lon))

if(ilon ==1){

lonIndex <- c(ilon,ilon+1,ilon+2)

}else if(ilon == length(NOAAlon)){

lonIndex <- c(ilon-2,ilon-1,ilon)

}else{

lonIndex <- c(ilon-1,ilon,ilon+1)

}

# compute mean

NOAAtempImpute[ilon,ilat] <- mean(NOAAtemp[lonIndex,latIndex],na.rm=TRUE)

}

}

}

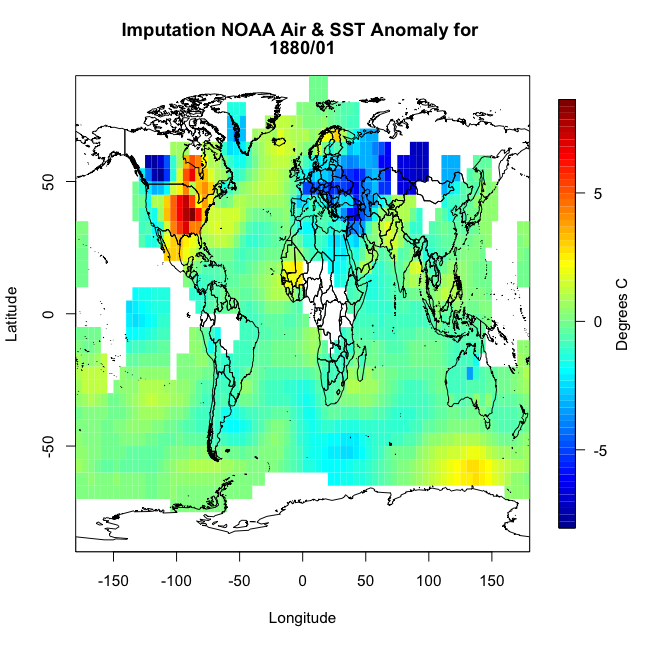

This code is laid out to show you ‘mechanically’ what I did. I looped through the lats and the lons checking to see if there is a missing value. If there is, then we create a lat and lon index that encompasses the grid boxes (blue) we are interested in. You can double check this by running the code over one ilat/ilon and printing out the values ‘NOAAtemp[lonIndex,latIndex]’. This will display 9 values (matching each grid box above). I’ve put in caveats to deal with cases where we are out of the boundary (i.e. ilat=1). If you plot up ‘NOAAtempImpute’ you should get something like this:

The method has filled in values but not enough. This is probably due to the size of the region in which data is missing. There are simply no data points within the corresponding grid boxes to average. We could expand the nearest neighbor, but instead let’s use a built-in R function called ‘impute’. This function (from the package Hmisc) will perform a central tendency imputation. Use the code below to impute with this function.

Your script should look something like this:

# impute using function from Hmisc library(Hmisc) NOAAtempImpute <- impute(NOAAtemp,fun=mean)

If you were to plot this up, you would get the following:

You can see we have a fully covered globe. Now, let’s apply this to the whole dataset.

Your script should look something like this:

# initialize the array

NOAAglobalSSTAnomImpute <- array(NaN,dim=dim(NOAA$air))

# impute the whole dataset

for(imonth in 1: length(NOAAtime)){

NOAAtemp <- rbind(NOAA$air[37:length(NOAA$lon),,imonth],NOAA$air[1:36,,imonth])

NOAAglobalSSTAnomImpute[,,imonth] <- impute(NOAAtemp,fun=mean)

}

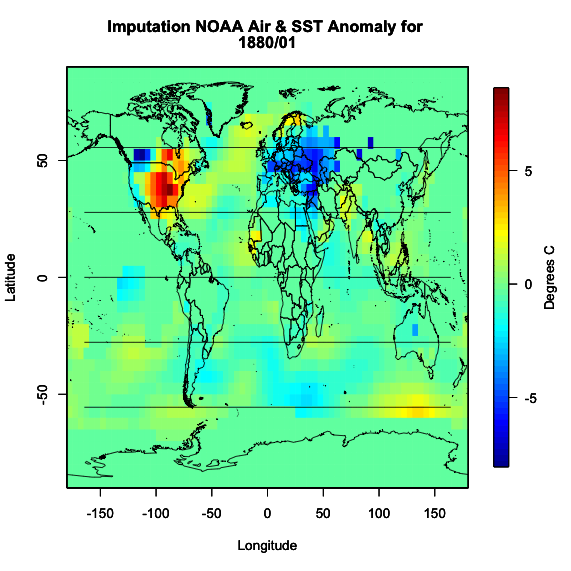

Now, if we plot the same month from our example (first month), we should get the same plot as above. This is something I would encourage you to do whenever you are working with large datasets. It may take some extra time, but going through one example to check if what you are doing is correct and then applying it to your whole dataset and checking back with your first example is good practice.

Your script should look something like this:

image.plot(NOAAlon,NOAAlat,NOAAglobalSSTAnomImpute[,,1],xlab="Longitude",ylab="Latitude",legend.lab="Degrees C",

main=c("Imputation NOAA Air & SST Anomaly for ", format(NOAAtime[1],"%Y/%m")))

plot(wrld_simpl,add=TRUE)

I get the following image which is identical to the one used in our example month.

Now we know that the imputation was successful and did what we expected it to do. We have a full dataset and can now move on.

Inhomogeneity & Outliers

We’ve discussed homogeneity before in Meteo 815, but as a review, homogeneity is broadly defined as the state of being same. In most cases, we strive for homogeneity as inhomogeneity can cause problems. For time series analyses, inhomogeneities can result from abrupt shifts (natural and artificial), trends, and/or heteroscedasticity (variability is different across a range of values). This inhomogeneity can make it difficult to pull out patterns and model the data.

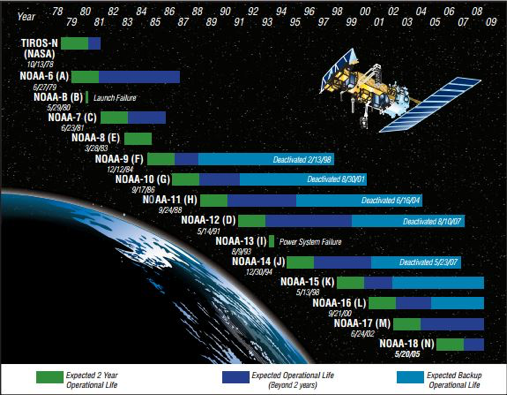

We will discuss some of these sources of inhomogeneity in more detail later on. I do, however, want to talk briefly here about artificial shifts. With a time series analysis, you are likely to encounter an artificial shift due to the issues involved in collecting a long dataset. A long dataset can lead to a higher probability of instruments being moved or malfunctioning, inconsistencies in updating algorithms, or a dataset that is pieced together from multiple sources. These can create artificial shifts in your dataset which in turn will skew your results.

It’s pretty hard to detect an inhomogeneity, especially artificial changes. You should really think about the source of your data, the instrument you are using, and where possible problems could arise. You should also utilize the tests for homogeneity of variance that we discussed in Meteo 815, like the Bartlett test, which applies a hypothesis test to determine whether the variance is stable in time. You can also use function 'changepoint' from the package ‘changepoint’ [19].

Let’s go back to our SST dataset and apply this new function to see whether our dataset is homogenous or not. Use the code below to create a new globally averaged dataset for NOAA (using the imputed data) and compute the change point test for the mean and variance.

Your script should look something like this:

# compute global average for NOAA

NOAAGlobalMeanSSTAnomImpute <- apply(NOAAglobalSSTAnomImpute,3,mean)

# load in changepoint

library('changepoint')

# test for change in mean and variance

cpTest <- cpt.meanvar(NOAAGlobalMeanSSTAnomImpute)

You can use the ‘print’ command or plot the result. What you will find is that there is one change point at index 1193 (corresponding to May 1979). If you plot, you would get this figure.

This suggests there are two distinctly different means and/or variances in the dataset. The red line is the mean estimate for each section with the ‘changepoint’ at index 1193 (showcased by the upward shift in the red line). So this dataset is definitely not homogenous throughout the whole time period.

Outliers can also be problematic depending on your analysis. In most cases, however, we rather keep the value than drop it for a missing value. But for reference, you can use the function ‘tsoutliers’ from the package ‘forecast’ [20] which will test for any outliers in your data. Let's try this out on the NOAA dataset.

Your script should look something like this:

# load in forecast

library('forecast')

tsTest <- tsoutliers(NOAAGlobalMeanSSTAnomImpute)

The result suggests one outlier at index 219 (corresponding to March 1898). What is great about this function is it includes a replacement value. In this example, the suggested replacement value is -0.628944, while the original value was -1.1049. It is up to you whether you would like to use the replacement value or not. Since there is only one ‘outlier’, I’m going to leave it as is for now. If there were a lot of outliers, then I would consider replacing them.

Basics of Time Series Modeling

Prioritize...

After reading this section, you should be able to describe the difference between a stationary and non-stationary time series and apply tests to your data to see which one best fits.

Read...

Now that we have prepared our data and checked for any missing values, outliers, and inhomogeneity, we can begin talking about modeling. The common goal of a time series analysis is modeling for prediction but, before we dive in, we need to cover the basics. In this section, we will focus on the assumptions required to perform an analysis and how we can transform the data to meet those requirements.

Stationary

The easiest time series to model is one that is stationary. Stationarity is a requirement to perform many time series analyses. Although there are functions in R that will avoid this requirement, it’s important to understand what stationarity means, so you can properly employ these functions. So read on!

There are three criteria for a time series to be considered stationary.

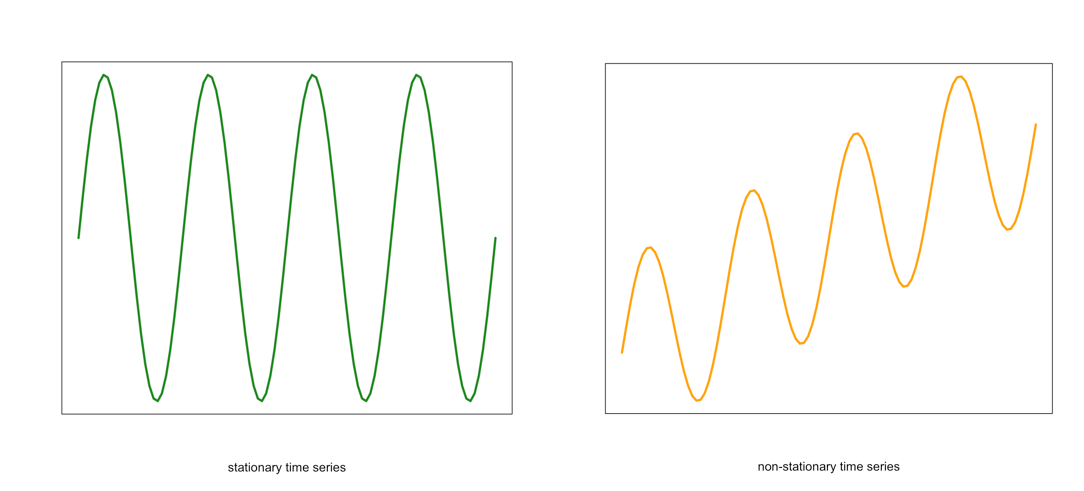

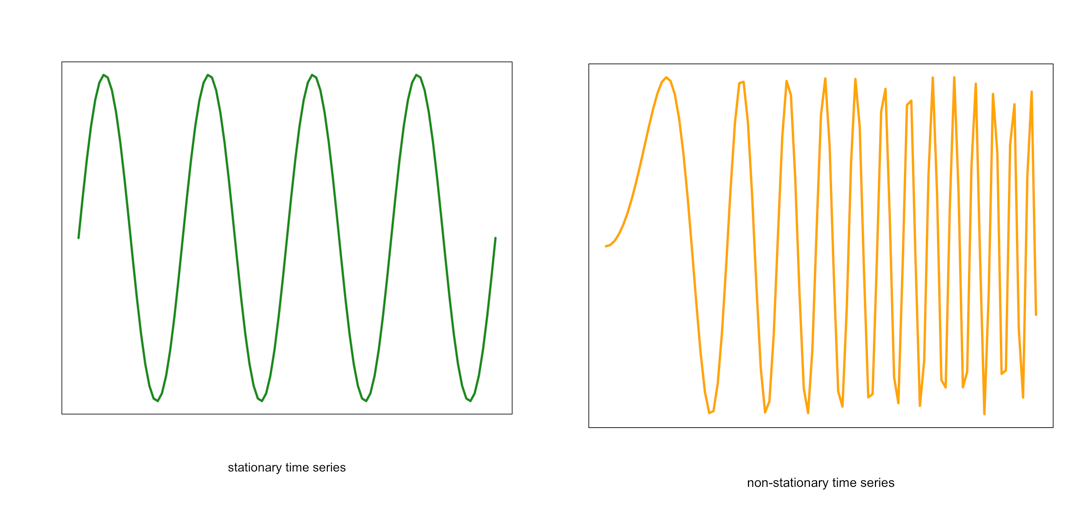

- The mean of the time series should be constant. It should NOT BE a function of time. Look at the graphs below. Notice the time-dependence increase in the orange one - in that case, the mean value changes with time and would not be considered stationary.

Visual of a stationary (left) and non-stationary (right) time seriesCredit: J. Roman

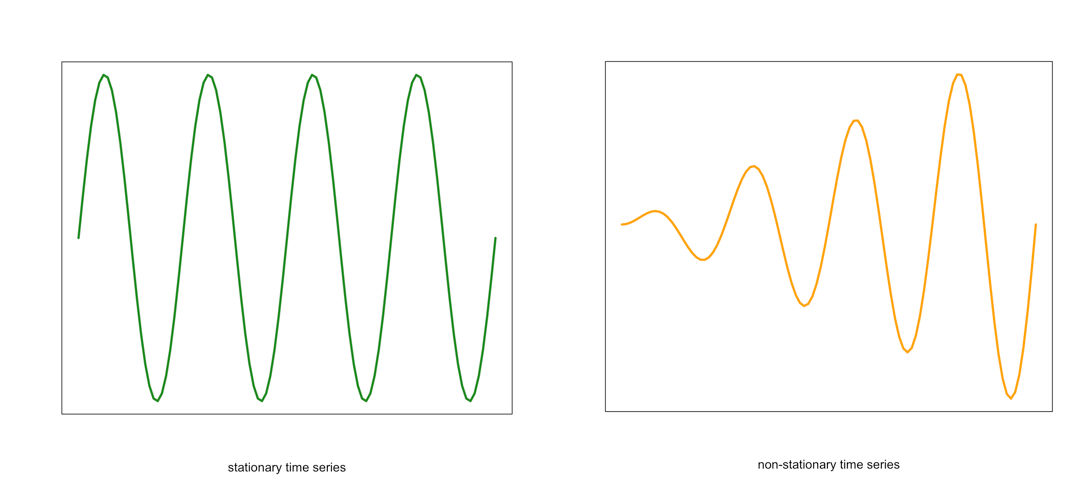

Visual of a stationary (left) and non-stationary (right) time seriesCredit: J. Roman - Similarly, the variance should be constant in time. This is called homoscedasticity. Look at the graphs below. Notice how the spread (range in values) changes with time in the orange one. This is an example of a time series that would not be considered stationary by breaking the requirements of homoscedasticity.

Example of a stationary (left) and non-stationary heteroskedastic (right) time seriesCredit: J. Roman

Example of a stationary (left) and non-stationary heteroskedastic (right) time seriesCredit: J. Roman - The last requirement is that the covariance between sequential data points should NOT BE a function of time. Again, look at the graph below. Notice the change in the spread in the time direction of the orange one. This is an example of a time series that is non-stationary and does not meet the requirement of steady covariance.

Example of a stationary (left) and non-stationary non-constant covariance (right) time seriesCredit: J. Roman

Example of a stationary (left) and non-stationary non-constant covariance (right) time seriesCredit: J. Roman

If your data meets these assumptions, you are good to go. If not, you need to explore methods for dealing with non-stationary datasets. Continue on to find out what we can do.

Non-stationary

For the purpose of this course, any time series that does not meet the stationary requirements will be called non-stationary. Like I said previously, some functions in R can automatically deal with a non-stationary time series, but there are ways to transform the data to make it stationary.

Data transformations were discussed briefly in Meteo 810. Because of this, I will only provide a review of the more common techniques. I suggest you re-read those sections if you need more of a refresher.

The first data transformation is differencing. Differencing is simply taking the difference between consecutive observations. Differencing usually helps stabilize the mean of a time series by removing the changes in the level of a time series. This, in turn, eliminates the trend. In R, you can use the function ‘diff()’.

We can also compute an anomaly to remove the seasonality cycle or cycles that repeat periodically. To compute the anomaly, we subtract off a climatology (a mean over a pre-determined period). For example, let’s say I have monthly temperature data. We know that the temperature in the Northern Hemisphere is warmest during summer months (JJA) and is coldest in winter (DJF). This is a cycle that repeats every year. We can remove this cycle by subtracting off the monthly climatology. That is subtracting off a mean monthly value. So for a given observation in January, we subtract off the mean of all Januarys in that dataset (or over a predetermined time period). For a February observation, we subtract off the mean of all Februarys, and so on. We are left with an anomaly that has removed this cycle.

The next common method is log. To transform the data this way, we take the natural log of each value. A log transformation is usually used if the variation increases with the magnitude of the local mean (e.g., if the range of potential instrument error increases as the true value increases). In such cases, the log transformation stabilizes the variance. In R, you can use the function ‘log’.

The last transformation we will review is the inverse. The inverse is just the reciprocal of the value (1/x). This is considered a power transformation, similar to the log, and is used to stabilize the variance in situations where small measurements are more accurate than large. In R, you can use the ‘1/x’ command.

When you run into a non-stationary dataset, my recommendation is to attempt a transformation to try and meet the stationary requirement. If this does not work, then you can exploit the functions in R that do not require a stationary dataset.

How to test for stationarity

We’ve discussed the requirements for a time series to be stationary, and I have shown a few figures that display a typical stationary and non-stationary time series. But sometimes it will be very difficult to simply look at the time series and determine if the assumptions are met. So we will talk about different ways to check for stationarity.

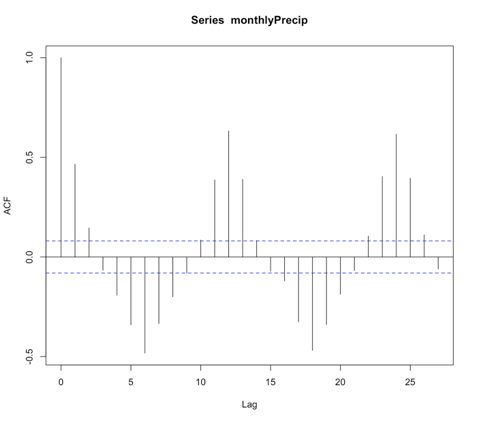

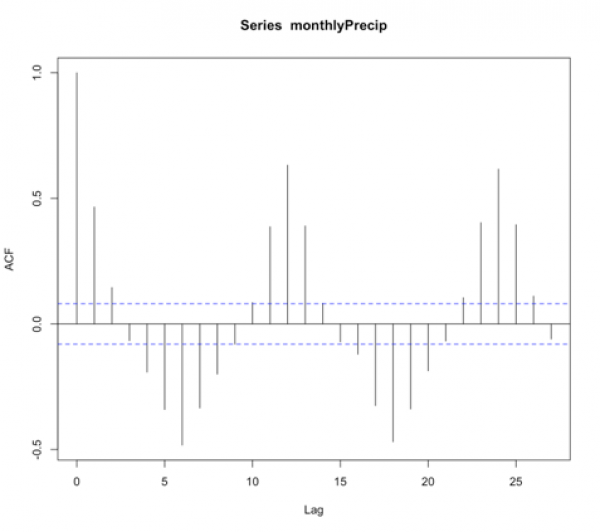

- Check the ACF and PACF

The function ‘acf’ estimates the autocorrelation. Autocorrelation is the correlation between elements in time. The correlation coefficient is computed between the values at two different time periods. For example, the value at time t is compared to the value at time t+1, t+2, t+3…. And so on. These are called ‘lags’. Similar to correlation, if the coefficient is close to 1, then the two values are strongly related. Autocorrelation will be discussed in more detail later on. For now, just know that if there is a high autocorrelation (close to 1) for all lags, the time series is not stationary.

The function ‘pacf’ computes the partial autocorrelation. It is similar to the autocorrelation function but controls for the values of a time series at all shorter lags. For example, if you are looking at the correlation between time t and t+3, the partial autocorrelation takes into account the values at time t, t+1, t+2, and t+3. Whereas the autocorrelation only examines the values at time t and t+3. Again, if the correlation is high for all lags, the series is not stationary.

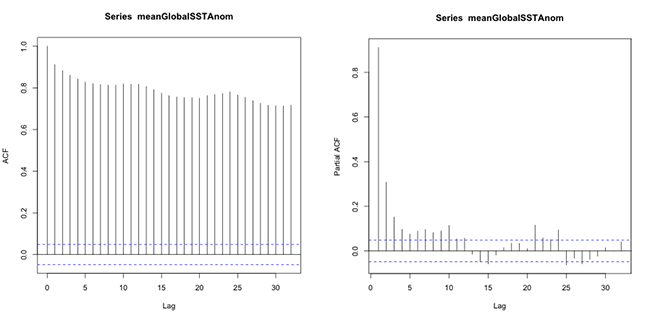

Let’s test this out with our SST data. We will first compute the mean global SST anomaly. To do this, use the code below:Show me the code...Now we can compute the ACF and PACF. Run the code below:Your script should look something like this:

# compute global mean meanGlobalSSTAnom <- apply(NOAAglobalSSTAnomImpute,3,mean)

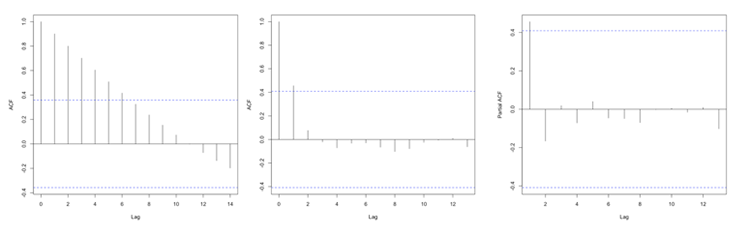

You should get the following figures:The ACF shows strong correlation for all lags, while the PACF does not. So while the ACF suggest non-stationary, the PACF does not. How do we decide what to do? Let’s continue on to find out some more quantitative ways to check for stationarity. ACF (left) and PACF (right) of NOAA global mean SST and air temperature anomaliesCredit: J. Roman

ACF (left) and PACF (right) of NOAA global mean SST and air temperature anomaliesCredit: J. Roman - Kwiatkowski-Phillips-Schmidt-Shin (KPSS) test

To confirm your visual results, you can run a KPSS test [21]. The KPSS is a hypothesis test that evaluates whether the data follows a straight line trend with stationary errors. The null hypothesis is that the trend does. The alternative is that it doesn't. So if the p-value is small, we reject the null in favor of the alternative and the trend is assumed to have non-stationary errors.

Let’s test this out with our SST data. Use the code below to compute the KPSS TestShow me the code...The result is a p-value less than 0.01. This means we reject the null in favor of the alternative. The trend does not have stationary errors, so our time series is not stationary.Your script should look something like this:

# load in tseries library library("tseries") # compute KPSS test kpss.test(meanGlobalSSTAnom, null=”Trend”) - Augmented Dickey-Fuller (ADF) test

Another test is the Augmented Dickey-Fuller t-statistic test [21]. This hypothesis test determines whether the unit root is in a time series. A unit root is a feature in some data that can cause problems in our analysis and suggests that the variable is non-stationary. For this test, the null hypothesis is that the unit root exists in the data. The alternative hypothesis is that it doesn’t exist, and the data is considered stationary. So, if the p-value is small, we reject the null in favor of the alternative suggesting the data is stationary.

Let’s test this out with our SST data. Use the code below to perform this test.Show me the code...The result is a p-value less than 0.01 suggesting the data is stationary.Your script should look something like this:

# compute ADF test library(tseries) adf.test(meanGlobalSSTAnom)

There are many more tests out there that you can try. These were just a few examples of what you can do to visualize and quantify the stationary aspect of your dataset. And like the example highlights, you might get conflicting answers. You will have to decide what route is the best route to go based on your results.

Now test yourself. Using the app below, select a variable from the list and examine the plots and output from the hypothesis tests. Try and decide, based on the quantitative and qualitative results, if the time series is stationary.

Identifying Patterns - Quasi-Periodic

Prioritize...

When you have completed this section, you should be able to recognize common quasi-periodic patterns in a dataset.

Read...

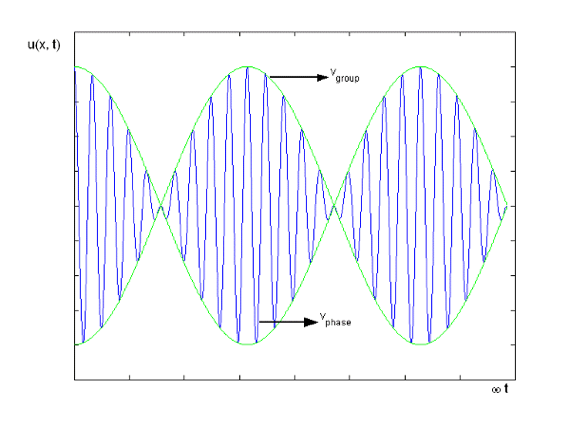

There are two general groups of patterns: periodic and trend. Periodic is a pattern that repeats at a regular timescale. We can break periodic down into quasi-periodic (roughly predictable across many cycles) and chaotic (predictable for only a cycle or so). You should note that trends and periodicity can and do occur in the same time series, which can make it difficult to detect and or differentiate the two patterns. For now, we are going to focus on some quasi-periodic patterns that are quite common in many datasets you will use for weather and climate analytics.

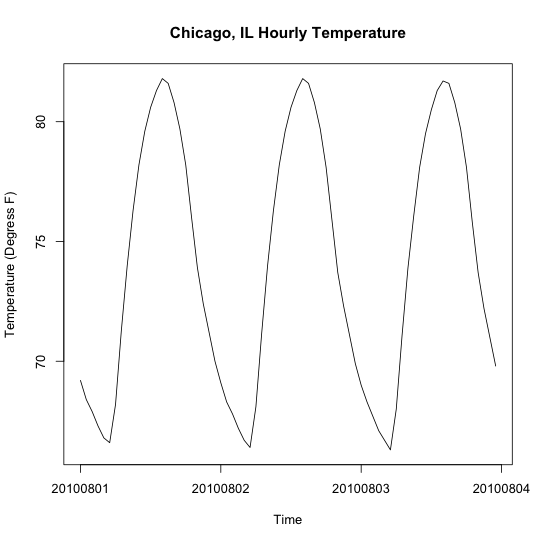

Diurnal

Some variables exhibit a daily pattern, i.e., they change systematically from night to day. These patterns are called diurnal and are the shortest time scales we will discuss. Specifically, think about temperature. At night, the temperature is generally cooler, while during the day it is warmer. This pattern is diurnal and can be seen if you plot hourly temperatures for multiple days.

Let’s check this out. Here are some hourly temperature data from Chicago, IL [22] during the month of August 2010. Open the file and extract the temperature out using the code below.

Your script should look something like this:

# load in data

mydata <- read.csv("hourly_temp_chicago.csv")

# extract out date and temperature

dtimes <-paste0(substr(mydata$DATE,1,4),"-",substr(mydata$DATE,5,6),"-",substr(mydata$DATE,7,8),

" ",substr(mydata$DATE,10,11),":",00,":",00)

dtparts = t(as.data.frame(strsplit(dtimes,' ')))

thetimes = chron(dates=dtparts[,1],times=dtparts[,2],format=c('y-m-d','h:m:s'))

hourlyTemp <- mydata$HLY.TEMP.NORMAL

Now, let’s plot the hourly temperature for 3 days.

Your script should look something like this:

# plot hourly temperatures grid(col="gray") par(new=TRUE) plot(thetimes[1:72],hourlyTemp[1:72],xlab="Time", ylab="Temperature (Degress F)", main="Chicago, IL Hourly Temperature", xaxt='n',type="l",lty=1) axis.Date(1,thetimes[1:72],format="%Y%m%d")

You should get a figure like this:

You can see the continuous pattern over the three-day period, where the temperature reaches a maximum during the day and a minimum at night. This is a prime example of a diurnal cycle and falls under the quasi-periodic category.

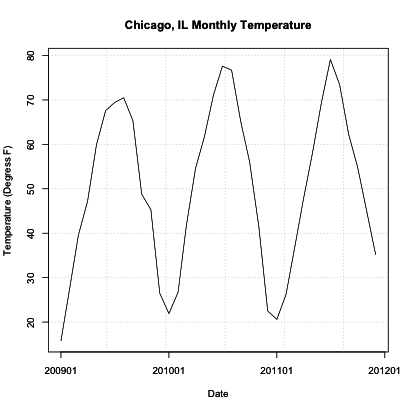

Annual

Similar to the diurnal pattern, an annual pattern, especially in temperature, can be observed. In the Northern Hemisphere during the months of June, July, and August (summer), temperatures are warmer than in the months of December, January, February (winter). If you were to plot monthly temperatures, you would see this peak in summer and dip in winter. This is quasi-periodic because we can rely on this pattern to continuously occur for many cycles - meaning, every year we expect the temperatures to be warmer in summer and cooler in winter for the Northern Hemisphere.

Let’s test this out. Load in the monthly temperatures for Chicago, IL [23].

Your script should look something like this:

# load in data

mydata <- read.csv("monthly_temp_chicago.csv")

# extract out date and temperature

DATE<-as.Date(paste0(substr(mydata$DATE,1,4)

,"-",

substr(mydata$DATE,6,7),"-","1"))

monthlyTemp <-mydata$TAVG

Now, plot the monthly temperatures over three years, and see if there is a repeating pattern.

Your script should look something like this:

# plot monthly temperatures grid(col="gray") par(new=TRUE) plot(DATE,monthlyTemp,xlab="Date",ylab="Temperature (Degress F)", main="Chicago, IL Monthly Temperature",xaxt='n',type="l",lty=1) axis.Date(1,DATE,format="%Y%m")

You should get a figure like this:

Similar to the diurnal example, we see a repeating pattern of warm and cool temperatures. In this case, though, we see very cold temperatures during the Northern Hemisphere winter months and warm temperatures during the Northern Hemisphere summer months. Although they are not constantly the same low monthly temperature, this pattern is quite visible and is to be expected each year (quasi-periodic).

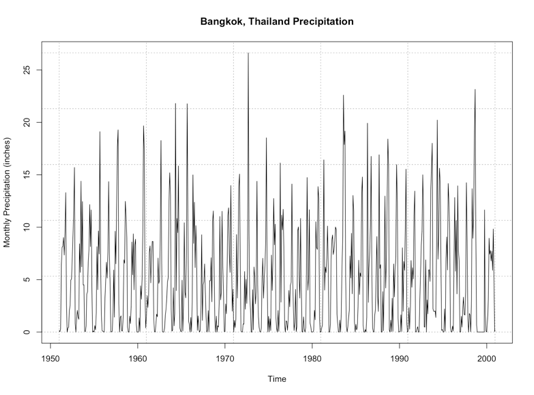

In certain geographical regions, splitting up the year by the common seasons we are used to in the United States is not appropriate. Instead, we split the year up between wet and dry season. Sometimes called the monsoon season, this is another example of an annual cycle. This cycle, however, is characterized by periods of rain and periods of dry and is quite prominent in the Tropics.

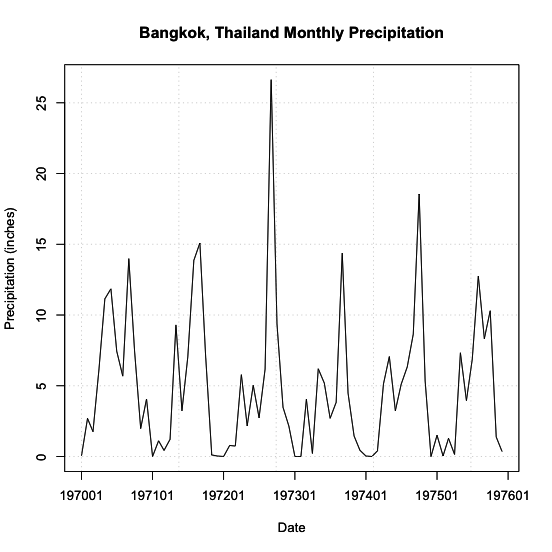

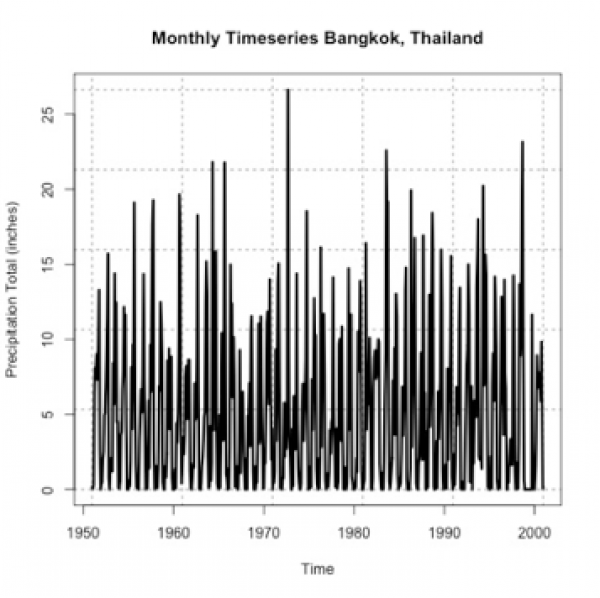

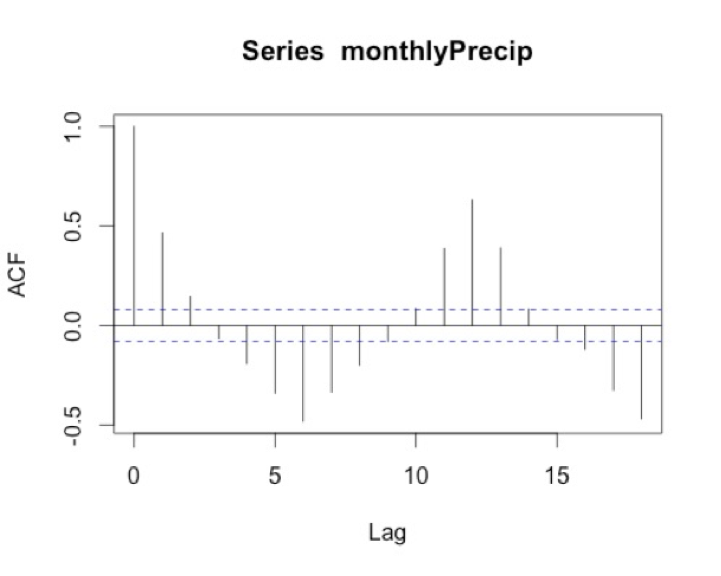

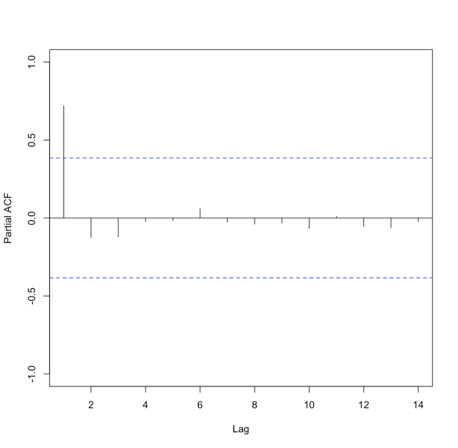

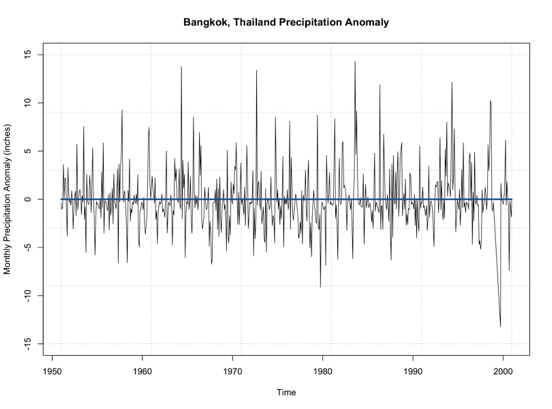

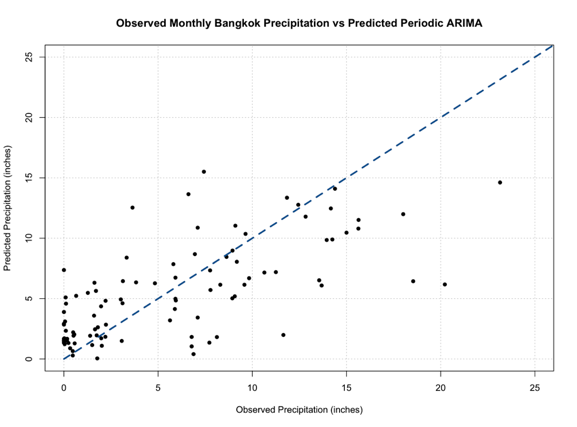

Again, let’s test this out. We are going to plot monthly precipitation for Bangkok, Thailand. Start by loading in the data [24] and extracting out the time and precipitation variables.

Your script should look something like this:

# load in data

mydata <- read.csv("monthly_precip_bangkok.csv")

# extract out date and temperature

DATE<-as.Date(paste0(substr(mydata$DATE,1,4),"-",substr(mydata$DATE,6,7),"-","1"))

monthlyPrecip <-mydata$PRCP

Now, let’s plot the first 6 years of data.

Your script should look something like this:

# plot monthly precipitation grid(col="gray") par(new=TRUE) plot(DATE[1:72],monthlyPrecip[1:72],xlab="Date", ylab="Precipitation (inches)", main="Bangkok, Thailand Monthly Precipitation", xaxt='n',type="l",lty=1) axis.Date(1,DATE[1:72],format="%Y%m")

You should get a figure similar to this:

Identifying Patterns - Chaotic Periodicity

Prioritize...

Once done with this section, you should be able to recognize common meteorology-specific patterns in data and know the typical features of these patterns.

Read...

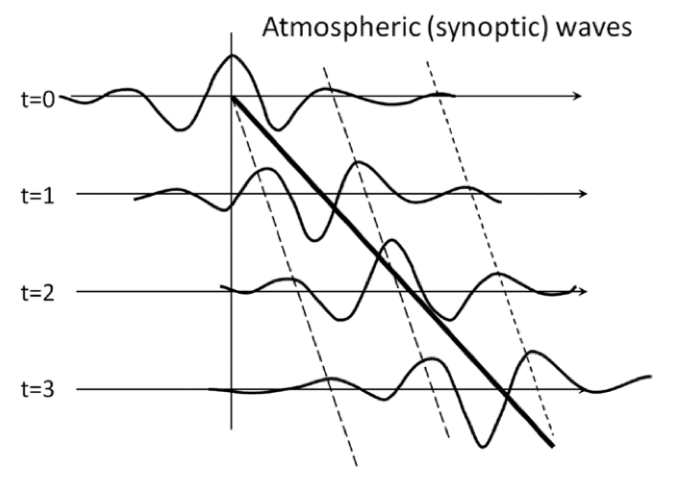

In the atmosphere and ocean, there are some common climatic patterns. A lot of these climatic patterns result in teleconnections - meaning when certain phases of this pattern occur, we can expect a particular meteorological feature elsewhere (spatially). Teleconnections are very useful for forecasting! You should note that the goal right now is simply for you to identify patterns in a dataset, know which variables you need to detect those patterns, and the potential teleconnections. In future lessons, you will have to account for these patterns when performing certain time series analyses. So being able to identify these patterns is key! If you are interested, there is a pretty good blog by NOAA climate that includes articles on natural climate patterns [11].

Teleconnections

Before we go into specifics, let’s talk a little bit more about teleconnections. The reason we are interested in these slowly varying multi-region patterns is that if we are in certain phases of these patterns, we can sometimes infer something about the weather (i.e., temperature, precipitation, etc.). This is a result of teleconnections.

Teleconnections are defined in the AMS (American Meteorological Society) glossary as a “linkage between weather changes occurring in widely separated regions of the globe.” Something occurring in one geographic location can impact another location. These teleconnections can help identify certain scenarios that may play out. For example, if we know increased SST in one region can bring droughts in another location, we can expect/predict when those droughts may occur based on observing the SST.

There are many atmospheric/oceanic teleconnections (ENSO, AO, NAO, MJO). These teleconnections are generally referred to as long timescale variability, but they can last for several weeks to several months and even years. Because of their long timescale, these teleconnections can aid in the understanding of both the inter-annual variability and inter-decadal variability. For more information on the general concept of teleconnections in weather and climate, check out this CPC site [25].

Now, let’s start looking at some common chaotic patterns that you may find in this field.

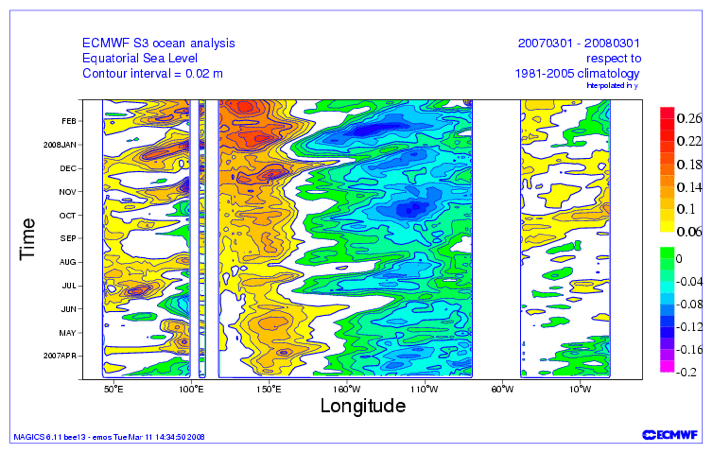

ENSO

Out of all the patterns that exist in the atmosphere and or ocean, ENSO is probably the most well known. You’ve probably heard about it being talked about during extreme floods or droughts. The El Niño-Southern Oscillation (ENSO) is a natural pattern characterized by the fluctuations of Sea Surface Temperatures (SST) [26] in the equatorial Pacific.

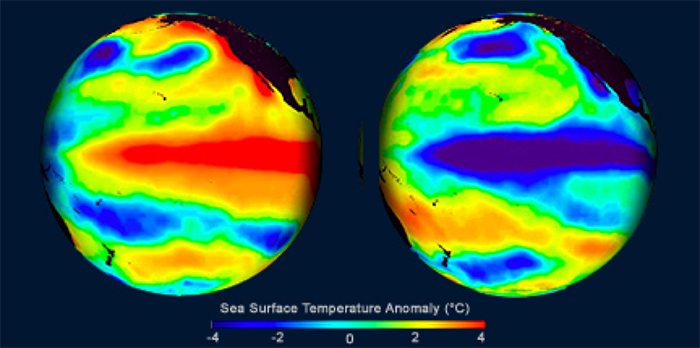

With respect to ENSO, there are two main phases: warmer than normal central and eastern equatorial Pacific SSTs (called El Niño) and cooler than normal SSTs in central and eastern Pacific (called La Niña). Below is an example of El Niño conditions on the left and La Niña on the right.

You can check out the current conditions here [28]. These states last about 9–12 months, peaking in December-April and decaying during the months of May to June. Prolonged episodes have lasted 2 years in the past. The periodicity is chaotic but, on average, we expect the pattern to reoccur every 3–5 years.

There are several ways to monitor the ENSO state. One is using the Oceanic Niño Index (ONI). You can read more about the index here [29]. In short, a 3-month running SST anomaly mean is measured in an area called the Niño 3.4 region. If the value is above 0.5 °C, then it is considered to be in the warmer phase; if it’s less than -0.5 °C, it is characterized as being in the cooler phase. To be defined as the La Niña or El Niño phase, there must be 5 consecutive months in which the 3-month running average was below or above the threshold.

So why do we care about an anomaly in the equatorial Pacific Ocean? Well, it has to do with the teleconnections. The impacts of an El Niño or a La Niña vary by not only region but when, temporally, the phase is occurring.

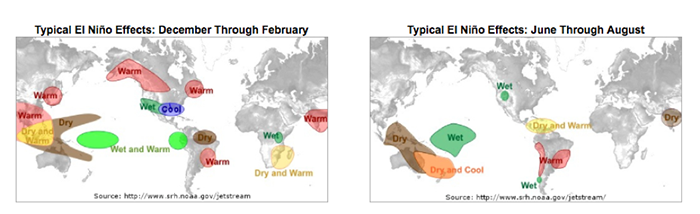

For El Niño, this plot below shows the teleconnections during the months of December through February on the left and June through August on the right.

This figure shows the general impact. For example, the southwestern US generally has wetter than normal conditions during the months of December–February when an El Niño event is occurring.

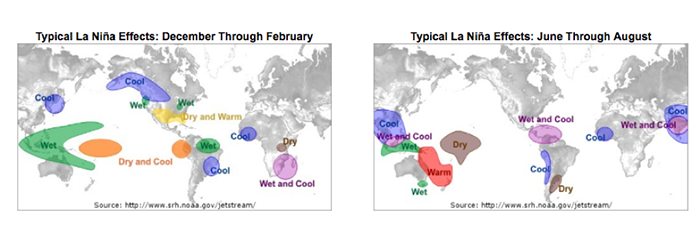

Similarly, we expect teleconnections for La Niña. During the months of December through February, the typical La Niña effects are displayed on the left, while the months of June through August are displayed on the right.

Again, we discuss the general regions as being wetter or drier than normal, and cooler or warmer than normal. For example, during the months of June through August, the western coast of South America extending from Peru down into Chile is generally characterized by cooler than normal conditions when the La Niña phase occurs.

To summarize:

- ENSO is characterized by fluctuations in SST.

- We use the Oceanic Niño Index to determine the phase.

- If there are 5 consecutive months with an anomaly greater than 0.5 °C, then we are in the warm phase called El Niño.

- If there are 5 consecutive months with an anomaly less than -0.5 °C, then we are in the cool phase called La Niña.

- The general periodicity is 3–5 years.

- Impacts vary not only by region, but by the month the phase is occurring in.

AO and NAO

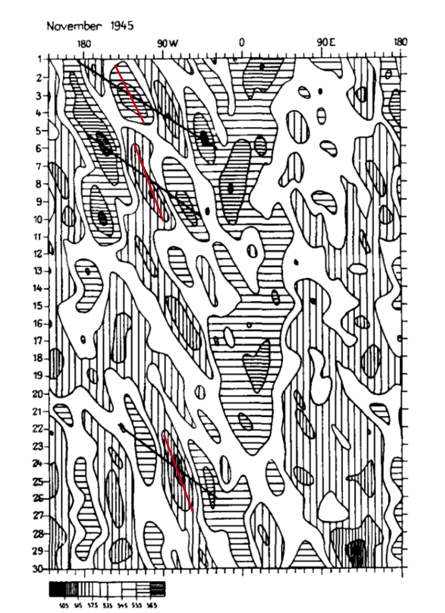

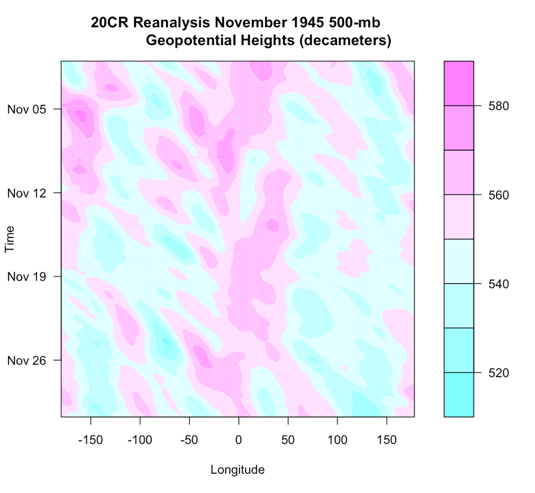

The Arctic Oscillation (AO) and the North Atlantic Oscillation (NAO) are characterized by changes in pressure or geopotential heights [30].

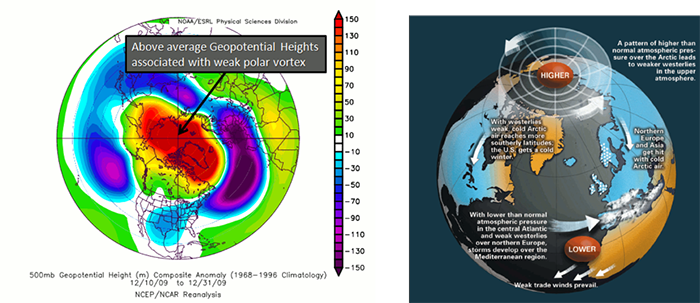

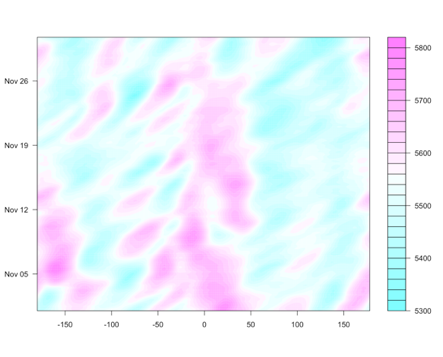

The AO consists of a negative and positive phase. If we know the phase of the AO, we have some clues about the large-scale weather patterns a week or two in the future. The negative phases occur when above-average geopotential heights are observed in the Arctic. This means there is a weaker than normal polar vortex. During this phase, the westerlies weaken, resulting in cold air plunging into the middle of the US and Western Europe. The figure on the left shows the above average geopotential heights associated with a weak polar vortex, highlighting the negative AO phase, while the right figure shows the consequences of this pattern change.

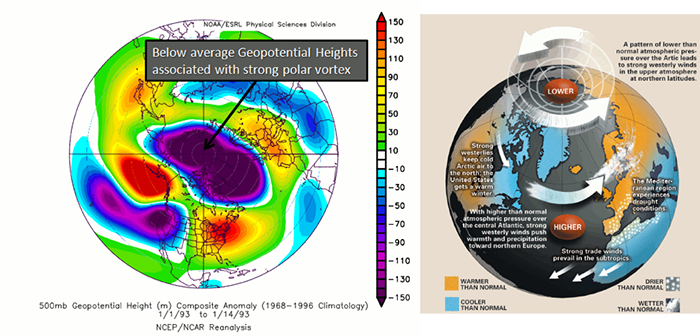

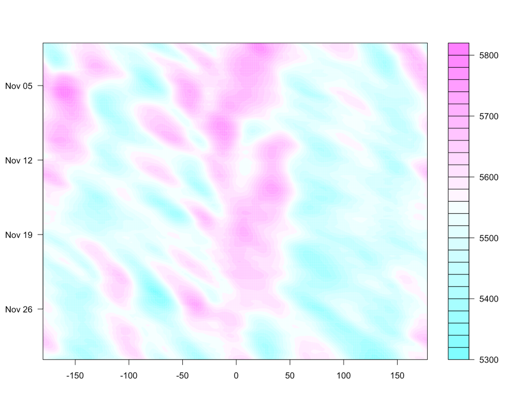

In contrast, the positive phase occurs when geopotential heights are below average in the Arctic. This means there is a stronger than normal polar vortex. During this phase, the westerlies are stronger, resulting in milder weather in the US and weaker storms. The figure on the left shows the below average heights associated with a strong vortex, highlighting the positive AO phase. The figure on the right shows the impacts of this pattern.

To check out the current state of the AO, look at this site [32]. The AO index is computed by projecting the daily (00z) 1000 mb height anomalies poleward of 20°N onto the pattern of the AO. If the AO index [33] is positive, it is in the positive phase, and if it’s negative, it’s in the negative phase. The AO is important for the weather in North America, but there is no preferred timescale.

To summarize:

- AO is characterized by changes in geopotential height or pressure in the Arctic.

- We use the AO index to determine the phase.

- If AO index > 0 positive phase.

- If AO index < 0 negative phase.

- There is no general periodicity.

- The negative phase results in stormy weather in Europe and generally colder weather in the US. During the positive phase, we expect milder weather in the US, with droughts in Europe.

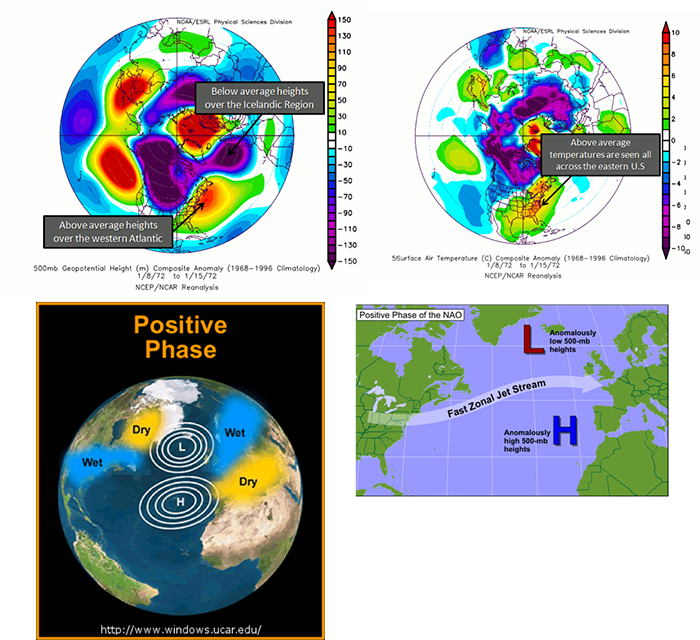

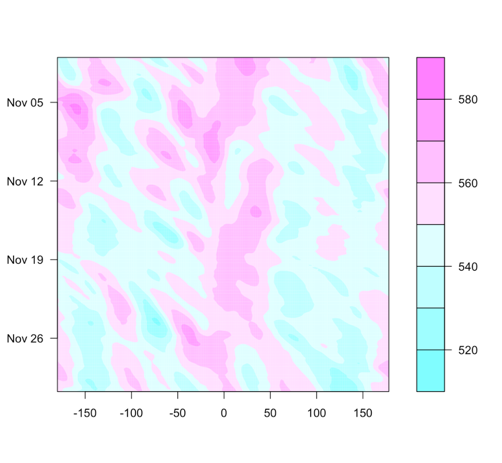

The NAO is similar to the AO, but the geopotential heights are observed near Iceland and the Azores islands in the Atlantic Ocean instead of the Arctic. During a positive NAO, the 500 mb heights over Iceland are anomalously low while the 500 mb heights over the Azores are anomalously high, favoring strong westerlies. During this phase, the temperatures in the eastern US tend to be milder with weaker storms. Northern Europe is also milder, but wetter than average, while central and Southern Europe are drier. The figures below characterize the heights during a positive NAO, the temperature anomalies, and the precipitation anomalies.

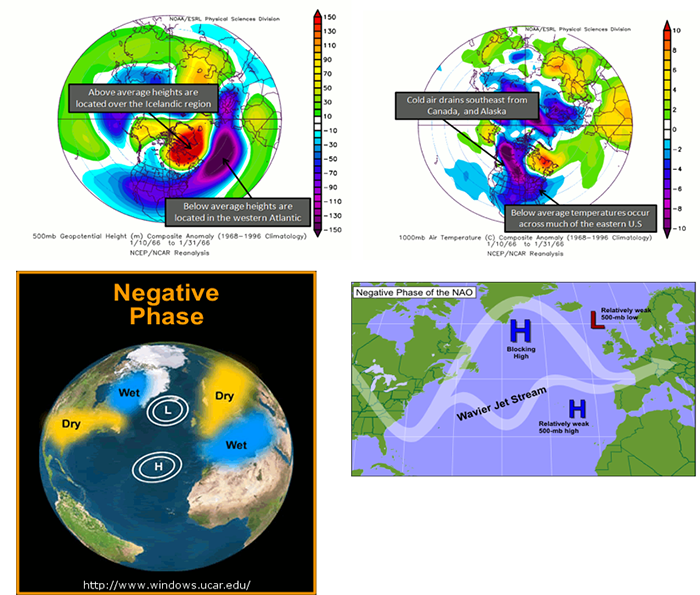

During a negative NAO, the Icelandic height anomalies are high, while the Azores are low. This tends to weaken the westerlies. What results is a pressure high over the North Atlantic that blocks and slows down the progression of a weather system across the US. This favors colder outbreaks in the eastern US and favors the development of strong mid-latitude cyclones leading to East Coast snowstorms. Particularly, during the winter season, a predominantly negative phase can lead to cold snow across the eastern part of the US and Europe. Below are figures demonstrating the cause and impacts of a negative phase.

To see the current NAO state, you can use the NAO index here [34]. A positive NAO index suggests a positive phase, while a negative index suggests a negative phase. Similar to the AO, there is no general periodicity.

To summarize

- NAO is characterized by changes in geopotential height or pressure near Iceland and the Azores.

- We use NAO index to determine the phase,

- If NAO index > 0 positive phase.

- If NAO index < 0 negative phase.

- There is no general periodicity.

- The negative phase results in cold, snowy weather across the eastern US and Europe. During the positive phase, milder weather is observed in the eastern US, while wetter than average weather can occur in Northern Europe and drier than average in central and Southern Europe.

You can see that the AO and NAO are very similar, and you might be wondering which one you should use. Some researchers believe the NAO is part of the AO and, therefore, you should use the AO, while others believe the measurements for the NAO are physically more meaningful and, therefore, more representative of the true nature of the westerlies, leading to stronger predictions. You will generally see both oscillations utilized.

PNA

The Pacific-North American (PNA) pattern is a complement to the NAO and is characterized by geopotential heights. It can be used to estimate the state of the westerlies over the Pacific and North America. Research suggests that there is a linkage between the PNA and ENSO.

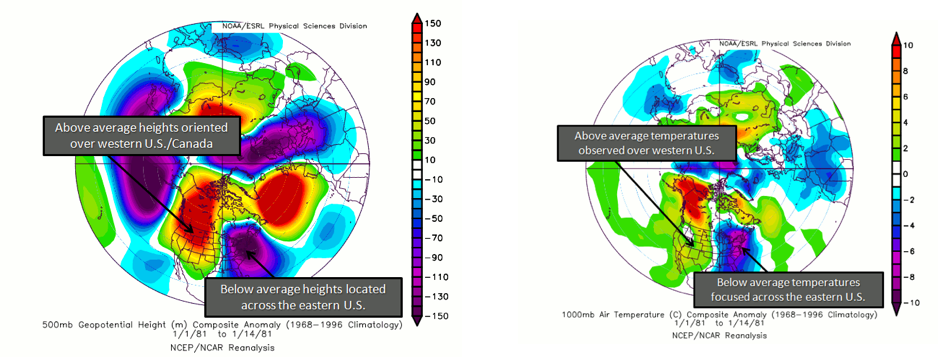

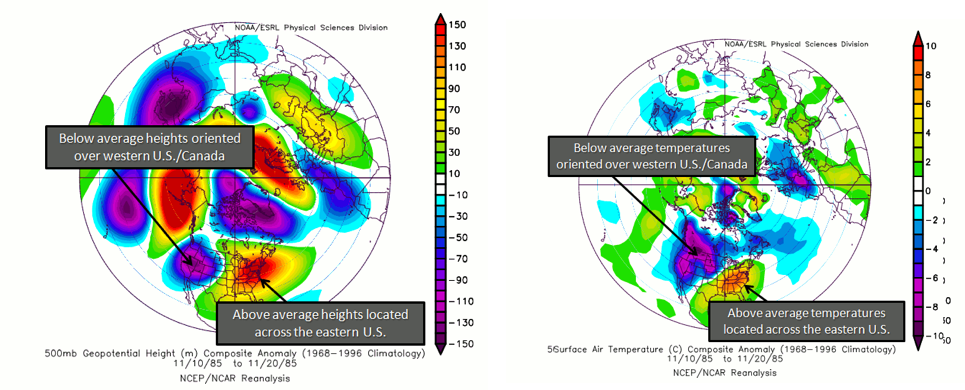

Again, there are two phases. The positive phase consists of above normal geopotential heights over the western US and anomalous lows over the eastern US. During this phase, cold air in Canada is forced southeastward, leading to anomalously low temperatures over the eastern US and anomalously high temperatures over the western US. Cyclogenesis (formation of cyclones) is also enhanced near the west coast of North America. In addition, anomalously high precipitation along the west coast of Canada and the Pacific Northwest is generally observed. A positive PNA tends to dominate during El Niño years. Below is a figure showing the typical geopotential heights during the positive phase and the corresponding temperatures.

During the negative phase, there are anomalously low geopotential heights over the western US, with above normal over the eastern US. During this phase, the average temperatures for the western US are low, while for the eastern US they are high. It is more likely that Pacific air can cross the US, limiting outbreaks of cold weather and the development of major cyclones. Precipitation tends to be high in the Mississippi and Ohio Valleys, while the southeastern US is drier. Please note that the precipitation anomalies are less robust when it comes to the PNA than in other patterns. Generally, a negative PNA occurs during La Niña years. Below is a figure showing the typical geopotential heights during the negative phase with the corresponding temperature information.

You can check out the current conditions here [36] using the PNA index. The periodicity is again somewhat spontaneous, but due to the linkage with ENSO, you could expect every 3-5 years.

To summarize:

- PNA is characterized by changes in geopotential height over the western US and the eastern US.

- We use the PNA index to determine the phase.

- If PNA index > 0 positive phase.

- If PNA index < 0 negative phase.

- There is no general periodicity, but the connection to ENSO might suggest 3–5 years as a starting base.

- The negative phase brings anomalously low temperatures to the western US and high temperatures to the Eastern US, while limiting the outbreak of cold weather and the development of major cyclones. The positive phase forces cold air from Canada over the eastern US, with higher than normal temperatures over the western US. Cyclogenesis is also enhanced during this phase.

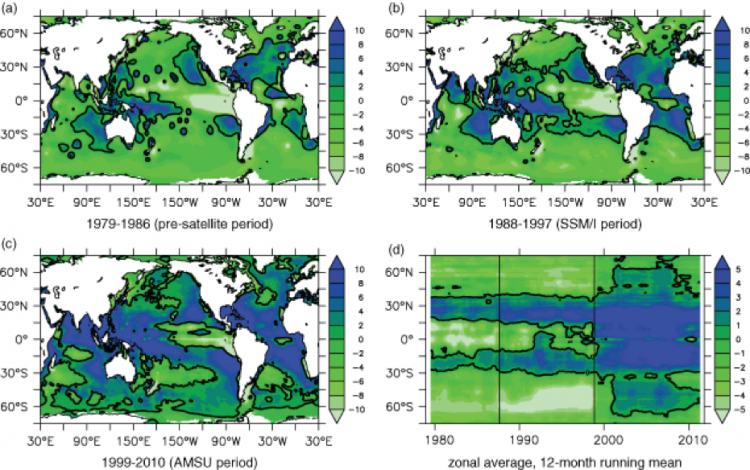

PDO

The variable of interest for the Pacific Decadal Oscillation (PDO) is SST. The PDO is considered a pattern of Pacific climate variability similar to ENSO. There is a warm and cool phase that can impact hurricane activity, droughts, and flooding. The interaction with ENSO can magnify or weaken the impacts, depending on if they are in phase. Please note that the PDO is still a highly researched teleconnection and not as much is known compared to other patterns. This is partly due to the long periodicity of 20–30 years.

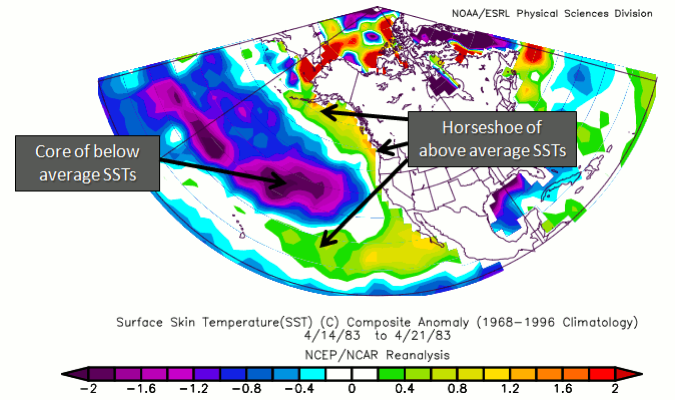

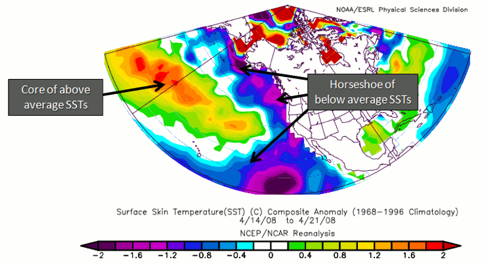

A warm phase of the PDO is characterized by anomalously high SSTs off the coast of North America from Alaska to the equator wrapping around cooler than normal SSTs in a horseshoe-like feature. If a warm PDO coincides with a La Niña, the impacts of a warm PDO weaken. If a warm PDO coincides with El Niño, the impacts magnify. It is believed that a warm PDO can enhance coastal biological productivity in Alaska but inhibit it off the west coast of the US. A warm PDO can result in warmer than average temperatures in the Pacific Northwest, British Columbia, and Alaska, with lower than average temperatures in Mexico and the southeastern US. In terms of precipitation, a warm PDO is associated with above-average precipitation in Alaska, Mexico, and the southwestern US, with below average in Canada. Here is an example of the signature SST for a warm PDO.

In contrast, the cold phase is the exact opposite in terms of SST, with anomalously low SSTs off the coast of North America wrapping around warmer than normal ones. If a cold PDO coincides with a La Niña, the impacts are magnified. Impacts are exactly opposite than in the warm phase. That is, coastal biological productivity in Alaska is reduced but enhanced off the west coast of the CONUS. Cooler than average temperatures in the Pacific Northwest, British Columbia, and Alaska are observed, with higher than average in Mexico and the southwestern US. For precipitation, lower than average is expected in Alaska, Mexico, and the southwestern US, with higher than average in Canada. Here is an example of the signature SST for a cold PDO.

To summarize:

- PDO is characterized by a horseshoe signature of cold or warm temperatures off the west coast of the US.

- If the temperatures along the coast are warm (cold), then the PDO is in a warm (cold) phase. If El Niño (La Niña) coincides with the warm (cold) phase, impacts are enhanced.

- This is a less studied pattern, but the periodicity is believed to be 20–30 years.

- The warm phases enhance coastal biological productivity in Alaska, while inhibiting it off the west coast of CONUS. During the warm phase, the PDO results in warmer than average temperatures in the Pacific Northwest, British Columbia, and Alaska, with lower than average in Mexico and the southwestern US. A cold phase results in below-average precipitation in Alaska and above average in Canada.

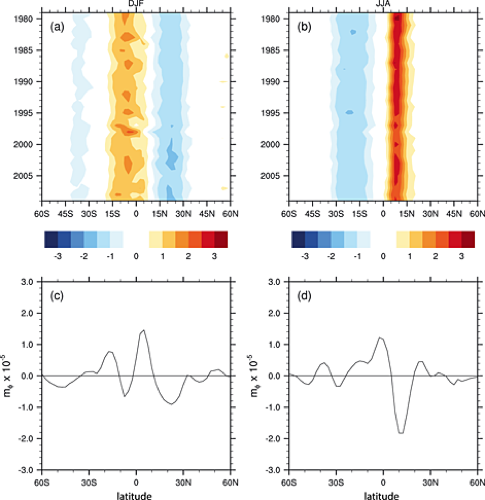

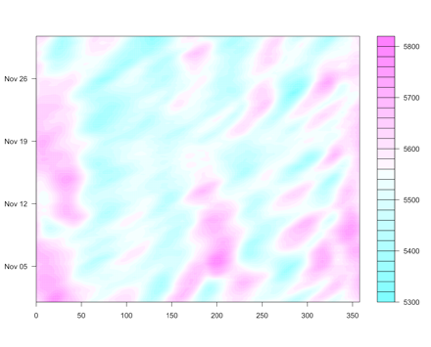

MJO

The Madden Julian Oscillation (MJO) is formally an intra-seasonal wave that occurs in the tropics, which manifests itself in the form of alternating areas of enhanced and suppressed rainfall that migrates eastward from the Indian Ocean to the central Pacific Ocean and sometimes beyond. Precipitation will be the variable of interest.

There are 8 phases of the MJO (Wheeler and Hendon 2004 [38]), with a timescale of about 6 days for each phase. However, there is large variability in the speed of the progression. A complete cycle can take 30–60 days, but there have been instances near 90 days. In addition, the intensity can be highly variable. Here is an animation of the cycle:

In the video above, blue shades are anomalously high rainfall rates (at least 1 mm/day more than normal), while orange represents low rainfall (at least 1 mm/day less than normal). Note the eastward progression of the MJO as it works through the eight phases (upper-right corner of animation).

There are many teleconnections with the MJO. For Tropical connections, the MJO acts as a modulator of global tropical cyclone activity. For Mid-Latitude connections, when the MJO is more intense, a 40% increase in extreme rainfall occurs globally (as compared to a weak MJO). Wintertime floods in the northwest are common when the MJO is in phase 2 or 3, while severe flooding in the northwest is most common in phase 6.

I suggest checking out this summary of the MJO for more detail, [40] as the scope of the pattern is too large to cover everything right here.

To summarize:

- MJO is characterized by precipitation anomalies that migrate eastward from the Indian Ocean to the central Pacific Ocean.

- There are 8 phases of the MJO typically lasting 6 days each.

- The progression is generally 30–60 days long, although a 90-day period has been observed.

- When the MJO is in phase 2 or 3, flooding during wintertime can occur in the northwest US, while severe flooding occurs during phase 6.

Identifying Patterns - Trends

Prioritize...

After reading this section, you should be able to define trend and note the impacts a trend can have on a time series analysis.

Read...

Unlike the patterns we just discussed that are periodic, trends (I specifically mean linear trends, but from here on out will refer to them as trends unless otherwise noted) are a constant increase or decrease over time. As a reminder, trends and periodicity can and do occur at the same time. Again, when performing different time series analyses, you may have to account for trends, but the main goal right now is to know how to identify trends in a given dataset.

What is a trend?

Let’s start with the very basics. What is a trend? For this course, specifically this lesson, a trend is a monotonic increase or decrease in the dataset over a time period. Sometimes, you will have different trends over different time periods, where the rates of change are different. While other times, it will be one long steady increase or decrease. There are other types of trends, such as exponential, that exist in weather and climate data, but right now, we will focus on linear trends.

How to identify a trend?

You might think it should be pretty evident if your data has a trend, but it can be much trickier. When trying to identify a trend, there are two components to consider. The first is the long-term trend you are trying to identify, and the second is the short-term variability. The short-term variability is what makes it difficult to identify the trend. The short-term variability can come from natural variability (the natural fluctuation that occurs in the variable you are measuring) or errors (anything that is non-natural, such as errors from the instrument). The short-term variability can also include any periodicity we discussed previously, further complicating the problem. For the purpose of finding the trend, the trend is the signal and the short-term variability is the noise. This will change later in the course when we start analyzing periodicities.

Rarely will you be able to visualize a trend by simply plotting your data. Usually, there is too much short-term variability to pull out the long-term trend. Many times, we need to either smooth the data or transform it to pull out the trend. Smoothing data will average out the noise, reducing the jagged fluctuations you observe. Once you smooth the data, you are usually left with a dataset that is easier to detect a trend with. We will learn more about this topic later on.

Steps to Explore a Time Series

Prioritize...

After reading this section, you should be able to prepare a dataset for a time-series analysis, apply various techniques to clean the dataset, and decompose the time series.

Read...

At this point, you should know the different components of a time series (trend, periodicity, and noise) and a variety of common patterns that may exist in your data. Now, we need to actually apply this knowledge to decompose the time series into each individual component. In this section, we are going to work through an example of how to prepare your data for a variety of time series analyses we will learn about in this course. Please note that depending on the time series analysis you pick, these steps may change a bit. But this is the general order you should follow to set up the data:

- Form a Question and Pick the Dataset

- Clean Data

- Plot Time Series

- Smooth Data

- Transform Data

- Plot Again

- Decompose Data into Components (trend, periodicity, and noise)

Forming a Question and Selecting a Dataset

There are a lot of questions we can ask when it comes to performing a time series analysis. However, we need to refine the question to be specific enough to actually perform an analysis and interpret the results. If your question is clear and concise, your interpretation of the results will be more straightforward. For example, let’s say we wanted to look at the global change in temperature. At first glance, you might think that is straightforward…..it’s not. Let’s start with the ‘global’ aspect. Is this a global average? How is global computed? And then, what is the change? The monthly change? The yearly change? How does the global value now compare to the global value 10 years ago? And then, finally, temperature. There are many temperature variables we could be talking about: air temperature, sea surface temperature, the temperature at 2 meters, deep ocean temperatures, temperatures at a specific pressure level... The problem with such a vague question is that if you don’t know what you are trying to ask, then you will have problems selecting the data and the appropriate analysis which, in turn, will lead to poor interpretations of the result.

In short, I tend to be as specific as possible, so I know what I’m trying to solve to make it easier for me to explain my results. You should include something about the spatial aspect, the temporal aspect, vertical if relevant, the name of the dataset, and the variable of choice. Sometimes, however, the question doesn’t fully present itself until after you try a few things….and that is totally fine! Refining your question is always acceptable based on limitations you may meet when it comes to obtaining data or other aspects of the analysis. But when presenting your material, make sure you are clear about what question you are asking.

Now that I said be specific, I’m going to be somewhat unspecific for this example, mostly because I’m trying to show you the different routes you might take based on the question you are asking. I’m going to say that I will look at the gridded monthly global sea surface temperature anomalies from January 1880 through April 2017 from the NOAA MLost dataset. The first caveat is that the NOAA grid is coarse (5 degrees x 5 degrees). This can potentially affect the spatial aspect if I average. Note that I didn’t exactly say what I’m looking at (a change in temperature or something else). That’s because I want to explore the dataset first with the intention of refining the question later on.

Let’s begin the exploration. Start by loading in the two datasets.

Your script should look something like this:

# load in monthly SST Anomaly NOAA MLOST

fid <- open.nc("NOAA_MLOST_MonthlyAnomTemp.nc")

NOAA <- read.nc(fid)

# create global average

NOAAglobalSSTAnom <- apply(NOAA$air,3,mean,na.rm=TRUE)

# time is in days since 1800-1-1 00:00:0.0 (use print.nc(fid) to find this out)

NOAAtime <- as.Date(NOAA$time,origin="1800-01-01")

Now, we can continue preparing the data for the analysis.

Clean Data

When I say ‘clean’ your data, I mean make sure the dataset is ready for the analysis. This means taking care of any known issues with the dataset and dealing with missing values. Whenever you clean your data, the first step is to always look at the ‘readme’ file. This will tell you about questionable data and possible quality control flags you should utilize. It’s a good starting point to make sure you have only good, reliable observations or data points. Unfortunately, with the data we have selected, there is no easily accessible readme file. This will happen from time to time. Instead, they have several journal articles to look through. You can also check the home pages of the datasets, like for NOAA [41], to see if there is any useful information. A further step is to 'dump' the netcdf file (print.nc command) to check for any notes by the authors of the datasets or for anything that might look like a QC flag. Based on looking through the references and dumping the file, there appear to be no QC flags or anything noted by the authors. So, we can move on.

After we’ve checked for any questionable data points, we need to decide what to do with the missing values. Missing values can come from bad data points (such as the removal process we just talked about) or from the lack of an observation. Sometimes you can just leave the missing values in and the R function will deal with it automatically, but this is incredibly risky if you don’t understand what the R function is doing (meaning the process it uses to deal with the missing values- does it fill it in, drop the point, etc.). We’ve discussed some methods already on how to fill the values in, so I will leave it up to your discretion, but let’s go ahead and fill in our values in the NOAA dataset as we did previously (using the ‘impute’ function from Hmisc package).

Your script should look something like this:

# extract out lat and lon accounting for EAST/WEST

NOAAlat <- NOAA$lat

NOAAlon <- c(NOAA$lon[37:length(NOAA$lon)]-360,NOAA$lon[1:36])

library(Hmisc)

# initialize the array

NOAAglobalSSTAnomImpute <- array(NaN,dim=dim(NOAA$air))

# impute the whole dataset

for(imonth in 1: length(NOAAtime)){

NOAAtemp <- rbind(NOAA$air[37:length(NOAA$lon),,imonth],NOAA$air[1:36,,imonth])

NOAAglobalSSTAnomImpute[,,imonth] <- impute(NOAAtemp,fun=mean)

}

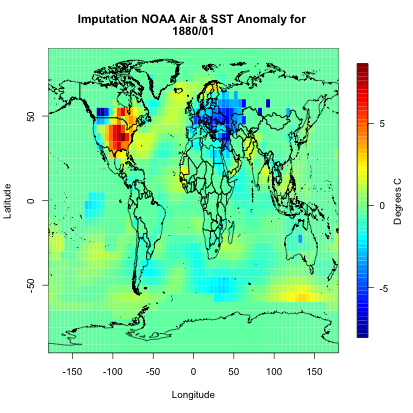

Note that I had to reverse the latitudes so I can use the ‘image.plot’ command. Let’s plot the first month to ensure the imputation worked.

Your script should look something like this:

# load in tools to overlay country lines

library(maptools)

data(wrld_simpl)

# plot map of first month of data

image.plot(NOAAlon,NOAAlat,NOAAglobalSSTAnomImpute[,,1],xlab="Longitude",

ylab="Latitude",legend.lab="Degrees C",

main=c("Imputation NOAA Air & SST Anomaly for ", format(NOAAtime[1],"%Y/%m")))

plot(wrld_simpl,add=TRUE)

You should get the following figure:

Based on the figure, it appears the imputation worked and all the values are filled in. So now we have dealt with uncertain data values as well as missing values. We can continue on with the process.

Plot Time Series

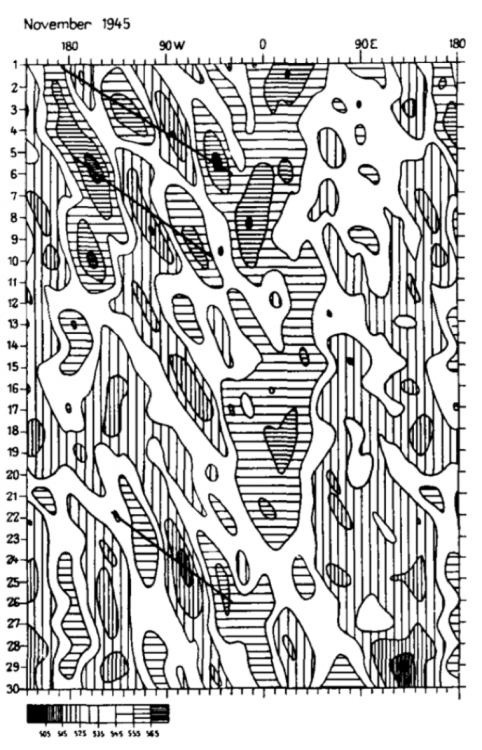

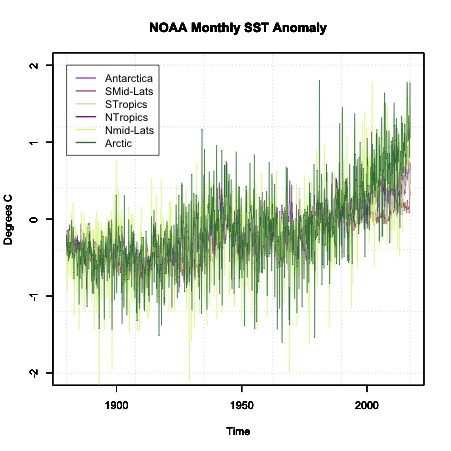

At this point, we have a good quality dataset to work with. The next actions will be due to the analysis you wish to perform, not due to the data. The first step is to, as usual, plot. But specifically, we need to plot the time series. If you are working with only time (at one location or averaged), you can use the ‘plot’ or ‘plot.ts’ functions. If you are working with multiple locations (several stations, gridded data, etc) like we are, you will have to get creative. If it’s a relatively small number of locations, you can use different colors or symbols. But if it’s the whole globe (like what we have), you should think of ways to ‘chunk’ the data together for plotting. Here are two ways I use often.

The first is to break down the globe by latitude zone. You create the latitude zones to be however big or small as you want, but I typically create 6 zones: 90S-60S, 60S-30S, 30S-0, 0-30N, 30-60N, 60N-90N. You average spatially for all longitudes within each latitude zone. Then, you can plot each zone separately or color-code the lines on one plot. Let’s give this a try with the NOAA data. First, set up your latitude zones.

Your script should look something like this:

# create lat zones lat_zones <- t(matrix(c(-90,-60,-60,-30,-30,0,0,30,30,60,60,90),nrow=2,ncol=6))

Here’s an example of the boundaries for the latitude zones I created:

Now, loop through each zone for each month and compute the average over all longitudes.

Your script should look something like this:

# create lat zone averages

NOAASSTAnomLatZone <- matrix(nrow=length(NOAAtime),ncol=dim(lat_zones)[1])

for(izone in 1:dim(lat_zones)[1]){

# extract the latitudes in this zone

latIndex <- which(NOAAlat < lat_zones[izone,2] & NOAAlat >= lat_zones[izone,1])

for(itime in 1:length(NOAAtime)){

NOAASSTAnomLatZone[itime,izone] <- mean(NOAAglobalSSTAnomImpute[,latIndex,itime])

}

}

Plot the time series by running the code below:

Here is a larger version of the figure:

Note that the colors are random and will change each time you run the code.

Another way to chunk your global data is through a classification system. One such system is the Koppen Climate Classification System [42]. This system is broken into 5 major climatic types that are determined by temperature and precipitation climatologies. If you are creating a global analysis, this is a good way to break up the data into 5 plots or colors (1 for each region) and it makes synthesizing the data much easier. Similar to the latitude zones, you average all lats/lons that fall in each climate zone together. One caveat, the Koppen climate system is, in general, only used over land. For this reason, I will not show you an example of breaking our data into it. But what you can do is download the data for the Koppen climate classification [43]. The data is in the format lon/lat/koppen identifier. You can find the classification code on the same page. If you need help or an example of how to set this up, please let me know.

So now we have a time series plot. What next? Well, the next step depends on the analysis you are trying to perform. Since we do not have a clear question, I’m going to focus on the idea that we are trying to determine whether a trend is a component in our dataset. Now, at first glance, you can see some changes in the data suggesting a trend exists, but there is a lot of variability from month to month. So, what can we do? The two main options are to transform the data and/or smooth the data.

We’ve talked about transformation already, so I won’t go into much detail, but when it comes to trends, your best bet is to perform an anomaly conversion. Our example is already looking at the anomaly, so we don’t need to do this. But please remember, many times when examining data for trends, looking at the anomaly first is a good start.

The second aspect is smoothing the data. For smoothing data, we are trying to remove some of the noise by reducing the variability. Again, the most common type is a moving average. We’ve already applied a moving average imputation spatially, but let’s try a moving average temporally. Apply an 11-month smoother and plot the results for Antarctica (zone 1) by running the code below:

Below is a larger version of the overlay:

Notice how the variability has decreased. We have a nice smoothed running average of the SST anomaly. The noise has been decreased, and we can start to see the actual trend better.

Types of Decomposition

Now that we have a clean dataset that’s been smoothed to reduce noise and the time series plot suggests a trend exists, we can start to decompose the time series. Decomposing a time series means that we break a time series down into its components. For this course, we will have three components. A periodicity component (P), a trend component (T) and an irregular/remainder/error component (E).

There are two classical types of decomposition. These are still widely used, but please note more robust methods exist and will be talked about later on in the course. The first type is called the Additive Decomposition:

For each time stamp (t), the data (Y) at time t (Yt) can be decomposed into the periodicity component (Pt) at time t (which includes Quasi-Periodic and Chaotic), a trend component (Tt) at time t, and the remainder/error (Et) at time t.

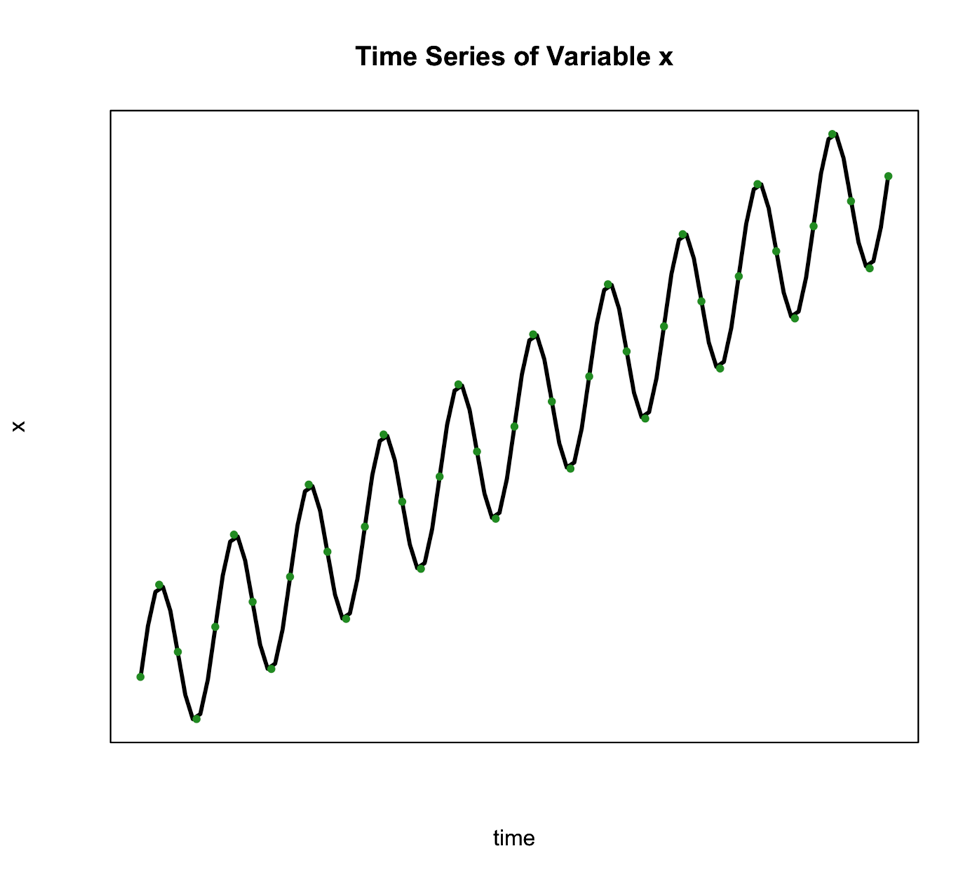

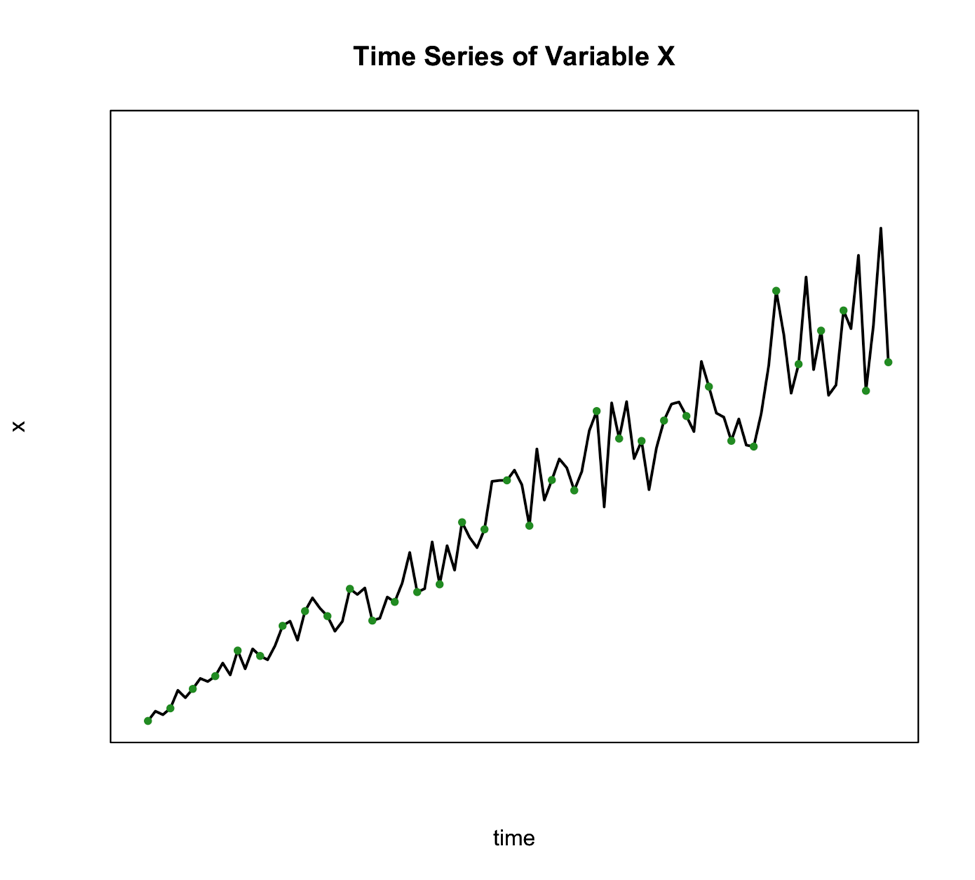

We use this type of decomposition primarily when the seasonal variation is constant over time. Such is the case in the figure below:

The second classical decomposition type is called Multiplicative and takes the form:

We use this method when the seasonal variation changes over time such as the figure below shows:

An alternative to the multiplicative model is to transform your data to meet the requirements of the additive. One of the best transformations to do this is log.

So, what would you select for our example? Well, for the Antarctica case, the additive might be reasonable as the variation doesn’t change much through time.

Basic Steps of Decomposition

Now that we know which method we are going to use, we can decompose the series into its components. Below is a basic step by step guide you can follow.

- Estimate the trend component (T)

We can do this by using a linear regression model, which you learned about in Meteo 815. The result is your trend component. If the trend, however, is not linear but perhaps exponential, we need to use a log transformation to obtain a linear trend and then perform the linear regression analysis. - Periodic Effects (P)

Before we can obtain the periodic effect, we need to de-trend the series. If the method is additive, you subtract the trend estimate from the series. If it’s multiplicative, you divide the series by the trend values. Then, we average the de-trended values over your periodic time span. For example, if you wanted a seasonal effect, you compute the average of the de-trended values for each climatological season. That is, take an average of all the Decembers, Januarys, and Februarys, then take the average of all March, April, May months, and so on. - Random Component (E)

To obtain the random component if you are using the additive format, you solve for E: To obtain the random component if you are using the multiplicative format, you solve for E again:

Please note that you do not have to perform these calculations yourself. Instead, you can use the function ‘decompose’ in R which allows you to decompose any time series by ‘type’ (set to additive or multiplicative). All you need is a time series function. What is important in these steps is that you understand what’s going on. That is, we simply pull out each component. If it seems basic, it is! Don’t overthink this.

Let’s decompose our Antarctica SST time series in R. Use the code below to first make a time series object.

Your script should look something like this:

# create a time series object tsNOAA <- ts(movingAveNOAAAnom[,1],frequency=12,start=c(as.numeric(format(NOAAtime[1],"%Y")),as.numeric(format(NOAAtime[1],"%M")))

Now, let’s decompose by running the code below:

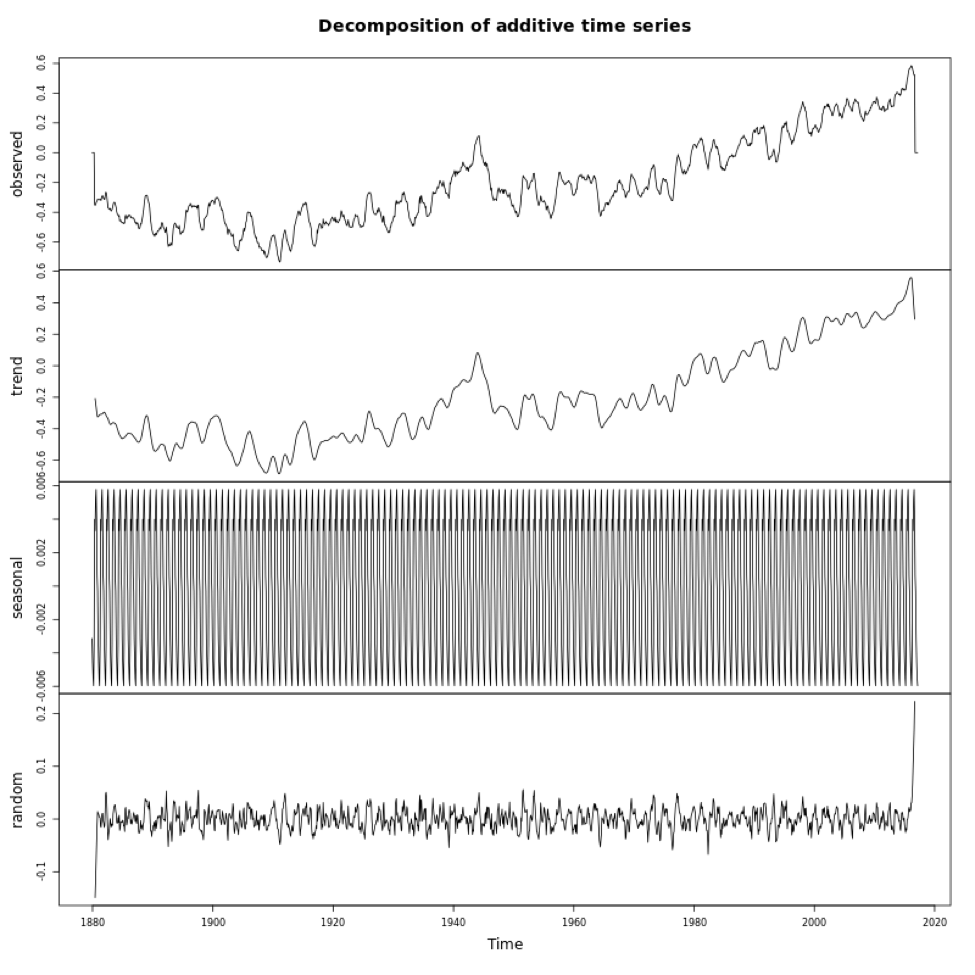

If you look at the variables in DecomposeNOAA, you will see each component: Trend, Seasonal (what we call Periodicity in our course) and Random (error). You can also plot the object. Below is a larger version of the figure.

This figure shows you the data in the first panel, the trend component in the second, the periodicity in the third, and the random in the fourth. Note that the seasonality component depends on how we adjust the ‘frequency’ parameter in the metadata (time series object). It is important that you get this right when decomposing.

So there you have it, your time series has been broken down. You know each component. Now, this may seem kind of pointless, but it will be helpful later on when we try to identify other patterns and diagnose different aspects of your time series. This is a very simplistic approach, one you will probably not use, but it is meant to illustrate how these components all add together. We will hit it with more powerful tools in later lessons.

Self Check

Test your knowledge...

Lesson 2: Autocorrelation and ARIMA

Motivate...

PRESENTER: How does rice grow? Rice is a grain. It looks like grass when it's fully grown. Most of the rice we eat in the UK comes from India, Thailand, Cambodia, Vietnam, Pakistan, and Italy. People in these countries have grown rice for thousands of years. Actually, you can grow rice in most places as long as the plants aren't exposed to the cold.