Course Outline

Lesson 1: Retrieving Data From a Relational Database

Overview

Overview

One of the core skills required by database professionals is the ability to manipulate and retrieve data held in a database using Structured Query Language (SQL, sometimes pronounced "sequel"). As we'll see, queries can be written to create tables, add records to tables, update existing table records, and retrieve records meeting certain criteria. This last function, retrieving data through SELECT queries, is arguably the most important because of its utility in answering the questions that led the database developer to create the database in the first place. SELECT queries are also the most fun type to learn about, so, in this first lesson, we will focus on using them to retrieve data from an existing database. Later, we'll see how new databases can be designed, implemented, populated and updated using other types of queries.

Objectives

At the successful completion of this lesson, students should be able to:

- use the Query Builder GUI in MS-Access to create basic SQL SELECT queries;

- understand how settings made in the Query Builder translate to the various clauses in an SQL statement;

- use aggregation functions on grouped records to calculate counts, sums, and averages;

- construct a query based on another query (subquery).

Questions?

Conversation and comments in this course will take place within the course discussion forums. If you have any questions now or at any point during this week, please feel free to post them to the Lesson 1 Discussion Forum.

Checklist

Checklist

Lesson 1 is one week in length (see the Canvas Calendar for specific due dates). To finish this lesson, you must complete the activities listed below:

- Download the baseball stats.accdb [1] (right-click and Save As) Access database that will be referenced during the lesson.

- Work through Lesson 1.

- Complete Project 1 and upload its deliverables to the Project 1 Dropbox.

- Complete the Lesson 1 Quiz.

SELECT Query Basics

SELECT Query Basics

Why MS-Access?

A number of RDBMS vendors provide a GUI to aid their users in developing queries. These can be particularly helpful to novice users, as it enables them to learn the overarching concepts involved in query development without getting bogged down in syntax details. For this reason, we will start the course with Microsoft Access, which provides perhaps the most user-friendly interface.

A. Download an Access database and review its tables

Throughout this lesson, we'll use a database of baseball statistics to help demonstrate the basics of SELECT queries.

- Click to download the baseball database. [1]

- Open the database in MS-Access.

One part of the Access interface that you'll use frequently is the "Navigation Pane," which is situated on the left side of the application window. The top of the Navigation Pane is just beneath the "Ribbon" (the strip of controls that runs horizontally along the top of the window).

The Navigation Pane provides access to the objects stored in the database, such as tables, queries, forms, and reports. When you first open the baseball_stats.accdb database, the Navigation Pane should appear with the word Tables at the top, indicating that it is listing the tables stored in the database (PLAYERS, STATS, and TEAMS). - Double-click on a table's name in the Navigation Pane to open it. Open all three tables and review the content. Note that the STATS table contains an ID for each player rather than his name. The names associated with the IDs are stored in the PLAYERS table.

B. Write a simple SELECT query

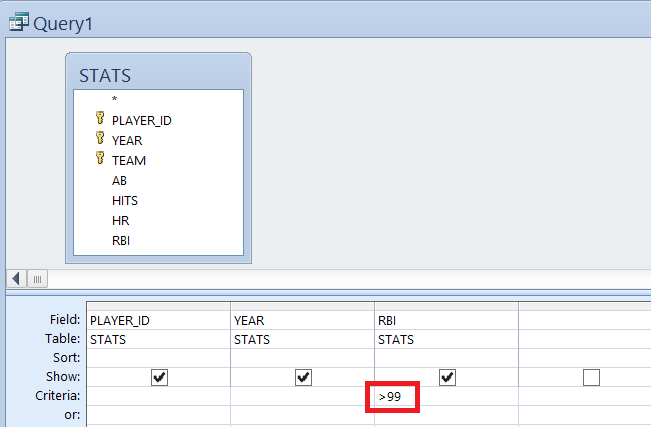

With our first query, we'll retrieve data from selected fields in the STATS table.

- Click on the Create tab near the top of the application window.

- Next, click on the Query Design button (found on the left side of the Create Ribbon in the group of commands labeled as Queries).

When you do this in Access 2010 and higher, the ribbon switches to the Design ribbon. - In the Show Table dialog, double-click on the STATS table to add it to the query and click Close.

- Double-click on PLAYER_ID in the list of fields in the STATS table to add that field to the design grid below.

- Repeat this step to add the YEAR and RBI fields.

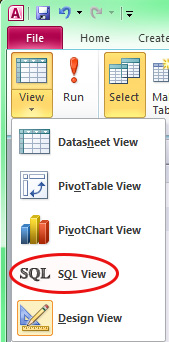

- At any time, you can view the SQL that's created by your GUI settings by accessing the View drop-down list on the far-left side of Design Ribbon (it is also available when you have the Home tab selected, as shown below).

As you go through the next steps, look at the SQL that corresponds to queries you are building.

C. Restrict the returned records to a desired subset

- From the same View drop-down list, select Design View to return to the query design GUI.

- In the design grid, set the Criteria value for the RBI field to >99.

- Test the query by clicking on the red exclamation point on the top left next to the View dropdown (it should return 103 records).

D. Sort the returned records

- Return to Design View by selecting it from the View dropdown.

- In the design grid, click in the Sort cell under the RBI column and select Descending from the drop-down list. This will sort the records from highest RBI total to lowest.

- Test the query.

E. Add additional criteria to the selection

- Return to Design View and set the Criteria value for the YEAR field to >1989. This will limit the results to seasons of over 100 RBI since 1990.

- Test the query (it should return 53 records).

- Return to Design View and modify the Criteria value for the YEAR field to >1989 And <2000, which will further limit the results to just the 1990s.

- Test the query (it should return 34 records).

- Return to Design View and change the Criteria value for the YEAR field back to >1989, beneath that cell (in the or: cell) add <1960.

As you should be able to guess, I'm asking you to write a query that identifies 100-RBI seasons since 1989 OR prior to 1960. However, the query as written at this point doesn't quite yield that result; look at the WHERE line in the SQL view. Instead, it would return 100-RBI seasons since 1989 and all seasons prior to 1960 (not just the 100-RBI ones). To produce the desired result, you need to repeat the >99 criterion in the RBI field's or: cell. Check the SQL view to see the change. - Test the query (it should return 74 records).

You've probably recognized by now that the output from these queries is not particularly human friendly. In the next part of the lesson, we'll see how to use a join between the two tables to add the names of the players to the query output.

Joining Data From Multiple Tables

Joining Data From Multiple Tables

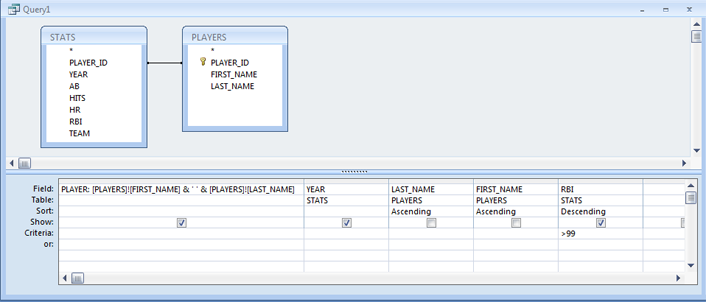

One of the essential functions of an RDBMS is the ability to pull together data from multiple tables. In this section, we'll make a join between our PLAYERS and STATS tables to produce output in which the stats are matched up with the players who compiled them.

A. Retrieve data from multiple tables using a join

- Return to Design View and click on the Show Table button (near the middle of the Ribbon).

- In the Show Table dialog, double-click on the PLAYERS table to add it to the query, then Close the Show Table dialog. Create the join by click-holding on the PLAYER_ID field name in one of the tables and dragging to the PLAYER_ID field name in the other table. If you make the wrong join connection, you can right-click on the line and delete the join.

Note:

You'll probably notice the key symbol next to PLAYER_ID in the PLAYERS table and next to PLAYER_ID, YEAR and TEAM in the STATS table. This symbol indicates that the field is part of the table's primary key, a topic we'll discuss more later. For now, it's good to understand that a field need not be a key field to participate in a join, and that while the fields often share the same name (as is the case here), that is not required. What's important is that the fields contain matching values of compatible data types (e.g., a join between a numeric zip code field and a string zip code field will not work because of the mismatch between data types).

- Modify the query so that it again just selects 100-RBI seasons without any restrictions on the YEAR.

- Double-click on the FIRST_NAME and LAST_NAME fields to add them to the query.

- Test the query. Looking at the output, you may be thinking that the PLAYER_ID value is not really of much use anymore and that the names would be more intuitive on the left side rather than on the right.

- To remove the PLAYER_ID field from the output, return to Design View and click on the thin gray bar above the PLAYER_ID field in the design grid to highlight that field. Press the Delete key on your keyboard to remove that field from the design grid (and the query results).

- To move the name fields, click on the FIRST_NAME field's selection bar in the design grid and drag to the right so that you also select the LAST_NAME field. With both fields selected, click the selection bar and drag them to the left so that they now appear before the YEAR and RBI fields.

- Test the query.

B. Use the Expression Builder to concatenate values from multiple fields

- Return to Design View and right-click on the Field cell of the FIRST_NAME column; on the context menu that appears, click on Build to open the Expression Builder.

You should see the FIRST_NAME field listed in the expression box already. Note the Expression Elements box in the lower left of the dialog, which provides access to the Functions built into Access (e.g., the LCase function for turning a string into all lower-case characters), to the tables and fields in the baseball_stats.accdb database (e.g., STATS), to a list of Constants (e.g., True and False) and to a list of Operators (e.g., +, -, And, Or). All of these elements can be used to produce expressions in either the Field or Criteria rows of the design grid. Note that it's also OK to type the expression directly if you know the correct syntax. Here, we're going to create an expression that will concatenate the FIRST_NAME value with the LAST_NAME value in one column. - First, delete the FIRST_NAME text from the expression box.

- Expand the list of objects in the baseball_stats.accdb database by clicking on the plus sign to its left. Then expand the list of Tables.

- Click on PLAYERS to display its fields in the middle box. Double-click on the FIRST_NAME field to add it to the expression. (Note that the field is added to the expression in a special syntax that includes the name of its parent table. This is done in case there are other tables involved in the query that also have a FIRST_NAME field. If there were, this syntax would ensure that the correct FIRST_NAME field would be utilized.)

- The concatenation character in Access is the ampersand (&). Click in the expression text box after [PLAYERS]![FIRST_NAME] to get a cursor and type:

& ' ' &

So, you type &-space-single quote-space-single quote-space-&. The purpose of this step is to add a space between the FIRST_NAME and LAST_NAME values in the query results. - Double-click on the LAST_NAME field in the field list to add it to the expression.

- Finally, place the cursor at the beginning of the expression and type the following, followed by a space:

PLAYER:

This specifies the column's name in the output. Your expression should now look like this:Text Description (click to reveal)

PLAYER: [PLAYERS]![FIRST_NAME] & ’ ’ & [PLAYERS]![LAST_NAME] - When you've finished writing the expression, click OK to dismiss the Expression Builder.

- The LAST_NAME column is now redundant, so remove it from the design grid as described above.

- Run the query and confirm that the name values concatenated properly.

C. Sorting by a field not included in the output

Suppose you wanted to sort this list by the last name, then first name, and then by the number of RBI. This would make it easier to look at each player's best seasons in terms of RBI production.

- In Design View, double-click on LAST_NAME, then FIRST_NAME to add them back to the design grid.

- Choose the Ascending option in the Sort cell for both the LAST_NAME and FIRST_NAME fields.

- Again, these fields are redundant with the addition of the PLAYER expression. Uncheck the Show checkbox for both fields so that they are not included in the query results. Note that the ordering of the Sort fields in the design grid is important. The results are sorted first based on the left-most Sort field, then by the next Sort field, then the next, etc.

- If necessary, click on the thin gray selection bar above the RBI field in the design grid to select that column. Click and drag to the right so that it is moved to the right of the _NAME columns.

- Choose Descending as the Sort option for the RBI field.

- Run the query and confirm that the records sorted properly. (Hank Aaron should be listed first.)

D. Performing an outer join

If you were to count the number of players being displayed by our query, you'd find that there are 10 (PLAYER_IDs 1 through 10).

- Open the PLAYERS table and note that there is a player (Alex Rodriguez, PLAYER_ID 11) who is not appearing in the query output.

- Now open the STATS table and note that there are records with a PLAYER_ID of 12, but no matching player with that ID in the PLAYERS table. These records aren't included in the query output either.

The unmatched records in these tables aren't appearing in the query output because the join between the tables is an inner join. Inner joins display data only from records that have a match in the join field values.

To display data from the unmatched records, we need to use an outer join.

Close the tables. - Leave Query1 open, as we'll come back to it in a moment. Create a new query (Create > Query Design).

- Add the PLAYERS and STATS tables to the design grid of the new query, and then close the Show Table dialog.

- Create a join between the PLAYER_ID fields.

- Add the PLAYER_ID, FIRST_NAME and LAST_NAME fields from the PLAYERS table to the design grid.

- Add the PLAYER_ID, YEAR and HR fields from the STATS table.

- Choose the Sort Ascending option for the PLAYER_ID field (the one from the STATS table) and the YEAR field.

- Right-click on the line connecting the PLAYER_ID fields and select Join Properties.

Note that join option 1 is currently selected (only include rows where the joined fields from both tables are equal). - Click on the Include ALL records from 'PLAYERS' option, then click OK. Whether this is option 2 or 3 will depend on whether you clicked and dragged from PLAYERS to STATS or from STATS to PLAYERS. In any case, note that the line connecting the key fields is now an arrow pointing from the PLAYERS table to the STATS table.

- Test the query. The query outputs PLAYER_ID 11 (at the top) in addition to 1 through 10. Because there are no matching records for PLAYER_ID 11 in the STATS table, the STATS columns in the output contain NULL values.

- Return to Design View and bring up the Join Properties dialog again. This time choose option 3, so that all records from the STATS table will be shown, then click OK. The arrow connecting the key fields is now reversed, pointing from the right (STATS) table to the left (PLAYERS) table.

- Test the query. If you scroll to the bottom, you'll see that the query outputs the stats for PLAYER_ID 12. This player has no match in the PLAYERS table, so the PLAYERS columns in the output contain NULL values.

- Close Query2 without saving.

E. Performing a cross join

Another type of join that we'll use later when we write spatial queries in PostGIS is the cross join, which produces the Cartesian, or cross, product of the two tables. If one table has 10 records and the other 5, the cross join will output 50 records.

- Create another new query adding the PLAYERS and TEAMS tables to the design grid. You won't see a join line automatically connecting fields in the two tables (they don't share a field in common). This lack of a line connecting the tables is actually what we want, as it will cause a cross join to be performed.

- Add the PLAYER_ID field from the PLAYERS table and the ABBREV field from the TEAMS table to the design grid.

- Test the query. Note that the query outputs 275 records (the product of 11 players and 25 teams). Each record represents a different player/team combination.

- Close Query2 without saving.

The usefulness of this kind of join may not be obvious given this particular example. For a better example, imagine a table of products that includes each product's retail price and a table of states and their sales taxes. Using a cross join between these two tables, you could calculate the sales price of each product in each state.

F. Creating a query with multiple joins

Finally, let's put together a query that joins together data from 3 tables.

- Create another new query adding the PLAYERS, STATS and TEAMS tables to the design grid.

- Create the PLAYER_ID-based join between the PLAYERS and STATS tables.

- Click on the TEAM field in the STATS table and drag over to the ABBREV field in the TEAMS table, (recall that field names do not have to match when creating a join).

- Add the FIRST_NAME and LAST_NAME fields from the PLAYERS table, the YEAR and HR fields from the STATS table and the CITY and NICKNAME fields from the TEAMS table.

- Test the query.

- Close Query2 without saving.

In this way, it is possible to create queries containing several joins. However, in a real database, you are likely to notice a drop in performance as the number of joins increases.

In this section, we saw how to pull together data from two or more related tables, how to concatenate values from two text fields, and how to sort query records based on fields not included in the output. This enabled us to produce a much more user- friendly output than we had at the end of the previous section. In the next section, we'll further explore the power of SELECT queries by compiling career statistics and calculating new statistics on the fly from values stored in the STATS table.

Aggregating Data

Aggregating Data

One of the beauties of SQL is in its ability to construct groupings from the values in a table and produce summary statistics for those groupings. In our baseball example, it is quite easy to calculate each player's career statistics, seasons played, etc. Let's see how this type of query is constructed by calculating each player's career RBI total.

A. Adding a GROUP BY clause to calculate the sum of values in a field

- Return to Design View (your Query 1 design should still be there) and remove the LAST_NAME and FIRST_NAME fields from the design grid.

- With the Design tab selected, click on the Totals button on the right side of the Ribbon in the Show/Hide group of buttons. This will add a Total row to the design grid with each field assigned the value Group By.

- Click in the Total cell under the RBI column and select Sum from the drop-down list (note the other options available on this list, especially Avg, Max, and Min).

- Grouping by both the player's name and the year won't yield the desired results, since the data in the STATS table are essentially already grouped in that way. We want the sum of the RBI values for each player across all years, so remove the YEAR column from the design grid and remove the >99 from the Criteria row.

- Modify the query so that the results are sorted from highest career RBI total to lowest.

- Run the query and note that the RBI column name defaults to 'SumOfRBI'. You can override this by returning to Design View and entering a custom name (such as CareerRBI) and a colon before the RBI field name (following the PLAYER field example).

B. Use the Expression Builder to perform a calculation based on multiple field values

Home run hitters are often compared based on the frequency of their home run hitting (i.e., 'Player X averages a home run every Y times at bat'). Let's calculate this value for each player and season.

- Click on the Totals button on the query Design toolbar to toggle off the Total row in the design grid.

- Remove the RBI field from the design grid.

- Add the YEAR field to the design grid.

- In the third column (which is empty), right-click in the Field cell and click on Build to pull up the Expression Builder as done earlier.

- Navigate inside the database and click on STATS to see a list of its fields.

- Use the GUI to build the following expression:

ABPERHR: [STATS]![AB]/[STATS]![HR]

- If a player had 0 HR in a season, this calculation will generate an error (can't divide by 0, test the query and look for #Div/0!), so add the HR field to the design grid, add >0 to its Criteria cell and uncheck its Show checkbox.

- Sort the query results (not shown below) by the ABPERHR value from lowest to highest (since low values are better than high values).

- Run the query and note that the calculated values include several digits after the decimal point. These digits aren't really necessary, so return to Design View, right-click on the ABPERHR field and select Properties.

- A Property Sheet pane will appear on the right side of the window. Select Fixed from the Format drop-down list and set the Decimal Places value to 1.

- Re-run the query and confirm that you get the desired results.

SELECT Query Syntax

SELECT Query Syntax

Hopefully, you've found the MS-Access query builder to be helpful in learning the basics of retrieving data from a database. As you move forward, it will become important for you to learn some of the syntax details (i.e., how to write the statements you saw in SQL View without the aid of a graphical query builder). That will be the focus of this part of the lesson.

A. The SELECT clause

All SELECT queries begin with a SELECT clause whose purpose is to specify which columns should be retrieved. The desired columns are separated by commas. Our first query in this lesson had a SELECT clause that looked like the following:

SELECT STATS.PLAYER_ID, STATS.HR, STATS.RBI

Note that each column name is preceded by the name of its parent table, followed by a dot. This is critical when building a query involving joins and one of the desired columns is found in multiple tables. Including the parent table eliminates any confusion the SQL interpreter would have in deciding which column should be retrieved. However, you should keep in mind that the table name can be omitted when the desired column is unambiguous. For example, because our simple query above is only retrieving data from one table, the SELECT clause could be reduced to:

SELECT PLAYER_ID, HR, RBI

Selecting all columns

The easiest way to retrieve data from a table is to substitute the asterisk character (*) for the list of columns:

SELECT *

This will retrieve data from all of the columns. While it's tempting to use this syntax because it's so much easier to type, you should be careful to do so only when you truly want all of the data held in the table or when the table is rather small. Retrieving all the data from a table can cause significant degradation in the performance of an SQL-based application, particularly when the data is being transmitted over the Internet. Grab only what you need!

Case sensitivity in SQL

Generally speaking, SQL is a case-insensitive language. You'll find that the following SELECT clause will retrieve the same data as the earlier ones:

SELECT player_id, hr, rbi

Why did I say "generally speaking?" There are some RDBMS that are case sensitive depending on the operating system they are installed on. Also, some RDBMS have administrative settings that make it possible to turn case sensitivity on. For these reasons, I suggest treating table and column names as if they are case sensitive.

On the subject of case, one of the conventions followed by many SQL developers is to capitalize all of the reserved words (i.e., the words that have special meaning in SQL) in their queries. Thus, you'll often see the words SELECT, FROM, WHERE, etc., capitalized. You're certainly not obligated to follow this convention, but doing so can make your queries more readable, particularly when table and column names are in lower or mixed case.

Finally, recall that we renamed our output columns (assigned aliases) in some of the earlier query builder exercises by putting the desired alias and a colon in front of the input field name or expression. Doing this in the query builder results in the addition of the AS keyword to the query's SELECT clause, as in the following example:

SELECT PLAYER_ID, HR AS HomeRuns, RBI

B. The FROM clause

The other required clause in a SELECT statement is the FROM clause. This specifies the table(s) containing the columns specified in the SELECT clause. In the first queries we wrote, we pulled data from a single table, like so:

SELECT STATS.PLAYER_ID, STATS.HR, STATS.RBI FROM STATS

Or, omitting the parent table from the column specification:

SELECT PLAYER_ID, HR, RBI FROM STATS

The FROM clause becomes a bit more complicated when combining data from multiple tables. The Access query builder creates an explicit inner join by default. Here is the code for one of our earlier queries:

SELECT PLAYERS.FIRST_NAME, PLAYERS.LAST_NAME, STATS.YEAR, STATS.RBI FROM PLAYERS INNER JOIN STATS ON PLAYERS.PLAYER_ID = STATS.PLAYER_ID

An inner join like this one causes a row-by-row comparison to be conducted between the two tables looking for rows that meet the criterion laid out in the ON predicate (in this case, that the PLAYER_ID value in the PLAYERS table is the same as the PLAYER_ID value in the STATS table). When a match is found, a new result set is produced containing all of the columns listed in the SELECT clause. If one of the tables has a row with a join field value that is not found in the other table, that row would not be included in the result.

We also created a couple of outer joins to display data from records that would otherwise not appear in an inner join. In the first example, we chose to include all data from the left table and only the matching records from the right table. That yielded the following code:

SELECT [PLAYERS]![FIRST_NAME] & ' ' & [PLAYERS]![LAST_NAME] AS PLAYER, STATS.YEAR, STATS.RBI

FROM STATS LEFT JOIN PLAYERS ON STATS.PLAYER_ID = PLAYERS.PLAYER_ID

Note that the only difference between this FROM clause and the last is that the INNER keyword was changed to the word LEFT. As you might be able to guess, our second outer join query created code that looks like this:

SELECT [PLAYERS]![FIRST_NAME] & ' ' & [PLAYERS]![LAST_NAME] AS PLAYER, STATS.YEAR, STATS.RBI

FROM STATS RIGHT JOIN PLAYERS ON STATS.PLAYER_ID = PLAYERS.PLAYER_ID

Here the word LEFT is replaced with the word RIGHT. In practice, RIGHT JOINs are more rare than LEFT JOINs since it's possible to produce the same results by swapping the positions of the two tables and using a LEFT JOIN. For example:

SELECT [PLAYERS]![FIRST_NAME] & ' ' & [PLAYERS]![LAST_NAME] AS PLAYER, STATS.YEAR, STATS.RBI

FROM PLAYERS LEFT JOIN STATS ON STATS.PLAYER_ID = PLAYERS.PLAYER_ID

Recall that the cross join query we created was characterized by the fact that it had no line connecting key fields in the two tables. As you might guess, this translates to a FROM clause that has no ON predicate:

SELECT STATS.PLAYER_ID, TEAMS.ABBREV

FROM STATS, TEAMS;

In addition to lacking an ON predicate, the clause also has no form of the word JOIN. The two tables are simply separated by commas.

Finally, we created a query involving two inner joins. Here is how that query was translated to SQL:

SELECT PLAYERS.FIRST_NAME, PLAYERS.LAST_NAME, STATS.YEAR, STATS.HR, TEAMS.CITY, TEAMS.NICKNAME

FROM (PLAYERS INNER JOIN STATS ON PLAYERS.PLAYER_ID = STATS.PLAYER_ID) INNER JOIN TEAMS ON STATS.TEAM = TEAMS.ABBREV;

The first join is enclosed in parentheses to control the order in which the joins are carried out. Like in algebra, operations in parentheses are carried out first. The result of the first join then participates in the second join.

C. The WHERE clause

As we saw earlier, it is possible to limit a query's result to records meeting certain criteria. These criteria are spelled out in the query's WHERE clause. This clause includes an expression that evaluates to either True or False for each row. The "True" rows are included in the output; the "False" rows are not. Returning to our earlier examples, we used a WHERE clause to identify seasons of 100+ RBIs:

SELECT STATS.PLAYER_ID, STATS.YEAR, STATS.RBI

FROM STATS

WHERE STATS.RBI > 99

As with the specification of columns in the SELECT clause, columns in the WHERE clause need not be prefaced by their parent table if there is no confusion as to where the columns are coming from.

This statement exemplifies the basic column-operator-value pattern found in most WHERE clauses. The most commonly used operators are:

| Operator | Description |

|---|---|

| = | equals |

| <> | not equals |

| > | greater than |

| >= | greater than or equal to |

| < | less than |

| <= | less than or equal to |

| BETWEEN | within a value range |

| LIKE | matching a search pattern |

| IN | matching an item in a list |

The usage of most of these operators is straightforward. But let's talk for a moment about the LIKE and IN operators. LIKE is used in combination with the wildcard character (the * character in Access and most other RDBMS, but the % character in some others) to find rows that contain a text string pattern. For example, the clause WHERE FIRST_NAME LIKE 'B*' would return Babe Ruth and Barry Bonds. WHERE FIRST_NAME LIKE '*ar*' would return Barry Bonds and Harmon Killebrew. WHERE FIRST_NAME LIKE '*ank' would return Hank Aaron and Frank Robinson.

Note:

Text strings like those in the WHERE clauses above must be enclosed in quotes. Single quotes are recommended, though double quotes will also work in most databases. This is in contrast to numeric values, which should not be quoted. The key point to consider is the data type of the column in question. For example, zip code values may look numeric, but if they are stored in a text field (as they should be because some begin with zeroes), they must be enclosed in quotes.

The IN operator is used to identify values that match an item in a list. For example, you might find seasons compiled by members of the Giants franchise (which started in New York and moved to San Francisco) using a WHERE clause like this:

WHERE TEAM IN ('NYG', 'SFG')

Finally, remember that WHERE clauses can be compound. That is, you can evaluate multiple criteria using the AND and OR operators. The AND operator requires that both the expression on the left and the expression on the right evaluate to True, whereas the OR operator requires that at least one of the expressions evaluates to True. Here are a couple of simple illustrations:

WHERE TEAM = 'SFG' AND YEAR > 2000

WHERE TEAM = 'NYG' OR TEAM = 'SFG'

Sometimes WHERE clauses require parentheses to ensure that the filtering logic is carried out properly. One of the queries you were asked to write earlier sought to identify 100+ RBI seasons compiled before 1960 or after 1989. The SQL generated by the query builder looks like this:

SELECT STATS.PLAYER_ID, STATS.YEAR, STATS.RBI

FROM STATS

WHERE (((STATS.YEAR)>1989) AND ((STATS.RBI)>99)) OR (((STATS.YEAR)<1960) AND ((STATS.RBI)>99));

It turns out that this statement is more complicated than necessary for a couple of reasons. The first has to do with the way I instructed you to build the query:

This resulted in the RBI>99 criterion inefficiently being added to the query twice. A more efficient approach would be to merge the two-year criteria into a single row in the design grid, as follows:

This yields a more efficient version of the statement:

SELECT STATS.PLAYER_ID, STATS.YEAR, STATS.RBI

FROM STATS

WHERE (((STATS.YEAR)>1989 Or (STATS.YEAR)<1960) AND ((STATS.RBI)>99));

It is difficult to see, but this version puts a set of parentheses around the two year criteria so that the condition of being before 1960 or after 1989 is evaluated separately from the RBI condition.

One reason the logic is difficult to follow is because the query builder adds parentheses around each column specification. Eliminating those parentheses and the table prefixes produces a more readable query:

SELECT PLAYER_ID, YEAR, RBI

FROM STATS

WHERE (YEAR>1989 Or YEAR<1960) AND RBI>99;

D. The GROUP BY clause

The GROUP BY clause was generated by the query builder when we calculated each player's career RBI total:

SELECT LAST_NAME, FIRST_NAME, Sum(RBI) AS SumOfRBI

FROM STATS

GROUP BY LAST_NAME, FIRST_NAME;

The idea behind this type of query is that you want the RDBMS to find all of the unique values (or value combinations) in the field (or fields) listed in the GROUP BY clause and include each in the result set. When creating this type of query, each field in the GROUP BY clause must also be listed in the SELECT clause. In addition to the GROUP BY fields, it is also common to use one of the SQL aggregation functions in the SELECT clause to produce an output column. The function we used to calculate the career RBI total was Sum(). Other useful aggregation functions include Max(), Min(), Avg(), Last(), First() and Count(). Because the Count() function is only concerned with counting the number of rows associated with each grouping, it doesn't really matter which field you plug into the parentheses. Frequently, SQL developers will use the asterisk rather than some arbitrary field. This modification to the query will return the number of years in each player's career, in addition to the RBI total:

SELECT LAST_NAME, FIRST_NAME, Sum(RBI) AS SumOfRBI, Count(*) AS Seasons

FROM STATS

GROUP BY LAST_NAME, FIRST_NAME;

Note: In actuality, this query would over count the seasons played for players who switched teams mid-season (e.g., Mark McGwire was traded in the middle of 1997 and has a separate record in the STATS table for each team). We'll account for this problem using a sub-query later in the lesson.

E. The ORDER BY clause

The purpose of the ORDER BY clause is to specify how the output of the query should be sorted. Any number of fields can be included in this clause, so long as they are separated by commas. Each field can be followed by the keywords ASC or DESC to indicate ascending or descending order. If this keyword is omitted, the sorting is done in an ascending manner. The query below sorts the seasons in the STATS table from the best RBI total to the worst:

SELECT PLAYER_ID, YEAR, RBI

FROM STATS

ORDER BY RBI DESC;

That concludes our review of the various clauses found in SELECT queries. You're likely to memorize much of this syntax if you're called upon to write much SQL from scratch. However, don't be afraid to use the Access query builder or other GUIs as an aid in producing SQL statements. I frequently take that approach when writing database-related PHP scripts. Even though the data is actually stored in a MySQL database, I make links to the MySQL tables in Access and use the query builder to develop the queries I need. That workflow (which we'll explore in more depth later in the course) is slightly complicated by the fact that the flavor of SQL produced by Access differs slightly from industry standards. We'll discuss a couple of the important differences in the next section.

Differences Between MS-Access and Standard SQL

Differences Between MS-Access and Standard SQL

If you plan to use the SQL generated by the Access query builder in other applications as discussed on the previous page, you'll need to be careful of some of the differences between Access SQL and other RDBMS.

A. Table/Column Specification in the Expression Builder

We used the Expression Builder to combine the players' first and last names, and later to calculate their home run per at-bat ratio. Unfortunately, the Expression Builder specifies table/column names in a format that is unique to Access. Here is a simplified version of the HR/AB query that doesn't bother including names from the PLAYERS table:

SELECT STATS.PLAYER_ID, STATS.YEAR, [STATS]![AB]/[STATS]![HR] AS ABPERHR FROM STATS;

The brackets and exclamation point used in this expression is non-standard SQL and would not work outside of Access. A safer syntax would be to use the same table.column notation used earlier in the SELECT clause:

SELECT STATS.PLAYER_ID, STATS.YEAR, STATS.AB/STATS.HR AS ABPERHR FROM STATS;

Or, to leave out the parent table name altogether:

SELECT PLAYER_ID, YEAR, AB/HR AS ABPERHR FROM STATS;

Note that this modified syntax will work in Access.

B. String Concatenation

In our queries that combined the players' first and last names, the following syntax was used:

SELECT [PLAYERS]![FIRST_NAME] & ' ' & [PLAYERS]![LAST_NAME]....

As mentioned above, columns should be specified using the table.column notation rather than with brackets and exclamation points. In addition to the different column specification, the concatenation itself would also be done differently in other RDBMS. While Access uses the & character for concatenation, the concatenation operator in standard SQL is ||. However, there is considerable variation in the adherence to this standard. For a comparison of a number of the major RDBMS [2], see http://troels.arvin.dk/db/rdbms/#functions-concat.

Note:

One of the points of comparison in the linked page is whether or not there is automatic casting of values. All this is getting at is what happens if the developer tries to concatenate a string with some other data type (say, a number). Some SQL implementations will automatically cast, or convert, the number to a string. Others are less flexible and require the developer to perform such a cast explicitly.

Subqueries

Subqueries

There comes a time when every SQL developer has a problem that is too difficult to solve using only the methods we've discussed so far. In our baseball stats database, difficulty arises when you consider the fact that players who switch teams mid-season have a separate row for each team in the STATS table. For example, Mark McGwire started the 1997 season with OAK, before being traded to and spending the rest of that season with STL. The STATS table contains two McGwire-1997 rows; one for his stats with OAK and one for his stats with STL.

If you wanted to identify each player's best season (in terms of batting average, home runs or runs batted in), you wouldn't be able to do a straight GROUP BY on the player's name or ID because that would not account for the mid-season team switchers. What's needed in this situation is a multistep approach that first computes each player's yearly aggregated stats, then identifies the maximum value in that result set.

A novice's approach to this problem would be to output the results from the first query to a new table, then build a second query on top of the table created by the first. The trouble with this approach is that it requires re-running the first query each time the STATS table changes.

A more ideal solution to the problem can be found through the use of a subquery. Let's have a look at how to build a subquery in Access.

A. Building a query on top of another saved query

- Create a new query and add the STATS table to the design canvas.

- Click on the Totals button to add the Total row to the design grid.

- Bring the following fields down into the design grid: PLAYER_ID, YEAR, AB, HITS, HR, RBI, and TEAM.

- Set the value in the Total cell to Group By for the PLAYER_ID and YEAR fields.

- Set the value in the Total cell to Sum for the AB, HITS, HR, and RBI fields.

- Set the value in the Total cell to Count for the TEAM field. These settings specify that we want to group by the player ID and year, sum the player's stats across each player/year grouping, and include a count of the number of teams the player played for each year.

- Run the query and confirm that it produces 227 records. (Compare that against the number of records in the STATS table. Do you understand why there is a difference?)

- Save the query as STATS_AGGR.

- Now, create a new query. In the Show Table dialog, double-click on PLAYERS to add it to the design canvas.

- Next, in the Show Table dialog, switch from the Tables tab to the Queries tab and double-click on STATS_AGGR to add it to the design canvas. Let's identify each player's best season in terms of home runs.

- Again, click on the Totals button.

- Bring down to the design grid the FIRST_NAME and LAST_NAME fields from the PLAYERS table and SumOfHR from the STATS_AGGR query.

- Confirm that the value in the Total cell is set to Group By for the FIRST_NAME and LAST_NAME fields.

- Set the value in the Total cell for the SumOfHR field to Max.

- Save this query as QueryBasedOnAQuery.

B. Building a query within another query.

- Switch to the SQL View of the QueryBasedOnAQuery query (sorry about that). Note that an inner join is conducted between PLAYERS and STATS_AGGR. When Access executes this query, it needs to first evaluate the SQL stored in the STATS_AGGR query before it can join that result set to PLAYERS. In your mind, you can imagine replacing the STATS_AGGR part of the PLAYERS INNER JOIN STATS_AGGR expression with the SQL stored in STATS_AGGR. In fact, our next step will be to literally replace STATS_AGGR with its SQL code.

- Re-open the STATS_AGGR query and switch to SQL View.

- Highlight and copy the SQL (leave behind the trailing semi-colon).

- Return to the SQL View of the QueryBasedOnAQuery query.

- Remove the STATS_AGGR from the PLAYERS INNER JOIN STATS_AGGR and paste in the code on your clipboard.

- Insert parentheses around the code you just pasted and add AS STATS_AGG after the closing parenthesis. Note that we're giving the subquery an alias of STATS_AGG to avoid confusion with the saved query with the name STATS_AGGR.

- Replace the query's references to STATS_AGGR with STATS_AGG, (there is one reference to STATS_AGGR in the SELECT clause and one in the ON clause). Your query should look like this:

SELECT PLAYERS.FIRST_NAME, PLAYERS.LAST_NAME, Max(STATS_AGG.SumOfHR) AS MaxOfSumOfHR FROM PLAYERS INNER JOIN (SELECT STATS.PLAYER_ID, STATS.YEAR, Sum(STATS.AB) AS SumOfAB, Sum(STATS.HITS) AS SumOfHITS, Sum(STATS.HR) AS SumOfHR, Sum(STATS.RBI) AS SumOfRBI, Count(STATS.TEAM) AS CountOfTEAM FROM STATS GROUP BY STATS.PLAYER_ID, STATS.YEAR) AS STATS_AGG ON PLAYERS.PLAYER_ID = STATS_AGG.PLAYER_ID GROUP BY PLAYERS.FIRST_NAME, PLAYERS.LAST_NAME; - Test the query. It should report the highest single-season home run total for each player.

This approach is a bit more complicated than the first, since it cannot be carried out with the graphical Query Builder. However, the advantage is that all of the code involved can be found in one place, and you don't have a second intermediate query cluttering the database's object list.

This completes the material on retrieving data from an RDBMS using SELECT queries. In the next section, you'll be posed a number of questions that will test your ability to write SELECT queries on your own.

Project 1: Writing SQL SELECT Queries

Project 1: Writing SQL SELECT Queries

To demonstrate that you've learned the material from Lesson 1, please build queries in your Access database for each of the problems posed below. Some tips:

- Remember that players who played for multiple teams in the same season (i.e., were traded mid-season) will have a separate row in the STATS table for each team-year combination.

- Even if the requested query does not explicitly ask for player names in the output, please include names to make the output more informative.

- Some of the problems require the use of sub-queries. You will receive equal credit whether you choose to embed your sub-query within the larger query or save your sub-query separately and build your final query upon that saved sub-query.

- You should treat the words 'season' and 'year' in the problems below as synonymous.

Here are the problems to solve:

- Display all records/fields from the STATS table for players on the San Francisco Giants (SFG).

- Output the batting average (HITS divided by AB) for each player and season. Format this value so that exactly 3 digits appear after the decimal point.

For example, Babe Ruth had a batting average of .200 in 1914 (2 hits in 10 at-bats).

- Display all records/fields from the STATS table along with the names of the players in the format “Ruth, Babe”.

- Calculate the number of seasons each player played for each of his teams. (Players who were on two teams in the same season can be counted as having played for both teams.)

For example, Mark McGwire played 12 seasons for the Oakland Athletics and 5 seasons for the St Louis Cardinals (1997 being counted for both teams).

- List the players based on the number of home runs hit in their rookie (first) seasons, from high to low.

For example, Frank Robinson hit 38 home runs in his rookie season of 1956.

- Display the names (only the names, with no duplicates) of players who played in New York (any team beginning with 'NY').

- Sort the players by the number of seasons played (high to low). (Do not double-count in cases in which a player switched teams mid-season.)

For example, Sammy Sosa played 18 seasons, with 1989, which he split between CHW and TEX counted as 1 season, not 2.

- Sort the players by their career batting average (career hits divided by career at-bats, high to low).

For example, Harmon Killebrew had a career batting average of .256 (2086 hits in 8147 at-bats).

- Sort the players by their number of seasons with 100 or more RBI (high to low).

For example, Hank Aaron had 100 or more RBI in a season 11 times.

- Sort the players based on the number of years between their first and last seasons of 40 or more HR (from most to least).

For example, Willie Mays had his first 40-HR season in 1954 and his last in 1965, a difference of 11 years.

Name your queries so that it's easy for me to identify them (e.g., Proj1_1 through Proj1_10).

Deliverables

This project is one week in length. Please refer to the Canvas Calendar for the due date.

- Upload your Access database (.accdb file) containing your solutions to the 10 query exercises above to the Project 1 Dropbox. (100 of 100 points)

- Complete the Lesson 1 quiz.

Lesson 2: Relational Database Concepts and Theory

Overview

Overview

Now that you've gotten a taste of the power provided by relational databases to answer questions, let's shift our attention to the dirty work: designing, implementing, populating and maintaining a database.

Objectives

At the successful completion of this lesson, students should be able to:

- design a relational database and depict that design through an entity-relationship diagram;

- create new tables in MS-Access;

- develop and execute insert, update and delete queries in MS-Access;

- describe what is meant by 1st, 2nd and 3rd normal forms in database design;

- explain the concept of referential integrity.

Questions?

If you have any questions now or at any point during this week, please feel free to post them to the Lesson 2 Discussion Forum.

Checklist

Checklist

Lesson 2 is one week in length, see the Canvas Calendar for specific due dates. To finish this lesson, you must complete the activities listed below:

- Work through Lesson 2.

- Complete Project 2 and upload its deliverables to the Project 2 Dropbox.

- Complete the Lesson 2 Quiz.

Relational DBMSs Within The Bigger Picture

Relational DBMSs Within The Bigger Picture

Before digging deeper into the workings of relational database management systems (RDBMSs), it's a good idea to take a step back and look briefly at the history of the relational model and how it fits into the bigger picture of DBMSs in general. The relational model of data storage was originally proposed by an IBM employee named Edgar Codd in the early 1970s. Prior to that time, computerized data storage techniques followed a "navigational" model that was not well suited to searching. The ground-breaking aspect of Codd's model was the allocation of data to separate tables, all linked to one another by keys - values that uniquely identify particular records. The process of breaking data into multiple tables is referred to as normalization.

If you do much reading on the relational model, you're bound to come across the terms relation, attribute, and tuple. While purists would probably disagree, for our purposes you can consider relation to be synonymous with table, attribute with the terms column and field, and tuple with the terms row and record.

SQL, which we started learning about in Lesson 1, was developed in response to Codd's relational model. Today, relational databases built around SQL dominate the data storage landscape. However, it is important to recognize that the relational model is not the only ballgame in town. Object-oriented databases [3] arose in the 1980s in parallel with object-oriented programming languages. Some of the principles of object-oriented databases (such as classes and inheritance) have made their way into most of today's major RDBMSs, so it is more accurate to describe them as object-relational hybrids.

More recently, a class of DBMSs that deviate significantly from the relational model has developed. These NoSQL databases [4] seek to improve performance when dealing with large volumes of data (often in web-based applications). Essentially, these systems sacrifice some of the less critical functions found in an RDBMS in exchange for gains in scalability and performance.

Database Design Concepts

Database Design Concepts

A. Database design concepts

When building a relational database from scratch, it is important that you put a good deal of thought into the process. A poorly designed database can cause a number of headaches for its users, including:

- loss of data integrity over time

- inability to support needed queries

- slow performance

Entire courses can be spent on database design concepts, but we don't have that kind of time, so let's just focus on some basic design rules that should serve you well. A well-designed table is one that:

- seeks to minimize redundant data

- represents a single subject

- has a primary key (a field or set of fields whose values will uniquely identify each record in the table)

- does not contain multi-part fields (e.g., "302 Walker Bldg, University Park, PA 16802")

- does not contain multi-valued fields (e.g., an Author field shouldn't hold values of the form "Jones, Martin, Williams")

- does not contain unnecessary duplicate fields (e.g., avoid using Author1, Author2, Author3)

- does not contain fields that rely on other fields (e.g., don't create a Wage field in a table that has PayRate and HrsWorked fields)

B. Normalization

The process of designing a database according to the rules described above is formally referred to as normalization. All database designers carry out normalization, whether they use that term to describe the process or not. Hardcore database designers not only use the term normalization, they're also able to express the extent to which a database has been normalized:

- First normal form (1NF) describes a database whose tables represent distinct entities, have no duplicative columns (e.g., no Author1, Author2, Author3), and have a column or columns that uniquely identify each row (i.e., a primary key). Databases meeting these requirements are said to be in first normal form.

- Second normal form (2NF) describes a database that is in 1NF and also avoids having non-key columns that are dependent on a subset of the primary key. It's understandable if that seems confusing, have a look at this simple example [5] [www.1keydata.com/database-normalization/second-normal-form-2nf.php]

In the example, CustomerID and StoreID form a composite key - that is, the combination of the values from those columns uniquely identifies the rows in the table. In other words, only one row in the table will have a CustomerID of 1 together with a StoreID of 1, only one row will have a CustomerID of 1 together with a StoreID of 3, etc. The PurchaseLocation column depends on the StoreID column, which is only part of the primary key. As shown, the solution to putting the table in 2NF is to move the StoreID-PurchaseLocation relationship into a separate table. This should make intuitive sense as it spells out the PurchaseLocation values just once rather than spelling them out repeatedly. - Third normal form (3NF) describes a database that is in 2NF and also avoids having columns that derive their values from columns other than the primary key. The wage field example mentioned above is a clear violation of the 3NF rule.

In most cases, normalizing a database so that it is in 3NF is sufficient. However, it is worth pointing out that there are other normal forms including Boyce-Codman normal form (BCNF, or 3.5NF), fourth normal form (4NF) and fifth normal form (5NF). Rather than spend time going through examples of these other forms, I encourage you to simply keep in mind the basic characteristics of a well-designed table listed above. If you follow those guidelines carefully, in particular, constantly being on the lookout for redundant data, you should be able to reap the benefits of normalization.

Generally speaking, a higher level of normalization results in a higher number of tables. And as the number of tables increases, the costs of bringing together data through joins increases as well, both in terms of the expertise required in writing the queries and in the performance of the database. In other words, the normalization process can sometimes yield a design that is too difficult to implement or that performs too slowly. Thus, it is important to bear in mind that database design is often a balancing of concerns related to data integrity and storage efficiency (why we normalize) versus concerns related to its usability (getting data into and out of the database).

Earlier, we talked about city/state combinations being redundant with zip code. That is a great example of a situation in which de-normalizing the data might make sense. I have no hard data on this, but I would venture to say that the vast majority of relational databases that store these three attributes keep them all together in the same table. Yes, there is a benefit to storing the city and state names once in the zip code table (less chance of a misspelling, less disk space used). However, my guess is that the added complexity of joining the city/state together with the rest of the address elements outweighs that benefit to most database designers.

C. Example scenario

Let's work through an example design scenario to demonstrate how these rules might be applied to produce an efficient database. Ice cream entrepreneurs Jen and Barry have opened their business and now need a database to track orders. When taking an order, they record the customer's name, the details of the order such as the flavors and quantities of ice cream needed, the date the order is needed, and the delivery address. Their database needs to help them answer two important questions:

- Which orders are due to be shipped within the next two days?

- Which flavors must be produced in greater quantities?

A first crack at storing the order information might look like this:

| Customer | Order | DeliveryDate | DeliveryAdd |

|---|---|---|---|

| Eric Cartman | 1 vanilla, 2 chocolate | 12/1/11 | 101 Main St |

| Bart Simpson | 10 chocolate, 10 vanilla, 5 strawberry | 12/3/11 | 202 School Ln |

| Stewie Griffin | 1 rocky road | 12/3/11 | 303 Chestnut St |

| Bart Simpson | 3 mint chocolate chip, 2 strawberry | 12/5/11 | 202 School Ln |

| Hank Hill | 2 coffee, 3 vanilla | 12/8/11 | 404 Canary Dr |

| Stewie Griffin | 5 rocky road | 12/10/11 | 303 Chestnut St |

The problem with this design becomes clear when you imagine trying to write a query that calculates the number of gallons of vanilla that have been ordered. The quantities are mixed with the names of the flavors, and any one flavor could be listed anywhere within the order field (i.e., it won't be consistently listed first or second).

A design like the following would be slightly better:

| Customer | Flavor1 | Qty1 | Flavor2 | Qty2 | Flavor3 | Qty3 | DeliveryDate | DeliveryAdd |

|---|---|---|---|---|---|---|---|---|

| Eric Cartman | vanilla | 1 | chocolate | 2 | 12/1/11 | 101 Main St |

||

| Bart Simpson | chocolate | 10 | vanilla | 10 | strawberry | 5 | 12/3/11 | 202 School Ln |

| Stewie Griffin | rocky road | 1 | 12/3/11 | 303 Chestnut St |

||||

| Bart Simpson | mint chocolate chip | 3 | strawberry | 2 | 12/5/11 | 202 School Ln |

||

| Hank Hill | coffee | 2 | vanilla | 3 | 12/8/11 | 404 Canary Dr |

||

| Stewie Griffin | rocky road | 5 | 12/10/11 | 303 Chestnut St |

This is an improvement because it enables querying on flavors and summing quantities. However, to calculate the gallons of vanilla ordered you would need to sum the values from three fields. Also, the design would break down if a customer ordered more than three flavors.

Slightly better still is this design:

| Customer | Flavor | Qty | DeliveryDate | DeliveryAdd |

|---|---|---|---|---|

| Eric Cartman | vanilla | 1 | 12/1/11 | 101 Main St |

| Eric Cartman | chocolate | 2 | 12/1/11 | 101 Main St |

| Bart Simpson | chocolate | 10 | 12/3/11 | 202 School Ln |

| Bart Simpson | vanilla | 10 | 12/3/11 | 202 School Ln |

| Bart Simpson | strawberry | 5 | 12/3/11 | 202 School Ln |

| Stewie Griffin | rocky road | 1 | 12/3/11 | 303 Chestnut St |

| Hank Hill | coffee | 2 | 12/8/11 | 404 Canary Dr |

| Hank Hill | vanilla | 3 | 12/8/11 | 404 Canary Dr |

| Stewie Griffin | rocky road | 5 | 12/10/11 | 303 Chestnut St |

This design makes calculating the gallons of vanilla ordered much easier. Unfortunately, it also produces a lot of redundant data and spreads a complete order from a single customer across multiple rows.

Better than all of these approaches would be to separate the data into four entities (Customers, Flavors, Orders and Order Items):

| CustID | NameLast | NameFirst | DeliveryAdd |

|---|---|---|---|

| 1 | Cartman | Eric | 101 Main St |

| 2 | Simpson | Bart | 202 School Ln |

| 3 | Griffin | Stewie | 303 Chestnut St |

| 4 | Hill | Hank | 404 Canary Dr |

| FlavorID | Name |

|---|---|

| 1 | vanilla |

| 2 | chocolate |

| 3 | strawberry |

| 4 | rocky road |

| 5 | mint chocolate chip |

| 6 | coffee |

| OrderID | CustID | DeliveryDate |

|---|---|---|

| 1 | 1 | 12/1/11 |

| 2 | 2 | 12/3/11 |

| 3 | 3 | 12/3/11 |

| 4 | 2 | 12/5/11 |

| 5 | 4 | 12/8/11 |

| 6 | 3 | 12/10/11 |

| OrderItemID | OrderID | FlavorID | Qty |

|---|---|---|---|

| 1 | 1 | 1 | 1 |

| 2 | 1 | 2 | 2 |

| 3 | 2 | 2 | 10 |

| 4 | 2 | 1 | 10 |

| 5 | 2 | 3 | 5 |

| 6 | 3 | 4 | 1 |

| 7 | 4 | 5 | 3 |

| 8 | 4 | 3 | 2 |

| 9 | 5 | 6 | 2 |

| 10 | 5 | 1 | 3 |

| 11 | 6 | 4 | 5 |

If one were to implement a design like this in MS-Access, the query needed to display orders that must be delivered in the next 2 days would look like this in the GUI:

It would produce the following SQL:

If you aren't experienced with relational databases, then such a table join may seem intimidating. You probably won't need to do anything quite so complex in this course. The purpose of this example is two-fold:

- To illustrate that multi-table designs are often preferable to a "one-big-spreadsheet" approach.

- To emphasize the importance of SQL in pulling together data spread across multiple tables to perform valuable database queries.

D. Data modeling

Whether it's just a quick sketch on a napkin or a months-long process involving many stakeholders, the life cycle of any effective database begins with data modeling. Data modeling itself begins with a requirements analysis, which can be more or less formal, depending on the scale of the project. One of the common products of the data modeling process is an entity-relationship (ER) diagram. This sort of diagram depicts the categories of data that must be stored (the entities) along with the associations (or relationships) between them. The Wikipedia entry on ER diagrams is quite good, so I'm going to point you there to learn more:

Entity-relationship model article at Wikipedia [6] [http://en.wikipedia.org/wiki/Entity-relationship_model]

An ER diagram is essentially a blueprint for a database structure. Some RDBMSs provide diagramming tools (e.g., Oracle Designer, MySQL Workbench) and often include the capability of automatically creating the table structure conceptualized in the diagram.

In a GIS context, Esri makes it possible to create new geodatabases based on diagrams authored using CASE (Computer-Aided Software Engineering) tools. This blog post, Using Case tools in Arc GIS 10 [7], [http://blogs.esri.com/esri/arcgis/2010/08/05/using-case-tools-in-arcgis -10/] provides details if you are interested in learning more.

E. Design practice

To help drive these concepts home, here is a scenario for you to consider. You work for a group with an idea for a fun website: to provide a place for music lovers to share their reviews of albums on the 1001 Albums You Must Hear Before You Die list [8]. (All of these albums had been streamable from Radio Romania 3Net [9], but sadly it appears that's no longer the case.)

Spend 15-30 minutes designing a database (on paper, no need to implement it in Access) for this scenario. Your database should be capable of efficiently storing all of the following data:

- Artist

- Album Genre

- Record Label

- Album Comments

- Album

- Track Name

- Reviewer ID

- Track Rating

- Year

- Track Length

- Album Rating

- Track Comments

When you're satisfied with your design, move to the next page and compare yours to mine.

Implementing a Database Design

Implementing a Database Design

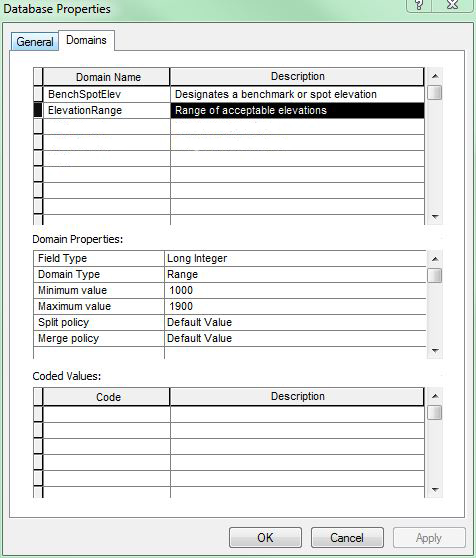

There is no one correct design for the music scenario posed on the last page. The figure below depicts one design. Note the FK notation beside some of the fields. FK stands for foreign key and indicates a field whose values uniquely identify rows in another table. There is no way to designate a field as a foreign key in MS-Access. For our purposes, you should just remember that building foreign keys into your database is what will enable you to create the table joins needed to answer the questions you envision your database answering.

Note also the 1 and * labels at the ends of the relationship lines, which indicate the number of times any particular value will appear in the connected field. As shown in the diagram, values in the Albums table's Album_ID field will appear just once; the same values in the associated field in the Tracks table may appear many times (conveyed by the * character). For example, an album with 10 tracks will have its Album_ID value appear in the Albums table just once. That Album_ID value would appear in the Tracks table 10 times. This uniqueness of values in fields is referred to as cardinality.

If you have questions about this design or see ways to improve it, please share your thoughts in the Lesson 2 Discussion Forum.

In this part of the lesson, we'll create and populate some of the tables depicted in the diagram above to give you a sense of how to move from the design phase to the implementation phase of a database project. Table creation is something that can be accomplished using SQL, as we'll see later in the course. Most RDBMSs also make it possible to create tables through a GUI, which is the approach we will take right now.

An integral part of creating a table is defining its fields and the types of data that can be stored in those fields. Here is a list of the most commonly used data types available in Access:

- Text - for strings of up to 255 characters

- Memo - for strings greater than 255 characters

- Number - for numeric data

- AutoNumber - for automatically incremented numeric fields; more on this below

- Yes/No - for holding values of 'Yes' or 'No'; equivalent to the Boolean type in other RDBMSs

- Date/Time - for storing dates and times

Beyond the data type, fields have a number of other properties that are sometimes useful to set. Among these are Default Value which specifies the value the field takes on by default, and Required, which specifies whether or not the field is allowed to contain Null values. It is also possible to specify that a field is the table's primary key (or part of a multi-field primary key).

Perhaps the most commonly set field property is the Field Size. For Text fields this property defaults to a value of 255 characters. This property should be set to a lower value when appropriate, for a couple of reasons. First, it will reduce the size of the database, and secondly, it can serve as a form of data validation (e.g., assigning a Field Size of 2 to a field that will store state abbreviations will ensure that no more than 2 characters are entered).

When dealing with a Number field, the Field Size property is used to specify the type of number:

- Byte - for integers ranging from 0 to 255

- Integer - for integers in the range of roughly +/- 32,000

- Long Integer - for integers in the range of roughly +/- 2 billion

- Single - for real numbers in the range of roughly +/- 1038

- Double - for real numbers in the range of roughly +/- 10308

As with Text fields, it is good practice to choose the smallest possible Field Size for a Number field.

With this background on fields in mind, let's move on to implementing the music database.

A. Create a new table

- Open Access and from the opening screen click on the Blank Database icon.

- In the right-hand panel, browse to your course folder and give the database the name music.accdb.

- Click the Create button to create the new empty database. Access will automatically create and open a blank table called Table1.

- Select View > Design View to begin modifying this table to meet your needs.

- Give the table the name Albums.

The Design View provides a grid for you to specify the names, data types and other properties for the fields (columns) you want to have in your table. Note that the table automatically has a field called ID with a data type of AutoNumber. The field is also designated as the table's Primary Key.

Note: Most RDBMSs offer an auto-incrementing numeric data type like this. It's common for database tables to use arbitrary integer fields as their primary key. If you create a field as this AutoNumber type (or its equivalent in another RDBMS), you need not supply a value for it when adding new records to the table. The software will automatically handle that for you.

- Rename the field from ID to Album_ID.

- Beneath that field, add a new one called Title.

With the 2013 version of MS Access the interface has changed some when it comes to the next two settings. The list of data types has become more streamlined and the way Field Size is set for numeric fields is different. I will indicate below what the differences are.

- Set the Title field's Data Type to Text.

In Access 2013 - set the Title field's Type to Short Text.

- Set its Field Size to 200. (This and several other options are found in the Field Properties section at the bottom of the window, under the General tab. Note that the maximum value allowed for this property is 255 characters.)

In Access 2013 - no need to set this, the Short Text type holds up to 255 characters.

FYI, the "Long Text" option in Access 2013 ("Memo" option in older Access versions) can hold up to a Gigabyte and can be configured to hold Rich Text formatted information.

- Repeat these steps to add the other fields defined for the Albums table:

In Access 2013 - To set the type and size for numeric data, you first choose Number from the Type list, then under the General tab click the FieldSize property box. Click the arrow and choose the desired field size (Integer, Long Integer, Float, etc.).Table 2.8: Albums Table Name Type Album_ID AutoNumber Title Text (200) Artist_ID Long Integer Release_Year Integer Label_ID Long Integer Note:

Try adding a field with a name of Year to see why we used the name Release_Year instead.

- When finished adding fields to the Albums table, click the Save button.

- To add the next table to the database design, click the Create tab, then Table Design.

- Repeat the steps outlined above to define the Artists table with the following fields:

Table 2.9: Artists Table Name Type Artist_ID AutoNumber Artist_Name Text (200) Year_Begin Integer Year_End Integer - Before closing the table, click in the small gray area to the left of the Artist_ID field to select that field then click on the Primary Key button on the ribbon.

- Create the Labels table with the following fields:

Table 2.10: Labels Table Name Type Label_ID AutoNumber Label_Name Text (200) - Set Label_ID as the primary key of the Labels table.

B. Establish relationships between the tables

While it's not strictly necessary to do so, it can be beneficial to spell out the relationships between the tables that you envisioned during the design phase.

- Click on Database Tools > Relationships.

- From the Show Table dialog double-click on each of the tables to add them to the Relationships layout.

- Arrange the tables so that the Albums table appears between the Artists and Labels tables.

- Like you did when building queries earlier, click on the Artist_ID field in the Artists table and drag it to the Artist_ID field in the Albums table. You should see a dialog titled "Edit Relationships".

- Check each of the three boxes: Enforce Referential Integrity, Cascade Update Related Fields, and Cascade Delete Related Records.

Checking these boxes tells Access that you want its help in keeping the values in the Artist_ID field in sync. For example:- Let's say your Artists table has only three records (with Artist_ID values of 1, 2 and 3). If you attempted to add a record to the Albums table with an Artist_ID of 4, Access would stop you, since that value does not exist in the related Artists table.

- If you change an Artist_ID value in the Artists table, all records in the Albums table will automatically have their Artist_ID values updated to match.

- If you delete an artist from the Artists table, the related records in the Albums table will be deleted as well.

- Click the Create button to establish the relationship between Artists and Albums.

- Follow the same steps to establish a relationship between Albums and Labels based on the Label_ID field.

- Save and Close the Relationships window.

With your database design implemented, you are now ready to add records to your tables.

Adding Records to a Table

Adding Records to a Table

Records may be added to tables in three ways: manually through the table GUI, using an SQL INSERT query to add a single record, and using an INSERT query to add multiple records in bulk.

A. Adding records manually

This method is by far the easiest, but also the most tedious. It is most suitable for populating small tables.

- Double-click on the Labels table in the object list on the left side of the window to open it in Datasheet mode.

- Try entering a value in the Label_ID field. You're not able to because that field was assigned a data type of AutoNumber.

- Tab to the Label_Name field, and enter Capitol. While typing, note the pencil icon in the gray selection box to the left of the record. This indicates that you are editing the record (it may be yellow depending upon the version of Access).

- Hit Tab or Enter. The pencil icon disappears, indicating the edit is complete, and the cursor moves to a new empty record.

- Add more records to your table so that it looks as follows:

Table 2.11: Label ID and Name Label_ID Label_Name 1 Capitol 2 Pye 3 Columbia 4 Track 5 Brunswick 6 Parlorphone 7 Apple Note:

When a record is deleted from a table with an AutoNumber field like this one, the AutoNumber value that had been associated with that record is gone forever. For example, imagine that the Columbia Records row was deleted from the table. The next new record would take on a Label_ID of 8. The Label_ID of 3 has already been used and will not be used again.

B. Adding a single record using an INSERT query

- Click the Create tab, then Query Design.

- Click Close to dismiss the Show Table dialog.

By default, queries in Access are of the Select type. However, you'll note that other query types are available including Append, Update, and Delete. We will work with the Append type in a moment and check out the Update and Delete types later. - Click on the Append button. You'll be prompted to specify which table you want to append to.

- Choose Artists from the drop-down list, and click OK. Note that an Append To row is added to the design grid.

- In the Field area of the design grid, enter "The Beatles" and tab out of that cell.

- Move your cursor to the Append To cell and select Artist_Name from the drop-down list.

- Move to the next column, and enter 1957 into the Field cell.

- Select Year_Begin as the Append To field for this column.

- Move to the next column, and enter 1970 into the Field cell.

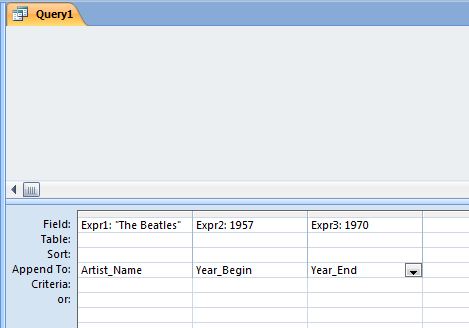

- Select Year_End as the Append To field for this column. Your query design grid should look like this:

Figure 2.3: MS_Access query design grid GUI showing the layout that will result in appending one record to the database table. Note that an MS-Access Append query is actually translated into an SQL Insert query.

Figure 2.3: MS_Access query design grid GUI showing the layout that will result in appending one record to the database table. Note that an MS-Access Append query is actually translated into an SQL Insert query. - Click the Run button to execute this query. Answer Yes when asked whether you really want to append the record to the table.

- Open the Artists table, and confirm that a record for The Beatles exists.

At this point, you may be wondering why we used an Append query when I said we'd use an Insert query. - Go back to the design view window of your query, select View > SQL View, and note that what Access calls an Append query in its GUI is actually an INSERT query when translated to SQL:

INSERT INTO Artists ( Artist_Name, Year_Begin, Year_End ) SELECT "The Beatles" AS Expr1, 1957 AS Expr2, 1970 AS Expr3;

Check the Artists table to verify the addition of the new record. If your Artists table is still open, in the Home ribbon you can select Refresh then Refresh All.

If you're wondering why we bothered to write this query when we could have just entered the data directly into the table, you're right. That would be a waste of time. However, such statements are critical in scenarios in which records need to be added to tables programmatically. For example, if you've ever created a new account through some websites like amazon.com, it is likely that your form entries were committed to the site's database using an INSERT query like this one.

It is important to note that the syntax generated by the Access GUI does not follow standard SQL. Let's modify this SQL so that it follows the standard (and adds another artist to the table). - Change the query so that it reads as follows:

INSERT INTO Artists ( Artist_Name, Year_Begin, Year_End ) VALUES ( "The Kinks", 1964, 1996 );

- Click the Run button to execute the query.

- Finally, modify the query to add one more artist (and note the removal of the Year_End column and value, since The Who are considered an active band):

INSERT INTO Artists ( Artist_Name, Year_Begin ) VALUES ( "The Who", 1964 );

- Execute the query, then close it without saving.

C. Adding records from another table in bulk

The last method of adding records to a table is perhaps the most common one, the bulk insertion of records that are already in some other digital form. To enable you to see how this method works in Access, I've created a comma-delimited text file of albums released in the 1960s by the three artists above.

- Download the Albums.txt file [10] and save it to your machine.

- In Access, go to External Data > Text File. (Note the other choices available, including Access and Excel.)

- Browse to the text file on your machine and select it.

Note the three options available to you: importing to a new table, appending to an existing table and linking to the external table. The second option would make sense in this situation if the column headings in the text file matched those in the target Access table. They do not, so we will import to a new table and build a query to perform the append. - Confirm that the Import option is selected, and click OK.

- The first panel of the Import Text Wizard prompts you to specify whether your text is Delimited or Fixed Width. Confirm that Delimited is selected, and click Next.

- The next panel should automatically have Comma selected as the delimiter and double quotes as the Text Qualifier (i.e., the character used to enclose text strings). Check the First Row Contains Field Names checkbox, and click Next.