Lessons

Lesson 1: Introduction to Remote Sensing

Lesson 1 Introduction

A brief history of remote sensing as a governmental activity, a commercial industry, and an academic field provides the student with a perspective on the development of the technology and emergence of remote sensing applications. Accounts of remote sensing history generally begin in the 1800s, following the development of photography. Many of the early advancements of remote sensing can be tied to military applications, which continue to drive most of remote sensing technology development even today. However, after WWII, the use of remote sensing in science and infrastructure extended its reach into many areas of academia and civilian life. The recent revolution of geospatial technology and applications, linked to the explosion of computing and internet access, brings remote sensing technology and applications into the everyday lives of most people on the planet. One could argue that there are very few aspects of life that are not touched in some way by this powerful and enabling way of viewing, understanding, and managing our world.

This lesson will also introduce the basic scientific principles of light and its interaction with matter that makes remote sensing possible. This foundation will be drawn upon throughout the course to explain how remotely sensed imagery is acquired, processed, and analyzed. Many new terms will be introduced and carefully defined, in this lesson and throughout the rest of the course. While some of these terms may seem familiar, many are often misused in casual communication. It is a responsibility of the remote sensing or geospatial professional to inform and guide those with whom he/she comes in contact, through correct and precise use of these terms.

Throughout this course, you will be guided to many external resources that you can use to complement the course material and continue to refer to after completing the course.

Lesson Objectives

At the end of this lesson, you will be able to:

- describe key milestones in the historical development of remote sensing;

- discuss fundamental principles of electromagnetic radiation that are the basis for remote sensing;

- summarize the role of remote sensing as a fundamental element of GIS analysis.

Questions?

If you have any questions now or at any point during this week, please feel free to post them to the Lesson 1 Questions and Comments Discussion Forum in Canvas.

History of Remote Sensing and Photogrammetry

In Chapter 1 of the textbook, Introduction to Remote Sensing, Jim Campbell provides a narrative of the evolution of remote sensing and photogrammetry over the past two centuries. Some of this history is brought to life in a series of short videos, produced by ASPRS [1].

Watch this video about Aerial Survey Pioneers (1:47).

Video: Aerial Survey Pioneers (High Def) (1:47)

JIM LIVING: Back in 1923, Talbert Abrams bought an airplane, and he was barnstorming. And he found out that people would pay him more to take pictures of their farms and buildings than they would to go for a ride in the airplane. It was people like him that started this whole industry.

MO WEINBERG: Mapmakers, topographers, from the four different sections of the country would bring about a revolution in map making.

MORRIS THOMPSON: They were in an airplane, open cockpit, no shelter. After you get the aerial photos, what do you do with them?

MARILYN O'CUILINN: They saw the possibilities of mapping from the air.

WILLIAM A. RADLINKSKI: Most of the farmers didn't know how many acres they had, so we would determine which acreage was tillable. And that was called mapping.

ALFRED O. QUINN: I tried to get mathematics with Professor Earl Church. But the only course that I could get with him was once known as photogrammetry.

DON LAUER: I had a plan to play for the Army All-Star Basketball team. But Bob had a vision. He convinced me to go to graduate school, profound experience.

MORRIS THOMPSON: I only know of one person around here who would still remember so much detail. And he wasn't there when it began, and I was.

Watch this video about geospatial intelligence in WWII (1:58).

Video: Geospatial Intelligence in WWII (High Def) (1:58)

MARILYN O'CUILINN: Photogrammetry started with the military.

On Screen Text: “The nation with the best photo reconnaissance will win the next war.” General Werner von Fritsch, Chief of the German General Staff, 1938

[Dramatic music]

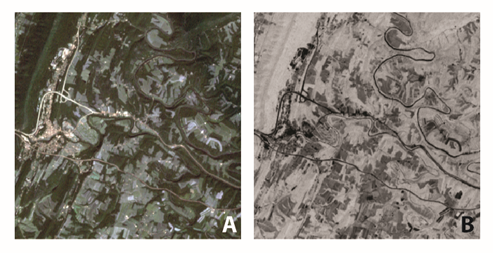

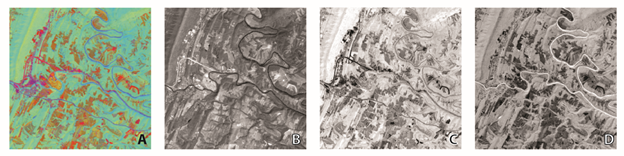

On Screen Text: Case Study: Chattanooga, TN -1939 TVA-USGS team during WWII

MORRIS THOMPSON: We had a combined TVA-USGS force. When the war started in Europe, we got to wondering about who is next.

On Screen Text: a shift in focus…..

MO WEINBERG: Map making was turned over to the Army map service, so that we would map for them.

MORRIS THOMPSON: And where do you suppose we started? China? Japan? Where? Upstate New York.

On Screen Text: defending our coasts

VIRGINIA LONG: They thought the Germans would invade the United States by way of the Saint Lawrence Waterway.

MORRIS THOMPSON: But that's just the beginning.

On Screen Text: mapping enemy territory

On Screen Text: Army Air Corps - 9th Army Topographic Engineer Corps Unit

WILLIAM A. RADLINSKI: We landed in Normandy and went all the way across Europe, going with the front line. We provided topographic maps for the infantry and to the artillery.

On Screen Text: Photo Interpretation U.S. Navy, Pacific Fleet Amphibious Force

ALFRED O. QUINN: After Pearl Harbor, we computed targets for the Naval bombardment prior to an invasion, so that ships could fire at coordinates.

On Screen Text: Army Air Forces B29 Guam Missions

FREDERICK DOYLE: We made target charts and bomb damage assessment charts for the B29 raids on Japan.

On Screen Text: August, 1945

MORRIS THOMPSON: I remember there was one place we were mapping, and then came the news that there's nothing left of it. We were mapping Hiroshima.

On Screen Text: the TVA-USGS effort alone: 500 men and women, 11 countries mapped, 1.5 million hours worked

ALFRED O. QUINN: Without maps, we'd have been lost in WWII.

Watch this video about the role of women in the history of photogrammetry (1:38).

Video: The Role of Women in the History of Photogrammetry (High Def) (1:38)

FRANKLIN D. ROOSEVELT: I asked that the Congress declare a state of war.

ALFRED O. QUINN: A lot of our men, of course, were drafted. And so, we went recruiting young ladies.

GWENDOLYN GILL: Mr. Quinn asked if I was interested in the job. I accepted it immediately. I was real glad to get a job at TVA.

VIRGINIA LONG: I was fresh out of college when I was assigned to maps and surveys.

MARGARET DELAYNEY: We did parcels of property, land, somewhere. It was hush hush because we weren't supposed to know what this was. LOUISE EDWARDS: But when you saw a map that you were compiling in the newspapers, you had a pretty good idea.

MORRIS THOMPSON: They took to it. They learned quickly. Their drafting was nicer than ours.

ALFRED O. QUINN: They had greater patience than most men. They were well adapted to the photogrammetric equipment.

SID IZLAR: And I said, I'd love to get in the multiplex because all these girls they were hiring were making more money than I was.

MARILYN O'CUILINN: In ASPRS, being a woman was not much of a barrier. If you look around now at the USGS and the civilian side, it's truly becoming one world. And this is part of it.

Watch this video about the evolution of analog to digital mapping (2:10).

Video: Evolution of Analog to Digital Mapping (High Def) (2:10)

[MUSIC PLAYING]

ROY MULLEN: Early map preparation was all by foot.

WILLIAM RADLINSKI: You had to go and walk the land.

ALFRED QUINN: I'd never heard of photogrammetry before.

MORRIS THOMPSON: What is a stereo plotting instrument?

VARIOUS VOICES: Oh, there were many things state-of-the-art cameras, various multiplex systems, the Fairchild system,

FREDERICK DOYLE: The Kelsh plotter projector, photographed from the glass plate. We went from optical systems to mechanical systems.

MARILYN O'CUILINN: With every generation, the resolution got better. And the geometric fidelity of the lenses got better.

WILLIAM RADLINSKI: We got into space imagery early on.

MARILYN O'CUILINN: There were the analytical stereoplotters.

JACK DANGERMOND: Computers came along as a tool for design.

MARILYN O'CUILINN: And then forward image motion compensation. We went to softcopy photogrammetry.

ROY MULLEN: And digital orthophotography.

MARILYN O'CUILINN: And then, of course, the digital cameras.

JACK DANGERMOND: This database wasn't about money or about people. It was about geographic information systems.

ROY MULLEN: Because of the ability to digitize directly, photogrammetry, as we knew it, reached its apex of evolution.

MARILYN O'CUILINN: 20 years ago, you would've seen monster pieces of metal.

WILLIAM RADLINSKI: In the world today, it's almost obsolete. Anybody can get a map just by turning on his computer and Googling it.

ROY MULLEN: You put it into your GPS systems that use a base map. It's universally used throughout the world now.

MARILYN O'CUILINN: And the appetite for that sort of digital data is almost insatiable.

[MUSIC PLAYING]

Watch this video about photogrammetry in space exploration (2:00).

Video: Photogrammetry in Space Exploration (High Def) (2:00)

FREDERICK DOYLE: Nowadays, sophisticated programs are used to make the maps. It's not the challenge it was when I was working on it. We were making the maps of the moon. I was in charge of the cameras on the Apollo spacecraft. We established 14,000 points. We had used photographs from four different missions. It was an enormous job. We made a whole series of maps for the ground crews-- what they would see at each station, digital maps and perspective views-- a very fancy package.

GENE CERNAN: Oh, shoot. Oh, you won't believe it.

FREDERICK DOYLE: But when they unloaded the moving vehicle, they broke one of the fenders.

SPEAKER: Oh, there goes a fender.

FREDERICK DOYLE: They decided to patch the fender.

HOUSTON: OK, we'll need to tape it.

FREDERICK DOYLE: They took our set of maps and duct-taped.

GENE CERNAN: How do you want those things taped together?

HOUSTON: Allow about an inch of overlap and tape both sides of them.

FREDERICK DOYLE: So they didn't use them at all on the terrain. When we got back, they said, well, those were the most valuable maps we had ever made.

ROY MULLEN: There may be one map that saves an untold number of lives. It's intangible what value that is. But think about what was avoided by having had that map to begin with.

ALFRED O. QUINN: The use of digital map data in all kinds of investigations, plans, developments-- I think the whole thing is going to continue.

MARILYN O'CUILINN: It's very exciting to watch the progression because it does touch so many aspects of our lives.

As you continue on in this course and in your further studies, bear in mind that the early innovators of remote sensing and photogrammetry did not have access to the sophisticated electronics and computing devices that we take for granted today! In fact, until very recently, it was often difficult to convince decision-makers and managers that imagery could produce information as accurate (or even more accurate) than data collected on the ground.

Today, almost everyone with a computer, a television, or a cell phone is familiar with the common products of remote sensing and digital mapping. The challenge today is not seeking acceptance for these technologies as much as it is making end users and decision makers aware of certain limitations and uncertainties inherent in these products. Whereas production of an image base map used to require an expert and very specialized equipment, today it can be done with inexpensive software on a home computer. It is quite easy to make a very accurate, useful product; it is just as easy to make a very inaccurate one. Professional expertise and experience are still needed to ensure that image base maps and elevation models meet target specifications and that they can be used appropriately in a broad range of applications.

Electromagnetic Radiation

Chapter 2 of Campbell (2007) delves into the scientific principles of electromagnetic radiation that are fundamental to remote sensing. If you have studied an engineering or physical science discipline, much of this may be familiar to you. You will see a few equations in this chapter, and while you won't need to memorize or make computations with these equations, it is important to gain a conceptual understanding of the physical relationships represented.

Electromagnetic energy is described in terms of

- wavelength (the distance between successive wave crests),

- frequency (the number of wave crests passing a fixed point in a given period of time), and

- amplitude (the height of each wave peak).

These important terms are further explained in the course textbook. The visible and infrared portions of the electromagnetic spectrum are the most important for the type of remote sensing discussed in this course. Figure 1.01 below illustrates the relationship between named colors and wavelength/frequency bands; it will be a useful reference.

| Color | Angstrom (A) | Nanometer (nm) | Micrometer (µm) | Frequency(hz x 1014) |

|---|---|---|---|---|

| Ultraviolet, sw | 2,537 | 254 | 0.254 | 11.82 |

| Ultraviolet, lw | 3,660 | 366 | 0.366 | 8.19 |

| Violet (limit) | 4,000 | 400 | 0.40 | 7.50 |

| Blue | 4,500 | 450 | 0.45 | 6.66 |

| Green | 5,000 | 500 | 0.50 | 6.00 |

| Green | 5,500 | 550 | 0.55 | 5.45 |

| Yellow | 5,800 | 580 | 0.58 | 5.17 |

| Orange | 6,000 | 600 | 0.60 | 5.00 |

| Red | 6,500 | 650 | 0.65 | 4.62 |

| Red (limit) | 7,000 | 700 | 0.70 | 4.29 |

| Infrared, near | 10,000 | 1,000 | 1.00 | 3.00 |

| Infrared, far | 300,000 | 30,000 | 30.00 | 0.10 |

Understanding the interactions of electromagnetic energy with the atmosphere and the Earth's surface is critical to the interpretation and analysis of remotely sensed imagery. Radiation is scattered, refracted, and absorbed by the atmosphere, and these effects must be accounted for and corrected in order to determine what is happening on the ground. The Earth's surface can reflect, absorb, transmit, and emit electromagnetic energy and, in fact, is doing all of these at the same time, in varying fractions across the entire spectrum, as a function of wavelength. The spectral signature that recorded for each pixel in a remotely sensed image is unique, based on the characteristics of the target surface and the effects of the intervening atmosphere. In remote sensing analysis, similarities and differences among the spectral signatures of individual pixels are used to establish a set of more general classes that describe the landscape or help identify objects of particular interest in a scene.

Key Definitions

Sensor Footprint

A remote sensing system comprises two basic components: a sensor and a platform. The sensor is the instrument used to record data; a platform is the vehicle used to deploy the sensor. Lesson 2 will discuss imaging sensors and platforms in much greater detail. Every sensor is designed with a unique field of view which defines the size of the area instantaneously imaged on the ground. The sensor field of view combined with the height of the sensor platform above the ground determines the sensor footprint. A sensor with a very wide field of view on a high-altitude platform may have an instantaneous footprint of hundreds of square kilometers; a sensor with a narrow field of view at a lower altitude may have an instantaneous footprint of ten of square kilometers.

Resolution

Resolution, as a general term, refers to the degree of fineness with which an image can be produced and the degree of detail that can be discerned. In remote sensing, there are four relevant types of resolution:

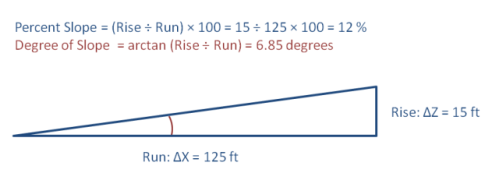

Spatial resolution is a measure of the finest detail distinguishable in an image. Spatial resolution depends on the sensor design and is often inversely related to the size of the image footprint. Sensors with very large footprints tend to have low spatial resolution; and sensors with very high spatial resolution tend to have small footprints. Spatial resolution will determine whether individual houses can be distinguished in a scene and to what degree detailed features of the house or damage to the house can be seen. For imaging satellites of potential interest to the housing inspection program, spatial resolution varies from tens of kilometers per pixel to sub-meter. Spatial resolution is closely tied to Ground Sample Distance (GSD) which is the nominal dimension of a single side of a square pixel in ground units.

Temporal resolution refers to the frequency at which data are captured for a specific place on the earth. The more frequently data they are captured by a particular sensor, the better, or finer, is the temporal resolution of that sensor. Temporal resolution is often quoted as a “revisit time” or “repeat cycle.” Temporal resolution is relevant when using imagery or elevations datasets captured successively over time to detect changes to the landscape. For sun-synchronous satellites of interest to the housing inspection program, revisit times vary from about 2 weeks to 1 day.

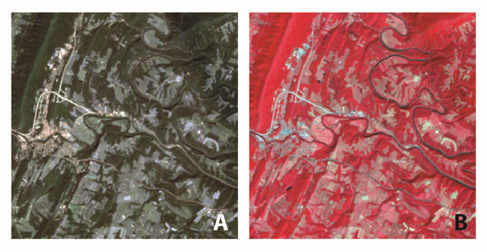

Spectral resolution describes the way an optical sensor responds to various wavelengths of light. High spectral resolution means that the sensor distinguishes between very narrow bands of wavelengths; a “hyperspectral” sensor can discern and distinguish between many shades of a color, recording many gradations of color across the infrared, visible, and ultraviolet wavelengths. Low spectral resolution means the sensor records the energy in a wide band of wavelengths as a single measurement; the most common “multispectral” sensors divide the electromagnetic spectrum from infrared to visible wavelengths into four generalized bands: infrared, red, green, and blue. The way a particular object or surface reflects incoming light can be characterized as a spectral signature and can be used to classify objects or surfaces within a remotely sensed scene. For example, an asphalt parking lot, a corn field, and a stand of pine trees will have all have different spectral signatures. Automated techniques can be used to separate various types of objects within a scene; these techniques will be discussed in Section III below.

Radiometric resolution refers to the ability of a sensor to detect differences in energy magnitude. Sensors with low radiometric resolution are able to detect only relatively large differences in the amount of energy received; sensors with high radiometric resolution are able to detect relatively small differences. The range of possible values of brightness that can be assigned to a pixel in an image file or band is determined by the file format and is also related to radiometric resolution. In an 8-bit image, values can range from 0 - 255; in a 12-bit image, values can range from 0 - 4096; in a 16-bit image, values can range from 0 - 65536; and so on.

The Role of Humans

Chapter 1 of Campbell (2007) defines key aspects of remote sensing data collection and analysis. It also defines a number of key terms that you will hear over and over again throughout this course. Campbell discusses the evolution of government and commercial remote sensing programs, and how remote sensing supports national and international earth resource monitoring. This introduction sets the contextual stage for the highly technical material to come. It is important to understand the motivation behind technology development, and to see how technology contributes to the broader societal, political, and economic framework of geospatial systems, science, and intelligence, be the application to military, business, social, or environmental intelligence.

In another seminal remote sensing textbook, Remote Sensing of the Environment, cited in the course syllabus as an additional reference, John Jensen describes factors that distinguish a superior image analyst. He says, "It is a fact that some image analysts are superior to other image analysts because they: 1) understand the scientific principles better, 2) are more widely traveled and have seen many landscape objects and geographic areas, and/or 3) they can synthesize scientific principles and real-world knowledge to reach logical and correct conclusions. (Jensen, 2007)

Jensen goes on to describe the role of the human being in remote sensing process.

"Human beings select the most appropriate remote sensing system to collect the data, specify the various resolutions of the remote sensor data, calibrate the sensor, select the platform that will carry the sensor, determine when the data will be collected, and specify how the data are processed."

This statement succinctly expresses our goals, as instructors, for developing a remote sensing curriculum within a broader geospatial program. It has been our experience, working with local, state, and federal government agencies, in engineering, environmental, and disaster response and recovery applications, that more expertise in the application of remote sensing is needed. By expertise, we mean a solid, working knowledge of the fundamentals, and use of those fundamentals in combination with good problem-solving and critical thinking skills. In today's world, there are a small number of professionals at a "very expert" level with a particular sensor or application, but there is a shortage in the workforce of people who are knowledgeable at a basic or intermediate level over the broad scope of remote sensing.

As remotely sensed data reaches the general public through tools such as Google Earth, in-car navigation systems, and other web-based and consumer-level technologies, it becomes increasingly important for the basic principles of remote sensing and mapping to become common knowledge. Misinterpretation and ill-informed decision-making can easily occur if the individuals involved do not understand the operating principles of the remote sensing system used to create the data, which is in turn used to derive information. After taking this course, you should have acquired enough knowledge to understand the purpose and scope for each of the activities set forth by Jensen above; you should "know what you don't know," and, when you don't know, you should be armed with the basic concepts and vocabulary that will allow you ask appropriate questions, to seek out the right expert, and to communicate effectively with that person.

Professional Development

The geospatial professional has grown sufficiently to support a large number of professional societies and associations. Some have a public sector focus, some an academic/research focus, and others a commercial focus; they may also be organized around particular applications or disciplines. Most of these organizations encompass remote sensing in one form or another, especially as a source of data or an analysis tool. However, few of these organizations focus on the technology of remote sensing or photogrammetry itself: the design and deployment of sensors, processing of sensor data into usable GIS products, development of tools for large-scale production and analysis of digital imagery and elevation data, etc. The American Society for Photogrammetry and Remote Sensing (ASPRS) and the International Society for Photogrammetry and Remote Sensing (ISPRS) are the two most important sources for information and professional development in these specific areas of interest.

American Society for Photogrammetry and Remote Sensing [1] (ASPRS)

ASPRS was founded in 1934 by a small group of like-minded pioneers in a unique and emerging field. Today, over 7000 individuals worldwide are members. Students in this course may have joined ASPRS to get a discount on the course textbook. There are many other ways that ASPRS membership can support professional development and career advancement.

Watch the following video about ASPRS Membership (1:50)

Video: ASPRS Membership (1:50)

[MUSIC PLAYING] Think of how maps were made 75 years ago. Here's this oblique camera, shooting through the door. And you think how maps are made today. ASPRS has been able to evolve with monumental changes in technology over time. ASPRS is a wonderful forum where great minds all come together. When I was coming up as a graduate student, I would just be in awe of all the people who wrote your textbooks who were here, and you'd see them in real life. It's a very good network to know people who are doing something in photogrammetry and remote sensing. It usually falls upon-- Precisely right. I learned from everybody here every year, and it was a tremendous help. I got involved in two different assistantships through the ASPRS. Definitely getting involved in the professional societies really helped me out. It's rewarding to turn on the news and to recognize that your profession has a huge impact on the lives, on the health and the safety of people around the world. In today's world, we've got a lot of followers. But I believe an organization like this allows you to become a leader. [MUSIC PLAYING]

International Society for Photogrammetry and Remote Sensing [8] (ISPRS)

ISPRS was founded in 1910, and is devoted to the development of international cooperation for the advancement of photogrammetry and remote sensing and their applications. National organizations, such as ASPRS, are the voting members; individuals can take part in activities, conferences, technical Working Groups, and Commissions through affiliation with one of the Member organizations. The ISPRS Congress, an international conference dedicated to photogrammetry and remote sensing, takes place every four years and is hosted by the home country of the elected President.

Lesson 2: Sensors, Platforms, and Georeferencing

Lesson 2 Introduction

Remote sensing can be done from space (using satellite platforms), from the air (using aircraft), and from the ground (using static and vehicle-based systems). The same type of sensor, such as a multispectral digital frame camera, may be deployed on all three types of platforms for different applications. Each type of platform has unique advantages and disadvantages in terms of spatial coverage, access, and flexibility. A student who completes this course should be able to identify the appropriate sensor platform combination for a variety of common GIS applications.

Lesson 2 introduces the most common types of sensors used for mapping and image analysis. These include aerial cameras, film and digital, as well as sensors found on commercial satellites. New cameras and sensors are being introduced every year, as the remote sensing industry grows and technology advances. The principles of sensor design introduced in this lesson will apply to new as well as older instruments used for image data capture. This course will focus on optical sensors, those which passively record reflected and radiant energy in the visible and near-visible wavelength bands of the electromagnetic spectrum. Other courses in this curriculum delve into both active sensors (such as lidar and radar) and passive sensors that operate outside the optical portion of the spectrum (thermal and passive microwave).

Digital images are clearly very useful - a picture is worth a thousand words - in many applications, however, the usefulness is greatly enhanced when the image is accurately georeferenced. The ability to locate objects and make measurements makes almost every remotely sensed image far more useful. Georeferencing of images is accomplished using photogrammetric methods, such as aerotriangulation (A/T) or Structure from Motion (SfM). Geometric distortions due to the sensor optics, atmosphere and earth curvature, perspective, and terrain displacement must all be taken in account. Furthermore, a reference system must be established in order to assign real-world coordinates to pixels or features in the image. Georeferencing is relatively simple in concept, but quickly becomes more complex in practice due to the intricacies of both technology and coordinate systems.

Lesson Objectives

At the end of this lesson, you will be able to:

- describe various types of remote sensing instruments used to create base map imagery and elevation data, including film cameras, digital multispectral and hyperspectral sensors, lidar and radar;

- describe common platforms for deployment of sensors, including fixed-wing and rotary-wing aircraft, satellites, and ground-based vehicles;

- identify appropriate sensor/platform combinations for a variety of geospatial applications;

- describe technologies and methods used to georeference remotely sensed data;

- explain the difference between a datum, coordinate system, and map projection;

- identify primary coordinate systems used for imagery and elevation data in the conterminous United States;

- identify metadata fields that describe georeferencing in a variety of image and elevation data sets acquired from public domain sources;

- import imagery and elevation data into ArcGIS in the correct geographic location, identifying and compensating for missing or incorrect information in the provided metadata.

Questions?

If you have any questions now or at any point during this week, please feel free to post them to the Lesson 2 Questions and Comments Discussion Forum in Canvas.

Remote Sensing Platforms

Using the broadest definition of remote sensing, there are innumerable types of platforms upon which to deploy an instrument. Discussion in this course will be limited to the commercial platforms and sensors most commonly used in mapping and GIS applications. Satellites and aircraft collect the majority of base map data and imagery used in GIS; the sensors typically deployed on these platforms include film and digital cameras, light-detection and ranging (lidar) systems, synthetic aperture radar (SAR) systems, multispectral and hyperspectral scanners. Many of these instruments can also be mounted on land-based platforms, such as vans, trucks, tractors, and tanks. In the future, it is likely that a significant percentage of GIS and mapping data will originate from land-based sources; however, due to time constraints, we will only cover satellite and aircraft platforms in this course.

Since the launch of the first Landsat in 1972, satellite-based remote sensing and mapping has grown into an international commercial industry. Interestingly enough, even as more satellites are launched, the demand for data acquired from airborne platforms continues to grow. The historic and growth trends for both airborne and spaceborne remote sensing are well-documented in the ASPRS Ten-Year Industry Forecast [9]![]() . The well-versed geospatial intelligence professional should be able to discuss the advantages and disadvantages for each type of platform. He/she should also be able to recommend the appropriate data acquisition platform for a particular application and problem set. While the number of satellite platforms is quite low compared to the number of airborne platforms, the optical capabilities of satellite imaging sensors are approaching those of airborne digital cameras. However, there will always be important differences, strictly related to characteristics of the platform, in the effectiveness of satellites and aircraft to acquire remote sensing data.

. The well-versed geospatial intelligence professional should be able to discuss the advantages and disadvantages for each type of platform. He/she should also be able to recommend the appropriate data acquisition platform for a particular application and problem set. While the number of satellite platforms is quite low compared to the number of airborne platforms, the optical capabilities of satellite imaging sensors are approaching those of airborne digital cameras. However, there will always be important differences, strictly related to characteristics of the platform, in the effectiveness of satellites and aircraft to acquire remote sensing data.

One obvious advantage satellites have over aircraft is global accessibility; there are numerous governmental restrictions that deny access to airspace oversensitive areas or over foreign countries. Satellite orbits are not subject to these restrictions, although there may well be legal agreements to limit distribution of imagery over particular areas.

The design of a sensor destined for a satellite platform begins many years before launch and cannot be easily changed to reflect advances in technology that may evolve during the interim period. While all systems are rigorously tested before launch, there is always the possibility that one or more will fail after the spacecraft reaches orbit. The sensor could be working perfectly, but a component of the spacecraft bus (attitude determination system, power subsystem, temperature control system, or communications system) could fail, rendering a very expensive sensor effectively useless. The financial risk involved in building and operating a satellite sensor and platform is considerable, presenting a significant obstacle to the commercialization of space-based remote sensing.

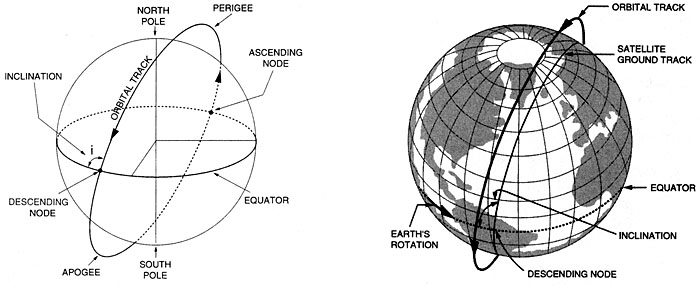

Satellites are placed at various heights and orbits to achieve desired coverage of the Earth's surface [11]. When the orbital speed exactly matches that of the Earth's rotation, the satellite stays above the same point at all times, in a geostationary [12] orbit. This is useful for communications and weather monitoring satellites Satellite platforms for electro-optical (E/O) imaging systems are usually placed in a sun-synchronous [13], low-earth orbit (LEO) so that images of a given place are always acquired at the same local time (Figure 2.02). The revisit time for a particular location is a function of the individual platform and sensor, but generally, it is on the order of several days to several weeks. While orbits are optimized for time of day, the satellite track may not always coincide with cloud-free conditions or specific vegetation conditions of interest to the end-user of the imagery. Therefore, it is not a given that usable imagery will be collected on every sensor pass over a given site

Aircraft often have a definite advantage because of their mobilization flexibility. They can be deployed wherever and whenever weather conditions are favorable. Clouds often appear and dissipate over a target over a period of several hours during a given day. Aircraft on site can respond with a moment's notice to take advantage of clear conditions, while satellites are locked into a schedule dictated by orbital parameters. Aircraft can also be deployed in small or large numbers, making it possible to collect imagery seamlessly over an entire county or state in a matter of days or weeks simply by having lots of planes in the air at the same time.

Aircraft platforms range from the very small, slow, and low flying (Figure 2.03), to twin-engine turboprop and small jets capable of flying at altitudes up to 35,000 feet. Unmanned platforms (UAVs) are becoming increasingly important, particularly in military and emergency response applications, both international and domestic. Flying height, airspeed, and range are critical factors in choosing an appropriate remote sensing platform, and you will learn about this in more detail later in the lesson. Modifications to the fuselage and power system to accommodate a remote sensing instrument and data storage system are often far more expensive than the cost of the aircraft itself. While the planes themselves are fairly common, choosing the right aircraft to invest in requires a firm understanding of the applications for which that aircraft is likely to be used over its lifetime.

The scale and footprint of an aerial image is determined by the distance of the sensor from the ground; this distance is commonly referred to as the altitude above the mean terrain (AMT). The operating ceiling for an aircraft is defined in terms of altitude above mean sea level. It is important to remember this distinction when planning for a project in mountainous terrain. For example, the National Aerial Photography Program [14] (NAPP) and the National Agricultural Imagery Program [15] (NAIP) both call for imagery to be acquired from 20,000 feet AMT. In the western United States, this often requires flying much higher than 20,000 feet above mean sea level. A pressurized platform such as the Cessna Conquest (Figure 2.04) would be suitable for meeting these requirements.

With airborne systems, the flying height is determined on a project-by-project basis depending on the requirements for spatial resolution, GSD, and accuracy. The altitude of a satellite platform is fixed by the orbital considerations described above; scale and resolution of the imagery are determined by the sensor design. Medium-resolution satellites, such as Landsat, and high-resolution satellites, such as GeoEye, orbit at nearly the same altitude, but collect imagery at very different ground sample distance (GSD).

1 Sun-Synchronous Orbit. (2007, November 27). On Wikipedia, The free encyclopedia. Retrieved December 4, 2007, from http://en.wikipedia.org/wiki/Sun-synchronous_orbit [13]

Optical Sensors

In this lesson, you will be introduced to three types of optical sensors: airborne film mapping cameras, airborne digital mapping cameras, and satellite imaging systems. Each has particular characteristics, advantages, and disadvantages, but the principles of image acquisition and processing are largely the same, regardless of the sensor type. Lesson 3 will cover photogrammetric processing of data from these sensors to produce orthophotos [16] 1 and terrain models.

The size, or scale, of objects in a remotely sensed image varies with terrain elevation and with the tilt of the sensor with respect to the ground, as shown in Figure 2.05. Accurate measurements cannot be made from an image without rectification, the process of removing tilt and relief displacement. In order to use a rectified image as a map, it must also be georeferenced to a ground coordinate system.

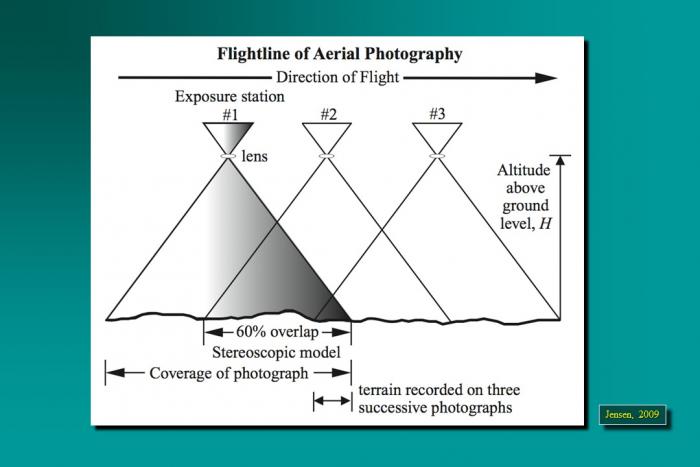

If remotely sensed images are acquired such that there is overlap between them, then objects can be seen from multiple perspectives, creating a stereoscopic view, or stereomodel. A familiar application of this principle is the View-Master [17] 2 toy many of us played with as children. The apparent shift of an object against a background due to a change in the observer's position is called parallax [18] 3. Following the same principle as depth perception in human binocular vision, heights of objects and distances between them can be measured precisely from the degree of parallax in image space if the overlapping photos can be properly oriented with respect to each other; in other words, if the relative orientation is known (Figure 2.06).

Airborne film cameras have been in use for decades. Black and white (panchromatic), natural color, and false color infrared aerial film can be chosen based on the intended use of the imagery; panchromatic provides the sharpest detail for precision mapping; natural color is the most popular for interpretation and general viewing; false color infrared is used for environmental applications. High-precision manufacturing of camera elements such as lens, body, and focal plane; rigorous camera calibration techniques; and continuous improvements in electronic controls have resulted in a mature technology capable of producing stable, geometrically well-defined, high-accuracy image products. Lens distortion can be measured precisely and modeled; image motion compensation mechanisms remove the blur caused by aircraft motion during exposure. Aerial film is developed using chemical processes and then scanned at resolutions as high as 3,000 dots per inch. In today's photogrammetric production environment, virtually all aerotriangulation, elevation, and feature extraction are performed in an all-digital work flow.

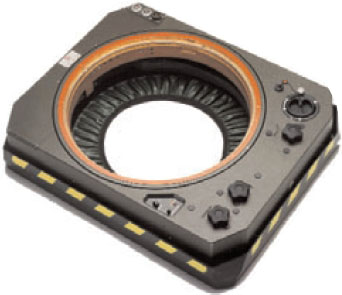

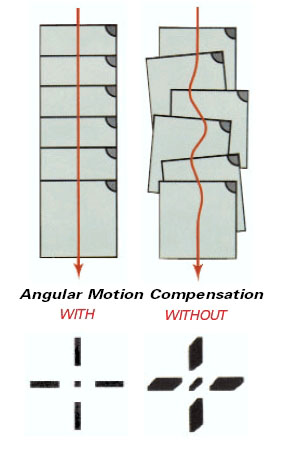

Figure 2.07 shows a Leica RC-30 aerial film camera. A hole is cut in the fuselage of the aircraft, and the camera is set in a gyro-stabilized mount as shown in Figure 2.08. This minimizes the effects of instantaneous aircraft motion and keeps the camera pointing perpendicular to the ground; the result is a sharper image and more controlled coverage from photo to photo (Figure 2.09).

In the United States, laboratory calibration of film aerial cameras is performed by the US Geological Survey, Optical Science Laboratory, in Reston, VA. If you are ever in that area, you can arrange for a visit to this unique and interesting facility; the services provided there have ensured the quality and accuracy of photogrammetrically-produced maps in the US for many decades. Most aerial survey projects require the aerial camera and lens to have been calibrated by the USGS no less than three years before the beginning of the project.

You can find a great number of USGS camera calibration certificates on the web in the Keystone Aerial Surveys Calibration Report [19] database. Camera systems are calibrated as a unique combination of camera body, lens, and film magazine. If you search the Keystone database for lens number 13366, for example, you should see the following result.

Table 3.01: Example Keystone database search results.

| Cam Num | Lens Num | CFL | Report Date | Lens Type | Platen | Report |

|---|---|---|---|---|---|---|

| 5325 | 13366 | 153.287 | 5/12/2000 | 6 | 707 | 5325-051200 |

| 5325 | 13366 | 153.301 | 10/1/2003 | 6 | 5325-707 | 5325-100103 |

| 5325 | 13366 | 153.298 | 10/30/2006 | 6 | 707 | 5325-103006 |

Lens 13366 has been calibrated 3 times, always with camera number 5325. When using the Keystone database, you can click on the report link for the most recent calibration to see the complete report. The camera is a Wild RC30 4; one of the most advanced and precise aerial film mapping cameras manufactured. Pay particular attention to the sections on

- calibrated focal length

- lens distortion

- lens resolving power (AWAR)

- principal points and fiducial coordinates.

Now look at the calibration report for lens number 13081, camera number 2961, and dated 1/5/2005. This is Wild RC10 camera manufactured in the 1980s, an early predecessor to the RC30. Compare the reports, particularly the values shown for AWAR. Mapping projects executed today often specify a minimum allowable AWAR for the camera lens.

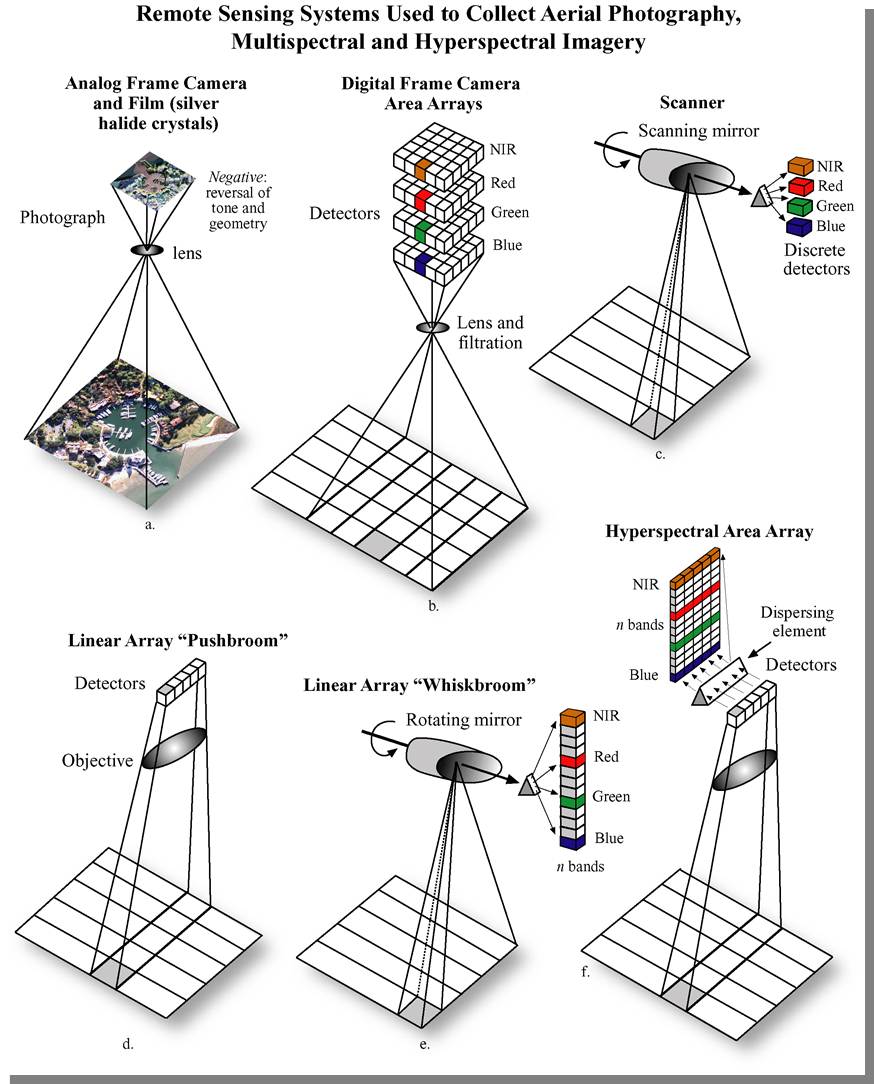

Airborne digital mapping cameras have evolved over the past few years from prototype designs to mass-produced operationally stable systems. In many aspects, they provide superior performance to film cameras, dramatically reducing production time with increased spectral and radiometric resolution. Detail in shadows can be seen and mapped more accurately. Panchromatic, red, green, blue, and infrared bands are captured simultaneously so that multiple image products can be made from a single acquisition (Figure 2.10).

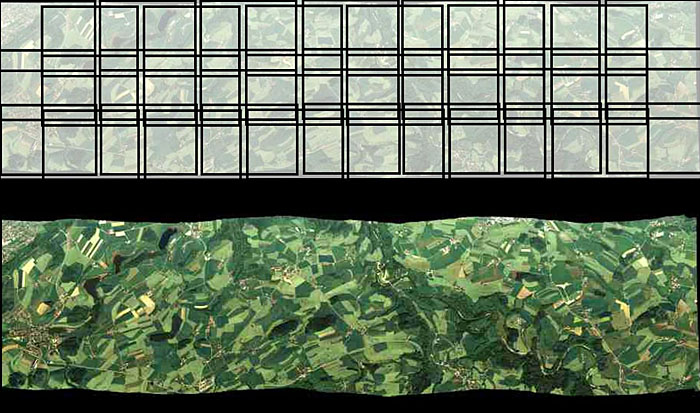

Digital camera designs are of two types: area arrays, and linear push-broom, or line-scanning, sensors. An area array camera, such as the Intergraph DMC (Figure 2.12) uses one or more two-dimensional charge-coupled device (CCD) arrays to create an image equivalent to a single frame image from an aerial film camera. The entire scene is imaged at the same moment in time, providing the same rigid geometric relationship for all points in the image with respect to each other. Coverage of an area of interest is provided with a block of overlapping photos, as shown in Figure 2.11. A push-broom sensor, such as the Leica ADS40 (Figure 2.13 and Figure 2.14), comprises multiple linear arrays, facing forward, down, and aft, which simultaneously capture along-track stereo coverage not in frame images, but in long continuous strips, or pixel carpets, made up of individual lines 1 pixel deep. Multiple linear CCD arrays capture panchromatic, near-infrared, red, green, and blue bands (Figure 2.15). Pointing the CCD arrays at aft, nadir, and forward viewing angles allows the sensor to capture multiple perspectives of the same point on the ground in a single pass, creating the stereo views required to extract elevation information (Figure 2.16).

Credit: R. Reulke, S. Becker, N. Haala, U. Tempelmann. “Determination and improvement of spatial resolution of the CCD-line-scanner system ADS40.” ISPRS Journal of Photogrammetry and Remote Sensing, Volume 60, Issue 2, 81-90. https://doi.org/10.1016/j.isprsjprs.2005.10.007 [20]

High-resolution satellite imagery is now available from a number of commercial sources, both foreign and domestic. The federal government regulates the minimum allowable GSD for commercial distribution, based largely on national security concerns; 0.6-meter GSD is currently available, with higher-resolution sensors being planned for the near future (McGlone, 2007). The image sensors are based on a linear push-broom design. Each sensor model is unique and contains proprietary design information; therefore, the sensor models are not distributed to commercial purchasers or users of the data. Through commercial contracts, these satellites provide imagery to NGA in support of geospatial intelligence activities around the globe.

Calibration of digital aerial cameras and satellite sensors is a much more complex process than calibration of a film camera. The digital sensor should be characterized for its radiometric response as well as for internal geometry. ASPRS and a number of federal government agencies have been working with sensor manufacturers to establish guidelines and procedures for sensor calibration and data product characterization. It is an ongoing effort, and standards are just beginning to emerge. The USGS Remote Sensing Technologies Project maintains a website with information on digital camera calibration [21] efforts, as well as collaborative efforts, such as the Interagency Digital Imagery Working Group [22] (IADIWG) and the Joint Agency Commercial Imagery Evaluation [23] (JACIE) group.

As digital aerial photography has matured, it has become integrated into many consumer-level, web-based applications, such as Google Earth and numerous navigation and routing packages. Microsoft has recently deployed a large number of aerial survey planes equipped with the Vexcel UltraCam sensor in an ambitious Global Ortho [24] program. Their goal is to provide very high-resolution color imagery over the entire land surface of the Earth, made publicly available through the Bing Maps platform. The following video (4:47) describes the data acquisition experience and process.

Video: Keystone Aerial Surveys: I Fly UltraCam (4:47)

[MUSIC PLAYING] We have 16 airplanes that we fly all over the United States. We have the largest fleet of aircraft totally dedicated to aircraft imagery collection in the United States, if not the world. Keystone purchased their first UltraCam in 2005. Keystone was in a situation where they had been collecting analog inventory for years, ever since the '60s. We identified that we had to go digital. We had to do it to survive. So our choice then was to pick out the right camera. There was a lot of competition from other companies, and we felt that to be state-of-the-art and to offer our clients what they needed, we chose UltraCam because of price point and what we considered quality and service that they provided.

If we had not upgraded to digital, specifically the UltraCam, we would have been considered a second class operator. We went from having one UltraCam-D to three UltraCam-X systems in only a matter of years. I think the real telling thing is once we have flown a project in digitally with our UltraCam, they don't want film anymore. Keystone's coming to a point where we're about to take our one millionth UltraCam frame. [MUSIC PLAYING] So here we are. The mount is green. We're about to take pictures here in about two seconds. And there's our first shot. I'm getting a quick view. An exact representation of the imagery that we are recording right now. So we'll go up on a four hour flight and at the end of it, I'm pretty certain that that imagery was good. Whereas, with film, you really don't know what's on it. You don't want to do a flight twice. So we're basically able to operate cheaper.

This spring was almost the tipping point. We were actually setting film cameras on the ground in our big season with people asking for digital imagery instead of the film imagery because of the quality. We had another client when we first delivered the digital imagery for them, they were able to read the serial number on a manhole cover. It's not just an image, there's so much more. Time, altitude, who flew it, camera serial numbers, the airplane it was installed in, the exact time of the photo, the sun angle of the photo. Basically, if you can type it, we can record it. The advantage of the UltraCam with its collecting all the bands allows us to do things with it that were not possible with an analog camera just using one film. We're collecting panchromatic, RGB color, and infrared at the same time. Infrared allows you to see wetland studies, impervious surface studies. Also [? distressed ?] foliage studies. You're dealing with nearly 14 bits. That 14 bits gives you the ability to see inside of shadows or fly under lower light conditions. With the X, we can now change cassettes in flight and really go all day long without having to land to download that.

They have three people on staff that, phone call away, they will either send us the parts or they'll be here to fix our camera. They have a service facility in Boulder where they can actually calibrate the camera. We chose to upgrade because Microsoft seems to have a very strong commitment to keeping their product up-to-date. Version 2.1 has some of our specific requests for reporting tools and better logging tools. The radiometry upgrades have also made it virtually a no-brainer to upgrade. The advantage that Vexcel has given us is the constant upgrades of these cameras. They're coming up with new inventions all the time. They had the D. Then they came up with the X. And now the XP. So when you have an UltraCam, you're basically buying into a product line that you will be able to use into the future. It's allowed Keystone to stay at the forefront of the profession in terms of offering the best equipment. UltraCam gives you beautiful, accurate imagery. It's reliable and has support that's second to none I'm Ken Potter from Keystone Aerial Surveys and I fly UltraCam.

1 Orthophoto. (2007, November 21). In Wikipedia, The free encyclopedia. Retrieved December 4, 2007, from http://en.wikipedia.org/wiki/Orthophoto [16]

2 View-Master (2007, November 9). In Wikipedia, The free encyclopedia. Retrieved December 5, 2007, http://en.wikipedia.org/wiki/View-master [17]

3 Parallax. (2007, 29 November). In Wikipedia, The free encyclopedia. Retrieved December 5, 2007, http://en.wikipedia.org/wiki/Parallax [18]

4 Wild was purchased by Leica Geosystems, which has since been incorporated into Hexagon. The camera referenced in this report is of the same type shown in Figure 7.

Lesson 3: Production of Digital Image Base Maps

Lesson 3 Introduction

Remote sensing, as a broad discipline within geospatial science, extracts two types of information from images: thematic (what is it?) and positional (where is it?). Thematic information is extracted through a process of image interpretation and analysis; positional information is extracted through the process of creating maps from remotely sensed data. In Lesson 2, we set the stage to discuss maps and mapping by providing a background in datums, coordinate systems, and georeferencing technology. In Lesson 3, we will begin to connect those concepts with the remotely sensed data itself, concentrating on the aerial photograph; however, we will see in later lessons how these principles are applied to elevation data.

Photogrammetry is defined as "the art, science, and technology of obtaining reliable information about physical objects and the environment through the process of recording, measuring, and interpreting photographic images and patterns of electromagnetic radiant energy and other phenomena. Photogrammetry provides the positional half of the information equation described above. In Lessons 3 - 5, we will concentrate on the photogrammetric principles of precise and accurate measurement that are essential to the creation of good base maps for GIS. In later lessons, we will introduce foundations of interpretation and image analysis that are also an important application of remote sensing. In this lesson, you will learn more about the special geometric relationships between overlapping aerial photographs, which allow creation of an accurate three-dimensional depiction of the ground. Odd as it may seem, we need this accurate three-dimensional model before a spatially accurate two-dimensional image base map (an orthophoto) can be generated. As was mentioned in Lesson 2, the third dimension, elevation, is needed in order to remove relief displacement from the source imagery.

This lesson will also introduce key elements of photogrammetric project planning, including constraints of lighting, weather, and season that apply to all types of passive optical sensors. You will be introduced to the techniques and methods of data extraction using specialized photogrammetric instruments and software, and you will learn to identify common image-based GIS data products. Finally you will use Internet data sources to find and download various types of aerial photography, and you will create an orthophoto base map using a raw aerial photo and a digital elevation model.

Lesson Objectives

At the end of this lesson, you will be able to:

- describe the basic photogrammetric concepts used in orthorectification of imagery.

- explain the difference between simple georeferencing and rigorous orthorectification.

- perform both simple georeferencing and rigorous orthorectification of both airborne and satellite imagery.

- use web-based tools to locate and download remotely sensed imagery.

- identify common image data formats and perform conversions from one format to another.

- overlay imagery data with vector data layer to prepare for visualization and analysis.

Questions?

If you have any questions now or at any point during this week, please feel free to post them to the Lesson 3 Questions and Comments Discussion Forum in Canvas.

Geometry of the Aerial Photograph

The Single Vertical Aerial Photograph

The geometry of an aerial photograph is based on the simple, fundamental condition of collinearity. By definition, three or more points that lie on the same line are said to be collinear. In photogrammetry, a single ray of light is the straight line; three fundamental points must always fall on this straight line: the imaged point on the ground, the focal point of the camera lens, and the image of the point on the film or imaging array of a digital camera, as shown in the figure below.

Picture the bundle of countless rays of light that make up a single aerial photograph or digital frame image at the instant of exposure. The length of each ray, from the focal point of the camera to the imaged point on the ground, is determined by the height of the camera lens above the ground and the elevation of that point on the ground. The length of each ray, from the focal point to the photographic image, is fixed by the focal length of the lens.

Now imagine that the camera focal plane is tilted with respect to the ground, due to the roll, pitch, and yaw of the aircraft. This will affect the length of each light ray in the bundle, and it will also affect the location of the image point in the 2-dimensional photograph. If we want to make precise measurements from the photograph and relate these measurements to real world distances, we must know the exact position and angular orientation of the photograph with respect to the ground. Today, we can actually measure position and angular orientation of the camera with respect to the ground with GPS/IMU direct georeferencing technology. But the early pioneers of photogrammetry did not have this advantage. Instead, they developed a mathematical process, based on the collinearity condition, which allowed them to compute the position and orientation of the photograph based on known points on the ground. This geometric relationship between the image and the ground is called exterior orientation. It is comprised of six mathematical elements: the x, y, and z position of the camera focal point and the three angles of rotation: omega (roll), phi (pitch), and kappa (yaw), with respect to the ground. The mathematical process of computing the exterior orientation of elements from known points on the ground is referred to by the photogrammetric term, space resection.

Refer again to the figure above. If we were together in a classroom, I could demonstrate the concept of space resection using my desktop and a photograph taken of my desktop from above. I could use pieces of string to represent individual rays of light; each string is of a fixed length based on the distance from the desktop to the camera when the photo was taken. You'll now have to try to picture this demonstration as I describe it in words. If you feel frustrated, imagine yourself as Laussedat trying to figure this out by himself back in the 1800s.

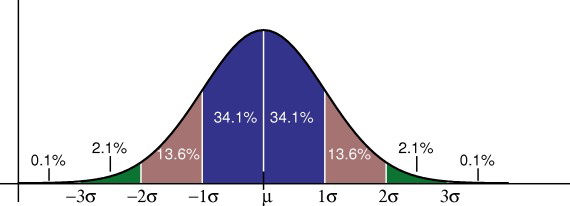

I attach one end of one piece of string to a particular point on my desktop, I attach the other end of the string to the image of that point on the photograph, and I pull the string taut. With that single piece of string, I cannot precisely locate or fix the position of the camera focal point or the orientation of the camera focal plane as it was when the photograph was taken. Now, I choose a second point, adding a second piece of string, and I pull both strings taut. I can't move the photograph around as much as I could with only one piece of string attached, but the photograph can still be rolled and twisted with respect to the desktop. If I identify yet a third point (that does not lie in a straight line with the first two) and attach a third piece of string, I now have a rigid solution; the geometric relationship between the desktop and the photograph is fixed, and I can locate the focal point of the camera in my desktop model. Adding more points adds to the strength of the geometric solution and minimizes the effects of any small errors I might have made, either cutting the string to its proper length or attaching the strings exactly to the points I identified. When we overdetermine a solution by adding additional, redundant measurements, we can make statistical calculations to quantify the precision of our geometric solution.

We don't have time in this course to go into the mathematics of analytical photogrammetry, but hopefully you can get a sense of it as a true measurement science. In fact, photogrammetry has traditionally been taught as a subdiscipline of civil engineering and surveying, rather than geography. Photogrammetry is not just about making neat and useful maps; a key function of the photogrammetrist, as a geospatial professional, is to make authoritative statements about the spatial precision and accuracy of photogrammetric measurements and mapping products. As you'll see in this lesson and the ones to come, you can easily be trained to push buttons in software to produce neat and interesting remote sensing products for use in GIS. It takes a more rigorous education to make quantitative statements about the spatial accuracy of those products. In my opinion, it is as much the duty of the photogrammetrist or GIS professional to make end users aware of the error contained in a data product as it is to give them the product in the first place. Understanding errors and the potential consequences of error is a very important part of the decision-making process. There's also a particular language used to articulate statements about error and accuracy. You will learn a little of this language in Lesson 6.

The Stereo Pair

Once the exterior orientation of a single vertical aerial photograph is solved, other points identified on the photographic image can be projected as more rays of light, more pieces of string, passing through the focal point of the camera and intersecting the target surface (the ground or my desktop). If the target surface is perfectly flat, then the elevations of the three known points determine a mathematical plane representing the entire surface. It is then possible to precisely locate any other point we can identify in the image on the target surface, merely by projecting a single, straight line. In reality, the target surface is never perfectly flat. We can project the ray of light from the image through the focal plane, but we can't determine the point at which it intersects the target surface unless we know the shape of the surface and the elevation of that point. In the context of our demonstration above, we need to know the exact length of the new piece of string to establish the location of the point of interest on the target surface. This is where the concept of stereoscopic measurement comes into play. If you are interested in learning more about the principles and history of stereoscopy, there is a long but very interesting YouTube video of a seminar with Brian May and Denis Pellerin titled, Stereoscopy: The Dawn of 3-D. Brian May and Denis Pellerin [26]. We've been able to build an entire science of measurement around something that is a natural, built-in, characteristic of the physical human being.

The advantage of stereo photography is that we can extract 3-dimensional information from it. Let's return to the example I described above. This time, imagine I have two photographs of my desktop taken from two separate vantage points, and that the individual images actually overlap. One image is taken from over the left side of my desk, and the other is taken from over the right side of my desk. The middle of my desktop can be seen in both photographs. Now, let's assume that we have established the exterior orientation parameters for each of the two photographs; so, we know exactly where they both were at the moment of exposure, relative to the desktop. We now have two bundles of light rays, some of them intersecting in the middle of my desktop. It is a fundamental postulate of geometry that if two lines intersect, their intersection is exactly one point. Voilà! Now we can precisely locate any point on the desktop surface, regardless of its shape, in 3-dimensional space. For any given point common to both photographs, we now know the exact length of each of the two pieces of string (one from each photograph) that connect to the imaged point on the ground. In photogrammetry, we call this a space intersection. If we have two photographs precisely oriented in space relative to each other, we can always intersect two lines to find the 3-dimensional ground coordinate of any point common to both photographs. The two photographs, oriented relatively to each other, are referred to as a stereo model.

By extension, we could have a large block of aerial photographs, overlapping in the direction of flight as well as between adjacent flight lines, all oriented relatively to each other. This block, once constructed, represents all of the intersecting bundles of light rays from all of the overlapping photos. You can imagine that many of the points will be seen in a number of photographs. In fact, with 60% forward overlap, every point in a single flight line is seen 3 times. If the point falls in the 30% sidelap area between two flight lines, it will be seen 6 times; six rays will all intersect at one point. Actually, because some degree of measurement error is unavoidable, the intersection will occur within some sort of sphere, which represents the uncertainty in the projected coordinate of the point in question. As I mentioned earlier, the mathematical equations of photogrammetry allow us to quantify this uncertainty in statistical terms. Your readings will take you into greater depth and detail, but I hope my explanation helps you create a 3-dimensional picture in your mind, making the readings easier to understand.

Acquisition of Aerial Photography

For most photogrammetric mapping purposes, the goal is to image as much of the actual ground surface as possible. For this reason, it is customary to fly projects when deciduous trees are without leaves, when the ground is clear of snow and ice, when lakes and streams are within their normal banks, and when the sun is high overhead, minimizing shadows. In many parts of the world, this limits the optimal season for mapping to early spring, when days are getting long, but trees are relatively bare. Add the need for cloud-free skies to all these other requirements, and you can see why the flight operations of most photogrammetric mapping companies are not a big profit center. For any given location, there are a relatively small number of days per year when all the conditions are right for image acquisition.

By the way, these requirements are no different for space-based image acquisition. You already know that the orbits of passive imaging satellites are designed to follow local noon. That takes care of the time of day requirement. Add in all the other requirements, plus the constraints of orbital parameters and revisit times, and you can easily see why large-scale state and county mapping projects are still accomplished with aircraft. I'm sure we'll eventually see seamless coverage of states and nations with large-scale (1 meter/pixel GSD or better) satellite imagery, but it won't all be taken in the same season or even in the same year. Not until there are many, many more high-resolution imaging satellites circling the globe.

Sample Aerial Photography Specifications from Indiana Department of Transportation [27]

Aerial Imagery Guidelines from URISA Quick Study [28]

Several key computations related to flight planning are identified in these documents. These are:

- flight altitude above the ground required to achieve specified photo scale

- number of flightlines required to cover project area

- distance between (and total number of) flightlines required to achieve specified sidelap

- distance between (and total number of) successive exposures to achieve specified overlap

- total number of exposures required to complete project

In most aerial photography contracts, the aerial survey company provides a flight plan, based on customer specifications for scale and resolution, that enumerates all of the quantities outlined above along with a cost estimate based on those quantities.

Control and Georeferencing

The subject of control and georeferencing of a photogrammetric block could be the subject of several lessons in a course dedicated entirely to photogrammetry. I'm going to do my best to explain it conceptually without the math. Earlier in this lesson, you learned about the six parameters of exterior orientation that define the geometric relationship between an aerial photograph and the ground. I explained space resection and the way a minimum number of ground points can be used to solve the exterior orientation of a single vertical photograph. You may have thought to yourself how labor-intensive, expensive, and impractical it would be to actually solve the exterior orientation of each individual photograph in a large block by this method.

Photogrammetric mapping, based on very large blocks of hundreds or even thousands of aerial photographs, was made practical by the development of a technique called aerotriangulation, sometimes also called aerial triangulation, and often abbreviated as “A/T.” This short video from ASPRS [29] gives an overview of the concept, but a deep understanding comes from either years of training under a master, or a rigorous study of the mathematics. My reference to a master may sound a little overblown, but in my years of experience managing photogrammetric projects, a bad aerotriangulation solution that goes undetected is one of the most costly and time-consuming problems to correct. A/T is usually performed by the most experienced photogrammetrist in the organization, and there simply aren't that many true experts in it, perhaps a thousand or two at most worldwide. There are many software programs available to compute an A/T adjustment, but it’s easier to get a bad result than a good one, especially if the person designing the block and evaluating the statistical results doesn't have a deep theoretical understanding combined with a lot of practical experience.

The easiest way I have found to simply explain the concept of aerotriangulation is an extension of my desktop analogy. Picture again the bundles of intersecting rays generated by two overlapping photographs; the figure below depicts 3 photos in a single flight line. Now extend that picture in your mind's eye to include a second flight line "side-lapping" the first, also imaging points A, D, and G on the ground.

Point D, for example, is seen from 3 perspectives in the first flight line, and from another 3 perspectives in the side-lapping flight line I've asked you to visualize. Imagine that both flight lines continue on with many more end-lapping photos. All points common to adjacent flight lines, such as points D, G, and beyond, are going to be viewed with 6 intersecting rays. Even if we did not have a ground coordinate for every single one of these "tie points," you can imagine that, by enforcing the condition that the 6 rays must precisely intersect, there is still a lot of geometric stability in the block. This is the basic premise of aerotriangulation: enforcing the collinearity condition and the redundant intersection of image rays in "object space" creates a bridge from one photo to the next, thereby reducing the amount of ground control needed to reconstruct the exterior orientation for every photo.

If GPS/IMU direct georeferencing is available, the effect is to add additional control at each of the camera focal points, the photo centers, as they are often called. Remember that direct georeferencing gives us the 6 parameters of exterior orientation for every photo. We can use the direct georeferencing measurements as the first approximation of the aerotriangulation solution, and we no longer need actual ground control points. The GPS/IMU measurements for each photo center will inevitably contain errors, but we can reduce the impact of these errors in subsequent iterations of the block adjustment computation, simply by enforcing ray intersection for all the image tie points. This is done mathematically for all photos in the entire block simultaneously, and the A/T results give the photogrammetrist a quantitative assessment of the "goodness of fit" for this system of many redundant observations.

Photogrammetric Data Collection

Stereoplotters and Photogrammetric Workstations

A stereoplotter is an instrument that can be used to recreate stereo models, so that a 3-dimensional model of the ground can be viewed, measured, and mapped by an operator. Compare the historical stereoplotters in the Lesson 1 reading to the softcopy workstation shown below. Binoculars have been replaced with other, less restrictive, forms of 3-dimensional viewing. In the example below, the glasses worn by the operator are polarized so that his right eye is seeing one image of the stereo pair, and his left eye is seeing the other.

Feature and Elevation Collection

Prior to the advent of digital workstations, map features were scribed by photogrammetric technicians on a drawing table integrated into the stereoplotter apparatus, often employing a pantograph [30] to enlarge the drawing to the final, desired map scale. Skilled cartographers would then use colored inks on semi-transparent materials, such as mylar, to create master map sheets that could be reproduced by blueprint or photo offset processes. These cartographers would add labeling and annotation as required. The final map was as much a work of art as it was a feat of technology. Today, feature data can be easily captured as 2-dimensional or 3-dimensional points, lines, and polygons when a Computer Aided Design (CAD) or GIS system is integrated into the softcopy workstation. Points might describe the location of fire hydrants, light poles, or simple building locations; lines could represent road or stream edges and centerlines; polygons are often used to represent detailed building footprints, stands of vegetation, water bodies, etc. In either a CAD or GIS package, each feature can be collected with unique line styles, line weights, and colors. If GIS software is used, the operator can also collect attribute information, such as road surface material, or building type, insofar as he can interpret this information from the aerial photograph.

Elevation data can be captured in a variety of ways; as in the case of feature data, methods have evolved along with photogrammetric technology. The earliest method for collecting elevation data was to manually draw isolines of elevation, or contours. It requires a great amount of skill and experience for an operator to draw spatially accurate, aesthetically pleasing contours. Contours are still one of the most effective representations of 3-dimensional topography on a 2-dimensional map; they are laborious to draw by hand, and while they can be generated automatically in a number of software packages, it usually requires a lot of manual editing to smooth jagged lines, to remove small artifacts, and to add labels. Many engineers learned to use contours early in their careers and still prefer them to other more modern, automated forms of digital elevation data.

Profiling is a technique of elevation data capture that was used extensively by the USGS and many private firms to create elevation data intended for input into the orthorectification of imagery. In this method, the stereoplotter is set to automatically drive the digitizing mark in evenly spaced profiles running the length or width of the stereo model. The operator's job is to keep reading elevations as accurately as possible in this dynamic environment; the stereo plotter automatically records elevations at regular intervals. The end result is a grid of regularly spaced points, which may or may not correspond to interesting or significant features of the actual terrain. This method of profiling works best in flat areas and in applications where accuracy and detail are not required. It was used quite a bit in the early days of orthophoto production, but is less common today.

Most elevation data derived from photogrammetry today is captured as randomly spaced mass points and breaklines. The photogrammetric technician collects individual 3-dimensional points at whatever frequency and in whatever pattern he deems appropriate to represent the ground surface. In addition, important linear features, such as road edges, curbs, walls, ridges, drains, etc., are captured as 3-dimensional lines. The mass points and breaklines are combined using surface modeling software to create a triangulated irregular network, or TIN. These elevation data products will be explained in more detail in the next lesson.

Image Data Products

Orthophotos

The term orthorectification refers to the process that removes effects of relief displacement, optical distortions from the sensor, and geometric perspective from a photograph or digital image. In a normal photograph, objects closer to the camera appear larger than objects of equal size that are further away from the camera. This presents an obvious difficulty in measuring objects accurately or determining their precise location in a reference coordinate system. In order to use perspective imagery as a map or in a geographic information system (GIS) environment, these geometric distortions must be corrected. The resulting image is referred to as an orthophoto or orthoimage.

Orthoimages can be created from any perspective image, regardless of the source, as long as three things are known:

- interior orientation, which describes the internal geometry of the camera system;

- exterior orientation, which describes the geometric relationship between the image and the ground;

- shape of the ground surface, which must be known in order to remove the effects of relief displacement. A digital terrain model must be supplied as input to the orthorectification process, in addition to the internal and external sensor geometry described above.

Returning to our familiar desktop example, the orthoimage is what would result if we took the 3-dimensional model created by all the intersecting light rays and projected every point straight down from the ground surface to an arbitrary flat plane. Each point would be in its appropriate planimetric (x, y) location, and all the effects of relief would be removed. The resulting image would have the exact same scale everywhere.

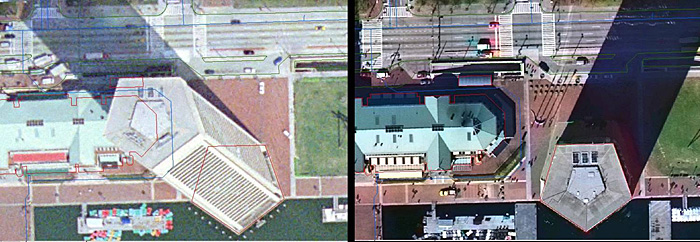

An orthophoto created using an elevation model representing only the bare ground surface will exhibit building lean everywhere except directly below the vantage point of the camera. All USGS and USDA orthophotos are created this way, as are the vast majority of state, county, and municipal orthophotos. On page 188 of Jensen (2007), he shows a comparison of this type of ground orthophoto to a true orthophoto, one which was created using a surface model comprising all of the above ground features at their proper elevation. True orthophotos are preferable in dense urban areas where the lean of tall buildings would obscure many important features on the ground between buildings. Building lean is also very distracting to GIS users, when they overlay building footprint data on top of an orthophoto backdrop. In a true orthophoto, the building footprints will line up with the images of the rooftops; in a ground orthophoto, they will not. Even though both datasets may be equally accurate and correct, the map still doesn't "look right."

Orthophoto Product Specifications

The specification for a digital orthophoto product deliverable must stipulate a ground coordinate system, pixel dimensions, spatial accuracy requirements, and the image file format. These specification elements are usually driven by the end user's application.